(PR) Optical Scale-up Consortium Established to Create an Open Specification for AI Infrastructure

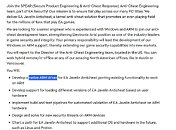

The Optical Compute Interconnect (OCI) Multi-Source Agreement (MSA) group today announced its formation, led by founding members AMD, Broadcom, Meta, Microsoft, NVIDIA and OpenAI. This industry consortium marks a pivotal shift toward a hyperscaler-driven open ecosystem that enables the development of a multi-vendor supply chain for optical scale-up interconnects. By aligning on an open specification, the OCI MSA members are promoting a robust optical ecosystem that will ensure the future of AI interconnects is built on a flexible, multi-vendor foundation that meets the optical interconnect needs of modern AI infrastructure.

The Physics and Power Mandate

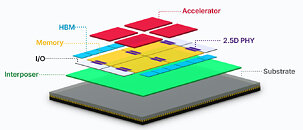

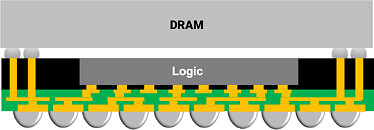

As large language models (LLMs) advance toward superintelligence, traditional copper-based connectivity is reaching limits in physical reach that are constraining AI cluster scale-up domain architectures. OCI will enable migration from copper-based to optical-based scale-up architectures, alleviating copper interconnect bottlenecks.
