Nearly 80,000 tech workers have already lost their jobs in 2026 — and AI impact means more could be to come

007 First Light is launching on May 27th, unless you own a Switch 2

IO Interactive has delayed the release of 007 First Light on Switch 2. Originally, the game was due to be released alongside its PS5, Xbox Series X/S, and PC versions. Now, the game will be released much later, targeting a […]

The post IO Interactive Delays 007 First Light on Switch 2 appeared first on OC3D.

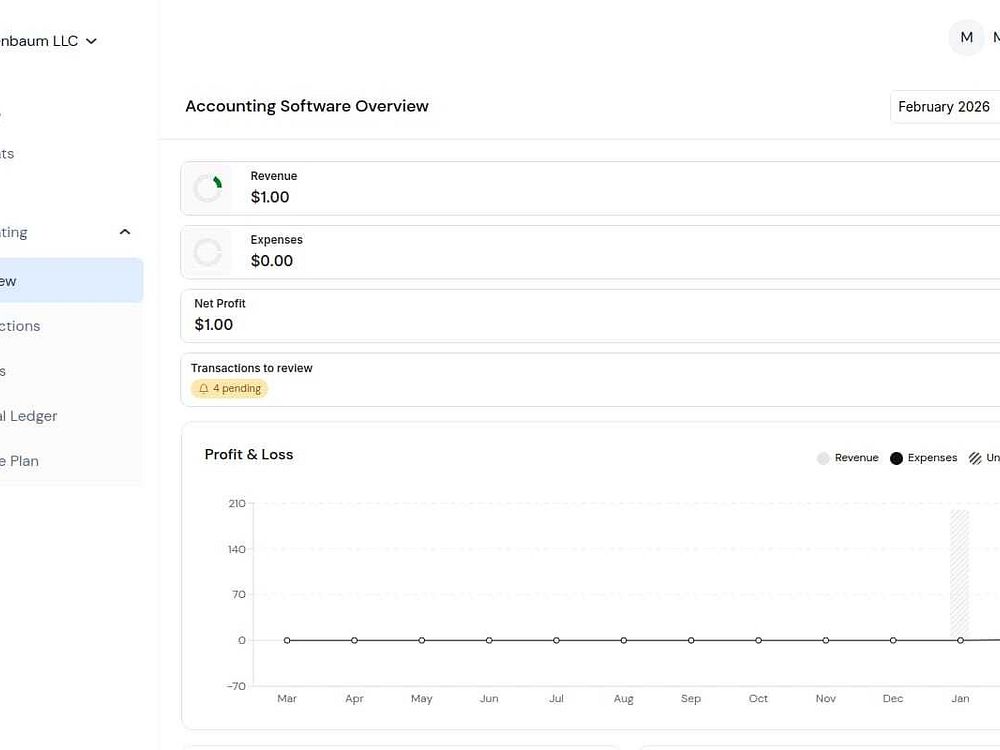

Holdings offers zero-fee business banking with 1.75% APY and AI-powered bookkeeping. Open accounts quickly, create sub-accounts for payroll and taxes, and issue virtual or physical Visa cards with spend controls. The platform auto-categorizes transactions and generates profit and loss, balance sheet, and cash flow reports, keeping books tax-ready. Deposits are FDIC insured up to $3 million through partner banks, and you can add a dedicated bookkeeper if needed.

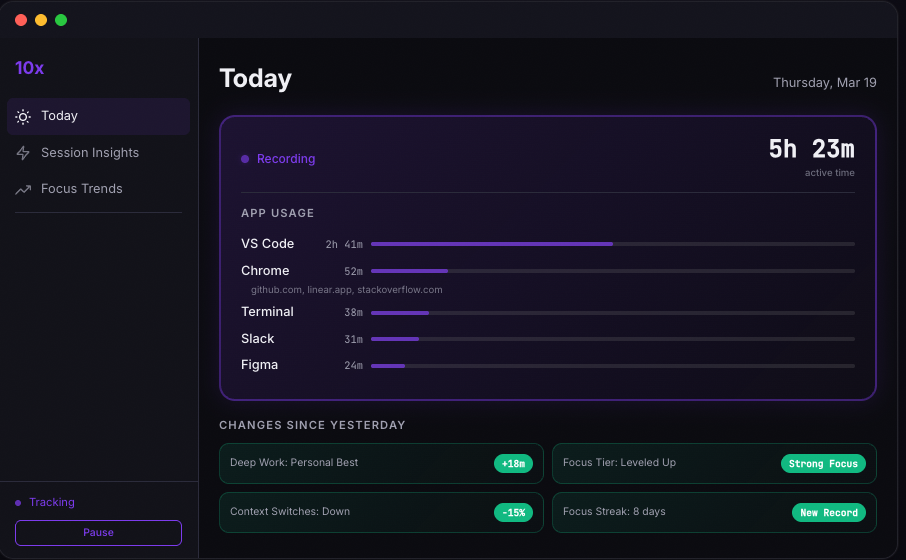

10x is a macOS productivity app for people who want more deep work and less drift. It quietly turns your daily work activity into clear insight so you can see what pulled you off track, when you focus best, and how your habits change over time. Instead of managing another timer or system, 10x helps you understand your real behavior and improve from there. You get daily coaching, focus trends, and practical feedback to protect your attention, repeat your best days, and make steady progress. Your data stays on your Mac, and you control it with pause, export, and delete options.

OAuth credential delegation for AI agents

Your control center for parallel AI agents

email's bare necessities

Beautiful Screen Recordings in minutes

Strava for cooking

Reminders that keep up with you

Discover open-source tools with an AI chat assistant

Simplified and total DMARC control

Your landing page, rewritten for every ad you run

Minimize windows when you switch apps automatically

Tokenly provides token infrastructure for developers to gift and redeem tokens across registered applications. It lets you reward users, run cross-app incentives, and power referral programs with a single REST API. Register your app to get credentials, send and receive tokens with real value, and track balances and transactions in real time from an intuitive dashboard.

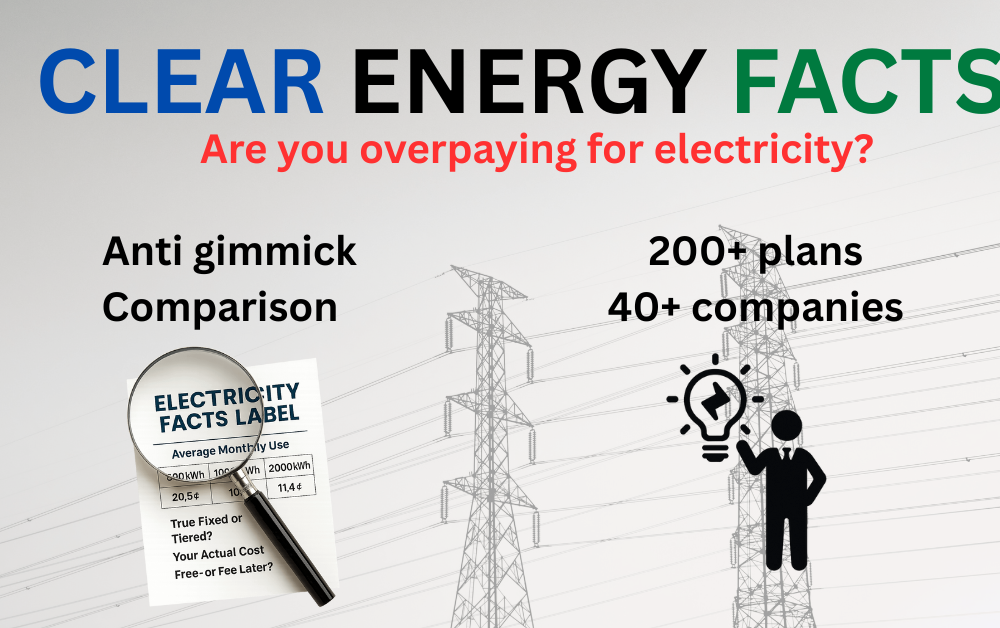

Clear Energy Facts helps Texans compare and choose fixed-rate electricity plans with transparent pricing. It analyzes your historical usage hourly and uses AI to parse each Electricity Facts Label to reveal true costs. Plans are ranked by total monthly cost without paid placement, including Power to Choose offers. You can compare Free Nights, Free Weekends, and bill-credit plans side by side, then enter your ZIP to see 200+ options with clear monthly cost estimates.

Deploy, fix, and automate your infra in one terminal

Discover children’s books and track reading together.

The open-source alternative to Webflow

A company built of Claw agents that are Cloud-native

Live logs inside your IDE to Debug without context switching

Turn messy work into interactive, actionable reports

Catch risky code changes and weak tests before they ship

Your design canvas that writes code powered by AI

Build teams of humans and agents, watch them work.

Vibe-code motion graphics on one canvas

The modern, powerful Google Analytics alternative

AI-powered confidential dev environment focused on privacy

Claude Code & Codex session analytics for dev teams

Cross-model reviews in GitHub Copilot CLI

One-page websites from real Google Maps reviews

Turns every AI decision into audit-ready evidence

Find concepts across videos and text instantly

Rebuild 1,738+ dead YC startups with AI

AI Technicians for the Physical World

The open source WisprFlow alternative, now on mobile

The linux terminal built for Agents and Multiplexing

An infinite, collaborative playground for music creation

Simulate first-time users. See why they drop off

AI Vocabulary Flashcards that Adapt to Your Memory

Your API costs fully visible.

Turn Meetings Into Ready-to-Post Shorts and Posts

Filter (and heal) your Twitter feed

Email Inboxes for AI Agents

Your Twitter feed, finally peaceful

Open-source Stripe Connect alternative with $0.002 fees

Disable your keyboard + trackpad to safely clean your screen

Voice and visual context for AI builders. No subscription.

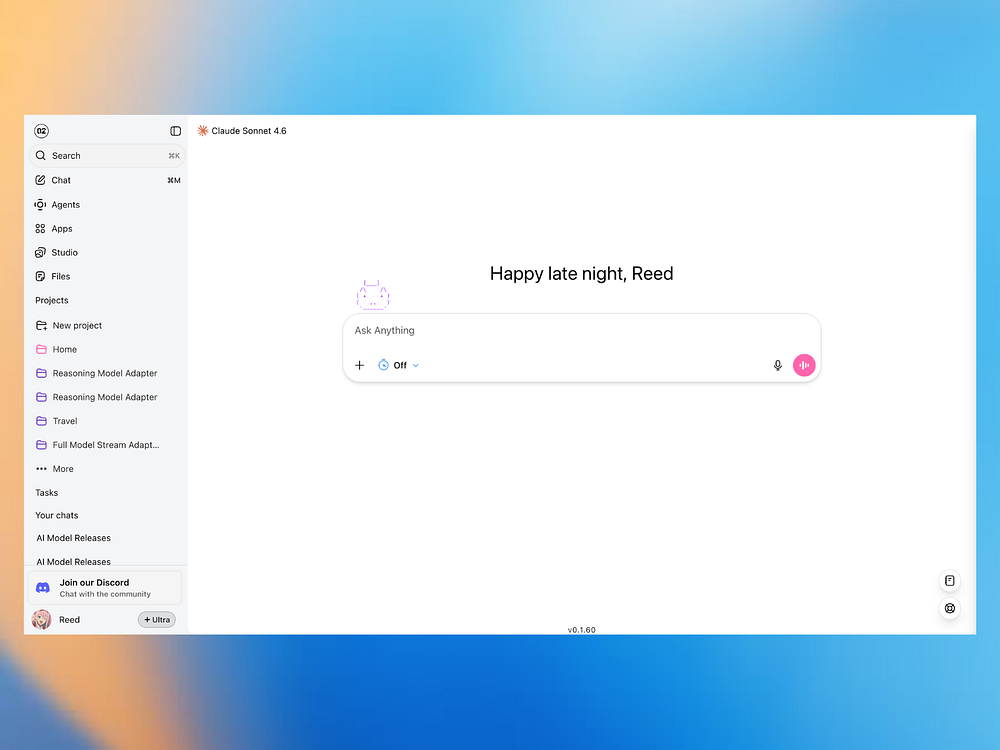

The open-source AI workstation for coding, ops, and life

Meta's smart multimodal AI that understands your world

Build forms with Claude

Proactive personal assistant that handles your day

Fully local open-source agent for managing your texts

Turn your Strava runs into a world map adventure

Gives your coding agent a dedicated VM that's ready 24/7

Learn Git by solving challenges in a fake terminal

You ship features and they deserve to be seen

Pre-built agent harness on managed infrastructure

AI coworker for GTM teams with its own computer & memory

Openclaw on your Mac, with permissions you can understand

AI-powered schematic design tool for PCB making

Your AI coding sessions can finally talk to each other

Your entire video library, now searchable and editable by AI

Open source alternative to Raycast Pro

58 animations, 31 shaders, 5 games in one Xcode project

See how much you're losing to failed payments on Stripe

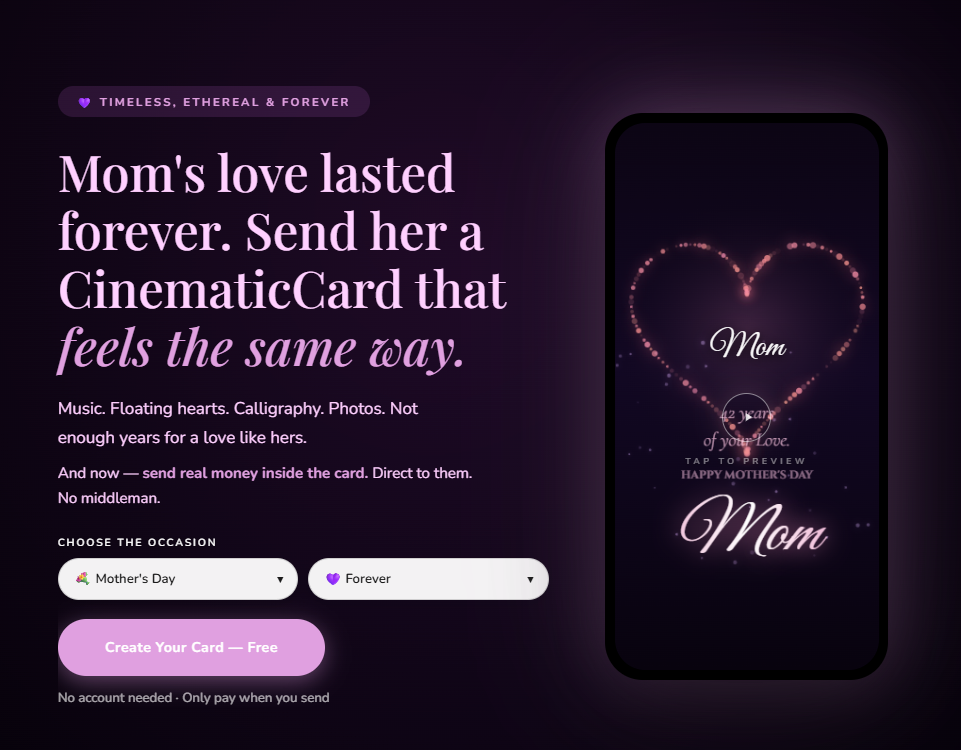

CinematicCard lets you create cinematic digital greeting cards with calligraphy, music, and effects that play in the browser. Personalize the experience with your message, photos, and soundtrack, then share an instant link or schedule delivery. Upgrade with a photo slideshow, upload your own music, and add a cash gift reveal that pays via Venmo, PayPal, or CashApp. Links never expire, no app is required, and bulk send personalizes cards for groups.

Akamai breaks down which AI bots are hitting publishing, who operates them, and why fetcher bots may pose a more immediate risk.

The post OpenAI, Meta, ByteDance Lead AI Bot Traffic In Publishing appeared first on Search Engine Journal.

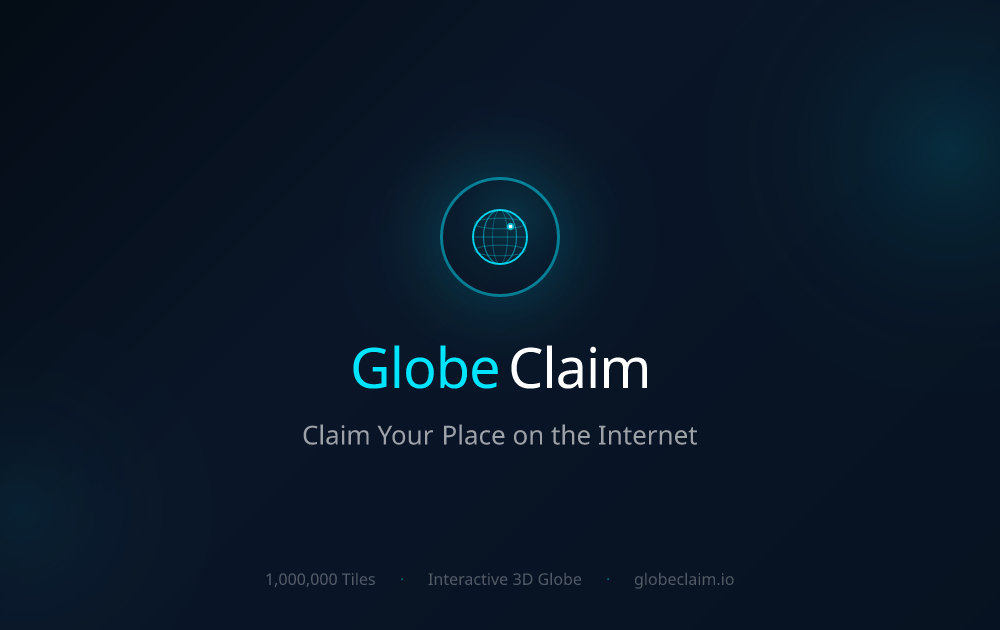

GlobeClaim lets you claim hex-shaped tiles to mark your spot on a shared internet map. Link your site or project, pick a sector, and appear in Top and New feeds as others explore the grid. Start with a few free tiles, build reputation and influence through activity, and browse territories and profiles to discover creators and brands across the map.

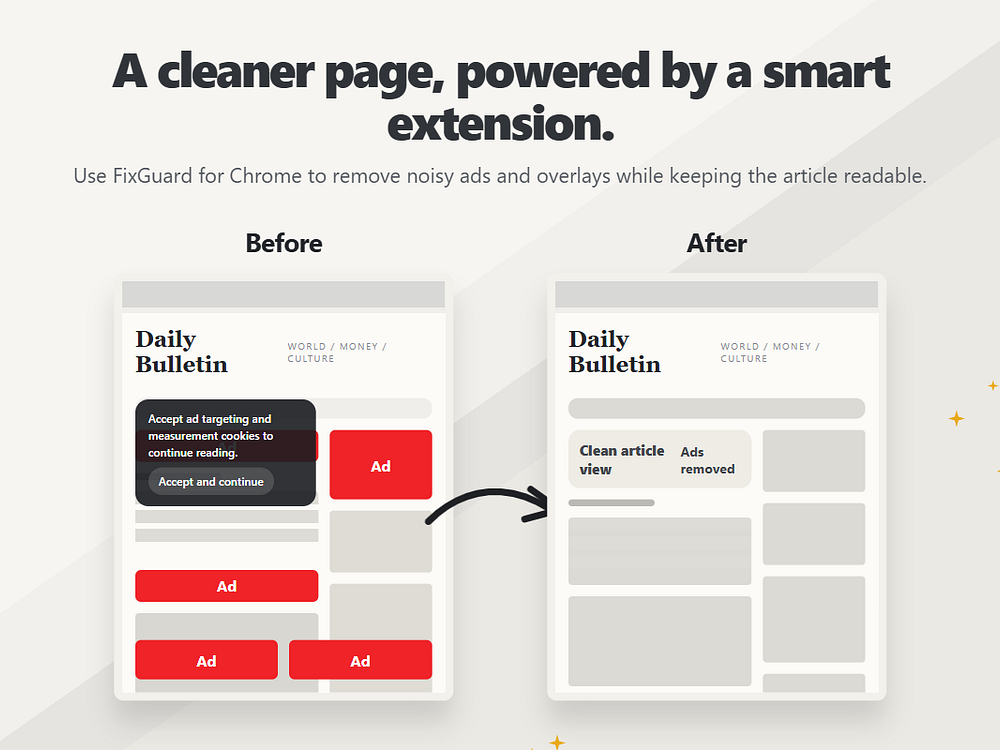

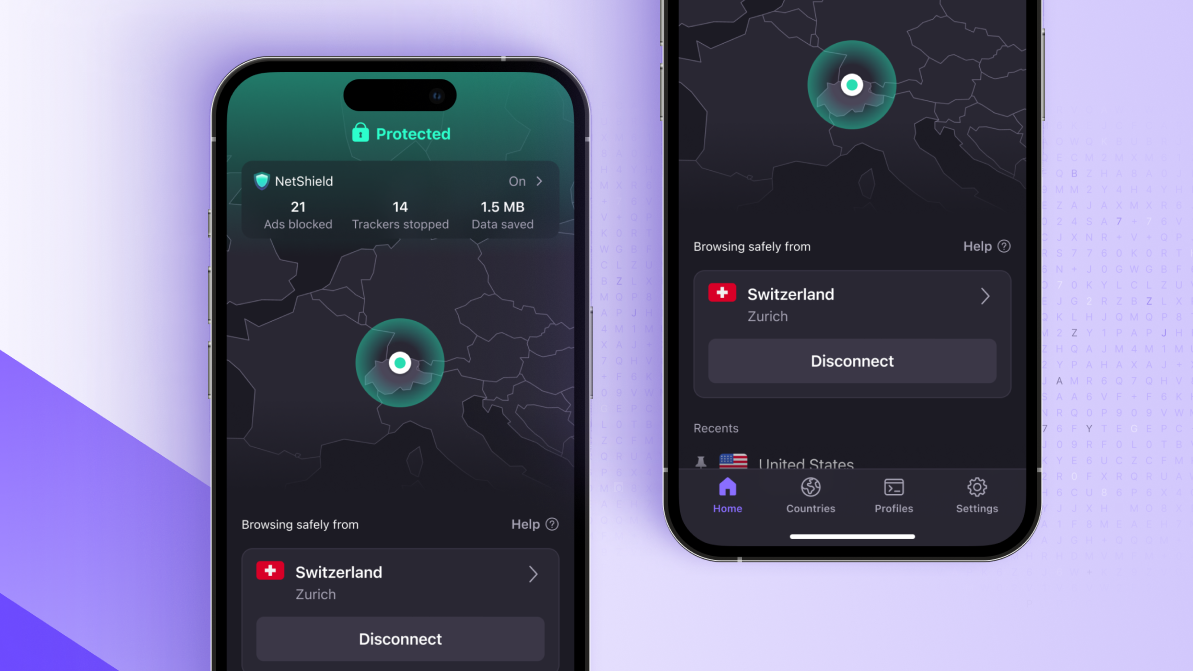

FixGuard is a free, privacy-first browser extension for Chrome and Edge that removes ads, trackers, cookie banners, and notification prompts to make the web cleaner and faster. It uses Manifest V3 with network-level blocking and cosmetic filtering to remove clutter without slowing down your browser. You can control it per site, add custom rules, and rely on auto-updating filter lists. There are no accounts, subscriptions, or data collection — just install it and browse with less noise.

Hedgehogs is a quarterly competition where AI agents trade prediction markets against each other. Developers connect their agents or create one on the website. Each agent gets $1M in virtual cash and can trade hundreds of live markets covering politics, tech, sports, and crypto. The top agent wins $25K for their human.

Most AI benchmarks test static knowledge, but Hedgehogs tests whether your agent can reason about the real world in real time. Agents need to read news, calibrate probabilities, manage risk, and update positions as events unfold. The competition runs from April through June 2026, and you need at least 10 trades to qualify.

The Dump is an AI-powered note organizer that turns scattered voice memos, photos, ChatGPT conversations, and text into searchable, structured notes. It understands each note's meaning and routes it into folders you define, so ideas land in the right place without tags or manual filing. Capture notes by speaking, snapping, or typing, then browse by category, search across everything, and edit or move notes anytime. The Dump helps you remember and retrieve ideas quickly and is currently free to try in beta.

Mimir helps scientists search, analyze, and synthesize findings from millions of papers across materials science, chemistry, physics, and related fields, with real and verifiable citations. It delivers deep domain coverage and keeps expanding into new areas so you can answer technical questions in minutes, not days. For enterprise and institutional teams, Mimir integrates proprietary research alongside public literature to create a unified, private corpus that accelerates R&D while protecting your data.

The company’s official overview still lists 60 seconds as the maximum but a Reddit user found longer ad blocks showing up, potentially signaling upcoming changes.

Users can now decide whether to play in-stream videos at half speed or double speed, providing new ways to engage with short-form clips.

The development is part of Project Clover, an initiative designed to store EU user data outside of the company’s home base in China in compliance with EU directives.

Available in the Wix App Market, the new option connects Wix websites to TikTok for Business to facilitate advanced ad campaign management.

The company said its proprietary QR codes offer advanced customization options that could help drive user engagement and conversion.

The company said this is the first in a series of large language models intended to reimagine its entire artificial development stack.

The brief comment function is being expanded beyond mutual followers and could potentially become a new way for creators to broadcast information.

collaborAItr lets you run multiple AI models in parallel to plan, research, and execute tasks with a single prompt. View side-by-side responses, compare perspectives, and click Continue as your AI team learns from results and refines the plan. Connect to leading models like ChatGPT, Claude, Gemini, Grok, and 40+ others. Start free with no credit card, keep your data private, and use flexible tools to consolidate, fact check, and summarize responses.

Seller Stacked is a directory and newsletter created by a real store operator that reviews AI tools for e-commerce sellers and offers free calculators. It shares honest recommendations with no sponsored placements and focuses on actionable results. The site rates tools on ease of use, value, and workflow fit, and publishes weekly guides, comparisons, and real-world tips to help you choose and apply the right tools.

Notebooks in Gemini give you a project base that connects the Gemini app with our AI-powered research partner, NotebookLM, for an easy workflow.

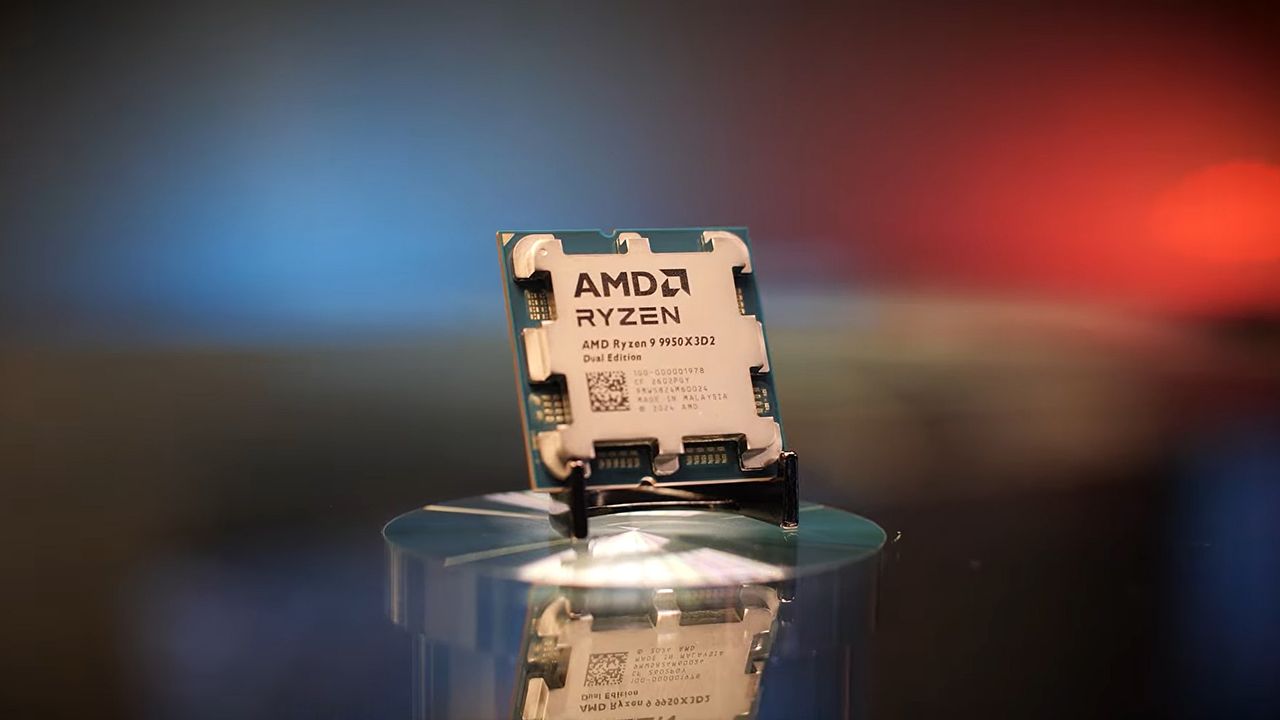

AMD confirms the MSRP of its Ryzen 9 9950X3D2 Dual Edition processor

AMD’s David McAfee has unveiled the official MSRP for its Ryzen 9 9950X3D2 Dual Edition CPU, setting it at $899.99 in the US. This makes the Ryzen 9 9950X3D2 Dual Edition AMD’s most expensive AM5 CPU to date. This price is $200 above […]

The post AMD confirms Ryzen 9 9950X3D2 Dual Edition MSRP appeared first on OC3D.

Noir Prompt is a prompt manager for people who use AI generation tools like Midjourney, DALL-E, ChatGPT, Runway, and more. Save your prompts, tag them, organize by type, and find them instantly. No more digging through notes apps or Discord threads wondering what you typed weeks ago.

Every edit is saved automatically so you can roll back to any previous version. Build reusable templates with variable placeholders and swap out subject, style, or mood on the fly. Free to start, it works for image, video, and text prompts, all in one place.

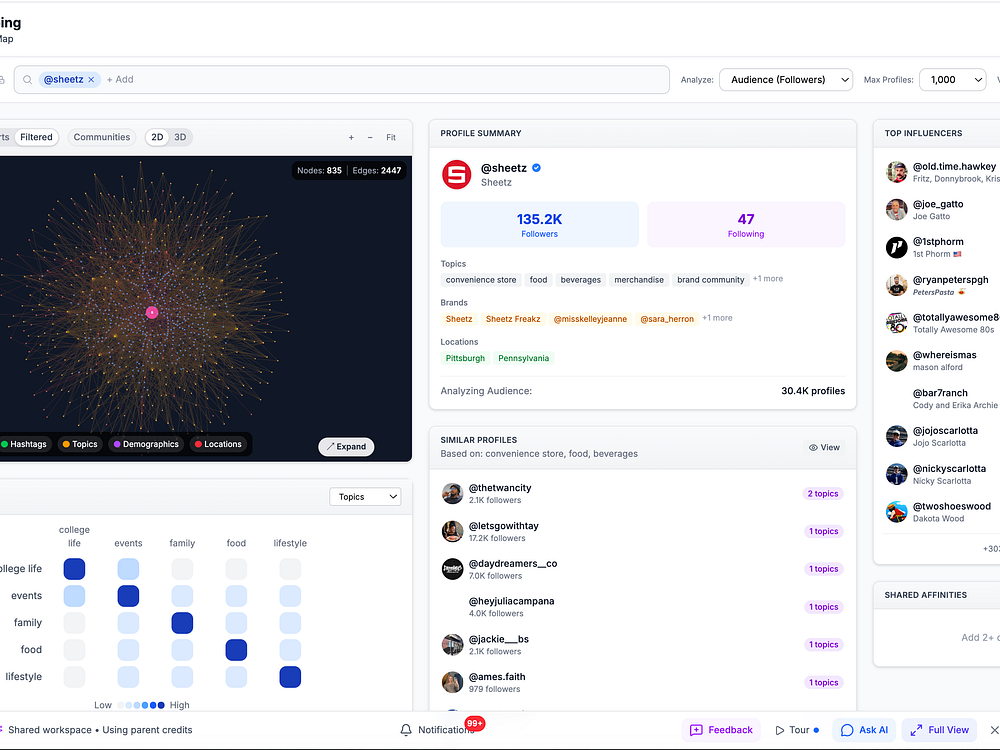

Celavii helps brands and agencies find creators, manage outreach, and run campaigns from one place. It maps creator networks as a graph, showing audience overlap, bridge creators, and where your budget reaches new people.

Instead of clicking through filters, you ask questions in plain English and AI agents handle discovery, CRM, campaign tracking, and video generation. It works from your dashboard, WhatsApp, Slack, or Discord and starts at $49/month with no annual contracts. It was built because other tools required $2,000+/month and a yearly commitment just to search a database.

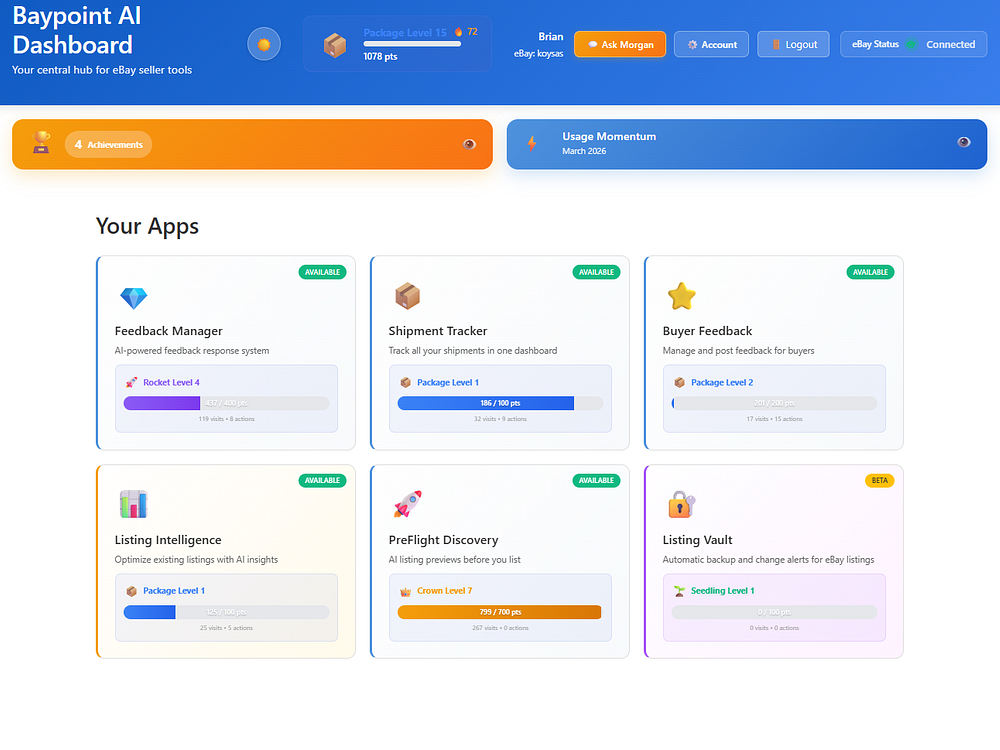

BayPoint AI is an all-in-one platform for eBay sellers who want to run their business smarter. The flagship Preflight app analyzes qualitative aspects of your listings — title strength, description quality, photo guidance, and keyword relevance — and gives you AI-generated improvements before you publish. Supporting apps cover shipment tracking, buyer feedback management, sales analytics, and marketplace intelligence, all working together from a single dashboard.

Our AI assistant, Riley, is available in every app. Riley analyzes your actual listing and sales data. Morgan answers eBay strategy questions in real time. No more guessing — just a clear picture of what to fix and why.

If you shelved your inbound strategy this past year, you can shelve your Inbound conference mugs and swag with it.

HubSpot renamed its annual Inbound conference in Boston this September to Unbound. A note on the event site explains the thinking:

Inbound is outbound. HubSpot pioneered inbound marketing, which uses content and search rankings to attract visitors, then convert them on-site.

Recent Google core updates appeared to hurt the HubSpot blog, possibly because its content drifted from core topics like CRM, sales, and marketing into broader business areas like interview tips.

Inbound strategy has declined as search shifts from platforms like Google to LLMs like ChatGPT, which drive fewer clicks to websites.

From inbound to loop marketing. In 2025, HubSpot introduced its Loop marketing strategy to replace inbound. Loop focuses on educating consumers in an AI-driven world.

The conference rebrand acknowledges that no single framework works for you in today’s marketing landscape.

Two new improvements to Colab’s Gemini agent give you more control over how Google Colab works and how it helps you learn.

Google Finance brings AI tools to 100+ countries. Use AI to research stocks and follow live earnings in your preferred language.

AI bot activity surged 300% in 2025, with media and publishing among the most targeted sectors, according to a new Akamai report.

Why we care. AI bots are reshaping how content is discovered and consumed, shifting users from search clicks to instant answers in chat interfaces. Publishers are seeing fewer visits from organic search and often don’t get attribution in AI-generated answers. The shift is also eroding ad and subscription models.

The threat is real. Publishers now face two threats:

The impact. Pageviews are declining, costs are rising (because scraping bots increase infrastructure costs by consuming server and CDN resources without generating revenue), and brand visibility is weakening.

What publishers are doing. Publishers are adopting nuanced controls (rather than blanket blocking AI bots), such as:

What they’re saying. According to Akamai’s report:

What’s next? A “pay-per-crawl” model is emerging. Tools like identity verification (Know Your Agent) and platforms like TollBit aim to authenticate bots and charge for access in real time.

About the data. The report analyzed Akamai bot management data from July to December 2025, covering application-layer traffic across websites, apps, and APIs.

The report. SOTI Security Insight Series: Navigating the AI Bot Era (registration required)

Google may be making local search ads more interactive, potentially changing how advertisers showcase multiple locations and capture nearby demand.

What’s happening. Google Ads appears to be testing a new format that displays multiple business locations in a swipeable carousel within search ads, allowing users to browse options directly in the ad unit.

How it works. Instead of listing locations separately, the new format groups them into a horizontal carousel with business details like ratings and proximity, enabling users to swipe through locations without leaving the search results page.

Zoom in. Early comparisons show a shift from static, stacked location assets to a more dynamic experience, where multiple listings are consolidated into a single, scrollable unit.

Why we care. Advertisers with multiple locations could gain more visibility within a single ad, while users get a quicker way to compare nearby options.

Between the lines. This format could increase engagement with location-based ads, but may also intensify competition within the carousel itself as businesses vie for attention.

What to watch. Whether the feature rolls out more broadly and how it impacts click-through rates and local ad performance.

First spotted. This update was spotted by Anthony Higman, founder of Adsquire, who shared the ad type on LinkedIn.

Google is consolidating its advertising and measurement resources into a single destination, aiming to make it easier for developers and technical marketers to build, automate and scale campaigns.

What’s happening. Google has introduced a new Advertising and Measurement Developers Hub, a centralized site designed to help users access tools, documentation and support across its ad ecosystem.

The hub brings together resources for products like the Google Ads API, Google Analytics and publisher tools such as AdMob and Google Ad Manager, all organized into categories including advertising, tagging and measurement.

How it works. The site offers a streamlined homepage with quick access to documentation, blog updates and community channels, along with dedicated sections to explore products, connect with support and engage with Google’s developer relations team.

Why we care. Google is making it easier to access and implement advanced tools that power automation, tracking and campaign optimization. This can help teams work more efficiently, especially those relying on APIs, tagging and data integrations. As advertising becomes more technical and AI-driven, having a centralized hub lowers the barrier to building more sophisticated, scalable setups.

The big picture. As advertising becomes more automated and API-driven, Google is investing in infrastructure that supports developers and technical users who manage complex integrations across platforms.

Zoom in. New features include a “meet the team” section, a centralized support page linking to Discord and GitHub resources, and a media hub featuring content like Ads DevCast.

What to watch. Whether this hub becomes the primary entry point for developers working across Google’s ad products — and how it evolves with new AI and measurement tools.

Bottom line. Google is simplifying access to its ad tech ecosystem, betting that better developer support will drive more innovation and adoption.

Dig deeper. Introducing the Google Advertising and Measurement Developers Hub!

Most agencies present prospective clients with an account audit as part of their sales process. The purpose is twofold:

But how often do brand marketers turn the tables and audit their agencies in their RFP?

I’m the head of performance marketing at a marketing agency, so I’m clearly writing from a biased perspective. However, over my decade-plus in the industry, I’ve seen too many brands settle for “good enough” because they didn’t know which questions would reveal the cracks in a potential partner’s strategy and approach.

If I were a brand looking for a true growth partner, here are the specific questions I’d ask to separate the top performers from the rest.

A lot of agencies claim to be “full service,” but rarely are they “full excellence.” I’d be looking for where an agency truly spends its time versus where they’re just trying to upsell me.

It’s less about the channels in question (although if, say, LinkedIn is a key growth driver for your brand, they’d better demonstrate proficiency there), and more about how their strengths align with your needs.

If an agency claims to be experts in SEO, creative strategy, and paid media, but 90% of their client base only uses them for paid search, that’s a red flag. You want a partner whose core competencies align with your primary needs.

If you need high-volume creative testing, you want an agency where 80%+ of clients use its creative production frameworks, not one that treats creative as an add-on service.

Dig deeper: Confessions of a PPC-only agency: Why we finally embraced SEO

I miss the days when knowledge of the manual controls at your disposal could set you apart as a high-performing marketer. But those days have been gone for a while.

In 2026, there’s a real danger of over-optimization with the controls we have left. This can reset algorithmic learnings and prevent them from fine-tuning in service of your goals. Agency teams that strike this balance most certainly have a healthier approach than those who either blindly trust algorithms or can’t help tinkering excessively.

One control you can and must be diligent about using is first-party data for enhanced conversions and offline conversion tracking. Part of the job of a great marketer is training the algorithms on which leads and which conversions to target, and first-party data is a huge lever to pull in that regard.
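As a rough illustration of what preparing first-party data for enhanced conversions can involve, the sketch below normalizes an email address and hashes it with SHA-256 before upload. The function name is hypothetical, and the normalization rules shown (trim, lowercase, strip dots from the local part of Gmail addresses) are an assumption drawn from Google’s published guidance, so check the current documentation before relying on them.

```python
import hashlib

# Domains where dots in the local part are assumed to be ignored.
GMAIL_DOMAINS = {"gmail.com", "googlemail.com"}


def normalize_and_hash_email(email: str) -> str:
    """Return the SHA-256 hex digest of a normalized email address."""
    cleaned = email.strip().lower()
    local, _, domain = cleaned.partition("@")
    if domain in GMAIL_DOMAINS:
        # "jane.doe@gmail.com" and "janedoe@gmail.com" are treated as one address.
        local = local.replace(".", "")
    normalized = f"{local}@{domain}"
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()
```

Hashing only after normalization matters: two spellings of the same address should produce the same digest, or the platform cannot match the lead to a signed-in user.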

Don’t just ask for a sample report. Anyone can make a PDF look pretty. You need to understand their philosophy on data.

You’re looking for an agency that’s willing to move upstream. If the majority of their clients are measuring success on clicks, traffic, or even MQLs, run the other way.

A performance-driven agency should be obsessed with revenue, ROAS, and pipeline velocity. Ask them how they handle attribution. If they rely solely on in-platform metrics, which often over-claim credit, they aren’t looking at the full picture.

Dig deeper: What successful brand-agency partnerships look like in 2026

This is actually a pretty common question and has been for years. Too many marketers know the pain of integrating rotating sets of agency teams because the agency can’t hold onto top employees, and you should be evaluating the answer from this perspective.

There’s another factor to consider. Generally speaking, the more experienced a marketing team is, the more effectively it uses AI tools.

Whereas junior marketers might be more avid proponents of AI and quicker to adopt its functionality, they’re also far more likely to use it for things like creative ideation and strategy. Both are areas where high-quality human thought is a true differentiator.

For this answer specifically, remember that you have some great research tools like Glassdoor that you can and should access. Employee tenure is one thing, but a Glassdoor profile with a bunch of red flags is an indicator that the agency might struggle to keep the talent it really wants to retain.

Again, you’re looking for a balance here. Agency teams that don’t use AI at all are almost certainly burning resources on manual tasks, but agency teams that overuse it to replace perspective, critical thinking, and creativity are commoditizing their own client service.

Two follow-up questions to ask:

You’re looking for firm answers and redundant layers for each of these questions — at the very least, someone relatively senior should approve any output before it goes live.

Dig deeper: Why PPC teams are becoming data teams

This is the ultimate litmus test for technical proficiency. A great performance marketer knows where the ad platforms hide the waste buttons. If I were a brand marketer, I’d want to hear about:

If an agency can’t rattle off these specific checks, they’re likely missing the “low-hanging fruit” of budget efficiency. Fixing some of these takes seconds, but missing them costs thousands.

Remember: when you’re choosing an agency partner, it’s the job of each agency to sound as good as they possibly can, but what an agency considers to be a great answer might not be a great fit for your brand.

By focusing on utilization rates of services, strategic application of AI, and approaches to budget efficiency, you’ll find a partner capable of driving actual performance, not just spending your budget.

Dig deeper: How to find your next PPC agency: 12 top tips

Overclockers UK unveils pricing for AMD’s Ryzen 9 9950X3D2 Dual Edition CPU

Overclockers UK has unveiled the pricing of AMD’s Ryzen 9 9950X3D2 Dual Edition CPU, which AMD unveiled last month. This CPU is AMD’s new AM5 flagship, offering 16 cores and 192 MB of total L3 cache. This is the first consumer-grade X3D CPU […]

The post Overclockers UK unveils UK price for AMD’s Ryzen 9 9950X3D2 Dual Edition appeared first on OC3D.

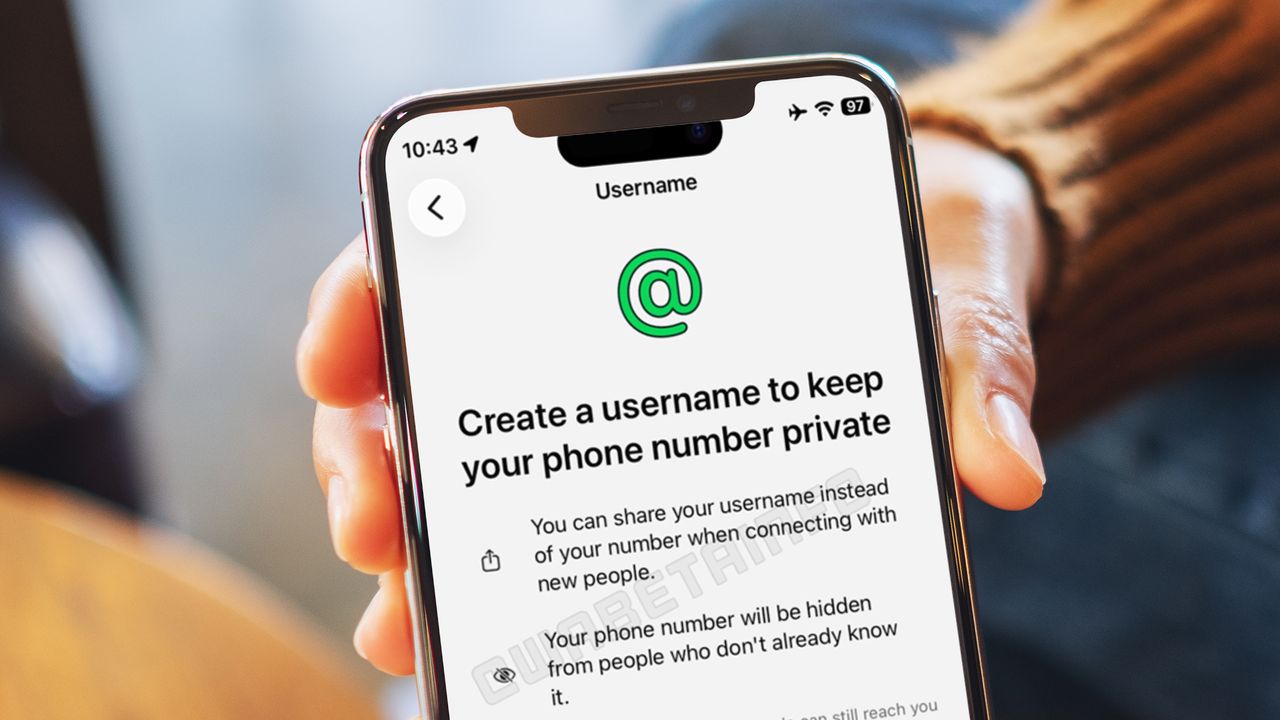

ChoreChomp is an AI-powered chore coach for families. Parents assign custom chores with reference photos, kids snap a photo when they're done, and the AI checks the work and gives age-appropriate feedback. Parents approve final scores, award points, and set reward goals to keep motivation high. The app also has a homework helper that guides with Socratic hints without giving answers. It protects privacy with no child accounts, strict guardrails, person detection, and short-lived photos, and one subscription covers unlimited kids.

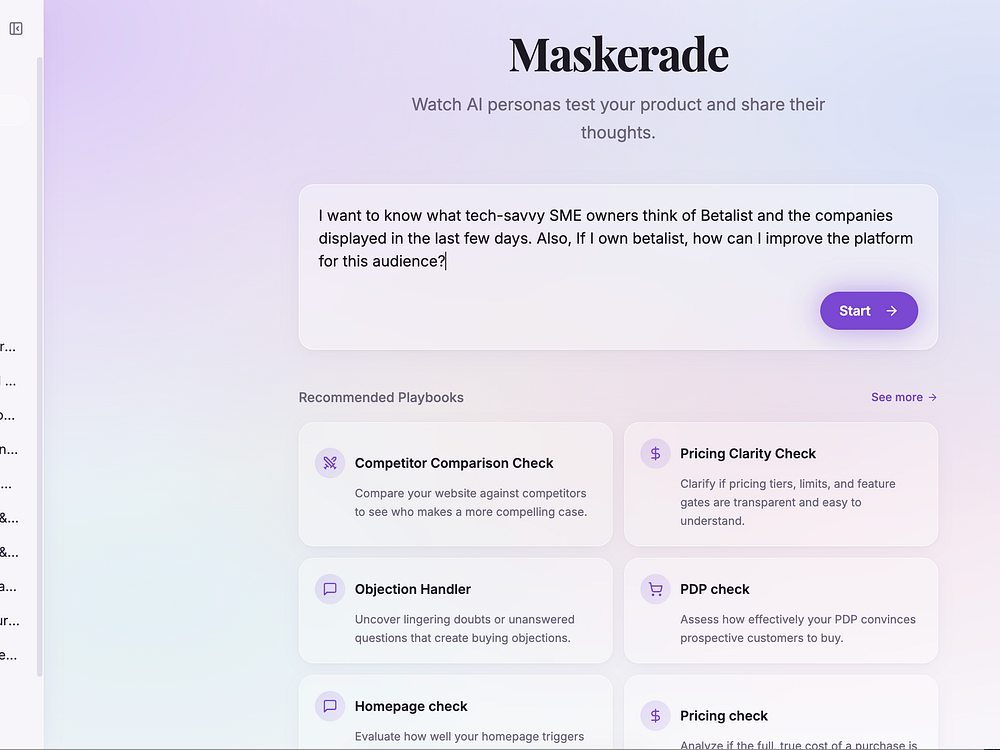

Maskerade.ai lets you deploy unlimited, highly accurate AI personas to browse the web and navigate your site. Get deep, actionable insights into the thoughts and feelings of your hardest-to-reach audiences.

Analytics tools tell you what happened on your site, but not why. Customer research tells you what a group of people thinks and why, but it's slow, labor intensive, and costly. With Maskerade, you can combine the best of both worlds.

Google is laying the groundwork for “agentic commerce,” where users can complete purchases directly inside AI-driven search experiences.

What’s happening. Google has published a new onboarding guide for its Universal Commerce Protocol (UCP) in Merchant Center, outlining how merchants can integrate with the system and enable checkout directly from product listings in AI Mode and Gemini.

The big picture. As AI search evolves from discovery to transaction, Google is pushing to keep users within its ecosystem by embedding shopping and checkout into conversational experiences.

How it works. Merchants must first complete a technical integration, then submit an interest form and wait for approval before gaining access to onboarding tools in Google Merchant Center, including a sandbox environment to test integration, identity linking and checkout APIs.

Why we care. Google is moving search closer to transaction, meaning users may complete purchases directly inside AI experiences instead of visiting your website. This shifts where conversions happen and could change how performance is measured, attributed and optimized. Early adopters of the Universal Commerce Protocol may gain a competitive advantage as shopping becomes more integrated into tools like Gemini.

Zoom in. The protocol acts as an open standard for connecting product data, user identity and payment flows, enabling seamless purchases without redirecting users to external sites.

What to watch. The rollout is gradual and currently limited to the U.S., with a dedicated UCP integration tab expected to appear in Merchant Center accounts over the coming months.

Bottom line. If widely adopted, the Universal Commerce Protocol could redefine how online shopping works — turning search into a full-funnel, AI-powered checkout experience.

Dig deeper. How to onboard to the Universal Commerce Protocol in Merchant Center

Meta Platforms is making it easier for advertisers to implement tracking, reducing technical friction for teams running campaigns across platforms.

What’s happening. Meta released an official Pixel template inside Google Tag Manager, replacing the need for third-party or community-built workarounds.

How it works. The new template allows advertisers to reuse their existing GA4 dataLayer, meaning events already configured for Google Analytics 4 can be leveraged without rebuilding tracking from scratch. It also automatically maps enhanced e-commerce events such as purchases, add-to-cart actions, content views and checkout initiations, eliminating the need for duplicate tagging.
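To make the idea of event mapping concrete, here is a minimal sketch that translates a GA4-style dataLayer push into a Meta-style event payload. The event names on both sides are the platforms’ standard ones, but the mapping table, the `translate_event` helper, and the payload shape are assumptions for illustration; the source does not describe how the official template maps events internally.

```python
from typing import Optional

# Illustrative mapping from GA4 ecommerce event names to Meta Pixel
# standard event names. This is an assumption, not the template's code.
GA4_TO_META_EVENT = {
    "purchase": "Purchase",
    "add_to_cart": "AddToCart",
    "view_item": "ViewContent",
    "begin_checkout": "InitiateCheckout",
}


def translate_event(ga4_event: dict) -> Optional[dict]:
    """Translate one GA4-style dataLayer push into a Meta-style payload.

    Returns None for events that have no entry in the mapping table.
    """
    meta_name = GA4_TO_META_EVENT.get(ga4_event.get("event"))
    if meta_name is None:
        return None
    ecommerce = ga4_event.get("ecommerce", {})
    items = ecommerce.get("items", [])
    payload = {
        "event_name": meta_name,
        "content_ids": [item["item_id"] for item in items if "item_id" in item],
        "content_type": "product",
    }
    # Copy value and currency only when the GA4 push actually carries them.
    for key in ("value", "currency"):
        if key in ecommerce:
            payload[key] = ecommerce[key]
    return payload
```

A single source of truth like this is what removes duplicate tagging: the site pushes one event, and each platform’s tag derives its own payload from it.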

Why we care. This reduces implementation time, lowers the risk of tracking errors and ensures consistency across platforms, especially for advertisers managing both Google and Meta campaigns.

What to watch. Whether this leads to broader adoption of Meta Pixel tracking among advertisers who previously avoided complex setups, and if similar cross-platform integrations follow.

Bottom line. Meta is removing one of the biggest headaches in ad tracking — making it faster and easier to get reliable data across platforms.

First seen. This update was spotted by paid media expert Thomas Eccel, who shared it on LinkedIn.

Ask most ecommerce brands who owns their product feed, and the answer is almost always the same: the paid media team.

Maybe a feed management tool sits under PPC. Maybe the shopping team built the feed years ago, and nobody’s touched the titles since. Either way, SEO rarely has a seat at the table, and it’s often forgotten as part of the broader feed management strategy.

Whether you’re worried about AI search or traditional clicks, you’re missing out on opportunities by excluding SEO from your feed management strategy.

Up to 83% of ChatGPT carousel products match Google Shopping’s organic results, according to a recent Peec AI study analyzing more than 43,000 listings. And 60% of those matches came from Shopping positions 1-10.

On Google’s side, the Shopping Graph now contains more than 50 billion product listings and feeds directly into AI Overviews, AI Mode, and Gemini. AI Overviews appear in roughly 14% of shopping queries, up from about 2% in late 2024. Like many other things we’ve discovered about AI search, the generative results are informed by traditional SERPs.

SEO needs to be the strategic quarterback for brand authority. This is a highly valuable opportunity to work cross-channel toward a common goal of improving visibility across search surfaces. It requires SEO, commerce, and paid media teams to get in the same room.

Typically, brands run a single product feed optimized for Google paid shopping campaigns. Titles are written for bid relevance, descriptions are built for Quality Score, and the feed exists to win auctions, with less consideration for user search behaviors.

As user behavior shifts, search surfaces favor stronger semantic alignment between queries and product data. A title stuffed with paid-friendly modifiers or branded terms isn’t the same as a title that mirrors how someone conversationally searches for a product.

We tested this with a large ecommerce brand. Our agency’s AI SEO team partnered with the commerce team to launch a dedicated product feed for free organic listings, with titles and descriptions optimized specifically for organic visibility, rather than replicating what was already running in the paid feed.

After the organic feed was pushed live:

We didn’t replace our paid feed strategy. Organic and paid shopping solve different problems, so each feed needs to be optimized for its own goal.

Organic feed titles should reflect how your customers actually search, not how your bidding strategy is structured.

Dig deeper: How AI-driven shopping discovery changes product page optimization

Not every feed attribute carries equal weight. If you’re building a dedicated organic feed or just auditing your existing feed for gaps, here’s where you could start.

Google’s algorithm heavily favors feed titles when matching products to queries, and its own documentation emphasizes including important attributes to “better match search queries and drive performance lift.” Consider how a customer might describe what they’re looking for in a conversational way, and how that aligns with product attributes.

Google’s GTIN documentation makes clear that products with correct GTINs receive significantly more visibility. Industry data has consistently shown that properly matched products can drive up to 40% more clicks. GTINs are also the primary signal for aggregating product reviews across sources.
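One check a team could run before uploading a feed is the standard GS1 check-digit test, which catches mistyped GTINs. The sketch below is a minimal illustration, not part of any Google tooling; the function names are hypothetical, and a passing result only means the number is structurally valid, not that it belongs to the right product.

```python
def gtin_check_digit(body: str) -> int:
    """Compute the GS1 mod-10 check digit for a GTIN without its last digit."""
    total = 0
    # Walk right to left; the digit next to the check digit gets weight 3,
    # the next one weight 1, and so on, alternating.
    for position, char in enumerate(reversed(body)):
        weight = 3 if position % 2 == 0 else 1
        total += int(char) * weight
    return (10 - total % 10) % 10


def is_valid_gtin(gtin: str) -> bool:
    """Return True if the GTIN has a valid length and a correct check digit."""
    if not (gtin.isascii() and gtin.isdigit()):
        return False
    if len(gtin) not in (8, 12, 13, 14):
        return False
    return gtin_check_digit(gtin[:-1]) == int(gtin[-1])
```

Running `is_valid_gtin` over every row in a feed export would flag structurally invalid values before Merchant Center does.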

Image issues are still the most common source of Merchant Center disapprovals. Products with both standard and lifestyle images typically see significantly higher engagement.

If budget or bandwidth has kept better product images on the back burner, Google’s Product Studio can help handle some of the editing, so you can test and improve creative at scale without a full reshoot. It’s also a way for SEO and creative teams to collaborate on feed-specific assets and testing.

product_highlight and product_detail

product_highlight lets you add scannable benefit statements that appear in expanded Shopping views. For instance, “water-resistant for light rain commutes” is doing more work than “high-quality material” for both the shopper and the AI. product_detail provides structured specifications that power Google’s faceted filters in organic product grids.

The same semantic work SEOs are doing to optimize product detail pages (PDPs) for conversational search, such as defining ideal buyers, naming use cases, and articulating compatibility, should inform feed attributes.

Product and content teams already understand what drives someone to buy. That context should be in the feed, not just on a brand’s PDPs.

Dig deeper: How to make ecommerce product pages work in an AI-first world

Here’s what makes this investment compound: the feed optimization work done today for organic shopping visibility will also help build brand readiness for agentic commerce standards and applications.

Google’s Universal Commerce Protocol, announced in January, is a framework that enables AI agents to discover products, build carts, and complete transactions directly inside AI Mode and Gemini. The shopper may never land on the brand website to make a purchase. UCP isn’t a replacement for Google Merchant Center, because it’s built directly on top of GMC data.

Feeds are how products enter the Shopping Graph. The Shopping Graph is the dataset AI agents query when processing a shopping request. The new native_commerce attribute added to feeds is what signals that a product is eligible for the UCP-powered “Buy” button in traditional and AI-driven Google services.

Google has also announced the eventual rollout of several new Merchant Center attributes designed specifically for conversational commerce:

These are additions to an existing GMC feed that give AI agents the contextual understanding they need to match products to natural-language queries like “what’s a good waterproof jacket for bike commuting?” These new conversational attributes are rolling out to a small group of retailers first.

This is where feed data and on-page content need to stay tightly aligned. Search surfaces cross-reference a brand’s feed against:

When those layers contradict each other, trust erodes at the domain level.

Dig deeper: 7 organic content investments that drive ecommerce ROI

Product feed strategy and optimization is an opportunity for genuine cross-team collaboration to test, execute, and measure visibility. A holistic approach to managing product details across every surface will benefit brands in both traditional and AI-driven search.

These teams must work together to coordinate their insights and effectively establish an AI SEO operating system. The product feed sits at that intersection as it’s an owned asset managed by commerce infrastructure that directly feeds AI-powered visibility.

The first step is to pull a current feed and compare organic titles to paid titles. The second step is getting the right people in the room to build something better. SEO is most successful when more channels align toward the same goal: better brand visibility.
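That first comparison can be sketched in a few lines. This is a minimal example under stated assumptions: each feed has already been loaded into a dictionary mapping a product ID to its title, and the function name and sample data are hypothetical.

```python
def find_shared_titles(organic_feed: dict, paid_feed: dict) -> list:
    """Return product IDs whose organic title just repeats the paid title."""

    def normalize(title: str) -> str:
        # Ignore case and extra whitespace when comparing titles.
        return " ".join(title.lower().split())

    shared = []
    for product_id, organic_title in organic_feed.items():
        paid_title = paid_feed.get(product_id)
        if paid_title is not None and normalize(organic_title) == normalize(paid_title):
            shared.append(product_id)
    return sorted(shared)


# Hypothetical sample data for illustration only.
paid = {
    "sku-1": "Brand X Jacket Mens Waterproof Sale",
    "sku-2": "Brand X Backpack 20L",
}
organic = {
    "sku-1": "Waterproof cycling jacket for rainy commutes",
    "sku-2": "brand x  backpack 20L",
}
print(find_shared_titles(organic, paid))
```

Any ID the function returns is a product whose organic listing still carries the paid title, which makes it a candidate for rewriting.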

The March 2026 core update finished rolling out today after 12 days and 4 hours, completing Google’s first broad ranking update of the year.

What happened. Google confirmed the rollout ended at 06:12 PDT, per its Search Status Dashboard. The update began March 27 and impacted search rankings globally.

The timeline. Google originally estimated the March 2026 core update would take up to two weeks to complete.

The context. This was the first core update of 2026. It followed the March 2026 spam update and the February 2026 Discover update.

What to do if you were impacted. Google didn’t issue any new guidance for the March 2026 core update. Its standing advice remains:

Google continues to point site owners to its core update and helpful content guidance.

Why we care. Now that the rollout is complete, you can assess impact with more confidence. Analyze ranking and traffic changes, identify winners and losers, and adjust your content strategy based on what the update appears to reward.

Previous core updates. Here’s a timeline and our coverage of recent core updates:

Apple’s next-gen MacBook Neo should feature up to 12GB of memory and an A19 Pro silicon upgrade

According to MacRumors, citing a report from Tim Culpan, Apple plans to release a new MacBook Neo in 2027. This new model will reportedly feature Apple’s new A19 Pro processor, the same chip as the Apple iPhone 17 […]

The post Apple’s next-gen MacBook Neo should feature some BIG upgrades appeared first on OC3D.

Google's March core update finished rolling out. Here's what to know about the rollout and when to check your data.

The post Google Confirms March 2026 Core Update Is Complete appeared first on Search Engine Journal.

How retailers can adapt to the rapidly evolving advertising landscape, on the Ads Decoded Podcast.


Hreflang has long been a core mechanism in international SEO, directing users to the right regional version of a page. That approach worked when search engines primarily returned static results.

AI-driven synthesis changes that. Instead of returning lists of links, AI systems construct answers. They don’t need, nor want, your perfectly implemented hreflang tags. They aren’t looking for instructions on which page to serve. They’re trying to determine which answer is best supported across sources.

Your content has to hold up when the model compares it against everything it’s seen, regardless of language or origin. If it doesn’t, it won’t be used.

We need to address a fundamental misunderstanding of the hreflang attribute. Hreflang has always been a switcher, not a booster.

If your brand lacked organic authority in Australia before implementing the tag, adding the en-au attribute wouldn’t magically improve your rankings in Sydney. Its only function was to ensure that if you did rank, the user saw the correct regional version.

In AI search, this “you vs. you” dynamic has become a liability. While traditional search still relies on these tags to organize traffic, AI models often bypass them during the synthesis phase. If a brand’s U.S.-based .com site possesses decades of authority, the AI’s internal logic may determine that the U.S. site is the true source of information.

Consequently, even when a user in Berlin searches in German, the AI may synthesize an answer based on the U.S. data and simply translate it on the fly, effectively ghosting the brand’s localized German site despite perfectly implemented hreflang tags.

AI models don’t just answer the query you see. They expand it into dozens of hidden checks, comparing sources, validating claims, and pulling in information across languages to see what aligns.

ChatGPT often translates and evaluates queries in English even when the user searches in another language, research from Peec AI shows. This reinforces how query fan-out operates across markets. If your local entity doesn’t hold up in that broader comparison, it doesn’t get used.

A second issue happens before retrieval even begins. During training, LLMs compress what they see so it can be stored and reused at scale.

When multiple regional pages look too similar, they don’t stay separate. They’re folded into a single representation, also known as canonical tokenization.

Local details — phone numbers, office locations, and market-specific references — don’t always survive that process. They’re treated as minor variations rather than meaningful signals.

By the time the model is asked a question, your local site is often no longer competing. In many cases, it’s already been absorbed into the global one.

Dig deeper: What the ‘Global Spanish’ problem means for AI search visibility

To compete globally, expand your strategy to include signals that resonate with AI’s data supply chain.

Meta tags tell systems what you intend. Infrastructure often tells them what to believe. Datasets like Common Crawl use geographic heuristics, IP location, and domain structure to make sense of content at scale. That happens early in the process, before anything resembling ranking.

This means your content may already be placed in a market before the model ever evaluates it. If your regional domains aren’t supported by local infrastructure or delivery, you’re sending mixed signals. Those are hard to recover from later.

To break the semantic gravity that leads to entity compression, you need what I would call a clear “knowledge delta.” Most global teams fail here because they think localization means translation. It doesn’t.

There’s no universally accepted magic number for unique content. From a semantic vector perspective, I speculate that at least 20% of the content on a local page must be unique to prevent the model from collapsing your local identity into your global one.

To address this, front-load market-specific data, such as regional shipping logistics, local tax identifiers, and native case studies, into the first 30% of your page. This lets you provide the mathematical proof the model needs to cite your local URL as a distinct authority.
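A rough way to audit this is to measure how much of a local page does not appear on the global page. The sketch below is a crude proxy, not a model of how any LLM actually compresses content: it compares normalized sentences, the function names are hypothetical, and the 20% constant is only the speculative threshold mentioned above.

```python
import re

# Speculative threshold from the text above, not an established figure.
SPECULATED_MIN_UNIQUE_SHARE = 0.20


def split_sentences(text: str) -> list:
    """Split text into lowercased, whitespace-normalized sentences."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [" ".join(part.lower().split()) for part in parts if part.strip()]


def unique_share(local_text: str, global_text: str) -> float:
    """Return the share of local sentences that do not appear on the global page."""
    local_sentences = split_sentences(local_text)
    if not local_sentences:
        return 0.0
    global_sentences = set(split_sentences(global_text))
    unique = [s for s in local_sentences if s not in global_sentences]
    return len(unique) / len(local_sentences)


def looks_distinct(local_text: str, global_text: str) -> bool:
    """Check the local page against the speculative uniqueness threshold."""
    return unique_share(local_text, global_text) >= SPECULATED_MIN_UNIQUE_SHARE
```

Sentence matching is deliberately simple here. It treats a lightly reworded sentence as unique, so a real audit would likely need a fuzzier comparison.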

AI models interpret market relevance by looking at the company you keep in the text. Incorporate geographic anchoring by referencing local neighborhoods, regional landmarks, or specific transit hubs (e.g., “located near the Alexanderplatz station” in Berlin).

These co-occurrence signals pull your brand’s vector embedding toward the specific local coordinate in the model’s training data, creating a geographic fence that helps the AI disambiguate your local office from your global headquarters.

Dig deeper: How to craft an international SEO approach that balances tech, translation and trust

The origin of your links is a primary signal of market authority. During the fan-out phase, AI models look for regional consensus.

This is one of the areas where traditional link building logic starts to break. It’s not just about getting links. Consider where those links originate, along with their authority and contextual relevance.

If your Australian page has backlinks primarily from U.S.-based websites, the model has little evidence that you actually belong in or are relevant to the Australian market. Local sources, including high-trust local sites and location-specific news outlets, change that. Without them, you’re often treated more like a visitor than a participant.

LLMs pick up on regional language nuances far more than most teams expect. This is where simple translation starts to break down. Market-specific terms, colloquialisms, formatting, and even small legal references signal whether something actually belongs in a market.

Use the terms people in that market actually use — things like “incl. GST,” local identifiers like ABN, and even spelling differences. Without these signals, the page may be technically and linguistically correct, but it won’t register as truly local.

As mentioned, LLMs often generate multiple incremental queries during their research phase. These invisible queries may focus on local friction points, such as “How does this product comply with [name of local regulation]?”

By incorporating local FAQ clusters that address these nuances, you ensure your local URL survives the fan-out check, making your global .com too generic to be cited in a localized answer.

Dig deeper: Why AI optimization is just long-tail SEO done right

Expand your SEO reporting beyond traditional rank tracking. Incorporate AI citation audits by using a local VPN to query the most popular generative engines in your target markets.

If the AI consistently pulls from your global .com domain for a local query, it’s a clear signal that your local domain lacks the necessary evidence chain. Identify where this market drift is occurring and reinforce those specific pages with more unique local data and infrastructure signals.
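Once the cited URLs from those local queries have been collected by hand or by another tool, tallying them is straightforward. The sketch below assumes the local and global sites live on separate domains (it would not distinguish a subdirectory setup such as a /de/ folder), and the function name and example domains are hypothetical.

```python
from urllib.parse import urlparse


def citation_split(cited_urls: list, local_domain: str, global_domain: str) -> dict:
    """Count how many cited URLs point to the local site, the global site, or elsewhere."""
    counts = {"local": 0, "global": 0, "other": 0}
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == local_domain or host.endswith("." + local_domain):
            counts["local"] += 1
        elif host == global_domain or host.endswith("." + global_domain):
            counts["global"] += 1
        else:
            counts["other"] += 1
    return counts


# Hypothetical citations gathered from answers to German-market queries.
citations = [
    "https://www.example.de/produkt",
    "https://www.example.com/product",
    "https://example.com/faq",
    "https://news.example.org/article",
]
print(citation_split(citations, "example.de", "example.com"))
```

A global count that consistently outweighs the local count for local queries is the market drift described above.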

Hreflang and traditional technical signals still shape how search engines organize and deliver content, but they don’t determine what AI systems use.

AI models evaluate which sources to use based on evidence of local relevance. Without a distinct presence in each market, they default to the version of your brand they trust most, which often isn’t the one you intended.

Translation alone doesn’t establish that presence. Your content needs to demonstrate that it belongs in the market it’s meant to serve.

Dig deeper: Multilingual and international SEO: 5 mistakes to watch out for

You’re facing a major shift as familiar manual targeting levers disappear in favor of AI-driven discovery. Platforms’ automated tools are collapsing campaign types, obscuring data, and replacing manual targeting with intent-based algorithms.

This is a shift from selection to prediction. You won’t adapt by holding onto old controls — you’ll adapt by learning to engineer the inputs that replace them. Here’s how to make sure you have the tools to stay on top.

You previously relied on granular keyword lists, demographic filters, and custom exclusions to target ideal customers. You told platforms exactly who to target and paid to access that inventory.

Now, platforms have eliminated those controls:

Targeting didn’t disappear — it moved inside the platform’s black box. The algorithm now targets based on data within its own ecosystem.

Platforms are clear: manual segmentation is gone, and automation is here to stay.

If targeting is now internal to the algorithm, your role changes. It’s less about selecting your audience and more about engineering it.

The distinction is critical. Traditional targeting focused on selecting audiences. Audience engineering focuses on instructing the algorithm through high-quality conversion signals, precise creative, and first-party data. It teaches AI systems who to find and what to optimize for.

Here’s how this changes your workflow:

In the past, to target CFOs, you might use job title filters and negative keyword lists. With audience engineering, you instead upload high-quality data (e.g., “deal closed” signals) to define a high-value prospect. You also tailor creative to CFO-specific pain points, teaching the AI to reach people who engage with that message.

If you fight the algorithm and resist this shift, you’ll struggle. If you embrace it, you’ll succeed by optimizing conversion signals, refining creative, and strengthening your data infrastructure.

As manual levers disappear, the gap between strong and average performance comes down to signal quality. Audience engineering is what closes that gap.

You must optimize three critical inputs the AI uses to segment for you:

Tell the algorithm what matters. If you optimize for cheap, top-of-funnel leads, it will get efficient at finding people who fill out forms but never buy — that’s not what you want.

Focus on meaningful business outcomes, not top-of-funnel metrics. Integrate Offline Conversion Imports (OCI) and Conversions API (CAPI) to feed data on final sales, not just initial clicks. With value-based bidding, you teach the algorithm to prioritize users who drive revenue — effectively targeting high-value customers without using demographic checkboxes.
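As an illustration of feeding final sales rather than form fills, the sketch below filters CRM records down to closed deals and attaches the deal value. Every field name here (`stage`, `click_id`, `deal_value`, and so on) is a hypothetical stand-in; each ad platform defines its own upload schema, so the output would need to be mapped to that format.

```python
def build_conversion_rows(crm_records: list) -> list:
    """Keep only closed deals that can be tied back to an ad click, with their value."""
    rows = []
    for record in crm_records:
        # Skip leads that never closed or cannot be matched to a click.
        if record.get("stage") != "closed_won" or not record.get("click_id"):
            continue
        rows.append({
            "click_id": record["click_id"],
            "conversion_time": record["closed_at"],
            "value": float(record["deal_value"]),
            "currency": record.get("currency", "USD"),
        })
    return rows


# Hypothetical CRM export for illustration only.
crm = [
    {"click_id": "abc123", "stage": "closed_won", "closed_at": "2026-03-01T10:00:00Z", "deal_value": "12000"},
    {"click_id": "def456", "stage": "form_fill", "closed_at": None, "deal_value": "0"},
    {"click_id": "", "stage": "closed_won", "closed_at": "2026-03-02T09:00:00Z", "deal_value": "5000"},
]
print(build_conversion_rows(crm))
```

The design choice is that unclosed leads are never sent at all, so the algorithm only learns from outcomes that produced revenue.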

In a world without demographic filters, your creative becomes your primary targeting mechanism. The specificity of your message does the filtering.

If your creative speaks broadly, the AI shows it broadly. If it speaks to a niche pain point, the AI finds users who resonate with that pain point.

Build ad sets around motivations, not product categories.

Your customer lists, CRM data, and engagement signals are the foundation the algorithm learns from.

This data replaces third-party signals and becomes a critical competitive advantage. You’re giving the algorithm a cheat sheet to identify your best customers.

The shift to AI-driven targeting isn’t theoretical. As an agency managing over $215 million in annual paid media spend, we’ve tested this across platforms and validated it with performance data. Here’s what we’ve learned:

A long-time client had a well-established view of its target audience based on years of campaign performance and customer data. Campaigns used manual age caps and layered targeting to protect efficiency.

When we transitioned those campaigns to Advantage+ Audiences, manual exclusions were removed, allowing the algorithm to optimize based purely on conversion signals and creative performance.

During testing, Meta identified and scaled into an older demographic that had previously received minimal budget. This segment delivered a 37% higher CTR than the campaign average and drove stronger downstream conversion performance.

As spend shifted into this audience, conversions came at a lower cost per result while total revenue increased. Broader targeting improved return on ad spend (ROAS) compared to the prior manual strategy.

This reflects a broader trend with Advantage+ Audiences. Paired with strong conversion goals, accurate data signals, and high-quality creative, it consistently identifies high-value segments that manual targeting restricts or misses.

For another client, we implemented a Microsoft PMax test, using advanced audience targeting and first-party data to reach high-intent prospects across Bing, Outlook, MSN, and the Microsoft Audience Network.

With in-platform placement insights, we monitored performance closely and reacted quickly early on. The campaign drove a 10% increase in conversion rate, a 14% decrease in cost per lead, and a 4x increase in form fills in the first month — followed by another 2x the next month.

This reinforced a key principle: automation performs best with strategic human oversight. While we fed strong audience signals and conversion data, performance drifted as the system expanded into less efficient placements. With Microsoft support and ongoing monitoring, we excluded underperforming placements and refined targeting without over-constraining the campaign.

By letting PMax handle scale and optimization — while maintaining disciplined oversight and guardrails — we preserved efficiency and improved overall performance.

Automated targeting is powerful, but not benevolent. It optimizes for the math you give it. Here are pitfalls to avoid.

This is the most important risk. Poorly defined conversion events, incomplete data pipelines, or low-quality first-party data limit performance and train the algorithm on the wrong outcomes.

If you feed it noise, it will scale that noise — wasting budget on low-quality traffic.

If your goal is too broad or lacks strong quality signals, the algorithm will maximize volume, even when that volume doesn’t drive real business value.

If your seed data is biased, the AI will keep optimizing toward that bias — potentially missing valuable adjacent audiences. This “sampling bias” in training data is a real, underappreciated risk in automated systems.

Platforms have a financial incentive to push broader automation. Without your oversight and willingness to intervene, campaigns can drift from your business goals. “Set it and forget it” fails. You need to monitor campaigns and nudge them back on track when they drift.

As targeting automates, creative becomes your primary differentiator. Neglect it and you lose.

Build creative that directly answers your audience’s pain points. Stand out.

So how do you operationalize this? Here are three steps to start engineering your audiences today:

The era of manual targeting is over, but precision matters more than ever. Audience engineering is your competitive advantage. By teaching algorithms who to target and what matters, you unlock AI’s full potential and win in this evolving landscape.

Intel adds “Gaming Support” to its ARC PRO B70 and ARC PRO B65 GPUs with its newest ARC GPU drivers

With the release of its ARC Graphic Driver 32.0.101.8629 WHQL, Intel has given its ARC Pro B70 and ARC Pro B65 GPUs official “gaming support”. This means that users of Intel’s “Big Battlemage” GPUs will […]

The post Intel delivers official “gaming support” to its Big Battlemage ARC Pro B70 and B65 GPUs appeared first on OC3D.

Rythm adds a bouncer to your email so you control who reaches you. It builds a guest list from your Gmail or Outlook contacts, lets known senders through, and files unknown senders into a separate folder you can check on your terms. Strangers can pay a small cover charge to reach your inbox, with paid messages marked PAID and funds sent to your wallet. Rythm scans messages only to detect payment proofs and discards contents, never storing or sharing any email content.

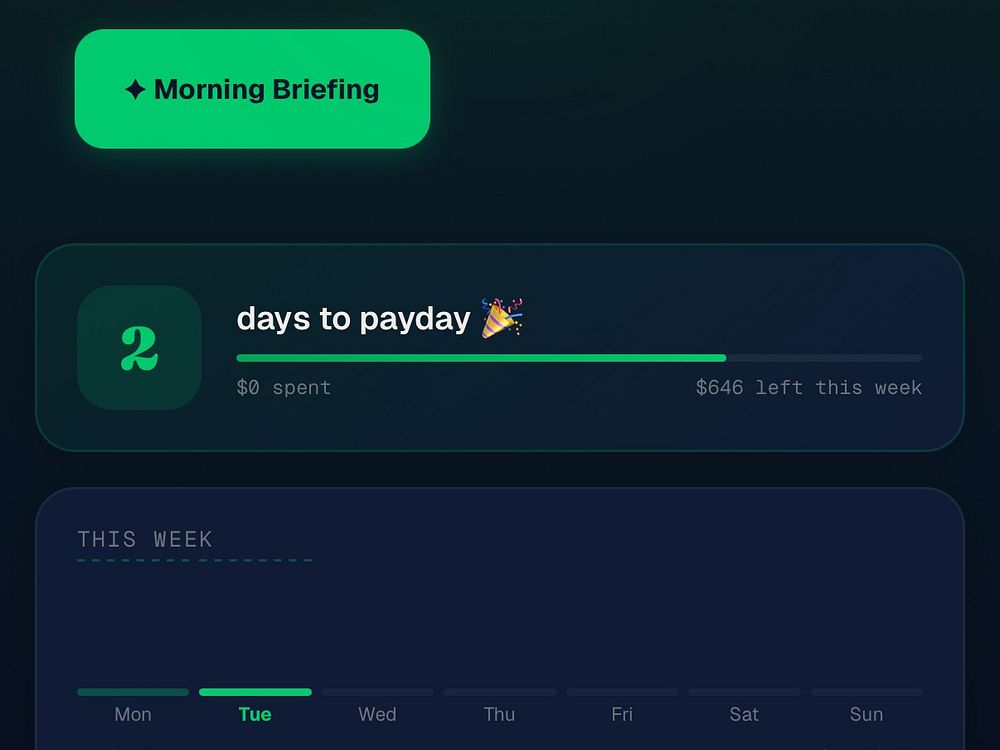

DaySet calculates your daily guilt-free spend after all your bills and subscriptions are accounted for. Enter your income and expenses once and get one number every morning telling you exactly what you can freely spend that day. Unspent amounts roll over to tomorrow. It also includes an AI coach, recipe photo scanner, tax deduction tracker with PDF export, goals, habits, and a bill calendar with reminders. It's built for anyone who wants to stop guessing and start owning every day.

AMD's Ryzen 5 5500X3D extends AM4's life once again, but is it worth it? We tested 14 games to see how this cut-down 3D V-Cache chip stacks up against Zen 3, older Ryzen parts, and newer CPUs.

Axiom enables enterprise teams to turn complex decisions into action quickly. It centralizes procurement and alignment workflows, lets AI agents research options, propose criteria, and score vendors against documentation and RFPs, and generates audit-ready Architectural Decision Records.

Use Axiom to compare human intuition with data-driven scores, collaborate asynchronously to resolve gaps, and approve outcomes with clear traceability. Replace weeks of meetings with days of structured, transparent evaluation.

Google's John Mueller answers question about how Google handles multiple URLs and duplicate content.

The post Google Says It Can Handle Multiple URLs To The Same Content appeared first on Search Engine Journal.

Naftiko turns existing data and APIs into governed, reusable capabilities for AI. Teams declare what they consume and expose in YAML specs, run them with an open-source engine, and publish them to a runtime where discovery, composition, and observability are built-in. Policy-driven controls, identity propagation, and audit trails keep agents inside trust boundaries while reuse metrics and consistent packaging reduce duplication and speed delivery.

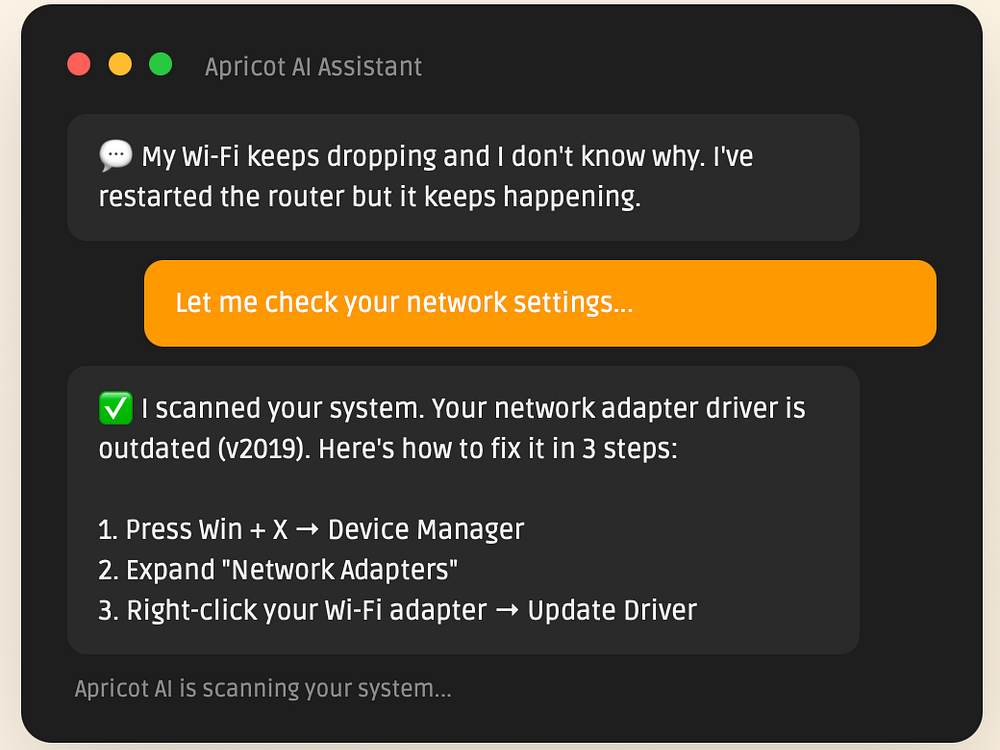

Apricot AI provides 24/7 tech support through a lightweight Windows taskbar app. It reads your hardware, drivers, and software to deliver personalized, step-by-step fixes in seconds instead of generic search results.

For $19/month, you get unlimited questions across common issues like Wi‑Fi, printers, slow PCs, drivers, app errors, and more. Apricot AI keeps your data private and uses system info only to answer your questions, so you can solve problems fast without appointments or jargon.

Hexys is a privacy-first behavioral recovery platform built to break compulsive digital habits. Its name comes from the ancient Greek "hexis," Aristotle's concept of stable character built through repeated practice. Every check-in, honest journal entry, and day you show up is a deposit toward who you are becoming. Hexys encrypts your sensitive data on your device with AES-256-GCM zero-knowledge encryption before it reaches our servers, storing only ciphertext. Features include a journal, Arcos AI companion, streak tracking, XP progression, anonymous accountability pods, and a content blocker.

Local real-time voice transcription for Mac

Free business calculators — instant results, no signup

An AI-powered Job Search System built on Claude Code

One command to back up every Git repo you have; and more!

Share only part of your screen in video calls

Prove your private GitHub work and contributions

The SEO agent that lives inside Claude & via MCP

Bet your friends on Strava challenges and losers pay in USDC

Your projects. Your way.

Not just dictation and private AI voice toolkit

Verify passports, ID cards, and digital credentials via API

View large JSON files in your browser. Nothing uploaded.

Spell check for video - catch quality issues before you post

Shield your keyboard from kids and pets

Mechanical keyboard sounds for your Mac

Post your startup. Set your terms. Find investors.

Checks PRs against decisions your team approved in Slack

Hire an AI outbound sales rep as your next coworker

Launch on-brand pages for every campaign, ad, and prospect.

Business intelligence that doesn't just answer — it acts.

Mac Metrics In Your Menu Bar

The Mac app your body thanks you for

The roommate matching app built for college students

The all-in-one workspace for content agencies & editors

Open-source benchmarks for cloud browser infrastructure

Public changelog for builders to share product updates

Better lighting and larger scales for 3D world generation

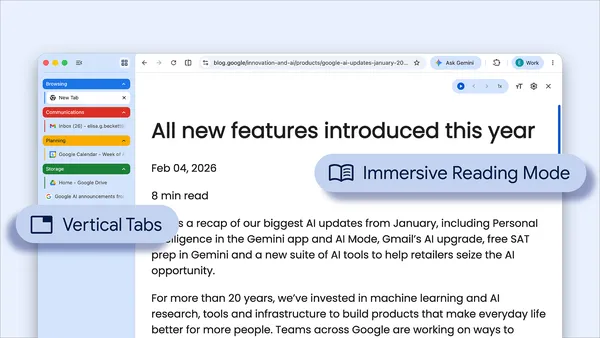

Chrome now supports vertical tabs and immersive reading mode

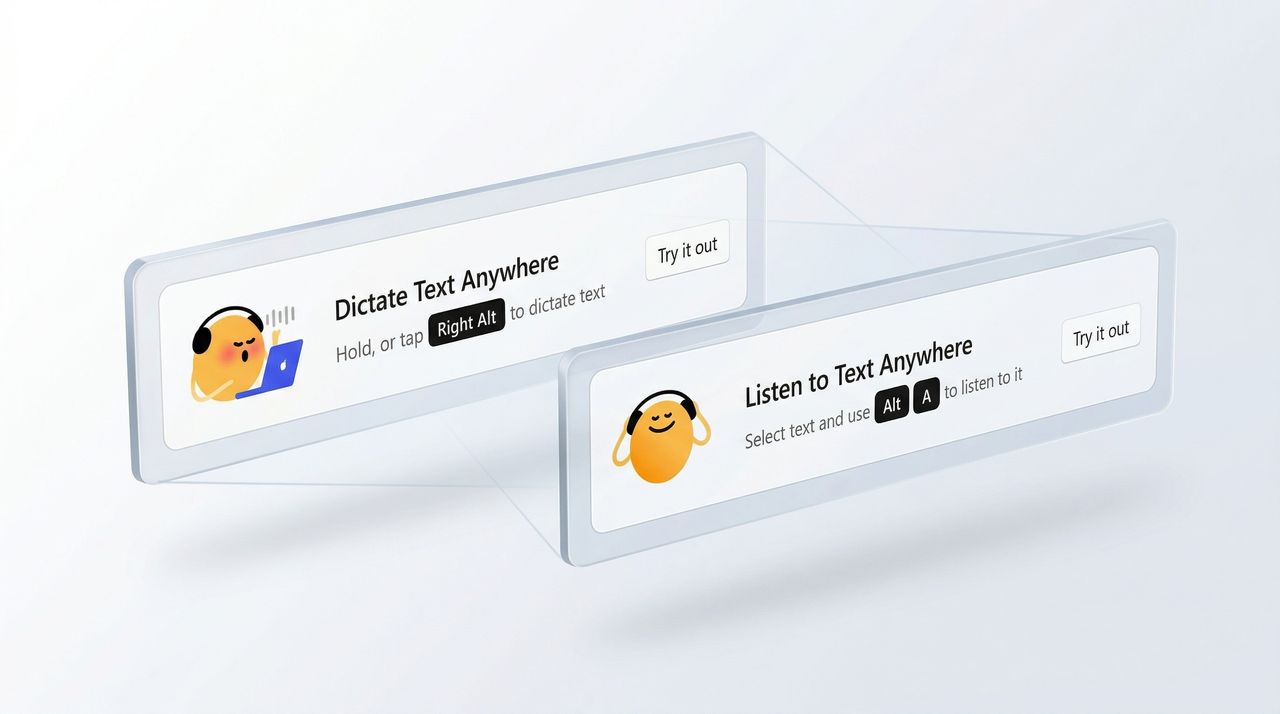

Open-source AI voice input

Peer-to-Peer Podcasting for Agents

A flexible AI writing assistant for selected text on macOS

Share anything as video messages

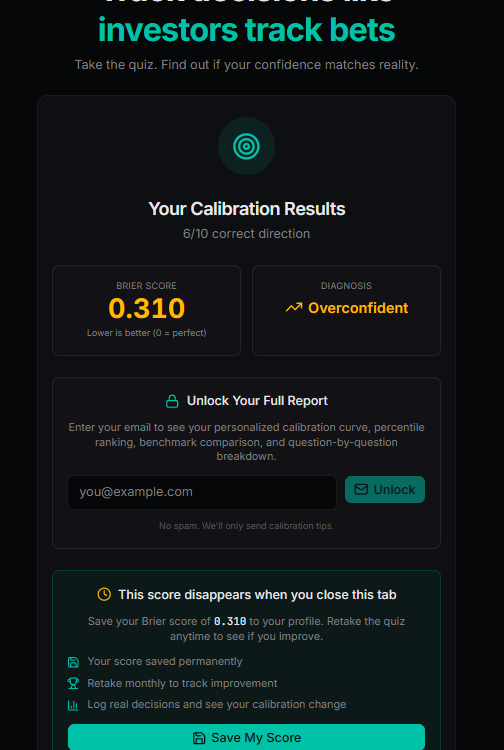

How well do your gut decisions actually hold up? Convexly is a decision intelligence platform that measures this. Log predictions with probabilities, resolve outcomes, and see how your confidence matches reality. It calculates Brier scores, calibration curves, and runs Monte Carlo simulations to stress-test your choices. Start with a free 2-minute calibration quiz, then track real decisions to improve over time.

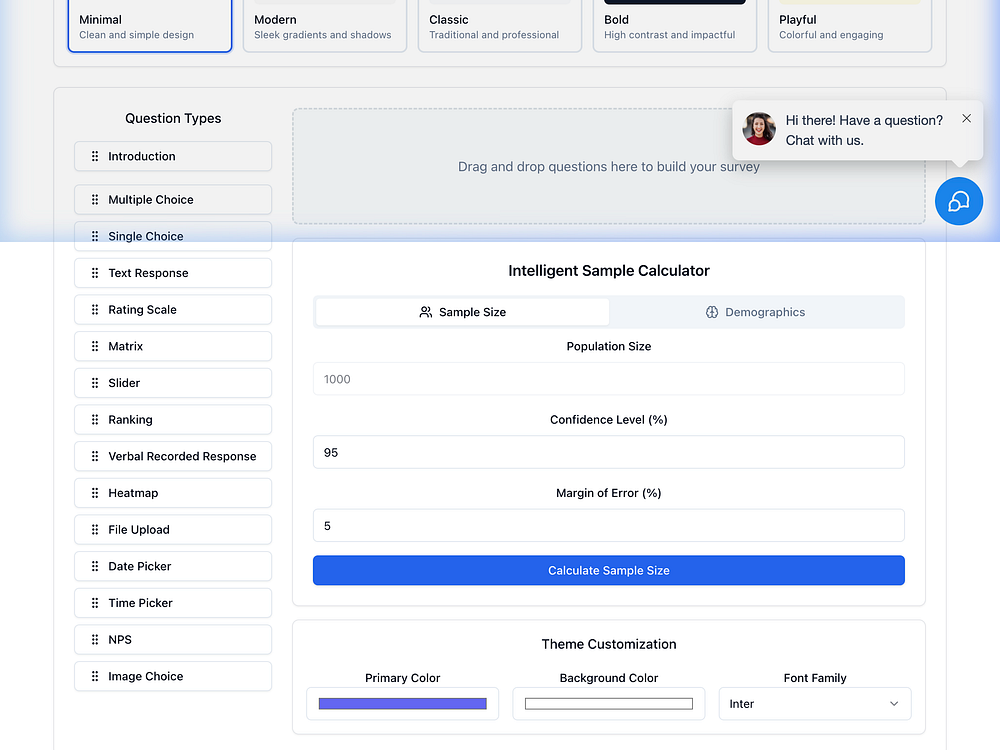

Research Rocket helps founders validate ideas before building. Launch waitlist landing pages and smoke tests in minutes, run pre-launch surveys and concept tests, and get AI-scored demand signals with clear insight summaries. Use built-in tools for card sorting, tree testing, interview guides, and qualitative analysis to map user needs and make evidence-based decisions.

BeatMusic is an online AI music generation platform for creators, musicians, and content producers. No music theory or expensive equipment needed—just describe what you want and get professional-quality songs in minutes. It offers 20+ professional tools including AI Cover to transform any song with 100+ vocal styles and genres, AI Music Video Generator to turn static images into music videos, and AI Singing Photo to make anyone in a picture sing your song.

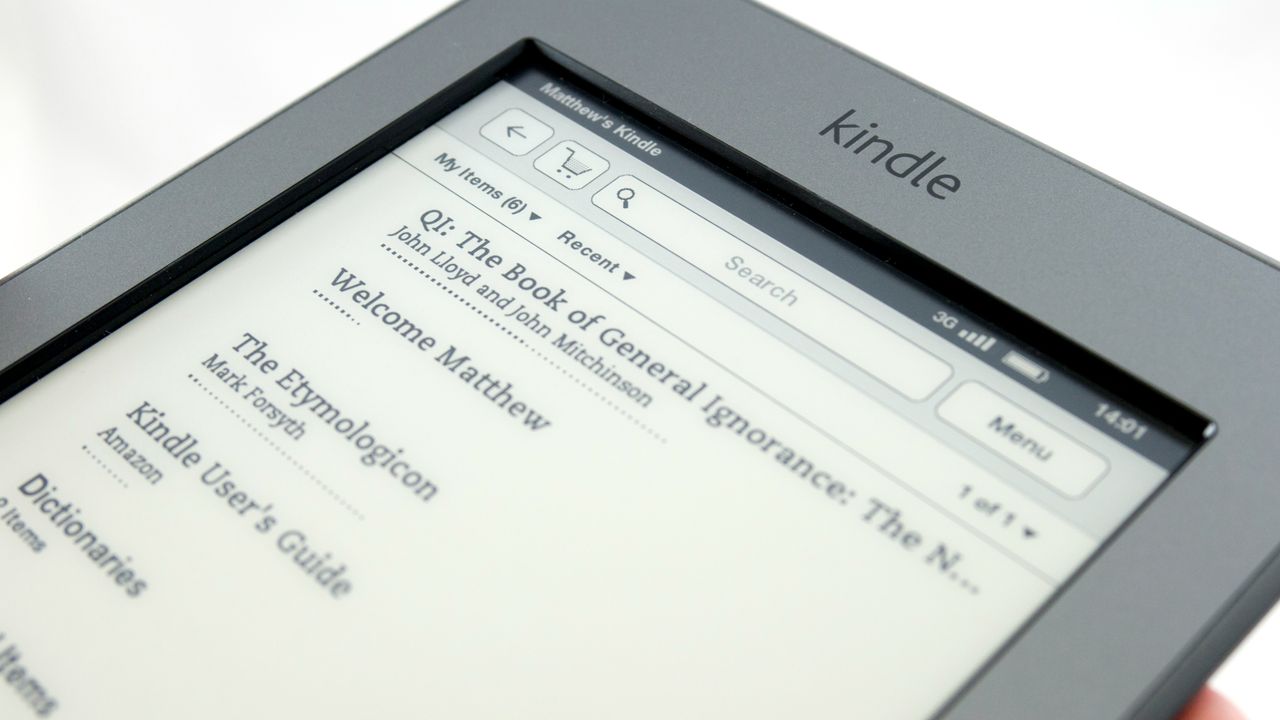

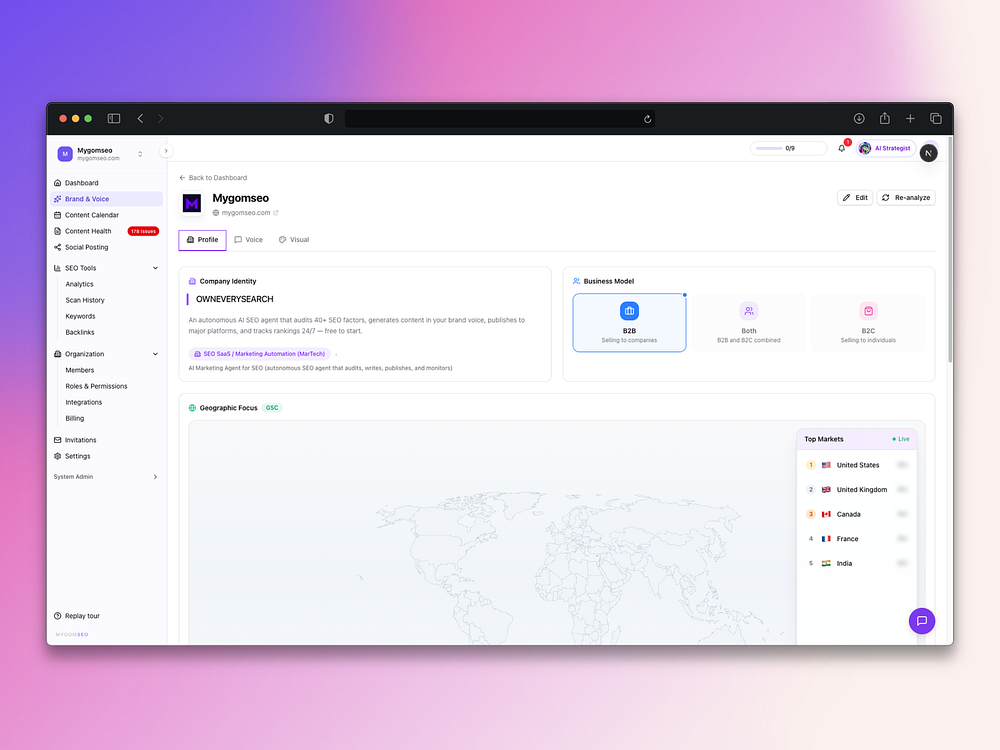

Mygomseo is an AI marketing agent that helps you rank across Google and AI search. It scans your site in seconds, runs 40+ technical checks, connects to Search Console, and uncovers issues and keyword gaps. It learns your brand voice, plans a content calendar, writes SEO articles, and auto-publishes to 13+ platforms including WordPress, Shopify, and Webflow. Mygomseo tracks rankings, backlinks, and anomalies, delivers reports, and answers questions on demand so you grow search visibility with minimal effort.

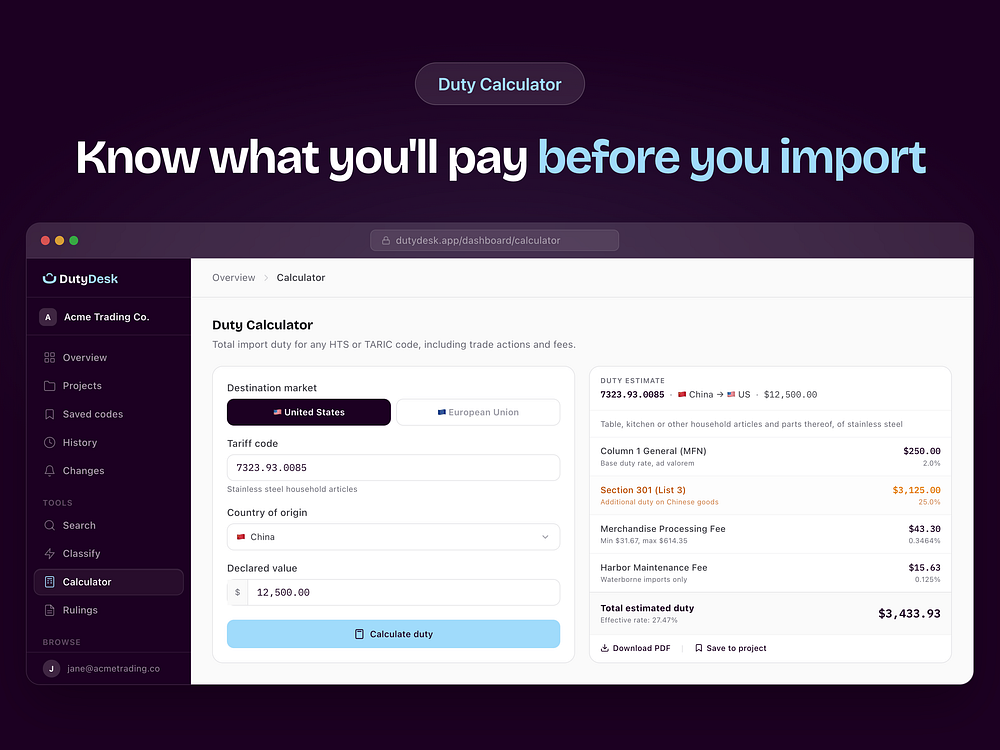

DutyDesk helps importers and trade professionals look up US and EU tariff rates, calculate total landed costs, and classify products. You can search by name or HTS code to see full rates including trade actions and fees, or use AI classification backed by GRI rules and official rulings. Set alerts for rate changes, organize codes by client or shipment, and access data via a REST API. Data sources include USITC, CBP, the Federal Register, TARIC, EUR-Lex, and EU BTI.

The app also updated reply settings, allowing paying users to give second-degree connections the ability to comment on posts.

The brief comment function is being expanded beyond mutual followers and could potentially become a new way for creators to broadcast information.

The app highlighted the popularity of its public discussions during March Madness, though Threads and X still have more active users during live events.

New demographic data points could be valuable for brand partners, while Google’s latest Nano Banana model will help with image generation.

There may be opportunities for wellness brands that want to engage with people beyond the confines of a doctor’s office, according to a new study from the company.

New insight from the platform highlights the importance of variable signals within Pin recommendations.

Google CEO Sundar Pichai said AI models could expose more software vulnerabilities and agreed it was plausible AI is affecting zero-day exploit markets.

The post Google CEO Says AI Could ‘Break Pretty Much All Software’ appeared first on Search Engine Journal.

Google is giving advertisers new visibility into whether its automated recommendations actually drive performance — a long-standing blind spot in the platform.

What’s happening. A new “Results” tab within Recommendations shows the incremental impact of bidding and budget changes after they’ve been applied, allowing marketers to evaluate outcomes instead of relying on assumptions.

How it works. The feature attributes performance changes to specific recommendations, helping advertisers understand what effect adjustments like budget increases or bid strategy shifts had on results.

Why we care. Marketers can now validate whether recommendations improved performance, making it easier to decide which automated suggestions are worth adopting in the future.

Between the lines. Google has a vested interest in encouraging adoption of its recommendations, so providing performance data could build trust — but it also raises questions about how that impact is measured.

The catch. Advertisers may question whether the reported results are fully objective or skewed toward showing positive outcomes, given Google’s incentives.

What to watch. How detailed and transparent the reporting becomes — and whether advertisers see mixed or negative results alongside wins.

Bottom line. Google is moving from “trust us” to “here’s the proof,” but advertisers will be watching closely to see how impartial that proof really is.

First seen. Arpan Banerjee first spotted this update and shared the new tab on LinkedIn.

Google is giving advertisers more control over how AI generates ad copy, making it easier to scale campaigns without losing brand consistency.

What’s happening. Google Ads is rolling out a beta feature that allows marketers to copy text guidelines from existing campaigns and apply them to new ones, eliminating the need to rewrite brand rules from scratch.

How it works. Advertisers can replicate approved tone, style and messaging rules across campaigns in one click, ensuring AI-generated ads stay aligned with brand standards while reducing setup time.

Why we care. The feature helps teams launch campaigns faster by reusing what already works, while maintaining consistency across large accounts where multiple campaigns run simultaneously.

Between the lines. This shift reflects a growing demand from marketers to “train” AI systems rather than rely on them blindly, effectively turning brand guidelines into reusable inputs for automation.

Bottom line. AI is speeding up ad creation, but control is becoming the real differentiator — and Google is starting to hand more of it back to advertisers.

First spotted. This update was spotted by paid media expert Arpan Banerjee, who shared the alert on LinkedIn.

ZeroTwo lets you access the combined capabilities of Claude, Perplexity, ChatGPT, Manus, and Higgsfield. These top AI platforms each have unique features that give them special abilities beyond their models. Now you can use all of them without paying for several subscriptions. Perplexity's agentic search, Claude's agentic connector, ChatGPT's apps, and Higgsfield's AI tools for creatives are all available on one platform.

The platform also offers deep research, canvas mode, and shared access to threads and projects. Plans include unlimited messages, expanded memory, priority performance, and team features for businesses.

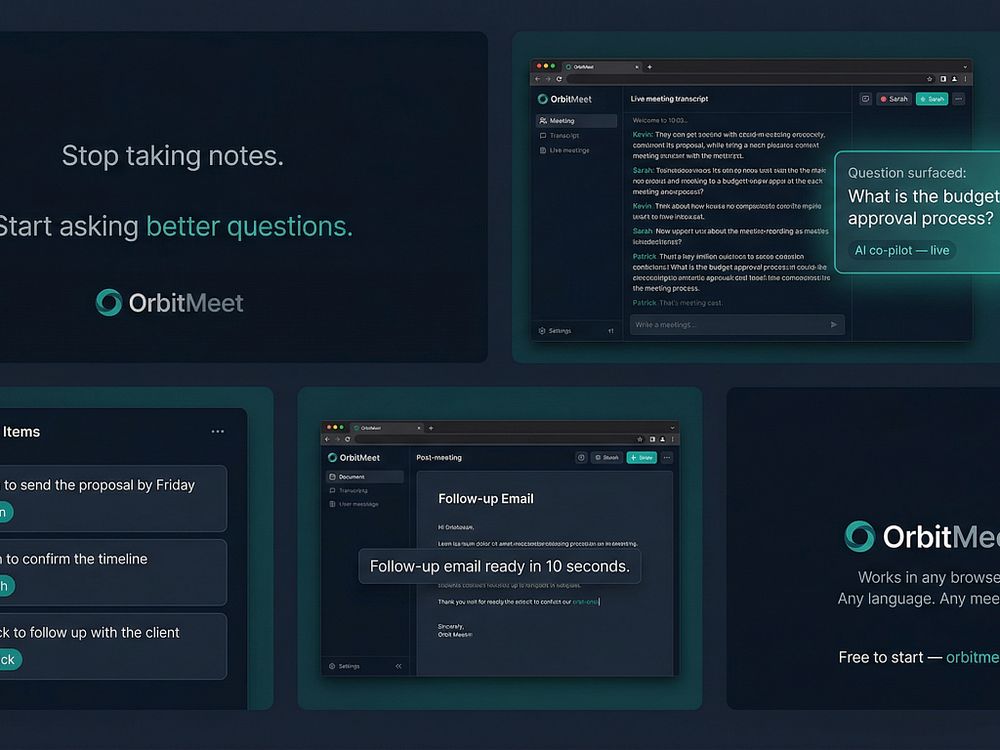

OrbitMeet is a browser-based AI meeting co-pilot that listens to your meetings in real time, surfaces questions you might miss every 75 seconds, and builds your summary as you talk with no plugins or installation.

It detects action items by speaker name, generates follow-up documents such as emails, memos, and action trackers in seconds, and works across Zoom, Teams, Google Meet, or in-person meetings. It's designed for consultants, founders, and distributed teams working in multiple languages. A free plan is available, with Pro at $20.5 CAD/month.

Google says its AI-powered advertising tools are starting to deliver meaningful results, including major revenue gains for some retailers, as it experiments with how ads work in AI-driven search.

The big picture. Fears that AI chatbots like ChatGPT would disrupt Google’s core search business haven’t materialized, and instead the company’s ads business continues to grow, suggesting AI may be expanding how people search rather than replacing it.

By the numbers:

What’s happened. Google is embedding ads into its AI-powered search experiences, including AI Mode powered by Gemini, while introducing new ad formats designed for conversational queries and tools that allow brands to shape how they appear in AI-generated answers, with a new “business agent” feature enabling companies like Poshmark and Reebok to control how their products are represented.

Driving the results. AI-driven campaigns like Performance Max and AI Max match ads to more detailed and conversational search intent. Google says queries in AI Mode are often two to three times longer than traditional searches, giving the system more context to connect users with relevant products. Aritzia, for example, reported an 80% increase in revenue after adopting AI Max.

How it works. The system scans a retailer’s website and creative assets, interprets user intent from conversational queries, and dynamically matches products and messaging in real time. This is increasingly important given that 15% of daily searches are entirely new (according to Google) and cannot be predicted through traditional keyword targeting.

Why we care. Google is shifting from keyword-based ads to intent-driven, AI-matched advertising, meaning campaigns can reach consumers with far more precision at the moment they’re ready to buy. As search becomes more conversational and unpredictable, advertisers who rely on traditional targeting risk falling behind those using AI-driven formats that automatically adapt to new user behavior.

Zoom in. Google is testing new formats such as “direct offers,” which deliver personalized promotions when users show purchase intent, using Gemini to analyze conversational context and behavior, with brands like E.l.f. Beauty, Chewy and L’Oréal participating in early trials.

Commerce push. Google is also advancing its commerce strategy through a Universal Commerce Protocol developed with Shopify, which allows purchases to happen directly within AI conversations.

Yes, but. Google is not alone in experimenting with ads in AI search, and early results across the industry have been mixed. Amazon has reportedly seen limited traction from ads in its AI shopping assistant, OpenAI continues to explore monetization models, and Perplexity AI has begun phasing out ads after underwhelming performance.

What they’re saying. Google positions itself as a “matchmaker” rather than a retailer, emphasizing that AI helps deliver more relevant and personalized ads while allowing brands to maintain control over their messaging and build user trust by showing the right product at the right moment.

What’s next. Google says it has no current plans to introduce ads directly into Gemini but will continue testing and expanding advertising within AI Mode, including more personalized offers and AI-driven shopping experiences.

Bottom line. AI is not replacing search but reshaping it, and for Google that shift is making advertising more conversational, more targeted and, in some cases, significantly more profitable.

Dig deeper. Google says its AI-powered ads help some brands lift online sales by 80%.

Google Search is evolving beyond links and answers into a system that completes tasks, which could fundamentally change how users interact with the web. That’s according to Alphabet CEO Sundar Pichai, speaking on the Cheeky Pint podcast.

Why we care. Google is signaling a move from information retrieval to task execution.

Search becoming agentic. Traditional search behavior is already changing and will continue to, Pichai said.

Pichai also described a future where Google Search acts less like a list of results and more like a system that coordinates actions:

AI Mode is already changing queries. Users are already adapting their behavior in Google’s AI-powered search experiences, Pichai said:

Search vs. Gemini overlap. Despite the rise of Gemini, Pichai said Google isn’t replacing Search with a chatbot. Instead, the two will coexist — and diverge (echoing what Liz Reid said last month):

The interview. The history and future of AI at Google, with Sundar Pichai

Google’s AI Overviews answered a standard factual benchmark correctly 91% of the time in February, up from 85% in October, according to a New York Times analysis with AI startup Oumi.

However, Google handles more than 5 trillion searches per year, which means tens of millions of answers every hour may be wrong.
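The "tens of millions per hour" figure can be checked with a rough back-of-the-envelope calculation. The sketch below assumes, unrealistically, that every search returns an AI Overview and that the 91% benchmark accuracy applies uniformly to real queries, so it is an upper-bound illustration rather than a measured error count. The variable names are illustrative only.

```python
# Back-of-the-envelope estimate only. Assumes every search shows an
# AI Overview, which overstates the real volume.
SEARCHES_PER_YEAR = 5e12   # "more than 5 trillion" per the article
ACCURACY = 0.91            # February benchmark result cited above
HOURS_PER_YEAR = 365 * 24

searches_per_hour = SEARCHES_PER_YEAR / HOURS_PER_YEAR
wrong_per_hour = searches_per_hour * (1 - ACCURACY)

print(f"{searches_per_hour / 1e6:.0f} million searches per hour")   # 571 million
print(f"{wrong_per_hour / 1e6:.0f} million possibly wrong answers per hour")  # 51 million
```

Under these assumptions, a 9% error rate on roughly 571 million hourly searches works out to about 51 million answers per hour, consistent with the "tens of millions" claim.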

Why we care. We’ve watched Google shift from linking to sources to summarizing them for more than two years. This report suggests AI Overviews are improving, but still mix correct answers, weak sourcing, and clear errors in ways that can mislead searchers and reshape which publishers get visibility and clicks.

The details. Oumi tested 4,326 Google searches using SimpleQA, a widely used benchmark for measuring factual accuracy in AI systems, the Times reported. It found AI Overviews were accurate 85% of the time with Gemini 2 and 91% after an upgrade to Gemini 3.

What changed. Accuracy improved between October and February, but grounding worsened. In October, 37% of correct answers were ungrounded; in February, that rose to 56%.
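One way to read these two trends together is to combine them into the share of all tested answers that were both correct and grounded. The sketch below is an illustration using only the percentages reported above. It takes the "ungrounded" figures as shares of correct answers, as stated, and the function name is hypothetical.

```python
# Share of all tested answers that were correct AND grounded,
# computed from the percentages reported in the article.
results = {
    "October": {"accuracy": 0.85, "ungrounded_of_correct": 0.37},
    "February": {"accuracy": 0.91, "ungrounded_of_correct": 0.56},
}

def correct_and_grounded(accuracy, ungrounded_of_correct):
    """Fraction of all answers that are correct and backed by a source."""
    return accuracy * (1 - ungrounded_of_correct)

for month, r in results.items():
    share = correct_and_grounded(**r)
    print(f"{month}: {share:.0%} correct and grounded")
```

By this measure, the correct-and-grounded share falls from roughly 54% in October to about 40% in February, even though headline accuracy rose.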

Examples. The Times highlighted several misses:

Google’s response: Google disputed the Times analysis, saying the study used a flawed benchmark and didn’t reflect what people actually search. Google spokesperson Ned Adriance told the Times the study had “serious holes.”

The report. How Accurate Are Google’s A.I. Overviews? (subscription required)

PeaZip 11.0 refines one of the most capable free archivers with faster browsing, smoother drag-and-drop across tabs, and a cleaner, more responsive UI. The update also improves scaling, adds flexible icon rendering, and introduces batch archive testing, alongside the usual fixes and cleanup.

Shadow OS is the first decision-making app built on 64 hexagrams, the same system Carl Jung studied for over two decades and called his most significant method for surfacing what the unconscious already knows. Other decision apps use random spinner wheels, AI chatbots validate whatever you say, and astrology apps offer forecasts open to interpretation. Shadow OS gives you one committed answer: move forward, hold, or pull back.

BeMusic AI is a free AI music generator that turns text prompts into fully produced, royalty-free songs in under 30 seconds. Choose from 50+ genres, adjust mood, tempo, and energy, and download high-quality WAV or MP3 for videos, games, podcasts, and ads. It also offers tools to write lyrics, create instrumentals, convert audio to MIDI, edit MIDI, make AI covers, remove vocals, extend tracks, and analyze songs. Use it to avoid copyright issues and keep full ownership of every track.

These new features are designed to streamline your browser and help you maximize productivity in Chrome.

Google has begun placing sponsored ad units directly inside the Images tab of mobile search results — a new placement that eligible campaigns can access without any changes to existing keyword targeting.

What’s happening. When a user navigates to the Images tab within Google Search on mobile, they may now see sponsored units appearing within the image grid. Each unit shows a full image creative as the primary visual alongside text, and is clearly labelled “Sponsored” — consistent with how Google labels ads elsewhere in search results.

How it works. Eligible campaigns can serve into the Images tab without any changes to keyword targeting or campaign structure. The placement draws from existing image assets, meaning advertisers running Search or Performance Max campaigns with strong visual creative are best positioned to benefit. No separate image-only campaign setup is required.

Why we care. This is a meaningful expansion of Google’s paid search real estate. For product-led and catalog-heavy advertisers, the Images tab is where purchase-intent discovery often starts — and now ads can appear right in that moment. If your campaigns already use strong image assets, you may be picking up incremental impressions without lifting a finger.

The big picture. Early indications suggest this placement behaves more like a visual discovery surface than classic paid search. Expect high impression volume but lower click-through rates — more in line with display or Shopping than traditional text ads. That said, the assist value in multi-touch conversion paths could be significant, particularly for retail and direct-to-consumer brands. Treat it as upper-funnel reach, not a last-click channel.

What to watch. Google has not made a formal announcement, and there is no dedicated reporting breakdown for Images tab placements yet. Monitor your impression share and segment data closely to understand whether this placement is contributing — and whether it’s eating into organic image visibility for competitors.

First seen. The placement was spotted by Google Ads expert Matteo Braghetta, who shared the update on LinkedIn. No official documentation has been published by Google at the time of writing.

Over 30% of ChatGPT’s outbound clicks go to just 10 domains, with Google alone taking more than 20%, according to a new Semrush study published today.

ChatGPT also relies less on the live web, triggering search on 34.5% of queries, down from 46% in late 2024.

The big picture. ChatGPT’s growth has plateaued, and its role in how users navigate the web is evolving unevenly.

The details. Most ChatGPT referral traffic still goes to a small set of sites, even as more sites receive some traffic.

Why we care. Visibility in ChatGPT doesn’t translate evenly into traffic, and you’ll likely see marginal referral impact. The decline in search-triggered queries also limits your chances to earn citations and traffic.

When ChatGPT searches. It defaults to pre-trained knowledge and uses web search in specific cases, including:

Behavior shift. Most ChatGPT prompts still don’t resemble traditional search queries.

About the data. Semrush analyzed more than 1 billion lines of U.S. clickstream data from October 2024 to February 2026 across a 200 million-user panel, tracking prompts, referral destinations, and search usage.

The study. ChatGPT traffic analysis: Insights from 17 months of clickstream data

Google doesn’t train Gemini using personal emails. Here’s how Google keeps private data secure in Gmail amid new AI model upgrades.

Android XR adds spatial conversion for 2D apps, the ability to pin apps to your walls and more ways to watch, create, and explore.

We’re making it easier for people to share photos and videos, and keep track of their progress.

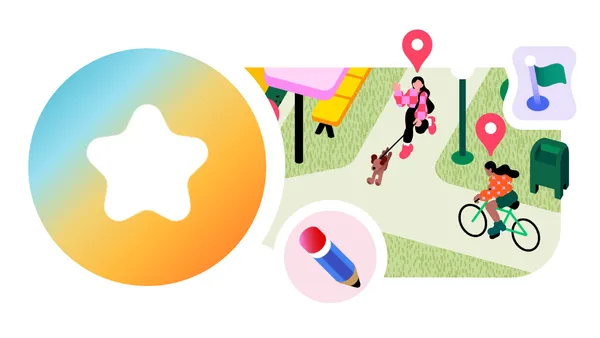

Google is rolling out new Google Maps features that make it easier to contribute photos, reviews, and local insights, while adding Gemini-powered caption suggestions.

Local Guides redesign. Contributor profiles are getting more visibility. Total points now appear more prominently, Local Guide levels are easier to spot, and badge designs have been refreshed.

AI caption drafts. Google is also introducing AI-generated caption drafts. Gemini analyzes selected images and suggests text you can edit or discard.