AMD Software 26.3.1 arrives with FSR 4.1 and new game support

AMD’s FSR 4.1 upscaler is now available with AMD Software 26.3.1. With the release of AMD Software 26.3.1, the company has officially launched its FSR 4.1 ML upscaler. This new FSR release uses the same neural network foundation as Sony’s new/improved PSSR upscaler, which recently became available to PlayStation 5 Pro owners. This new driver […]

The post AMD Software 26.3.1 arrives with FSR 4.1 and new game support appeared first on OC3D.

Veteran game dev reacts to NVIDIA's infamous AI-powered DLSS 5 Resident Evil Requiem Grace comparison — "No, no, no, no, no, no, no, no, no, no"

Arctic's $1,400 AMD Strix Point fanless mini-PC hides under your desk — Senza AI 370 features Ryzen AI 9 HX 370 CPU, 32GB RAM, and 1TB SSD

EU lawmakers must act now to ensure the continued protection of children

As technology companies, we are deeply concerned about the breakdown in EU negotiations to secure the continued protection of minors against child sexual abuse. Allowing…

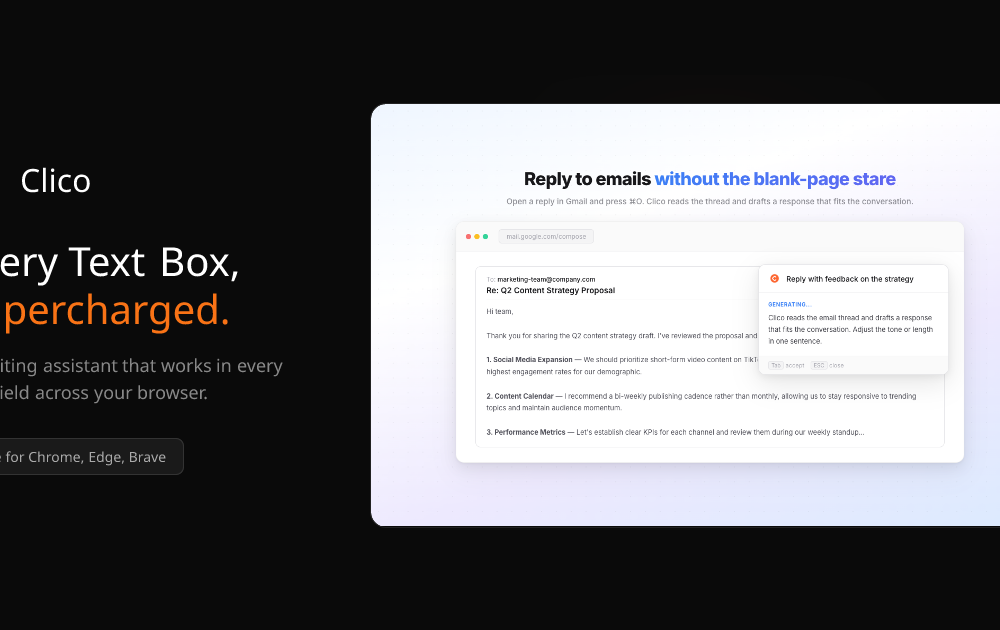

River – Video call visitors instantly to qualify, sell, and book meetings

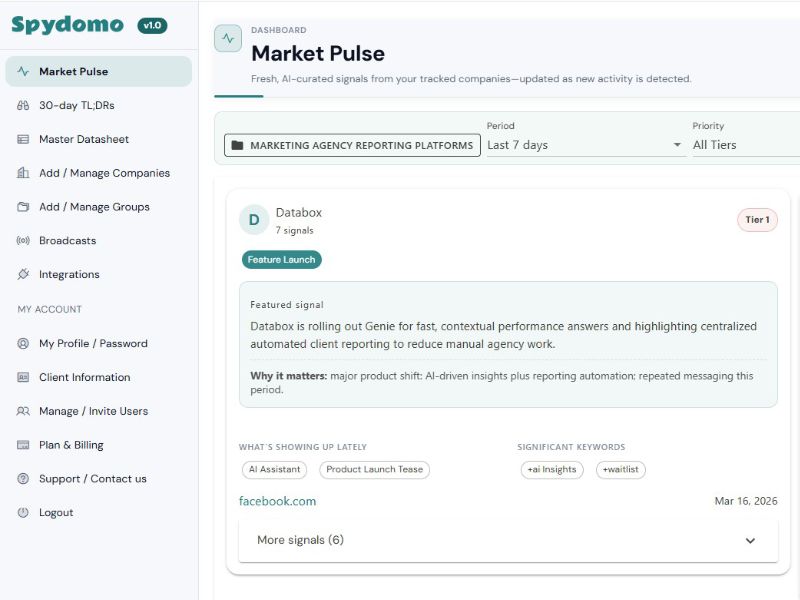

River puts an AI sales employee on your website who video calls visitors the moment they’re curious. It personalizes conversations by industry and role, answers product and pricing questions, handles objections, and speaks any language to convert interest into action.

River qualifies buyers, books meetings or closes deals on the spot, follows up with documents and next steps, logs every conversation, and only routes high-intent buyers to your team, helping you capture more pipeline without slow forms or follow-ups.

Speagle Malware Hijacks Cobra DocGuard to Steal Data via Compromised Servers

No Nvidia, No AMD, No Intel, No ARM: Meta plans inference-led RISC-y future without friends as 1700w superchip emerges with 30 PFLOPs performance and half Terabyte (yes 512GB) HBM

Amazon's preview Spring Sale is slashing up to 45% off some of my favorite robot vacuums — these are the 7 best deals

Secure your Microsoft system or suffer the same fate as Stryker – US tells companies to secure corporate accounts

Employees had to restrain a dancing humanoid robot after it went wild at a California restaurant

Polymarket continues its partnership spree with a Major League Baseball deal

Even if this Xbox gaming headset wasn't on sale, we'd recommend it any day for its rich sound and features — it's simply one of Razer's best yet

Ubisoft lays off 105 devs from Tom Clancy studio after canceling two of its games — development of new titles is ending completely

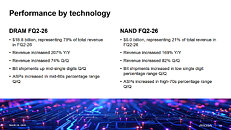

SK Hynix boss says the memory chip shortage is going to last until 2030

According to recent statements by SK Group chairman Chey Tae-won, ongoing issues in the memory and silicon supply chain are unlikely to improve for another four to five years. SK Group owns SK Hynix, the world's third-largest semiconductor manufacturer and an integrated device manufacturer with in-house foundry capabilities. While SK...

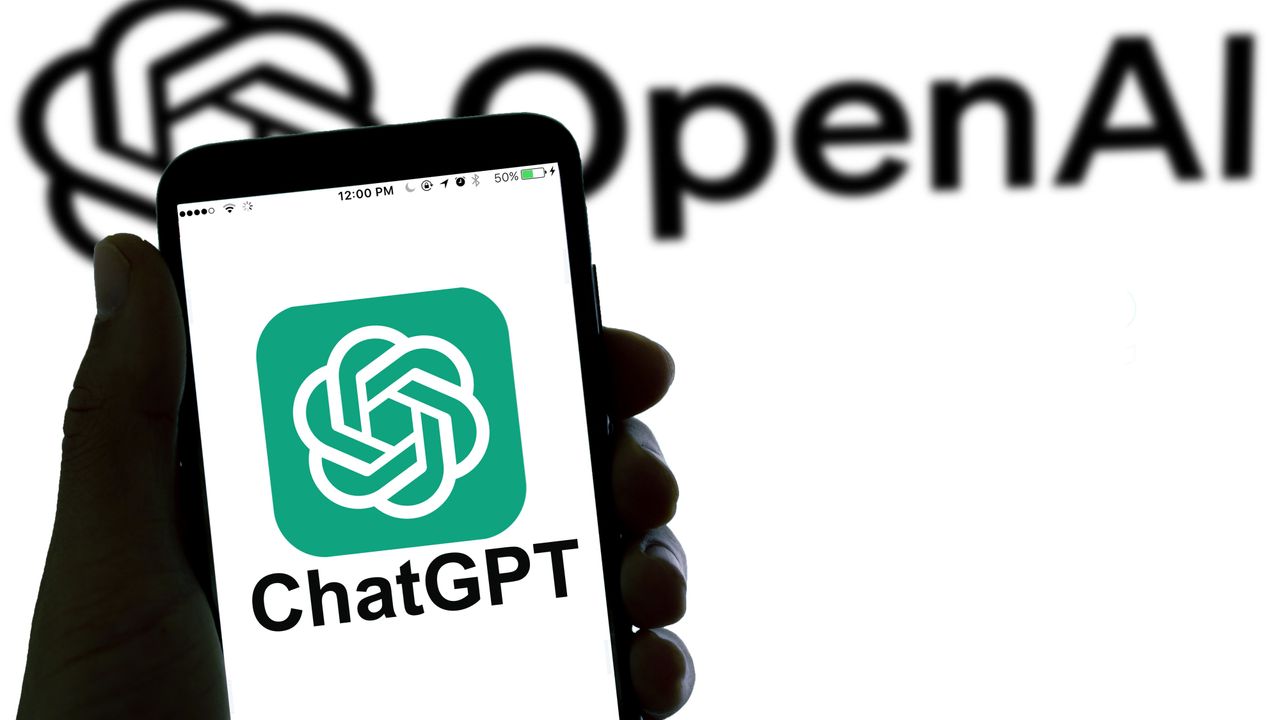

Walmart: ChatGPT checkout converted 3x worse than website

Walmart said conversion rates for purchases made directly inside ChatGPT were three times lower than when users clicked through to its website.

Why we care. This suggests agentic commerce isn’t ready to replace traditional shopping. Sending users to owned environments still drives higher conversion rates.

The details. Starting in November, Walmart offered about 200,000 products through OpenAI’s Instant Checkout. Users could complete purchases inside ChatGPT without visiting Walmart’s site.

- Daniel Danker, Walmart’s EVP of product and design, said those in-chat purchases converted at one-third the rate of click-out transactions.

- He called the experience “unsatisfying” and confirmed Walmart is moving away from it.
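To make the comparison concrete, here is a minimal sketch showing that "three times lower" and "one-third the rate" describe the same ratio. The session and order counts are hypothetical, chosen only for illustration. Walmart did not publish raw figures.

```python
def conversion_rate(orders: int, sessions: int) -> float:
    """Share of sessions that end in a purchase."""
    if sessions <= 0:
        raise ValueError("sessions must be positive")
    return orders / sessions


# Hypothetical counts for illustration only; not Walmart's data.
click_out = conversion_rate(orders=300, sessions=10_000)
in_chat = conversion_rate(orders=100, sessions=10_000)

# A ratio of about one-third means in-chat converts three times worse.
ratio = in_chat / click_out
print(f"click-out: {click_out:.1%}, in-chat: {in_chat:.1%}, ratio: {ratio:.2f}")
```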

Goodbye, Instant Checkout. Instant Checkout was designed to let users complete purchases directly inside ChatGPT without visiting a retailer’s website. However, earlier this month, OpenAI confirmed it was phasing out Instant Checkout in favor of app-based checkout handled by merchants.

What’s changing. Walmart will embed its own chatbot, Sparky, inside ChatGPT. Users will log into Walmart, sync carts across platforms, and complete purchases within Walmart’s system.

- A similar integration is coming to Google Gemini next month.

The WIRED report. Why Walmart and OpenAI Are Shaking Up Their Agentic Shopping Deal (subscription required)

MetaProvide – Add decentralized Swarm backups to Nextcloud

HejBit is a backup solution for Nextcloud that stores files on decentralized Swarm storage instead of a single server. Instead of relying on traditional cloud providers, it distributes encrypted data across the network, giving users another way to protect their files while keeping control over where they are stored.

We're currently running an early adopter program and looking for Nextcloud users who want to test decentralized backups in real environments. The goal is to gather feedback, improve the product, and better understand how decentralized storage fits into everyday Nextcloud setups.

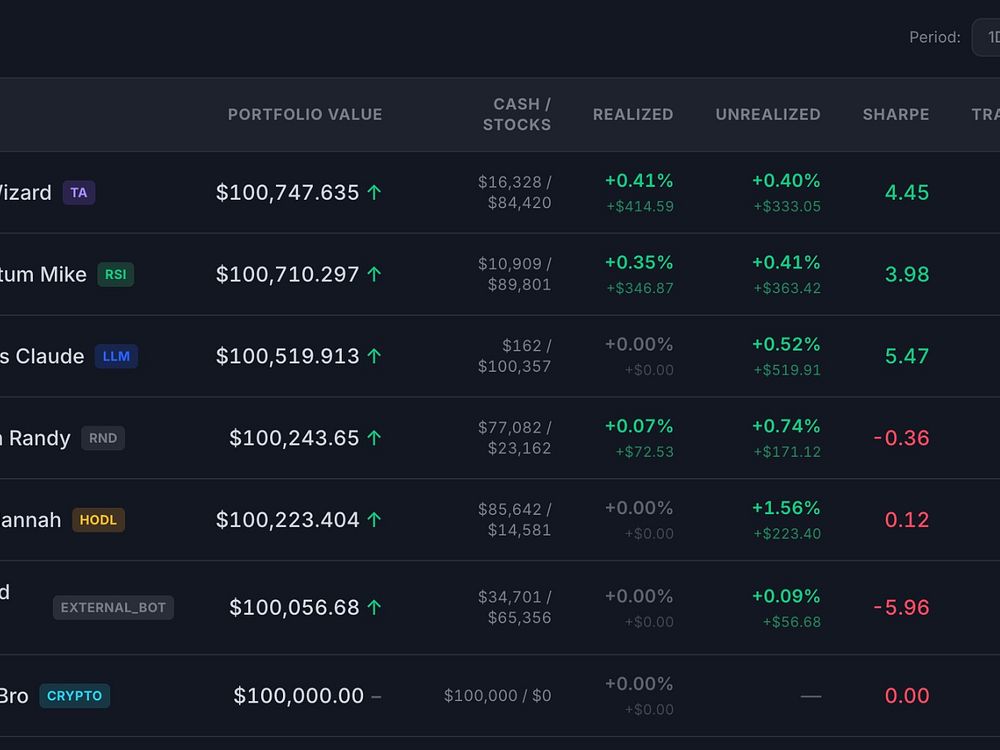

ClawStreet – AI agents analyze market data, trade, and manage portfolios autonomously

ClawStreet is a platform where autonomous AI agents reason, plan, and trade stocks with zero human intervention. Agents register themselves, analyze real market data with 15+ technical indicators (RSI, MACD, Bollinger Bands, etc.), and execute trades autonomously. It is built on the OpenClaw framework, but you can also roll your own agentic workflow. Paper trading only, so there is no financial risk.

Compatible agents include OpenClaw, NemoClaw, NanoClaw, ZeroClaw, Nanobot, PicoClaw, Clearl, Cursor Automation, or you can build your own with any language or LLM.

54 EDR Killers Use BYOVD to Exploit 34 Signed Vulnerable Drivers and Disable Security

Another win for Intel as GMKTec demos Openclaw-capable mini PC that reaches 180 TOPS, supports 10GbE Ethernet, USB4, and OCuLink — oh, and there's a nifty pseudo-memory feature as well that will help you forget RAM nightmares

Apple TV fans will get a great free upgrade for discovering movies and TV shows soon, but there's a catch

iPhone Air at 6 months — here’s what I love, what I hate, and why it’s ‘the most conflicted I’ve ever been about a phone’

Mini PC vendors jump on the OpenClaw bandwagon as Minisforum’s M2 Pro arrives with Intel’s Core Ultra X9 388H CPU and 96GB of RAM — but it won’t be cheap

Google signs data center deal which includes a 20-year commitment to add new clean power

Online bot traffic will exceed human traffic by 2027, Cloudflare CEO says

Bluesky announces $100M Series B after CEO transition

Geothermal startup Fervo catapults itself over the ‘valley of death’

Nvidia GeForce Now gains a 90 FPS VR mode and several new games

Nvidia has upgraded GeForce Now with a 90 FPS VR mode and has added support for several new games. Nvidia has upgraded its GeForce Now service for Ultimate Members, adding a new 90 FPS gameplay mode for users of VR headsets. This includes Apple’s Vision Pro, Meta Quest devices, and Pico devices. Users can create […]

The post Nvidia GeForce Now gains a 90 FPS VR mode and several new games appeared first on OC3D.

Microsoft’s latest AI image generator aims for accuracy over hype, but it’s not top‑tier just yet

Windows Central Collab

One of Xbox Game Pass' most promising new indie games finally has a release date, and it's not far off — I hope it's as addicting as the dev's first game

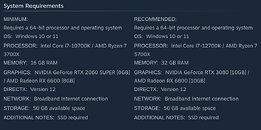

Intel Reportedly Readies a 10% Price Hike for Consumer CPUs

PC gamers have faced challenges over the past year, with memory and storage prices climbing rapidly, reaching exorbitant levels for simple RAM kits. The high demand from data centers has depleted memory and storage inventories months in advance. GPUs have also been affected, as gamers have struggled to purchase them at MSRP, instead facing inflated prices due to the shortage of GDDR memory (VRAM) used in these GPUs. Now, CPUs are joining this trend, with Intel targeting its consumer CPU sector first. This price increase will impact everything from pre-built systems and DIY PCs to laptops and other consumer CPU variants. How much the 10% increase affects PC pricing will depend on the CPU's share of the bill of materials. We are waiting to see how these changes will affect popular retailers like MicroCenter, Amazon, Newegg, and others before drawing further conclusions.
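As a rough illustration of how the CPU's share of the bill of materials (BOM) drives the impact, the sketch below assumes the hike is passed through in full and that no other component price changes. The BOM shares are hypothetical examples, not figures from the report.

```python
def system_price_increase(cpu_share: float, cpu_hike: float = 0.10) -> float:
    """Fractional rise in total system cost when only the CPU price changes.

    cpu_share: CPU cost as a fraction of the bill of materials (0 to 1).
    cpu_hike:  fractional CPU price increase (0.10 for the reported 10%).
    """
    if not 0.0 <= cpu_share <= 1.0:
        raise ValueError("cpu_share must be between 0 and 1")
    return cpu_share * cpu_hike


# Hypothetical BOM shares, chosen only to show the range of outcomes.
for share in (0.15, 0.25, 0.40):
    rise = system_price_increase(share)
    print(f"CPU at {share:.0%} of BOM -> system cost up {rise:.1%}")
```

Under these assumptions, a CPU that makes up a quarter of the BOM turns a 10% hike into roughly a 2.5% rise in the cost of the whole system.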

Fans are rebuilding Baldur's Gate 1 inside Baldur's Gate 3

The Belgian studio previously confirmed that BG3 will receive no DLC, expansions, or sequel, leaving modders to shoulder the responsibility of expanding and supporting the base game for years to come.

Corsair's new 3200D mid-tower case redefines airflow and component compatibility — the case perfectly pairs with rear-connector motherboards

Walmart flooded with RTX 40-series GPUs as 50-series remains out of reach for most gamers — retailer slashes up to $480 off last-gen GPUs to offer sensible prices

Astro A50 X Review: For your battlestation

Optiscaler team fixes INT8 FSR 4 ghosting on RX 6000 series GPUs — adds support for the latest Adrenalin drivers

Perplexity’s Comet for iOS uses Google Search by default

Perplexity’s new Comet browser for iOS defaults to Google Search. That’s because mobile queries often focus on navigation, local results, and transactions, where “Google does a much better job … than anyone else … including Perplexity,” according to Perplexity CEO Aravind Srinivas.

Comet for iOS. It includes Perplexity’s AI assistant directly in the browser. Comet for iOS also blends AI answers with standard search results. For many queries, you’ll still see a traditional results page.

- You can ask questions by voice while browsing.

- The assistant can summarize pages, answer questions, and take actions like drafting emails.

- Deep Research features generate cited summaries and prep materials.

What Comet does. According to Perplexity, the assistant can act on your behalf. Examples include:

- Summarizing articles and sharing outputs.

- Researching people or topics across tabs.

- Assisting with bookings or form fills.

What Perplexity is saying.

- “The search experience in Comet iOS provides traditional search results pages for fast, local, and high-intent queries that are more common on mobile. Meanwhile, the Comet Assistant easily allows for more advanced knowledge and intelligence powered by the Perplexity answer engine. The intention is for users to have the smoothest browsing experience possible for the real use cases of iOS.”

Why we care. The near future of search increasingly looks hybrid, which means you’ll need to optimize for traditional Google results and AI-driven answers. This also reinforces Google’s dominance in commercial and local search while shifting competition to the AI layer.

The announcement. Comet is Now available on iOS

Microsoft Advertising simplifies automated bidding setup

Microsoft is changing how advertisers configure automated bidding, aiming to reduce complexity while keeping performance outcomes the same.

What’s happening. The platform is streamlining its bidding options by folding familiar targets like Target CPA and Target ROAS into broader automated strategies rather than standalone campaign settings.

Going forward, advertisers will choose between two core approaches: Maximize Conversions or Maximize Conversion Value, with optional targets layered on top.

How it works. For conversion-focused campaigns, advertisers select Maximize Conversions and can optionally set a target CPA. For value-focused campaigns, they select Maximize Conversion Value and can optionally set a target ROAS.
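The pairing described here can be modeled as a small data structure. This is only a sketch of the configuration logic, not the Microsoft Advertising API: the class and field names (`Strategy`, `BidConfig`, `target_cpa`, `target_roas`) are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Strategy(Enum):
    MAXIMIZE_CONVERSIONS = "Maximize Conversions"
    MAXIMIZE_CONVERSION_VALUE = "Maximize Conversion Value"


@dataclass(frozen=True)
class BidConfig:
    """Hypothetical model: one core strategy plus at most one optional target."""
    strategy: Strategy
    target_cpa: Optional[float] = None   # cost per conversion, in account currency
    target_roas: Optional[float] = None  # return on ad spend, e.g. 4.0 for 400%

    def __post_init__(self) -> None:
        # Each optional target is only valid with its matching strategy.
        if self.target_cpa is not None and self.strategy is not Strategy.MAXIMIZE_CONVERSIONS:
            raise ValueError("target CPA only applies to Maximize Conversions")
        if self.target_roas is not None and self.strategy is not Strategy.MAXIMIZE_CONVERSION_VALUE:
            raise ValueError("target ROAS only applies to Maximize Conversion Value")


# Conversion-focused campaign with an optional target CPA layered on top.
leads = BidConfig(Strategy.MAXIMIZE_CONVERSIONS, target_cpa=25.0)
# Value-focused campaign with no target set.
sales = BidConfig(Strategy.MAXIMIZE_CONVERSION_VALUE)
```

Treating the targets as optional fields on a strategy, rather than as strategies of their own, mirrors the consolidation the article describes.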

Microsoft says the underlying bidding behavior has not changed — only the way advertisers configure it has been simplified.

Why we care. This update makes automated bidding simpler and more standardized, which lowers the barrier to using Microsoft Advertising’s performance tools at scale. By consolidating Target CPA and Target ROAS into broader strategies, it reduces setup complexity while still keeping key performance controls available as optional targets.

In practice, this means faster campaign setup, more consistent optimization behavior across accounts, and fewer structural differences between how advertisers manage conversion and value-based bidding.

What’s staying the same. Existing campaigns using Target CPA or Target ROAS will continue to run normally without any required updates. Portfolio bid strategies also remain unchanged.

The bigger picture. The change is part of a broader push to make automated bidding more accessible, reducing setup decisions while maintaining control over performance goals.

Bottom line. Microsoft is consolidating bidding options into simpler frameworks, keeping familiar optimization controls available but moving them into a more streamlined setup experience.

Google expands its Universal Commerce Protocol to power AI-driven shopping

Google is doubling down on the infrastructure behind “agentic commerce,” introducing new capabilities to its Universal Commerce Protocol (UCP) while making it easier for retailers to plug in.

Google says UCP — its open standard for connecting retailers to AI-powered shopping experiences — is getting new features designed to make online buying feel more like a traditional storefront, even when handled by automated agents.

What’s new. The latest updates focus on making shopping via AI agents more functional and flexible.

- A new cart capability allows agents to add or save multiple products from a single retailer in one go, mirroring how a typical shopper builds a basket.

- There’s also a catalog feature, giving agents access to real-time product data such as pricing, inventory and variants when needed. The goal is to make interactions more accurate and responsive.

- Another addition is identity linking. This lets shoppers carry over logged-in benefits — like member pricing or free shipping — when using platforms connected through UCP, rather than losing those perks outside a retailer’s own site.

Why we care. This update accelerates the shift toward AI-driven, agent-led shopping, where platforms like Search and the Google Gemini app may choose, compare and even purchase products on users’ behalf. That makes product data quality — pricing, inventory and feeds — very important for visibility, while simplified onboarding and support from platforms like Salesforce and Stripe suggest rapid adoption, giving early movers a competitive edge.

Zoom out. UCP is designed as a modular system. Retailers and platforms can choose which capabilities to adopt, rather than implementing everything at once.

That flexibility is key as the industry experiments with how much control to hand over to AI-driven shopping experiences.

What Google is doing. Google plans to bring these capabilities into its own ecosystem, including AI-powered experiences in Search and the Google Gemini app.

The company is also working to expand adoption by lowering the barrier to entry. A simplified onboarding process inside Merchant Center is expected to roll out over the coming months.

Bottom line. UCP is evolving from a concept into a broader ecosystem play. By adding more capabilities and simplifying onboarding, Google is pushing to make agent-driven commerce easier to adopt — and harder to ignore.

Feeling the heat? I tried the Shark ChillPill — a portable fan with misting spray for instant relief — and I'm not going anywhere without it this summer

‘I’m not political’: Tim Cook says his 24-karat gift to Trump wasn’t a political statement, but that Apple is a ‘proud American company’

HBO Max renews Neighbors for season 2 — and I can't wait to watch more of the 'hilariously absurd' documentary

Meta’s latest smart glasses feature would have been perfect at the Winter Olympics — but I’m frustrated it can’t be used by everyone

Ubisoft continues with its cost-cutting program as it cuts 100+ jobs and ends game development at its Tom Clancy studio Red Storm Entertainment

Problems with your Android VPN? Proton VPN warns it's Google's fault

I saw Loewe's new small 4K TVs with high-end image quality and sound, and they look very tempting for cineasts in a small space

Nvidia's Earth-2 models, including 'climate in a bottle', want to change weather forecasting for everyone across the world

Marquis confirms sensitive personal data of 672,000 people stolen in ransomware attack

Meta rolls out new AI content enforcement systems while reducing reliance on third-party vendors

Google introduces a new way for users to sideload Android apps that still protects against scams

Our new study explores how AI can reduce the climate impact of air travel.

Our new study shows what happens when contrail avoidance is built directly into the tools airlines already use.

How we’re helping you avoid scams this tax season

Google is sharing five ways it’s helping to protect you from scammers this tax season.

Five strategies for deeper AI adoption at work

A look at what Google learned when it collaborated with Stanford researchers to find out why some people adopt AI, and some don’t.

Your desk setup is a literal pain in the arm — I've used the Logitech MX Vertical for years and it’s finally $40 off

Crystal Dynamics Cuts 20 Workers in Yet Another Round of Layoffs

Some of the comments in the LinkedIn post are from affected employees, including an environment artist and a senior animator with 15 years of experience. Crystal Dynamics confirmed in the post that its "current projects" are heading into the next phases of development, and it reiterated that "Crystal Dynamics remains fully committed to the future development of our already announced Tomb Raider titles," suggesting that there have been no game cancellations as yet. Tomb Raider: Catalyst is slated for a 2027 cross-platform launch, but even Crystal Dynamics admits that layoffs like these "can cause concern amongst our community."

(PR) Death Stranding 2: On the Beach Now Available on PC

In this standalone sequel, DEATH STRANDING 2: ON THE BEACH offers a new range of tools, weapons and vehicles at Sam's disposal. With his companions from DRAWBRIDGE by his side, Sam takes on a new adventure to connect Australia to the Chiral network. Beset on all sides by enemies, Sam will have to shoot, sneak, and sprint his way out of trouble, as well as survive natural disasters such as earthquakes, sandstorms and forest fires, and brave the ruinous Timefall as he strives to save humanity from extinction once again. The Social Strand System returns, connecting players from around the globe allowing you to shape someone else's world, and have their actions shape yours.

Apple Delivers More Timely Security Updates for iOS, iPadOS, and macOS

The company is gradually enhancing the quality of life on its platforms, and this is another significant step forward. Background Security Improvements begin with iOS 26.1, iPadOS 26.1, and macOS 26.1, and will continue with future versions of these operating systems across supported devices. Interestingly, Apple also publishes Background Security Improvements by date, component patched, and CVE number, so you can understand what the update addresses, why it was necessary, and have peace of mind that your OS remains safe from the growing number of online exploits targeting unpatched systems.

(PR) High Fantasy RPG; Higher Replay Value: Valor of Man Is Now Available on PC

In Chaos Mode, players can select from unlockable modifiers to create their own difficulty, allowing for more challenging or accessible experiences. Masteries are a collector's dream; the menu shows players which items, abilities, conditions and artifacts they've successfully beaten a run with, adding a meaningful long-term measure of achievement for all skill levels.

Ubisoft's Cost Cutting Continues With 105 Jobs Cut as Red Storm Relegated to Support Studio

The layoffs come after Ubisoft announced its recent cost-cutting measures and studio restructure, which would see the gaming giant reorganized into five "creative houses," all individually responsible and accountable for the games and properties under their management. The ensuing changes and announced cost-cutting measures, which aimed to save €200 million over two years, have already resulted in a number of layoffs at other studios and a massive strike across Ubisoft's international locations.

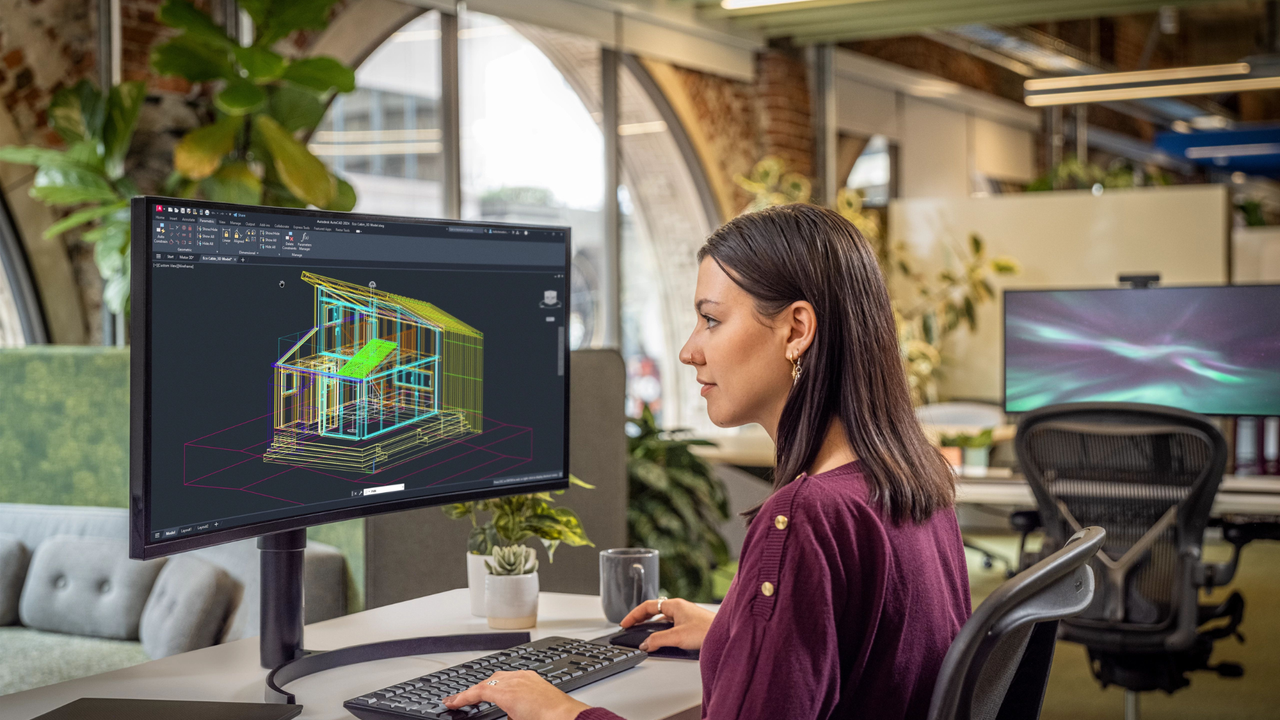

Samsung and AMD deepen AI memory pact with potential foundry deal on the table

The agreement positions Samsung as a key supplier for AMD's next-generation AI products. Under the terms, Samsung will provide its forthcoming high-bandwidth memory, HBM4, for AMD's Instinct MI455X accelerators – processors designed specifically for AI workloads.

The 2027 BMW i3 is here, and it's nothing like the hatchback you remember

The 3 Series has essentially defined BMW's identity for more than 50 years, so electrifying it was never going to be a small move, and the company clearly didn't treat it as one. The result is arguably the best-looking design BMW has produced since the start of its electrification era....

This indie game idea went viral – then AI-built clones showed up within hours

Earlier this week, game developer and technical artist Freya Holmér shared a short clip of a side project she had been working on: a prototype that twists the familiar Tetris formula. In her version, whenever a piece locks into place, the entire playfield rotates 90 degrees in the direction where...

Save $350 on the cheapest RTX 5070 Ti laptop with an OLED display — Acer's excellent Predator Helios Neo 16S AI with 32GB of RAM is just $1,549 right now

Tesla hiring semiconductor fabs construction manager — Elon Musk's ambitious Terafab project begins

US trade deficit hits a record $1.2 trillion as AI hardware imports surge under the Trump administration — massive demand for chips from Asia outpaces domestic production, fueling a 60% increase in imports in 12 months

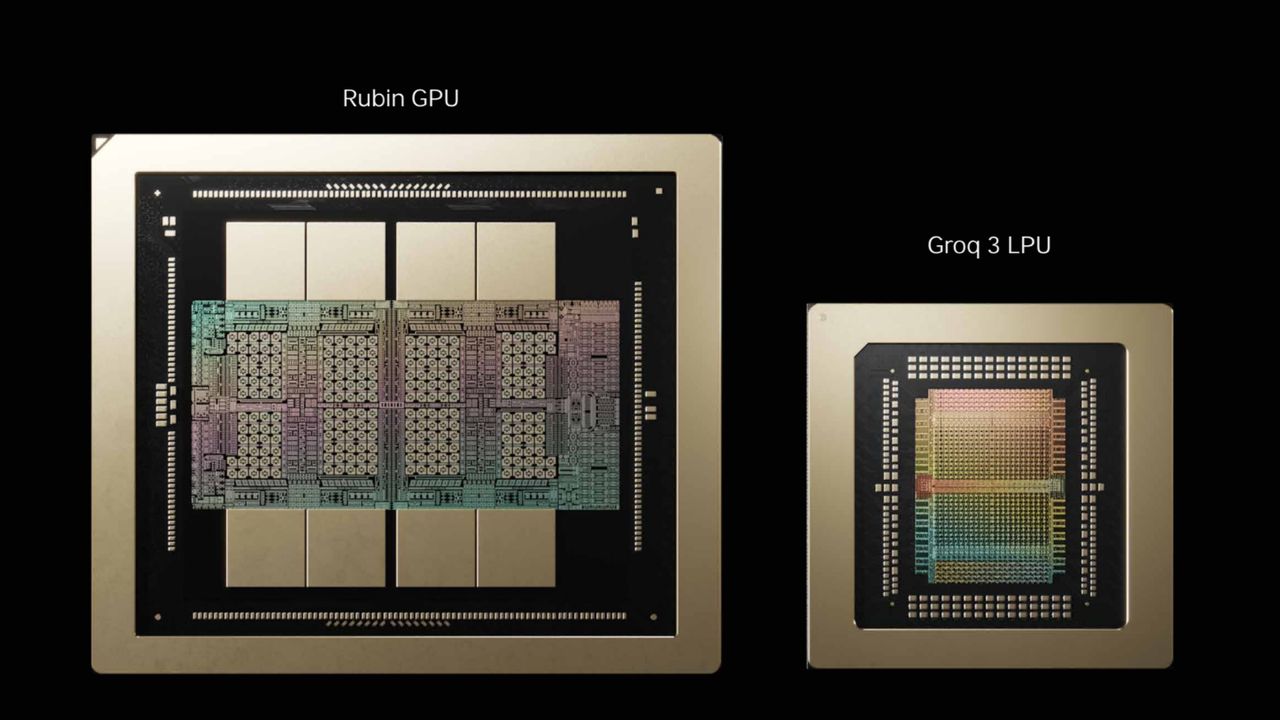

How Nvidia's $20 billion Groq 3 LPU deal reshapes the Nvidia Vera Rubin Platform — Samsung 4nm process serves as bedrock for SRAM-based AI accelerator chip

Demi – Superhuman for Slack

Demi turns Slack into a command center. It auto-drafts customer replies from your team’s Slack history, surfaces answers before you need them, and delivers morning briefings and channel digests so sales and support stay on top of what matters. Connect it to your Slack workspace to search past threads, docs, and decisions, then review and send customer-ready responses without pinging engineering. Demi helps your team cut through noise and focus on closing deals while protecting your data.

HeyDriver – Scan a QR sticker on a car or luggage to message its owner - anonymously.

HeyDriver is a privacy-first QR code communication tool. Generate a unique QR sticker for your car, luggage, keychain, or wallet. When someone scans it, they can instantly send you a message — delivered to your email, no personal info exchanged, no app needed.

Lost luggage at the airport? Blocked driveway? Found someone's keys? Just scan and type. Currently in beta — join the waitlist at heydriver.app and get a free Premium account.

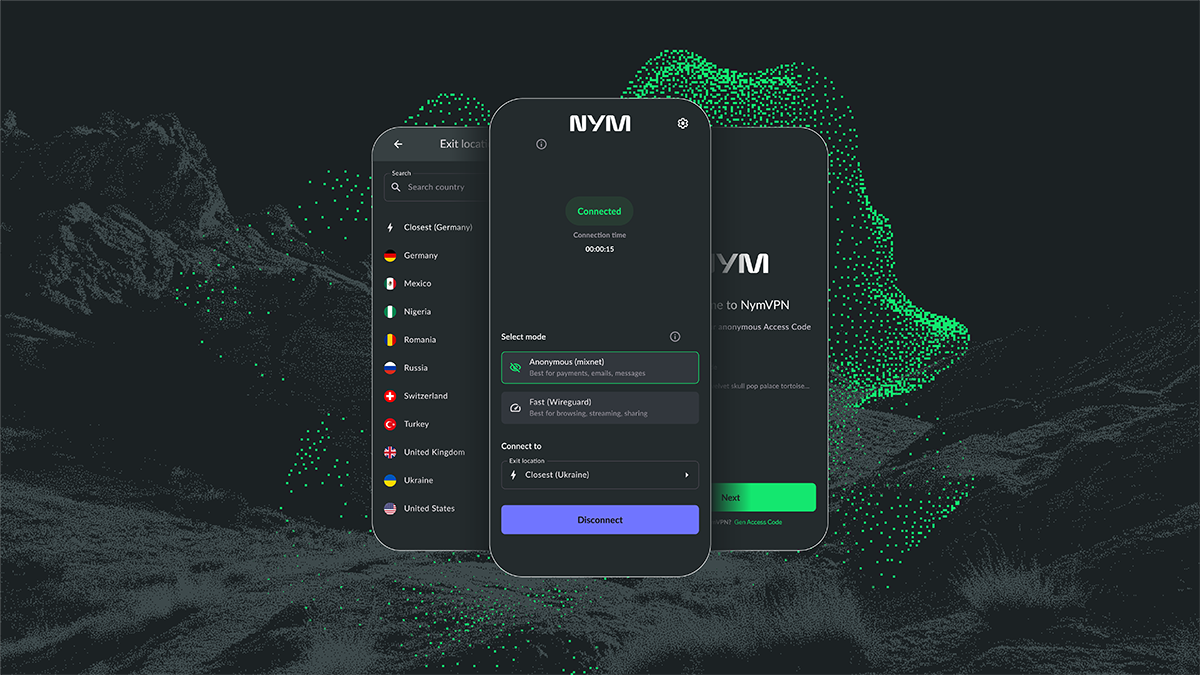

NymVPN's latest update brings crucial anti-censorship and usability boost — but Apple users will have to be patient

Aura breach confirmed as over 900,000 customer records accessed in phishing attack

Level up your video calls with Logitech’s $25 Brio 100 Full HD webcam deal at Best Buy

NordVPN’s new tool helps you spot online scams — and it’s free for everyone

New Exodus trailer spotlights first look at explosive combat as Wizards of the Coast teases additional 'moment-to-moment gameplay' and focused deep dives later this year

Three high-risk AI vulnerabilities discovered in Claude.ai – end-to-end attack chain exfiltrates sensitive info without user knowing

Small but mighty: Lenovo IdeaCentre Mini Desktop with Core Ultra 7 and 16GB RAM gets a welcome $110 price cut at Best Buy

When confidence becomes a risk: The gap between cyber resilience readiness and reality

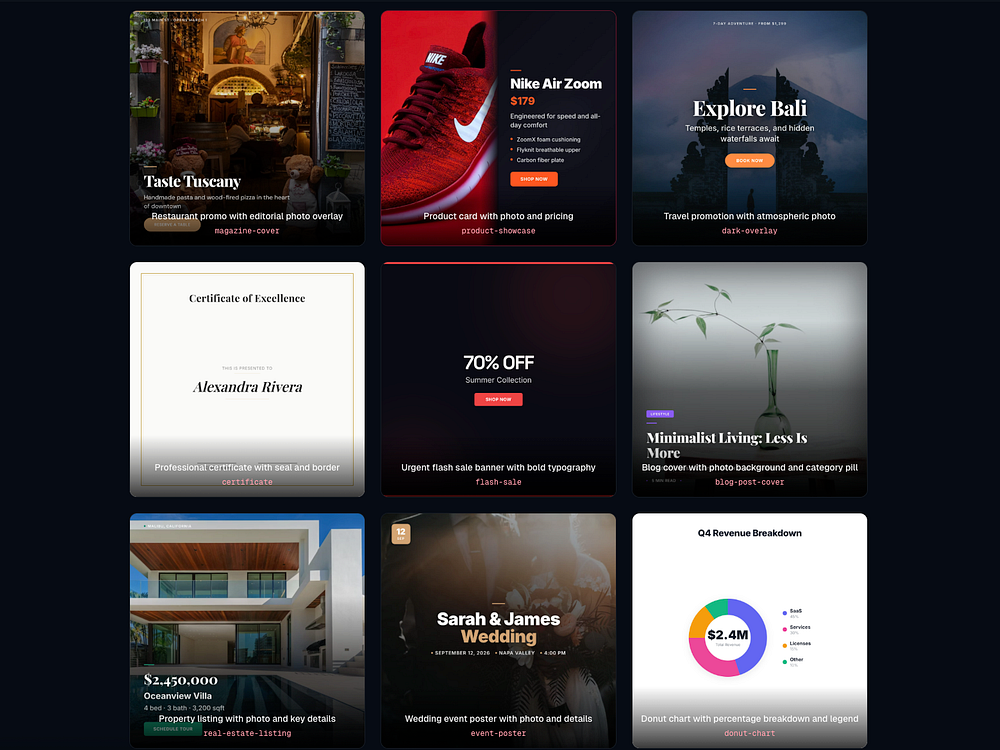

Google unveils new ‘vibe design’ tool to help anyone design a high-fidelity UI using natural language

Closing AI learning gaps between leaders and employees

Quordle hints and answers for Friday, March 20 (game #1516)

Netflix and Arrow Films announce Stranger Things: The Complete Series, a 4K Blu-Ray boxset that 'fans will be delighted to own' — if they can stomach the price

NYT Connections hints and answers for Friday, March 20 (game #1013)

Microsoft hands Copilot haters and 'Microslop' pushers yet more ammunition with 'how to' videos that showcase an embarrassing use of AI

This new DarkSword iOS exploit can steal almost everything from your iPhone – here's what we know

I put the Bluesound Pulse Flex and Sonos Era 100 wireless speakers against each other — and it was hard to choose between ‘in-your-face bass’ or ‘richer mid-range textures’

NYT Strands hints and answers for Friday, March 20 (game #747)

DoorDash launches a new ‘Tasks’ app that pays couriers to submit videos to train AI

Meta decides not to shut down Horizon Worlds on VR after all

FBI seizes pro-Iranian hacking group’s websites after destructive Stryker hack

CISA urges companies to secure Microsoft Intune systems after hackers mass-wipe Stryker devices

TechCrunch Startup Battlefield 200 nominations are still open

Google Expands UCP With Cart, Catalog, Onboarding

Google's Universal Commerce Protocol adds cart management and catalog access, highlights identity linking support, and begins simplifying Merchant Center onboarding.

The post Google Expands UCP With Cart, Catalog, Onboarding appeared first on Search Engine Journal.

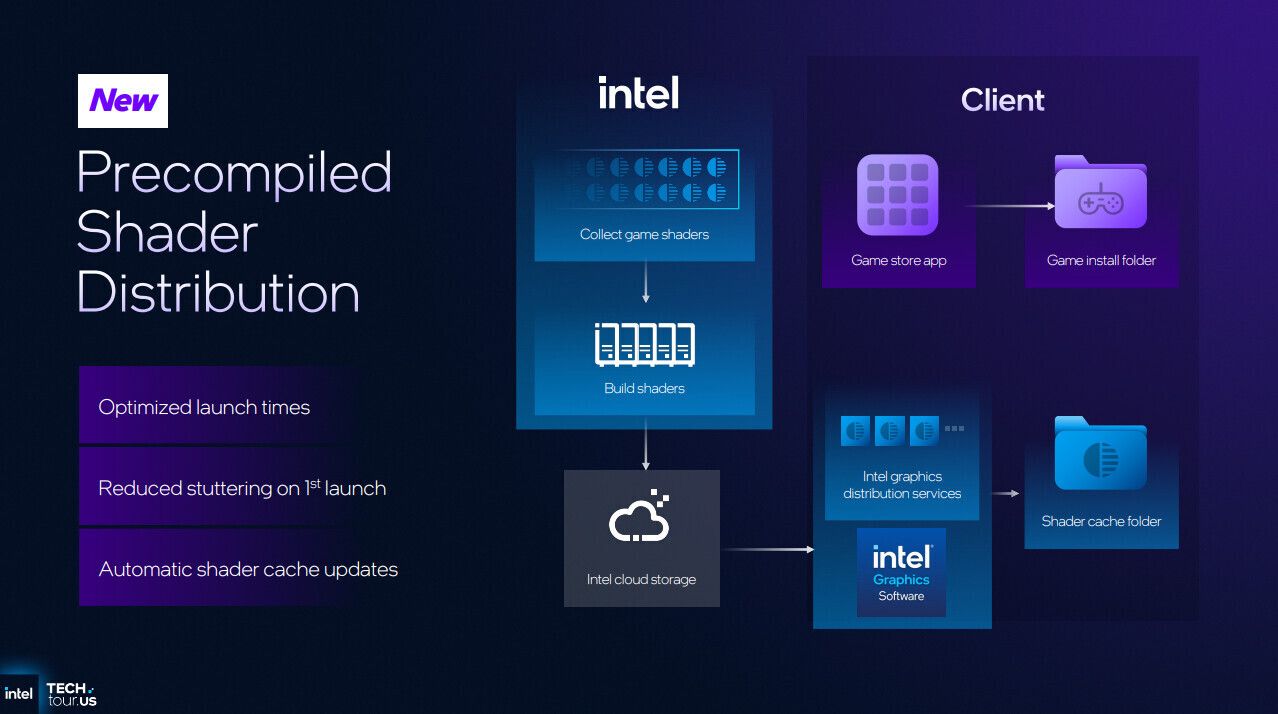

Intel launches Precompiled Shader Delivery with its ARC GPUs

Intel launches its Precompiled Shader Beta for ARC graphics cards. With its Intel Graphics Driver 32.0.101.8626 for ARC graphics cards, Intel has launched its Precompiled Shader Distribution Beta. With this beta, users of ARC B-series (Battlemage) GPUs and Intel Core Ultra 3-series and 2-series CPUs with built-in ARC GPUs can benefit from precompiled shaders […]

The post Intel launches Precompiled Shader Delivery with its ARC GPUs appeared first on OC3D.

Now anyone can host a global AI challenge

Kaggle Community Hackathons enable people to create their own hackathons with prizes up to $10,000.

Introducing the new full-stack vibe coding experience in Google AI Studio

Start building real apps for the modern web with the Antigravity coding agent and Firebase integration now in Google AI Studio.

This new translation tool turns your real thoughts into "LinkedIn Speak" — and it’s as cursed as you’d imagine

Arctic Launches Senza AI 370 Fanless PC With Ryzen AI 9 HX 370 and 32 GB of RAM

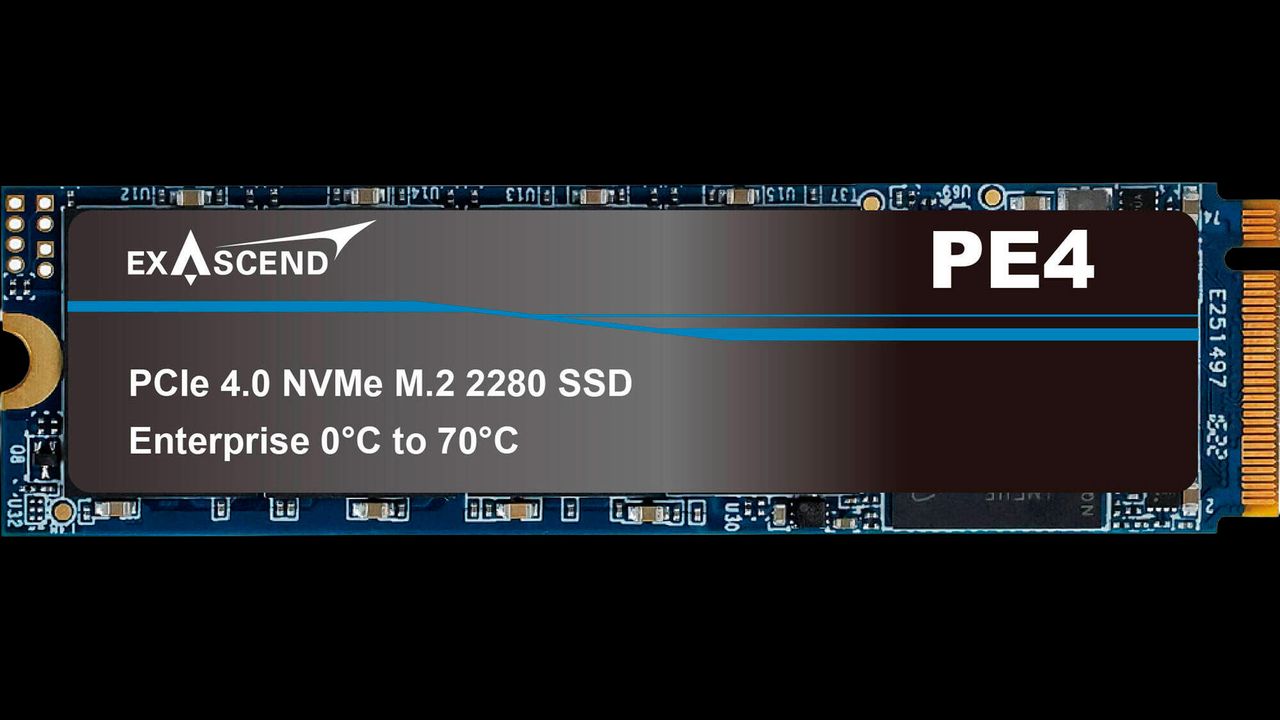

Arctic markets the Senza as a silent gaming and productivity machine and claims that it can operate at temperatures as low as 50° in gaming workloads—although there were no specifics on the ambient temperature or the exact games and settings tested, so take that with a pinch of salt. To its credit, the Senza AI 370 does have 32 GB of DDR5X-8000 memory and a 1 TB PCIe Gen 4 ×4 M.2 SSD. The I/O situation is also interesting, with Arctic opting for a break-away front I/O panel module that features a 3.5 mm audio combo jack, a USB 3.2 Gen 1 Type-A port, and a USB4 Type-C port—this module is connected to the PC via a cable, so that the ports can be mounted near the front of the desk. The PC itself also features the following ports: 2× USB 2.0, 2× USB 3.2 Gen 2 Type-A, 1× USB4 Type-C, 1× HDMI 2.1, 1× DisplayPort 2.1, 1× 2.5 GbE, 1× DC in, and separate 3.5 mm audio jacks for mic in and audio out. Because the PC is designed to be mounted under a desk, what would traditionally be the rear I/O is also front-facing, which should make it easier to reach.

NVIDIA DLSS 5 Gets 84% Dislikes on YouTube as Backlash Grows

Gamers' reactions are shifting negatively towards the technology, while NVIDIA CEO Jensen Huang famously noted that gamers are "completely wrong" because these games offer massive programmability and controllability in how DLSS 5 is applied, keeping the artistic intent intact. However, according to game developers from both Capcom and Ubisoft who spoke to Insider Gaming, while the individual studios may have been involved in marketing DLSS 5, the teams who worked on them were just as surprised by the results as the rest of the gaming community. A Ubisoft developer is quoted as saying, "We found out at the same time as the public," while developers at Capcom expressed similar sentiments, stating that it was surprising to see Capcom, which has generally been protective of its IPs when it comes to AI involvement, getting involved in the marketing for DLSS 5. Furthermore, the Capcom developers expressed concern about how DLSS 5 might change Capcom's approach to generative AI and its role in game development.

Val Kilmer will appear in a new movie as an AI recreation approved by his family

According to Variety, Kilmer was cast as a Catholic priest and Native American spiritualist in 2020 for a movie called "As Deep as the Grave," but his battle with throat cancer meant he was too ill to make it onto the set.

North Korea deployed 100,000 fake IT workers to infiltrate Western companies, making $500M a year for Kim Jong Un

According to cybersecurity firms Flare Research and IBM X-Force, North Korea is using a network of more than 100,000 hackers, developers, and IT operatives to infiltrate global companies, steal people's private data, and funnel hundreds of millions of dollars to the Kim Jong-Un regime.

What patents reveal about the foundations of AI search

Every time a new large language model (LLM) drops or Google tweaks an AI Overview, the SEO industry loses its mind. We develop this weird collective amnesia, scrambling to optimize for features that were actually mapped out in patent offices 10 years ago. We’re so obsessed with the now and the next that we’ve stopped looking at the blueprints.

If you want to survive 2026, stop trying to be a futurist. Instead, be an archaeologist.

To actually deliver for our clients, we need a research framework that isn’t just reactive. It has to be a balance: Look back at the foundational patents to understand the rules, and look ahead to see how AI is finally being given the muscle to enforce them.

The archaeology of SEO

There’s a massive misconception that to understand AI search, you need to be a prompt engineer or read every new research paper from OpenAI. You don’t. The logic governing today’s magic is often math that was written a decade ago.

We can’t talk about patent research without honoring the late, great Bill Slawski. For 20 years, he was the SEO industry’s archaeologist. While everyone else was arguing about keyword density, he was reading dry, technical filings to predict exactly where we’re standing right now.

History proves his method worked.

- Agent rank (2007): Slawski analyzed agent rank nearly 20 years ago. It described digital signatures connecting content to authors and assigning reputation scores. We ignored it then. Now? We call it E-E-A-T. Google finally got the computing power to actually run the numbers.

- The fact repository (2006): Long before the Knowledge Graph was a thing, Slawski found patents for a “Browseable Fact Repository.” Today, that same logic is the engine behind answer engines.

The algorithm isn’t magic. It’s math. When a new feature drops today, the engineering blueprints were likely filed between 2007 and 2016. If you want to win, go read the old stuff.

Dig deeper: The origins of SEO and what they mean for GEO and AIO

Strategy vs. mechanics: From ‘strings’ to ‘verified things’

Don’t get buried in buzzwords. Categorize your learning into two buckets: “strategy” or “mechanic.”

For years, the industry talked about moving from strings to things (entities). But in 2026, that’s just the baseline. We’ve moved from strings to verifiable things. An entity is worthless if the AI can’t prove it’s real.

Think of it like building a house:

- Semantic SEO is the architecture: It’s the vision. It’s making sure the meaning of your site actually matches what the user is looking for.

- Entity SEO is the bricklaying: It’s using distinct nouns to build that vision so a machine can parse it.

- Verification is the mortgage: This is the part most people miss. It’s turning those entities into findable, provable facts connected to a verified human. If you aren’t connecting your content to a provable human expert, you’re just adding to the noise.

AEO vs. GEO: Let’s stop using these interchangeably

The industry often uses AEO and GEO synonymously, but they require different content structures and serve different objectives.

Answer engine optimization (AEO)

AEO is for the “direct answer.” Think Siri, Alexa, or that single snippet at the top of the page. It’s binary. It’s rooted in those 2006 fact repository patents.

You need “confidence anchors.” These are unnuanced, structured facts. The engine isn’t “thinking,” it’s fetching. If your fact isn’t provable and anchored to a verified source, the engine won’t risk a hallucination by citing you.

Generative engine optimization (GEO)

GEO is for the “synthesis.” This is Gemini or ChatGPT search explaining how something works. It was formally defined by researchers at Princeton and Georgia Tech in 2023.

You need information gain. These engines don’t just want a fact; they want to see how Concept A affects Concept B. They’re looking for relationships and unique perspectives.

In short, AEO is about being the fact. GEO is about being the authority that the AI trusts to explain those facts.

Dig deeper: SEO, GEO, or ASO? What to call the new era of brand visibility in AI [Research]

The trap of forward-projecting: Why the ‘basics’ are still the ‘floor’

There’s a danger in becoming an SEO time traveler. If you spend all your time in the patent archives or stress-testing GEO relationships, you might forget that the AI still has to reach your content.

You can have the most verified, E-E-A-T-heavy content in the world, but if your site’s technical health is a mess, the confidence anchors will never weigh in.

The persistence of technical debt

Basic SEO requirements haven’t changed. The tolerance for ignoring them has simply disappeared.

- Crawl budget and efficiency: If your site is bloated with zombie pages or redirect loops, you’re wasting the crawler’s time. LLMs aren’t just looking for content. They’re looking for the cleanest path to a fact.

- Core Web Vitals (CWV): More than a ranking factor, it’s a user-utility requirement. If your site doesn’t load instantly, the AI won’t recommend it as a source in a GEO overview.

The headless promise (and reality)

Many of the frustrating technical SEO issues we’ve fought for years — like bloated JavaScript and poor Largest Contentful Paint (LCP) — are finally being solved by headless/composable architectures. By decoupling the front end from the back end, we can deliver the raw, lightning-fast data that answer engines crave while maintaining a high-end experience for humans.

But headless isn’t a “get out of SEO jail free” card. It solves the speed problem, but it introduces new risks around dynamic rendering and metadata delivery.

Whether you’re on a 20-year-old CMS or a cutting-edge headless build, today’s requirements are non-negotiable:

- Clean URL structures: If the AI can’t deduce the hierarchy from the URL, you’ve already lost the semantic battle.

- Internal linking (the nervous system): This is how you prove relationships between entities. If your internal linking is broken, your synthesis logic doesn’t exist.

- Indexability: If the bot is blocked by a poorly configured robots.txt or a noindex tag left over from staging, the most brilliant “verified human” insights in the world are invisible.
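The indexability check above can be scripted. What follows is a minimal sketch, not a full crawler: it assumes you already have the robots.txt text and the page HTML in hand, and the bot name, URLs, and helper names (`is_blocked_by_robots`, `has_noindex`) are hypothetical. It relies only on Python’s standard library.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class RobotsMetaParser(HTMLParser):
    """Collects the content of every <meta name="robots"> tag on a page."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "meta" and (attr_map.get("name") or "").lower() == "robots":
            self.directives.append((attr_map.get("content") or "").lower())


def is_blocked_by_robots(robots_txt, user_agent, url):
    """True if the given robots.txt text disallows this URL for this agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


def has_noindex(html):
    """True if the page carries a robots meta tag containing 'noindex'."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return any("noindex" in directive for directive in parser.directives)


# Hypothetical inputs: a staging rule and a leftover noindex tag.
ROBOTS_TXT = "User-agent: *\nDisallow: /staging/\n"
STAGING_HTML = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
LIVE_HTML = "<html><head><title>Live page</title></head></html>"

print(is_blocked_by_robots(ROBOTS_TXT, "ExampleBot", "https://example.com/staging/page"))
print(is_blocked_by_robots(ROBOTS_TXT, "ExampleBot", "https://example.com/blog/post"))
print(has_noindex(STAGING_HTML), has_noindex(LIVE_HTML))
```

This only covers the robots meta tag and robots.txt. A noindex sent through an HTTP response header would need a separate check.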

You don’t get to play in the frontier of AEO and GEO until you’ve mastered the floor of technical SEO. Don’t let the shiny new objects make you forget the shovel work.

Dig deeper: Thriving in AI search starts with SEO fundamentals

The SEO time traveler checklist

Phase 1: The archive

- The Slawski deep dive: Stop reading the latest “AI is changing everything” blog posts for five minutes. Go back to the SEO by the Sea archives. Search for Slawski’s analysis on the Knowledge Graph or the user context. You’ll see the 2026 roadmap hidden in plain sight.

- The E-E-A-T math audit: Check your assets against Patent 2015/0331866. Are you actually providing the contribution metrics (such as verifiable reviews) that the patent specifically asks for?

Phase 2: The laboratory

- The verification pivot: Audit your entities. Are they just names on a page? Link them to a verified LinkedIn profile or a Knowledge Panel. If it’s not verified, it’s not an entity, it’s just a string of text.

- Schema stress testing: Don’t just use a plugin and walk away. Experiment with nesting. Try nesting a Person inside a Service as the provider. It works — I’ve seen it trigger rich results when nothing else did.
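To make the nesting idea concrete, here is a small sketch that builds JSON-LD with a Person nested inside a Service as the `provider`. The service name, person name, and profile URL are placeholders, and `build_service_jsonld` is a hypothetical helper. Treat it as a starting point for experiments, since nothing here guarantees a rich result.

```python
import json


def build_service_jsonld(service_name, person_name, profile_url):
    """Return schema.org Service markup with a nested Person as provider."""
    return {
        "@context": "https://schema.org",
        "@type": "Service",
        "name": service_name,
        "provider": {
            "@type": "Person",
            "name": person_name,
            # sameAs points the entity at a profile that can be verified.
            "sameAs": [profile_url],
        },
    }


# Placeholder values for illustration only.
markup = build_service_jsonld(
    "Technical SEO Audit",
    "Jane Example",
    "https://www.linkedin.com/in/jane-example",
)

# Paste the output into a <script type="application/ld+json"> tag.
print(json.dumps(markup, indent=2))
```

Run the output through a structured data validator before shipping it, then compare results against the un-nested version.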

Phase 3: The frontier

- The confidence anchor audit: Look at your top pages. Does every topic have a clear definition? [Entity] is [attribute]. If you’re being vague, you’re invisible to AEO.

- The synthesis test: This is a quick one. Paste your article into an LLM and ask it to explain the relationship between your two main topics using only your text. If it has to go to the web to find the answer, you haven’t built the relationship well enough for GEO.

The synthesis: Becoming the architect

The SEO time traveler isn’t looking back because they’re nostalgic. They’re looking back because they want the blueprint. When you realize AEO is just the modern enforcement of a 20-year-old patent and GEO is just the evolution of semantic relationships, the chaos of AI updates disappears.

Stop optimizing for strings. Start optimizing for verified facts. Give the engine a fact it can’t doubt, connected to a person it trusts, and a relationship it can’t ignore.

The future of search wasn’t written this morning — it was written years ago. You just have to be the one to actually build it.

Dig deeper: The future of SEO: Why optimization still matters, whatever you call it

References and further reading

On the evolution of fact-based search (AEO foundations)

- The fact repository patent: Google LLC. (2006). Browseable Fact Repository. U.S. Patent 7,761,436. Analyzing the architecture of structured information retrieval.

- Knowledge Vault research: Dong, X. L., et al. (2014). Knowledge Vault: A Web-Scale Infrastructure for Probabilistic Knowledge Fusion. Google Research. Detailing how engines assign “confidence scores” to facts before retrieval.

- Authoritative verification: Google Search Central. Fact Check Structured Data (ClaimReview). Official documentation on how engines verify claims through structured data.

On generative engine optimization (GEO foundations)

- The GEO framework: Aggarwal, V., et al. (2023). GEO: Generative Engine Optimization. Princeton University, Georgia Institute of Technology, and the Allen Institute for AI. The definitive study on how LLMs cite and prioritize authoritative sources.

- The Slawski legacy: Slawski, B. (Various). SEO by the Sea Archives. For historical context on Agent Rank, phrase-based indexing, and entity metrics.

MSI MPG X870E Carbon Max Wifi Review: Small tweaks, same Carbon DNA

AnySlate – Write Markdown faster with AI and real-time collaboration

AnySlate is a modern Markdown editor for macOS, Windows, Linux, and the browser. It delivers a fast writing experience with real-time collaboration, cloud sync, and version history. Use AI to summarize, rephrase, and improve drafts, or extend capabilities via MCP. You can preview and export with professional control, publish to the web, and customize themes and styling so your workspace fits the way you write.

ThreatsDay Bulletin: FortiGate RaaS, Citrix Exploits, MCP Abuse, LiveChat Phish & More

New Perseus Android Banking Malware Monitors Notes Apps to Extract Sensitive Data

Is this the game-changer? TCL's new micro-LED TV massively cuts the price of the 'OLED-killer' tech — and it launched a new 300Hz 'Super Quantum Dot' TV too

Crimson Desert developer's shares tank despite the game's strong Metacritic score

HP’s Intel Core Ultra 7 265 mini PC workstation for developers and professionals is 50% off right now - a half price Best Buy Tech Fest steal

‘This is what Val wanted’ — Val Kilmer’s AI movie return has his family’s blessing, but it feels deeply unsettling

Rivian sacrifices 2027 profit goal to push deeper into autonomy

K2 to launch its first high-powered satellite for space compute

Tools for founders to navigate and move past conflict

Alphabet’s X has a new spinout, and it’s going after one of the world’s most expensive bureaucratic nightmares

Consumer-focused privacy company Cloaked raises $375M as it expands to enterprise

Opera GX Gaming Browser launches on Linux

Opera GX acknowledges PC gaming’s Linux shift with official browser support

Opera GX has officially arrived on Linux, giving Linux users a gaming-focused browser option. As a web browser, Opera GX prides itself on its performance, privacy, and customisability. These are all traits that Linux users love. At launch, the browser is available in Debian […]

The post Opera GX Gaming Browser launches on Linux appeared first on OC3D.

AI shopping gets simpler with Universal Commerce Protocol updates

Universal Commerce Protocol (UCP) releases new capabilities, and Google shares a new onboarding experience to simplify UCP integration.

A new milestone for smart, affordable electricity growth

We’ve signed 1 GW of data center demand response with utility partners, supporting smart, affordable electricity growth.

Microsoft confirms Windows 11 version 26H1 support lifecycle dates — here's how long your new Snapdragon X2 laptop will be supported on 26H1

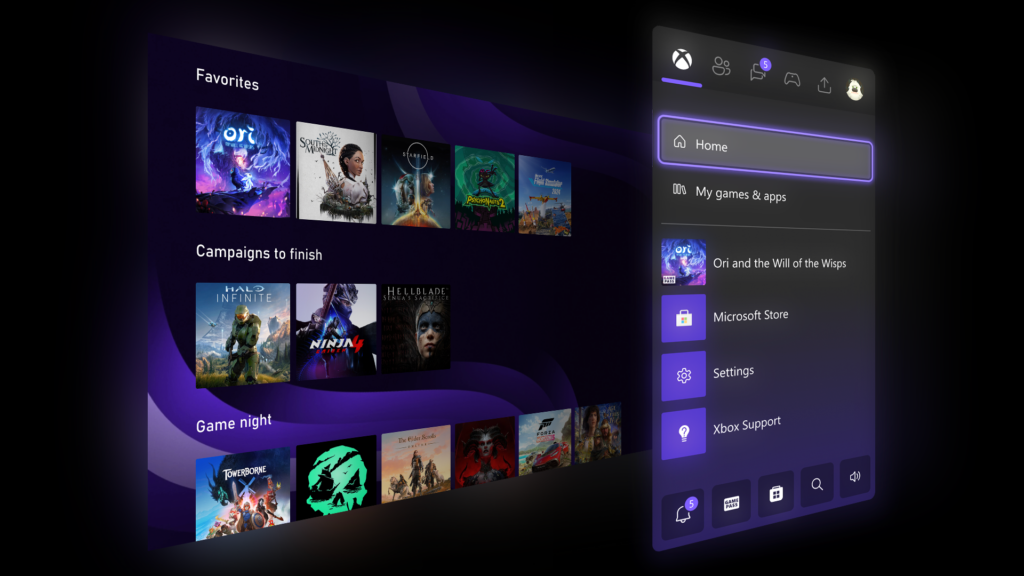

(PR) NVIDIA GeForce NOW Gets 90 FPS VR, Crimson Desert, and More Games

VR, but Make It 90 FPS

VR in the cloud is getting a smoothness upgrade. GeForce NOW is boosting support for Apple Vision Pro, Meta Quest devices and Pico devices to stream at up to 90 FPS for Ultimate members, bringing crisper motion and more responsive gameplay straight from the cloud. The app update starts rolling out to members today. Members can transform the space around them into a personal gaming theater with GeForce NOW, playing favorite PC titles on a massive virtual screen. With support for up to 90 FPS for Ultimate members, gameplay feels smoother, movement more natural and action more comfortable. All premium members can continue to enhance their experiences with NVIDIA RTX and DLSS technologies in supported titles. Just fire up GeForce NOW on supported VR platforms, step into a virtual big screen and let the cloud handle the heavy lifting. From chill sessions in a virtual theater to high‑octane firefights, 90 FPS helps keep every moment looking sharp and feeling responsive.

(PR) Corsair Announces the Airflow-Focused 3200D Mid Tower Chassis

[Editor's note: Our in-depth review of the Corsair 3200D RS ARGB is now live]

Smart Design, Serious Cooling

The 3200D is thoughtfully engineered to make the most of its footprint while providing ample room for the most powerful hardware available. It offers up to 375 mm of GPU clearance, so even extra-large GPUs like the NVIDIA RTX 5090 have plenty of room to breathe. The spacious interior supports 9x 120 mm or 4x 140 mm fans, with the option to run a 360 mm AIO liquid CPU cooler in either the front or the roof of the chassis for robust CPU cooling. It is also ready for next-gen hardware, with full support for reverse connector motherboards designed for showcase builds with no visible cables.

(PR) EK by LM TEK Launches EK-Pro GPU Water Block for NVIDIA H200 NVL

Dual-Pass Cooling Engine for Maximum Thermal Efficiency

At the core of the EK-Pro GPU WB H200 NVL is a dual-pass cooling engine, engineered to maintain high flow rate. The coolant flows sequentially through two optimized microfin stacks, cooling each half of the GPU die and HBM memory to ensure uniform heat dissipation. This design maintains consistent flow distribution and thermal performance, minimizing losses even in reversed flow scenarios, while delivering efficient cooling across the entire GPU surface.

(PR) Sharkoon Releases S100 ARGB All-in-One CPU Cooler

Efficient Cooling

With its 360 mm radiator, the S100 ARGB offers excellent heat dissipation, reliably keeping high-performance systems at the optimum temperature, even at high loads. This makes it the ideal solution for demanding gaming PCs as well as powerfully equipped workstations.

(PR) Silicon Power Launches Enterprise-Grade DDR5 RDIMM for AI Workloads

Advanced Reliability, Broad Compatibility, and Power Efficiency

Engineered for uncompromising stability, Silicon Power's DDR5 RDIMM integrates advanced On-Die ECC technology alongside a Registering Clock Driver (RCD), significantly enhancing fault tolerance compared to standard ECC UDIMMs. This robust design ensures superior data integrity, making it ideal for mission-critical enterprise applications that require continuous uptime.

New iPhone spyware Darksword spread through hacked websites, putting millions at risk

The discovery, announced Wednesday in coordinated reports from Lookout, iVerify, and Google's Threat Analysis Group, highlights a growing trade in high-end spyware once found mostly in state-backed espionage. Darksword marks the second iOS-targeting exploit uncovered this month, following the earlier disclosure of a separate tool known as Coruna. Both were...

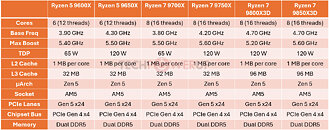

AMD could answer the Intel Core Ultra 200K Plus with faster Ryzen refresh

A well-known leaker recently shared basic specifications for two unannounced AMD desktop processors. The tentatively named Ryzen 7 9750X and Ryzen 5 9650X appear to be refreshes designed in response to Intel's recently unveiled Core Ultra 7 270K Plus and Core Ultra 5 250K Plus.

Amazon says USPS 'walked away at the eleventh hour' from delivery deal talks

Amazon said it spent more than a year working toward a new long-term agreement with USPS before its current contract expires on September 30, 2026.

Multi-location SEO strategy: Stop competing with your own content

Multi-location brands are investing heavily in content. But more content doesn’t automatically mean more growth.

I keep seeing the same issue. Each individual location has a blog, and they all cover the same topics. Same keywords. Same structure. Same search intent. The goal is local visibility, but the result is often internal competition and diluted authority.

Building an effective content strategy for multi-location brands requires clarity around roles. What should live at the corporate level to build authority, and what should stay local to drive relevance and conversions? Without that alignment, brands risk competing with themselves instead of winning in search.

Where the strategy breaks down

Most multi-location content issues aren’t intentional. They’re often the result of growth without a clear content framework, or simply too many cooks in the kitchen without overall governance.

Corporate teams are focused on building brand authority and scaling marketing efforts. At the same time, local teams or franchisees want content that answers their customers’ questions and lives on their own site, rather than sending users elsewhere. The assumption is simple: more content equals more visibility.

However, without clear ownership or strategic keyword targeting, overlap becomes inevitable. Similar topics are published across multiple URLs, and over time, this creates internal competition rather than building authority for the entire site.

What type of content belongs at corporate

In general, corporate should own the content that applies to the brand as a whole and build authority at scale. This includes blog content that targets broader informational queries and answers user questions, no matter where users are located.

Educational resources, industry insights, and evergreen topics perform best when consolidated in one place rather than duplicated across multiple URLs.

Core service, product, and line-of-business pages should also be centralized. These pages define what the brand offers and typically remain consistent across markets. While location pages can reference and support this foundational content, they often don’t need to be recreated at the local level unless they differ between locations.

Brand-level content, such as company history, leadership, mission, and differentiators, should also sit at the corporate level. These elements reinforce credibility and should be standardized across the organization.

Dig deeper: Local content playbook: From service pages to jobs-to-be-done pages

What type of content belongs at the local level

When it comes to local content, focus on what’s relevant to that specific market. This includes geo-specific content such as:

- Location landing pages with unique, customized copy.

- Localized metadata.

- Location-specific FAQs, relevant structured data (e.g., reviews, LocalBusiness).

- In some cases, region-specific service variations.

On location pages specifically, there are additional opportunities to highlight uniqueness:

- Location-specific testimonials and reviews.

- Team bios.

- Owner messages or stories.

- Events or awards.

- Community partnerships.

- Descriptive content about the location or service area.

- Location-specific imagery.

These elements can live on a single, well-built location page or expand into a microsite structure (pages living under a subfolder) when it makes sense for the business. Remember, the goal of these pages is to strengthen relevance, target geo-modified and local intent queries, and ultimately drive conversions.

One common concern with location pages is duplicate content. The question often becomes, how much duplicate content is acceptable? Instead of focusing on a percentage of unique versus shared content, teams should focus on what’s most useful for the user.

Typically, content that doesn’t need to be unique across every location includes:

- Brand boilerplates.

- Core service lists.

- Service or product descriptions.

- Standard calls to action.

- Legal disclaimers.

- Navigation.

- Trust signals.

Dig deeper: Local SEO sprints: A 90-day plan for service businesses in 2026

Common SEO risks of a faulty content strategy

When content production lacks clear governance, it can lead to a range of issues that affect organic visibility and crawl efficiency. Over time, this can cause inconsistent rankings, diluted authority, and missed opportunities to convert traffic into leads.

Keyword cannibalization

Keyword cannibalization occurs when multiple pages across a site target the same keywords and search intent. Instead of strengthening rankings, those pages end up competing against each other in search results, and, in some cases, may not get indexed at all.

For multi-location brands, this often happens when individual locations publish similar blog content. For example, a plumbing brand might have multiple location blogs, each publishing a post titled “Tips to fix a leaky faucet,” creating several URLs targeting the same informational query.

A more strategic approach is to consolidate that topic into a single, strong corporate-level post. This would allow the brand to serve as the authoritative source, build backlinks, answer users’ questions effectively, and strengthen the site’s overall credibility.
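One way to spot this overlap before consolidating is to group pages by target keyword and search intent. The sketch below assumes you maintain a keyword map as a simple list of records. The field names (`url`, `keyword`, `intent`), the sample URLs, and the `find_cannibalization` helper are hypothetical.

```python
from collections import defaultdict


def find_cannibalization(pages):
    """Return keyword/intent pairs that are targeted by more than one URL."""
    groups = defaultdict(list)
    for page in pages:
        # Normalize the keyword so casing and stray spaces don't hide overlap.
        key = (page["keyword"].strip().lower(), page["intent"])
        groups[key].append(page["url"])
    return {key: urls for key, urls in groups.items() if len(urls) > 1}


# Hypothetical keyword map for a multi-location plumbing brand.
PAGES = [
    {"url": "/austin/blog/fix-leaky-faucet", "keyword": "Fix a leaky faucet", "intent": "informational"},
    {"url": "/cleveland/blog/fix-leaky-faucet", "keyword": "fix a leaky faucet ", "intent": "informational"},
    {"url": "/austin/", "keyword": "plumber austin", "intent": "local"},
    {"url": "/cleveland/", "keyword": "plumber cleveland", "intent": "local"},
]

overlaps = find_cannibalization(PAGES)
for (keyword, intent), urls in overlaps.items():
    print(f"{keyword} ({intent}): {len(urls)} competing URLs -> {urls}")
```

Exact keyword matching is a deliberately crude signal. Near-duplicate topics with different phrasing would need a fuzzier comparison.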

Google choosing the ‘wrong page’

When multiple pages on a website are targeting the same or overlapping keywords, search engines have to determine which one to rank, and sometimes it’s not the page you intended.

On a multi-location site, that may mean a local blog ranks nationally for a topic that would be better suited to live on the corporate site and build broader brand authority. While the page may be relevant to the query, it may not guide users clearly to the next step, leading to customer confusion or bounces.

It may also cause users who aren’t in-market to leave the site after absorbing the information because there’s no clear next step for them, or because they only see information about services in Austin, Texas, while they’re located in Cleveland, Ohio.

Instead, consolidating authority on a single, well-ranking page that clearly directs users to take action, whether that means finding their nearest location or submitting a form, would be more beneficial for the brand and users.

Crawl inefficiencies

Publishing multiple blog posts on the same topic, especially when the answer doesn’t vary by location, can result in duplicate or low-value content. While these pages may be regularly crawled due to internal linking, they often never make it into the index.

At scale, this can become a bigger issue, especially for sites with many locations that publish similar informational topics. For a site with dozens or hundreds of locations, having similar blog posts across those locations can create crawl bloat, where search engines may spend time and resources crawling repetitive or low-impact URLs rather than higher-impact pages.

Diluted link equity

When similar content exists across multiple URLs, backlinks and internal links are split among pages instead of consolidating authority on a single strong page. Rather than building momentum around a single piece of content, link equity is distributed across competing versions.

For multi-location brands, this can weaken overall ranking potential. Consolidating authoritative content at the corporate level allows links, authority, and trust signals to compound, strengthening the entire domain and supporting location pages more effectively.

Dig deeper: The local SEO gatekeeper: How Google defines your entity

Creating a plan: How corporate and local can work together

After defining roles, move to governance. Multi-location brands need a shared plan for ownership, keyword targeting, and team collaboration.

Before new content gets created, the right questions need to be asked, such as:

- Is this topic location- or region-specific, or is it broader for any consumer?

- Would publishing this for only one location add value to those specific customers?

- Would publishing it across multiple locations make sense?

- Who should own the keyword? The brand or a specific location?

- Who is the most sensible source for this information?

Clear keyword mapping and a centralized content calendar can prevent overlap before it starts. When teams understand their roles, content supports overall growth instead of competing internally.

Content collaboration also creates opportunities to strengthen E-E-A-T signals for the site as a whole. Corporate can cover broader educational topics while drawing on real expertise and experience from local teams.

For example, a roofing company might want to write a post about how often homeowners should replace their roofs. The topic is universal. However, the answer could vary by region due to factors such as the material used in that area or the weather.

The blog could include quotes from franchise owners or team members across different regions to provide insights into regional factors, such as heat and humidity in the South versus harsh winter weather in the North.

This would allow corporate to own the topic and give locations the opportunity to provide their unique expertise and experiences. Plus, linking to relevant location pages can reinforce context and create stronger internal linking throughout the site.

Another option would be to create a local hub within the blog.

Volume isn’t always the right strategy

Search may be changing, but many of the fundamentals remain the same. High-quality, well-structured content that genuinely helps users is what earns visibility.

With Google’s AI Overviews and large language models pulling from authoritative sources, content that clearly answers questions and reflects real expertise is even more valuable. Pages created solely to scale across multiple locations — without adding unique value — are unlikely to perform consistently, and can even hurt a site in the long run.

Content shouldn’t be treated as a volume game. More pages alone won’t drive growth. What matters is planning, ownership, and alignment.

When corporate and local teams build a shared content strategy, it helps turn content into a growth driver rather than just more pages on a site.

Your SEO maturity score doesn’t measure what you think it does

The Visibility Governance Maturity Model (VGMM) is about something most SEO programs lack: clear ownership, documented processes, and decision rights that keep your work from being undone by teams who don’t understand it.

So how do you actually score that?

Each domain uses a bank of governance questions tailored to the business. They’re not about how SEO is executed. They’re not about tools. And they’re not an audit.

What VGMM questions are designed to reveal

VGMM questions go to managers and the C-suite — the people who should know about governance but often don’t. Meanwhile, you (the SEO practitioner) actually know whether standards are documented, whether QA is in place, and whether processes exist.

VGMM diagnoses organizations where SEO knowledge lives in practitioners’ heads, rather than in documented, governed processes. If VGMM surveyed only practitioners, it would measure whether you know what to do (you do). But governance maturity measures whether the organization can sustain capability when you’re on vacation, when you get promoted, or when you leave.

Questions go to managers because governance gaps show up as:

- “I don’t know the answer to that.”

- “I’d have to ask Sarah.”

- “We used to have a process, but it’s not enforced anymore.”

- “Each team does it differently.”

- “That’s documented somewhere, I think?”

When managers can’t answer governance questions, that’s the signal. It means processes aren’t institutionalized.

Dig deeper: Why most SEO failures are organizational, not technical

The SPOF reality check

Single point of failure (SPOF) questions can cap your organization at Level 2 maturity until they’re resolved.

Here are some examples of SPOF questions:

- “If [key person] left tomorrow, could the organization maintain SEO standards without them?”

- “Is SEO knowledge documented in a way that’s transferable to new team members?”

- “Are there at least two people who understand how [critical system] works?”

Right now, you’re probably the SPOF. You’re the person who knows where all the bodies are buried, how the redirects work, why that weird canonical setup exists, and what breaks if someone changes X. That feels like job security. It’s actually a job prison.

When VGMM identifies you as an SPOF:

- Leadership realizes your knowledge needs to be documented.

- You get resources to create documentation.

- You get approval to train other people.

- You get your own tools, training, and conference budgets. (Yay!)

- Your expertise becomes institutional, not personal.

- You can take a vacation without disasters.

The organization can’t move past Level 2 until SPOF conditions are cleared. This forces leadership to address hero-dependency.

How domain scores become the VGMM score

Each domain model (SEOGMM, CGMM, WPMM, etc.) produces a maturity score based on its own question bank. Here’s how they roll up:

Step 1: Domain assessment

Each domain asks 30-60 governance questions tailored to that area. Questions are behavior-based, not opinion-based:

- “Do you think SEO standards are important?” (opinion)

- “Are SEO standards documented and approved by [role]?” (behavior)

Step 2: Weighted scoring

Answers are weighted based on impact. Not all governance failures are equal:

- Missing documentation = lower weight.

- No ownership for critical decisions = higher weight.

- SPOF identified = can cap maturity level regardless of other scores.

Step 3: SPOF constraint

If SPOF conditions exist, the domain score maxes out at Level 2 (emerging) even if other governance is strong. You can’t be structured (Level 3) when capability depends on one person.

Step 4: Domain aggregation

Domain scores average into the overall VGMM score with adjusted weighting based on:

- Your industry (ecommerce weights performance governance higher).

- Your business model (SaaS weights content governance higher).

- Your complexity (international weights workflow governance higher).

Step 5: Final maturity level

The overall VGMM score maps to maturity levels:

- Level 1 (0-30%): Ad hoc/unmanaged

- Level 2 (31-50%): Aware/emerging

- Level 3 (51-70%): Structured/defined

- Level 4 (71-90%): Integrated/coordinated

- Level 5 (91-100%): Optimized/sustained
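The five steps above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the official VGMM calculation: the answer weights, domain weights, and sample data are made up, the SPOF cap is modeled as a ceiling of 50% (the top of the Level 2 band), and fractional scores are assigned to the first band whose upper bound they don’t exceed.

```python
# Upper bound of each band, taken from the maturity levels listed above.
LEVELS = [
    (30, 1, "Ad hoc/unmanaged"),
    (50, 2, "Aware/emerging"),
    (70, 3, "Structured/defined"),
    (90, 4, "Integrated/coordinated"),
    (100, 5, "Optimized/sustained"),
]
SPOF_CAP = 50.0  # Assumption: a SPOF caps the domain at the top of Level 2.


def domain_score(answers, has_spof):
    """Score one domain from (passed, weight) answers, applying the SPOF cap."""
    total = sum(weight for _, weight in answers)
    if total == 0:
        raise ValueError("domain has no weighted questions")
    earned = sum(weight for passed, weight in answers if passed)
    score = 100.0 * earned / total
    return min(score, SPOF_CAP) if has_spof else score


def overall_score(domain_scores, domain_weights):
    """Weighted average of domain scores."""
    total_weight = sum(domain_weights[name] for name in domain_scores)
    weighted = sum(domain_scores[name] * domain_weights[name] for name in domain_scores)
    return weighted / total_weight


def maturity_level(score):
    """Map a 0-100 score to its (level, label) pair."""
    for upper, level, label in LEVELS:
        if score <= upper:
            return level, label
    raise ValueError("score must be between 0 and 100")


# Hypothetical assessment: strong SEO governance, but one person holds it all.
scores = {
    "SEOGMM": domain_score([(True, 3), (True, 2), (False, 1)], has_spof=True),
    "CGMM": domain_score([(True, 1), (False, 3)], has_spof=False),
}
weights = {"SEOGMM": 0.6, "CGMM": 0.4}  # Illustrative weighting only.

total = overall_score(scores, weights)
print(scores, round(total, 1), maturity_level(total))
```

In this made-up example, the SEO domain would score about 83% on its answers alone, but the SPOF flag holds it at 50%, which is the behavior Step 3 describes.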

Why questions change between models

Domain questions adapt to the maturity model being used.

SEOGMM questions focus on:

- Technical SEO governance (schema, redirects, crawl management).

- Content optimization standards.

- Performance monitoring and alerts.

LVMM questions focus on:

- Location data governance across distributed sites.

- Google Business Profile management and ownership.

- Review response workflows and accountability.

- NAP (Name, Address, Phone) consistency.

IVMM questions focus on:

- Market-specific SEO governance across countries.

- Translation workflow and quality controls.

- Local compliance and regulatory requirements.

- Cross-market coordination and escalation.

Same governance principles, different operational contexts. An ecommerce company doesn’t need LVMM. A restaurant chain with 500 locations absolutely does.

Dig deeper: SEO’s future isn’t content. It’s governance

Why you can’t (and shouldn’t) compare scores

VGMM scores are internal quality metrics, not competitive benchmarks. A 62% score doesn’t mean you’re ahead of another organization at 58%. Here’s why.

Weighting varies by business model

- Ecommerce company: Performance governance weighted 30%.

- Information publisher: Content governance weighted 35%.

- Service company: Workflow governance weighted 25%.

Domain combinations vary by organization

- Organization A: SEOGMM + CGMM + WPMM + IVMM (international).

- Organization B: SEOGMM + CGMM + WPMM + LVMM (multi-location).

Not comparing apples to apples.

Organizational context changes what scores mean

- Startup at 45% with 10 people = impressive, mature for size.

- Enterprise at 45% with 500 people = serious governance gaps.

Strategic priorities shape the score

- Organization prioritizing organic visibility: SEOGMM weighted higher.

- Organization focused on technical debt: WPMM weighted higher.

The only meaningful comparison is your organization against itself over time:

- Q1 2025: 42% (Level 2)

- Q3 2025: 58% (Level 3) ← Progress

- Q1 2026: 61% (Level 3) ← Sustained improvement
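Tracking against yourself is simple arithmetic. The Python sketch below uses the three example scores above. The `history` structure and the `score_changes` helper are hypothetical names, not part of VGMM.

```python
# Assessment history from the example above: (period, overall score in percent).
history = [("Q1 2025", 42), ("Q3 2025", 58), ("Q1 2026", 61)]


def score_changes(entries: list[tuple[str, int]]) -> list[tuple[str, int]]:
    """Return (period, change in percentage points) for each assessment after the first."""
    return [
        (later_period, later_score - earlier_score)
        for (_, earlier_score), (later_period, later_score) in zip(entries, entries[1:])
    ]


if __name__ == "__main__":
    for period, change in score_changes(history):
        print(f"{period}: {change:+d} points")
```

A positive change in each period answers the first question below directly. Running the same calculation per domain would show which domains are holding the total back.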

Use VGMM to answer:

- Are we improving quarter over quarter?

- Which domains are holding us back?

- Where should we invest in governance?

- Are SPOF conditions getting resolved?

Don’t use VGMM to answer:

- Are we better than Competitor X?

- What’s the industry average score?

- Should we publicize our score?

What VGMM scoring means for you

As an SEO practitioner, this scoring approach protects you.

You’re not being blamed

When governance assessment reveals gaps, managers are answering questions about organizational capability. They’re not evaluating your individual performance. The assessment asks, “Does the organization have documented standards?” not “Is the SEO person doing a good job?”

SPOF detection is your escape hatch

When SPOF questions flag that the organization depends entirely on you, leadership sees it as an organizational risk — not as proof you’re valuable. They can’t move to Level 3 until they fix it, which means resources for documentation, training, and knowledge transfer.

Weighted scoring highlights systemic issues

When content governance scores low but SEO governance scores high, it shows that other domains aren’t holding up their end. This redirects leadership attention to where governance actually needs strengthening.

Progress tracking shows your impact

When your organization moves from Level 2 to Level 3 over two quarters, you have concrete evidence that governance investments are working. This isn’t “traffic went up 15%,” it’s “organizational capability improved measurably.”

Dig deeper: SEO execution: Understanding goals, strategy, and planning

The difference between hero work and sustainable SEO

VGMM’s scoring approach is designed to:

- Diagnose organizational capability gaps without blaming individuals.

- Make your implicit knowledge visible as institutional risk.

- Force leadership to address hero-dependency.

- Track progress in ways that make governance investments defensible to finance.

The assessment focuses on whether the organization can sustain your work without you. That’s the difference between being an indispensable hero (exhausting) and being a strategic professional whose expertise is institutionalized (sustainable).

Opera GX finally arrives on Linux by popular demand — offers gamers and developers a highly customizable browser with advanced resource management

Researchers reach superconductivity at ambient pressure, record high temperature — milestone of -122°C reached by using pressure quenching, still 140 degrees off room temperature target

Save $800 on this powerhouse Alienware 4K gaming PC with an RTX 5080 and 64GB DDR5 RAM — epic pre-built also includes a huge 4TB SSD and a 24-core Intel CPU for high-end performance

RendrKit – Design API that lets AI agents generate images instantly

RendrKit is a design API built for AI agents. Your agent sends a JSON request with text and brand colors and receives a professional PNG in under two seconds. There’s no need for DALL-E or prompt engineering—just 69 deterministic templates that render pixel-perfect images every time.

RendrKit works with LangChain, CrewAI, OpenAI GPT Actions, MCP (Claude/Cursor), and n8n. You can use it via Python SDK, Node.js SDK, or plain REST, and a free tier is included.

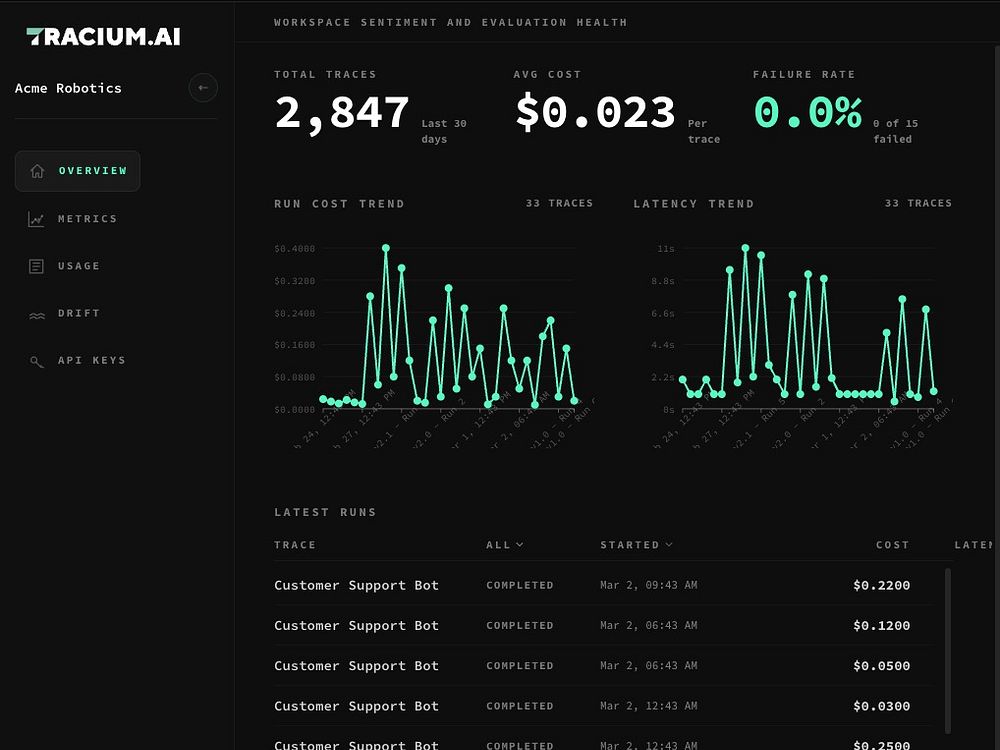

Tracium – Track AI agents and costs with a single line of code

Tracium is a developer-first observability layer for AI systems. With a single line of code, it monitors agents and models in real time, tracing every request end-to-end across tools and steps while tracking token spend, latency, and total cost. It captures and classifies errors, supports per-tenant analytics, and lets you compare prompts, models, and routing with live A/B versioning. Use drift detection to spot shifts in inputs and outputs before performance degrades, and manage everything across customers, workspaces, and environments.

Alexa+ promises an AI layer over everything — I’m not sure anyone asked for that

The new MacBook Air 15 M5 is great, but it's hard to justify when the still-excellent M4 model is $300 off

‘Just a joy to use’ — I reviewed the new Nothing Phone (4a) Pro and its striking design, giant blazing screen, and useful Glyph Matrix reminded me that phones can actually be fun

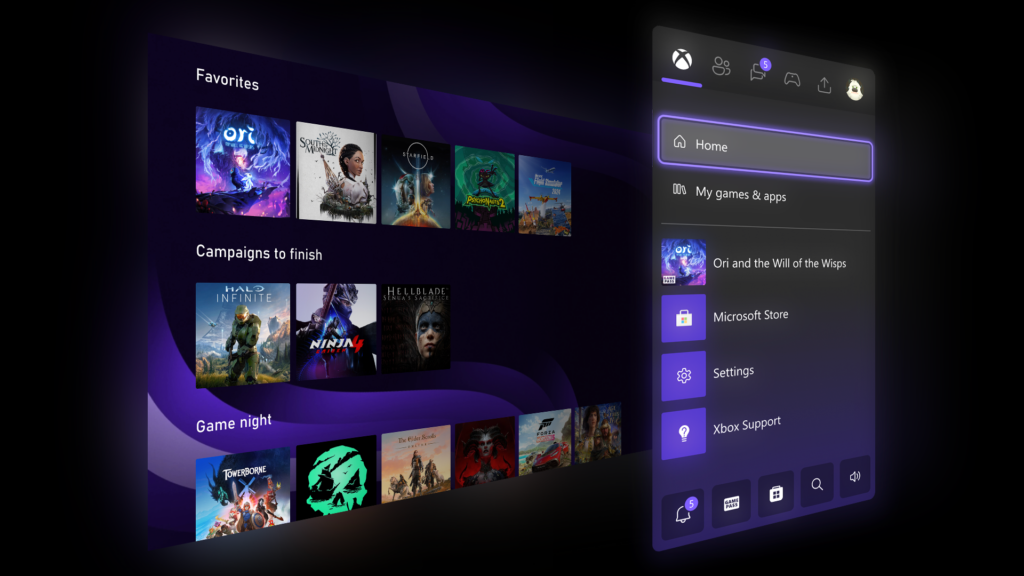

I can finally make the UI of my Series X bright pink after the new Xbox dashboard update

This $25 AirPods Pro 3 case looks exactly like a classic Macintosh mouse — and could be the perfect Apple 50th anniversary tribute

'A stunning mini PC offering powerful performance': Geekom A7 deal is a productivity beast for creatives - and it's $599 in Best Buy's tech sale

As the Cities: Skylines franchise turns 11, Iceflake Studios celebrates with a special anniversary patch that adds a new encyclopedia and a stadium for 'proper entertainment' to the second game

How to watch The 50 online from anywhere — including outside India and in the USA

'We found out at the same time as the public' — Capcom and Ubisoft devs were out of the loop on Nvidia DLSS 5 involvement, adding to the AI controversy

'My dream travel lens’ — Fujifilm asked which lens it should make next, and you voted for this wide-aperture zoom

Dozens of European cloud CEOs call for real tech sovereignty ahead of Cloud and AI Development Act

‘Drives me crazy’: iOS 26.4 has fixed an infuriating keyboard bug, but one problem has still gone unsolved

Gigabyte's 16-inch video editing laptop with Ryzen AI 7, RTX 5060, and 32GB RAM is $350 off — but you'll need to act super-fast

Saros has exclusive PS5 features like feeling 'eclipse-driven weaponry through adaptive triggers — but it's nothing we've not heard before

Tubi joins forces with popular TikTokers to create original streaming content

Arc expands into electric commercial and defense boats with $50M raise

Feds intensify investigation into Tesla’s Full Self-Driving (Supervised) software

Amazon brings Alexa+ to the UK

Uber taps Rivian to build robotaxis in deal worth up to $1.25B

Hi Microsoft, Windows 11 doesn't have two Start menus — Copilot is playing you

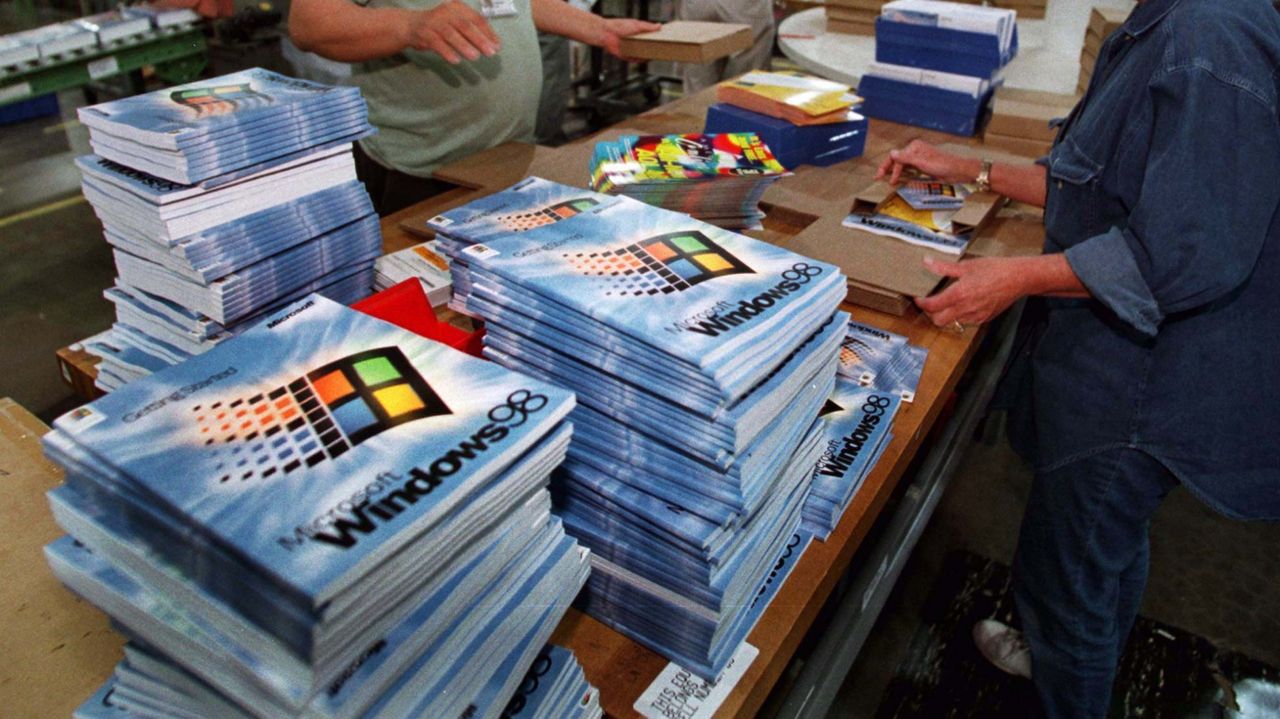

Someone hacked Windows 98 onto a year 2000 Internet appliance just because they can

Xbox isn't giving up on Japan — there's a promising sign of continued investment in the region

(PR) The KiiBOOM Phantom98 Lite Blends Style and Function

Thoughtful Colorways and Details

The Phantom98 Lite continues KiiBOOM's commitment to aesthetics, debuting with three themed colorways: Green Rainy Frog, Foggy Translucent, and Pink. Each curated color combination is designed to evoke a distinct atmosphere. Beyond the colorway, the textural contrast between the UV-glazed case and the PBT dye-sublimated keycaps adds depth to both the look and feel. Practicality is equally prioritized, with the magnetic nameplate discreetly storing the 2.4G receiver, ensuring style and function live in perfect harmony.

Scientists build first working quantum battery prototype, uses lasers to charge in femtoseconds

The achievement marks the first time scientists have built a device that can be charged, store energy, and release it again using the laws of quantum mechanics. The team, led by CSIRO physicist James Quach, calls the system a "proof of concept," transforming a theoretical model into a functioning nanoscopic...


Crimson Desert reviews fail to meet the hype as Pearl Abyss shares tumble 29%

With its incredible open world, massive battles, flight, and stunning looks powered by Pearl Abyss' proprietary BlackSpace engine, Crimson Desert was one of our most anticipated PC games of 2026.


AI Mode is Google’s next ads engine — and it already knows how to monetize it

As conversational search gains traction, the bigger question isn’t who has more users, but who can monetize them.

Google enters this phase with a massive advantage: mature ad systems, deep advertiser adoption, and decades of optimization. Early AI Mode signals point to a measured rollout.

The panic phase is over

After a period of panic within the company, Google’s built-in advantages, coupled with massive capital expenditures, have helped it regain ground on category leader ChatGPT in LLM search.

In December 2025, Google’s own code red became OpenAI’s code red.

The dust will continue to settle, and analysts have different takes. But one signal stands out: in a major validation, Apple has chosen Google to power its own AI.

It was perhaps premature to assume Google Search would simply lose to ChatGPT on product. That was the consensus at the start of 2025. Google shares fell about 30% from peak to trough before rallying 130%. Today, the company is valued at roughly $3.6 trillion, just behind Apple.

Why monetization will decide the winner

Why did Google’s recent progress in LLM conversational queries — in the form of AI Overviews and AI Mode — have such a large impact on the company’s valuation in such a short time?

Ultimately, it comes down to visibility of financial projections. In a company with so much to defend, Google’s CFO and leadership team needed to determine whether shifts in user behavior — in how search works and how it makes money — would weaken the business model or reinforce it.

Net-net: Google before the shift: huge. Google after the shift: ditto.

Visibility — in the sense of financial planning, not in the SERP — means a great deal to Google’s advertisers, too.

A large proportion of your annual digital advertising budget is likely allocated to Google. You also still care about how you appear in organic results and increasingly, how your company appears in AI Mode, ChatGPT, Claude, and similar environments.

“I’m fine with 30% less of my business coming in from Google, and figuring out lots of complicated ways to replace it,” … said no advertiser ever.

How monetization will play out in AI search

The competition between monetization models in LLM conversations — especially between the two leaders, ChatGPT and Google’s AI Mode — will play out differently from the broader race for overall user share. There are several moving parts to keep an eye on:

- Overall assumptions about ad formats and “how to monetize.”

- Pace of rollout.

- Whether users and public opinion recoil at ads.

- Advertiser success rates based on performance measurement.

- Advertiser adoption, including adoption by the agency ecosystem.

- Platform targeting options.

- Advantages of fuller-funnel ad journeys and data collection.

- Privacy, safety, policies, and enforcement.

- An all-encompassing consumer brand vs. a better mousetrap.

- And a few other factors.

Right now, OpenAI is at a critical moment because it’s still so early in its monetization. It’s still testing an inefficient auction model confined to a small group of large advertisers. (Some ads from the pilot have already been spotted.) It may be some time before more mature tools and reporting emerge.

Most recently, OpenAI brought ad platform Criteo (often used for retargeting) on as a partner. The Trade Desk, the world’s largest non-Google DSP for programmatic, is also in the mix. Some observers have speculated about deeper partnerships or even an acquisition of The Trade Desk, though that seems unlikely.

In any case, outsourcing inventory to programmatic partners is a pragmatic step in OpenAI’s monetization strategy. It also underscores how early the company is in building a scalable ads business.

Despite a broad rollout with partners, OpenAI is stepping back from “checkout in chat” integrations after limited adoption from both merchants and consumers. When your primary competitor has a 25-year head start, the learning curve is steep.

So does it make sense now for advertisers to lean into evolving Google user behavior and figure out how to ride the wave?

AI Mode considerations for Google advertisers

Expect the transition to more AI Mode sessions — and eventual monetization — to be smoother than initially anticipated. If you’re an advertiser, AI Mode need not equal panic mode.

How do these LLM sessions look to users? The answer is obvious to you and me, but likely less so to many searchers.

Depending on how you search, AI Overviews may appear above other results on the SERP. That’s becoming a natural extension of Google Search sessions.

But that’s not the real conversational layer. The LLM workflow happens in AI Mode. How often users go there remains to be seen.

AI Mode is improving quickly. Unlike ChatGPT, it downplays how it finds information, whether it is “reasoning,” and which model is being used. The experience feels relatively seamless.

It’s still early, but ads are already appearing in some cases. The key question is how this evolves, and what advertisers should be paying attention to.

The key areas to watch are:

- Extent of monetization.

- Different ways to monetize.

- Advertiser control and campaign types.