Snapchat expands Creator Subscriptions to all eligible users

The program launched in February for Snap Stars and offers exclusive ways to monetize content, such as subscriber-only Stories.

The emoji-based experience is available globally and mirrors similar engagement efforts from Threads and LinkedIn.

Although a new report found that about 4.7 million teen social media accounts were removed, deactivated or restricted, children still had access to the apps.

The platform’s artificial intelligence tool will allow users to “make conversational queries” about in-app content and get in-stream responses.

The annual presentation will be hosted by comedian Trevor Noah and will include a performance by musician Chappell Roan.

While calling its short-form videos Reals was an April Fool’s Day joke, the animosity between the two companies is not.

AnyToURL turns any file into a short, shareable link in seconds. Drag and drop to upload, then share an instant URL with browser previews for images, PDFs, and documents. Files are delivered over a global edge network for fast access worldwide. Add password protection, keep files temporary or make them permanent on paid plans, and manage uploads via API or CLI with custom domains and branding, supporting sizes up to 10GB.

NanoMaker AI is an all-in-one creative platform that lets you generate and edit images (using Nano banana AI), videos, music, and voice with the world's top AI models under one subscription. Work in a seamless workflow: turn an image into a video, add background music, and export without switching tools. Use prompt-based editing, background removal, lighting control, and style transfer to produce consistent, professional results for marketing, content creation, education, and e-commerce.

Embed AI into your app or site in just 3 lines of code. Normally, building AI into mature apps or websites requires dealing with vector databases, custom integration pipelines, authentication, and brittle LLM calls, which distract core engineering teams from shipping product features. EmbedAI solves this by providing a drop-in component that abstracts away infrastructure, letting you inject AI into your app logic without restructuring your backend. It requires zero backend maintenance or database provisioning, offers seamless UI matching your brand rules, and gives you complete control with your own API keys.

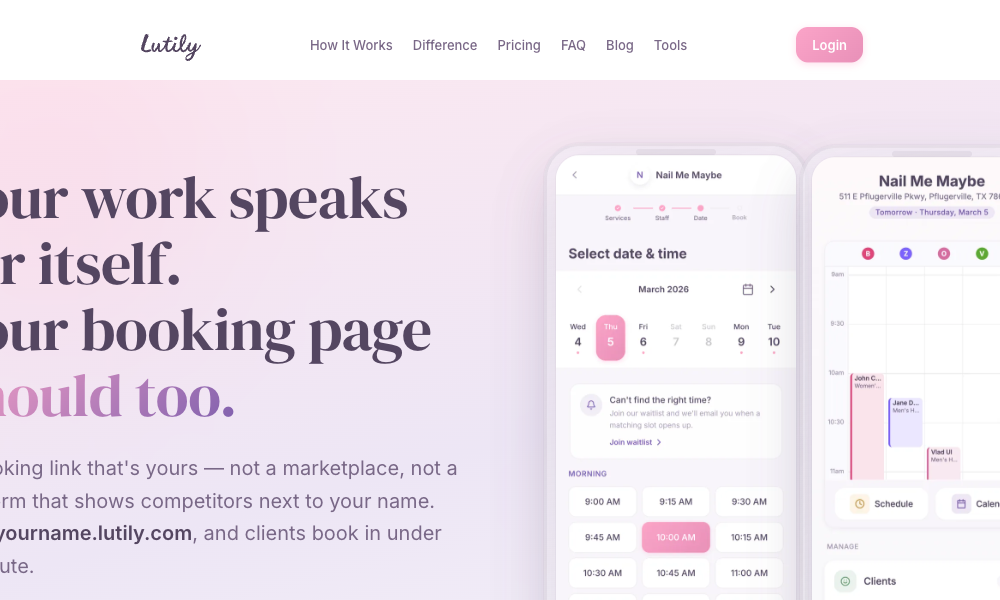

Lutily gives salons a branded booking page at yourname.lutily.com that lets clients pick services, choose a staff member, and reserve a real open slot in under a minute. It never shows competitors, charges no commission, and has no per‑staff fees. Every booking is phone‑verified to reduce no‑shows.

Use Lutily to stack appointments to fill gaps, run a smart waitlist that auto‑offers newly opened times, and control working hours by date. Manage a color‑coded calendar, team permissions, client history and notes, and automatic SMS confirmations and reminders, with instant rescheduling and cancellations.

Experience the pinnacle of gaming technology with GCS Cheats, the industry’s leading provider of state-of-the-art gameplay modifications. It features the most intuitive interface and the lightest system footprint in its class, offering a powerful and easy-to-use level of customization. Every tool is designed for maximum stability, providing seamless integration into today’s biggest games.

Google.org expanded our existing collaboration with Highlights for Children.

Early reviews of the AirPods Max 2 highlight improved sound quality, excellent noise cancellation, and deeper Apple ecosystem integration, powered by the new H2 chip and added smart features.

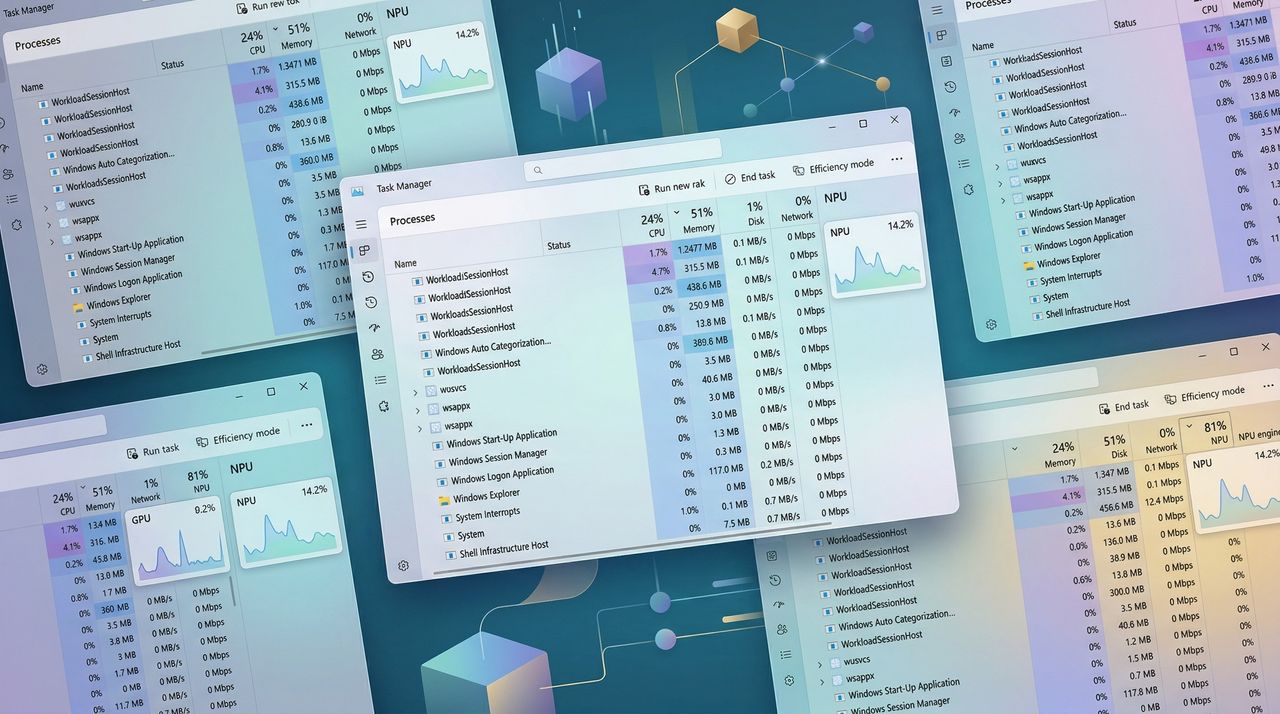

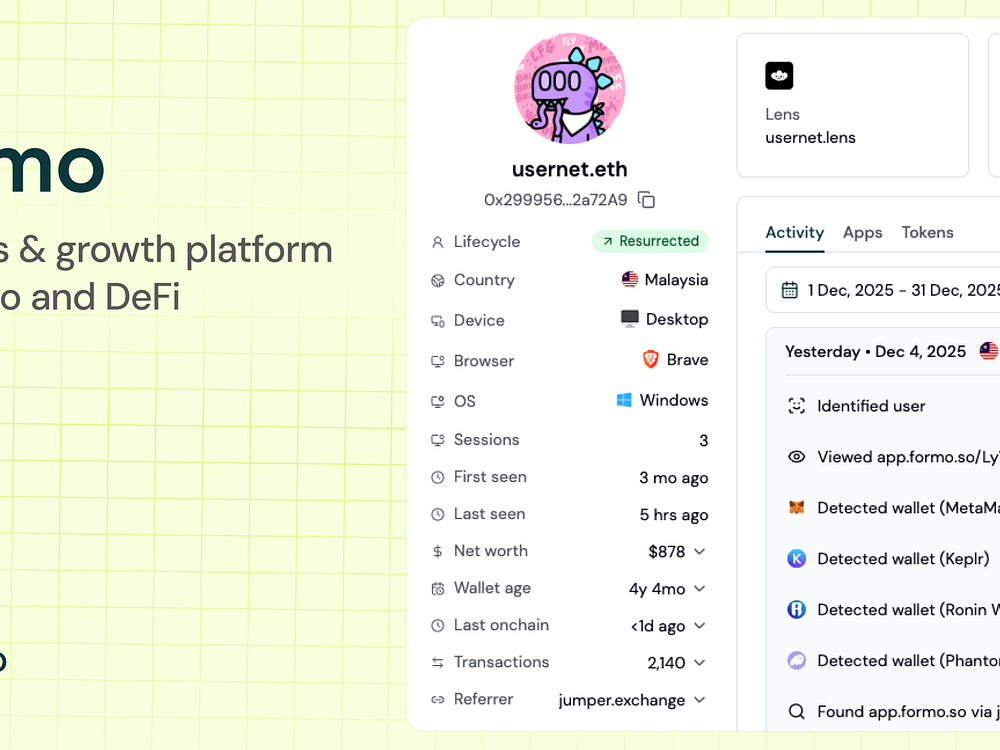

Formo makes analytics and attribution easy for DeFi apps, so you can focus on growth. Understand who your users are, where they come from, and what they do onchain. Measure what matters and drive growth onchain with the data platform for onchain apps. Get the best of web, product, and onchain analytics on one versatile platform.

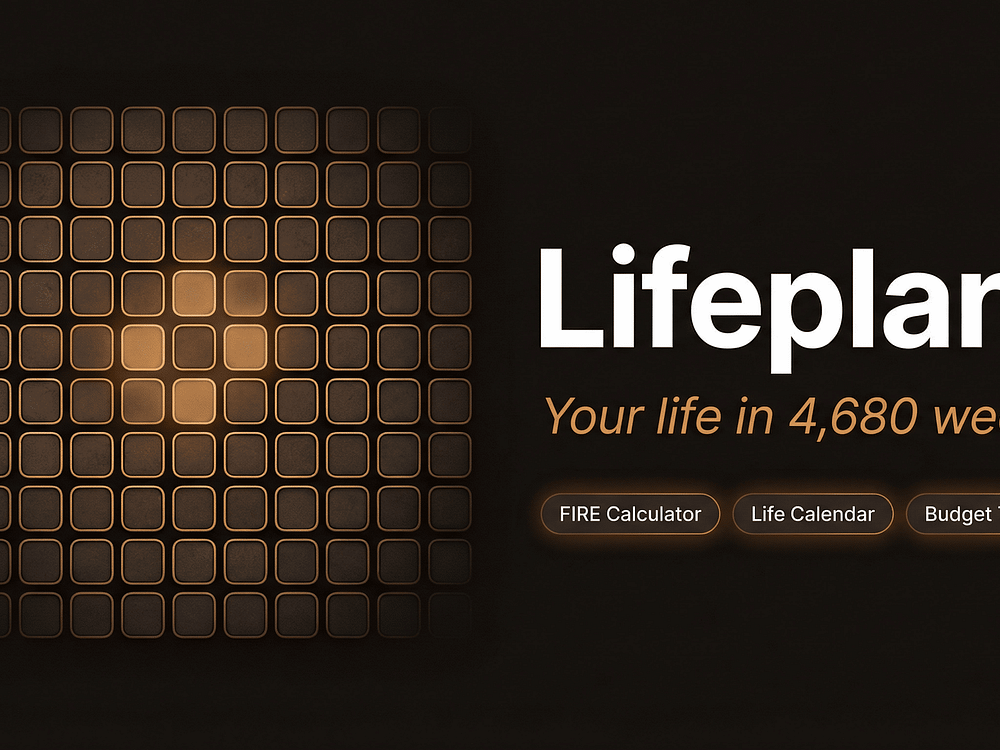

Lifeplanr visualizes your entire life as 4,680 weeks and lets you plan, journal, map travel, and track finances with a built-in FIRE calculator. You can see life phases at a glance, tag moods, attach photos, and scratch off countries you’ve visited.

Install it as a PWA on any device, switch between 10 themes, and use it offline. Your data stays on your device by default, with optional Pro cloud sync and easy export.

A recap of AI Literacy Day, including a New York City Public Schools event hosted at Google and updates to Google AI literacy resources.

Google Ads quietly added an auto-apply setting to its experiments feature — and it’s turned on by default, meaning winning experiment variants can be automatically pushed live without manual review.

How it works. Advertisers can choose between two modes — directional results (the default) or statistical significance at 80%, 85%, or 95% confidence levels. There is one built-in safeguard: if a chosen success metric performs significantly worse in the test arm, the change won’t be automatically applied.
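To make the confidence levels concrete, here is a minimal sketch of how a one-sided two-proportion z-test could compare a test arm against a control arm at the 80%, 85%, and 95% levels. It is an illustration only: the source does not describe the statistical method Google Ads uses, and the function name and sample numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def confidence_test_beats_control(ctrl_conv, ctrl_n, test_conv, test_n):
    """One-sided confidence that the test arm's conversion rate exceeds control's.

    Uses a pooled two-proportion z-test (an assumed method, not Google's).
    """
    p_ctrl = ctrl_conv / ctrl_n
    p_test = test_conv / test_n
    pooled = (ctrl_conv + test_conv) / (ctrl_n + test_n)
    se = sqrt(pooled * (1 - pooled) * (1 / ctrl_n + 1 / test_n))
    if se == 0:
        return 0.5  # no variation at all, so no evidence either way
    z = (p_test - p_ctrl) / se
    return NormalDist().cdf(z)


# Hypothetical experiment: 100/2000 conversions in control, 130/2000 in test.
confidence = confidence_test_beats_control(100, 2000, 130, 2000)
for level in (0.80, 0.85, 0.95):
    print(f"{level:.0%} threshold met: {confidence >= level}")
```

A stricter threshold needs more data or a larger difference before a variant counts as a winner, which is the trade-off to weigh before leaving auto-apply on.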

Why we care. Experiments are one of the most powerful tools in a Google Ads account. Automating the apply step could speed up testing cycles, but it also removes a critical checkpoint where advertisers catch unintended consequences before they affect live campaigns.

The catch. Experiments only allow two success metrics. That means a third metric you care about — one you didn’t or couldn’t select — could quietly be declining in the background, and the auto-apply setting would never catch it. The guardrails protect what you told Google to watch, not everything that matters.

The bottom line. The auto-apply feature is a reasonable shortcut for straightforward tests, but for anything consequential, manual review is still worth the extra step. Run the experiment, let it reach significance, then dig into the full data before pulling the trigger yourself.

First seen. This update was spotted by Google Ads specialist Bob Meijer, who shared it on LinkedIn.

Bing appears to be testing a significantly expanded sponsored products section in its shopping search results, featuring a double-rowed carousel that takes up considerably more real estate than its current format.

What was spotted: The test was flagged by Digital Marketer Sachin Patel, who noticed the expanded layout while searching for cushions on Bing. The format pairs a large double-rowed sponsored carousel with organic cards from individual websites beneath it.

Why we care. If this format rolls out broadly, it means significantly more screen space dedicated to sponsored products — which typically translates to higher visibility and more clicks for retailers running Microsoft Shopping campaigns. The double-rowed carousel format is also a more visually competitive layout, putting Bing’s shopping ads closer in prominence to what Google Shopping already offers.

The catch: The test appears to be limited — not all users are seeing it. Search industry veteran Mordy Oberstein checked his own results and got a noticeably more compact layout, suggesting Bing is still in early experimentation mode.

The bottom line: Bing quietly runs a lot of SERP experiments that never make it to full rollout, so this one is worth watching but not banking on. Retailers running Microsoft Shopping campaigns should keep an eye out for any uptick in impressions if the format expands.

First spotted. This test was spotted by Sachin Patel, who shared a screenshot of it on X.

SEO tools were the most replaced martech application in 2025 — but not for the reason you might expect.

According to the 2025 MarTech Replacement Survey, SEO platforms topped the list of replaced tools for the first time, overtaking categories like marketing automation platforms (MAPs), which had led for the past five years.

At first glance, that might suggest instability in SEO. After all, the discipline is being reshaped by LLMs, AI-generated answers, and the rise of zero-click search experiences — all of which challenge traditional keyword tracking and ranking-based workflows.

But the data tells a more nuanced story.

Even though SEO tools were the most replaced category in 2025, they were replaced at a slower rate than in prior years.

In other words, they’re now the most commonly replaced — but also more stable than before.

That shift suggests a maturing category. Rather than widespread churn, you appear to be consolidating, upgrading, or refining your SEO stack as search evolves.

Meanwhile, several other major martech categories saw sharper year-over-year declines in replacements:

So if SEO tools aren’t being swapped out due to instability, what’s driving the changes?

The survey points to three primary factors:

For the first time, the survey asked about AI’s role in replacement decisions — and the impact was significant.

This reflects a broader shift in SEO tooling, with platforms rapidly integrating AI for:

In many cases, replacing your SEO tool isn’t about abandoning SEO — it’s about upgrading to AI-native capabilities.

Cost has become a major driver of martech replacement decisions, including SEO tools:

This suggests growing pressure to optimize and rationalize your SEO tech stack, especially as you evaluate overlapping functionality across tools.

As search behavior changes, so do expectations for SEO platforms.

Traditional rank tracking and keyword monitoring are no longer sufficient on their own. Teams are increasingly looking for tools that can:

That evolution is likely contributing to replacement activity — even as overall stability increases.

One of the more notable trends in the 2025 survey is the resurgence of homegrown solutions, including for SEO workflows.

Replacing commercial martech tools with homegrown applications accounted for:

This marks a meaningful shift after years of near-total reliance on commercial platforms.

“AI-assisted coding is changing the calculus of build vs. buy,” said martech analyst Scott Brinker. “It’s easier and faster to build than ever before. Companies should still buy applications where they have no comparative advantage. But in cases where they can tailor capabilities to differentiate their operations or customer experience, custom-built software is an increasingly attractive option.”

For SEO teams, this could mean more organizations building:

While SEO tools led in total replacements, the broader martech landscape is becoming more stable.

Several major categories saw declining replacement rates in 2025, including:

This suggests that many organizations are settling into core systems while selectively updating areas — like SEO — that are changing faster.

Invitations to take the 2025 MarTech Replacement Survey were distributed via email, website, and social media in Q4 2025.

A total of 207 marketers responded. Findings are based on the 154 respondents (60%) who said they had replaced a martech application in the previous 12 months.

Download the 2025 MarTech Replacement Survey, no registration required.

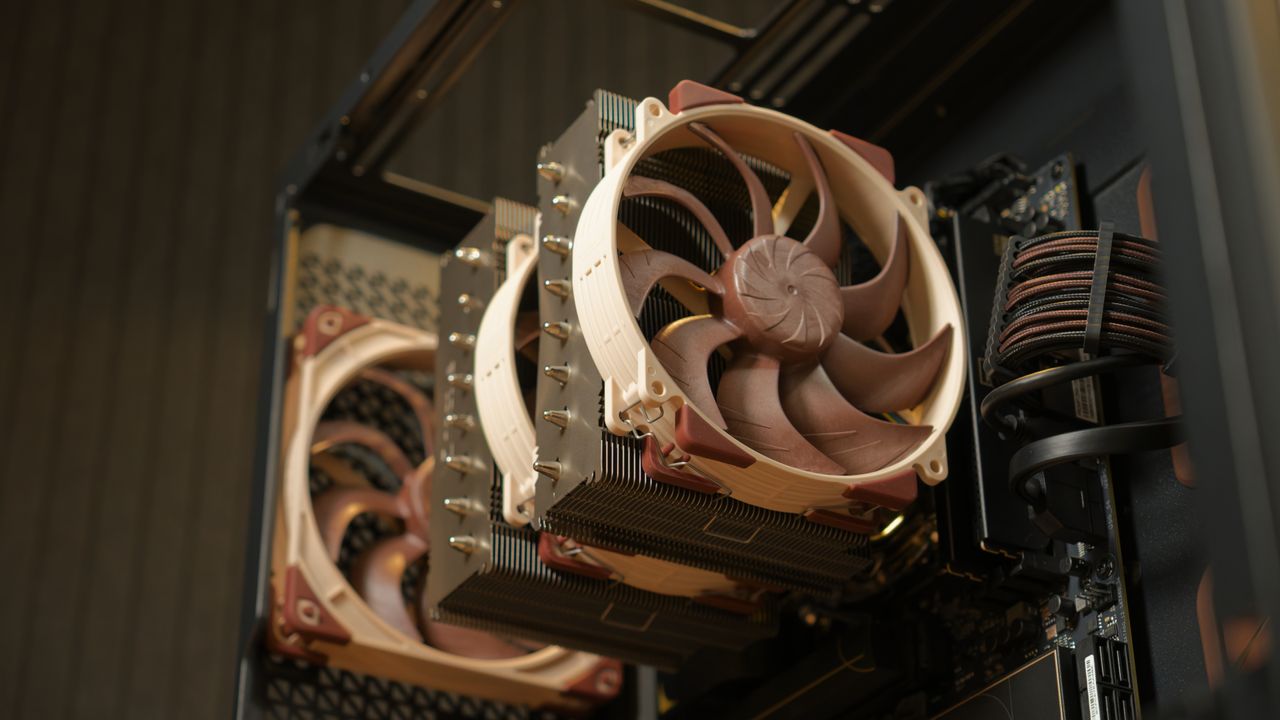

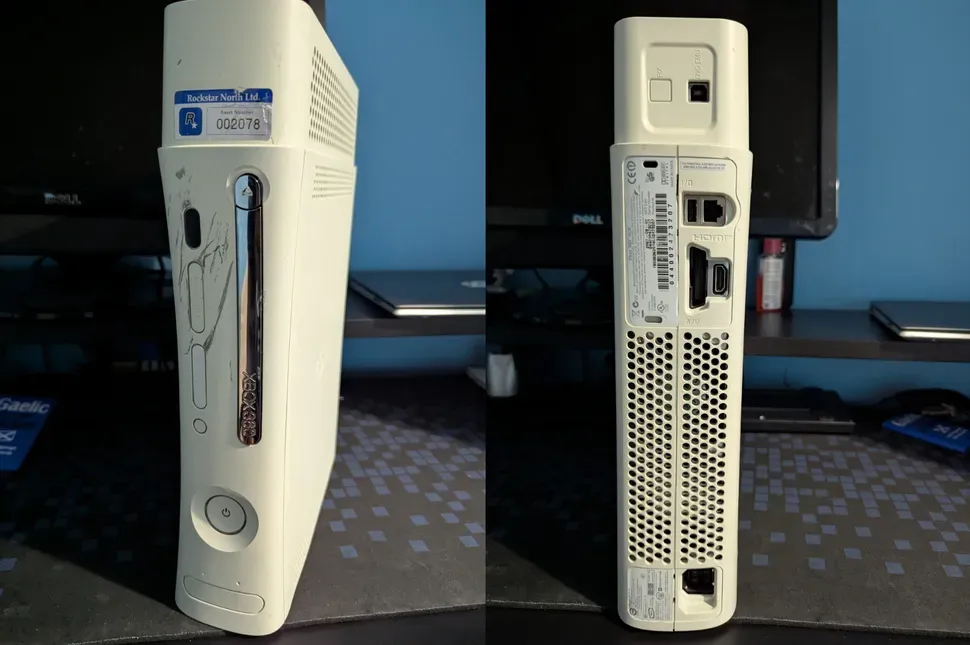

Sony hopes to deliver a better-than-Xbox Series S experience on its next-gen handheld with next-gen upscaling.

According to the leaker KeplerL2, Sony’s next-generation PlayStation 6 Handheld should feature a GPU that surpasses the Xbox Series S and Nintendo Switch 2. In fact, the leaker thinks that the system’s GPU will be “a bit ahead of […]

The post PlayStation 6 Handheld Performance Detailed – Better than Xbox Series S appeared first on OC3D.

AI-powered ad bidding systems are highly sophisticated, but conversion tracking hasn’t kept pace. Ad platforms encourage advertisers to track more actions, while many experts argue for tracking only final outcomes.

Both are partly true. Neither is universally correct.

In practice, both over- and under-signaling can hurt PPC performance. Too many loosely defined micro-conversions introduce noise. Bidding shifts toward easy, low-value actions, inflating reported performance while eroding real results. Too few signals leave the system without enough data to learn.

This dynamic is most visible in Performance Max and Search plus PMax setups, where the system optimizes toward whatever signals it’s given — regardless of whether they reflect real business value.

Here’s what happens when micro-conversions outnumber real conversions, why bidding systems behave this way, and how to build a conversion framework that aligns signal volume with business impact.

The idea that algorithms need as much data as possible has been repeated so often that it’s become an assumption. Platform documentation, automated recommendations, and many PPC blog posts reinforce the same message: more signals equal better learning.

Bidding systems require a minimum level of signal density to function, but they don’t benefit from indiscriminate micro-conversion signals. More data isn’t always better data.

Adding low-intent or loosely correlated actions often degrades performance by shifting optimization toward behaviors that don’t correlate with revenue.

Machine learning systems don’t evaluate the strategic relevance of a signal. They evaluate frequency, consistency, and predictability.

When an account includes a mix of high- and low-intent micro-conversions — purchases, add-to-carts, pageviews, video plays, and soft leads — the system doesn’t inherently understand which actions matter most to the business.

Without a clear value hierarchy, the bidding algorithm treats all signals as valid optimization targets. This creates a structural bias toward high-frequency, low-value actions because they’re easier and cheaper to achieve. The result is a bidding pattern that maximizes conversion volume while minimizing business impact.

Many practitioners advocate for value-based bidding, where each micro-conversion is assigned a relative financial or hierarchical value. In theory, this helps the system understand which signals matter most. You can also instruct the platform to maximize conversion value, which should push the algorithm toward higher-value purchases or sales-qualified leads (SQLs).

But value-based bidding isn’t a complete solution. When too many micro-conversions are included — even with assigned values — the system can still become overwhelmed. A high volume of low-intent signals can dilute intent and distort the value hierarchy.

The issue isn’t just a lack of context.

Every signal becomes part of the optimization math. If the model weighs signals by volume rather than business importance, low-intent micro-conversions will dominate. Assigning values helps clarify priorities, but it can’t override signal imbalance. At a certain point, the math wins.

Dig deeper: In Google Ads automation, everything is a signal in 2026

In practice, this shows up as a “path of least resistance” problem.

Even with values assigned, bidding algorithms still optimize toward the signals they’re given. When low-intent micro-conversions are included as Primary actions, the system treats them as efficient ways to increase conversion volume. This isn’t an error. It’s expected behavior for a model designed to maximize conversions within a set budget.

When those signals occur more frequently, the system gravitates toward them. A signal that fires hundreds of times a day will exert more influence than a high-value action that fires only a handful of times per week.

This dynamic is especially visible in PMax. The system evaluates signals across channels, audiences, and placements, and pursues the cheapest, most abundant path to conversion. If a contact page visit or key pageview is treated as a Primary signal, PMax may prioritize it over a purchase or SQL because it’s easier to achieve at scale.

That’s why PMax often reports strong conversion volume and low CPA while revenue remains flat or declines. The system is performing as instructed, but the inputs lack a disciplined signal hierarchy. Value-based bidding improves structure, but without restraint in the number and type of signals, it can’t fully prevent the problem.

When low-value actions are tracked as Primary conversions, platform-reported performance becomes disconnected from business outcomes. Metrics such as CPA, ROAS, and conversion rate may improve, but those gains are often illusory.

For example:

These patterns create a false sense of success, leading advertisers to scale budgets prematurely and erode contribution margin.

When multiple micro-conversions are tracked as Primary, a single user journey can generate multiple wins for the algorithm.

For example, a user who views a product page, signs up for a newsletter, and adds an item to cart may be counted as three conversions from a single click. If values are assigned to each step, conversion value and ROAS become inflated as well.

This inflates conversion volume, inflates conversion value, and distorts bidding behavior. The system interprets this as a high-value user and begins overbidding on similar traffic, even if the user never completes a purchase.
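The double-counting effect can be sketched with a few lines of arithmetic. The action names and dollar values below are hypothetical and chosen only to show how one click turns into several reported conversions when every step is Primary.

```python
# Hypothetical values assigned to each tracked action (illustrative only).
ACTION_VALUES = {
    "product_view": 1.0,
    "newsletter_signup": 5.0,
    "add_to_cart": 10.0,
    "purchase": 80.0,
}


def reported_totals(actions, primary_actions):
    """Return (conversion count, conversion value) as the platform would report them."""
    counted = [a for a in actions if a in primary_actions]
    return len(counted), sum(ACTION_VALUES[a] for a in counted)


# One click, three tracked steps, and no purchase.
journey = ["product_view", "newsletter_signup", "add_to_cart"]

all_primary = reported_totals(journey, set(ACTION_VALUES))
purchase_only = reported_totals(journey, {"purchase"})

print(all_primary)    # (3, 16.0)
print(purchase_only)  # (0, 0)
```

With every step set to Primary, the same non-buying visitor is reported as three conversions worth $16. With only the purchase set to Primary, the visitor is reported as nothing, which matches what the business actually earned.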

In many accounts, micro-conversions outnumber real conversions by a ratio of 500 to 1 or more. This imbalance has significant implications for bidding behavior.

If an account records 500 pageviews, 200 add-to-carts, 50 lead form starts, and 10 purchases, and all actions are treated as Primary, the system receives 760 signals, only 10 of which actually matter.
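The arithmetic behind that example, using only the counts given above (the dictionary keys are illustrative names):

```python
# Signal counts from the example above.
signal_counts = {
    "pageview": 500,
    "add_to_cart": 200,
    "lead_form_start": 50,
    "purchase": 10,
}

total_signals = sum(signal_counts.values())
purchase_share = signal_counts["purchase"] / total_signals
micro_per_purchase = (total_signals - signal_counts["purchase"]) / signal_counts["purchase"]

print(total_signals)                     # 760
print(f"{purchase_share:.1%}")           # 1.3%
print(f"{micro_per_purchase:.0f} to 1")  # 75 to 1
```

Purchases make up roughly 1.3% of what the bidding system sees in this mix, so nearly all of the optimization pressure comes from actions that are not sales.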

Without distinct values, the algorithm can’t differentiate between a $0.05 action and a $500 action. It optimizes toward the most frequent signals because they provide the clearest path to increasing conversion volume.

Even when values are assigned, overvaluing micro-conversions teaches the algorithm to pursue easy wins. The result is a maximized conversion value metric that looks strong in the dashboard but isn’t reflected in actual sales.

When micro-conversions dominate the signal mix:

That’s why accounts with high micro-conversion volume often show strong platform metrics but weak financial performance.

Micro-conversions are useful when an account lacks enough real conversion volume to support stable bidding. However, once a campaign consistently reaches 30 to 60 real conversions per month, they no longer provide meaningful benefit.

At that point, the system has enough high-quality data to optimize effectively. Continuing to rely on micro-conversions introduces unnecessary noise and increases the risk of misaligned bidding.

This is the point to transition from tCPA to tROAS and let real revenue guide optimization.

Dig deeper: Why better signals drive paid search performance

Primary actions influence bidding, while Secondary actions provide visibility without affecting optimization. This four-part litmus test helps determine which actions should be treated as Primary.

Micro-conversions should be used only when real conversion volume isn’t sufficient to support stable bidding. As a general guideline:

This threshold ensures micro-conversions serve as a temporary bridge, not a permanent crutch.

A Primary action should represent a required step in the conversion journey, such as:

Actions that aren’t required steps — such as contact page visits, whitepaper downloads, or time on site — shouldn’t be treated as Primary. These may indicate interest, but they don’t reliably predict revenue.

If an action can’t be assigned a realistic financial value, it shouldn’t be used as a Primary conversion. Assigning arbitrary values introduces risk and can distort bidding behavior.

Actions such as time on site or scroll depth fail this test because they don’t consistently correlate with revenue. However, if CRM data shows a reliable statistical correlation with revenue, that can justify including the action.

Even if multiple actions pass the first three tests, only the strongest one or two should be designated as Primary. Including too many Primary actions increases the risk of double-counting and overbidding.

A streamlined Primary set ensures the system focuses on the most meaningful signals.

Secondary conversions provide visibility into user behavior without influencing bidding. They’re a useful diagnostic tool for understanding funnel performance and evaluating new signals.

Tracking actions such as newsletter signups, video views, or soft leads as Secondary lets you monitor engagement without shifting bidding toward low-value behaviors.

This approach preserves data integrity while maintaining control over optimization.

Secondary conversions reveal where users drop off in the funnel. For example:

These insights support more informed optimization decisions.

New signals should be tracked as Secondary for several weeks before being considered for Primary status. This allows you to evaluate frequency, correlation with revenue, stability, and predictive value.
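One way to evaluate correlation with revenue during that trial period is a simple Pearson correlation between the weekly count of the Secondary signal and weekly revenue. This is a rough sketch with made-up numbers, not a full statistical analysis; the variable names and data are hypothetical, and a handful of weeks is too little data to draw firm conclusions.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Hypothetical weekly data for a signal being trialed as Secondary.
weekly_signal_count = [120, 135, 90, 160, 150, 110]
weekly_revenue = [4000, 4600, 3100, 5300, 5000, 3700]

r = pearson(weekly_signal_count, weekly_revenue)
print(f"correlation with revenue: {r:.2f}")
```

A value near 1 suggests the signal moves with revenue and may be worth considering for Primary status. A value near 0 suggests it is mostly noise.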

Only signals that demonstrate consistent value should be promoted to Primary.

When micro-conversions are used, they must be assigned values that reflect their true contribution to revenue. Overvaluing micro-conversions is a common cause of inflated platform performance and misaligned bidding.

The baseline value of a micro-conversion is determined by:

For example:

The baseline value shouldn’t be used directly. Instead, apply a 25% reduction:

This discount helps prevent overbidding by ensuring the system doesn’t overvalue micro-conversions relative to actual revenue.

Undervaluing micro-conversions may slightly slow learning, but it doesn’t distort bidding. Overvaluing them can push the system toward low-intent traffic, leading to rapid budget misallocation.

The safety discount provides a buffer that protects contribution margin while still supplying useful data.
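The 25% safety discount is a one-line calculation. In this sketch the baseline value is taken as a given input, and the $20 figure is a hypothetical example rather than a number from the article.

```python
SAFETY_DISCOUNT = 0.25  # the 25% reduction described above


def discounted_value(baseline_value, discount=SAFETY_DISCOUNT):
    """Value to assign to a micro-conversion after applying the safety discount."""
    if not 0 <= discount < 1:
        raise ValueError("discount must be between 0 and 1")
    return baseline_value * (1 - discount)


# Hypothetical baseline of $20 for a micro-conversion.
print(discounted_value(20.0))  # 15.0
```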

Dig deeper: How to make automation work for lead gen PPC

Practitioners consistently point to the same principle: signal discipline matters more than signal volume.

Julie Friedman Bacchini emphasizes that every conversion action becomes a signal the system optimizes toward. Using more than one Primary action introduces ambiguity — “it’s suddenly muddier” — and skipping values makes it easier for the system to latch onto lower-value signals. Values don’t need to be exact, but they must be relative.

She also notes that micro-conversions can help low-volume campaigns reach data thresholds, but they aren’t a substitute for real Primary conversions. Removing them later can mean “starting over to a large extent on system learning.”

Jordan Brunelle takes a similarly disciplined approach: “There can definitely be too many.” He recommends starting with one strong signal of intent and watching the ratio between micro-conversions and real outcomes. If volume is high but outcomes are low, it often signals a targeting or signal issue.

Across both perspectives:

The debate around micro-conversions often focuses on quantity. The real differentiator isn’t volume but discipline.

Bidding systems optimize toward the signals they’re given. When the signal mix is cluttered, performance drifts. When it’s clear and intentional, the system aligns with real business outcomes.

Micro-conversions should be selectively used and continuously evaluated. Start with a simple audit:

Micro-conversions should be a temporary bridge. Once real conversion volume is sufficient, optimization should be guided by revenue. A disciplined signal architecture gives automation what it needs to perform as intended: efficient, predictable, and aligned with real business outcomes.

If you’re a lawyer, college administrator, or financial services provider, you’ve likely seen the frustrating “Eligible (Limited)” status in your Google Ads account. It can feel like you’re fighting Google with one hand tied behind your back when your remarketing lists, exact match keywords, and more don’t work as intended.

While it might feel like Google Ads is out to get you when you operate in a so-called “sensitive interest category,” there are specific reasons for these rules. More importantly, there are specific ways to succeed despite them.

This article will cover what the personalized advertising policies are, what they mean for your account, and five specific tactics you can use to succeed with Google Ads.

Google provides detailed explanations in its official policy documentation, but it comes down to two things: legal requirements and ethical standards.

In the United States, for example, the Fair Housing Act and employment laws prevent discrimination based on age, gender, or location. If you’re advertising a job opening or a new apartment complex, Google can’t allow you to exclude people based on those demographics because doing so would be against the law.

Then there’s the ethical side. Imagine you’re running a rehab center. If someone visits your site, Google’s “sensitive interest” policy prevents you from following them around the internet with targeted banner ads like, “Still struggling with addiction? Come to our clinic.”

That kind of remarketing is intrusive and, frankly, predatory when it targets someone’s health and struggles. To protect the user experience and maintain a sense of privacy, Google limits how personal data can be used in these high-stakes industries.

If you fall into one of these categories — housing, employment, credit, healthcare, or legal services — the biggest impact is usually on your audience targeting.

Here’s what you can’t use:

For certain categories in certain countries, like housing, credit, and employment in the United States, there’s further “demographic stripping” — you can’t target by age, gender, parental status, or ZIP code. Your Smart Bidding strategies won’t use these signals as inputs either.

It’s easy to focus on what’s gone, but what still works is a much longer list. Even in a restricted industry, you still have access to the core engine of Google Ads. You can still use:

If you want to move the needle without relying on remarketing, you need to rethink your account structure and messaging. Here are five things you can do right now.

If your business offers a mix of services — some sensitive, some not — don’t let the sensitive ones “poison” your whole account. Think of a spa that offers haircuts, pedicures, and Botox. Haircuts are fine; Botox is a medical procedure that triggers sensitive category restrictions.

If you put them all on one site, your entire remarketing capability might get shut down. Consider putting the sensitive service on a separate domain and a separate Google Ads account. This lets you use every available tool for your main business while the sensitive portion operates under the necessary restrictions.

If you want to use image or video ads, use Demand Gen instead of the standard Display Network. In my experience, Demand Gen delivers higher-quality audiences and tends to perform better in restricted niches.

You might be tempted to stick to Exact Match keywords to keep things tight. However, in sensitive categories, Google may restrict ads on very narrow, specific queries for privacy reasons. If your Exact Match keywords aren’t getting impressions, try Phrase or Broad Match. This gives the algorithm more room to find users searching for the same thing with slightly different phrasing that may be less restricted.

Think of it like fishing: if you can’t use a spear, use a net. You’ll catch some fish you don’t want, but that tradeoff helps you catch the ones you do want more easily.

Most businesses in these categories, such as law firms or banks, don’t make sales on their websites. The website generates a lead, and the sale happens over the phone or in an office.

If you want Google to find better users, you must feed that real-world data back into the system. Use Offline Conversion Tracking (OCT) to show Google which leads became customers. Even if you must navigate HIPAA or other privacy regulations, there are ways to do this safely.

Consult your legal team, but don’t skip this step. It’s the best way to train the algorithm when you can’t use your own audiences and to ensure Smart Bidding works at its full potential.

When you can’t tell Google who to target with a list, you have to tell the user who the ad is for through your creative. Your headlines and images should qualify the lead.

Be specific in your copy. For example, instead of “Need a Lawyer?” try “Defense Attorney for Small Business.” This attracts your target audience and encourages people who aren’t a fit to scroll past, saving you money and improving your conversion rate.

Running Google Ads in a sensitive category is a challenge, but it’s far from impossible. By shifting your focus from who the person is to what they’re looking for and how you speak to them, you can still drive incredible results.

This article is part of our ongoing Search Engine Land series, Everything you need to know about Google Ads in less than 3 minutes. In each edition, Jyll highlights a different Google Ads feature, and what you need to know to get the best results from it – all in a quick 3-minute read.

AI has changed how I work after nearly two decades in digital marketing. The shift has been meaningful, freeing up time, reducing the grinding parts of the job, and making some genuinely hard tasks faster.

That doesn’t mean it does the work for you, transforms everything overnight, or saves you 40 hours a week. In real-world SEO, with real clients and real deadlines, it’s a tool that makes parts of the job easier, not something that replaces the work itself.

Here are 20 ways I actually use it. Some are specific to SEO. Some are broader, but relevant to anyone working in the industry. All of them are practical, tested, and honest about their limitations.

The single best way to use AI for content is to stop expecting it to produce something publishable and start treating it as a very fast first-draft machine.

The content AI produces out of the box is average. Your job is to make it good. Reference real-life stories, case studies, and statistics, and showcase your personal viewpoint and expertise.

The time savings are in not starting from a blank page.

Give Claude or ChatGPT your target keyword, page topic, and character limits. Ask for 10 variations of your meta title and descriptions. You’ll use one, maybe combine two, but the process takes two minutes instead of 20. For large sites with hundreds of pages, this alone is worth the subscription.

Many tools allow you to upload CSV files, add AI’s suggested ideas, and download them for review. Don’t skip this step. A human eye is where the value sits.
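Part of that review can be automated before a human reads the suggestions. The sketch below separates candidates that fit a character limit from those that do not. The limits of 60 and 155 characters are assumptions used for illustration, not official figures, and the sample titles are made up.

```python
# Assumed character limits; adjust to whatever limits you gave the AI.
TITLE_LIMIT = 60
DESCRIPTION_LIMIT = 155


def split_by_limit(candidates, limit):
    """Split candidate strings into those within the limit and those over it."""
    within = [text for text in candidates if len(text) <= limit]
    too_long = [text for text in candidates if len(text) > limit]
    return within, too_long


# Hypothetical AI-suggested titles.
titles = [
    "Wool Scarves for Winter | Example Shop",
    "Shop Our Full Range of Handmade Wool Scarves, Hats, and Gloves for Winter | Example Shop",
]

within, too_long = split_by_limit(titles, TITLE_LIMIT)
for text in too_long:
    print(f"Too long ({len(text)} chars): {text}")
```

The same function works for descriptions by passing `DESCRIPTION_LIMIT` instead.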

Paste an existing page or blog post that has dropped in rankings. Ask AI to identify what’s missing, what could be expanded, and what feels outdated.

It won’t always be right, but it gives you a starting point instead of reading the whole thing yourself with fresh eyes you don’t have at 4 p.m. on a Thursday.

Make sure to give context. Long prompts with lots of detail will produce much better results than pasting a page in cold.

Prompt AI to generate the 10 most common questions for your target keyword. Cross-reference with People Also Ask and your own research.

Answer them, and you now have an FAQ section, featured snippet opportunities, and a content gap analysis in about 10 minutes.

Nobody enjoys writing alt text for 200 product images. Describe the image, give it the context of the page it sits on, and include the target keyword. Then ask for alt text that’s descriptive and naturally includes the term where relevant. It’s not glamorous, but it’s necessary and faster.

You can also run a website through Screaming Frog, export it to a CSV file, upload it to your AI of choice, and ask it to write the alt text. This only works well if the file names are descriptive, and again, a human eye is key. This is about increasing speed, rather than handing it over to AI completely.
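Before uploading a crawl export, it can help to separate images with usable file names from those with generic ones. This is a rough sketch under stated assumptions: the column names `image_url` and `alt_text` are hypothetical and will differ from a real export, and the "generic name" pattern is my own guess at what counts as non-descriptive.

```python
import csv
import io
import re
from urllib.parse import urlparse

# Inline stand-in for a crawl export; the column names are hypothetical.
CRAWL_CSV = """image_url,alt_text
https://example.com/img/blue-running-shoes.jpg,
https://example.com/img/IMG_4821.jpg,
https://example.com/img/red-trail-jacket.png,Red trail jacket
"""

# File names that look like camera or CMS defaults carry no description.
GENERIC_NAME = re.compile(r"^(img|dsc|image|photo)?[_-]?\d+$", re.IGNORECASE)


def images_needing_alt(csv_text):
    """Split images with empty alt text into descriptive and generic file names."""
    descriptive, generic = [], []
    for row in csv.DictReader(io.StringIO(csv_text)):
        if row["alt_text"].strip():
            continue  # already has alt text
        filename = urlparse(row["image_url"]).path.rsplit("/", 1)[-1]
        stem = filename.rsplit(".", 1)[0]
        target = generic if GENERIC_NAME.match(stem) else descriptive
        target.append(row["image_url"])
    return descriptive, generic


descriptive, generic = images_needing_alt(CRAWL_CSV)
print("Send to AI with file name as context:", descriptive)
print("Needs a human description first:", generic)
```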

Dig deeper: How to use AI for SEO without losing your brand voice

Not everyone working in SEO has a developer background. AI is useful for:

Paste in the output, ask it to explain it in plain English, and then ask what the fix should be. Verify the answer, but it gets you most of the way there.

Schema is one of those things everyone knows they should be doing more of, and nobody finds especially enjoyable.

Describe the content of your page to your AI of choice, tell it what schema type is relevant (FAQ, Article, LocalBusiness, Product, etc.), and ask it to generate the JSON-LD.

Check it in Google’s Rich Results Test before implementation. This used to take me 20 minutes per page type. Now it takes five.
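For reference, this is roughly the shape of output to expect for an FAQ page. It's a minimal sketch that builds the JSON-LD in Python. The `faq_jsonld` helper and the sample question are hypothetical, while the `FAQPage`, `Question`, and `Answer` types come from schema.org.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Placeholder content for illustration only.
pairs = [
    ("What is schema markup?", "Structured data that describes a page to machines."),
]

markup = faq_jsonld(pairs)
print(f'<script type="application/ld+json">\n{json.dumps(markup, indent=2)}\n</script>')
```

Knowing the expected structure makes it easier to spot when an AI tool invents a property or nests something incorrectly, before the validator does.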

If you use regex in GSC filters and you’re not a developer, AI is your new best friend. Describe what you’re trying to filter, for example, all URLs containing a specific subfolder, or all queries including a particular term, and ask for the regex string.

It gets it right more often than not, and you can ask it to explain the logic so you actually understand what you’re implementing.
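One way to verify a suggested pattern is to run it against a few sample URLs and queries before pasting it into a filter. The patterns and sample data below are made up. Search Console uses RE2 syntax, which overlaps with Python's `re` module for simple patterns like these, but treat this as a sanity check rather than a guarantee of identical behavior.

```python
import re

# Hypothetical patterns of the kind you might ask an AI tool to write.
SUBFOLDER = re.compile(r"^https://example\.com/blog/")
PRICE_QUERY = re.compile(r"\b(price|pricing|cost)\b")

urls = [
    "https://example.com/blog/ai-for-seo",
    "https://example.com/products/blog-planner",
]
queries = ["seo audit cost", "costume ideas", "seo tool pricing"]

# Keep only the samples each pattern matches.
matching_urls = [u for u in urls if SUBFOLDER.search(u)]
matching_queries = [q for q in queries if PRICE_QUERY.search(q)]

print(matching_urls)
print(matching_queries)
```

Including a near miss such as "costume ideas" in the samples is deliberate: it shows whether the word boundaries are doing their job.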

If you export a crawl from Screaming Frog or Sitebulb and you’re not sure what to prioritize, paste the summary data into your AI tool and ask it to help you identify the highest-priority issues based on the site’s goals.

It won’t replace your expertise, but it’s a useful sounding board when you’re staring at a spreadsheet with 47 issues and a client call in an hour.

Dig deeper: 6 tactical ways to responsibly use AI for everyday SEO

This is one of the most underrated uses of AI in SEO work. You have the data. You have the graphs. What takes time is writing the commentary that explains what happened, why, and what comes next.

Feed AI your key metrics and the context of what was happening that month (algorithm updates, campaign launches, seasonality), and ask it to draft the narrative section of your report. Edit it, add your actual insight, but stop writing it from scratch every month.

You can even upload reports from various data sources and ask it to combine and summarize them. This saves me hours every month when I’m putting together reports.

Not every client wants to read a 12-page report. Ask AI to summarize your report into a five-bullet executive summary. Give it to clients at the top of the document.

The ones who want details will read on. The ones who don’t will feel informed without asking you to talk them through every chart on the next call.

Ask AI to create the executive summary for someone who doesn’t know anything about SEO, and it’ll give you something simple and easy to understand.

Paste a table of your keyword rankings or traffic data, and ask AI to flag anything that looks unusual, including significant drops, unexpected gains, or patterns that don’t match the previous period.

It won’t replace proper analysis, but it’s a useful first pass when you’re managing a large amount of information and can’t give every dataset the attention it deserves.
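If you want a deterministic check to compare against the AI's answer, a simple period-over-period filter does a similar first pass. This is a sketch with invented numbers, and the 30% threshold is an arbitrary assumption you'd tune to your own data.

```python
# Made-up sample data: keyword -> (previous period clicks, current period clicks).
clicks = {
    "ai seo tools": (1200, 1150),
    "schema markup guide": (800, 420),
    "regex for gsc": (90, 210),
}

THRESHOLD = 0.30  # assumed cutoff: flag changes larger than 30%


def flag_changes(data, threshold=THRESHOLD):
    """Return (keyword, fractional change) pairs whose change exceeds the threshold."""
    flagged = []
    for keyword, (previous, current) in data.items():
        if previous == 0:
            continue  # no baseline to compare against
        change = (current - previous) / previous
        if abs(change) > threshold:
            flagged.append((keyword, round(change, 3)))
    return flagged


for keyword, change in flag_changes(clicks):
    print(f"{keyword}: {change:+.1%}")
```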

Dig deeper: How to build AI confidence inside your SEO team

List your top three competitors and your own site. Ask AI to help you think through what content topics they’re likely covering that you’re not, based on their positioning and audience.

Then, validate that with actual keyword research tools. AI can’t see competitor data directly, but it’s useful for hypothesis generation before you do the manual work.

When you take on a client in an industry you don’t know well, you need to get up to speed fast. Ask your AI to give you a primer on the industry:

It saves you an embarrassing amount of time in discovery calls.

Paste a list of your target keywords and ask AI to categorize them by search intent: informational, navigational, commercial, and transactional. Then compare that against the page type you’re targeting them with.

You’ll almost certainly find mismatches. This is a task that’s straightforward to describe, but tedious to do manually across hundreds of keywords.
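Once the AI has labeled the keywords, the comparison step is easy to script. The sketch below assumes a mapping of intent to suitable page types that I've made up for illustration. Your own mapping and the sample rows will differ.

```python
# Assumed mapping from search intent to the page types that usually serve it.
EXPECTED_PAGE_TYPES = {
    "informational": {"blog", "guide"},
    "navigational": {"homepage", "brand"},
    "commercial": {"comparison", "category"},
    "transactional": {"product", "pricing"},
}

# Hypothetical rows: (keyword, intent label from the AI tool, targeted page type).
keyword_rows = [
    ("what is schema markup", "informational", "guide"),
    ("best crm for small business", "commercial", "blog"),
    ("buy crm license", "transactional", "product"),
]


def find_mismatches(rows, expected=EXPECTED_PAGE_TYPES):
    """Return rows whose page type is not one expected for the labeled intent."""
    return [
        (keyword, intent, page_type)
        for keyword, intent, page_type in rows
        if page_type not in expected.get(intent, set())
    ]


for keyword, intent, page_type in find_mismatches(keyword_rows):
    print(f"{keyword!r}: {intent} intent is targeted with a {page_type} page")
```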

Dig deeper: How to use AI response patterns to build better content

Everyone has had to write a difficult email, whether it’s explaining why rankings have dropped, why a deadline was missed, or why the client needs to do something you know they don’t want to do.

These emails take a disproportionate amount of emotional energy to write. Give your AI the situation, the context, and what you need the client to understand or do, and ask for a draft that’s clear, professional, and honest.

Edit it. Send it. Move on.

If you’ve been meaning to document your processes and just haven’t gotten around to it, AI removes the excuse.

Describe a process out loud (or in rough notes), paste it in, and ask for a structured SOP with numbered steps, decision points, and notes.

The first version will need editing, but having a framework to work from is the difference between getting it done and it sitting on the to-do list for another quarter.

Before a client call, paste in your recent report data, any issues from the previous month, and what you need to cover.

Ask your AI to help you structure the agenda and anticipate questions the client might ask based on the data. You’ll go into the call more prepared and less likely to be caught off guard.

This one sounds vague, but it’s one of the ways I use AI most.

When I have a problem I can’t get clear on, a strategy decision I’m going back and forth on, or a piece of work I can’t find the right angle for, I talk it through with Claude (my AI buddy of choice) to clarify my own thinking. It asks questions, reflects things back, and helps me arrive at a point of view faster than I would staring at a blank document.

Ask your AI to be brutally honest with you. Otherwise, it’ll just keep agreeing with you and telling you that you’re truly an expert on every topic.

The biggest productivity gain from AI isn’t any individual use. It’s building a library of prompts that work for your specific workflow and reusing them consistently.

Every time you get a good result from an AI tool, save the prompt. Over time, you build a system, rather than starting from scratch every time. This is the thing most people skip, and it’s the thing that compounds.

Top tip: In the paid version of many AI tools, you can create projects and set specific instructions for each one. This saves time because you don’t have to repeat that information in every prompt.

Dig deeper: Why SEO teams need to ask ‘should we use AI?’ not just ‘can we?’

None of these tips replace the expertise, judgment, and client relationships that make a good SEO professional.

AI doesn’t know the business the way you do. It doesn’t understand the nuance of an industry, the history of an account, or the particular quirks of a contact you deal with regularly.

AI reduces the time spent on tasks that don’t require that expertise, so you have more of it available for the work that does.

Use AI as a tool. Stay skeptical of the hype. And for the love of good search results, edit everything before it goes anywhere near a client.

Dig deeper: Could AI eventually make SEO obsolete?

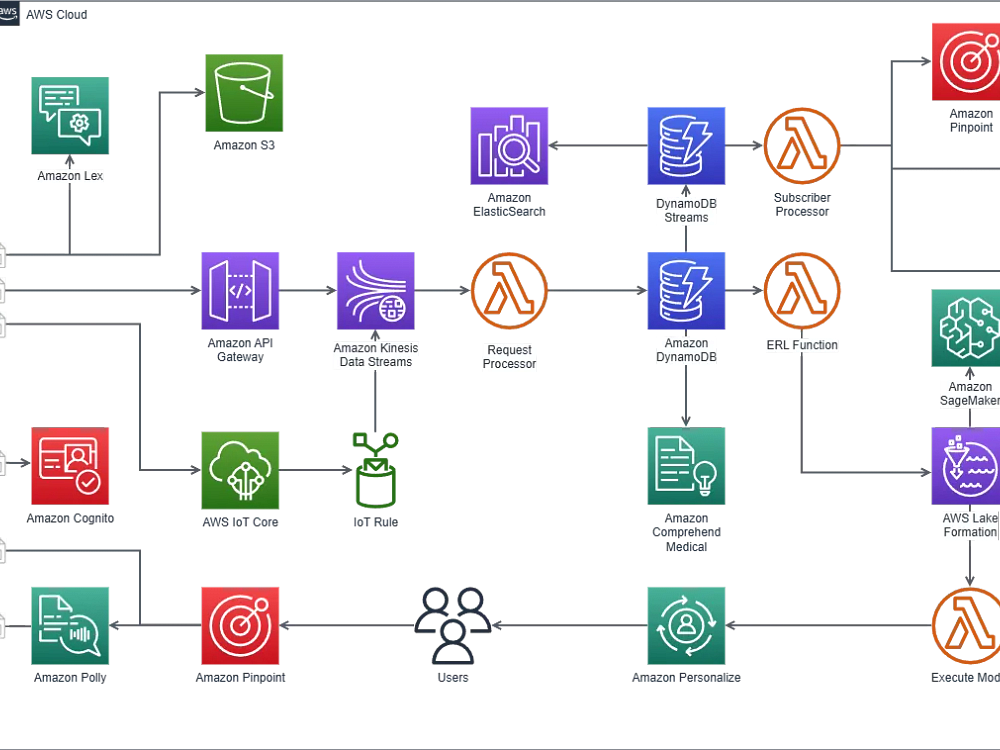

DiagramDeck is a cloud-based diagramming platform that hosts and manages draw.io for your team. Import and export .drawio files, edit together in real time with comments and live cursors, and use AWS, GCP, and Azure shape libraries to design cloud architectures, flowcharts, UML, ER, and network diagrams.

It removes self-hosting overhead with managed uptime, backups, and security, and adds team management, SSO, and compliance such as SOC 2 and GDPR. Use it as a modern alternative to Lucidchart and Visio while keeping the draw.io ecosystem.

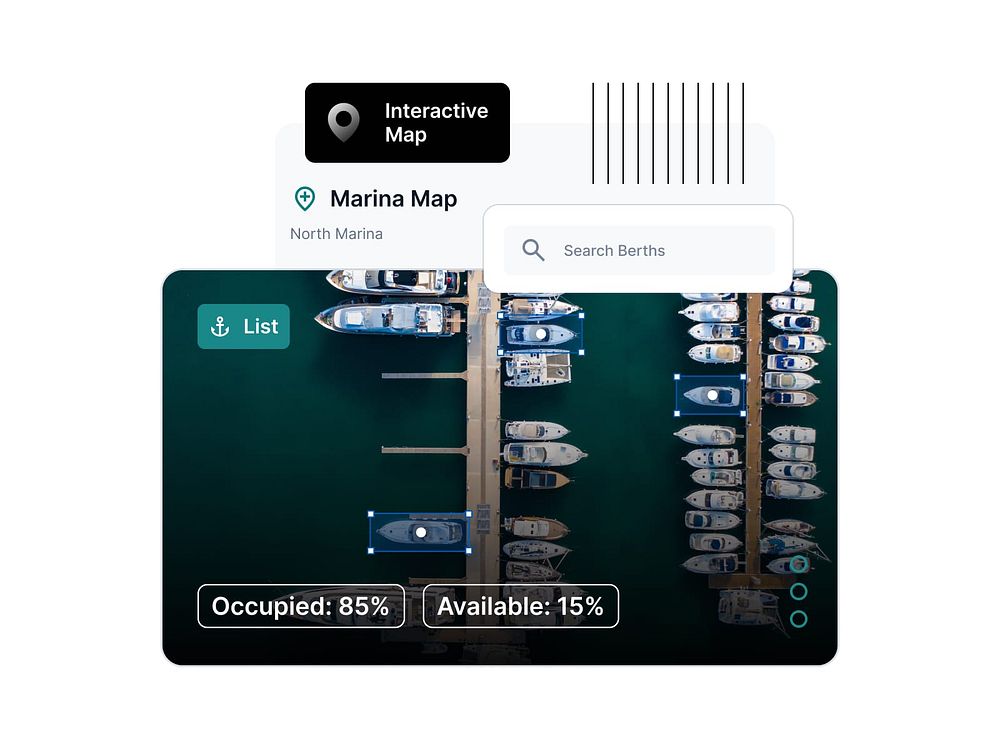

SupaSailing is the first operational ERP platform built for nautical professionals including fleet managers, charter companies, brokers, and marinas. These businesses previously used spreadsheets, disconnected tools, and email threads since no integrated system existed for this industry.

Six modules cover crew and fleet management, charter enquiries, brokerage CRM, berth management, refit projects, and ISM compliance. All modules are connected with no duplicate data, providing full operational visibility across the business.

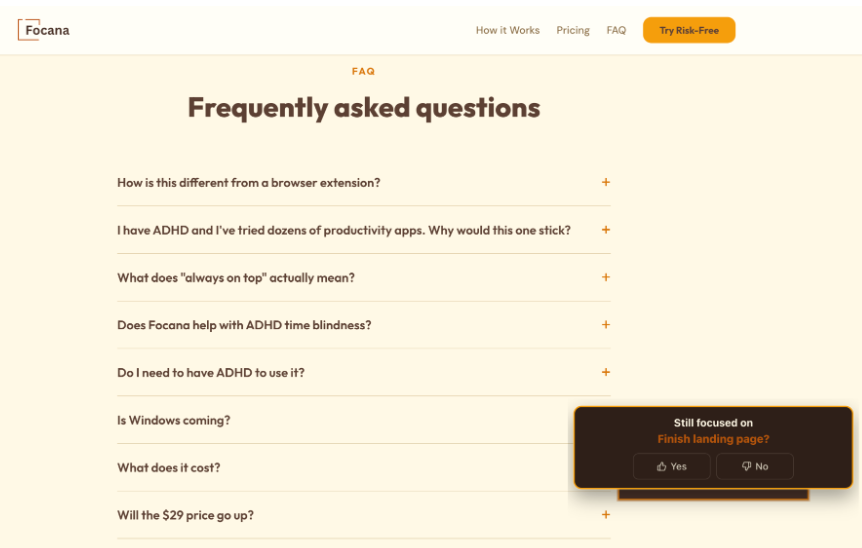

It's easier than ever to get distracted while working on your computer. A quick email check, a Slack ping, one ChatGPT question, and boom, 30 minutes gone. "What was I supposed to be doing?"

Most focus tools either block apps you need or disappear when you switch tabs or apps. Neither works. You need an anchor, not a blocker. Focana keeps one task and a timer always visible on your screen, delivers gentle visual nudges and check-ins to keep you locked in, captures stray thoughts in the Parking Lot so they don't derail your session, and allows you to leave notes for context to pick up where you left off. All with no accounts, no sync, and no cloud—just a calm companion for busy brains.

Google partnered with the Brazilian government on a satellite imagery map to help protect the country’s forests.

Here are Google’s latest AI updates from March 2026

Get ready for YouTube Brandcast 2026 at Lincoln Center. Host Trevor Noah joins CEO Neal Mohan and a powerhouse lineup of creators to demonstrate why YouTube is the futur…

MSI GPU Safeguard+ finally allows me to trust 12V-2×6 GPUs

If I’m honest, I’m not a fan of 12V-2×6 or 12VHPWR. There have been far too many reports of burnt graphics cards or melted power connectors to ignore. If I were to spend many hundreds, or perhaps thousands, on a new graphics card, I want […]

The post MSI GPU Safeguard+ is a game-changing tech for 12V-2×6 GPUs appeared first on OC3D.

Barry Adams recently published “Google Zero is a Lie” in his SEO for Google News newsletter, arguing that the narrative of Google traffic disappearing is false and dangerous.

His data backs it up. Similarweb and Graphite data show only a 2.5% decline in Google traffic to top websites globally. Google still accounts for nearly 20% of all web visits.

The widely cited Chartbeat figure showing a 33% decline? It’s skewed by a handful of large publishers hit by algorithm updates. Publishers who abandon SEO in the face of this panic are making a self-fulfilling prophecy, ceding traffic to competitors who keep optimizing.

He’s right. And he’s looking at the wrong problem.

Humans are still clicking Google results. What has changed is that a growing share of your visitors isn’t human at all.

Automated traffic surpassed human activity for the first time in a decade, per the 2025 Imperva Bad Bot Report. Bots now account for 51% of all web traffic. Not “soon.” Not “by 2027.” Now.

That number includes everything from scrapers to brute-force login bots. But the fastest-growing segment is AI crawlers.

AI crawlers now represent 51.69% of all crawler traffic, surpassing traditional search engine crawlers at 34.46%, Cloudflare’s 2025 Year in Review found. AI bot crawling grew more than 15x year over year. Cloudflare observed roughly 50 billion AI crawler requests per day by late 2025.

Akamai’s data tells a similar story: AI bot activity surged 300% over the past year, with OpenAI alone accounting for 42.4% of all AI bot requests.

So while Adams is correct that human Google traffic hasn’t collapsed, something else is happening on the other side of the server logs.

Cloudflare published crawl-to-referral ratios for AI bots. Look at these numbers.

Anthropic’s ClaudeBot crawls 23,951 pages for every single referral it sends back to a website. OpenAI’s GPTBot: 1,276 to 1. Training now drives nearly 80% of all AI bot activity, up from 72% the year before.

Compare that to traditional Googlebot, which has always operated on a crawl-and-send-traffic-back model. Google crawls your site, indexes it, and sends 831x more visitors than AI systems. The deal was simple: let me read your content, and I’ll send you people who want it.

That deal is fraying even on Google’s own turf. Queries where Google shows an AI Overview see 58-61% lower organic click-through rates, according to Ahrefs and Seer Interactive studies covering millions of impressions through late 2025.

Google’s newer AI Mode is worse. Semrush data shows a 93% zero-click rate in those sessions. AI Overviews now trigger on roughly 25-48% of U.S. searches, depending on the dataset, and that number keeps climbing.

And when Google’s AI features do cite sources, they’re increasingly citing themselves. Google.com is the No. 1 cited source in 19 of 20 niches, accounting for 17.42% of all citations, an SE Ranking study of over 1.3 million AI Mode citations found. That tripled from 5.7% in June 2025. Add YouTube and other Google properties, and they make up roughly 20% of all AI Mode sources.

So the old deal is being rewritten even by Google. AI crawlers from other companies skip the pretense entirely: let me read your content so I can answer questions about it without ever sending anyone your way.

The bot traffic numbers are already here. The next wave is bigger: AI agents acting on behalf of humans.

In 2024, Gartner predicted that traditional search engine traffic would drop 25% by 2026 as AI chatbots and agents handle queries. That prediction is tracking. Its October 2025 strategic predictions go further: 90% of B2B buying will be AI-agent intermediated by 2028, pushing over $15 trillion in B2B spend through AI agent exchanges.

This isn’t theoretical.

Gartner says 40% of enterprise applications will have task-specific AI agents by the end of 2026, up from less than 5% in 2025. eMarketer projects AI platforms will drive $20.9 billion in retail spending in 2026, nearly 4x 2025 figures.

Think about what that looks like in practice. An AI agent researches vendors for a procurement team. It doesn’t see your hero banner. It doesn’t notice your trust badges. It reads your structured data, compares your specs to those of three competitors, and builds a shortlist.

That “visit” might show up in your analytics as a bot hit with a zero-second session duration. Or it might not show up at all.

So what do you optimize for when the visitor is a machine making decisions for a human?

It’s not the same as traditional SEO. And it’s not the same as the AI Overviews optimization most people are focused on right now. AI Overviews are still Google. Still one search engine, still largely the same ranking infrastructure, still (mostly) one answer format.

Agentic SEO is about being useful to software that’s pulling from search APIs, crawling directly, and using LLM reasoning to make recommendations. That software doesn’t care about your page layout. It cares about whether it can extract what it needs.

I think a few things start to matter a lot more.

Schema markup has always been a “nice to have” for rich snippets. When an AI agent compares your product to three competitors, structured data lets it read your specs without having to guess. Think product schema, FAQ schema, and pricing tables in clean HTML. These go from SEO hygiene to core infrastructure.

Dig deeper: How schema markup fits into AI search — without the hype

AI agents don’t search for “best CRM for small business.” They ask compound questions: “Which CRM under $50/user/month integrates with QuickBooks and has a mobile app with offline capability?” If your content only answers the first version, you’re invisible to the second.
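To make the compound question concrete, here is a toy version of the filtering an agent might do once it has extracted specs from each vendor's pages. How real agents evaluate vendors is an assumption on my part, and the vendors, fields, and `shortlist` function are invented.

```python
from dataclasses import dataclass, field


@dataclass
class Crm:
    name: str
    price_per_user: float  # USD per user per month
    integrations: set = field(default_factory=set)
    offline_mobile: bool = False


# Invented vendors for illustration.
vendors = [
    Crm("AlphaCRM", 45.0, {"QuickBooks", "Slack"}, True),
    Crm("BetaCRM", 39.0, {"Xero"}, True),
    Crm("GammaCRM", 65.0, {"QuickBooks"}, True),
    Crm("DeltaCRM", 30.0, {"QuickBooks"}, False),
]


def shortlist(vendors, max_price, integration, need_offline):
    """Keep only vendors whose published specs satisfy every constraint."""
    return [
        v.name
        for v in vendors
        if v.price_per_user < max_price
        and integration in v.integrations
        and (v.offline_mobile or not need_offline)
    ]


print(shortlist(vendors, 50, "QuickBooks", True))
```

A vendor that never publishes its integrations or offline support can't pass a filter like this, however good the product is.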

A human might not notice your pricing page is 8 months stale. An AI agent cross-referencing your pricing against competitors will flag the discrepancy. Or worse, use the outdated number in its recommendation and cost you the deal.

Blocking AI crawlers feels protective, but it means AI agents can’t recommend you. Allowing them means your content trains models that may never send you traffic. There’s no clean answer.

But pretending it’s just a technical setting is a mistake. New IETF standards are emerging to give publishers more granular control, but they’re not widely adopted yet.
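Whatever you decide, it's worth confirming that your robots.txt does what you think it does. This sketch uses Python's standard `urllib.robotparser` against an example policy. The policy itself is an assumption for illustration, not a recommendation, and it only tells you what well-behaved crawlers are asked to do.

```python
from urllib.robotparser import RobotFileParser

# Example policy: block two AI crawlers, allow everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Check how each crawler would be treated for a sample URL.
for agent in ("GPTBot", "ClaudeBot", "Googlebot"):
    allowed = parser.can_fetch(agent, "https://example.com/pricing")
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```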

Dig deeper: Technical SEO for generative search: Optimizing for AI agents

Most analytics setups can’t tell the difference between a human visit, a bot crawl, and an AI agent evaluating your site on someone’s behalf. GA4 filters most bot traffic. Server logs show the raw picture, but take work to parse. Even then, figuring out whether an AI agent’s visit led to an actual sale is basically impossible right now.
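As a starting point for the server log side, the sketch below counts requests by crawler type. The log lines are invented and use simplified user-agent strings in the common "combined" format. The substring lists are assumptions you'd extend for your own logs, and user agents can be spoofed, so treat the counts as approximate.

```python
import re
from collections import Counter

# User-agent substrings to look for; extend these for your own logs.
AI_CRAWLERS = ("GPTBot", "ClaudeBot")
SEARCH_CRAWLERS = ("Googlebot", "bingbot")

# Captures the final quoted field of a combined-format line (the user agent).
USER_AGENT = re.compile(r'"([^"]*)"\s*$')

# Invented log lines with simplified user-agent strings.
LOG_LINES = [
    '203.0.113.5 - - [10/Mar/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [10/Mar/2026:10:00:02 +0000] "GET /blog HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [10/Mar/2026:10:00:05 +0000] "GET / HTTP/1.1" 200 4096 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
    '192.0.2.44 - - [10/Mar/2026:10:00:09 +0000] "GET /pricing HTTP/1.1" 200 512 "https://example.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]


def classify(line):
    """Label a log line as ai_crawler, search_crawler, or other."""
    match = USER_AGENT.search(line)
    agent = match.group(1) if match else ""
    if any(name in agent for name in AI_CRAWLERS):
        return "ai_crawler"
    if any(name in agent for name in SEARCH_CRAWLERS):
        return "search_crawler"
    return "other"


counts = Counter(classify(line) for line in LOG_LINES)
print(dict(counts))
```

Dividing AI crawler hits by the referrals you can attribute to the same platform gives a rough crawl-to-referral ratio for your own site.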

This is where the “Google Zero” framing does real damage.

If you’re only measuring organic sessions from Google, you’re blind to a channel that doesn’t show up in that number. Your traffic could look stable while an AI agent steers $50,000 in annual spend to your competitor because their product schema was more complete.

I don’t think we have good measurement for this yet. Nobody does. But ignoring the problem because Google sessions look fine is like checking your print ad response rate in 2005 and deciding the web wasn’t worth paying attention to.

I don’t have a playbook for this. It’s too new. But I can tell you what we’re doing at our agency.

The “Google Zero” argument pits one extreme against another, even as the actual shift is quieter and more important.

The web is becoming a place where the majority of visitors are machines. Some send traffic back. Most don’t. Some of them make purchasing decisions on behalf of humans. That number is growing fast.

The SEOs who do well here won’t be the ones arguing about whether Google traffic moved 2.5%. They’ll be the ones who figured out how to be useful to both human visitors and the AI agents acting on their behalf.

We’ve spent 25 years optimizing for how humans find things. Now we need to figure out how machines find things for humans.

That’s not Google Zero. We don’t have a name for it yet. But it’s already here.

If you want to go deeper on GEO and agentic SEO, I’m teaching an SMX Master Class on Generative Engine Optimization on April 14. It covers structured data implementation, AI visibility measurement, content optimization for AI systems, and the practical side of everything in this article.

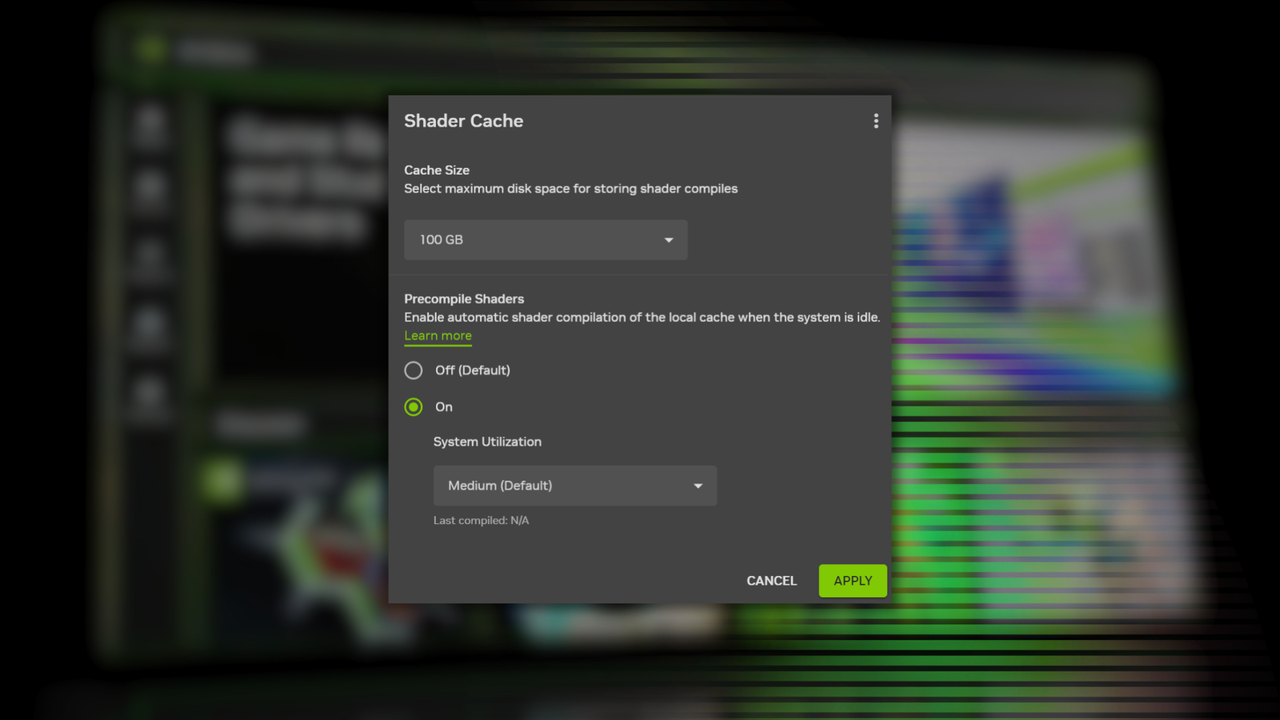

Nvidia aims to tackle shader stutter with its Auto Shader Compilation Beta

Nvidia is taking action against shader compilation stutter. With its new Auto Shader Compilation (ASC) feature, Nvidia are giving gamers the option to rebuild game shaders outside of runtime to deliver a smoother gaming experience. When your PC is idling, it can be […]

The post Nvidia adds “Auto Shader Compilation Beta” to the Nvidia App appeared first on OC3D.

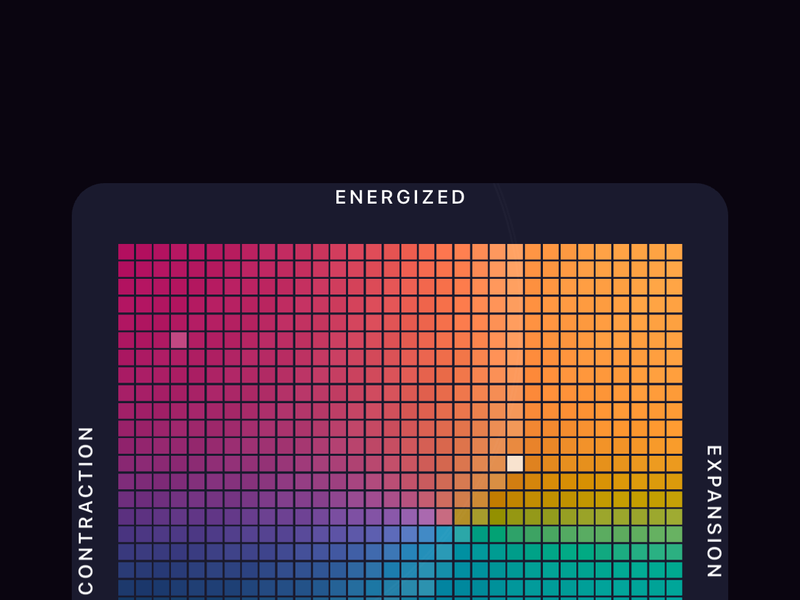

Gen AI gives us productivity superpowers, but the risk is mental fatigue. Ascenda helps you track how your mind is performing day to day. With a quick daily check-in, it shows patterns in clarity, energy, mood, recovery, focus, and decision load so you can protect your best work.

Built with input from a psychologist, neuroscientist, and engineer-founder with lived experience, Ascenda is a Whoop-like layer for the mind: signals, patterns, and early awareness before stress leads to poor decisions, lost focus, or burnout.

LinkedIn is one of the most powerful platforms for recruiting top-tier talent. It’s also one of the easiest places to waste budget if campaigns aren’t structured correctly.

Many recruitment campaigns fail because they prioritize visibility over intent. More impressions don’t equal better hires. Broad targeting and generic messaging often lead to an influx of unqualified applicants, driving up cost-per-hire and slowing down hiring timelines.

The most effective LinkedIn recruitment strategies focus on one thing: attracting and converting high-intent candidates while filtering out poor-fit applicants before they ever click. Let’s break down exactly how to do that.

The biggest mistake advertisers make on LinkedIn is targeting based solely on job titles, industries, and years of experience.

While this may generate volume, it rarely produces efficiency. Instead, high-performing campaigns are built around intent-based targeting — reaching candidates who are qualified and more likely to consider a new opportunity.

This requires a layered approach:

By combining these layers, you move beyond “who they are” and begin targeting why they might be ready to make a change — which is where real performance gains happen.

Your ad creative isn’t just there to attract attention. It should actively filter your audience. One of the most effective ways to control cost-per-hire is to discourage unqualified candidates from clicking in the first place.

Strong recruitment ads follow a structured approach:

This combination of attraction and exclusion ensures that the candidates who do click on your ads are far more likely to convert.

Dig deeper: LinkedIn Ads on a budget: How one playbook drove sub-$10 CPL

Rather than running a single campaign, high-performing LinkedIn strategies segment audiences based on intent.

These are active job seekers who offer the highest conversion opportunity. Following this structure:

These candidates aren’t actively applying but are open to change.

These are long-term potential candidates. Use them to start building your pipeline, with the intent of moving them to the middle of the funnel and eventually to the bottom.

LinkedIn’s ad platform can quickly become expensive without proper controls. Start with manual CPC bidding to maintain control, then test automated delivery once performance data is established.

More importantly, optimize for the right metrics. Focus on qualified applications instead of clicks. Track downstream actions, such as interview and hire rates.

Be prepared to make fast decisions. Ads with high click-through rates but low application rates often indicate poor alignment. Ads that generate many applications but few interviews signal weak pre-qualification.

Efficiency comes from eliminating wasted spend early. Doing so conserves budget, minimizes audience overlap, and avoids hitting the wrong targets.
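The downstream metrics are simple ratios, so they are easy to compute per ad once you have the counts. This is a minimal sketch with invented numbers. The `funnel_metrics` function and its field names are hypothetical, and the counts would come from your ad platform and applicant tracking system.

```python
def funnel_metrics(spend, clicks, applications, interviews, hires):
    """Compute downstream recruitment metrics from raw campaign counts."""

    def ratio(numerator, denominator):
        # Return None instead of dividing by zero when a stage has no volume.
        return numerator / denominator if denominator else None

    return {
        "application_rate": ratio(applications, clicks),
        "interview_rate": ratio(interviews, applications),
        "hire_rate": ratio(hires, interviews),
        "cost_per_application": ratio(spend, applications),
        "cost_per_hire": ratio(spend, hires),
    }


# Invented numbers for one ad.
metrics = funnel_metrics(spend=2000.0, clicks=400, applications=40, interviews=10, hires=2)
for name, value in metrics.items():
    print(f"{name}: {value}")
```

A high click-through rate paired with a low `application_rate` points to poor alignment, and a high `application_rate` paired with a low `interview_rate` points to weak pre-qualification.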

Dig deeper: LinkedIn Ads retargeting: How to reach prospects at every funnel stage

A common but costly mistake is sending candidates directly to long, complex application forms. Instead, use a two-step funnel:

This approach sets expectations, filters candidates, and significantly improves application quality — often reducing cost-per-hire by 30-50%.

Not every qualified candidate applies on the first interaction. Retargeting allows you to re-engage high-intent users who have already shown interest.

Build audiences from:

Then serve follow-up messaging such as:

Retargeting campaigns are often the most cost-efficient part of your entire strategy.

Once the fundamentals are in place, there are several advanced tactics that can further improve performance:

Here’s an example of a successful LinkedIn InMail message that recently drove over 70% high-intent applications for an HVAC sales client:

Message body:

Hi [First Name],

This might be a stretch — but your background in HVAC sales caught my attention.

We’re hiring experienced sales reps who are tired of unpredictable commissions and weekend-heavy schedules.

This role is built for reps who:

- Have 3+ years in HVAC or home services sales
- Are comfortable running in-home consultations
- Want a more stable, high-earning structure

What’s different:

- No weekend appointments
- Pre-qualified, inbound leads (no cold knocking)
- Six-figure earning potential with consistency

That said, this isn’t a fit for entry-level reps or those new to sales.

If you’d be open to a quick 10-minute conversation to see if it’s worth exploring, I’m happy to share more.

If not, no worries at all — appreciate you taking a look.

— [Name]

Stating upfront the need for “experienced sales reps” immediately establishes relevance and increases response rates while reducing irrelevant replies.

Focusing on what matters to potential candidates, such as no weekend appointments and the compensation structure, speaks to the audience’s needs rather than the company’s.

Closing the conversation with the reminder that this isn’t an entry-level position weeds out wasted conversations and reduces cost-per-hire.

Dig deeper: LinkedIn Message Ads: Everything you need to know

The most effective LinkedIn recruitment campaigns rely on better strategy.

When you focus on intent-based targeting, pre-qualification within ad creative, funnel segmentation, and conversion optimization, you create a system that attracts the right candidates while minimizing wasted spend.

Ultimately, reducing cost-per-hire is about reaching the right people, at the right time, with the right message.

Napster made digital music feel limitless for the first time, then vanished in lawsuits, rebrands, and sales. Its name faded, but its ideas still shape how the world listens.

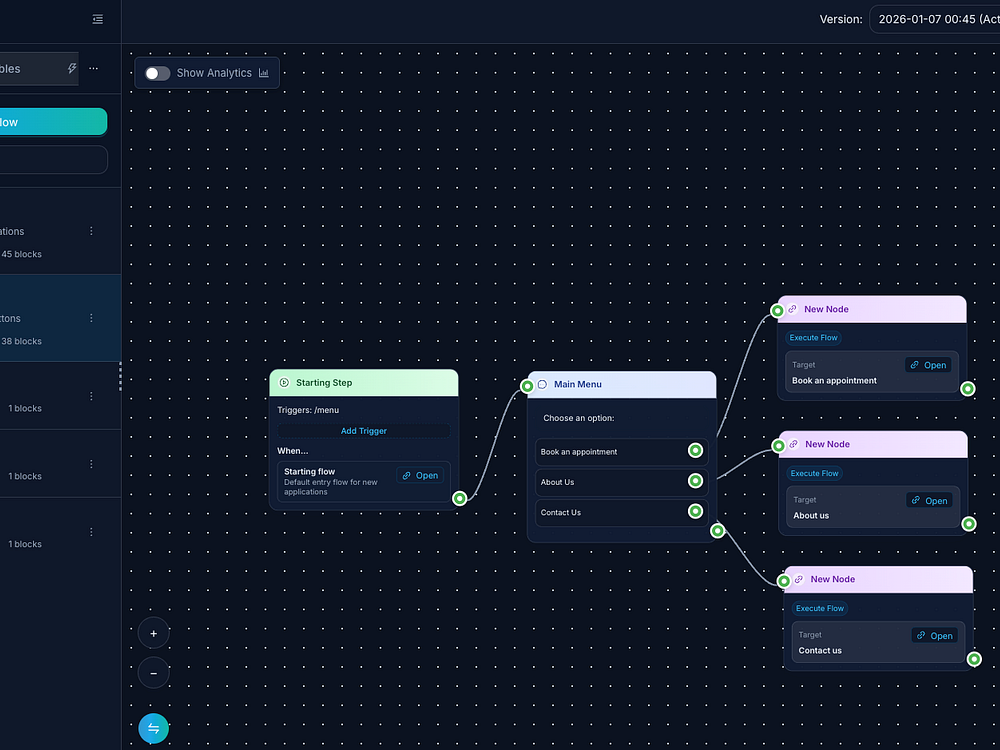

FlowCastle is a visual platform for building AI-powered chatbots without code. Use a drag-and-drop editor to design flows, then extend with TypeScript actions, HTTP requests, and integrations like Google Sheets. Launch on Telegram and reuse logic across brands with white-labeling. Accept payments, manage catalogs, and track orders inside the bot. Hand off to humans with live chat, run smart broadcasts, and monitor funnels with goal-based analytics. An AI copilot helps generate flows, write copy, and optimize automations.

HankRing helps you find the best versions of the specific dishes and drinks you crave. Choose your Hanks, see verified, likely, and potential spots on a map, and rate the dish—never the venue—to build consensus for the community. Browse Top 50 and trending categories, add missing spots, and keep a private journal of every rating and verification. With thousands of curated places preloaded, you can discover great food from day one and plan where to hunt next.

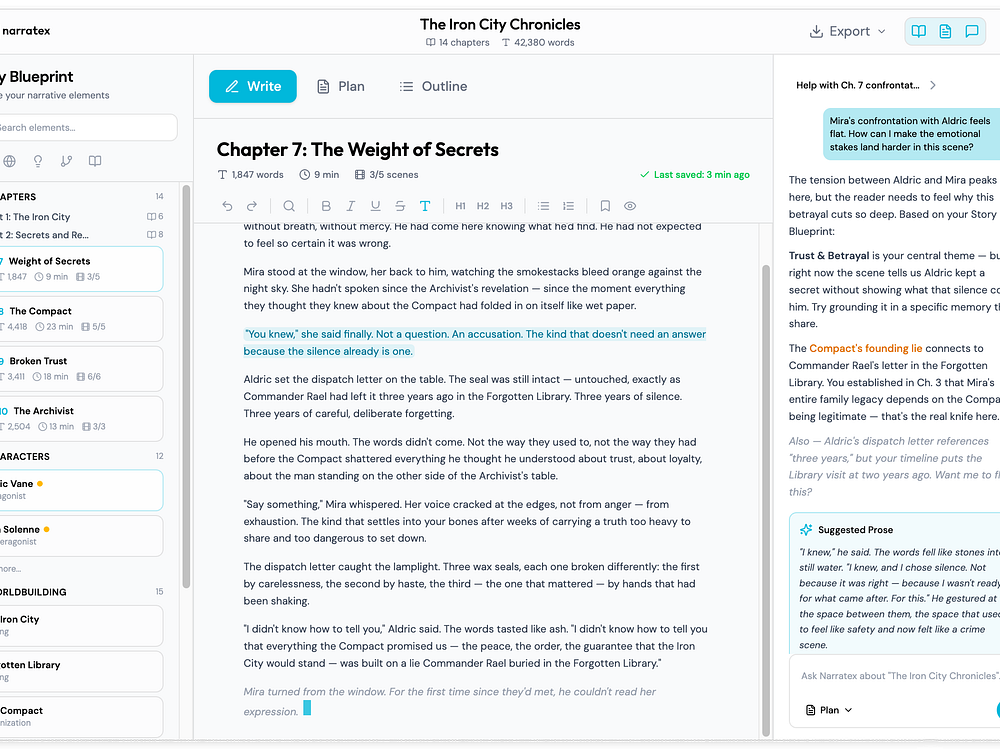

Narratex is a writing workspace for fiction authors that unifies your Story Blueprint, a full editor, and an AI collaborator that remembers context across sessions. It keeps track of your characters, plot threads, settings, and themes so you never need to re-explain your magic system or paste cast lists again.

Start by importing your existing work and building your blueprint, then write with an assistant that's already read everything you've created, keeping you consistent and focused chapter to chapter.

See, verify, and govern every agent action.

Trigger macros with rhythmic taps on your trackpad or mouse

Google's John Mueller answered a question about the nature of core updates: Are they rolled out in steps or all at once then refined?

The post Google Answers Why Core Updates Can Roll Out In Stages appeared first on Search Engine Journal.

AI voice feedback that catches complaints before bad reviews

Run a flock of Claude Code (or other agents) in one window.

Browse, search & track costs across Claude Code sessions

Turn your camera roll into group chat chaos

Axios / LiteLLM hacks behavioral detector app for Mac/PC

Rent real keyboard keys that redirect to your link

Skip ahead & chat with any YouTube video using AI

Meta's first AI glasses built for prescriptions

The spreadsheet rebuilt for AI

Formo makes analytics simple for DeFi so you can grow.

Speed of Voice. Power of AI.

One command to deploy Docker containers to your own server

Link-in-bio, but worse.

Cursor highlight, screen draw, zoom & spotlight

Know what's happening inside your NemoClaw sandboxes

AI-assistant native self-hosted deployment platform

A community idea board that looks like Windows 95

Your Network has Secrets, Now you can Them.

Google's most cost-effective video generation model

AI captures feedback and tells you what to build next

Everything at your cursor in a single gesture

Crack an Easter egg to generate an AI voice

Team up with your AI teammate in the all-new Slack

Control Codex on your iPhone

Pine doesn’t just draft or organize — it emails, calls, researches, plans, follows up, and persists until the job is done. For companies, Pine acts as an execution arm across CEOs, operations, finance, sales, marketing, and executive assistants — closing open loops, renegotiating contracts, chasing invoices, coordinating vendors, and unblocking stalled deals. For individuals who value time more than money, Pine handles life’s friction — negotiating bills, canceling subscriptions, filing claims, and waiting on hold. Pine turns decisions into outcomes — autonomously, persistently, and without expanding headcount.

Claras lets you get instant transcripts and chat with any YouTube video using AI. It analyzes full videos to answer questions, generate summaries, and build a clickable table of contents so you can jump to key moments with confidence. You can highlight insights, save notes, and export to TXT or PDF. Use transcripts to power ChatGPT, Claude, or custom agents, and collaborate with teammates.

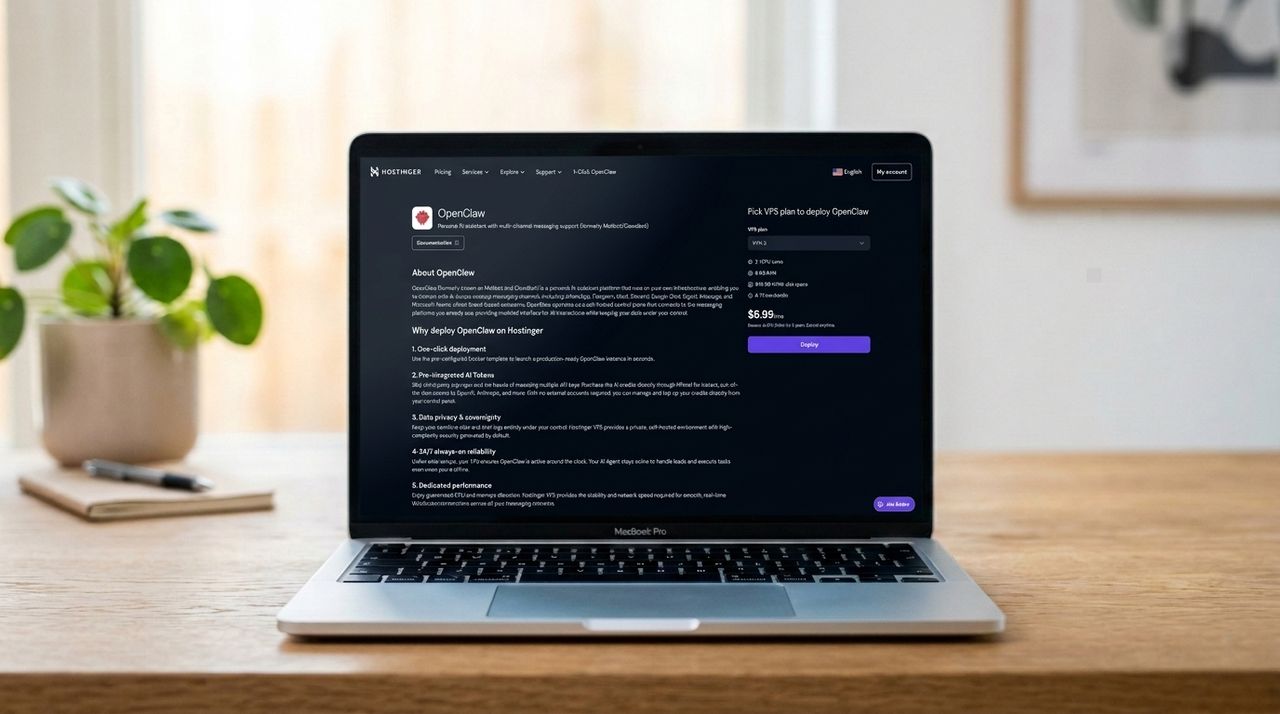

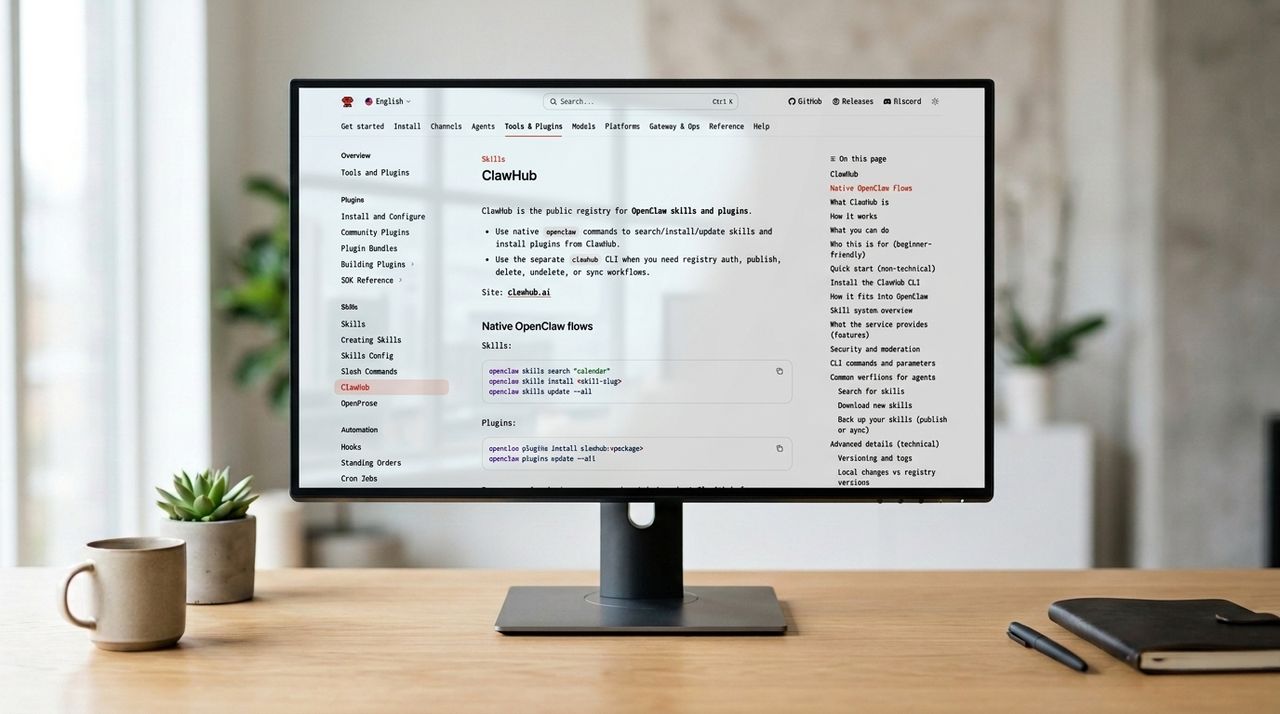

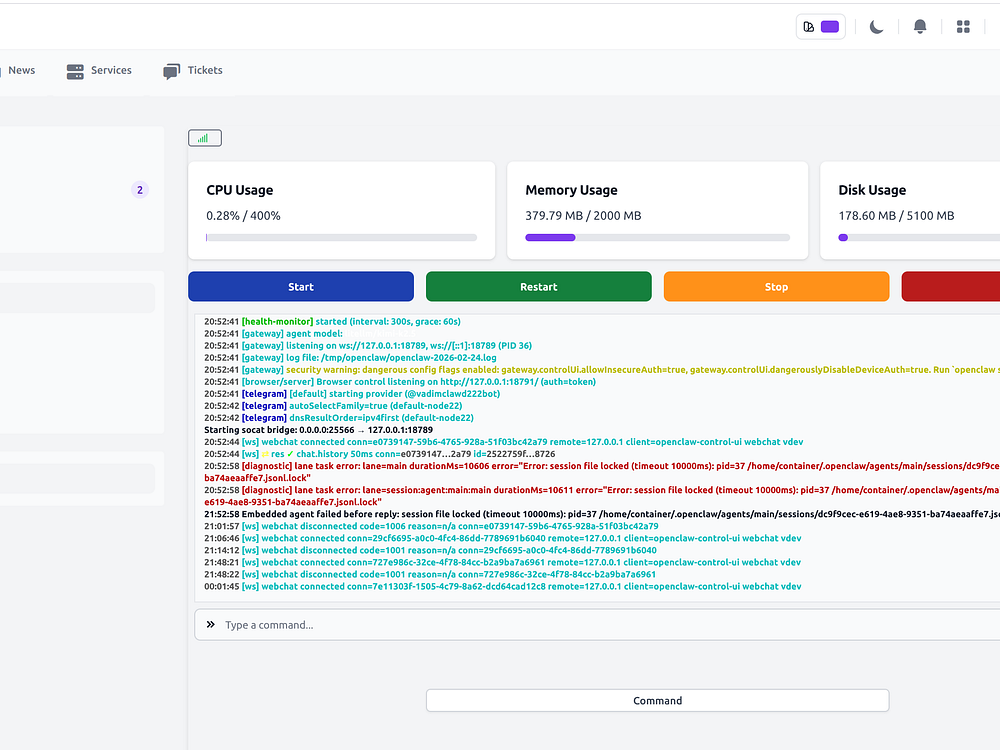

OpenClaw Hosting is a managed cloud platform for running OpenClaw, the open-source autonomous AI agent, 24/7 without dealing with servers or Docker. It supports any OpenAI-compatible model, including Claude, GPT, Gemini, and local models via Ollama, and includes free access to Kimi K2.5. Connect your agent to Telegram, WhatsApp, Slack, Discord, Signal, or iMessage, and keep data private with isolated containers and local-first storage. The platform handles updates, monitoring, and scaling so your agent stays online and productive.

Open-source LLM tracing that speaks GenAI, not HTTP.

The PDF Library that automatically organizes itself

Preview GIS files directly in Finder (GeoJSON, SHP, GPKG)

Turn Website Visitors into Customers with AI Conversations

App cleaner that lives in your MacBook’s notch

Email Infrastructure for Modern Product Teams

Massive local model speedup on Apple Silicon with MLX

Science-backed breaks to protect your vision & prevent RSI

Orchestrate your AI coding agents

Source leads, send outbound, grow pipeline. All in your CRM.

Get direct, unfiltered access to the People's House

Grails provides domain intelligence to help VCs, founders, and operators evaluate company domains, discover naming opportunities, and connect with owners. Use domain health audits, industry and funding-stage benchmarks, valuations, risk scoring, and curated lists to spot gaps and acquisition targets. Post a domain request and get responses from owners, or browse available strategic names and work with verified brokers to move fast and avoid costly mistakes.

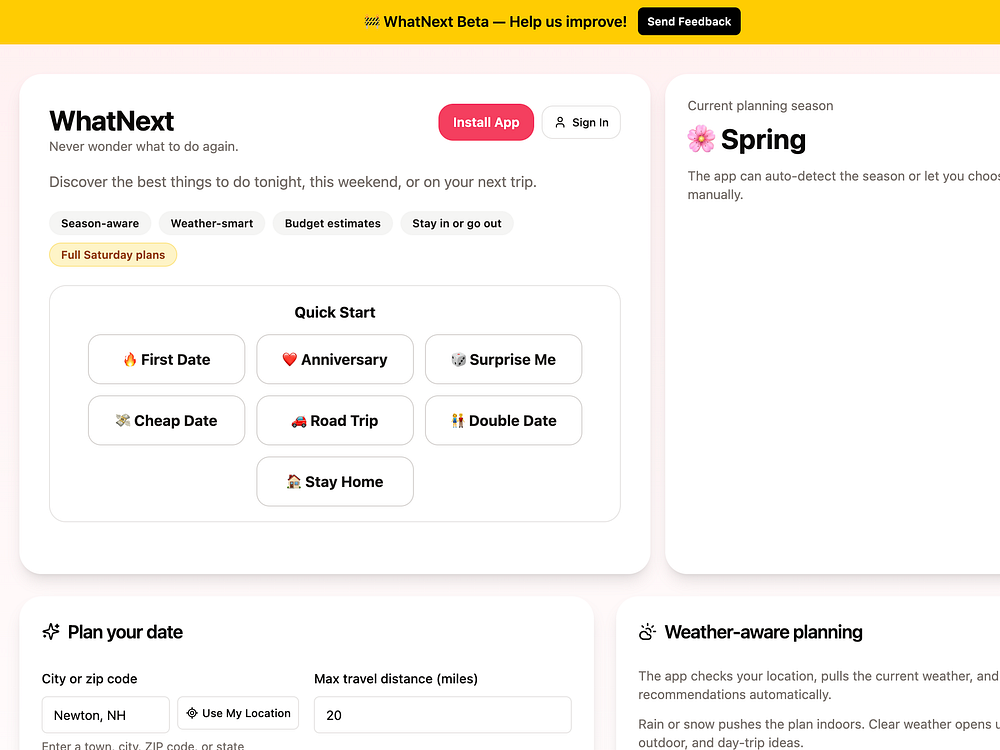

WhatNext is an AI-powered planner that instantly builds complete itineraries for date nights, friend hangouts, day trips, and weekend adventures using real places, venues, and live events near you. Enter your location and vibe, and it assembles dinner, activities, dessert, and drinks with Google Maps links. Customize budget and preferences, regenerate alternatives, save favorites, and use it across 50+ US cities. It is free to start.

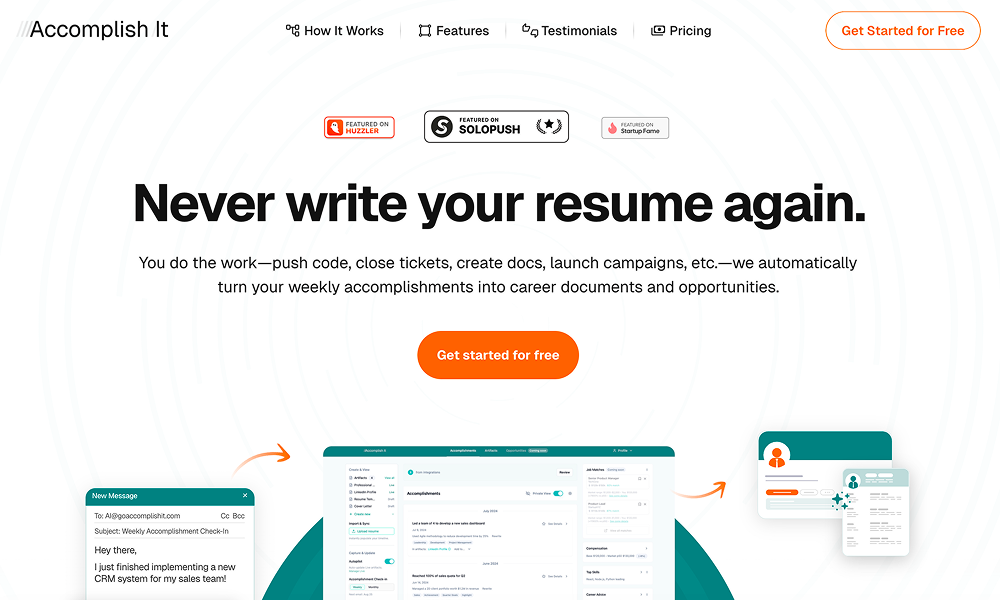

Accomplish It helps you capture, organize, and showcase your career accomplishments. Connect work sources like GitHub and Jira or reply to periodic prompts, and its AI records, categorizes, and turns results into resume-ready statements. Build a living resume to share a timeline, export polished resumes and career artifacts, and benchmark progress by role to stay ready for reviews and new opportunities.

The new Comments to Heart option will automate positive engagement and simulate personalized interaction on high-volume channels.

Instagram had been using the proprietary ratings scales without permission and will now include a disclaimer saying it “didn’t work with the MPA.”

A new survey from the app and research platform Suzy found that Snapchatters also use their content to influence travel decisions in their social circles.

The updated Adaptive Ranking Model will use less computing power to deliver more relevant ads and drive better return on ad spend.

The latest artificial intelligence-enhanced designs support all-day wear, allowing users to capture and record images and video in virtually any situation.

Integrating the two apps should offer a new monetization pathway for creators and make it easier for fans to request personalized messages.

Return of the King – Multi-GPU PC gaming is ready for a comeback

During the company’s DLSS 5 reveal, Nvidia teased something massive. When demoing its next-generation DLSS features, Nvidia was running multi-GPU systems. While Nvidia confirmed that DLSS 5 will be usable on single-GPU systems later this year, this demo highlighted something bigger: the […]

The post Multi-GPU Returns – Nvidia unveils “AI SLI” to power DLSS 5 appeared first on OC3D.

Agents can generate outdated Gemini API code because their training data has a cutoff date. We built two complementary tools to fix this. The Gemini API Docs MCP (https:/…


dubltap.io is an ecosystem of 8 single-purpose AI web apps. Each one solves one problem well. Market Maven offers competitive intelligence. Bad Mutha Forker transforms recipes. CLIFF NOTEZ analyzes documents. There are 5 more tools for sales, design, music, side hustles, and cognitive enhancement. All are free to try.

Painkiller Ideas helps founders find ideas worth building and validate them fast. It scrapes Reddit, Hacker News, GitHub, and Product Hunt for real complaints, then uses AI to score pain intensity, market size, and competition. Submit any concept to get market sizing, competitor analysis, ideal customer profiles, pricing strategy, and a prioritized validation roadmap. Access playbooks, prompts, landing page wireframes, and brand assets, and join a community of builders to source problems and compare notes.

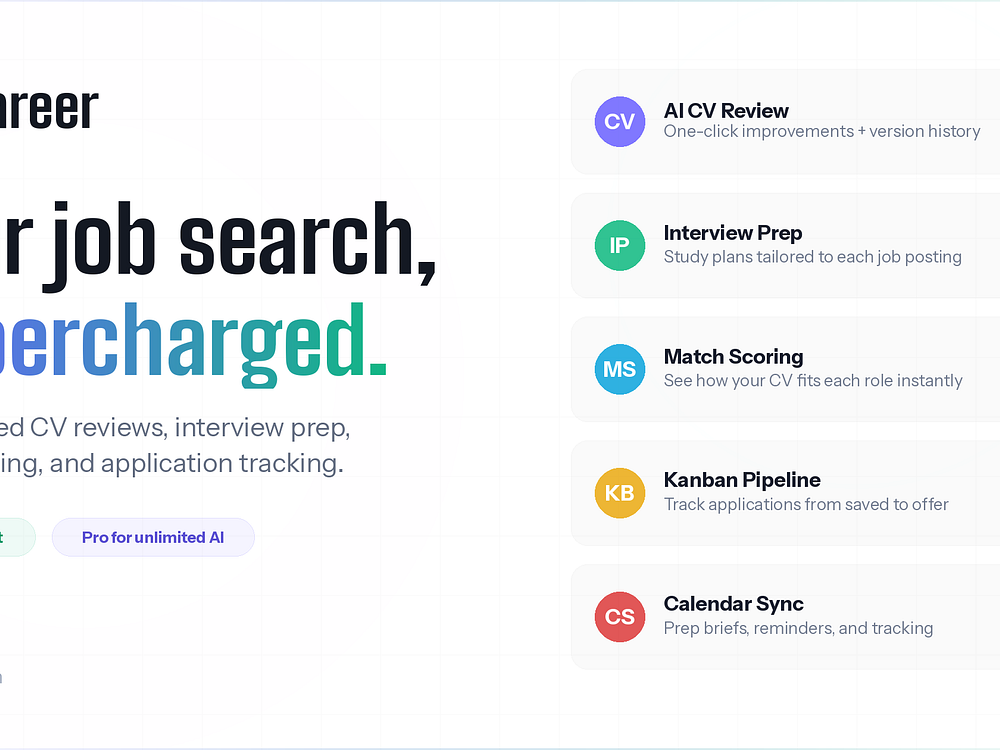

Lyn Career is a career intelligence platform that turns your job search into a strategic plan. It lets you track every application in one dashboard and extract job details from URLs, screenshots, or PDFs. You get match scores, skill gap insights, and rejection pattern analysis. It offers CV intelligence with actionable rewrites, role-specific interview prep, offer comparisons, and smart follow-up reminders with ghost detection. A built-in kanban, calendar sync, and contact CRM help manage pipelines and relationships clearly.

Valyris helps founders find and fix weak points in a campaign or investor pitch before high-stakes reviews. It tests narrative clarity, proof strength, internal consistency, timing and exposure, ask/raise logic, and delivery credibility to reveal blind spots and rank priorities. Start with a free 8-question check, then upgrade to an Audit or Deep Audit for a fast PDF diagnosis with key fragilities, likely objections and responses, contradiction mapping, evidence scoring, and a concrete fix plan. It's designed for Kickstarter, Indiegogo, Seedrs, Crowdcube, Y Combinator, Techstars, and direct investor outreach.

Nvidia DLSS 4.5 with dynamic frame generation is now available for RTX 50 GPUs using the Nvidia App (enable beta updates). The feature adjusts frame-gen in real time to balance performance and image quality. The update also adds MFG modes of up to 6x, along with beta automatic shader compilation to reduce in-game stutter.

The Card Shop Store is a marketplace for buying, selling, and vaulting sports, TCG, and entertainment trading cards. It supports direct sales and auctions, offers storefronts for sellers, and features CardShares for fractional physical ownership. You can browse graded and raw cards, track conditions and prices, and manage secure transactions. Use the web or mobile apps to list inventory, join breaks and auctions, and keep high-value cards safe in vault storage.

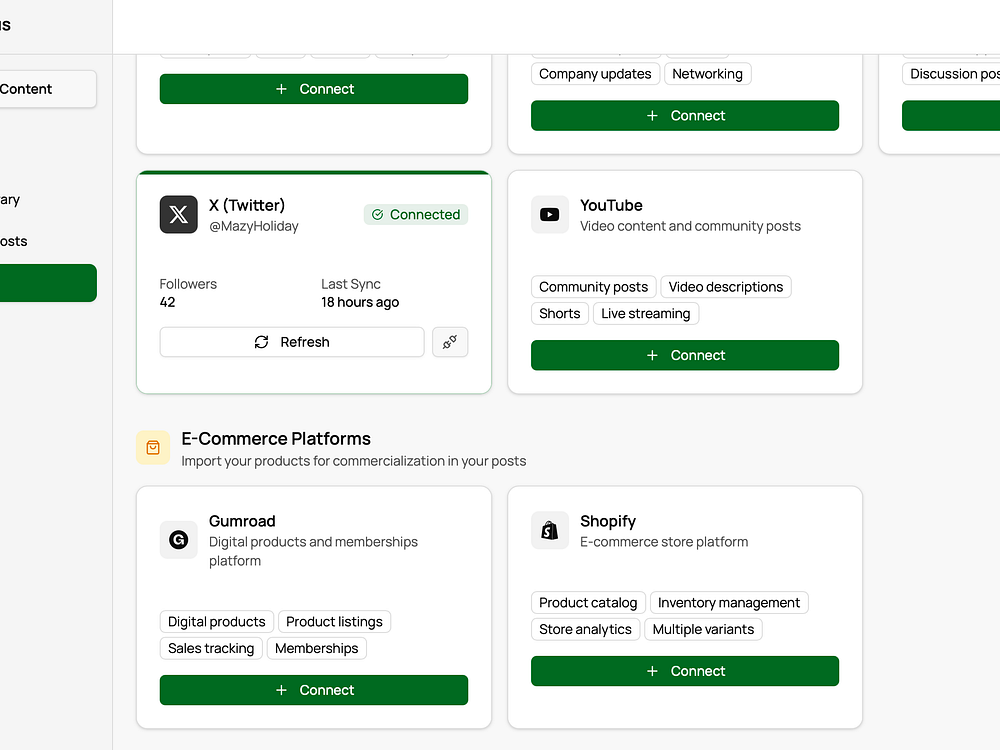

Tonimus automates social media growth for creators by generating, posting, and engaging in their brand voice while reporting revenue and personalized insights. Instead of guessing, creators see which platform earns money, how authentic their audience is, and insights across their genre, all based on real data. Tonimus tells creators not only how many followers they have but also what those followers are worth and what to do next. It shows exactly which content drives revenue and automates creating more of it.

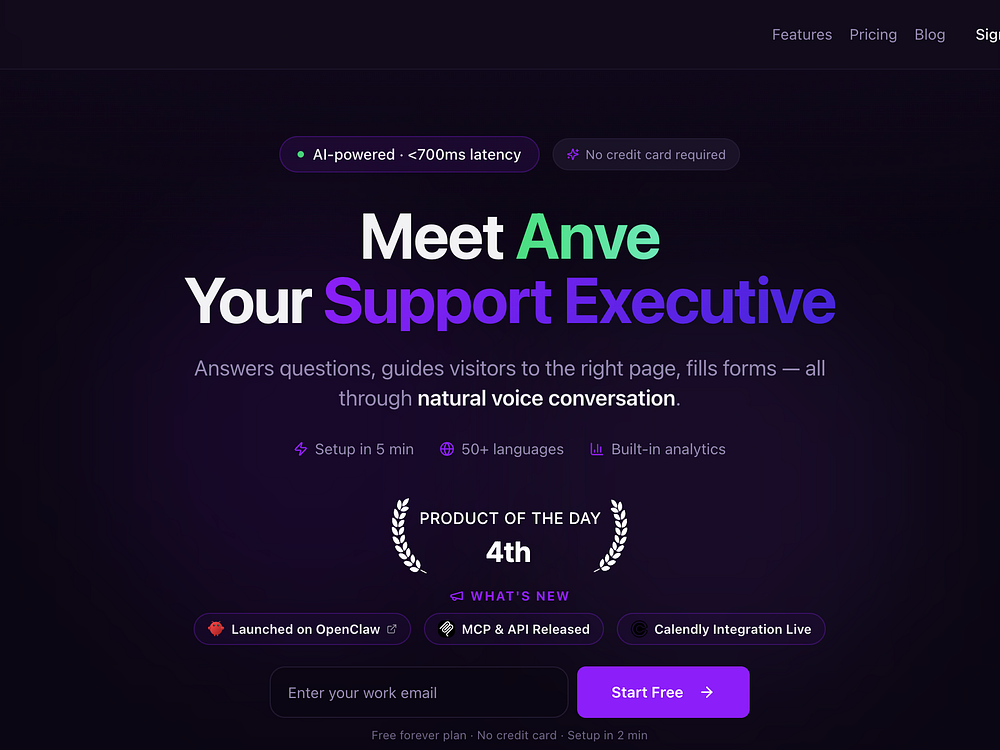

AnveVoice brings voice-first conversations to your website so visitors can speak naturally and get things done. It listens, understands intent, and acts on the page by scrolling, navigating, filling forms, and booking meetings while remembering preferences across sessions.

Embed a single script to add it to Shopify, WordPress, Webflow, Wix, Squarespace, React, or any site. A dashboard tracks sessions, conversions, and usage in real time so you can monitor performance and scale with transparent, token-based pricing.

YouTube used its NewFront presentation to unveil a significant upgrade to its Creator Partnerships platform, adding Gemini-powered creator matching, stronger measurement tools, and new ways to run creator content as paid ads.

Why we care. Influencer marketing has become a core part of many brands’ strategies, but finding the right creators at scale and proving ROI remain its two biggest friction points. The updated platform tackles both.

Gemini-powered matching cuts through the noise of three million creators, while the ability to run creator content as paid Shorts and in-stream ads makes performance measurable like any standard campaign, backed by a reported 30% conversion lift.

How it works. The updated platform uses Gemini to recommend creators from a pool of more than three million YouTube Partner Program members, filtered by campaign goals. Advertisers get more control over who they work with and better visibility into how those partnerships perform.

The big new feature. A revamped Creator Partnerships boost lets brands run creator-made content directly as Shorts and in-stream ads — formats YouTube says deliver an average 30% lift in conversions.

The big picture. The announcement builds on BrandConnect, YouTube’s existing creator monetization infrastructure, showing that the platform is doubling down on the creator economy as a growth lever for advertisers — not just a content strategy.

What’s next. Brands interested in the updated tools can watch the full NewFront presentation on YouTube for more details.

Reddit ranks as the most-cited domain in AI-generated answers, followed by YouTube and LinkedIn, based on a new analysis of 30 million sources by Peec AI, an AI search analytics tool.

The findings. Reddit was the most-cited source across ChatGPT, Google AI Mode, Gemini, Perplexity, and AI Overviews. YouTube, LinkedIn, Wikipedia, and Forbes also ranked in the top five. Review platforms like Yelp and G2 appeared often in recommendation queries.

The research showed which domains models rely on:

Why we care. To win in AI search, you need authority beyond your site. Brands that appear consistently across trusted third-party platforms are more likely to be cited.

Why these sources? AI systems prioritize perceived authority plus authentic user input:

About the data. The analysis covered 30 million sources across ChatGPT, Google AI Mode, Gemini, Perplexity, and AI Overviews, measuring domains directly cited in answers to isolate what shapes responses.

The study. Top domains cited by AI search: Analysis based on 30M sources


Veo 3.1 Lite is now available in paid preview through the Gemini API and for testing in Google AI Studio.


Fitbit adds cycle, mental wellbeing & nutrition tools in Public Preview. Now available for those without a Premium membership.

A newly published, unverified report claims Google’s Gemini AI is instructed to mirror user tone and validate emotions while grounding its responses in fact and reality.

Why we care. If accurate, AI-generated search responses may vary based on how a query is phrased — not just the information available.

What’s new. The report centers on the inherent tension in the system-level instructions guiding how Gemini responds. The report, published by Elie Berreby, head of SEO and AI search at Adorama, suggested that Gemini is instructed to:

What it means. The “overly supportive mandate frequently overrides the factual grounding,” Berreby wrote. So instead of acting as a neutral aggregator, AI answers may:

If public perception is negative, AI may amplify it. As the report suggests:

Query framing. The emotional framing of a query affects:

Google’s AI Overviews already show tone shifts, often aligning with query intent beyond keywords. This report offers a possible explanation.

Unverified. Google hasn’t confirmed the leak. As Berreby noted in his report: “I’ve decided to share only a fraction of the leaked internal system information with the general public. I’m not sharing any sensitive data. This isn’t a zero-day exploit. This is a tiny leak.”

The report. This Gemini Leak Means You Can’t Outrank a Feeling

Google is giving retailers more firepower to promote loyalty program benefits directly within product listings — expanding the program internationally and into its newest AI-powered shopping experiences.

What’s new. Merchants can now highlight member pricing and exclusive shipping options directly on listings. Loyalty annotations have also expanded to local inventory ads and regional Shopping ads — making it easier to promote in-store or geography-specific perks.

Why we care. The more you can personalize an offer for a shopper, the better. Embedding member perks into the moment of purchase discovery — rather than requiring a separate loyalty app or webpage — makes programs more visible and more likely to drive sign-ups.

By the numbers. According to Google, some retailers have reported up to a 20% lift in click-through rates when showing tailored offers to existing loyalty members.

The big picture. Loyalty benefits will now appear on Google’s AI-first surfaces, including AI Mode and Gemini, putting member offers in front of shoppers at an entirely new layer of the search experience.

Where it’s available. The expansion covers 14 countries — Australia, Brazil, Canada, France, Germany, India, Italy, Japan, Mexico, Netherlands, South Korea, Spain, the UK, and the US.

How to get started. Merchants activate the loyalty add-on in Merchant Center, configure member tiers, and set up pricing and shipping attributes. Connecting Customer Match lists in Google Ads is required to display strikethrough pricing and shipping perks to known members.

Don’t miss. US merchants can apply to join a pilot that uses Customer Match as a relationship data source for free listings — potentially expanding loyalty reach without additional ad spend.

Gary Illyes from Google shared some more details on Googlebot, Google’s crawling ecosystem, fetching and how it processes bytes.

The article is named Inside Googlebot: demystifying crawling, fetching, and the bytes we process.

Googlebot. Google does not run one singular crawler; it has many crawlers for many purposes. Referring to Googlebot as a single crawler might not be accurate anymore. Google has documented many of its crawlers and user agents.

Limits. Recently, Google spoke about its crawling limits. Now, Gary Illyes dug into it more. He said:

Then what happens when Google crawls?

How Google renders these bytes. Once the crawler has fetched these bytes, it passes them to WRS, the web rendering service. “The WRS processes JavaScript and executes client-side code similar to a modern browser to understand the final visual and textual state of the page. Rendering pulls in and executes JavaScript and CSS files, and processes XHR requests to better understand the page’s textual content and structure (it doesn’t request images or videos). For each requested resource, the 2MB limit also applies,” Google explained.
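A quick way to reason about the per-resource limit is to measure where key markup sits in the raw bytes of a page. The Python sketch below is illustrative only and is not Google's tooling. It assumes that "2MB" means 2 * 1024 * 1024 bytes and that content past that offset may not be processed. The function names and the marker list are hypothetical.

```python
# Assumption: the 2MB limit is 2 * 1024 * 1024 bytes of the fetched resource.
LIMIT_BYTES = 2 * 1024 * 1024

# Hypothetical markers to look for; adjust them for your own pages.
DEFAULT_MARKERS = ("<title", 'rel="canonical"', "application/ld+json")


def exceeds_limit(html: str, limit: int = LIMIT_BYTES) -> bool:
    """Return True if the UTF-8 encoded document is larger than the limit."""
    return len(html.encode("utf-8")) > limit


def marker_offsets(html: str, markers=DEFAULT_MARKERS, limit: int = LIMIT_BYTES):
    """Map each marker to (byte_offset or None, fits_within_limit)."""
    # Lowercasing bytes only changes ASCII letters, so offsets are preserved.
    lowered = html.encode("utf-8").lower()
    report = {}
    for marker in markers:
        needle = marker.lower().encode("utf-8")
        offset = lowered.find(needle)
        if offset == -1:
            report[marker] = (None, False)
        else:
            # The whole marker must end at or before the cutoff to count.
            report[marker] = (offset, offset + len(needle) <= limit)
    return report


if __name__ == "__main__":
    page = "<html><head><title>Example</title></head><body></body></html>"
    print("Over limit:", exceeds_limit(page))
    for marker, (offset, ok) in marker_offsets(page).items():
        print(f"{marker!r}: offset={offset}, within_limit={ok}")
```

Running it against saved HTML from a large page shows whether any marker first appears past the assumed cutoff. This is only a rough proxy, since it measures the raw HTML and not the rendered result.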

Best practices. Google listed these best practices:

Place critical elements, such as <title> elements, <link> elements, canonicals, and essential structured data, higher up in the HTML document. This ensures they are unlikely to be found below the cutoff.

Podcast. Google also had a podcast on the topic.

PDFsam Basic is a free, open-source tool for splitting, merging, and organizing PDFs. Version 6.0 adds three compression modes, better support for PDF 2.0 and UTF-8 text, stronger handling for malformed files, and more quality-of-life improvements.

Manuscript is two things. For publishing houses, it's a tool that streamlines the entire editorial process and makes it 10 times more efficient. It uses AI ethically, handling the tedious parts of editing while keeping the artful, human side of publishing exactly where it belongs: with humans.

For authors, Manuscript is a full workspace that gives you a complete toolbox but leaves the writing entirely to you. Think of it as a Scrivener alternative built for the 21st century—one that will never write for you.