For the past several years, marketing strategy has reorganized itself around a simple premise. Third-party data is fading. Privacy expectations are rising. The solution, we are told, is first-party data.

Collect more of it. Centralize it. Build the customer view around it.

In many ways, the shift was necessary. Direct relationships with customers are more durable than rented audiences. Consent and transparency matter. Organizations that invested early in their own data ecosystems are better positioned today than those that relied entirely on external signals.

But the industry’s confidence in first-party data has grown so strong that it now obscures a more complicated reality.

Owning customer data does not automatically translate into understanding customers.

Most marketing leaders have sensed this tension already. Despite increasingly sophisticated technology stacks, many organizations still struggle with familiar questions. Which records represent active individuals? Which identities are stale or misattributed? How much of the customer view reflects current behavior versus historical assumptions?

These are not philosophical concerns. They surface in everyday operational decisions. Campaigns that reach fewer real customers than expected. Personalization efforts that plateau. Measurement models that appear precise but produce inconsistent outcomes.

The problem is not the absence of data. If anything, the opposite is true.

The problem is the assumption that the data sitting inside our systems still reflects reality.

One of the quiet characteristics of customer data is how quickly it shifts from present tense to past tense.

Most organizations gather identity information at moments of interaction. Account creation, purchases, subscriptions, service requests. These events create durable records that enter CRM systems, marketing platforms and data warehouses.

From that point forward, the records largely persist as they were captured.

What changes is the world around them.

Consumers rotate devices. Email addresses evolve from primary to secondary. People move, change jobs, create new accounts, abandon others. Behavioral patterns shift with new platforms, new habits, and new privacy controls.

The record still exists, but the certainty surrounding the identity begins to loosen.

Marketing teams encounter this reality in subtle ways. Lists that appear healthy but deliver diminishing engagement. Customer profiles that fragment across systems. Identity graphs that require constant reconciliation as signals drift out of alignment.

None of this means first-party data is wrong. It simply means it ages.

The moment of collection is precise. The months and years that follow are less so.

The idea of a unified customer profile has become foundational to modern marketing infrastructure. Customer data platforms, identity graphs and advanced analytics environments all attempt to bring scattered signals together into a coherent picture.

When the signals align, the results can be powerful.

But the effectiveness of these systems depends heavily on the integrity of the identifiers entering them. Email addresses, login credentials, device associations and other identity anchors serve as the connective tissue between records.

When those anchors drift or degrade, the unified profile begins to lose clarity.

This is not a failure of the technology itself. Most identity platforms perform exactly as designed. They connect the signals available to them.

The challenge is that many of those signals were captured months or years earlier, during moments when the system had limited visibility into the broader identity context surrounding the individual.

As the digital environment evolves, the original record becomes one reference point among many.

Marketing leaders recognize this gap when their systems produce technically accurate profiles that still fail to explain current customer behavior. The database reflects what was known. The customer reflects what is happening now.

Closing that gap requires something more dynamic than stored attributes alone.

In recent years, some organizations have begun looking beyond the traditional boundaries of customer records and focusing more closely on signals that indicate whether an identity is still active within the broader digital ecosystem.

Activity signals provide a different kind of intelligence.

Instead of asking what information was collected about a customer in the past, they ask whether the identity attached to that information continues to exhibit real-world behavior today.

These questions are becoming increasingly important for teams responsible for both growth and risk management.

For marketing, activity signals help clarify which audiences remain reachable and which identities have quietly gone dormant. For fraud teams, they help differentiate legitimate consumers from synthetic identities that appear valid on the surface but lack authentic behavioral patterns.

Both disciplines are ultimately trying to answer the same question.

Does this identity correspond to a real person who is active in the digital world right now?

Stored data alone rarely answers that question with confidence.

Among the many identifiers circulating through the digital ecosystem, one has proven particularly resilient over time.

Email.

For decades it has served as both a communication channel and a persistent identity anchor. It appears in authentication systems, commerce transactions, subscriptions, customer service interactions and countless other digital touchpoints.

That ubiquity produces a secondary effect. Email addresses generate a continuous stream of activity signals that reflect how identities move through the online world.

When those signals are analyzed across large networks, they reveal patterns that extend far beyond a single company’s customer database.

They can indicate whether an identity is actively engaged in digital life or has fallen silent. They can highlight inconsistencies that suggest risk. They can surface connections that help reconcile fragmented customer views.

In other words, they transform a simple identifier into a dynamic indicator of identity health.

Organizations that understand this dynamic tend to treat email differently. It becomes less of a campaign endpoint and more of a reference point for understanding identity across channels.

Over the past decade, marketing technology has made extraordinary progress in storing and organizing customer data. Few organizations today lack the infrastructure to capture and analyze enormous volumes of information.

The next frontier is not accumulation. It is validation.

Knowing a customer increasingly depends on the ability to verify that the identities inside a database still correspond to real individuals with ongoing digital activity.

This shift changes how teams think about data quality.

Instead of focusing solely on completeness, forward-looking organizations pay closer attention to vitality. Which identities remain active. Which have quietly faded. Which exhibit patterns that suggest fraud or synthetic creation.
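As a rough illustration of what tracking vitality might look like in practice, the sketch below labels each stored identity by how recently any activity was observed. The record fields, the 90-day and 365-day thresholds, the label names, and the sample records are all assumptions made for this example, not an industry standard or any vendor's method.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class IdentityRecord:
    """Hypothetical customer record; field names are illustrative only."""
    email: str
    collected_on: date                 # when the record was captured
    last_activity_on: Optional[date]   # most recent observed activity signal, if any


def vitality(record: IdentityRecord, today: date,
             active_days: int = 90, fading_days: int = 365) -> str:
    """Label a record by the age of its latest activity signal.

    The thresholds are assumptions and would need tuning per business.
    """
    if record.last_activity_on is None:
        return "unverified"            # stored, but never seen active
    age = (today - record.last_activity_on).days
    if age <= active_days:
        return "active"
    if age <= fading_days:
        return "fading"
    return "dormant"


# Made-up sample data for demonstration.
records = [
    IdentityRecord("a@example.com", date(2022, 1, 10), date(2026, 3, 1)),
    IdentityRecord("b@example.com", date(2021, 6, 5), date(2025, 8, 20)),
    IdentityRecord("c@example.com", date(2020, 3, 2), date(2023, 1, 15)),
    IdentityRecord("d@example.com", date(2024, 9, 9), None),
]
today = date(2026, 3, 25)
labels = {r.email: vitality(r, today) for r in records}
```

All four records are equally complete, yet only one would count as active under these assumed thresholds. That is the difference between measuring completeness and measuring vitality.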

These distinctions influence everything from campaign reach to attribution accuracy to risk exposure.

When identity signals are strong, the rest of the marketing ecosystem performs more reliably. Personalization becomes more relevant. Measurement reflects real outcomes. Customer experiences align more closely with actual behavior.

When identity signals weaken, even the most advanced tools begin operating on uncertain ground.

The industry’s embrace of first-party data was an important correction after years of dependence on opaque third-party sources.

But ownership alone does not guarantee clarity.

Customer records capture moments in time. The people behind them continue to evolve.

For organizations that want to truly understand their customers, the challenge is no longer simply collecting data. It is maintaining an accurate connection between stored identities and real-world activity.

That requires looking beyond the database itself and paying closer attention to the signals that reveal whether an identity remains alive in the digital ecosystem.

Companies that make that shift discover something important.

The most valuable customer data is not the information they collect once.

It is the intelligence that helps them keep that data connected to real people over time.

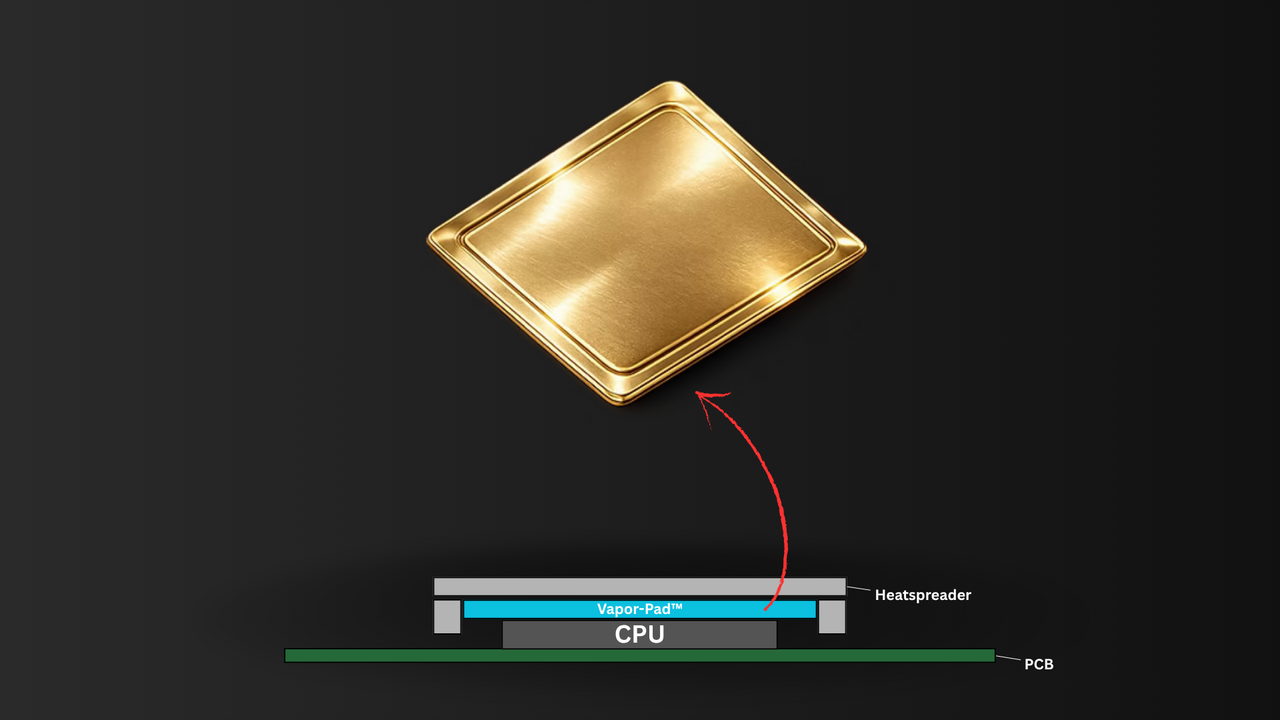

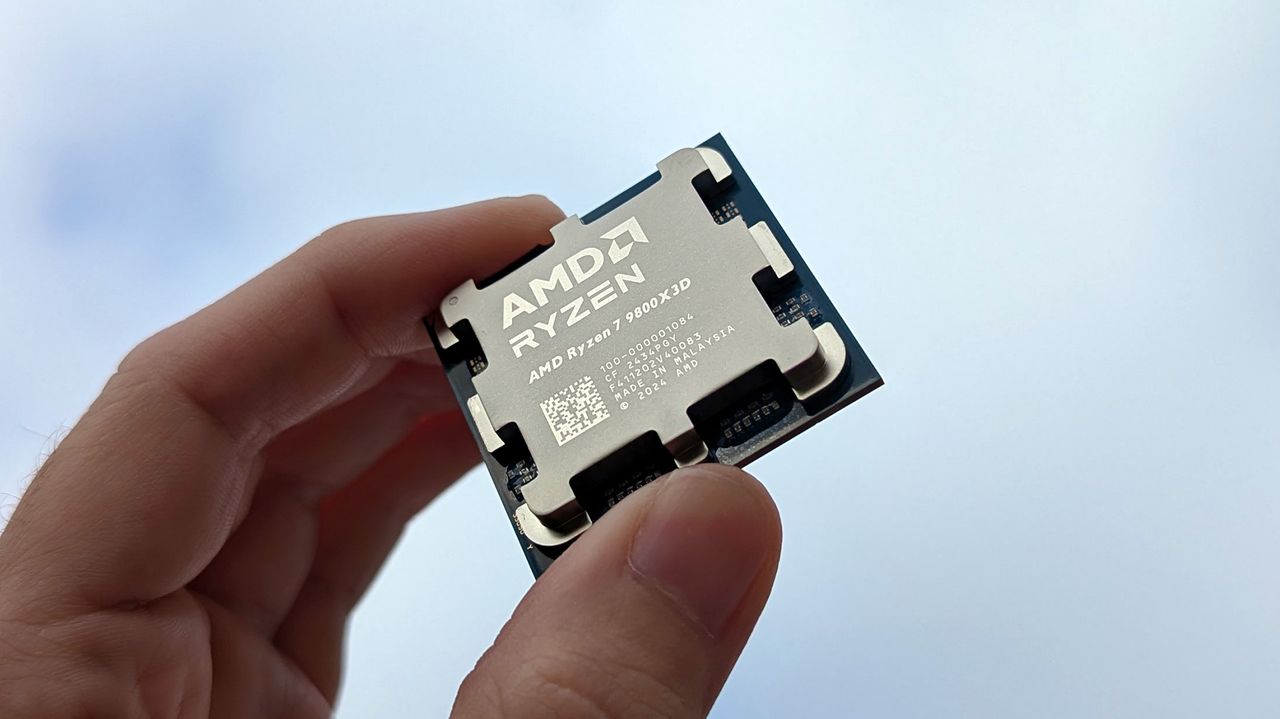

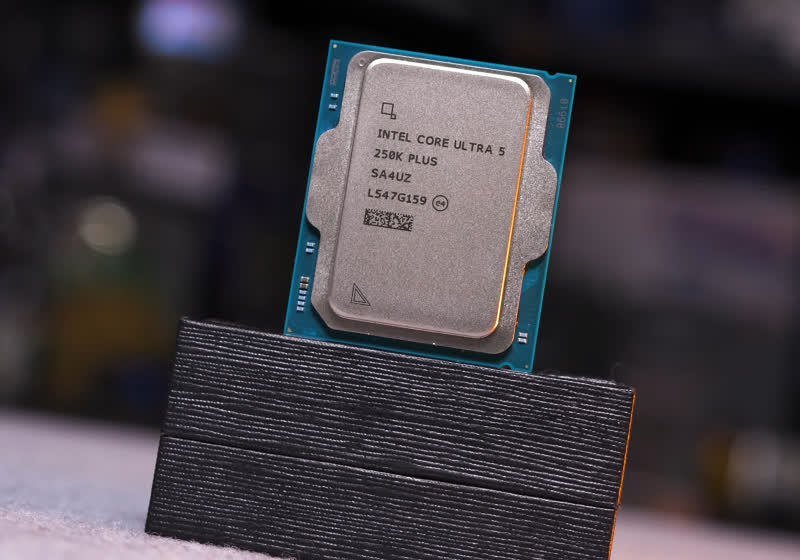

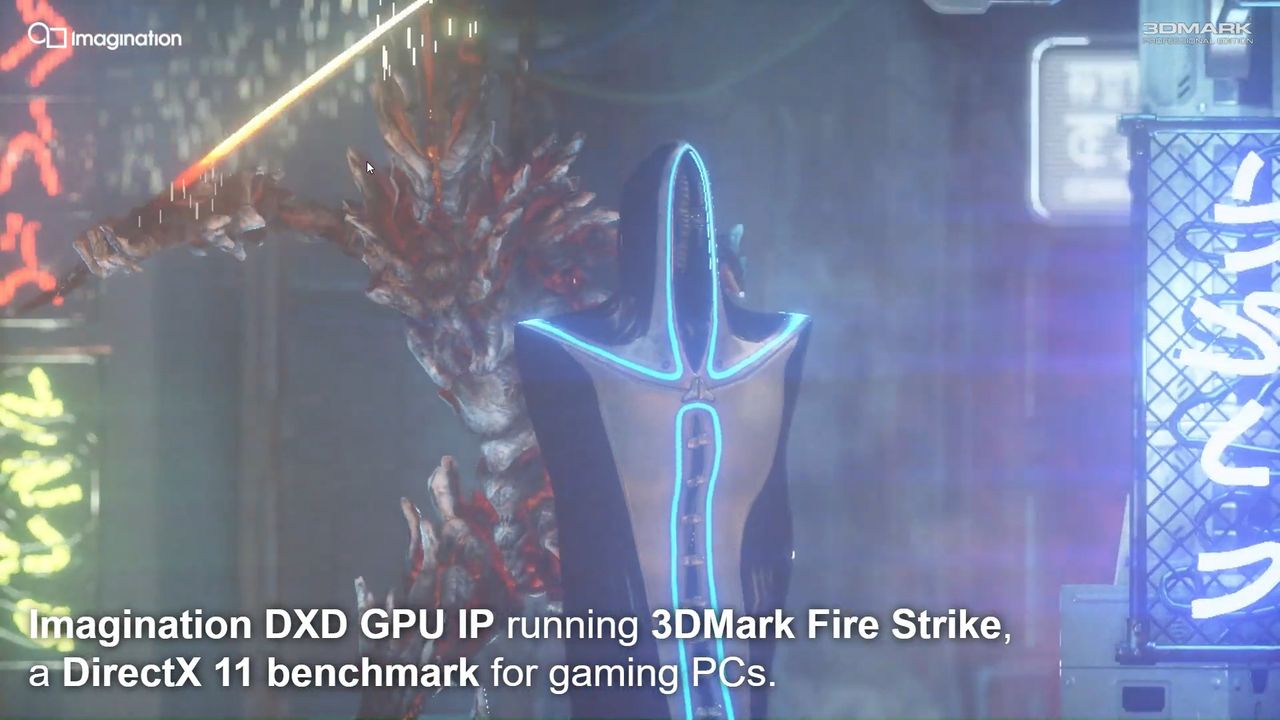

Primate Labs call Geekbench results with Intel’s IBOT tool “invalid”

Primate Labs, the company behind Geekbench, the popular cross-platform benchmarking tool, has responded to the release of Intel’s Core Ultra 200S PLUS series CPUs (see our review here). The company has stated that all Geekbench 6 results using Intel’s new CPU “may be invalid” due […]

The post Geekbench declares all Intel Core Ultra PLUS CPU benchmarks potentially “invalid” appeared first on OC3D.

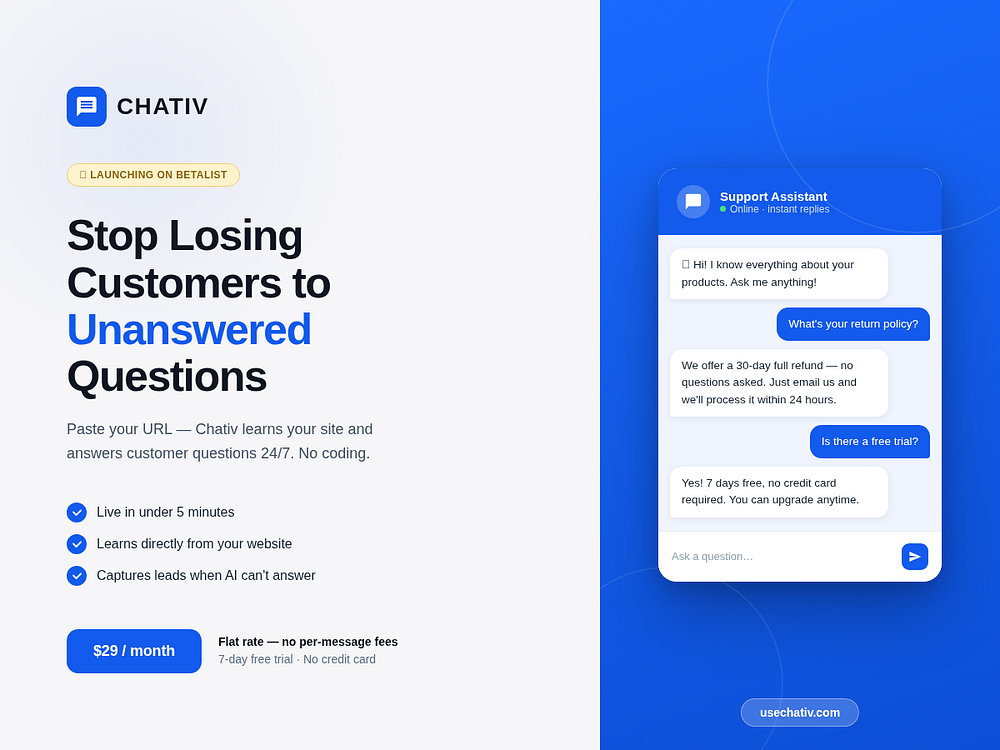

Prowl automates competitor tracking for pricing, website changes, hiring, news, and social channels. It delivers clear weekly reports explaining what changed, why it matters, and how to respond, plus real-time email or Slack alerts for critical updates. Use dashboards for trend analysis, side-by-side comparisons, and sales battlecards. Get started free for two competitors with no setup required.

QR Dex lets you create, brand, and manage dynamic QR codes while tracking every scan with real-time analytics. You can customize codes with your logo and colors, choose from URL, Email, Phone, SMS, WhatsApp, and Wi-Fi types, and update destinations anytime without reprinting.

Collaborate with your team using folders and roles, view campaign performance across locations, and export reports. The platform secures data in transit and offers SSO for teams that need centralized control.

VaultIt helps parents preserve their children's artwork, photos, and quotes in a secure, organized space. Capture memories quickly, tag by child, date, or theme, and find milestones fast without paper clutter. Choose who sees what, keep everything private, and upgrade for unlimited memories, advanced tags, custom timelines, and HD media. Build a digital time capsule today and later turn it into beautiful printed albums.

Spawn vision-enabled AI agents autonomously browsing the web

Help AI agents recommend you more often to the right people

Create specialized AI agents for real tasks and workflows

Generate design images and 3D models for product design

Stop BS in real-time with AI that fact-checks as you listen

Repurpose social media posts with unique content per format

A unified foundation model that thinks in pixels

Publish your markdown as a beautiful website – in seconds.

Set a budget and get alerted when flights get cheap

Pulls in changes from your tools and generates release notes

AI workspaces for building and running apps on Kubernetes

Where AI agents work at a schedule in the cloud

Create 3D, apps, and websites with parallel agents

AI-native global banking on stablecoins for emerging markets

Teach your repo how to run itself

Your tasks are the interface

Fully autonomous data analysis agent for daily insights

Turns screen recording into structured, AI-generated tasks

Deploy and Host AI Agents for $1/month

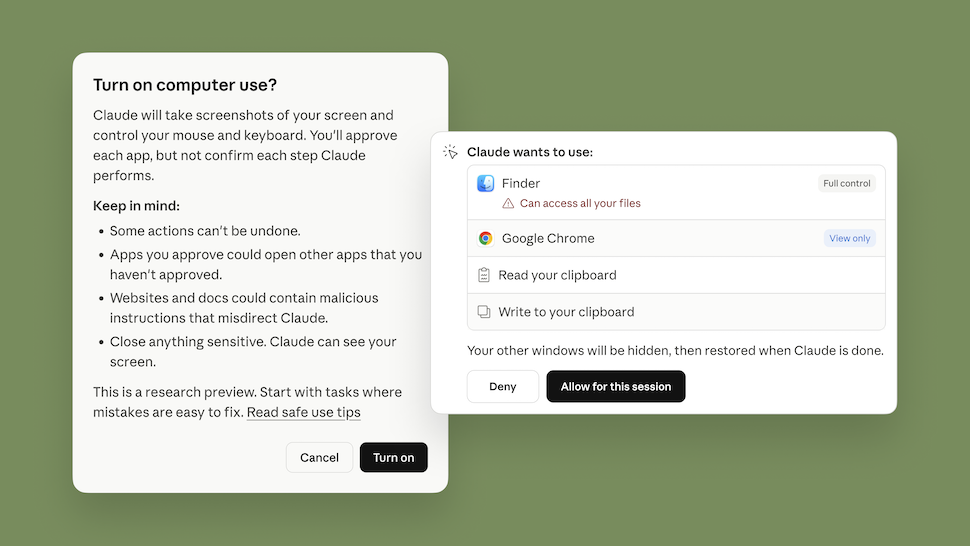

Let Claude make permission decisions on your behalf

AI that turns traffic into more revenue while you sleep

Agentic pentesting, now inside Lovable

New LLM compression algorithm by Google

AI teams that run your work

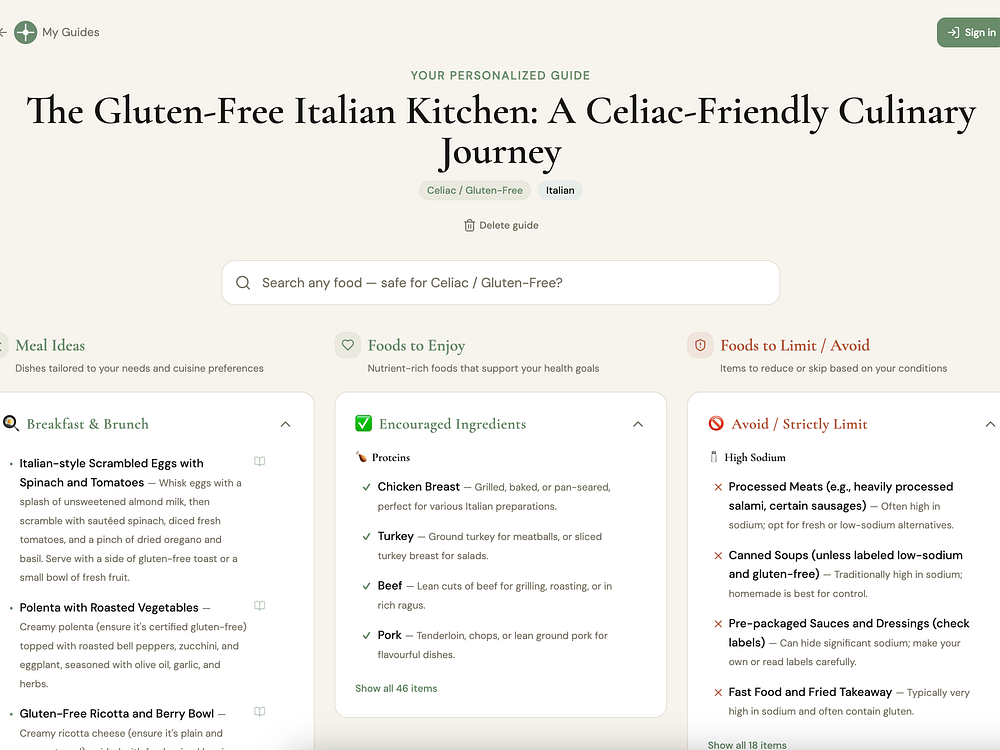

Most nutrition apps start with a calorie target and work backward. NutritionGuide starts with the food you love — your cuisine preferences, health condition, and lifestyle — and builds a 7-day guide from there. There's no calorie counting or macro tracking. Balance is shown as food groups, not numbers. Every meal is swappable, and your guide regenerates every week.

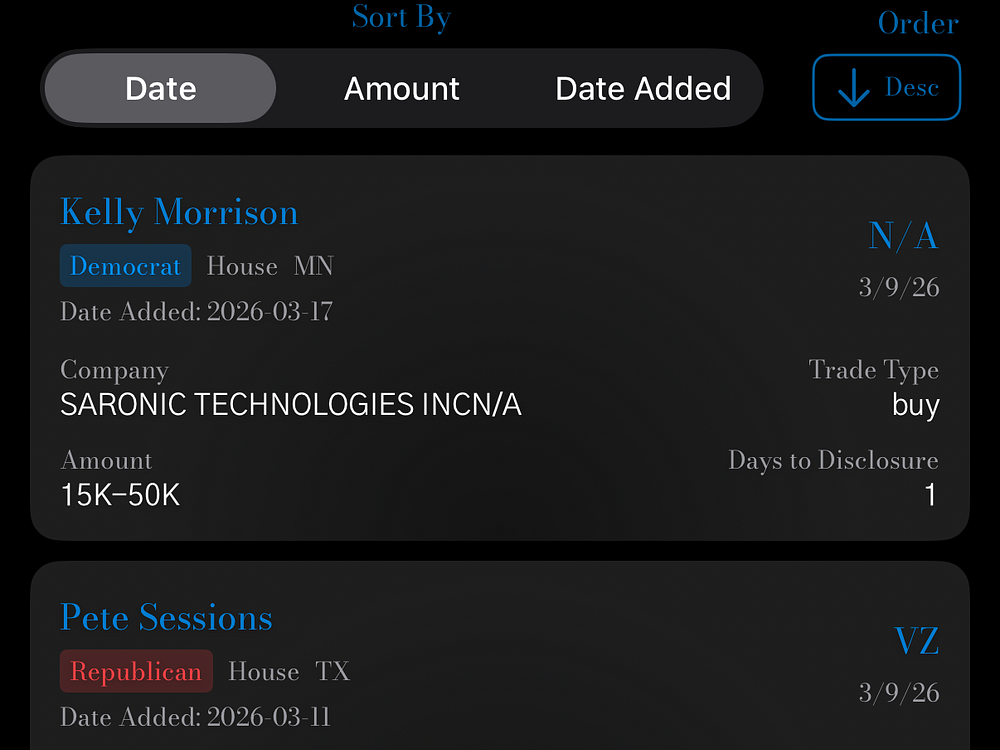

OtterQuant delivers live market intelligence with AI-powered analysis and interactive data. You can track custom portfolios, generate instant financial reports with OtterBot, and chat to screen stocks using natural language. Explore a congressional trade tracker, daily Reddit sentiment, and full earnings call transcripts. View fast intraday charts, analyst targets, calendars, and news for thousands of US tickers. Use free core tools or upgrade for faster updates and higher AI limits.

ManyLens lets you type a real-life dilemma and view structured perspectives side by side from philosophy, psychology, religion, and other traditions. It keeps each lens distinct, highlights common ground, and helps you reflect by saving insights over time. Use it to compare reasoning, spot convergences, and make decisions with context rather than one blended answer.

Reward your brain, feed your Dactyl, get stuff done! Taskadactyl is a gamified task app built for ADHD brains bored by other productivity tools. Your tasks don't get to win anymore. Your Dactyl eats first. Tasks become quests, completions trigger real rewards, with over 50 badges and game themes. Something unlocks at 3 referrals, with clues in the app.

Built by an ADHD founder who got tired of being eaten alive and decided to build the predator instead.

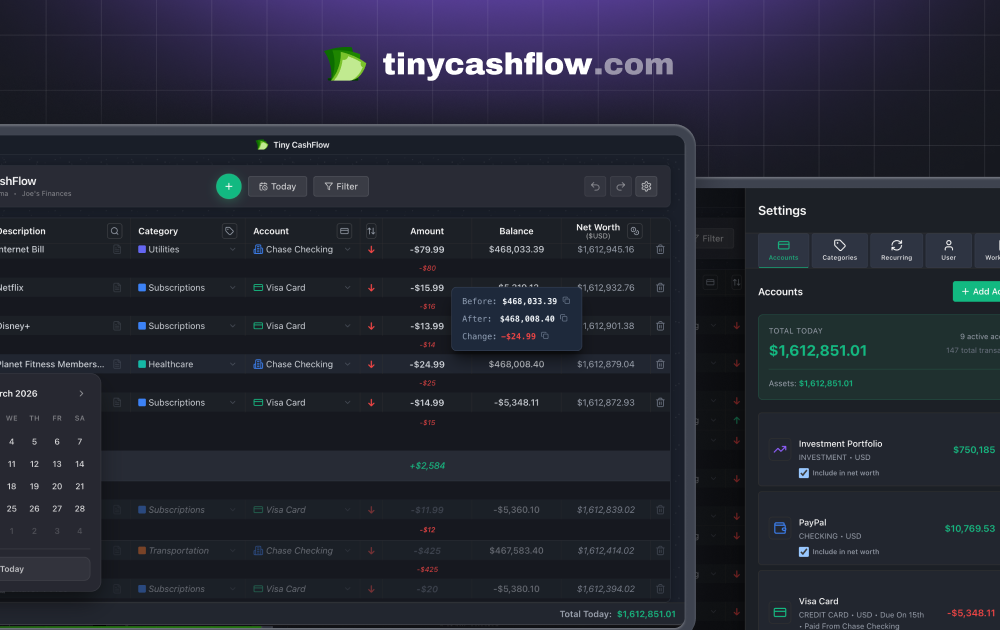

TinyCashFlow is a manual cashflow tracker with an infinite timeline. Instead of just showing your past spending, it projects forward — scroll to any future date and see your exact balance, accounting for all your recurring transactions. Built around a spreadsheet-style interface, everything is on one screen. Edit inline, filter on the fly, and quick-sum any selection. It supports multiple currencies, crypto, and shows a running net worth column across all your accounts. No bank connections or sign-up are required. The free tier is genuinely useful, while premium adds cloud sync, mobile, and multi-sheet support. It works on Mac, Windows, iOS, and Android, and is fully offline first.

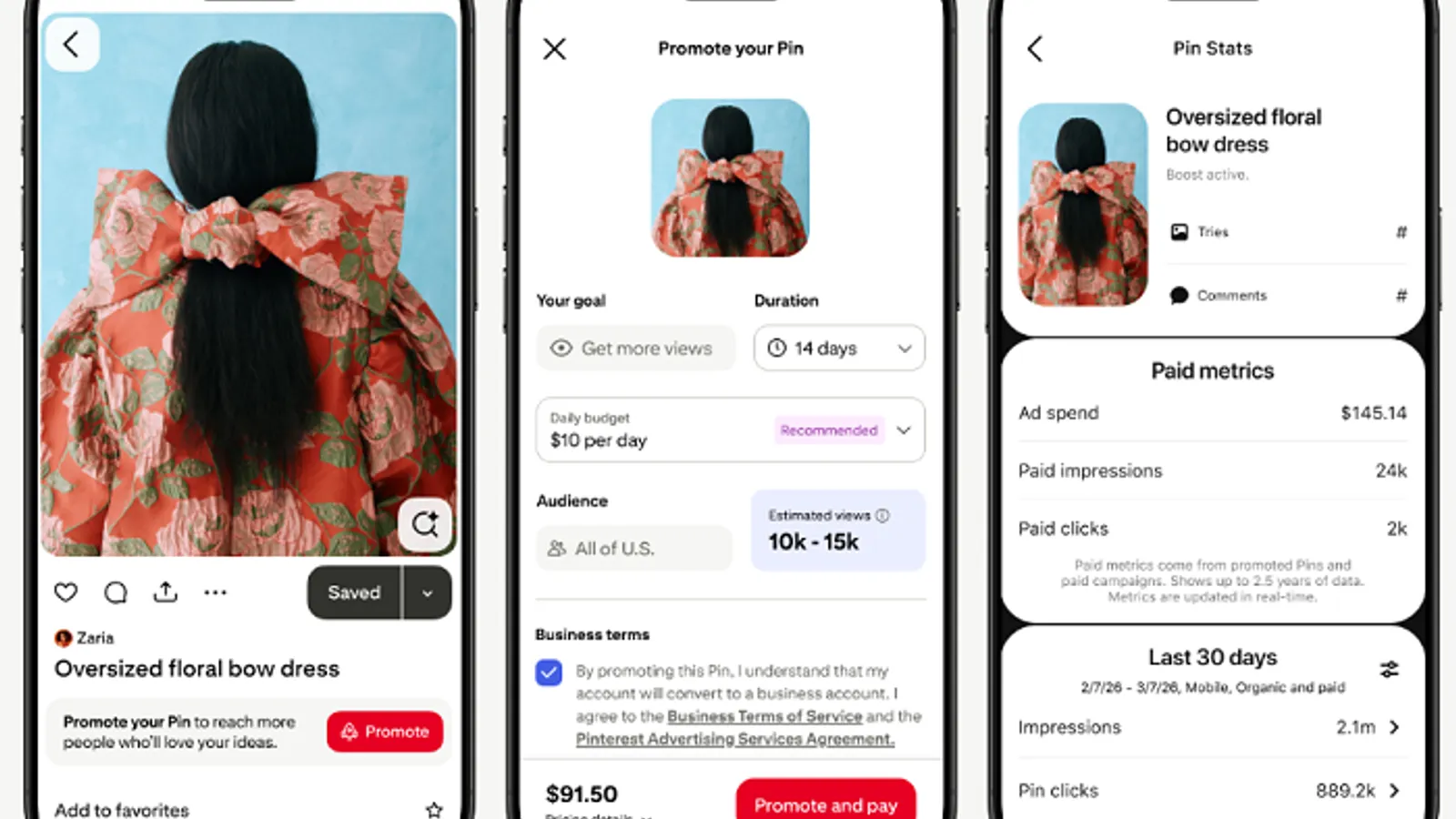

Meta announced a range of new in-app shopping updates at ShopTalk 2026.

Augmented reality developers will be able to create their own effects and integrate those clips into their Lenses using a closed-prompt approach.

The company said the number amounts to about 3.8 million Snaps per minute, although the app’s overall momentum appears to be stalling.

Advertisers will be able to include shoppable tiles and promotional overlays, which can help them reach the platform’s growing community of high-intent shoppers.

The app introduced Total Snap Takeovers and is developing a Snap-specific promotional option in an effort to win more marketing dollars.

The updated premium placement promotional opportunities include Logo Takeover, TopReach and an expanded Pulse suite.

The new option will offer creators and brands a flexible budget option to showcase content and reach more of the platform’s 619 million active users.

New elements are designed to improve ad performance and engagement tracking, as well as assist in campaign setup.

The platform is merging creator and advertising elements into a single space to facilitate collaboration opportunities and streamline affiliate marketing.

The much-requested feature will let creators edit the order of their images and videos after publishing.

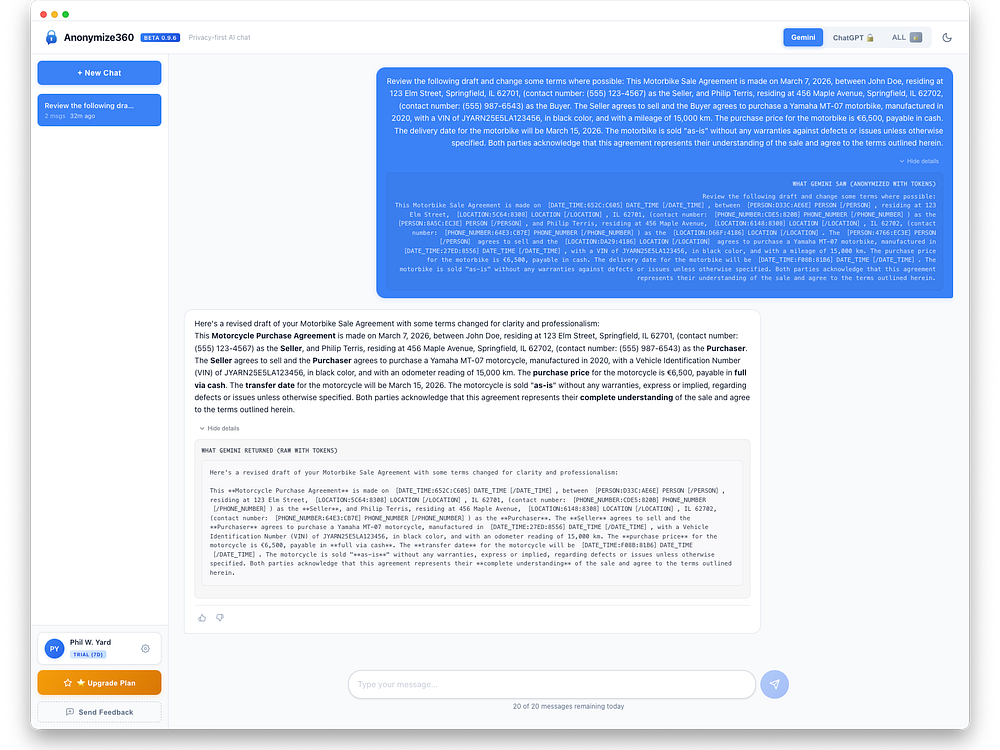

Anonymize360 protects sensitive data in AI chats by rewriting it on your device before it leaves and restoring it on return. It detects PII like names, addresses, SSNs, and medical or financial details, replaces them with tokens, and encrypts the originals locally with AES-256. The system runs on-device with a zero-knowledge design and works seamlessly with AI models. Enterprises gain privacy-by-default workflows and compliance support, while individuals can download and start with a free trial.

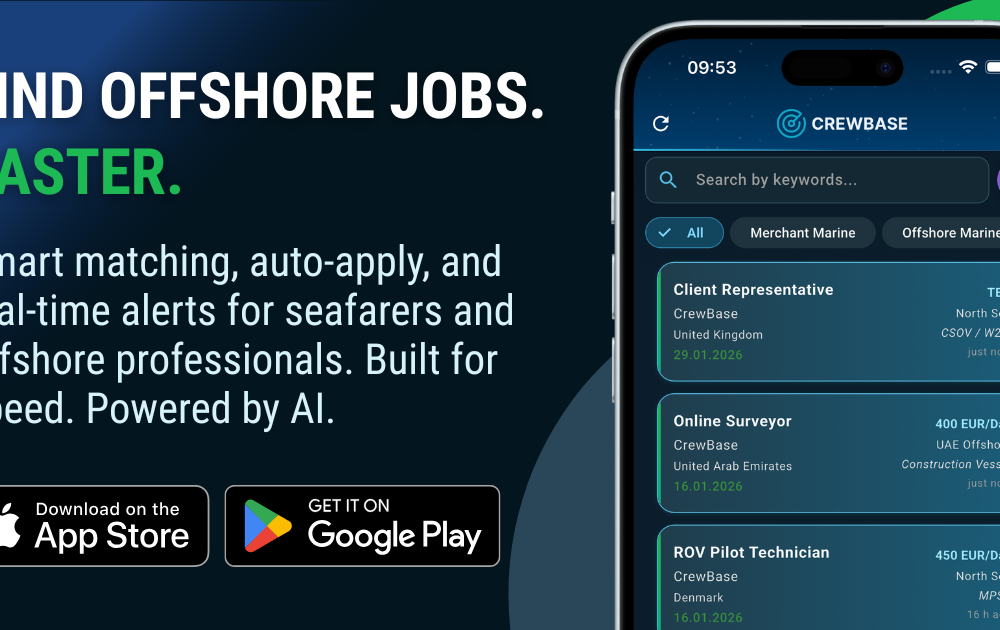

CrewBase connects seafarers and offshore professionals with verified maritime jobs using AI-powered matching, smart filters, and real-time alerts. It lets you search instantly, set auto-apply rules, and generate a polished CV, with seamless access on iOS, Android, and web. Employers post vacancies in minutes, search a growing verified talent pool, and manage applications with secure proxy email and desktop-optimized workflows, enabling fast, targeted maritime recruiting at scale.

Google updated its Discussion Forum and Q&A Page structured data docs with new properties, including a way to label AI- and machine-generated content.

The post Google Adds AI & Bot Labels To Forum, Q&A Structured Data appeared first on Search Engine Journal.

Google started rolling out the March 2026 spam update. The update applies globally and to all languages, with rollout taking a few days.

The post Google Begins Rolling Out The March 2026 Spam Update appeared first on Search Engine Journal.

Google released its March 2026 spam update today at 3:20 p.m. It’s the second announced Google algorithm update of 2026, following the February 2026 Discover core update.

Timing. This update may only “take a few days to complete,” Google said. On LinkedIn, Google added:

Why we care. This is the second announced Google algorithm update of 2026. It’s unclear what spam this update targets, but if you see ranking or traffic changes in the next few days, it could be due to it.

More on spam update. Google’s documentation says:

“While Google’s automated systems to detect search spam are constantly operating, we occasionally make notable improvements to how they work. When we do, we refer to this as a spam update and share when they happen on our list of Google Search ranking updates.

For example, SpamBrain is our AI-based spam-prevention system. From time-to-time, we improve that system to make it better at spotting spam and to help ensure it catches new types of spam.

Sites that see a change after a spam update should review our spam policies to ensure they are complying with those. Sites that violate our policies may rank lower in results or not appear in results at all. Making changes may help a site improve if our automated systems learn over a period of months that the site complies with our spam policies.

In the case of a link spam update (an update that specifically deals with link spam), making changes might not generate an improvement. This is because when our systems remove the effects spammy links may have, any ranking benefit the links may have previously generated for your site is lost. Any potential ranking benefits generated by those links cannot be regained.”

UDN (machine translated): Yesterday, ASUS, in partnership with Qualcomm, held a press conference for its new Zenbook A16 laptop. During an interview, Liao Yi-hsiang, General Manager of ASUS United Technology Systems Business, revealed that ASUS has confirmed that PC prices in Taiwan will increase by 25% to 30% or more in the second quarter, with varying increases across different models.

English Grammar guides you to master tenses, conditionals, modal verbs, and more through interactive exercises with instant feedback. Choose multiple choice or fill-in-the-blank, see clear visual cues, and read detailed explanations for every answer. It covers A1 to C1 levels across 20 grammar categories, with hundreds of exercises and more in development. Practice anytime on any device to build confident, accurate English.

Reddit is rolling out new Dynamic Product Ad features, including a shoppable Collection Ads format and Shopify integration, the company announced today.

What’s new.

The numbers. Reddit DPA delivered an average 91% higher ROAS year over year in Q4 2025. Liquid I.V. reports DPA already accounts for 33% of its total platform revenue and outperforms its other conversion campaigns by 40%.

Why now. Reddit has seen a 40% year-over-year increase in shopping conversations. Also, 84% of shoppers say they feel more confident in purchases after researching products on Reddit.

Why we care. The new tools, especially the Shopify integration, lower the barrier to getting started with Dynamic Product Ads. Reddit might still be viewed by some as an undervalued paid media channel, but there’s an opportunity to get in before competition and costs rise.

Bottom line. Reddit is increasingly a serious performance channel for ecommerce, and these tools make it easier to get started. If you’re not yet running DPA on Reddit, the combination of undervalued inventory and improving ad formats makes this a good time to test.

Reddit’s announcement. Introducing More Ways to Tap into Shopping on Reddit

Linkeezy is a compliant workflow tool that brings your LinkedIn inbox, saved posts, and feeds into one organized workspace. Instead of jumping between tabs and losing track of conversations or content, you can manage messages in a clean, Gmail-style view, organize saved posts into a searchable library, and follow focused feeds built around the people and topics that matter most.

Linkeezy runs through a web app and Chrome extension that retrieves your messages and content without storing them. It is designed to align with LinkedIn's terms of service, with no profile scraping, automation, or AI-generated interactions, so you stay in control while keeping your workflow efficient and focused.

Google was just named #1 on Fast Company's 2026 World’s Most Innovative Companies list.

An overview of Google Quantum AI’s work on superconducting and neutral atom quantum computers.

AI search citations favor a small set of formats. Listicles, articles, and product pages drive over half of all mentions across major LLMs, according to new Wix Studio AI Search Lab research analyzing 75,000 AI answers and more than 1 million citations across ChatGPT, Google AI Mode, and Perplexity.

The findings. Listicles led at 21.9% of citations, followed by articles (16.7%) and product pages (13.7%). Together, these three formats made up 52% of all AI citations.

Why intent wins. Query intent — not industry or model — most strongly predicts which content gets cited. This pattern held across industries, from SaaS to health.

Why we care. The research suggests mapping content types to user goals rather than simply producing more content. Articles educate, listicles drive comparison, and product pages convert. Aligning content format with user intent could help you capture more AI citations and increase visibility.

Not all listicles perform equally. Third-party listicles accounted for 80.9% of citations in professional services, compared to 19.1% for self-promotional lists. That seems to indicate LLMs prefer neutral, editorial comparisons over brand-led rankings.

Model differences. All models favored listicles, but diverged after that.

Industry patterns. Content preferences shifted slightly by vertical:

The research. The content types most cited by LLMs

A quiet but important policy update is coming to Google Shopping ads next month, requiring some merchants to verify their accounts before running ads featuring political content.

What’s changing. From April 16, merchants running Shopping ads with certain political content in nine countries will need to verify their Google Ads account as an election advertiser. Google will also outright prohibit some political Shopping ads in India.

The countries affected. Argentina, Australia, Chile, Israel, Mexico, New Zealand, South Africa, the United Kingdom, and the United States.

Why we care. Shopping ads aren’t typically associated with political advertising — this update signals that Google is broadening its election integrity efforts beyond search and display into commerce formats. Merchants selling politically themed merchandise, campaign materials, or other related products in the affected countries need to act before the April 16 deadline.

What to do now.

The bottom line. This affects a narrow but specific set of merchants — but the consequences of missing the deadline could mean ads being disapproved or accounts being flagged. If you sell anything with a political angle in the listed countries, check your eligibility now.

MyDreamGirlfriend is an AI-powered dating platform where users create customized AI companions with interactive conversations, voice messaging, and roleplaying features. Optimized for both mobile and desktop, it offers a freemium subscription model. Users can exchange voice notes and photos, unlocking content and deeper interactions with gems. Start free and upgrade for unlimited messages, multiple companions, and extras. All conversations are end-to-end encrypted for complete privacy.

LYNARA is a browser-based platform for precise multi-layer system design. It visualizes complex software landscapes in 3D and lets you structure user interface, services, and data layers for clarity. Use fast keyboard shortcuts to select, copy, paste, and navigate across layers, all without installation or a credit card.

New Gemini features for Google TV include richer visual answers, deep dives, and sports briefs, making it easier to explore the topics you love.

Android Automotive OS is expanding as an open-source platform for core car functions, enabling new features and updates from manufacturers.

AI citations in ChatGPT are far more concentrated than citation distributions in traditional search. Roughly 30 domains capture 67% of citations within a topic.

The details. Citation visibility wasn’t evenly distributed. In product comparison topics, the top 10 domains accounted for 46% of citations; the top 30, 67%.
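For readers who want to run the same kind of concentration check on their own citation exports, here is a minimal sketch of the top-N share calculation. The function name, the input shape (a mapping of domain to citation count), and the sample numbers are assumptions for illustration and are not taken from the study.

```python
from collections import Counter
from typing import Mapping


def top_k_share(citations_by_domain: Mapping[str, int], k: int) -> float:
    """Return the fraction of all citations captured by the k most-cited domains."""
    total = sum(citations_by_domain.values())
    if total == 0:
        return 0.0
    # most_common(k) returns the k highest (domain, count) pairs.
    top = sum(count for _, count in Counter(citations_by_domain).most_common(k))
    return top / total


# Made-up counts for demonstration only.
counts = {"a.com": 50, "b.com": 30, "c.com": 10, "d.com": 6, "e.com": 4}
share_top2 = top_k_share(counts, 2)   # (50 + 30) / 100
```

A share that climbs quickly for small values of k points to the kind of concentration described above.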

What changed. Ranking No. 1 in Google still matters, but it’s not enough. Of pages ranking No. 1, 43.2% were cited by ChatGPT — 3.5x more often than pages beyond the top 20.

Why we care. Publishing the “best answer” for one keyword isn’t enough. ChatGPT rewards domains that cover a topic from multiple angles, not pages optimized for isolated terms. And discovery often happens outside the keyword universe you track.

The patterns. Longer pages generally earned more citations, with variation by vertical. The biggest lift appeared between 5,000 and 10,000 characters. Pages above 20,000 characters averaged 10.18 citations vs. 2.39 for pages under 500.

On-page behavior. ChatGPT cited heavily from the upper part of a page. The 10% to 20% section performed best across all industries.

About the data. Indig analyzed ~98,000 citation rows from ~1.2 million ChatGPT responses (Gauge), isolating seven verticals. The study used structural page parsing, positional mapping, and entity and sentiment analysis to identify which pages earned citations and where they came from.

The study. The science of how AI picks its sources

A new creative feature has been spotted inside Google Ads Performance Max campaigns — and it could change how advertisers without video budgets approach animated display advertising.

What was found. Nikki Kuhlman, vice president of search at JumpFly, Inc., spotted an option to generate animated video clips directly within PMax asset groups, using AI to enhance and animate a single source image.

How it works.

Early results from testing. A logo generated a spinning animation of the image element. A house with a sold sign produced a slow cinematic pan. Simple inputs, but the output quality appears usable for display advertising without any video production required.

Where the ads appear. Google hasn’t provided in-product documentation on placement, but early testing shows animated clips surfacing in Display ad previews when added to an asset group.

Why we care. Video assets continue to be a strong creative option on Paid Media — but producing video has always required time, budget, and resources many advertisers don’t have. This feature effectively removes that barrier — turning a single product photo or logo into animated display creative in seconds, at no additional production cost.

For advertisers who’ve been running PMax on static images alone, this could be a meaningful and easy win.

The bottom line. This feature is still unconfirmed by Google, but advertisers running PMax should check their asset groups now. If it’s available in your account, it’s worth testing — especially for campaigns that have been running on static images alone.

First seen. Kuhlman shared the discovery on LinkedIn.

AI tools and visibility have dominated the SEO conversation in the past two years. But while discussions focus on these new technologies, most of the biggest SEO risks in 2026 will come from somewhere else: within your own organization.

Fragmented data, unclear ownership, outdated KPIs, and weak collaboration can quietly destroy even the best strategies. As SEO expands beyond the website and into AI-driven discovery, the role of the SEO team is becoming broader, more influential, and, paradoxically, harder to define.

Here are some of the risks your team should start thinking about now.

Many SEO teams now rely on AI for everything, from generating briefs to analyzing data. That’s often necessary. You can’t spend hours creating a brief when AI can produce something usable in minutes. But that’s also where the risk starts.

AI can generate content quickly, but “acceptable” won’t differentiate you. You still need a clear point of view — what story you’re telling and what unique angle you bring. Without that, your content becomes generic, predictable, and indistinguishable from competitors using the same tools.

The issue is simple: if you ask similar tools similar questions, you’ll get similar answers. And your competitors have access to the same tools.

Some companies try to stand out by training models on proprietary data. In reality, few teams do this at scale. Most prioritize speed over quality.

There’s also risk in using AI for analysis without understanding the data behind it. AI is fast, but it can misinterpret or hallucinate results.

I’ve seen this firsthand. An AI tool hallucinated part of a calculation during an urgent analysis, making every insight that followed incorrect. It only acknowledged the mistake after it was explicitly pointed out.

More broadly, AI excels at identifying patterns. But in SEO, competitive advantage rarely comes from following patterns. The most effective strategies don’t just mirror what everyone else is doing. Sometimes the best opportunity isn’t the obvious one.

AI is reshaping how SEO work gets done, how impact is measured, and whether it can be measured at all.

Dig deeper: Why most SEO failures are organizational, not technical

For years, SEO professionals have worked with incomplete datasets. We’ve never had a full view of the user journey. That’s one reason organic impact has often been underestimated. In the past, though, we could still piece together a reasonably clear picture — from ranking to click to conversion.

Today, that picture is far more fragmented. AI tools have changed how people research and discover products. Users now start in AI assistants – asking questions, comparing options, and building shortlists before ever visiting a website. By the time they land on your page, part of the decision-making process is already done.

The problem is we have zero visibility into that journey. If a user discovers your brand through an AI-generated answer, adds you to a shortlist, then later searches for you directly, the signals that influenced that decision are invisible. We only see the final step.

Microsoft Bing has introduced basic reporting for AI searches, but it’s limited. We still can’t see the prompts behind specific page visibility.

At the same time, SEO teams are still expected to prove impact. Some companies are adding questions to lead forms to understand how users discovered them. In theory, this adds signal. In practice, it depends on accurate self-reporting. I know how I fill out forms, so I question how reliable that data really is. Still, it’s a start.

Fragmented data creates another risk: focusing on the wrong KPIs. Stakeholders still ask about traffic. No matter how often SEO teams explain that SEO’s role has changed, traffic remains a default measure of success. For years, organic growth meant more sessions, users, and visits. That mindset hasn’t fully shifted.

At the same time, stakeholders are drawn to newer metrics — AI visibility, citations, and mentions. These aren’t inherently wrong, but they need to be used carefully.

Most tools measure AI visibility using a predefined set of queries. That’s where risk creeps in. Teams can become too focused on improving visibility scores, even if it means optimizing for prompts that look good in reports rather than those that matter to the business.

For example, appearing for “What is XYZ software?” isn’t the same as showing up for “Which XYZ software is best?” The first may drive visibility, but the second is much closer to a purchase decision.

To avoid this, visibility metrics need to be tied to business outcomes — a real challenge given the fragmented data problem.

Tracking AI visibility also opens another rabbit hole: debates over which prompts to track, how many to include, and why. This can quickly overcomplicate measurement, especially if teams lose sight of the goal. The objective isn’t to track every phrasing, but to understand the intent behind it. Trying to capture every variation is impossible.
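One way to keep measurement anchored to intent rather than to individual phrasings is to report visibility per intent group. The sketch below is illustrative only: the prompts, the intent labels, and the appeared/not-appeared results are made-up data, and `visibility_by_intent` is a hypothetical helper, not a feature of any tracking tool.

```python
from collections import defaultdict

# Hypothetical tracked prompts: (prompt, intent label, did the brand appear?).
# Labels and results are invented for illustration.
TRACKED_PROMPTS = [
    ("What is XYZ software?", "informational", True),
    ("How does XYZ software work?", "informational", True),
    ("Which XYZ software is best?", "commercial", False),
    ("Best XYZ software for small teams", "commercial", True),
]


def visibility_by_intent(rows):
    """Return the share of prompts where the brand appeared, per intent group."""
    appeared_count = defaultdict(int)
    total_count = defaultdict(int)
    for _prompt, intent, appeared in rows:
        total_count[intent] += 1
        if appeared:
            appeared_count[intent] += 1
    return {
        intent: appeared_count[intent] / total_count[intent]
        for intent in total_count
    }


scores = visibility_by_intent(TRACKED_PROMPTS)
for intent, share in scores.items():
    print(f"{intent}: {share:.0%}")
```

With this sample data, a single blended score would read 75% (three of four prompts), which hides the fact that the brand appears for only half of the prompts closest to a purchase decision.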


SEO teams are expected to own AI visibility strategy much like they owned SEO strategy. But strategy is often treated as execution.

Even in the past, SEO was never fully independent. It relied on other teams — engineering to implement changes and content to create pages. The difference is that most of this work used to happen on the company’s own website.

That’s no longer true. Visibility in AI answers requires presence beyond your domain — Reddit threads, YouTube videos, and media mentions all play a role.

This significantly expands the scope of work. At the same time, many of these surfaces don’t have clear owners inside organizations. Even when they do, there’s a tendency to assume that if SEO owns the strategy, it should also own execution or at least be accountable for outcomes.

The opposite happens, too. If other teams own execution, they may take ownership of the entire strategy. In reality, neither model works well.

SEO teams can’t manage every platform that influences AI visibility. They don’t have the expertise to produce YouTube content or run PR campaigns. Their strength is knowing what works and helping optimize it. For example, advising on how a video should be structured to perform on YouTube.

Owning strategy also doesn’t mean deciding who owns execution. That’s a leadership responsibility. It requires visibility across teams and the authority to assign ownership. Otherwise, one team is left deciding how its peers should operate.

Even when companies recognize the importance of AI visibility, cross-team collaboration remains a challenge.

Roles and processes are often unclear. SEO teams may expect others to execute, while those teams assume it’s SEO’s responsibility. In other cases, teams don’t prioritize AI visibility because their KPIs focus elsewhere.

This is where leadership alignment becomes critical. If AI visibility is truly a strategic priority, it needs to be reflected in goals and KPIs across all relevant teams. When AI-related KPIs sit only with SEO, it creates an imbalance: one team is accountable for outcomes, while execution depends on many others.

Many teams are also unsure how to work with SEO. Some don’t involve SEO early enough. Others choose not to follow recommendations because they don’t agree with them.

SEO teams share responsibility here, too. They need to actively onboard other teams and clearly connect SEO efforts to broader business goals. It’s our job to show that lack of visibility means lost revenue.

I’ve seen cases where teams critical to AI visibility hadn’t even read the strategy document. In these situations, the issue isn’t one-sided. Teams need to understand what’s expected of them, and SEO needs to push for alignment and involve stakeholders early. Simply moving forward without that alignment doesn’t work.

SEO teams also don’t always explain the “why.” AI visibility can end up treated as a standalone SEO metric rather than a business driver. Even when there’s agreement on its importance, a lack of clear processes, shared goals, and training keeps collaboration inconsistent.


With rapid changes in search, SEO teams often spend more time on theory — reading, analyzing, building frameworks, and refining strategies — instead of making changes to the website.

That doesn’t mean teams should stop learning. Quite the opposite. But strategy without execution quickly loses value. In many organizations, SEO teams are expected to produce in-depth strategy documents meant to align teams and define priorities. In reality, many go unread outside the SEO team. They require significant effort but deliver little impact.

Part of the problem is that strategies are often too theoretical. They explain the why but miss the what. The value of a strategy isn’t the document, but the actions that follow. Other teams need to understand what to do and how to contribute.

AI is also accelerating how quickly search evolves. Waiting months to test ideas no longer works. A more practical approach is to understand the direction, implement changes, observe results, and iterate. Smaller experiments often lead to faster learning.

SEO has always been a consulting function. Success depends on collaboration with teams like engineering, content, and product. Today, that dynamic is more visible than ever. In many cases, SEO teams don’t execute directly. Their role is to enable others.

In mature organizations, this works well. Collaboration is strong, and credit is shared. SEO’s consulting role is recognized without forcing the team to own areas outside its expertise. In less mature environments, it can lead to SEO being undervalued or seen as unnecessary.

AI adds another layer. It can generate keyword ideas, outlines, and optimization suggestions, making SEO look deceptively simple, much like writing content. AI lowers the barrier to entry, but it doesn’t replace expertise. Without that expertise, teams produce work that’s technically correct but average.

It’s a familiar pattern: copy-pasting a Screaming Frog SEO Spider error list into a task doesn’t demonstrate real understanding. This creates a paradox. The more SEO becomes a company-wide capability, the more the SEO team risks becoming invisible.



SEO teams won’t fail in 2026 because of a lack of knowledge. They’ll fail if they can’t turn that knowledge into action, influence, and business impact.

The challenge is no longer just optimizing pages. It’s building processes, partnerships, and measurement models that reflect how visibility works today.

Success also depends on leadership support. Many of the biggest risks are structural — fragmented data, unclear ownership, weak collaboration, outdated KPIs, and the gap between strategy and execution.

AI visibility expands beyond the website and into the broader organization. That doesn’t make SEO less important, but it does make it harder to define, measure, and defend.

The companies that succeed will stop treating SEO as a traffic function and start treating it as a business capability that drives visibility, discovery, and growth.

Apple is preparing to introduce sponsored listings in Apple Maps, marking a significant expansion of its advertising business beyond the App Store.

How it will work. According to Bloomberg’s Mark Gurman, the system will function similarly to Google Maps — allowing retailers and brands to bid for ad slots against search queries. Sponsored businesses will appear in Maps search results, much like sponsored apps already appear in App Store searches.

The timeline. An announcement could come as early as this month, with ads beginning to appear inside Maps as early as this summer across iPhone, other Apple devices, and the web version.

Why Apple is doing this. Advertising is a growing and high-margin revenue stream for Apple’s services business. Maps — with its massive built-in user base across Apple devices — is a natural next step, particularly as location-based advertising continues to grow.

Why we care. Apple Maps has a massive built-in user base across iPhone and Apple devices, and users searching within Maps are expressing clear, high-intent signals — they’re actively looking for somewhere to go or something to buy. This opens up a brand new location-based advertising channel that previously didn’t exist on Apple’s platform, giving local businesses and retailers a way to reach those users at exactly the right moment.

Advertisers already running Google Maps or local search campaigns should pay close attention, as this could quickly become a significant complementary channel.

The privacy angle. True to Apple’s form, a user’s location and the ads they see and interact with in Maps are not associated with their Apple Account. Personal data stays on the user’s device, is not collected or stored by Apple, and is not shared with third parties.

How to access it. Businesses will be able to access a fully automated experience for creating ads through Apple Business in a few simple steps. Current Apple Ads advertisers and agencies will also have the option to book ads through their existing Apple Ads experience, which will offer additional customization options.

What you need to do now. When Apple Business becomes available in April, businesses will first need to claim their location on Apple Maps before ads become available this summer, so the time to get set up is now, not when the auction opens.

The bottom line. Apple Maps ads should open up a high-intent, location-based channel that hasn’t existed before on Apple’s platform. Advertisers running local or retail campaigns should claim their Maps listing now and start planning budgets for a summer launch. Early entrants in a new ad auction typically benefit from lower competition before the market matures.

Update 10:45 ET: Apple has officially confirmed that ads are coming to Apple Maps this summer, as part of a broader new platform called Apple Business launching April 14.

Microsoft added query-to-page mapping to its AI Performance report in Bing Webmaster Tools, letting you connect AI grounding queries directly to cited URLs.

Why we care. The original dashboard showed queries and pages separately, limiting optimization. Now you can tie specific AI-triggering queries to the exact cited pages, so you can prioritize updates based on real AI-driven demand — not guesses.

The details. The new Grounding Query–Page Mapping feature links two existing views in the AI Performance dashboard:

Catch up quick. Microsoft launched the AI Performance report in Bing Webmaster Tools in February as its first GEO-focused dashboard. It:

What they’re saying. Microsoft said the update responds to “strong positive customer feedback and numerous requests.”

The announcement. The addition of query-to-page mapping to Bing Webmaster Tools appeared in a Microsoft Advertising blog post: The AI Performance dashboard: Your view into where your brand appears across the AI web

The entity home is the single page that anchors how algorithms, bots, and people understand your brand. It’s usually your About page, and it does far more than most teams realize.

It’s where algorithms resolve your identity, where bots map your footprint, and where humans verify trust before they convert. In one test, improving that page alone lifted conversions by 6% for visitors who reached it. The reason is simple: the human and the algorithm are doing the same job — checking claims, validating evidence, and deciding whether to trust you.

For years, this was overlooked. Most SEOs focused on rankings and traffic while underinvesting in the page that defines what their brand actually is. That’s no longer sustainable. The entity home is the foundation of how your brand is interpreted across search, AI, and what comes next.

Before going further, here are four misreadings worth pre-empting.

Getting the entity home right doesn’t produce a traffic spike next Tuesday. It builds the confidence prior that compounds through every gate of the pipeline over time.

Schema markup helps the algorithm read what is already there. It isn’t a substitute for the claims, the evidence links, and the consistent positioning that schema describes. Schema without substance is a well-formatted, empty declaration.

For most companies, the entity home is the About page; for most individuals, it is a page on someone else’s website. The right URL to use carries the clearest identity statement, the strongest internal link prominence from the rest of the site, and the most stable long-term address (something people often don’t think about).

The entity home is where you declare your claims. Independent third-party sources confirm and corroborate your claims. The algorithm will only cross the confidence threshold when what you say matches what the weight of evidence supports.

The entity home serves three audiences simultaneously, through three completely different mechanisms. Most brands haven’t yet given them enough thought.

So, the entity home webpage is vital to all three audiences — bots, algorithms, and humans: it sets the tone for the bot in DSCRI, the algorithms in ARGDW, and for the person who converts.

The entity home anchors everything: the canonical URL where the algorithm initializes its model of the brand, where bots orient themselves, and where humans arrive to verify their instinct. One page, doing one critical job. But one page declares. It doesn’t educate.

The entity home website educates. Every facet of the brand structured across pages that give the algorithm a complete picture of:

The difference between the two is the difference between introducing yourself and making your case.

Search built the web around a single assumption — the human acts. The engine organized, the website presented, and the human chose. That model shaped 30 years of architecture decisions because the website’s job was to win the human’s attention and trust once the engine had delivered them to you.

But assistive engines broke that assumption. They took on the evaluation work the human used to do: reading, comparing, synthesizing, and recommending. The human still makes the final call, but the website needs to have made its case to the algorithm before the human ever arrives.

The audience that matters first has shifted, and a website that speaks only to humans is already losing the conversation that determines whether those humans show up at all.

Agents go one step further. The agent researches, decides, and acts. The human receives the outcome. The website that wins in an agentic environment isn’t the one with the most compelling hero section — it’s the one the agent can read, trust, and act on without inferring anything.

All three modes co-exist, and all three always will.

What shifts over the next three years isn’t which mode exists — it’s which mode does the most work, and what your website needs to do to win each one.

This is where I’ll plant a flag, and you can disagree. All three jobs need attention right now — the percentages below describe where the main focus of your effort sits, not permission to ignore the others.

The work on assistive and agential is already overdue. The speed of change will probably make these figures look dated in a few months.

The entity home website anchors all three eras. What changes is who it speaks to first, and what that conversation needs to contain.

Each cluster in that diagram declares something: these satellite pages, grouped this way, belong to this entity and describe one specific dimension of what it is.

The grouping carries meaning — an algorithm that reads the structure learns something the individual pages couldn’t tell it separately.

Search, assistive, and agential engines co-exist, which means the entity home website runs three distinct jobs simultaneously.

SEO has always known what to do with a topic: build an authoritative page around it, link it well, and earn rankings. That architecture works because the ranking engine evaluates content.

What it can’t do is tell the algorithm who the entity behind that content is, what relationships it has built, what it has demonstrated over time, or why it should be trusted to recommend rather than merely rank.

An entity has facets, and facets aren’t the same thing as topics. A person isn’t “SEO consultant” plus “technical SEO” plus “keynote speaker”: those are keyword clusters, useful for ranking, useless for identity.

What the algorithm actually resolves identity against is the network of dimensions that define what this entity is — the companies it belongs to, the peers it works alongside, the publications it has appeared in, the expertise it has demonstrated over years, the events it speaks at, and the work it has produced.

An entity pillar page is the authoritative page on your own property for one of those dimensions.

These pages aren’t traffic pages in the traditional sense, and that framing matters: SEOs who measure them against keyword rankings will consistently underinvest in them because the return doesn’t show up in rank tracking. The return shows up in what AI assistive engines say about your brand when your prospects ask.

The keyword cornerstone page and the entity pillar page aren’t competing strategies: they’re parallel architectures serving different audiences, which means your website needs both, and the question is how to build them so they compound each other’s value rather than compete for the same resource.

The coincidence between them is real and worth engineering deliberately. The expertise page that ranks for “technical SEO audit” can also function as the entity pillar page that declares this entity’s demonstrated knowledge in that domain if it’s built with that second function in mind:

When those two requirements align, one page does both jobs, which is a good thing.

When they diverge: when the page that captures search traffic can’t easily carry the identity declaration without sacrificing one function for the other, you face an architectural choice, and making that choice consciously rather than defaulting to the keyword model is the skill the transition requires.

Earlier in this article, the 2026/2027/2028 split put search at 60%, then 35%, then 20% of focus. What those numbers don’t say, but what the logic demands, is that the other percentage — the assistive and agential share — needs your website to feed them right now. Don’t wait until the balance shifts.

Keyword cornerstone pages feed the search share. Entity Pillar Pages feed the assistive and agential share.

If you build the Entity Pillar Pages in 2027 when assistive engines truly dominate, you’ll be building into a window that has already closed for the brands that started in 2025, because the algorithm’s model of your entity solidifies around whatever you gave it during the period it was actively learning.

The percentages describe where the demonstrable value sits at each stage. Your investment needs to precede the moment your boss sees the results, not follow it.

Both architectures are required today; the balance shifts, but the requirement for both never goes away.

The risk brands hear when they encounter the machine-optimization argument is a false trade-off: build for machines at the expense of humans, strip the warmth from the copy, replace narrative with structured data fields, and turn the About page into a schema exercise. You can absolutely avoid the trade-off in practice because the best practices are more complementary than they might appear.

Clear entity statements that help the algorithm resolve your identity also help the human visitor understand immediately who they’re dealing with. Explicit links to corroborating third-party sources that build algorithmic confidence also give the human prospect the independent validation they’re quietly looking for. Schema markup that declares relationships for machine consumption gives structured clarity that human scanners doing final due diligence actually appreciate.

For me, this is the reframe that makes the whole project manageable: the entity home website is your current marketing, restructured to serve three audiences simultaneously, not a technical infrastructure project running alongside it. It is one investment with three returns, and when done right, the requirements pull in the same direction more often than they pull apart.

The funnel is moving inside the assistant.

When an assistive engine names your brand, summarizes it, and links to it in response to a user query, a conversion event has happened that you don’t see in your Analytics dashboard, and the human who arrives at your website has already been half-sold by the algorithm before they clicked. Traffic will decline as more of that evaluation work moves upstream, and the brands that measure only what arrives at the site will systematically underestimate both the value they’re generating and the gaps in their strategy.

Start measuring where your brand appears in assistive engine responses, how consistently it appears, and what the algorithm says about you when it does.

Start with the entity home page itself: choose the single URL that functions as the canonical anchor for your brand’s identity and commit to it. Don’t discover it by asking an AI engine what it thinks your entity home is, because the engine will tell you what it has already learned, and that might be your website homepage, Wikipedia, a press profile, or a LinkedIn page you half-filled in five years ago. You choose it, then you verify the algorithm has learned the lesson you are giving it. You are the adult in the room.

Five criteria determine that choice, in order of weight:

If your About page doesn’t hit all five, it isn’t doing the job the algorithm requires.

Invest in your About page. Strengthen it with a clear entity statement, schema with a proper @id, verified links to Wikipedia and Wikidata where they exist, every accurate sameAs declaration you can support, and the claims that define your brand’s positioning.
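As a rough illustration of what "schema with a proper @id" and sameAs declarations can look like, the sketch below builds a schema.org Organization object in Python and prints it as a JSON-LD script tag. Every value is a placeholder assumption: the example.com URLs, the company name, the description, and the Wikipedia, Wikidata, and LinkedIn links are invented, and you should only declare sameAs profiles that actually exist and that you can support.

```python
import json

# All URLs and identifiers below are placeholders, not real profiles.
ENTITY_HOME = "https://www.example.com/about"

organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    # A stable @id lets other pages on the site refer back to the same entity.
    "@id": f"{ENTITY_HOME}#organization",
    "name": "Example Co",
    "url": "https://www.example.com/",
    # The description doubles as the plain-language entity statement.
    "description": "Example Co builds XYZ software for small teams.",
    # Only list profiles that exist and accurately describe this entity.
    "sameAs": [
        "https://en.wikipedia.org/wiki/Example_Co",
        "https://www.wikidata.org/wiki/Q0000000",
        "https://www.linkedin.com/company/example-co",
    ],
}

json_ld = json.dumps(organization, indent=2)
print(f'<script type="application/ld+json">\n{json_ld}\n</script>')
```

The markup only describes what the page already says. The visible copy still needs to carry the same entity statement and the same links for the declaration to be corroborated.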

That single page is the anchor.

The entity home website is the education hub built around it: every entity pillar page you build — /expertise, /peers, /companies, /press — extends the identity declaration outward, giving the algorithm more dimensions to resolve against and more facets to cross-reference with independent sources. Each of those pages does for one identity dimension what the About page does for the whole: declares something specific, verifiable, and machine-readable about who this entity is.

The practical work on the entity home website side is the same audit applied at scale: for each entity pillar page, ask whether it declares a clear facet, links to corroborating evidence, and carries schema that names the relationship rather than just the topic. The pages that answer yes to all three are doing both jobs simultaneously — identity infrastructure and keyword architecture. The ones that don’t need a decision: extend them, or build the pillar function its own dedicated page.
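The three-question audit can be kept as a simple checklist. This is a minimal sketch under stated assumptions: the `PillarPage` structure and the sample answers are hypothetical, and the yes/no values would come from a manual review of each page, not from any automated check.

```python
from dataclasses import dataclass


@dataclass
class PillarPage:
    """Manual audit answers for one entity pillar page (hypothetical structure)."""
    path: str
    declares_clear_facet: bool
    links_to_evidence: bool
    schema_names_relationship: bool

    def passes_audit(self) -> bool:
        # A page passes only when all three questions are answered "yes".
        return (
            self.declares_clear_facet
            and self.links_to_evidence
            and self.schema_names_relationship
        )


# Sample answers, invented for illustration.
pages = [
    PillarPage("/expertise", True, True, True),
    PillarPage("/peers", True, False, True),
    PillarPage("/press", True, True, False),
]

# Pages that fail any check need a decision: extend them or build a dedicated page.
needs_decision = [page.path for page in pages if not page.passes_audit()]
print(needs_decision)
```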

If you’re unsure how much influence you actually have over what AI communicates about you, the answer is more than most people assume — and the channels that give you the most leverage are exactly the ones entity pillar pages are built to activate.

Then force the corroboration loop across the whole footprint: drive independent third-party sources to reference, link to, and echo the claims the entity home makes and the facets the pillar pages declare across enough independent contexts that the algorithm’s confidence crosses from hedged claim to corroborated fact.

That crossing doesn’t happen on a deadline and can’t be engineered in a sprint. The corroboration loop is the curriculum, slow by design, compounding with every cycle, never truly finished. It is the work, and it rewards the brands that start it today over the ones that plan to start it when the percentages shift.

This is the sixth piece in my AI authority series.

In an increasingly automated environment, paid search performance is constrained by a simple reality: Algorithms can only optimize toward the signals they’re given. Improving those signals remains the most reliable way to improve results.

That sounds straightforward, but in practice, many people are still optimizing around signals that don’t reflect real business outcomes.

Let’s dive into how algorithms function, how you can influence them, and where some people fail.

Modern bidding systems are often described as “black boxes,” suggesting they operate mysteriously. But that description isn’t helpful.

At a high level, bidding algorithms are large-scale pattern recognition systems.

Early automated bidding used simple statistical methods, including rules-based logic and regression models. Over time, these evolved into more advanced machine learning approaches using decision trees and ensemble models.

Eventually, these became large-scale learning systems capable of processing thousands of contextual and historical inputs. The technology has developed significantly, but the goal has stayed remarkably consistent.

Today’s systems evaluate signals such as query intent, device, location, time, historical performance, and user behavior, updating predictions continuously and adjusting bids in near-real time.

Despite this complexity, the underlying mechanisms haven’t changed:

Bidding algorithms identify patterns tied to a desired outcome, estimate that outcome’s probability and expected value for each auction, and adjust bids accordingly. They don’t understand business context or strategy — they infer success from feedback. This distinction matters.
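The probability-times-value logic can be illustrated with a deliberately simplified model. This is not how any ad platform actually computes bids; the function names, the target ROAS rule, and the sample numbers are assumptions chosen to show why the conversion value you report shapes every bid.

```python
def expected_value(p_conversion: float, conversion_value: float) -> float:
    """Expected value of one click: probability of converting times its value."""
    return p_conversion * conversion_value


def max_bid(p_conversion: float, conversion_value: float, target_roas: float) -> float:
    """Highest bid per click that still meets the target return on ad spend.

    Simplified assumption: bid = expected value / target ROAS.
    """
    if target_roas <= 0:
        raise ValueError("target_roas must be positive")
    return expected_value(p_conversion, conversion_value) / target_roas


# Hypothetical auctions with made-up predicted conversion probabilities.
auctions = [
    {"label": "high-intent query, returning user", "p_conversion": 0.08, "value": 120.0},
    {"label": "broad query, new user", "p_conversion": 0.01, "value": 120.0},
]

for auction in auctions:
    bid = max_bid(auction["p_conversion"], auction["value"], target_roas=4.0)
    print(f'{auction["label"]}: bid up to {bid:.2f}')
```

In this toy model the bid scales linearly with the reported conversion value, so an inflated or misattributed value feeds straight through to what the system is willing to pay.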

When the feedback loop is weak, noisy, or misaligned with real business value, even advanced algorithms will efficiently optimize toward the wrong objective. Better technology doesn’t compensate for poor inputs.


Paid search algorithms observe a vast range of signals, many of which are inferred by the platform and not directly controllable by you. These include user intent signals, behavioral patterns, and competitive dynamics.

While many signals sit outside of our control, there’s still a meaningful set of levers you control that shape how algorithms learn. These include:

These inputs shape how the algorithm explores and learns. They help define the environment in which optimization occurs. But they don’t, by themselves, define what success looks like. That role is played by conversion data.


When performance plateaus, the first instinct is to blame structure, budgets, or creative. In reality, the biggest lever you have available usually sits elsewhere: conversion data.

In most accounts, conversion data is the most influential signal you control. It defines the outcome the algorithm is trained to pursue and directly informs prediction models, bid calculations, and learning feedback loops.

When conversion setups are misaligned, overly broad, duplicated, or noisy, platforms still optimize efficiently, just not toward outcomes the business actually values. This is why, at times, you can show improving platform metrics while your commercial performance stagnates or deteriorates.

A common mistake is focusing on increasing conversion volume rather than improving conversion quality. Volume accelerates learning, but if the signal is weak, faster learning just means faster optimization toward a suboptimal goal.

In practice, refining what counts as a conversion often delivers greater performance gains than structural or tactical changes elsewhere in the account.


Before any optimization begins, define what success genuinely means for your business. Paid search platforms don’t have intrinsic knowledge of your revenue quality, profitability, or downstream value. They only see what is explicitly passed back to them.

Misalignment typically appears in predictable forms:

In each case, the algorithm is doing exactly what it has been instructed to do. The issue isn’t optimization accuracy, but goal definition. If an increase in a given conversion wouldn’t be seen as a win by the business, it shouldn’t be the primary signal used for optimization.


Conversion quality is determined by how confidently the platform can identify and interpret a tracked event.

Browser-based tracking alone is increasingly incomplete due to privacy controls, attribution gaps, and fragmented user journeys. As a result, ad platforms rely on a combination of browser-side and server-side data to improve matching and attribution. For you, this is more than a measurement problem: it directly affects how confidently platforms can learn from conversions.

Stronger conversion signals are typically characterized by multiple reinforcing parameters, including:

When a conversion can be recognized through multiple mechanisms, platforms can match it more reliably and use it in learning models with greater confidence. This improves reporting accuracy and bidding performance by reducing feedback loop uncertainty.


Selecting the right conversion goal isn’t a binary decision. It involves balancing several competing factors:

Higher-volume, faster conversions often sit further away from true commercial outcomes, while lower-volume, high-quality conversions may better reflect business value but risk data sparsity. The most effective setups acknowledge these trade-offs rather than attempting to eliminate them entirely.

In many cases, the optimal solution involves using proxy or layered conversion goals that strike a balance between learning speed and value accuracy.


For ecommerce, optimizing toward order value assumes all revenue is equal. In reality, product margins often vary widely. When revenue alone is used as the optimization signal, algorithms may prioritize high-value — but low-margin — products.

A more effective approach is to optimize for gross margin by passing margin-adjusted conversion values via server-side tracking or offline conversion imports. This allows bidding systems to prioritize your business’s profitability rather than top-line revenue, without exposing sensitive cost data client-side.
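A small worked example shows how revenue and gross margin can rank the same two orders in opposite ways. The `LineItem` structure, the SKUs, and all prices and costs are hypothetical. How the resulting value is sent to an ad platform depends on that platform's server-side or offline import mechanism and is deliberately left out.

```python
from dataclasses import dataclass


@dataclass
class LineItem:
    sku: str
    unit_price: float
    unit_cost: float  # stays server-side; never exposed in the browser
    quantity: int


def revenue(items: list[LineItem]) -> float:
    """Top-line order value."""
    return round(sum(item.unit_price * item.quantity for item in items), 2)


def gross_margin_value(items: list[LineItem]) -> float:
    """Margin-adjusted conversion value: price minus cost, summed over the order."""
    return round(
        sum((item.unit_price - item.unit_cost) * item.quantity for item in items), 2
    )


# Made-up orders: one high-revenue, low-margin; one low-revenue, high-margin.
order_a = [LineItem("laptop", 1000.0, 950.0, 1)]
order_b = [LineItem("cable", 20.0, 5.0, 10)]

for name, order in (("order_a", order_a), ("order_b", order_b)):
    print(name, "revenue:", revenue(order), "margin value:", gross_margin_value(order))
```

On revenue alone, order_a looks five times more valuable than order_b. On margin, order_b is worth three times as much, which is the signal a profit-focused account would want the bidding system to learn from.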

In lead gen models where final outcomes occur weeks or months after the initial click, form submissions alone can provide you with weak signals. They are fast and high-volume, but poorly correlated with revenue.

Introducing lead scoring improves signal quality. Leads can be assigned proxy values based on known attributes and early indicators of quality, such as company size, role seniority, or engagement depth. These values can then be passed back to the platform via CRM integrations or server-side tracking, enabling value-based optimization even when final outcomes are delayed.
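A proxy value can start as a simple lookup on the attributes mentioned above. The sketch below is an assumption-heavy illustration: the size bands, seniority multipliers, engagement bonus, and every number in them are invented, and a real model would be calibrated against your own closed-won data.

```python
# Hypothetical proxy values; calibrate against real closed-won outcomes.
COMPANY_SIZE_VALUE = {"1-50": 10.0, "51-500": 40.0, "500+": 100.0}
SENIORITY_MULTIPLIER = {"individual": 0.5, "manager": 1.0, "executive": 1.5}
ENGAGEMENT_BONUS_PER_PAGE = 2.0
MAX_PAGES_COUNTED = 10  # cap so heavy browsing cannot dominate the score


def proxy_lead_value(company_size: str, seniority: str, pages_viewed: int) -> float:
    """Assign a proxy conversion value to a lead from early quality indicators."""
    base = COMPANY_SIZE_VALUE.get(company_size, 0.0)
    multiplier = SENIORITY_MULTIPLIER.get(seniority, 0.5)
    engagement = min(max(pages_viewed, 0), MAX_PAGES_COUNTED) * ENGAGEMENT_BONUS_PER_PAGE
    return base * multiplier + engagement


strong_lead = proxy_lead_value("500+", "executive", pages_viewed=6)
weak_lead = proxy_lead_value("1-50", "individual", pages_viewed=1)
print(strong_lead, weak_lead)
```

Both leads are a single form submission, but the values passed back differ by more than an order of magnitude, which gives value-based bidding something to separate them on.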

If you’re focused on lifetime value (LTV), there are two viable approaches:

In both cases, your objective is the same: provide the algorithm with timely, value-weighted signals that correlate strongly with long-term revenue, rather than waiting for delayed outcomes that are too sparse to support learning.

Modern bidding systems are powerful pattern recognition engines, but their effectiveness is constrained by the signals they receive.

The biggest performance gains rarely come from constant restructuring or tactical tests. They come from improving the clarity, quality, and commercial relevance of your conversion data.

Conversion signals are the most influential inputs you control, and misaligned or low-quality setups will limit performance regardless of how advanced the algorithm becomes.

Regularly audit your conversion definitions and ask a simple question: “Would you genuinely celebrate an increase in this outcome?” If the answer isn’t clear, the signal likely needs refinement.

Improving conversion goals, strengthening signal quality, and balancing volume, accuracy, and latency aren’t optional. They’re among the highest-impact ways to improve paid search performance.

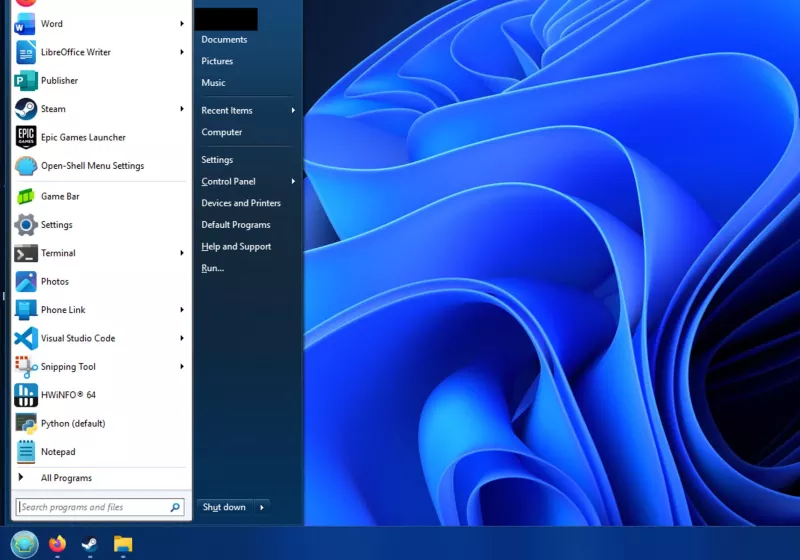

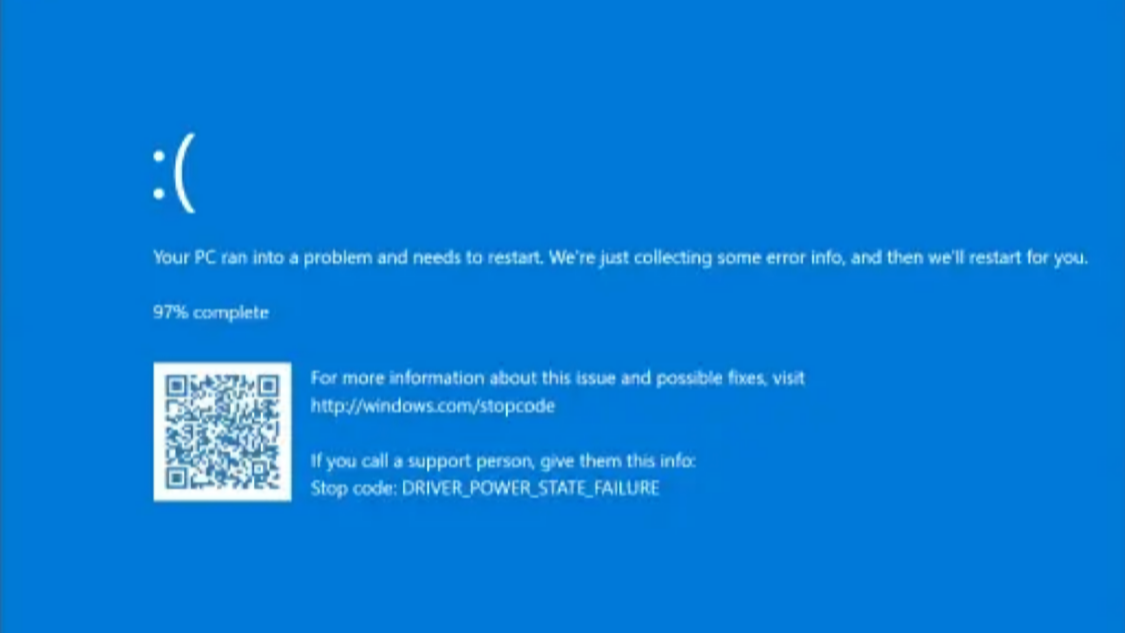

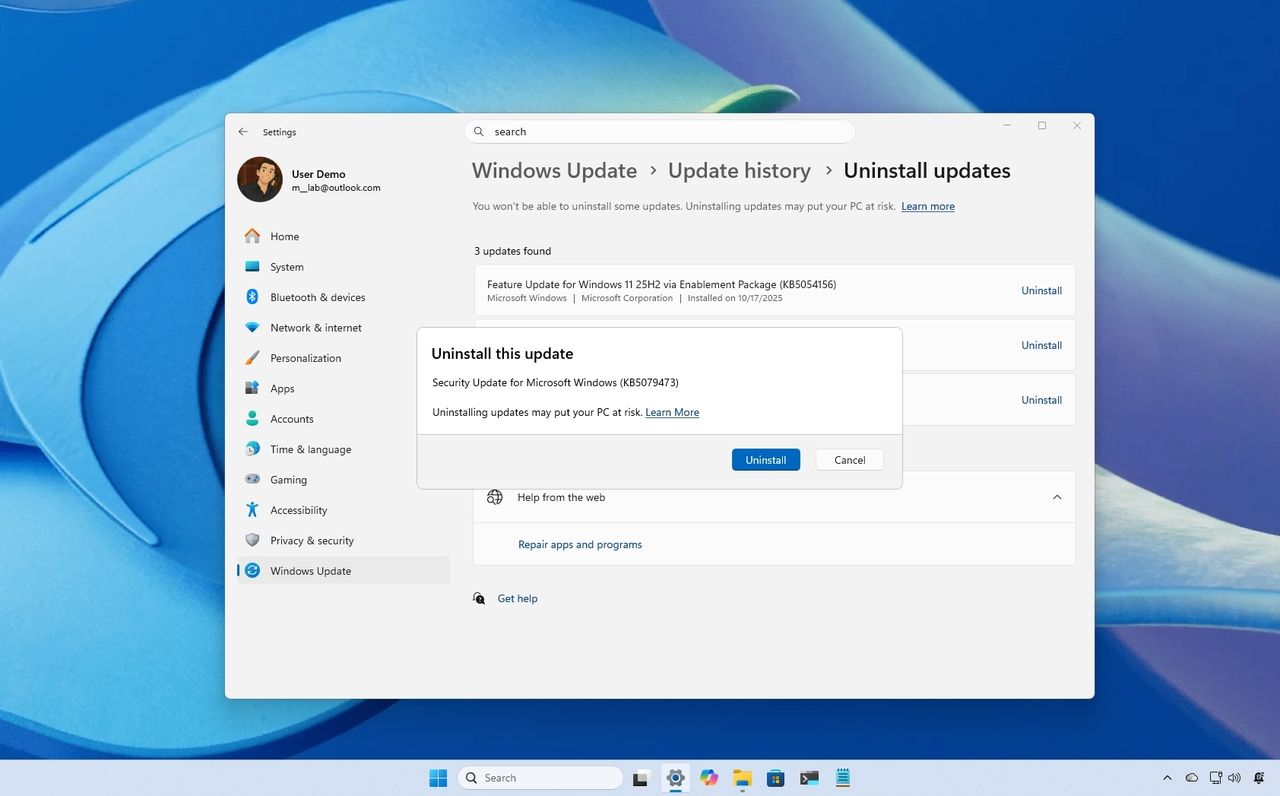

Windows 11 could soon be freed of mandatory Microsoft accounts

Last week, Microsoft made it clear that it plans to significantly improve Windows 11 in 2026. While Microsoft’s list of planned improvements was impressive, it was missing one thing that would immediately be loved by Windows 11 users. That’s the removal of Microsoft accounts from […]

The post Microsoft could drop mandatory sign-ins for Windows 11 appeared first on OC3D.

LazyScreenshots is a Mac screenshot tool for builders that captures a region and auto-pastes it into your AI assistant with a single keystroke. It has many features like quick overlays, burst mode, and pixel measurements that keep you focused while sending screenshots back and forth with your AI agent or any other app.

Collaboration is critical to creators' success, but most AI creative tools are poor at collaboration. Buzbee AI pairs creators with a personalized Scout bee, a real-time voice-powered companion who helps ideate, script, and produce videos from the first spark to the final polish. Scout learns from your channel data and video content, applying proprietary storytelling intelligence to make better videos faster and scale your business.

No more prompt engineering one output at a time. You can create with Scout coordinating all your creative tasks across a swarm of worker bees to help you make better videos in minutes instead of days.

Supercharge performance across the full customer journey by connecting Kroger’s shopper insights with Google’s AI and scale.

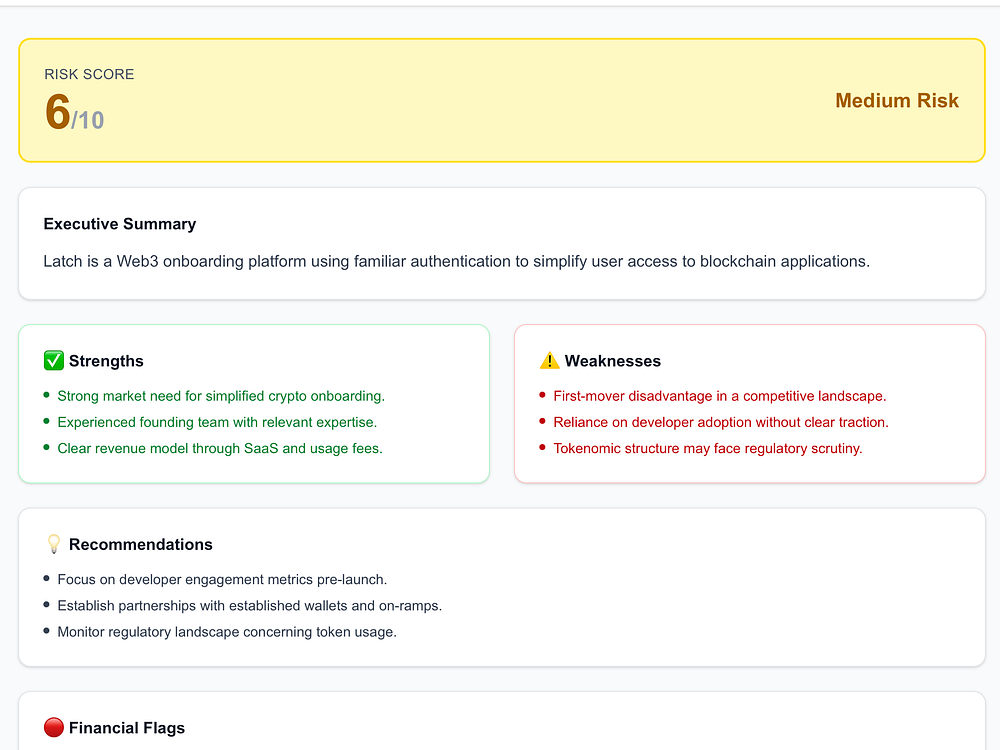

VentureLens is an AI-powered pitch deck analysis tool that helps founders and investors evaluate startup decks in seconds. Simply upload a pitch deck and receive a structured, investor-style report highlighting strengths, weaknesses, risks, and opportunities, just like a VC would. Designed for speed and clarity, VentureLens turns hours of manual review into a 60-second workflow.

Built with privacy in mind, VentureLens ensures your data stays secure while delivering actionable insights you can actually use. Whether you're a founder refining your pitch or an investor screening opportunities, VentureLens helps you make smarter, faster decisions with confidence.

Research finds that persona prompts "reliably damage" factual accuracy in certain kinds of tasks but work well in others.

The post Research Shows Where Persona Prompting Works And When It Backfires appeared first on Search Engine Journal.

Website migrations have a well-earned reputation for going wrong, with even well-planned migrations leading to rankings slipping, traffic dropping, or tracking breaking. But most migration problems come from small oversights rather than complex technical failures.

You can reduce your risk with a staged approach. The checks you complete during staging, on launch day, and in the first few weeks after go-live often determine whether a migration stabilizes quickly or becomes a long recovery project.

Most migration problems should be found and fixed on the staging site. If issues reach the live site, recovery is slower and more uncertain. Set yourself up for success with the following tips:

One common mistake is leaving the staging site publicly indexable. When Google crawls a staging environment, duplicate content can sometimes end up in search results. Rankings can fluctuate, and unfinished pages may end up indexed.

Make sure you have blocked crawlers from the staging site or protected it with a password so it remains invisible to search engines until the live launch.
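A quick way to confirm the crawler block is to parse the staging robots.txt and test a sample URL against it. The sketch below uses Python's standard `urllib.robotparser`. The `blocks_all_crawlers` helper and the `staging.example.com` host are made up for the example, and the robots.txt content is passed in as text so nothing is fetched over the network.

```python
from urllib.robotparser import RobotFileParser


def blocks_all_crawlers(robots_txt: str, sample_url: str) -> bool:
    """Return True if the rules disallow the sample URL for Googlebot and generic bots."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return (not parser.can_fetch("Googlebot", sample_url)
            and not parser.can_fetch("*", sample_url))


staging_rules = "User-agent: *\nDisallow: /"
open_rules = "User-agent: *\nDisallow:"

staging_is_blocked = blocks_all_crawlers(staging_rules, "https://staging.example.com/checkout")
open_is_blocked = blocks_all_crawlers(open_rules, "https://staging.example.com/checkout")
```

This only verifies the crawl rules. Password protection also keeps out human visitors, which matters for the ecommerce scenario described next.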

It’s not just crawlers, either. I’ve seen this happen with ecommerce sites.

Customers found the staging site, tried to place orders, and the process didn’t work. This confused customer service teams, frustrated buyers, and created avoidable pressure internally.

You want a baseline to help you identify real problems rather than reacting to normal short-term movement.

Record organic sessions, rankings, top landing pages, indexed pages, conversions, and site speed before transitioning to the new site to define the “normal” you will compare the new site to.
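Once a baseline exists, comparing it with post-launch numbers can be as simple as flagging metrics that move beyond an agreed tolerance. The metric names and the 15% threshold below are placeholders for illustration. Each team would set its own.

```python
def flag_deviations(baseline: dict[str, float], current: dict[str, float],
                    tolerance: float = 0.15) -> dict[str, float]:
    """Return metrics whose relative change from baseline exceeds the tolerance."""
    flagged = {}
    for metric, base in baseline.items():
        if metric not in current or base == 0:
            continue  # skip metrics that cannot be compared
        change = (current[metric] - base) / base
        if abs(change) > tolerance:
            flagged[metric] = round(change, 3)
    return flagged


baseline = {"organic_sessions": 10000, "conversions": 200}
post_launch = {"organic_sessions": 6000, "conversions": 190}
flagged = flag_deviations(baseline, post_launch)
```

In this example, organic sessions are flagged with a 40% drop, while the 5% dip in conversions stays within normal movement.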

Focus on pages that drive traffic, revenue, or attract links. These pages need extra care during redirect mapping, content review, and testing.

Pay extra attention to internal links, redirects, and URL rules for these pages.

Dig deeper: Website migrations: a plan to keep your traffic and SEO safe

Templates control titles, headings, metadata, canonical tags, structured data, copy, and media. If templates break, problems repeat across hundreds of pages.

Check that:

This step protects more than rankings. It ensures the site still meets user needs and supports conversions.

Make sure canonical tags use full URLs and point to live pages, as explained in Google’s guide on canonical URLs. This simple step can prevent bigger headaches later.
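The full-URL rule is easy to test automatically. The following sketch checks that a canonical `href` is absolute and points at the live host. The function name and the `www.example.com` host are assumptions for the example.

```python
from urllib.parse import urlparse


def is_absolute_canonical(href: str, live_host: str) -> bool:
    """Return True if href has an http(s) scheme and points at the live host."""
    parts = urlparse(href)
    return parts.scheme in ("http", "https") and parts.netloc == live_host


full_url_ok = is_absolute_canonical("https://www.example.com/shoes/", "www.example.com")
path_only_ok = is_absolute_canonical("/shoes/", "www.example.com")
staging_ok = is_absolute_canonical("https://staging.example.com/shoes/", "www.example.com")
```

Only the first value is `True`. A path-only canonical and a canonical pointing at a staging host both fail the check.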

Unnecessary URL changes are a common source of hidden damage. Changes made for design or CMS convenience often introduce risk without a clear benefit.

Typical issues include:

One of the most common causes of duplicate URLs during migrations is inconsistent handling of trailing slashes. URLs with and without a trailing slash are treated as different URLs. Allowing both to resolve can create duplicate content, dilute signals, and complicate crawling.

It doesn’t usually matter which version you choose, as long as the rule is consistent across the site. During a migration, avoid unintentionally switching between formats without a clear plan and proper redirects in place.

The same goes for folder structures and capitalization. Don’t change what you don’t need to, and be consistent wherever possible.
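To make the trailing-slash rule concrete, here is a minimal sketch that normalizes URLs to one chosen format. It assumes that paths whose last segment contains a dot (such as `/sitemap.xml`) are files and should be left alone. The function name is hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit


def normalize_trailing_slash(url: str, use_slash: bool = True) -> str:
    """Rewrite the URL path so it consistently ends with or without a slash."""
    parts = urlsplit(url)
    path = parts.path or "/"
    last_segment = path.rsplit("/", 1)[-1]
    # Leave the root path and file-like paths such as /sitemap.xml untouched.
    if path != "/" and "." not in last_segment:
        path = path.rstrip("/") + ("/" if use_slash else "")
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


with_slash = normalize_trailing_slash("https://www.example.com/shoes?color=red")
without_slash = normalize_trailing_slash("https://www.example.com/shoes/", use_slash=False)
file_url = normalize_trailing_slash("https://www.example.com/sitemap.xml")
```

Running every URL in the redirect map and the internal link list through one function like this helps catch places where the two formats are mixed.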

In one migration where we were brought in to rescue a site after go-live, every URL gained a trailing slash. Canonical tags only contained paths rather than full URLs, and internal links relied on redirects instead of pointing directly to final URLs. None of the changes were necessary, yet together they slowed crawling, caused confusion, and delayed recovery.

Redirect mapping is one of the highest-risk areas of any migration. Existing redirects should be pulled from the CMS, CDN, Google Search Console, analytics platforms, and backlink tools so nothing is missed. Every legacy URL needs a clear, intentional destination.

If pages are removed, redirect to the closest relevant alternative. If no equivalent exists, return a 404 or 410. Avoid sending everything to the homepage or top-level categories.

Aleyda Solis’ guide to SEO for web migrations provides a strong framework for this stage.

Migrations are often seen as a good time to refresh all the content on a site. This can be done if all the stakeholders align, but it should be done methodically.

Remove outdated content carefully. Where gaps exist in the new structure, plan new pages in advance and make sure they are ready to go live when the new site is. This planning avoids lost coverage or weak redirect decisions later.

Ensure the site can be verified after launch and that any international or country settings are correct.

Pre-launch is also about people. Developers, designers, SEO, and analytics teams need clarity on responsibilities and deadlines. Many migration issues happen through missed handovers rather than a lack of skill.

In my experience, most migration failures are preventable before launch, when fixes are safer and faster.

I worked on one migration where SEO was brought in after launch. The site launched with broken internal links, missing redirects for high-traffic pages, and inconsistent URL rules. Organic traffic dropped by almost 40% within two weeks, and several priority pages disappeared from search results. All of these issues were visible on the staging site but weren’t reviewed before launch.

Make the case for SEO to be part of the planning process. It saves time, money, and headaches.

Dig deeper: Website migration checklist: 11 steps for success

Launch day is where preparation meets reality, and all teams, including SEO, developers, designers, and analytics, see the results of their planning. What worked on staging must now work on the live site. Even small oversights can immediately affect rankings, traffic, conversions, user experience, and reporting.

Calm, thorough verification ensures the migration pays off and prevents small errors from becoming lasting issues. Use this list as a starting point:

Spot-checking isn’t enough. Every mapped URL should redirect once and resolve cleanly. Avoid redirect chains and loops. They slow down crawling and delay signal consolidation.
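Chains and loops can be detected from the redirect map itself, before any live request is made. The sketch below assumes the map is available as a dictionary of source path to destination path. The `audit_redirects` name and the sample paths are illustrative.

```python
def audit_redirects(redirect_map: dict[str, str]) -> dict[str, list[str]]:
    """Classify each source URL as a single hop ('ok'), a 'chain', or a 'loop'."""
    report = {"ok": [], "chain": [], "loop": []}
    for source, target in redirect_map.items():
        seen = {source}
        hops = 1
        current = target
        status = "ok"
        # Keep following while the destination is itself a redirect source.
        while current in redirect_map:
            if current in seen:
                status = "loop"
                break
            seen.add(current)
            current = redirect_map[current]
            hops += 1
        if status != "loop" and hops > 1:
            status = "chain"
        report[status].append(source)
    return report


redirects = {
    "/old-a": "/new-a",   # single hop
    "/old-b": "/old-c",   # chain: /old-b -> /old-c -> /new-c
    "/old-c": "/new-c",
    "/x": "/y",           # loop: /x -> /y -> /x
    "/y": "/x",
}
report = audit_redirects(redirects)
```

Anything listed under `chain` should be remapped straight to its final destination, and anything under `loop` needs a new target altogether.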

In another migration we were called in to fix, only the top 50 pages had correct redirects. Thousands of other URLs redirected to the homepage. Rankings dipped, and recovery took months longer than expected.

Run a full crawl as soon as the site is live. Compare results with the staging crawl to identify differences.

Look for:

Menus, breadcrumbs, and in-content links should point directly to live URLs. Leaving internal links to rely on redirects increases load and risk.

Canonicals or hreflang pointing to staging URLs are a common launch issue. Confirm titles, headings, canonical tags, hreflang, copy, and media all reference the live site.
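A check along these lines can be scripted with Python's standard `html.parser`. The sketch below collects `href` values from canonical and hreflang `<link>` tags and reports any that still point at a staging host. The class name, function name, and hostnames are assumptions for the example.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class HeadLinkCollector(HTMLParser):
    """Collect hrefs from <link rel="canonical"> and hreflang alternate links."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        rel = (attr.get("rel") or "").lower()
        is_hreflang = rel == "alternate" and "hreflang" in attr
        if (rel == "canonical" or is_hreflang) and attr.get("href"):
            self.hrefs.append(attr["href"])


def staging_references(html: str, staging_host: str) -> list[str]:
    """Return canonical/hreflang hrefs that still point at the staging host."""
    collector = HeadLinkCollector()
    collector.feed(html)
    return [h for h in collector.hrefs if urlparse(h).netloc == staging_host]


page = (
    '<head>'
    '<link rel="canonical" href="https://staging.example.com/a">'
    '<link rel="alternate" hreflang="de" href="https://www.example.com/de/a">'
    '</head>'
)
leftovers = staging_references(page, "staging.example.com")
```

Any non-empty result on a live page means a template is still emitting staging URLs.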

Dig deeper: How to run a successful site migration from start to finish

GA4, paid media tags, and social pixels should already be in place before launch. This ensures tracking fires correctly, conversions are measured accurately, and historical data remains intact when the live site goes public. Remember, the staging site should be blocked from crawling or be protected behind a password to prevent test traffic from polluting reporting.

In one migration we were asked to review after launch, the domain stayed the same, but a new GA4 property was created during the redesign. Historical data remained in the original property, while new data was collected in the new one, making post-launch comparisons difficult.

Keeping the same GA4 property preserves reporting continuity, supports confident decision-making, and avoids unnecessary uncertainty at a critical point in the migration.

Ensure pages meant to be indexed are accessible and that noindex tags are only used where intended. If you use services like Cloudflare, it’s also important to check that your robots.txt and content signals are configured correctly.

For example, Cloudflare’s default setting may block AI training access while allowing search indexing. If this isn’t adjusted intentionally, AI models might pull content from third-party sources rather than your site, affecting how your brand is represented in generative AI outputs.

Submit the live sitemap to Google Search Console to support the discovery of new URLs.

Check Core Web Vitals and page performance. A redesigned site can still load heavier assets than expected. Launch day is about verification, not assumption.

Even the best-planned migrations can reveal surprises once search engines and real users interact with the site. Small errors that didn’t appear on staging can impact rankings, traffic, and conversions.

Calm, structured monitoring in the days and weeks after launch ensures problems are caught quickly before they affect performance or user experience. Here’s what to keep an eye on.

Dig deeper: Technical SEO post-migration: How to find and fix hidden errors

Even well-managed migrations can see short-term movement. Rankings may fluctuate, and traffic may dip before stabilizing.

If redirects are clean, content is intact, and crawl access is clear, recovery usually follows within weeks rather than months. Ongoing losses usually point to structural issues rather than algorithm changes.

Knowing when to wait and when to act comes from experience. You don’t want to react too quickly or too late. Keep a careful eye on your analytics, and you’ll develop the expertise over time.

Website migrations succeed when they are planned, tested, and monitored at every stage. A clear focus on pre-launch, launch day, and post-launch checks protects visibility, performance, and confidence across teams.

When SEO is involved early, and checks are clearly owned, migrations stop feeling like crisis events and become managed change.

Will STALKER 2’s first DLC be unveiled this week?

On Thursday, March 26th, Microsoft will be hosting its 2026 Xbox Partner Preview, where GSC Game World plans to deliver an update on STALKER 2: Heart of Chornobyl. This event is likely to host the unveiling of the first story DLC for STALKER 2, and could […]