Google and Internet2 launched a collaborative AI leadership program for higher education.

Last week, we launched the Internet2 NET+ Google AI Education Leadership Program (ELP).


Most marketing teams still treat SEO and PPC as budget rivals, not as complementary systems facing the same performance challenges.

In practice, these relationships fall into three types:

Only mutualism creates sustainable performance gains – and it’s the shift marketing teams need to make next.

One glaring problem unites online marketers: we’re getting less traffic for the same budget.

Navigating the coming years requires more than the coexistence many teams mistake for collaboration.

We need mutualism – shared technical standards that optimize for both organic visibility and paid performance.

Shared accountability drives lower acquisition costs, faster market response, and sustainable gains that neither channel can achieve alone.

Here’s what it looks like in practice:

During SEO penalties and core updates, PPC can maintain traffic until recovery.

Core updates cause fluctuations in organic rankings and user behavior, which, in turn, can affect ad relevance and placements.

PPC-only landing pages affect the Core Web Vitals of entire sites, influencing Google’s default assumptions for URLs without enough traffic to calculate individual scores.

Paid pages are penalized for slow loading just as much as organic ones, impacting Quality Score and, ultimately, bids.

PPC should answer a simple question: Are we getting the types of results we expect and want?

Setting clear PPC baselines by market and country provides valuable, real-time keyword and conversion data that SEO teams can use to strengthen organic strategies.

By analyzing which PPC clicks drive signups or demo requests, SEO teams can prioritize content and keyword targets with proven high intent.
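As a rough illustration of that prioritization step, the Python sketch below ranks keywords by conversion rate using exported PPC click records. Several things here are hypothetical: the input shape (a keyword paired with whether the click converted), the `min_clicks` cutoff of 30, and the sample keywords. A real ad-platform export would need to be mapped into this form first.

```python
from collections import defaultdict
from typing import Iterable, List, Tuple


def rank_keywords_by_conversion(
    clicks: Iterable[Tuple[str, bool]], min_clicks: int = 30
) -> List[Tuple[str, float, int]]:
    """Return (keyword, conversion_rate, clicks) sorted by conversion rate, highest first.

    Keywords with fewer than `min_clicks` clicks are dropped so that tiny,
    noisy samples don't float to the top of the list.
    """
    totals: defaultdict = defaultdict(int)
    conversions: defaultdict = defaultdict(int)
    for keyword, converted in clicks:
        totals[keyword] += 1
        if converted:
            conversions[keyword] += 1
    ranked = [
        (kw, conversions[kw] / n, n) for kw, n in totals.items() if n >= min_clicks
    ]
    ranked.sort(key=lambda row: row[1], reverse=True)
    return ranked


# Hypothetical click log: (keyword, did the click lead to a signup or demo request?)
sample = (
    [("crm demo", True)] * 12 + [("crm demo", False)] * 28
    + [("what is a crm", True)] * 2 + [("what is a crm", False)] * 58
)
for kw, rate, n in rank_keywords_by_conversion(sample):
    print(f"{kw}: {rate:.1%} of {n} clicks")
```

The volume cutoff is a deliberate choice. A keyword with two clicks and one signup shows a 50% conversion rate, but that number says little about intent.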

Sharing PPC insights enables organic search teams to make smarter decisions, improve rankings, and drive better-qualified traffic.

Dig deeper: The end of SEO-PPC silos: Building a unified search strategy for the AI era

One key question to ask is: how do we measure incrementality?

We need to quantify the true, additional contribution PPC and SEO drive above the baseline.

Guerrilla testing offers a lo-fi way to do this – turning campaigns on or off in specific markets to see whether organic conversions are affected.
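One way to read the results of such an on/off test is a difference-in-differences comparison. First measure how organic conversions changed in the markets where campaigns were switched off. Then subtract the change in comparable markets where nothing changed. The Python sketch below assumes you already have pre- and post-period conversion totals for both groups. The numbers are made up, and the calculation includes no significance testing.

```python
from dataclasses import dataclass


@dataclass
class MarketGroup:
    """Organic conversions for a group of markets before and after the test starts."""
    pre: float
    post: float


def diff_in_diff(test: MarketGroup, control: MarketGroup) -> float:
    """Change in the test markets minus change in the control markets.

    Subtracting the control group's change removes seasonality and other
    shifts that affect every market, leaving an estimate of the test's effect.
    """
    return (test.post - test.pre) - (control.post - control.pre)


# Hypothetical numbers: paid campaigns paused in the test markets only.
test_markets = MarketGroup(pre=400, post=460)
control_markets = MarketGroup(pre=410, post=420)
print(diff_in_diff(test_markets, control_markets))  # 50
```

A positive result, as in this example, would suggest that organic search picked up conversions the paid campaigns had been capturing. The control group is what keeps a seasonal uptick from being misread as the effect of pausing ads.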

A more targeted test involves turning off branded campaigns.

PPC ads on branded terms can capture conversions that would have occurred organically, making paid results appear stronger and SEO weaker.

That’s exactly what Arturs Cavniss’ company did – and here are the results.

For teams ready to operate in a more sophisticated way, several options are available.

One worth exploring is Robyn, an open-source, AI/ML-powered marketing mix modeling (MMM) package.
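Marketing mix models such as Robyn typically transform spend with an adstock function, so a campaign's effect can carry over into later periods. The Python sketch below shows geometric adstock, one of the transformations Robyn supports, using a hypothetical decay rate `theta`. It does not call Robyn, which is primarily distributed as an R package. It is only meant to illustrate the idea.

```python
from typing import List, Sequence


def geometric_adstock(spend: Sequence[float], theta: float) -> List[float]:
    """Carry a share `theta` of the accumulated effect into each following period."""
    if not 0.0 <= theta < 1.0:
        raise ValueError("theta must be in [0, 1)")
    carried = 0.0
    out: List[float] = []
    for x in spend:
        # This period's effect = new spend + decayed effect from earlier periods.
        carried = x + theta * carried
        out.append(carried)
    return out


# Hypothetical weekly spend: a burst, two quiet weeks, then a smaller burst.
print(geometric_adstock([100, 0, 0, 50], theta=0.5))  # [100.0, 50.0, 25.0, 62.5]
```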

Core Web Vitals and related performance metrics measure layout stability, rendering efficiency, and server response times, all key factors influencing search visibility and overall performance.

Lighthouse, Google’s lab testing tool, weights the following metrics when it calculates a page’s performance score.

| Metric | Lighthouse weight |
| --- | --- |
| First Contentful Paint | 10% |
| Speed Index | 10% |
| Largest Contentful Paint | 25% |
| Total Blocking Time | 30% |
| Cumulative Layout Shift | 25% |

Core Web Vitals:

You can create a modified weighted system to reflect a combined SEO and PPC baseline. (Here’s a quick MVP spreadsheet to get started.)
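A minimal Python sketch of such a weighted system follows. It assumes each metric has already been converted to a 0-100 score. Lighthouse does this with its own scoring curves, which this sketch does not reproduce. `SEO_WEIGHTS` copies the percentages from the table above. `COMBINED_WEIGHTS` is a hypothetical rebalancing that leans toward Largest Contentful Paint, so adjust it to whatever your SEO and PPC teams agree on.

```python
from typing import Dict

# Percentages from the weighting table above, expressed as fractions.
SEO_WEIGHTS: Dict[str, float] = {
    "FCP": 0.10, "SI": 0.10, "LCP": 0.25, "TBT": 0.30, "CLS": 0.25,
}

# Hypothetical combined SEO + PPC baseline: shifts weight toward LCP on the
# assumption that landing-page load speed matters most for paid traffic.
COMBINED_WEIGHTS: Dict[str, float] = {
    "FCP": 0.10, "SI": 0.05, "LCP": 0.35, "TBT": 0.25, "CLS": 0.25,
}


def weighted_score(metric_scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Combine per-metric scores (each already on a 0-100 scale) into one score."""
    total = sum(weights.values())
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"weights must sum to 1.0, got {total}")
    missing = set(weights) - set(metric_scores)
    if missing:
        raise KeyError(f"missing scores for: {sorted(missing)}")
    return sum(metric_scores[m] * w for m, w in weights.items())


# Hypothetical per-metric scores for one landing page.
page = {"FCP": 90, "SI": 80, "LCP": 60, "TBT": 70, "CLS": 100}
print(round(weighted_score(page, SEO_WEIGHTS), 1))       # 78.0
print(round(weighted_score(page, COMBINED_WEIGHTS), 1))  # 76.5
```

The same page scores lower under the combined weighting because its weakest metric, LCP, now carries more weight. This is the kind of gap a shared baseline is meant to surface.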

However, SEO-focused weightings don’t capture PPC’s Quality Score requirements or conversion optimization needs.

Clicking an ad link can be slower than an organic one because Google’s ad network introduces extra processes – additional data handling and script execution – before the page loads.

The hypothesis is that ad clicks may consistently load slower than organic ones due to these extra steps in the ad-serving process.

This suggests that performance standards designed for organic results may not fully represent the experience of paid users.
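To test that hypothesis with your own data, you could segment real-user performance samples by traffic source and compare them at the 75th percentile. That is the percentile Google uses when assessing Core Web Vitals. The Python sketch below does this for Largest Contentful Paint. It assumes each sample is already tagged as paid or organic, for example by checking for the `gclid` parameter that Google Ads auto-tagging appends to landing-page URLs. The sample values are invented.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def p75(values: List[float]) -> float:
    """75th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


def p75_by_source(samples: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Group (traffic_source, lcp_ms) samples and return the p75 LCP per source."""
    grouped: Dict[str, List[float]] = defaultdict(list)
    for source, lcp_ms in samples:
        grouped[source].append(lcp_ms)
    return {source: p75(vals) for source, vals in grouped.items()}


# Hypothetical real-user LCP samples in milliseconds.
samples = [
    ("organic", 1800), ("organic", 2100), ("organic", 2300), ("organic", 2600),
    ("paid", 2000), ("paid", 2500), ("paid", 2900), ("paid", 3400),
]
print(p75_by_source(samples))  # {'organic': 2300, 'paid': 2900}
```

Nearest-rank is one of several percentile definitions. Tools that interpolate between values will report slightly different numbers on small samples.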

Microsoft Ads Liaison Navah Hopkins notes that paid pages are penalized for slow loading just as severely as organic ones – a factor that directly affects Quality Score and bids.

SEOs also take responsibility for improving PPC-only landing pages, even without being asked. As Jono Alderson explains:

PPC-only landing pages influence the Core Web Vitals of entire sites, shaping Google’s assumptions for low-traffic URLs.

Agentic AI’s sensitivity to interaction delays has made Interaction to Next Paint (INP) a critical performance metric.

INP measures how quickly a website responds when a human or AI agent interacts with a page – clicking, scrolling, or filling out forms while completing tasks.

When response times lag, agents fail tasks, abandon the site, and may turn to competitors.
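To make the metric concrete, the Python sketch below approximates how INP is derived from a single page visit. It takes the slowest interaction, but skips one of the highest latencies for every 50 interactions, so a single outlier on a busy page doesn't dominate. Real INP is measured in the browser, for example with Google’s `web-vitals` JavaScript library. This sketch assumes you already have per-interaction latencies in milliseconds.

```python
from typing import List, Optional


def approximate_inp(latencies_ms: List[float]) -> Optional[float]:
    """Approximate INP from one page visit's interaction latencies.

    Reports the worst latency, but ignores one of the highest values
    for every 50 interactions recorded on the page.
    """
    if not latencies_ms:
        return None  # no interactions, so no INP value
    worst_first = sorted(latencies_ms, reverse=True)
    skip = len(worst_first) // 50
    return worst_first[min(skip, len(worst_first) - 1)]


def rate_inp(inp_ms: float) -> str:
    """Bucket an INP value: good at or below 200 ms, poor above 500 ms."""
    if inp_ms <= 200:
        return "good"
    if inp_ms <= 500:
        return "needs improvement"
    return "poor"


# Hypothetical visit with three interactions (click, scroll-triggered handler, form input).
visit = [120, 340, 80]
inp = approximate_inp(visit)
print(inp, rate_inp(inp))  # 340 needs improvement
```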

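INP doesn’t appear in Lighthouse’s standard lab reports because synthetic tests don’t simulate real interactions (Lighthouse uses Total Blocking Time as a lab proxy instead). PageSpeed Insights shows INP only in its field data, which comes from real Chrome users.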

Real user monitoring helps reveal what’s happening in practice, but it still can’t capture the full picture for AI-driven interactions.

PPC practitioners have long relied on Quality Score, a 1-10 diagnostic based on expected CTR, ad relevance, and landing page experience, to optimize landing pages and reduce costs.

SEO lacks an equivalent unified metric, leaving teams to juggle separate signals like Core Web Vitals, keyword relevance, and user engagement without a clear prioritization framework.

You can create a company-wide quality score for pages to incentivize optimization and align teams while maintaining channel-specific goals.

This score can account for page type, with sub-scores for trial, demo, or usage pages – adaptable to the content that drives the most business value.

The system should account for overlapping metrics across subscores yet remain simple enough for all teams – SEO, PPC, engineering, and product – to understand and act on.
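A minimal Python sketch of such a company-wide score follows. It assumes three non-overlapping sub-scores (performance, relevance, conversion) on a 0-100 scale. It also assumes a 1-10 output, chosen to mirror Quality Score. The page-type weights, including the `blog` type, are hypothetical placeholders.

```python
from typing import Dict

# Hypothetical weights per page type; each set sums to 1.0.
PAGE_TYPE_WEIGHTS: Dict[str, Dict[str, float]] = {
    "trial": {"performance": 0.3, "relevance": 0.3, "conversion": 0.4},
    "demo": {"performance": 0.3, "relevance": 0.2, "conversion": 0.5},
    "blog": {"performance": 0.3, "relevance": 0.5, "conversion": 0.2},
}


def page_quality_score(page_type: str, sub_scores: Dict[str, float]) -> int:
    """Blend 0-100 sub-scores with page-type weights and map the result to 1-10."""
    weights = PAGE_TYPE_WEIGHTS[page_type]
    blended = sum(sub_scores[name] * w for name, w in weights.items())  # 0-100
    # Python's round() rounds exact .5 ties to the nearest even number.
    return max(1, min(10, round(blended / 10)))


print(page_quality_score("demo", {"performance": 60, "relevance": 80, "conversion": 90}))  # 8
```

Keeping the same sub-score names across page types lets every team compare pages on one scale. Only the weights change with the page’s purpose.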

A unified scoring model gives everyone a common language and turns distributed accountability into daily practice.

When both channels share quality standards, teams can prioritize fixes that strengthen organic rankings and paid performance simultaneously.

Display advertising and SEO rarely share performance metrics, yet both pursue the same goal – converting impressions into engaged users.

Clicks per thousand impressions (CPTI) measures the number of clicks generated per 1,000 impressions, creating a shared language for evaluating content effectiveness across paid display and organic search.

For display teams, CPTI reveals which creative and targeting combinations drive engagement beyond vanity metrics like reach.

For SEO teams, applying CPTI to search impressions (via Google Search Console) shows which pages and queries convert visibility into traffic – exposing content that ranks well but fails to earn clicks.

This shared metric allows teams to compare efficiency directly: if a blog post drives 50 clicks per 1,000 organic impressions while a display campaign with similar visibility generates only 15 clicks, the performance gap warrants investigation.
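The calculation itself is simple: clicks divided by impressions, scaled to 1,000. The Python sketch below uses hypothetical raw counts chosen to reproduce the 50-versus-15 comparison. It also shows that CPTI is just click-through rate multiplied by 1,000, so a 5% CTR in Search Console corresponds to a CPTI of 50.

```python
def cpti(clicks: int, impressions: int) -> float:
    """Clicks per 1,000 impressions (equivalently, CTR x 1,000)."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return clicks * 1000 / impressions


# Hypothetical counts with similar visibility for both channels.
blog_post = cpti(clicks=600, impressions=12_000)        # organic search
display_campaign = cpti(clicks=180, impressions=12_000)  # paid display
print(blog_post, display_campaign)  # 50.0 15.0
```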

Reverse CPM offers another useful lens. It measures how long content takes to “pay for itself” – the point where it reaches ROI.

For example, if an article earns 1 million impressions in a month, it should deliver roughly 1,000 clicks, a benchmark of about one click per 1,000 impressions.
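The article doesn’t give a formula for reverse CPM. The following Python sketch is one hypothetical way to operationalize “pay for itself”: it estimates how many months of clicks it takes to cover the cost of producing a piece of content. The production cost and the value assigned to each click are placeholders. So is the assumption that monthly impressions stay flat.

```python
import math


def payback_months(
    production_cost: float,
    monthly_impressions: float,
    clicks_per_thousand: float,
    value_per_click: float,
) -> int:
    """Months until cumulative click value covers the content's cost (hypothetical model)."""
    monthly_value = monthly_impressions / 1000 * clicks_per_thousand * value_per_click
    if monthly_value <= 0:
        raise ValueError("content never pays for itself under these inputs")
    return math.ceil(production_cost / monthly_value)


# 1,000,000 impressions/month at 1 click per 1,000 impressions = 1,000 clicks/month.
# With a hypothetical $2 per click, a $5,000 article pays back in the third month.
print(payback_months(5000, 1_000_000, clicks_per_thousand=1.0, value_per_click=2.0))  # 3
```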

As generative AI continues to reshape traffic patterns, this metric will need refinement.

The most valuable insights emerge when SEO and PPC teams share operational intelligence rather than compete for credit.

PPC provides quick keyword performance data to respond to market trends faster, while SEO uncovers emerging search intent that PPC can immediately act on.

Together, these feedback loops create compound advantages.

SEO signals PPC should act on:

PPC signals SEO should act on:

When both channels share intelligence, insights extend beyond marketing performance into product and business strategy.

These feedback loops don’t require expensive tools – only an organizational commitment to regular cross-channel reviews in which teams share what’s working, what’s failing, and what deserves coordinated testing.

Treat technical performance as shared infrastructure, not channel-specific optimization.

Teams that implement unified Core Web Vitals standards, cross-channel attribution models, and distributed accountability systems will capture opportunities that siloed operations miss.

As agentic AI adoption accelerates and digital marketing grows more complex, symbiotic SEO-PPC operations become a competitive advantage rather than a luxury.

Something’s shifting in how SEO services are being marketed, and if you’ve been shopping for help with search lately, you’ve probably noticed it.

Over the past few months, “AI SEO” has emerged as a distinct service offering.

Browse service provider websites, scroll through Fiverr, or sit through sales presentations, and you’ll see it positioned as something fundamentally new and separate from traditional SEO.

Some are packaging it as “GEO” (generative engine optimization) or “AEO” (answer engine optimization), with separate pricing, distinct deliverables, and the implication that you need both this and traditional SEO to compete.

The pitch goes like this:

The data helps explain why the industry is moving so quickly.

AI-sourced traffic jumped 527% year-over-year from early 2024 to early 2025.

Service providers are responding to genuine market demand for AI search optimization.

But here’s what I’ve observed after evaluating what these AI SEO services actually deliver.

Many of these so-called new tactics are the same SEO fundamentals – just repackaged under a different name.

For marketers responsible for budget and results, this distinction matters.

It affects how you allocate resources, evaluate agency partners, and structure your search strategy.

Let’s dig into what’s really happening so you can make smarter decisions about where to invest.

The typical AI SEO sales deck has become pretty standardized.

Here are the most common claims I’m hearing.

They’ll show you how ChatGPT, Perplexity, and Claude are changing search behavior, and they’re not wrong about that.

Research shows that 82% of consumers agree that “AI-powered search is more helpful than traditional search engines,” signaling how search behavior is evolving.

The pitch emphasizes passage-level optimization, structured data, and Q&A formatting specifically for AI retrieval.

They’ll discuss how AI values mentions and citations differently than backlinks and how entity recognition matters more than keywords.

This creates urgency around a supposedly new practice that requires immediate investment.

The urgency is real: only 22% of marketers have set up LLM brand visibility monitoring. But the question is whether this requires a separate “AI SEO” service or an expansion of your existing search strategy.

To be clear, the AI capabilities are real. What’s new is the positioning – familiar SEO practices rebranded to sound more revolutionary than they are.

When you examine what’s actually being recommended (passage-level content structure, semantic clarity, Q&A formatting, earning citations and mentions), you will find that these practices have been core to SEO for years.

Google introduced passage ranking in 2020 and featured snippets back in 2014.

Research from Fractl, Search Engine Land, and MFour found that generative engine optimization “is based on similar value systems that advanced SEOs, content marketers, and digital PR teams are already experts in.”

Let me show you what I mean.

What you’re hearing: “AI-powered semantic analysis and predictive keyword intelligence.”

What you’re hearing: “Machine learning content optimization that aligns with AI algorithms.”

What you’re hearing: “Entity-based authority building for AI platforms.”

Dig deeper: AI search is booming, but SEO is still not dead

I want to be fair here. There’s genuine debate in the SEO community about whether optimizing for AI-powered search represents a distinct discipline or an evolution of existing practices.

The differences are real.

These differences affect execution, but the strategic foundation remains consistent.

You still need to:

And here’s something that reinforces the overlap.

SEO professionals recently discovered that ChatGPT’s Atlas browser directly uses Google search results.

Even AI-powered search platforms are relying on traditional search infrastructure.

So yes, there are platform-specific tactics that matter.

The question for you as a marketer isn’t whether differences exist (they do).

The real question is whether those differences justify treating this as an entirely separate service with its own strategy and budget.

Or are they simply tactical adaptations of the same fundamental approach?

Dig deeper: GEO and SEO: How to invest your time and efforts wisely

The “separate AI SEO service” approach comes with a real risk.

It can shift focus toward short-term, platform-specific tactics at the expense of long-term fundamentals.

I’m seeing recommendations that feel remarkably similar to the blackhat SEO tactics we saw a decade ago:

These tactics might work today, but they’re playing a dangerous game.

Dig deeper: Black hat GEO is real – Here’s why you should pay attention

AI platforms are still in their infancy. Their spam detection systems aren’t yet as mature as Google’s or Bing’s, but that will change, likely faster than many expect.

AI platforms like Perplexity are building their own search indexes (hundreds of billions of documents).

They’ll need to develop the same core systems traditional search engines have:

They’re supposedly buying link data from third-party providers, recognizing that understanding authority requires signals beyond just content analysis.

We’ve seen this with Google.

In the early days, keyword stuffing and link schemes worked great.

Then Google rolled out the Panda and Penguin updates, which devastated sites relying on those tactics.

Overnight, sites lost 50-90% of their traffic.

The same thing will likely happen with AI platforms.

Sites gaming visibility now with spammy tactics will face serious problems when these platforms implement stronger quality and spam detection.

As one SEO veteran put it, “It works until it doesn’t.”

Building around platform-specific tactics is like building on sand.

Focus instead on fundamentals – creating valuable content, earning authority, demonstrating expertise, and optimizing for intent – and you’ll have something sustainable across platforms.

I’m not anti-AI. Used well, it meaningfully improves SEO workflows and results.

AI excels at large-scale research and ideation – analyzing competitor content, spotting gaps, and mapping topic clusters in minutes.

For one client, it surfaced 73 subtopics we hadn’t fully considered.

But human expertise was still essential to align those ideas with business goals and strategic priorities.

AI also transforms data analysis and workflow automation – from reporting and rank tracking to technical monitoring – freeing more time for strategy.

AI clearly helps. The real question is whether these AI offerings bring truly new strategies or familiar ones powered by better tools.

After working with clients to evaluate various service models, I’ve seen consistent patterns in proposals that overpromise and underdeliver.

When evaluating any service provider, ask:

After working in SEO for 20 years, through multiple algorithm updates and trend cycles, I keep coming back to the same fundamentals:

Dig deeper: Thriving in AI search starts with SEO fundamentals

AI is genuinely changing how we work in search marketing – and that’s mostly positive.

The tools make us more efficient and enable analysis that wasn’t previously practical.

But AI only enhances good strategy. It doesn’t replace it.

Fundamentals still matter – along with audience understanding, quality, and expertise.

Search behavior is fragmenting across Google, ChatGPT, Perplexity, and social platforms, but the principles that drive visibility and trust remain consistent.

Real advantage doesn’t come from the newest tools or the flashiest “GEO” tactics.

It comes from a clear strategy, deep market understanding, strong execution of fundamentals, and smart use of technology to strengthen human expertise.

Don’t get distracted by hype or dismiss innovation. The balance lies in thoughtful AI integration within a solid strategic framework focused on business goals.

That’s what delivers sustainable results – whether people find you through Google, ChatGPT, or whatever comes next.


The post Alphabet’s AI Reckoning: Cloud Momentum vs. Search Durability appeared first on StartupHub.ai.

The growing influence of artificial intelligence presents a dual narrative for tech giants, particularly Alphabet, as it navigates both profound opportunities and existential threats to its established revenue streams. As CNBC’s MacKenzie Sigalos reported on “Worldwide Exchange,” ahead of Alphabet’s recent earnings call, the company finds itself at a critical juncture, balancing the burgeoning momentum […]


The post TestSprite raises $6.7M to fix AI generated code testing appeared first on StartupHub.ai.

TestSprite raised $6.7 million to address the critical bottleneck of AI generated code testing, enabling developers to validate AI-written code at unprecedented speeds.


The post Amazon’s AI Power Play: Inside the $11 Billion Indiana Data Center appeared first on StartupHub.ai.

Amazon’s latest $11 billion AI data center in Indiana signals a profound shift in the foundational infrastructure powering the artificial intelligence revolution, an investment of unprecedented scale that underscores the intense competition for AI compute. In less than a year, Amazon transformed vast Indiana cornfields into its largest AI data center yet, an astonishing feat […]


The post AI Browser Security: The Peril of the Premature Launch appeared first on StartupHub.ai.

“The rush to get these things to market has not allowed them to be secured.” This stark assessment from Dave McGinnis, Global Partner for Cyber Threat Management Offering Group at IBM, encapsulates the central tension explored in a recent episode of IBM’s Security Intelligence podcast. Host Matt Kosinski, alongside McGinnis and fellow panelists Suja Viswesan […]


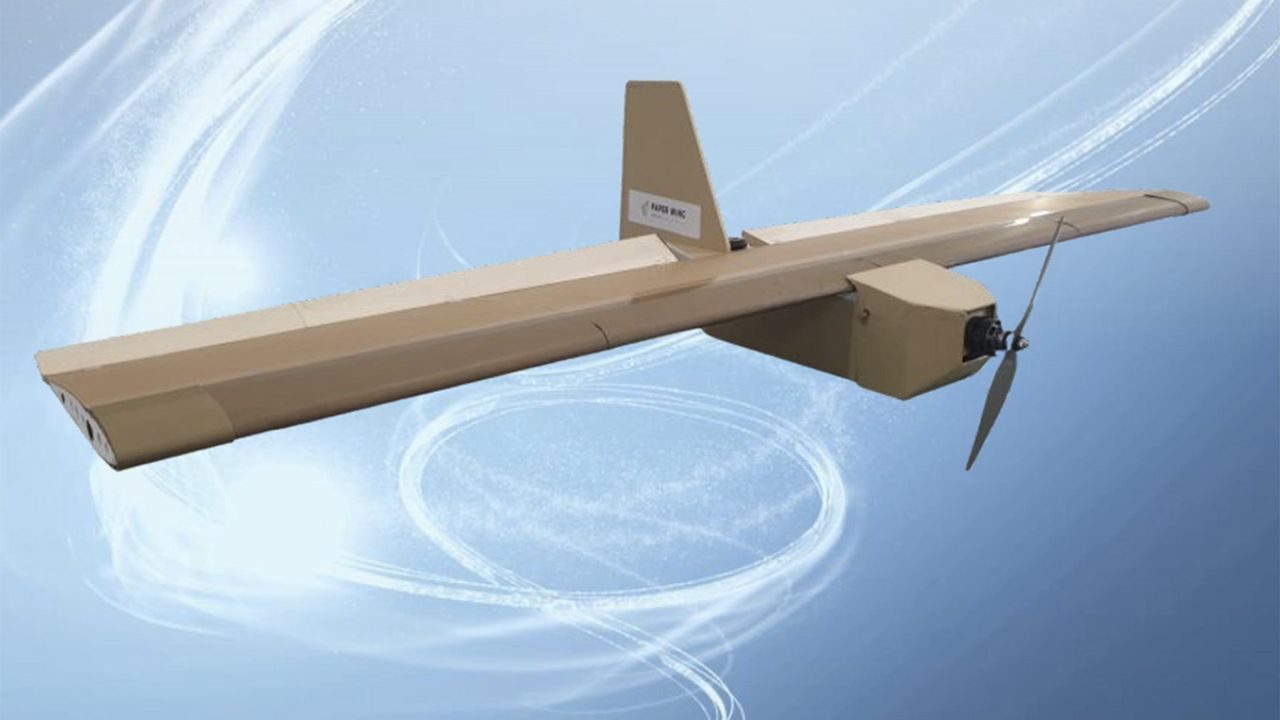

Seattle-based Radical says it has put a full-size prototype for a solar-powered drone through its first flight, marking one low-altitude step in the startup’s campaign to send robo-planes into the stratosphere for long-duration military and commercial missions.

“It’s a 120-foot-wingspan aircraft that only weighs 240 pounds,” Radical CEO James Thomas told GeekWire. “We’re talking about something that has a wingspan just a bit bigger than a Boeing 737, but it only weighs a little bit more than a person. So, it’s a pretty extreme piece of engineering, and we’re really proud of what our team has achieved so far.”

Last month’s flight test was conducted at the Tillamook UAS Test Range in Oregon, which is one of the sites designated by the Federal Aviation Administration for testing uncrewed aerial systems. Thomas declined to delve into the details about the flight’s duration or maximum altitude, other than to say that it was a low-altitude flight.

“We take off from the top of a car, and takeoff speeds are very low, so it flies just over 15 miles an hour on the ground or at low altitudes,” he said. (Thomas later added that the car was a Subaru, a choice he called “a Pacific Northwest move, I guess.”)

The prototype ran on battery power alone, but future flights will make use of solar arrays mounted on the plane’s wings to keep it in the air at altitudes as high as 65,000 feet for months at a time. For last month’s test, engineers added ballast to the prototype to match the weight of the solar panels and batteries required for stratospheric flight. Thomas said he expects high-altitude tests to begin next year.

Thomas and his fellow co-founder, chief technology officer Cyriel Notteboom, are veterans of Prime Air, Amazon’s effort to field a fleet of delivery drones. They left Amazon in mid-2022 to launch Radical and have since raised more than $4.5 million in funding. September’s test of a full-size drone follows up on the 24-hour-plus flight of a 13-pound subscale prototype in 2023.

The company’s manufacturing operation is based in Seattle’s Ballard neighborhood. There are currently six people on the team, plus a new hire, Thomas said. “We’re still lean,” he said. “To make this airplane work, it has to be really efficient, right? Really efficient electronics and aerodynamics. And you also need a really efficient team.”

Thomas said Radical has attracted interest from potential customers, but he shied away from discussing details. “We’re working with groups in the government and also commercially,” he said. “Obviously there are applications at the end of this that span everything from imagery through telecommunications and weather forecasting. There are a lot of people really interested in the technology, and the thing that stops us from serving those customers is not having a product up in the sky. So, that’s what we’re working through.”

Radical’s solar-powered airplane, known as Evenstar, is just one example of a class of aircraft known as high-altitude platform stations, or HAPS. Thomas and his teammates use a different term to refer to Evenstar. They call it a StratoSat, because it’s designed to take on many of the tasks typically assigned to satellites — but without the costs and the hassles associated with launching a spacecraft.

Potential applications include doing surveillance from a vantage point that’s difficult to attack, providing telecommunication links in areas where connectivity is constrained, monitoring weather patterns and conducting atmospheric research.

“We have customers who are really excited about the way that this can improve how we understand Earth’s weather systems and climate,” Thomas said. “That’s an application that we’re really excited to get into.”

Evenstar will carry payloads weighing up to about 33 pounds (15 kilograms). “That was based on analysis about major use cases,” Thomas explained. “That payload is enough to carry high-bandwidth, direct-to-device radio communications, or to carry ultra-high-resolution imaging equipment.”

Radical isn’t the only company working on solar-powered aircraft built for long-duration flights in the stratosphere. Other entrants in the market include AeroVironment, SoftBank, BAE Systems, Swift Engineering, Kea Aerospace, Korea Aerospace Industries and NewSpace Research & Technologies. Airbus’ solar-powered Zephyr set the record for long-duration stratospheric flight in 2022 with a 64-day test mission that ended in a crash.

Among those who tried but failed to field stratospheric solar drones are Alphabet, which closed down Titan Aerospace in 2016; and Facebook, which abandoned Project Aquila in 2018.

Thomas said the outlook for high-flying solar planes has brightened in the past decade.

“The key supporting technologies have matured enormously,” he said. “Commercial battery energy density has doubled in that 10-year time period. Solar cells are 10 times cheaper than they were just 10 years ago. And then you have advances in compute and AI, and all of these things feed into the situation we have now, where it’s actually possible to make the models close — whereas when we run the 10-year-old numbers, we can’t close the models.”

The way Thomas sees it, the concept behind Radical isn’t all that radical anymore.

“Not only do our models say this will work, but we have flight data that agrees with our models, and says this is a technology that can serve its purpose and unlock the potential of persistent infrastructure in the sky,” he said. “I can see why other people are pursuing it. It’s not a new idea. It’s one that people have wanted to crack for a long time, and we’re at this critical inflection point where it’s finally possible.”

In the era of AI-generated software, developers still need to make sure their code is clean. That’s where TestSprite wants to help.

The Seattle startup announced $6.7 million in seed funding to expand its platform that automatically tests and monitors code written by AI tools such as GitHub Copilot, Cursor, and Windsurf.

TestSprite’s autonomous agent integrates directly into development environments, running tests throughout the coding process rather than as a separate step after deployment.

“As AI writes more code, validation becomes the bottleneck,” said CEO Yunhao Jiao. “TestSprite solves that by making testing autonomous and continuous, matching AI speed.”

The platform can generate and run front- and back-end tests during development to ensure AI-written code works as expected, help AI IDEs (Integrated Development Environments) fix software based on TestSprite’s integration testing reports, and continuously update and rerun test cases to monitor deployed software for ongoing reliability.

Founded last year, TestSprite says its user base grew from 6,000 to 35,000 in three months, and revenue has doubled each month since launching its 2.0 version and new Model Context Protocol (MCP) integration. The company employs about 25 people.

Jiao is a former engineer at Amazon and a natural language processing researcher. He co-founded TestSprite with Rui Li, a former Google engineer.

Jiao said TestSprite doesn’t compete with AI coding copilots, but complements them by focusing on continuous validation and test generation. Developers can trigger tests using simple natural-language commands, such as “Test my payment-related features,” directly inside their IDEs.

The seed round was led by Bellevue, Wash.-based Trilogy Equity Partners, with participation from Techstars, Jinqiu Capital, MiraclePlus, Hat-trick Capital, Baidu Ventures, and EdgeCase Capital Partners. Total funding to date is about $8.1 million.

It looks like Crash Bandicoot is the newest video game classic to move to Netflix. Netflix is the king of video game adaptations. In recent years, Netflix has adapted Castlevania, Tomb Raider, Splinter Cell, Sonic the Hedgehog, and even Cyberpunk 2077. Now, the streaming giant is reportedly developing a new animated series based on Crash […]

The post Crash Bandicoot Netflix series in the works – reports claim appeared first on OC3D.

No founder plans for the day they get fired from their own company.

You plan for funding rounds, product launches and exits, but not for the boardroom moment when everyone raises their hand, and you realize your journey inside the company is over.

It happened to me. I called that board meeting. I set the vote. We had to choose who would stay, me or my co-founder. The vote didn’t go my way.

In movies, this is where the music swells and the credits roll. Steve Jobs after John Sculley. Travis Kalanick after Bill Gurley. In real life, there’s no cinematic pause. No final scene. Just the quiet realization that everything you built now belongs to someone else.

What follows isn’t drama, either. It’s disorientation. And like most founders, I had no idea how to handle it.

When it ended, I filled my calendar with aimless meetings. Five or six a day. Not because they had any real purpose, but because it felt strange not to be doing business. For more than 10 years, I’d never had a day when I didn’t have to think about work. A startup teaches you to fix things fast.

When you’re out, though, there’s nothing left to fix. Only yourself. Getting pushed out isn’t like missing a quarterly target. It’s like losing the story you’ve been telling yourself for years.

The hardest part is that you don’t know who to blame.

Investors? They were doing their job. Yourself? Every decision made sense in context. So the frustration lands on the person closest to you. Your co-founder. It’s not about logic. I would say it is more of a defense mechanism. It’s how the mind tries to make sense of loss.

For months, I kept asking: What did we do wrong? It took me a couple of years to see the pattern.

Later, working inside a venture fund helped me see the truth. I saw the same story play out again and again. Founders repeating the same emotional arc, as follows:

Every time, the same sequence. And when the dream fades, blame fills the gap.

The pattern itself is that the anger toward a co-founder is often a projection of disappointment from a failed deal. If that energy isn’t processed consciously, it finds its own way out, usually as anger. You can’t really be mad at yourself; you did everything right. The other side acted in their own interest. So it lands on the person next to you, your co-founder and your team, and for them, it’s you.

That’s where I have a bit of a grievance with investors: they often see this dynamic coming and could at least warn founders about it.

Once I recognized the pattern, I stopped seeing my story as a failure. It was part of a cycle almost every founder goes through, only most don’t talk about it.

Traditional business tools didn’t help. OKRs, planning sessions, strategy off-sites, none of it worked on the inner collapse that comes when your identity and your company split apart.

This led me to begin studying Gestalt therapy. It gave me the language to understand how situations like this actually work, their cycles, causes and effects, and how to think about them with the right awareness and perspective. One part of building startups isn’t about pivots or fundraising. It’s realizing how much of yourself you’ve tied to the story you’re telling the world.

The point is to first become conscious of your anger, and then let it out.

Acceptance doesn’t show up all at once. It arrives in pieces.

For me, the first piece came when I watched another founder go through the same breakdown and recognized every stage.

The second came when my first startup was acquired. Not at the valuation I’d dreamed of, but enough to accept that it continued without me. The third came with my current company, Intch, which is built from calm, not from fear.

I no longer measure success by control, but by clarity.

Here’s what I’d share now with another entrepreneur who finds themselves in the same situation.

Founders are trained to manage everything except their own psychology. But startups are way more than capital and code. They run on the emotional architecture of the people who build them. And when that structure breaks, rebuilding it is the most important startup you’ll ever work on.

Yakov Filippenko is a seasoned entrepreneur with more than 10 years of experience in IT and technology, as well as in scaling businesses internationally. As a product manager at Yandex, he led a team that grew the product’s user base from 500,000 to 1.2 million and secured its entry into the international market. Subsequently, he co-founded SailPlay, which he scaled to 45 countries before exiting when it was acquired by Retail Rocket in 2018. In 2021, Filippenko launched Intch, an AI-powered platform connecting part-time professionals with flexible roles.

Illustration: Dom Guzman

With its many accessibility options, Ninja Gaiden 4 is one of the most approachable entries in the series, allowing players to experience its high-speed action without letting the high challenge level get in the way of enjoyment. Those who want to truly appreciate the game, however, will wish to master many of its intricacies, put their skills to the test, and attempt to achieve an S rank for completing each of the story chapters. Here are some tips to help you understand the mission scoring system and what you should always strive to do to achieve such a high rank. […]

Read full article at https://wccftech.com/how-to/ninja-gaiden-4-achieve-s-rank-easily-in-all-missions-with-this-trick/

The A20 and A20 Pro will be Apple’s first chipsets fabricated on TSMC’s 2nm process, pretty much highlighting the company’s propensity to jump to the newest manufacturing nodes as quickly as possible to have an advantage over the competition. On the same lithography, we expect the California-based giant to introduce a total of four chipsets, and after a couple of generations, Apple will switch to an even more advanced technology. The most obvious transition would be TSMC’s A16, or 1.6nm, but a report says neither company has entered talks for this node. Future Apple chipsets are expected to take advantage of […]

Read full article at https://wccftech.com/apple-not-yet-in-talks-with-tsmc-over-a16-process/

John Romero might not be a name that youngsters recognize, but he was a legend of the early days of the first-person shooter genre, co-founding id Software and making videogames like Wolfenstein 3D, Doom, Hexen, and Quake, to name a few. Nowadays, he makes smaller titles at Romero Games. The studio's most recent title was the Mafia-themed turn-based strategy game Empire of Sin, launched in late 2020 to a mixed reception. More recently, John Romero and his fellow developers signed a deal with Microsoft for their next project, but that deal went awry along with the latest Xbox layoffs. The […]

Read full article at https://wccftech.com/john-romero-talking-companies-finish-game-funded-by-microsoft/

Microsoft CEO Satya Nadella was featured in a live interview on TBPN (Technology Business Programming Network) discussing various topics, including the company's updated multiplatform strategy on the gaming front. Nadella pointed out that following the acquisition of Activision Blizzard, Microsoft is now the largest gaming publisher in terms of revenue. The goal, then, is to be everywhere the consumer is, just like with Office. The Microsoft CEO then interestingly pointed to TikTok, or, to be more accurate, short-form video as a whole, as the true competitor of gaming. Remember, the biggest gaming business is the Windows business. And of course, […]

Read full article at https://wccftech.com/microsoft-ceo-largest-gaming-publisher-want-to-be-everywhere-competition-tiktok/

ZipWik lets you turn several documents—PDFs, slides, images or spreadsheets—into one simple document and a link you can share anywhere, from WhatsApp to Slack. You can control who sees it, set it to expire, and skip the hassle of sending large attachments. ZipWik also shows you what happens after you share: who viewed it, how long they spent, whether they downloaded or shared it, and which documents got the most attention. It’s an easy way to share files, stay in control, and actually understand how people engage with your content.

No more struggling with large attachments, sharing documents you can’t control, or being unable to combine document formats. ZipWik does it all. Try it today.

AI launches your product: page, posts, PH draft all in one

Your copilot for on-brand content at any scale

One workspace where AI, code, and teams work together

Host LLMs across devices sharing GPU to make your AI go brrr

Where startup financial data meets business intelligence

The weekly AI project chart judged by AI

All investor relations material in one powerful AI chat.

Understand, review, and refactor your code 20x faster

Preview and share live app updates across any device

Stop running commands one by one yourself

The AI writing partner with taste

Turn a boring LinkedIn post into a fun TikTok-style video

Build and deploy local AI agents offline

The Production AI Platform

Instant compliant test data for engineering teams

Screen recordings made beautiful

AI code editor for PyTorch development on GPU workspaces

AI-powered speech-to-text, TTS, and translation Mac app

Build full-stack apps with AI, database first

Assign, steer, and track copilot coding agent tasks

Social Media Videos to Data API

The post Arm’s GitHub Copilot Agentic AI: Cloud Migration’s Next Leap appeared first on StartupHub.ai.

Arm and GitHub's new Migration Assistant Custom Agent for GitHub Copilot Agentic AI fundamentally transforms cloud migration to Arm-based infrastructure.

The post RealWear Arc 3 launch: A lighter AR headset with natural language AI for industry appeared first on StartupHub.ai.

The RealWear Arc 3 launch introduces a lightweight AR headset and a natural language voice OS, aiming to democratize industrial augmented reality.

The post BRKZ’s $30M finance facility aims to unblock Saudi construction appeared first on StartupHub.ai.

BRKZ's $30M finance facility will provide crucial flexible payment options, accelerating Saudi Arabia's massive construction projects.

The post Vesence lands $9M to bring rigorous AI review to law firms appeared first on StartupHub.ai.

Vesence's $9M seed round fuels its mission to embed rigorous AI review agents directly into Microsoft Office, promising law firms unparalleled precision and compliance.

The post Cartesia’s Sonic-3 TTS laughs and emotes at human speed appeared first on StartupHub.ai.

Cartesia's Sonic-3 uses a State Space Model architecture to deliver emotionally expressive AI speech, including laughter, at speeds faster than a human can respond.

The post Salesforce Agentic AI: The Enterprise Evolution appeared first on StartupHub.ai.

Salesforce's 'Agentic AI' strategy, featuring Agentforce 360 and Forward Deployed Engineers, aims to fundamentally redefine enterprise operations with unified, workflow-spanning AI agents.

The post Polygraf AI Closes $9.5M Funding Round to Scale Its Secure AI Solutions for Enterprise Defense and Intelligence appeared first on StartupHub.ai.

Polygraf AI, based in Austin, Texas, announced the closing of its $9.5M seed round, with participation from DOMiNO Ventures, Allegis Capital, Alumni Ventures, DataPower VC, and previous investors, to accelerate its mission to bring clarity and trust to enterprise AI. With the new $9.5M seed round, Polygraf AI is building the next generation of enterprise AI […]

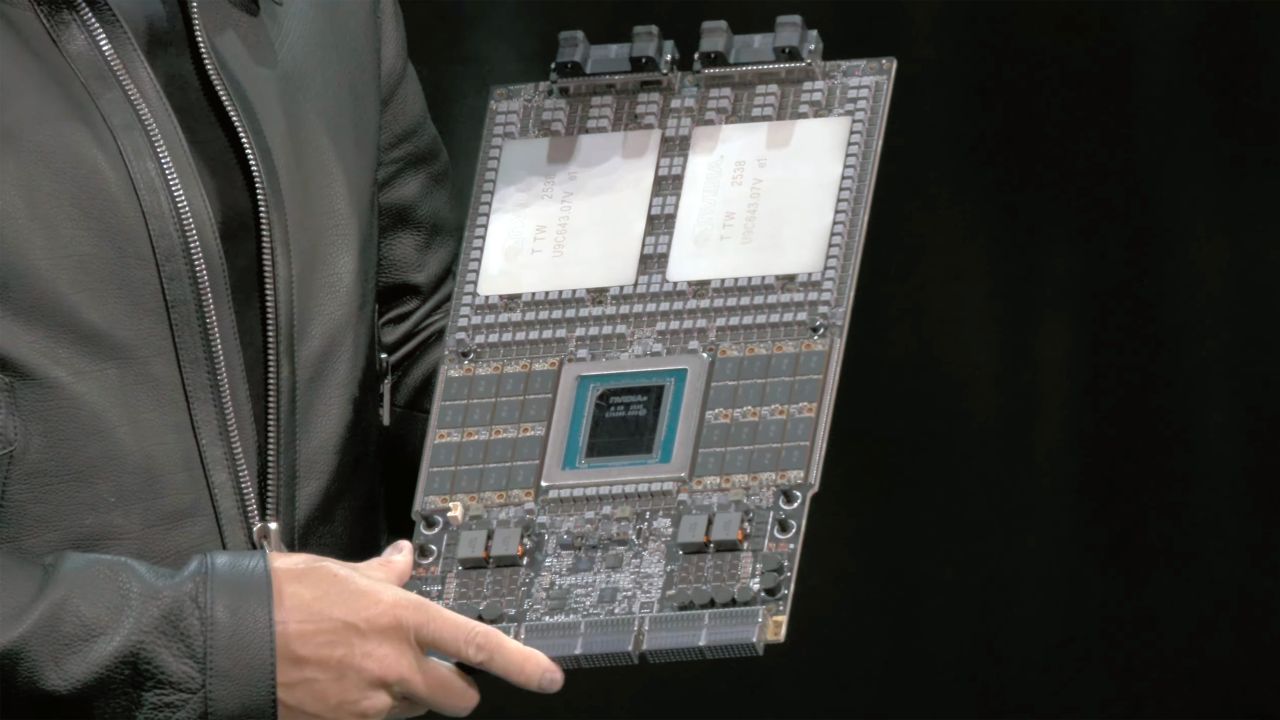

The post NVIDIA BlueField-4 Powers AI Factory OS appeared first on StartupHub.ai.

NVIDIA BlueField-4 is poised to redefine AI infrastructure, offering unprecedented compute power, 800Gb/s throughput, and advanced security for gigascale AI factories.

The post Primaa raises €7M to advance AI cancer diagnostics appeared first on StartupHub.ai.

Biotech company Primaa raised €7 million to expand its AI software that helps pathologists improve the speed and accuracy of cancer diagnostics.

GPU prices have finally cooled, DDR5 has spiked, and AMD's new Threadripper opens fresh pro power. Here are five builds for every budget - mix and match to fit your needs.

Fitresume.app is a free, AI-driven resume builder that generates ATS-friendly resumes, lets you choose polished templates, and custom-tailors every resume to the exact wording of each job description. Resumes are ready to download as PDFs in seconds. Beyond writing, it tracks every application, follow-up, and interview while visualizing your entire pipeline with an interactive Sankey diagram, so you stay organised and land offers faster.

The post NVIDIA Tackles AI Energy Consumption with Gigawatt Blueprint appeared first on StartupHub.ai.

NVIDIA's Omniverse DSX blueprint provides a standardized, energy-efficient framework for designing and operating gigawatt-scale AI factories, directly addressing AI energy consumption.

The post NVIDIA Open Models Broaden AI Innovation Access appeared first on StartupHub.ai.

NVIDIA's new open models and data across language, robotics, and biology are set to democratize advanced AI and accelerate innovation.

The post OpenAI Restructuring and Amazon’s AI Paradox Reshape Tech Landscape appeared first on StartupHub.ai.

The capital requirements and strategic maneuvering defining the artificial intelligence frontier are starkly evident in recent developments, from OpenAI’s finalized restructuring to Amazon’s contrasting AI investment strategy. CNBC’s Morgan Brennan recently spoke with CNBC Business News reporter MacKenzie Sigalos, delving into the implications of these pivotal shifts for the broader tech ecosystem and workforce. Their […]

The post NVIDIA AI Factory Government: Securing Public Sector AI appeared first on StartupHub.ai.

NVIDIA's AI Factory for Government provides a secure, full-stack AI reference design, enabling federal agencies to deploy mission-critical AI with stringent security.

The post NVIDIA IGX Thor Powers Real-Time AI at the Industrial Edge appeared first on StartupHub.ai.

NVIDIA IGX Thor is an industrial-grade platform delivering 8x the AI compute of its predecessor, enabling real-time physical AI for critical industrial and medical applications.

NVIDIA's market position in China could see a significant boost following the Trump-Xi meeting, as President Trump hints at discussing 'Blackwell' AI chips for Beijing.

NVIDIA's Blackwell AI Chip Will Be a Topic of Discussion at the Trump-Xi Meeting, With a Potential Breakthrough in Sight

The Chinese market has been a significant challenge for Jensen Huang since US-China trade hostilities began, and now there may be relief on the horizon for NVIDIA. According to a report by Bloomberg, President Trump has suggested discussing NVIDIA's Blackwell AI chip with his Chinese counterpart, indicating that chips could […]

Read full article at https://wccftech.com/nvidia-could-receive-approval-for-blackwell-ai-chip-in-china/

YouTube's also changing the name of the 'copyright' tab in YouTube Studio.

Amazon will lay off 2,303 corporate employees in Washington state, primarily in its Seattle and Bellevue offices, according to a filing with the state Employment Security Department that provides the first geographic breakdown of the company’s 14,000 global job cuts.

A detailed list included with the state filing shows a wide array of impacted roles, including software engineers, program managers, product managers, and designers, as well as a significant number of recruiters and human resources staff.

Senior and principal-level roles are among those being cut, aligning with a company-wide push to use the cutbacks to help reduce bureaucracy and operate more efficiently.

Amazon announced the cuts Tuesday morning, part of a larger push by CEO Andy Jassy to streamline the company. Jassy had previously told Amazon employees in June that efficiency gains from AI would likely lead to a smaller corporate workforce over time.

In a memo from HR chief Beth Galetti, the company signaled that further cutbacks will continue into 2026. Reuters reported Monday that the layoffs could ultimately total as many as 30,000 people, a figure that remains possible as the cuts continue into next year.

The post Lilly Blackwell Drug Discovery: A New Era appeared first on StartupHub.ai.

Lilly's new AI factory, powered by NVIDIA Blackwell GPUs, marks a pivotal shift in drug discovery, promising unprecedented speed and scale in pharmaceutical innovation.

The post NVIDIA AI-RAN: Open Source Rewrites Wireless Innovation appeared first on StartupHub.ai.

NVIDIA's open-sourcing of Aerial software, coupled with DGX Spark, is democratizing AI-native 5G and 6G development, accelerating wireless innovation at an unprecedented pace.

The post Celestica CEO Mionis: AI is a Must-Have Utility, Not a Bubble appeared first on StartupHub.ai.

“AI right now used to be a nice-to-have. It’s a utility, it’s a must-have.” This declarative statement from Celestica President and CEO Rob Mionis on CNBC’s Mad Money with Jim Cramer cuts directly to the core of the current technological zeitgeist. It frames artificial intelligence not as a speculative fad or a nascent technology still […]

The post Google AI Studio Unleashes “Vibe Coding” Revolutionizing AI Agent Development appeared first on StartupHub.ai.

The era of complex, code-heavy AI development is rapidly giving way to an intuitive, natural language-driven approach, dramatically democratizing creation. At the forefront of this shift is Google AI Studio, a platform designed to accelerate the journey from concept to fully functional AI application in minutes. This new “vibe coding” experience, showcased by Logan Kilpatrick, […]

The post AI Fusion Energy: NVIDIA, GA Unveil Digital Twin Breakthrough appeared first on StartupHub.ai.

NVIDIA and General Atomics have launched an AI-enabled digital twin for fusion reactors, dramatically accelerating the path to commercial AI fusion energy.

The post CyberRidge raises $26M to advance optical security for fiber networks appeared first on StartupHub.ai.

CyberRidge launched with $26 million to develop its optical security system that protects data in fiber-optic networks from eavesdropping.

MSI GeForce RTX 5050 Shadow 2X 8GB: $249. The GeForce RTX 5050 is the most affordable graphics card based on NVIDIA's Blackwell architecture, targets mainstream gamers, and is the first RTX XX50-series desktop GPU in years. Highlights: Blackwell architecture, power efficient, smaller form factor, cool and quiet, DLSS 4 and RTX Neural Rendering, Latest...

Apple is finally getting ready to introduce OLED displays in a wider range of its products. However, don't expect a broad-based debut soon, especially given the Cupertino giant's tendency to move at a glacial pace when introducing new technology.

Apple is gearing up to introduce OLED displays in the future versions of the MacBook Air, iPad Air, and iPad mini, with water resistance added for good measure

Bloomberg's legendary tipster, Mark Gurman, is out with another scoop today, focusing on a much-anticipated display overhaul for the MacBook Air, iPad Air, and iPad mini, all of which are now slated to […]

Read full article at https://wccftech.com/apple-is-testing-oled-displays-for-the-macbook-air-ipad-air-and-ipad-mini-with-water-resistance-also-in-the-offing/

In an industry where model size is often seen as a proxy for intelligence, IBM is charting a different course — one that values efficiency over enormity, and accessibility over abstraction.

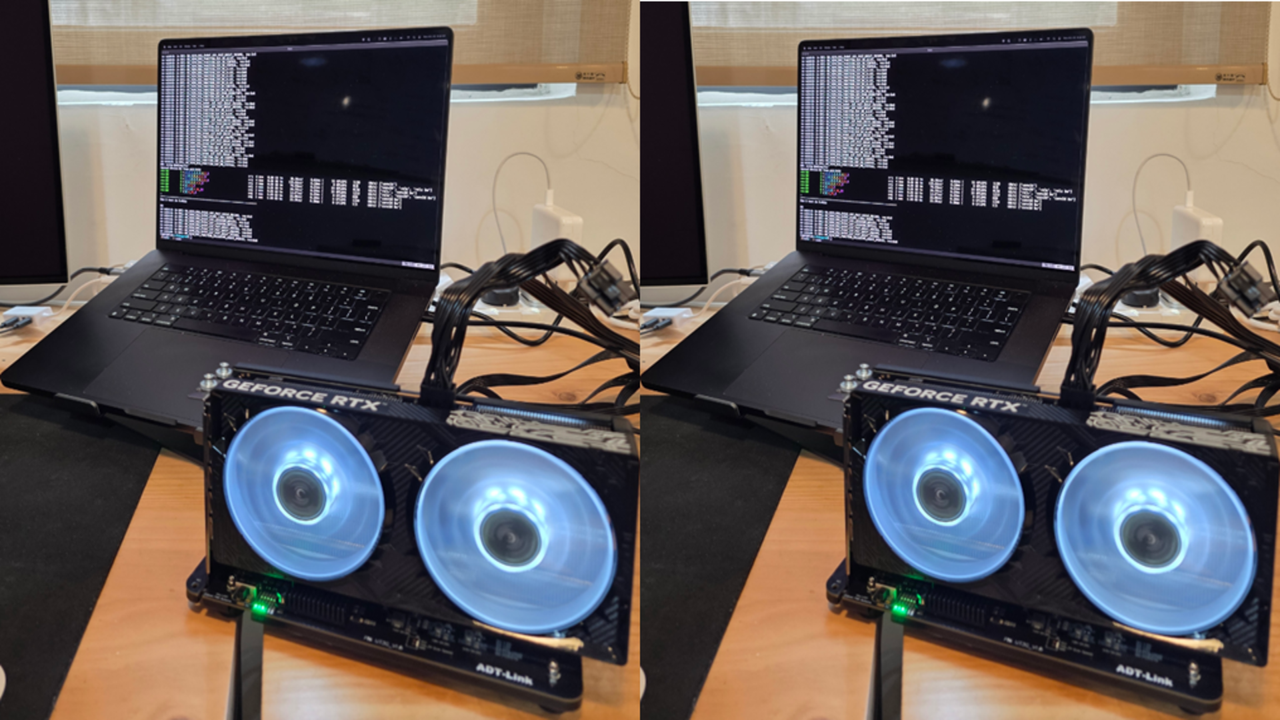

The 114-year-old tech giant's four new Granite 4.0 Nano models, released today, range from just 350 million to 1.5 billion parameters, a fraction of the size of their server-bound cousins from the likes of OpenAI, Anthropic, and Google.

These models are designed to be highly accessible: the 350M variants can run comfortably on a modern laptop CPU with 8–16GB of RAM, while the 1.5B models typically require a GPU with at least 6–8GB of VRAM for smooth performance — or sufficient system RAM and swap for CPU-only inference. This makes them well-suited for developers building applications on consumer hardware or at the edge, without relying on cloud compute.
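As a rough check on these hardware figures, the memory needed just to hold a model's weights is about the parameter count multiplied by the bytes stored per parameter. The minimal sketch below assumes 16-bit (2-byte) weights and, for comparison, 8-bit and 4-bit quantization; these precisions are illustrative assumptions, not IBM's published deployment settings, and the estimate ignores activations, the KV cache, and runtime overhead, so real requirements will be higher.

```python
# Back-of-the-envelope estimate of weight memory only
# (no activations, KV cache, or runtime overhead are included).

# Bytes per parameter at a few common precisions (illustrative assumptions).
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Return approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    # Endpoints of the Nano range described in the article: 350M and 1.5B parameters.
    for label, params in [("350M", 350e6), ("1.5B", 1.5e9)]:
        estimates = ", ".join(
            f"{precision}: {weight_memory_gb(params, nbytes):.2f} GB"
            for precision, nbytes in BYTES_PER_PARAM.items()
        )
        print(f"{label} parameters -> {estimates}")
```

At 16-bit precision, the weights of a 1.5-billion-parameter model alone come to roughly 3 GB, versus about 0.7 GB for a 350-million-parameter model, which helps explain why the larger Nano models are described as most comfortable on a GPU with 6 to 8 GB of VRAM once overhead is added.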

In fact, the smallest ones can even run locally in a web browser, as Joshua Lochner (aka Xenova), creator of Transformers.js and a machine learning engineer at Hugging Face, wrote on the social network X.

All the Granite 4.0 Nano models are released under the Apache 2.0 license, which makes them usable by researchers and by enterprise or indie developers, including for commercial purposes.

They are natively compatible with llama.cpp, vLLM, and MLX and are certified under ISO 42001 for responsible AI development — a standard IBM helped pioneer.

But in this case, small doesn't mean less capable — it might just mean smarter design.

These compact models are built not for data centers, but for edge devices, laptops, and local inference, where compute is scarce and latency matters.

And despite their small size, the Nano models are showing benchmark results that rival or even exceed the performance of larger models in the same category.

The release is a signal that a new AI frontier is rapidly forming — one not dominated by sheer scale, but by strategic scaling.

The Granite 4.0 Nano family includes four open-source models now available on Hugging Face:

Granite-4.0-H-1B (~1.5B parameters) – Hybrid-SSM architecture

Granite-4.0-H-350M (~350M parameters) – Hybrid-SSM architecture

Granite-4.0-1B – Transformer-based variant, parameter count closer to 2B

Granite-4.0-350M – Transformer-based variant

The H-series models, Granite-4.0-H-1B and H-350M, use a hybrid state-space model (SSM) architecture that combines efficiency with strong performance, making them well suited to low-latency edge environments.
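To make the efficiency claim concrete, the minimal sketch below runs a toy discrete linear state-space recurrence, h_t = A h_{t-1} + B x_t with output y_t = C h_t. It is a simplified textbook SSM, not IBM's implementation: Mamba-2 layers make these parameters depend on the input and use hardware-efficient algorithms, and the matrices here are arbitrary values chosen for illustration. What it does show is the property that matters at the edge: each step updates a fixed-size state, so memory does not grow with the length of the input.

```python
# Toy discrete linear state-space model (SSM) scan in pure Python.
# A simplified textbook SSM, NOT IBM's Mamba-2 layers; matrices are arbitrary.
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply matrix m (a list of rows) by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def ssm_scan(A: Matrix, B: Matrix, C: Matrix, xs: List[Vector]) -> List[Vector]:
    """Apply h_t = A h_{t-1} + B x_t and y_t = C h_t to each input x_t.

    Only the current state h is carried between steps, so memory use stays
    fixed however long the input sequence is.
    """
    h: Vector = [0.0] * len(A)  # fixed-size hidden state, starts at zero
    ys: List[Vector] = []
    for x in xs:
        h = [ah + bx for ah, bx in zip(matvec(A, h), matvec(B, x))]
        ys.append(matvec(C, h))
    return ys


if __name__ == "__main__":
    A = [[0.5, 0.0], [0.0, 0.9]]  # decay rates for two state channels
    B = [[1.0], [1.0]]            # feeds a scalar input into both channels
    C = [[1.0, 1.0]]              # sums the channels into a scalar output
    impulse = [[1.0], [0.0], [0.0]]
    print(ssm_scan(A, B, C, impulse))  # outputs decay: ~2.0, ~1.4, ~1.06
```

Attention, by contrast, lets every new token look back at all previous tokens, which is more expressive but requires keeping per-token data around; hybrid designs mix the two to trade off precision against memory.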

Meanwhile, the standard transformer variants, Granite-4.0-1B and 350M, offer broader compatibility with tools like llama.cpp and are intended for use cases where the hybrid architecture isn’t yet supported.

In practice, the transformer 1B model is closer to 2B parameters, but aligns performance-wise with its hybrid sibling, offering developers flexibility based on their runtime constraints.

“The hybrid variant is a true 1B model. However, the non-hybrid variant is closer to 2B, but we opted to keep the naming aligned to the hybrid variant to make the connection easily visible,” explained Emma, Product Marketing lead for Granite, during a Reddit "Ask Me Anything" (AMA) session on r/LocalLLaMA.

IBM is entering a crowded and rapidly evolving market of small language models (SLMs), competing with offerings like Qwen3, Google's Gemma, LiquidAI’s LFM2, and even Mistral’s dense models in the sub-2B parameter space.

While OpenAI and Anthropic focus on models that require clusters of GPUs and sophisticated inference optimization, IBM’s Nano family is aimed squarely at developers who want to run performant LLMs on local or constrained hardware.

In benchmark testing, IBM’s new models consistently top the charts in their class. According to data shared on X by David Cox, VP of AI Models at IBM Research:

On IFEval (instruction following), Granite-4.0-H-1B scored 78.5, outperforming Qwen3-1.7B (73.1) and other 1–2B models.

On BFCLv3 (function/tool calling), Granite-4.0-1B led with a score of 54.8, the highest in its size class.

On safety benchmarks (SALAD and AttaQ), the Granite models scored over 90%, surpassing similarly sized competitors.

Overall, the Granite-4.0-1B achieved a leading average benchmark score of 68.3% across general knowledge, math, code, and safety domains.

This performance is especially significant given the hardware constraints these models are designed for.

They require less memory, run faster on CPUs or mobile devices, and don’t need cloud infrastructure or GPU acceleration to deliver usable results.

In the early wave of LLMs, bigger meant better — more parameters translated to better generalization, deeper reasoning, and richer output.

But as transformer research matured, it became clear that architecture, training quality, and task-specific tuning could allow smaller models to punch well above their weight class.

IBM is banking on this evolution. By releasing open, small models that are competitive in real-world tasks, the company is offering an alternative to the monolithic AI APIs that dominate today’s application stack.

In fact, the Nano models address three increasingly important needs:

Deployment flexibility — they run anywhere, from mobile to microservers.

Inference privacy — users can keep data local with no need to call out to cloud APIs.

Openness and auditability — source code and model weights are publicly available under an open license.

IBM’s Granite team didn’t just launch the models and walk away — they took to Reddit’s open source community r/LocalLLaMA to engage directly with developers.

In an AMA-style thread, Emma (Product Marketing, Granite) answered technical questions, addressed concerns about naming conventions, and dropped hints about what’s next.

Notable confirmations from the thread:

A larger Granite 4.0 model is currently in training

Reasoning-focused models ("thinking counterparts") are in the pipeline

IBM will release fine-tuning recipes and a full training paper soon

More tooling and platform compatibility is on the roadmap

Users responded enthusiastically to the models’ capabilities, especially in instruction-following and structured response tasks. One commenter summed it up:

“This is big if true for a 1B model — if quality is nice and it gives consistent outputs. Function-calling tasks, multilingual dialog, FIM completions… this could be a real workhorse.”

Another user remarked:

“The Granite Tiny is already my go-to for web search in LM Studio — better than some Qwen models. Tempted to give Nano a shot.”

IBM’s push into large language models began in earnest in late 2023 with the debut of the Granite foundation model family, starting with models like Granite.13b.instruct and Granite.13b.chat. Released for use within its Watsonx platform, these initial decoder-only models signaled IBM’s ambition to build enterprise-grade AI systems that prioritize transparency, efficiency, and performance. The company open-sourced select Granite code models under the Apache 2.0 license in mid-2024, laying the groundwork for broader adoption and developer experimentation.

The real inflection point came with Granite 3.0 in October 2024 — a fully open-source suite of general-purpose and domain-specialized models ranging from 1B to 8B parameters. These models emphasized efficiency over brute scale, offering capabilities like longer context windows, instruction tuning, and integrated guardrails. IBM positioned Granite 3.0 as a direct competitor to Meta’s Llama, Alibaba’s Qwen, and Google's Gemma — but with a uniquely enterprise-first lens. Later versions, including Granite 3.1 and Granite 3.2, introduced even more enterprise-friendly innovations: embedded hallucination detection, time-series forecasting, document vision models, and conditional reasoning toggles.

The Granite 4.0 family, launched in October 2025, represents IBM’s most technically ambitious release yet. It introduces a hybrid architecture that blends transformer and Mamba-2 layers — aiming to combine the contextual precision of attention mechanisms with the memory efficiency of state-space models. This design allows IBM to significantly reduce memory and latency costs for inference, making Granite models viable on smaller hardware while still outperforming peers in instruction-following and function-calling tasks. The launch also includes ISO 42001 certification, cryptographic model signing, and distribution across platforms like Hugging Face, Docker, LM Studio, Ollama, and watsonx.ai.
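A rough way to see the memory savings the hybrid design is after: a standard attention layer caches keys and values for every token it has seen, so that cache grows linearly with context length, while an SSM layer carries a fixed-size state. The minimal sketch below compares the two for a single sequence at 16-bit precision; all layer dimensions are hypothetical values chosen for illustration, not Granite 4.0's actual configuration.

```python
# Compare per-sequence inference memory: attention KV cache vs. SSM recurrent state.
# All layer dimensions below are hypothetical, chosen only for illustration.


def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int = 2) -> int:
    """Key/value cache size for attention layers.

    The leading factor 2 covers storing both keys and values; the result
    grows linearly with seq_len because every past token is cached.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


def ssm_state_bytes(num_layers: int, state_elems_per_layer: int,
                    bytes_per_value: int = 2) -> int:
    """Recurrent state size for SSM layers; independent of sequence length."""
    return num_layers * state_elems_per_layer * bytes_per_value


if __name__ == "__main__":
    layers, kv_heads, head_dim = 16, 4, 64   # hypothetical attention config
    state_elems = 2048 * 128                 # hypothetical SSM state per layer
    for seq_len in (1_024, 4_096, 32_768):
        kv_mib = kv_cache_bytes(layers, kv_heads, head_dim, seq_len) / 2**20
        ssm_mib = ssm_state_bytes(layers, state_elems) / 2**20
        print(f"{seq_len:>6} tokens: KV cache {kv_mib:.0f} MiB vs SSM state {ssm_mib:.0f} MiB")
```

Under these assumed dimensions, the attention cache grows from 16 MiB at 1,024 tokens to 512 MiB at 32,768 tokens, while the SSM state stays at 8 MiB; a hybrid that swaps most attention layers for SSM layers keeps only a fraction of that growing cache.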

Across all iterations, IBM’s focus has been clear: build trustworthy, efficient, and legally unambiguous AI models for enterprise use cases. With a permissive Apache 2.0 license, public benchmarks, and an emphasis on governance, the Granite initiative not only responds to rising concerns over proprietary black-box models but also offers a Western-aligned open alternative to the rapid progress from teams like Alibaba’s Qwen. In doing so, Granite positions IBM as a leading voice in what may be the next phase of open-weight, production-ready AI.

In the end, IBM’s release of Granite 4.0 Nano models reflects a strategic shift in LLM development: from chasing parameter count records to optimizing usability, openness, and deployment reach.

By combining competitive performance, responsible development practices, and deep engagement with the open-source community, IBM is positioning Granite as not just a family of models — but a platform for building the next generation of lightweight, trustworthy AI systems.

For developers and researchers looking for performance without overhead, the Nano release offers a compelling signal: you don’t need 70 billion parameters to build something powerful — just the right ones.

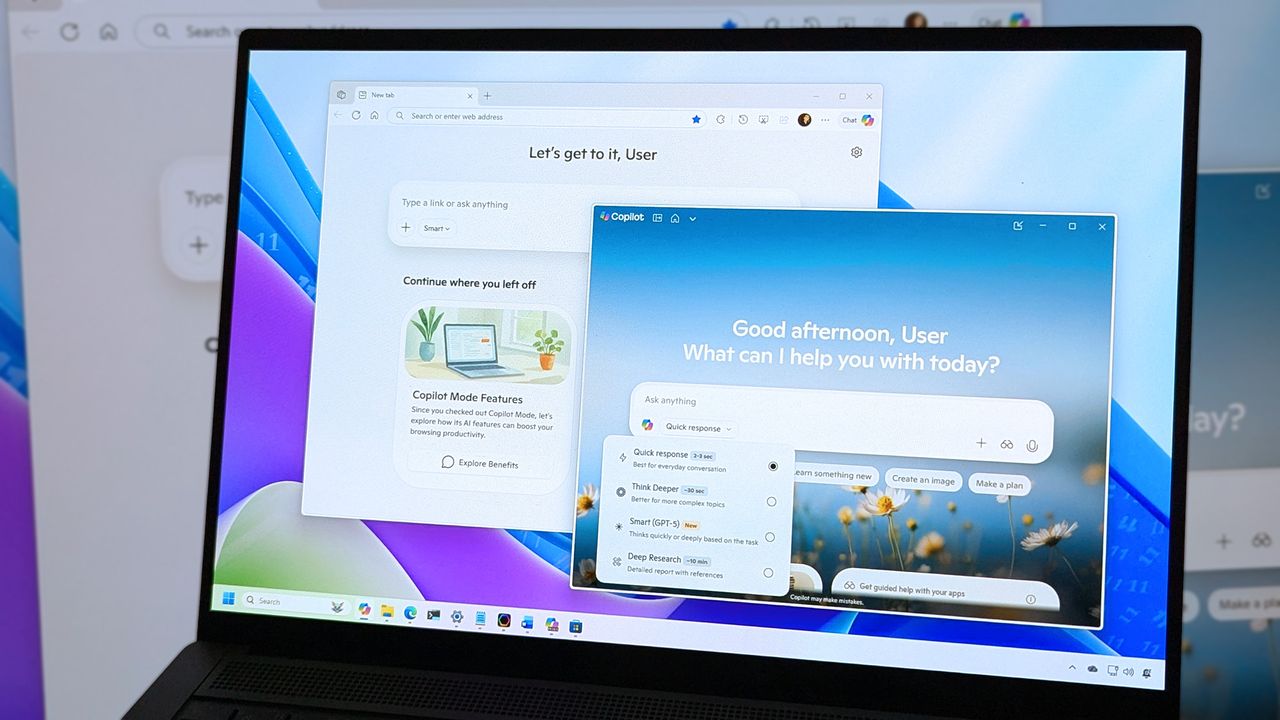

Microsoft is launching a significant expansion of its Copilot AI assistant on Tuesday, introducing tools that let employees build applications, automate workflows, and create specialized AI agents using only conversational prompts — no coding required.

The new capabilities, called App Builder and Workflows, mark Microsoft's most aggressive attempt yet to merge artificial intelligence with software development, enabling the estimated 100 million Microsoft 365 users to create business tools as easily as they currently draft emails or build spreadsheets.

"We really believe that a main part of an AI-forward employee, not just developers, will be to create agents, workflows and apps," Charles Lamanna, Microsoft's president of business and industry Copilot, said in an interview with VentureBeat. "Part of the job will be to build and create these things."

The announcement comes as Microsoft deepens its commitment to AI-powered productivity tools while navigating a complex partnership with OpenAI, the creator of the underlying technology that powers Copilot. On the same day, OpenAI completed its restructuring into a for-profit entity, with Microsoft receiving a 27% ownership stake valued at approximately $135 billion.

The new features transform Copilot from a conversational assistant into what Microsoft envisions as a comprehensive development environment accessible to non-technical workers. Users can now describe an application they need — such as a project tracker with dashboards and task assignments — and Copilot will generate a working app complete with a database backend, user interface, and security controls.

"If you're right inside of Copilot, you can now have a conversation to build an application complete with a backing database and a security model," Lamanna explained. "You can make edit requests and update requests and change requests so you can tune the app to get exactly the experience you want before you share it with other users."

The App Builder stores data in Microsoft Lists, the company's lightweight database system, and allows users to share finished applications via a simple link—similar to sharing a document. The Workflows agent, meanwhile, automates routine tasks across Microsoft's ecosystem of products, including Outlook, Teams, SharePoint, and Planner, by converting natural language descriptions into automated processes.

A third component, a simplified version of Microsoft's Copilot Studio agent-building platform, lets users create specialized AI assistants tailored to specific tasks or knowledge domains, drawing from SharePoint documents, meeting transcripts, emails, and external systems.

All three capabilities are included in the existing $30-per-month Microsoft 365 Copilot subscription at no additional cost — a pricing decision Lamanna characterized as consistent with Microsoft's historical approach of bundling significant value into its productivity suite.

"That's what Microsoft always does. We try to do a huge amount of value at a low price," he said. "If you go look at Office, you think about Excel, Word, PowerPoint, Exchange, all that for like eight bucks a month. That's a pretty good deal."

The new tools represent the culmination of a nine-year effort by Microsoft to democratize software development through its Power Platform — a collection of low-code and no-code development tools that has grown to 56 million monthly active users, according to figures the company disclosed in recent earnings reports.

Lamanna, who has led the Power Platform initiative since its inception, said the integration into Copilot marks a fundamental shift in how these capabilities reach users. Rather than requiring workers to visit a separate website or learn a specialized interface, the development tools now exist within the same conversational window they already use for AI-assisted tasks.

"One of the big things that we're excited about is Copilot — that's a tool for literally every office worker," Lamanna said. "Every office worker, just like they research data, they analyze data, they reason over topics, they also will be creating apps, agents and workflows."

The integration offers significant technical advantages, he argued. Because Copilot already indexes a user's Microsoft 365 content — emails, documents, meetings, and organizational data — it can incorporate that context into the applications and workflows it builds. If a user asks for "an app for Project Spartan," Copilot can draw from existing communications to understand what that project entails and suggest relevant features.

"If you go to those other tools, they have no idea what the heck Project Spartan is," Lamanna said, referencing competing low-code platforms from companies like Google, Salesforce, and ServiceNow. "But if you do it inside of Copilot and inside of the App Builder, it's able to draw from all that information and context."

Microsoft claims the apps created through these tools are "full-stack applications" with proper databases secured through the same identity systems used across its enterprise products — distinguishing them from simpler front-end tools offered by competitors. The company also emphasized that its existing governance, security, and data loss prevention policies automatically apply to apps and workflows created through Copilot.

While Microsoft positions the new capabilities as accessible to all office workers, Lamanna was careful to delineate where professional developers remain essential. His dividing line centers on whether a system interacts with parties outside the organization.

"Anything that leaves the boundaries of your company warrants developer involvement," he said. "If you want to build an agent and put it on your website, you should have developers involved. Or if you want to build an automation which interfaces directly with your customers, or an app or a website which interfaces directly with your customers, you want professionals involved."

The reasoning is risk-based: external-facing systems carry greater potential for data breaches, security vulnerabilities, or business errors. "You don't want people getting refunds they shouldn't," Lamanna noted.

For internal use cases such as approval workflows, project tracking, and team dashboards, Microsoft believes the new tools can handle most needs without involving the IT department. The company has also built in what Lamanna calls "no cliffs," so users can migrate simple apps to more sophisticated platforms as their needs grow.

Apps created in the conversational App Builder can be opened in Power Apps, Microsoft's full development environment, where they can be connected to Dataverse, the company's enterprise database, or extended with custom code. Similarly, simple workflows can graduate to the full Power Automate platform, and basic agents can be enhanced in the complete Copilot Studio.

"We have this mantra called no cliffs," Lamanna said. "If your app gets too complicated for the App Builder, you can always edit and open it in Power Apps. You can jump over to the richer experience, and if you're really sophisticated, you can even go from those experiences into Azure."

This architecture addresses a problem that has plagued previous generations of easy-to-use development tools: users who outgrow the simplified environment often must rebuild from scratch on professional platforms. "People really do not like easy-to-use development tools if I have to throw everything away and start over," Lamanna said.

The democratization of software development raises questions about governance, maintenance, and organizational complexity — issues Microsoft has worked to address through administrative controls.

IT administrators can view all applications, workflows, and agents created within their organization through a centralized inventory in the Microsoft 365 admin center. They can reassign ownership, disable access at the group level, or "promote" particularly useful employee-created apps to officially supported status.

"We have a bunch of customers who have this approach where it's like, let 1,000 apps bloom, and then the best ones, I go upgrade and make them IT-governed or central," Lamanna said.

The system also includes provisions for when employees leave. Apps and workflows remain accessible for 60 days, during which managers can claim ownership — similar to how OneDrive files are handled when someone departs.

Lamanna argued that most employee-created apps don't warrant significant IT oversight. "It's just not worth inspecting an app that John, Susie, and Bob use to do their job," he said. "It should concern itself with the app that ends up being used by 2,000 people, and that will pop up in that dashboard."

Still, the proliferation of employee-created applications could create challenges. Users have expressed frustration with Microsoft's increasing emphasis on AI features across its products, with some giving the Microsoft 365 mobile app one-star ratings after a recent update prioritized Copilot over traditional file access.

The tools also arrive as enterprises grapple with "shadow IT" — unsanctioned software and systems that employees adopt without official approval. While Microsoft's governance controls aim to provide visibility, the ease of creating new applications could accelerate the pace at which these systems multiply.

Microsoft's ambitions for the technology extend far beyond incremental productivity gains. Lamanna envisions a fundamental transformation of what it means to be an office worker — one where building software becomes as routine as creating spreadsheets.

"Just like how 20 years ago you put on your resume that you could use pivot tables in Excel, people are going to start saying that they can use App Builder and workflow agents, even if they're just in the finance department or the sales department," he said.

The numbers he's targeting are staggering. With 56 million people already using Power Platform, Lamanna believes the integration into Copilot could eventually reach 500 million builders. "Early days still, but I think it's certainly encouraging," he said.

The features are currently available only to customers in Microsoft's Frontier Program — an early access initiative for Microsoft 365 Copilot subscribers. The company has not disclosed how many organizations participate in the program or when the tools will reach general availability.

The announcement fits within Microsoft's larger strategy of embedding AI capabilities throughout its product portfolio, driven by its partnership with OpenAI. Under the restructured agreement announced Tuesday, Microsoft will have access to OpenAI's technology through 2032, including models that achieve artificial general intelligence (AGI) — though such systems do not yet exist. Microsoft has also begun integrating Copilot into its new companion apps for Windows 11, which provide quick access to contacts, files, and calendar information.

The aggressive integration of AI features across Microsoft's ecosystem has drawn mixed reactions. While enterprise customers have shown interest in productivity gains, the rapid pace of change and ubiquity of AI prompts have frustrated some users who prefer traditional workflows.

For Microsoft, however, the calculation is clear: if even a fraction of its user base begins creating applications and automations, it would represent a massive expansion of the effective software development workforce — and further entrench customers in Microsoft's ecosystem. The company is betting that the same natural language interface that made ChatGPT accessible to millions can finally unlock the decades-old promise of empowering everyday workers to build their own tools.

The App Builder and Workflows agents are available starting today through the Microsoft 365 Copilot Agent Store for Frontier Program participants.

Whether that future arrives depends not just on the technology's capabilities, but on a more fundamental question: Do millions of office workers actually want to become part-time software developers? Microsoft is about to find out if the answer is yes — or if some jobs are better left to the professionals.

Also, expanded revenue opportunities via creator subscriptions.

Google DeepMind researchers have developed BlockRank, a new method for ranking and retrieving information more efficiently in large language models (LLMs).

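What BlockRank changes. In-context ranking (ICR), where an LLM reads the task instructions, a query, and a set of candidate documents in a single prompt and then identifies the relevant ones, is expensive and slow. Models use a process called “attention,” in which every word (token) compares itself to every other word, so the cost grows quadratically with the total length of the prompt. Ranking hundreds of documents at once quickly becomes impractical for LLMs.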
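To make that scaling concrete, here is a minimal sketch that counts token-to-token comparisons under full attention. It assumes, for illustration only, that every document has the same hypothetical length, and it ignores instruction tokens, query tokens, and causal masking; the function name is ours, not from Google's work.

```python
def full_attention_pairs(num_docs: int, tokens_per_doc: int) -> int:
    """Count token pairs compared when every token attends to every other token.

    Simplifying assumptions (illustrative only): all documents share one length,
    and instruction/query tokens and causal masking are ignored.
    """
    total_tokens = num_docs * tokens_per_doc
    # Every token is compared with every token, so the count is n * n.
    return total_tokens * total_tokens


if __name__ == "__main__":
    for num_docs in (10, 20, 40):
        # Doubling the number of documents quadruples the comparisons.
        print(num_docs, full_attention_pairs(num_docs, tokens_per_doc=100))
```

With 100-token documents, this prints 1,000,000, 4,000,000 and 16,000,000 comparisons for 10, 20 and 40 documents: each doubling of the candidate set multiplies the work by four.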

How BlockRank works. BlockRank restructures how an LLM “pays attention” to text. Instead of every document attending to every other document, each one focuses only on itself and the shared instructions.
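The restructured attention pattern described above can be sketched as a block-structured mask. This is a minimal illustration under our own assumptions, not Google's implementation: segment 0 holds the shared instructions, every later segment is one document, and details such as causal masking and how the query tokens are handled are left out. The helper names are hypothetical.

```python
from typing import List


def block_attention_mask(segment_lengths: List[int]) -> List[List[bool]]:
    """Return mask where mask[i][j] is True if token i may attend to token j.

    Assumption (illustrative): segment_lengths[0] is the shared instruction
    segment and every later entry is one document. Document tokens see their
    own document plus the instructions; instruction tokens see only the
    instructions.
    """
    # Label every token position with the id of the segment it belongs to.
    segment_of: List[int] = []
    for seg_id, length in enumerate(segment_lengths):
        segment_of.extend([seg_id] * length)

    n = len(segment_of)
    mask = [[False] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            same_segment = segment_of[i] == segment_of[j]
            target_is_instruction = segment_of[j] == 0
            mask[i][j] = same_segment or target_is_instruction
    return mask


def attended_pairs(mask: List[List[bool]]) -> int:
    """Count the token pairs the mask allows to interact."""
    return sum(row.count(True) for row in mask)


if __name__ == "__main__":
    # 10 instruction tokens followed by four 100-token documents.
    mask = block_attention_mask([10, 100, 100, 100, 100])
    print(attended_pairs(mask), len(mask) ** 2)
```

For 10 instruction tokens and four 100-token documents (410 tokens in all), the mask allows 44,100 token pairs, versus 168,100 (410 squared) under full attention. Each extra document adds only its own block plus its view of the instructions, so in this sketch the cost grows roughly linearly with the number of documents rather than quadratically.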

By the numbers. In experiments using Mistral-7B, Google’s team found that BlockRank:

Why we care. BlockRank could change how future AI-driven retrieval and ranking systems work to reward user intent, clarity, and relevance. That means (in theory) clear, focused content that aligns with why a person is searching (not just what they type) should increasingly win.

What’s next. Google DeepMind researchers are continuing to redefine what it means to “rank” information in the age of generative AI. The future of search is advancing fast, and it’s fascinating to watch it evolve in real time.

An IAB report finds AI speeds discovery but adds steps, sending shoppers to retailers, search, reviews, and forums to verify details before buying.

The post Trust In AI Shopping Is Limited As Shoppers Verify On Websites appeared first on Search Engine Journal.

The post NVIDIA Boosts Navy AI Training with DGX GB300 appeared first on StartupHub.ai.

NVIDIA's DGX GB300 system is giving the Naval Postgraduate School advanced AI training capabilities, enabling secure, on-premises generative AI and high-fidelity digital twin simulations for critical defense applications.

The post NVIDIA Charts America’s AI Future with Industrial-Scale Vision appeared first on StartupHub.ai.

NVIDIA's GTC Washington, D.C., keynote unveiled a strategic blueprint for America's AI future, emphasizing national infrastructure, physical AI, and industry transformation.

The post NVIDIA AI Fuels US Economic Development appeared first on StartupHub.ai.

NVIDIA is driving significant AI-led economic development across the US by partnering with states, cities, and universities to democratize AI access and foster innovation.

The post Microsoft’s OpenAI Bet Yields 10x Return, Igniting AI Infrastructure Race appeared first on StartupHub.ai.

Microsoft’s staggering ten-fold return on its OpenAI investment, now valued at $135 billion, signals a new era where strategic AI stakes redefine corporate power and valuation. This monumental gain, highlighted by CNBC’s MacKenzie Sigalos, follows a significant corporate restructure at OpenAI that redefines its partnership terms with Microsoft, granting the tech giant a 27% equity […]

The post Desktop Commander raises €1.1M to advance AI desktop automation appeared first on StartupHub.ai.

Desktop Commander raised €1.1 million to develop its AI tool that allows non-technical users to automate computer tasks using natural language.

The post Grasp raises $7M to advance its multi-agent AI for finance appeared first on StartupHub.ai.

AI startup Grasp raised $7 million to expand its multi-agent platform that automates complex financial analysis and reporting for consultants and investment banks.

The post SalesPatriot raises $5M to advance AI defence procurement appeared first on StartupHub.ai.

SalesPatriot is developing AI-driven procurement software to help defence and aerospace suppliers automate orders and increase processing speeds.

The post Dott extends funding to $150M to expand its e-bike fleet appeared first on StartupHub.ai.

Micro-mobility company Dott extended its funding to over $150 million to expand its e-bike fleet and enter new European markets.

The post Nokia secures $1B from Nvidia to build AI telecoms networks appeared first on StartupHub.ai.

Nvidia is investing $1 billion in Nokia to accelerate the development of AI-powered 5G and 6G telecommunications networks.

The post Socratix AI raises $4.1M to build autonomous AI coworkers appeared first on StartupHub.ai.

Socratix AI raised $4.1M to build autonomous AI coworkers that automate investigations for fraud and risk teams at financial institutions.

The post NVIDIA AI Physics Simulation Reshapes Engineering appeared first on StartupHub.ai.

NVIDIA's AI physics simulation, powered by the PhysicsNeMo framework, is accelerating engineering design by up to 500x in aerospace and automotive.

The post Energy as the New Geopolitical Currency in the AI Race appeared first on StartupHub.ai.

“Knowledge used to be power, now power is knowledge.” This stark redefinition, articulated by U.S. Secretary of the Interior Doug Burgum during a CNBC “Power Lunch” interview, cuts to the core of the contemporary global power struggle. Speaking with Brian Sullivan, Burgum outlined a comprehensive strategy for the United States to secure its position in […]

The post CoreStory raises $32M to advance AI legacy code modernization appeared first on StartupHub.ai.

AI startup CoreStory raised $32 million to help enterprises modernize legacy software with its platform that automatically documents and analyzes old code.
