X gets rid of its popular night mode in-app setting

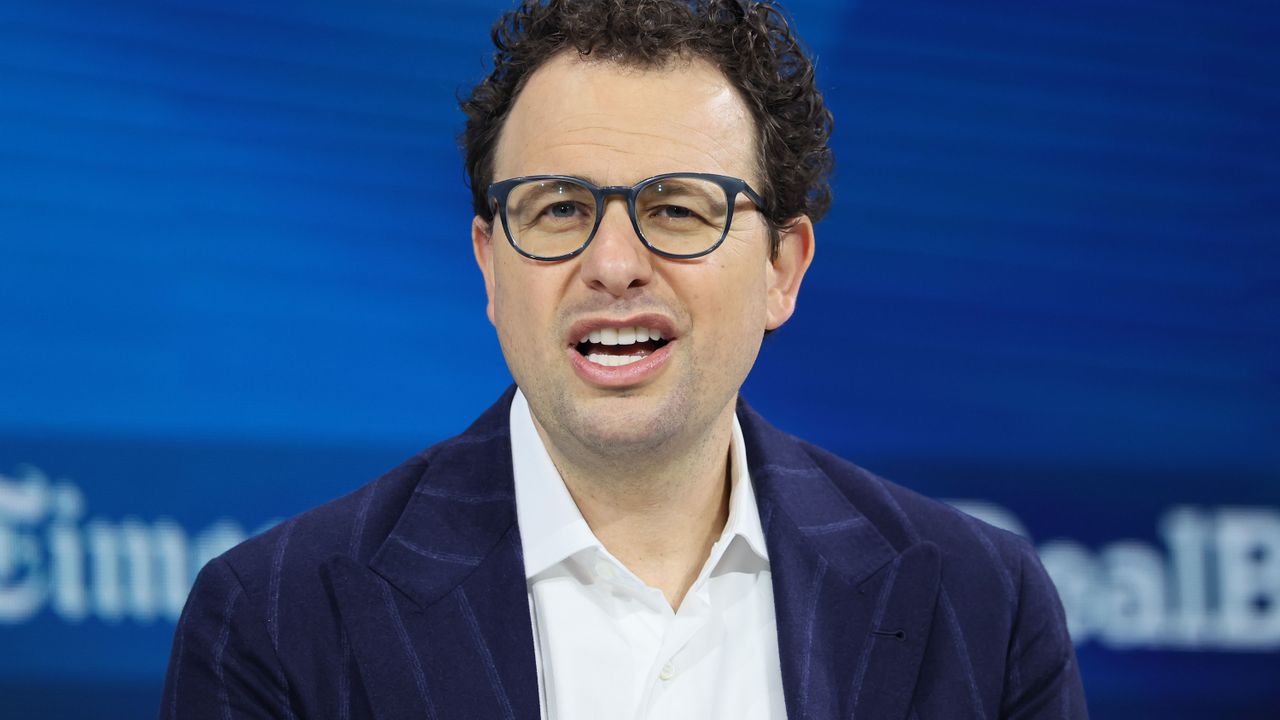

According to Nikita Bier, X’s head of product, users will now need to change their device settings to activate the feature.

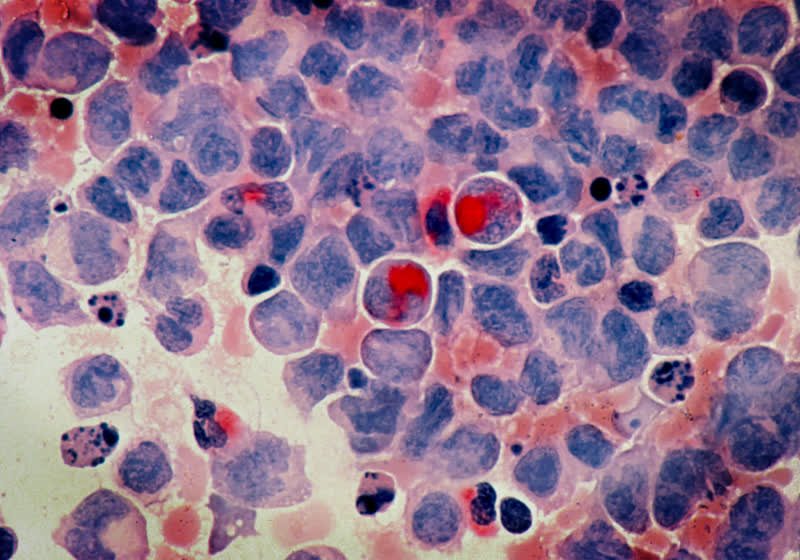

According to multiple reports, the company’s contractors in Kenya are allegedly reviewing users’ private intimate content for training purposes.

Since OpenAI began running ads four weeks ago, data tracked by Sensor Tower showed more than 100 individual brand promotions.

The company was pressured into this concession by the EU Commission and is allowing competitors access in an effort to prevent regulatory proceedings.

The company touted an uptick in construction jobs and infrastructure support, while also committing to the White House’s Ratepayer Protection Pledge.

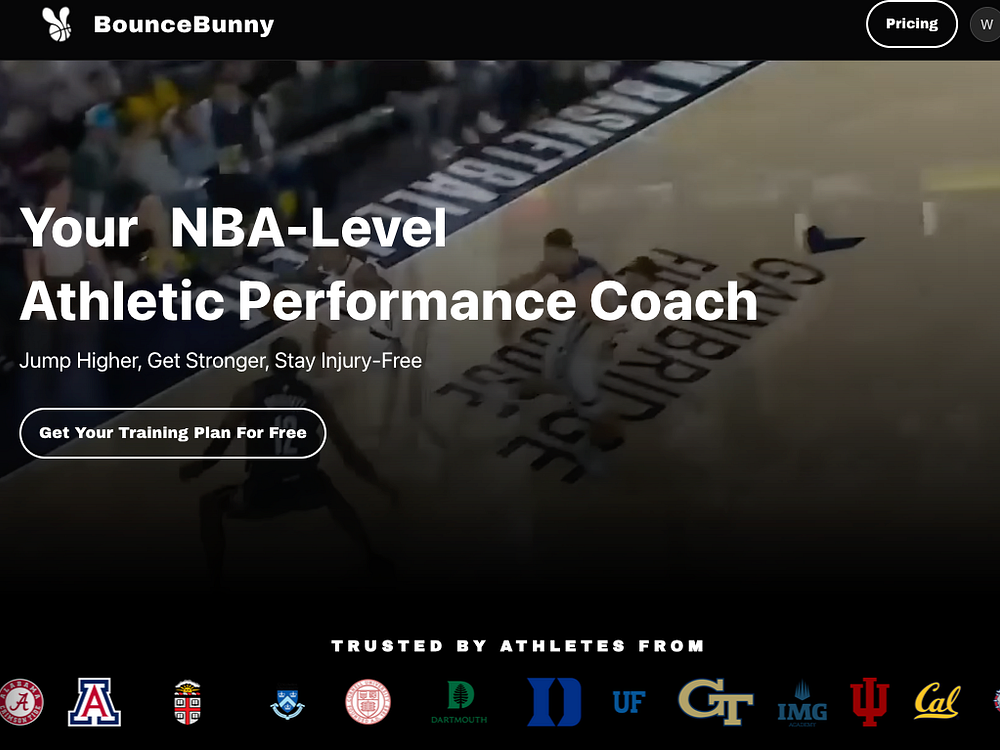

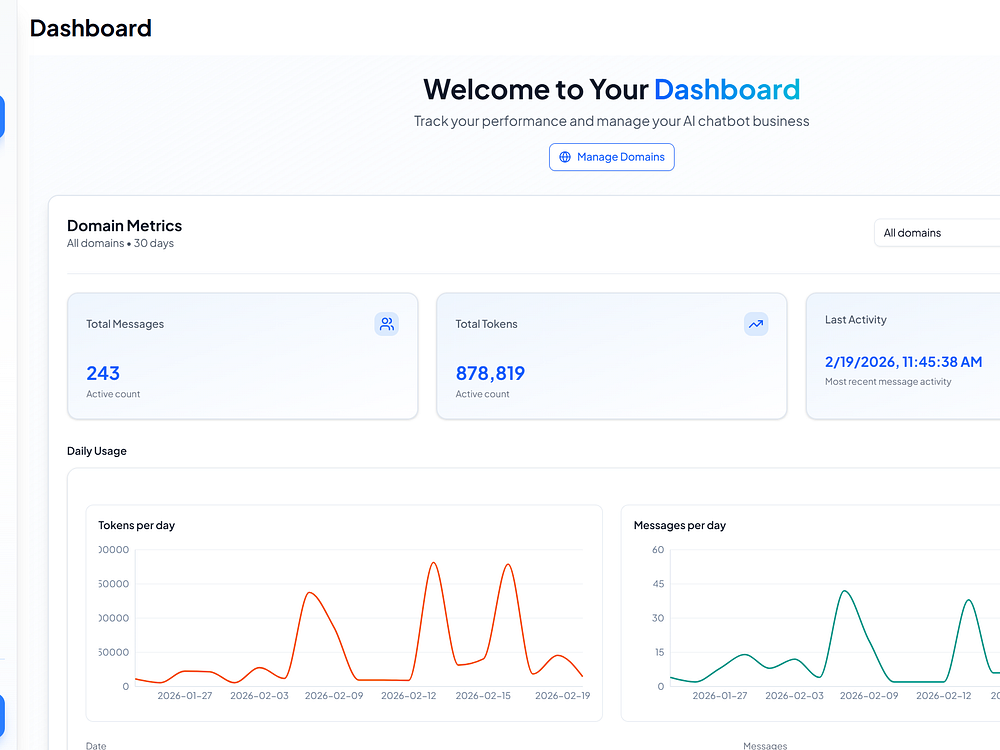

BounceBunny is an AI performance coach that creates personalized basketball training plans to help you jump higher, move faster, and excel on the court. Every plan is customized based on your body metrics, position, available equipment, and injury history. Experience the same quality training that NBA players use at a fraction of the cost. BounceBunny is trusted by over 100 college basketball teams, including UCLA, Cornell, and Northwestern. Train smarter, perform better, and stay injury-free.

NanoClaw's creator says Google ranks a fake website above his project's real site despite 18K GitHub stars, press coverage, and structured data setup.

The post NanoClaw Creator Loses SEO Battle To Impostor Website appeared first on Search Engine Journal.

Promotional performance in the app was significantly more effective than for traditional linear television advertising, according to new data from the company.

ToolsFree.ai offers a collection of free online calculators, converters, and utility tools across finance, health, web development, unit conversion, text, and math. Use it without signup or limits on any device; every tool runs in your browser, so your data stays on your device. Explore mortgage and loan calculators, BMI and calorie calculators, QR and password generators, JSON formatting, and more, while following updates as new tools launch.

The Nothing Phone (4a) Pro is now teaching a lesson to those willing to learn: a masterclass in how to debut new tech, replete with genuine innovation rather than gimmicks and outright falsehood à la what Samsung just did at its Galaxy Unpacked event for the new S26 series.

Samsung's disastrous Galaxy Unpacked event: Pre-leaks and falsehoods

For the benefit of those who might not be aware, Samsung experienced a particularly egregious bout of channel leaks in the run up to its Galaxy Unpacked event, one that saw an unreleased Galaxy S26 Ultra fall into the hands of a tech […]

Read full article at https://wccftech.com/nothing-phone-4a-pro-just-showed-samsungs-galaxy-s26-series-how-to-do-innovation/

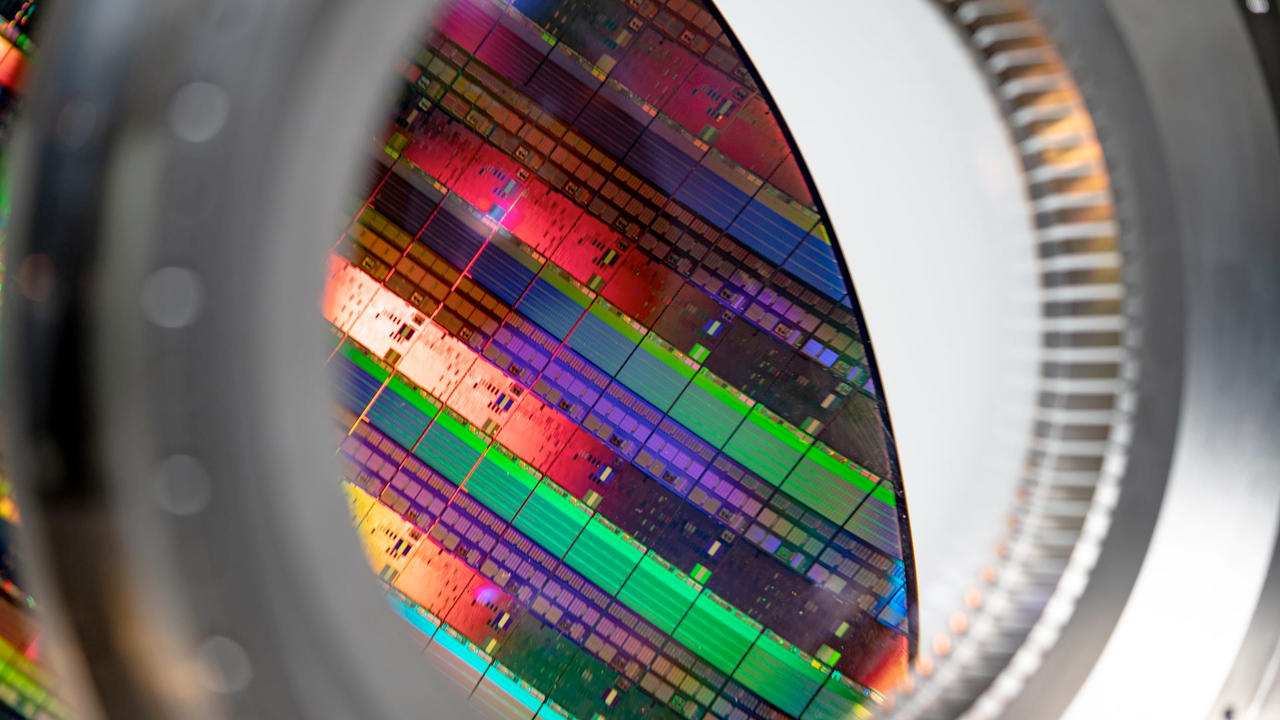

The record-breaking Q1 2026 quarter saw Apple bring in a mammoth $143.756 billion, but this impressive figure was also accompanied by a statement made by CEO Tim Cook, hinting at which silicon would be found in the newly announced MacBook Neo. The A18 Pro found in the latter is still an insanely powerful chip, but the A19 Pro is on another level, and had it not been for the supply situation, we’d be getting a different set of specifications for the latest low-cost portable Mac. Supply constraints from TSMC’s end meant that Apple couldn’t secure sufficient A19 Pro shipments to […]

Read full article at https://wccftech.com/tim-cook-statement-this-year-explains-why-macbook-neo-does-not-feature-a19-pro/

The Trump administration is exploring options to address AI chip exports, and initial reports suggest the proposed regulations are far more aggressive than the industry anticipated.

The US Is Planning New AI Chip Export Regulations, By Looking at Compute Power Being Shipped Out

The debate around AI chip exports has emerged several times since chip manufacturers like NVIDIA and AMD achieved significant compute breakthroughs. This matter was also under intense focus by the Biden administration, which introduced the "AI Diffusion" rule that addresses AI chip exports by categorizing countries into different levels, each with its own caveats. The Diffusion rule […]

Read full article at https://wccftech.com/the-us-could-soon-turn-nvidia-and-amds-ai-chips-into-a-foreign-policy-tool/

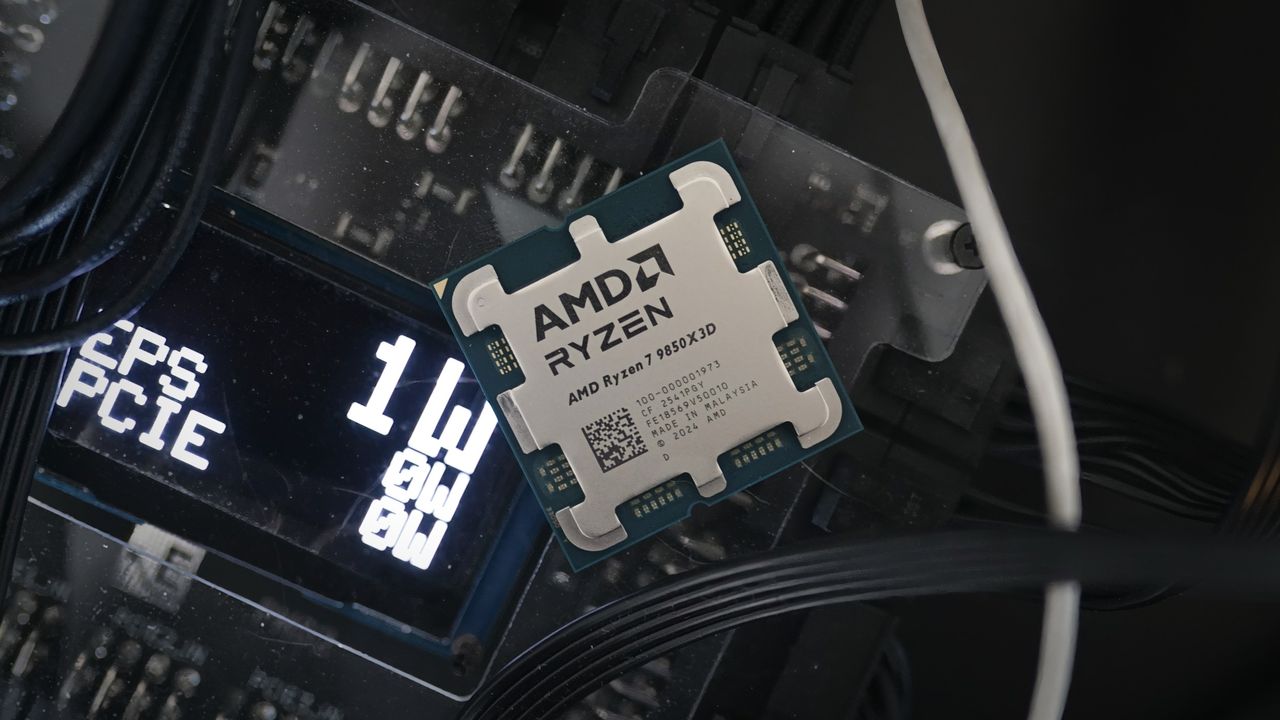

Gentlemen, we meet yet again. It's only been a week since the last time I wrote an article about Optiscaler, but development on the mod is happening at such a rapid pace that I once again have some updates to share with you. This time, it's AMD's Ray Regeneration that's getting the Optiscaler treatment. Thanks to the work done by DarkHelmet, you can now swap Nvidia's Ray Reconstruction for AMD's legally distinct Ray Regeneration denoiser. This is huge news, since currently there's a grand total of two titles with support for Ray Regen, and one of them isn't out till […]

Read full article at https://wccftech.com/i-tested-amd-ray-regeneration-in-cyberpunk-thanks-to-optiscaler-its-surprisingly-good/

MSI's X870 Tomahawk WiFi drops to $240 on Amazon with WiFi 7, USB4, and dual Gen5 M.2 slots for AM5 builds.

Read full article at https://wccftech.com/msi-mag-x870-tomahawk-wifi-drops-to-240-on-amazon/

For this year’s I/O Save the date, we’re showing how anyone can build incredible games with help from Gemini.

Expect to hear more about Microsoft’s “next generation” Xbox at GDC 2026

Microsoft’s Asha Sharma, the CEO of Microsoft Gaming (Xbox), has unveiled Project Helix. Helix is the codename for Microsoft’s next-generation Xbox console, and Microsoft plans to discuss the system in depth at GDC 2026. Project Helix will be able to play “Xbox and […]

The post Xbox unveils “Project Helix” and it can play “Xbox and PC games” appeared first on OC3D.

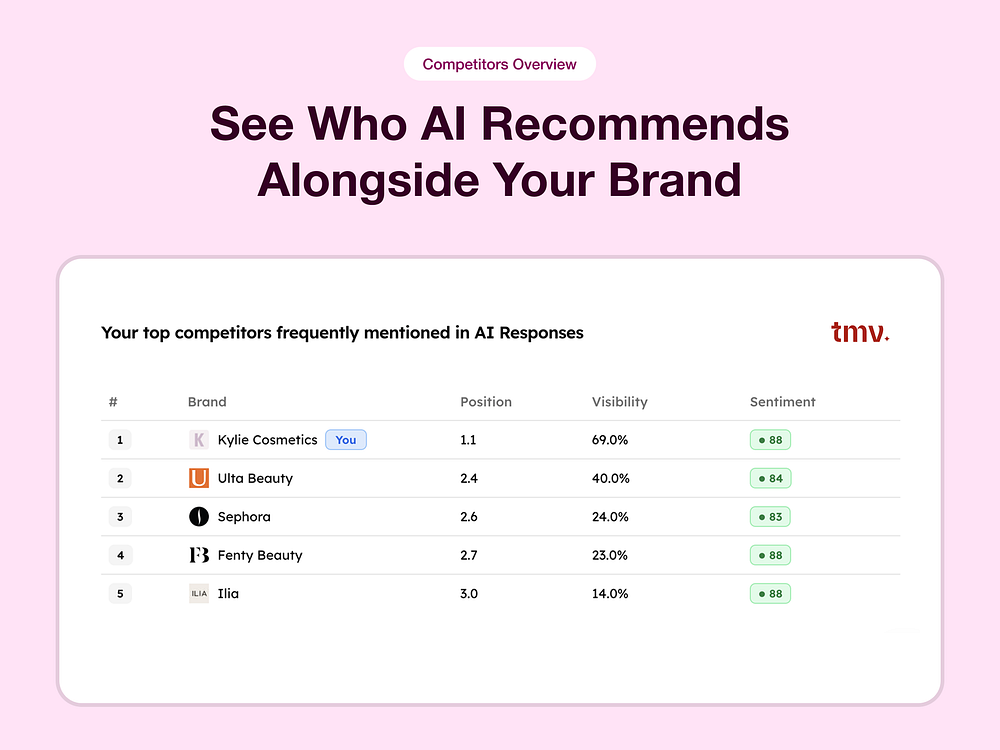

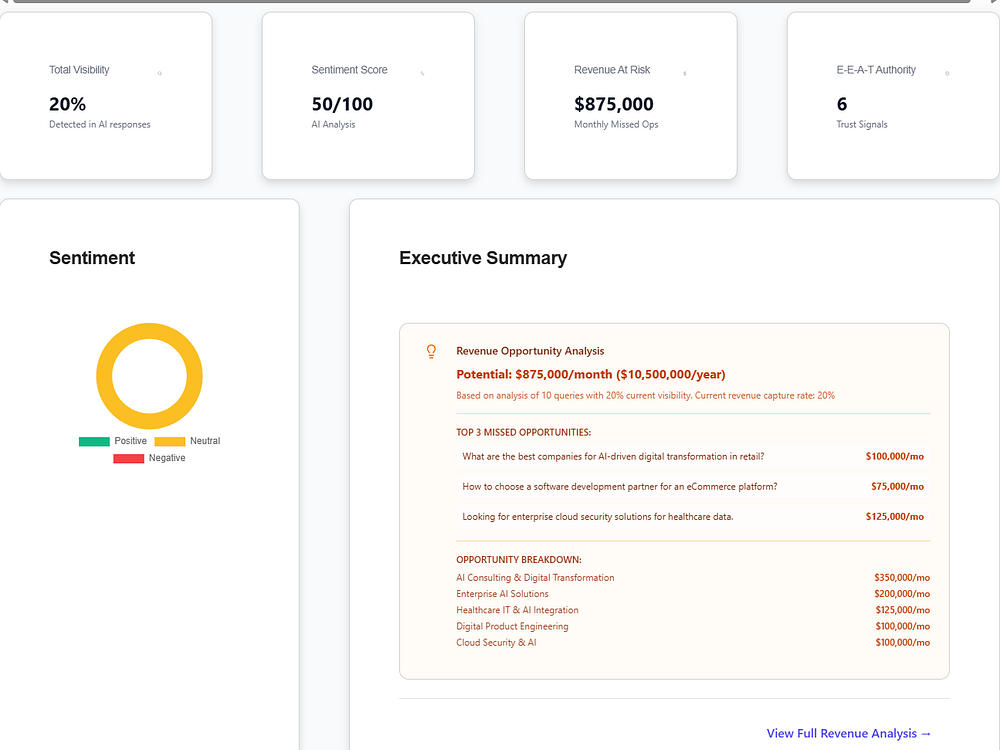

Track My Visibility monitors how your brand appears in AI-generated answers across ChatGPT, Claude, Perplexity, and Gemini. It runs prompts at scale, measures mentions, citations, position, and sentiment, and shows which pages drive visibility. You can compare against competitors, audit query coverage, and view URL-level citation paths. A dashboard displays model-wise coverage, share of voice, and AI-ready scores, while actionable recommendations help you address gaps and increase AI citations.

Asha Sharma, the recently installed chief executive officer of Microsoft Gaming and the new head of Xbox following Phil Spencer's retirement, has just teased the next-generation Xbox console in a post on her personal X (formerly Twitter) account, which we now know is codenamed Project Helix. Sharma shared the codename and what appears to be a new look for the Xbox logo, while also teasing that this new console will "lead in performance and play your Xbox and PC games," confirming reports that the next generation of consoles from Xbox will be a hybrid between a PC and console, capable of […]

Read full article at https://wccftech.com/asha-sharma-teases-next-gen-xbox-project-helix-pc-console-hybrid/

The launch of the 13-inch and 15-inch M5 MacBook Air is excellent news for those wanting a jaw-dropping deal on a portable Mac because Apple’s older-generation M4 MacBook Air has dropped by $300 on Amazon. Of course, it should be mentioned that the extensive price cuts have been observed on a few 15-inch models, but that’s still an attractive deal because we don’t remember the last time that such discounts were introduced. Unfortunately, the stock is slowly dwindling, and if you don’t act fast, you’ll miss out on a cheaper M4 MacBook Air. If a chipset upgrade and base […]

Read full article at https://wccftech.com/select-m4-macbook-air-models-300-off-on-amazon/

Over the past few months, I’ve been shopping around for a first home, and one of the requisites was to have a dedicated office space. Fast forward to November 1st of this year, and my wife and I signed the final documents to get the keys to our new home. Autonomous actually reached out to us much earlier in the year and sent samples of both their Desk 5 Pro and ErgoChair Ultra (in black and white to match the Wccftech logo), but they remained sealed in a box until I finally had the space to install a brand new desk. […]

Read full article at https://wccftech.com/review/autonomous-desk-5-pro-and-ergochair-ultra-review-clean-and-professional/

Embark Studios' latest update for ARC Raiders isn't your bog-standard fixes or new content release. Instead, it's an update that the team rushed out the door this morning after a report from tech blogger and systems engineer Timothy Meadows pointed to an incident where two ARC Raiders players' private Discord DMs (direct messages) appeared in a game log file. Per Meadows' report, the game's Discord SDK captured private messages between two users and a Discord Bearer token. It was a massive over-extension of the data that Embark collects through ARC Raiders' Discord SDK, an issue that is thankfully now fixed […]

Read full article at https://wccftech.com/arc-raiders-latest-update-fixes-massive-security-flaw-private-discord-dms-collected-by-embark/

Google AI Max drives revenue but at a higher cost, according to Smarter Ecommerce’s Mike Ryan, who analyzed 250+ campaigns. Outcomes vary, and much more testing is still needed.

Why we care. AI Max isn’t a minor update. It’s Google’s most significant reimagining of Search campaigns in years, shifting away from keyword syntax toward pure intent matching. For you, that’s both an opportunity (possible growth) and a risk (an efficiency tradeoff).

By the numbers. The result of the analysis:

Advertisers who activate AI Max typically see 14% more conversions or conversion value at a similar CPA or ROAS, rising to 27% for campaigns still relying on exact and phrase match keywords, Google says.

Turning on AI Max is essentially a coin toss: you may see a lift, but efficiency likely won’t follow, Ryan concluded.

What AI Max actually is. Rather than forcing Search campaigns into Performance Max, Google went the other direction — bringing PMax-style automation into classic Search. The result is three core features:

Four pitfalls Smarter Ecommerce identified:

Between the lines. Google’s 14% uplift stat conspicuously excludes retail — an omission Ryan flags as significant for ecommerce advertisers. There’s also a deeper irony: you’re most likely to adopt AI Max if you’re already running Broad Match, DSA, and PMax — yet Google says those accounts will see the lowest incremental benefit.

What’s next. In a conversation with Ryan, Google Ads Liaison Ginny Marvin confirmed that Google plans to deprecate Dynamic Search Ads and migrate the technology into AI Max for Search. No firm timeline was given, though past Google deprecations often run about a year from announcement.

Ryan recommends activating AI Max’s keywordless features in your existing Search campaigns now and beginning to wind down DSA — not migrating it to PMax.

Ryan’s verdict is cautious optimism. About 16% of advertisers are testing AI Max, and few have gone all in. Start small, audit aggressively, and don’t let FOMO around AI Overviews drive your decision.

The report. The Ultimate Guide to AI Max for Google Search

Lightning Assist accelerates your typing with instant text expansion, AI-powered commands, push-to-talk transcription, and smart hotkeys on Windows, macOS, and Linux. You can create resources and terminal resources, assign custom hotkeys, and insert code snippets, templates, or commands in any app. Use AI Enhance to polish text and AI Speech to dictate in real time, all backed by enterprise-grade security. Set it up in minutes and reclaim hours every day.

New SMEC study analyzes AI Max in Google Ads Search campaigns, showing a 13% conversion value lift but higher CPA and unpredictable ROAS results.

The post What SMEC’s Data Reveals About AI Max Performance appeared first on Search Engine Journal.

Grand Theft Auto developer Rockstar Games' parent company, Take-Two Interactive, is also the owner of 2K, the massive publisher and developer behind several titles, though most notably the annual basketball series, NBA 2K. Today, Rockstar announced that it would be combining the two franchises, with players subscribed to GTA+, which grants special in-game bonuses in Grand Theft Auto V, getting access to NBA 2K26 on PS5 and Xbox Series X/S consoles for a limited time starting next week. The latest installment in the annualized basketball franchise will be accessible to GTA+ subscribers on March 10, and the full game, on top […]

Read full article at https://wccftech.com/rockstar-expands-gta-subscription-nba-2k26-ps5-xbox-series/

NVIDIA's ambitions for China are dimming with each day, as a new report indicates the AI giant is now looking to scale back H200 production in favour of ramping up Vera Rubin production.

NVIDIA Plans to Shift H200 Production Towards Vera Rubin, as it Prefers 'Consistency' Over Revenue

We have reported extensively on the NVIDIA-China saga in the past as well, and one of the more common trends in these stories is that both NVIDIA and China seem to be running in cycles, trying to catch each other. We'll discuss this aspect further ahead, but for now, according to […]

Read full article at https://wccftech.com/china-catch-22-is-pushing-nvidia-to-the-brink/

Learn more about AI Mode in Search’s query fan-out method for visual search.

This new unboxing video from Google shows off the Pixel 10a’s sleek, durable design and brand new Berry color.

Has OpenAI’s increasing independence from Microsoft and, by extension, Bing, become an overly dependent relationship with Google?

Our study comparing shopping query fan-outs (QFOs) in ChatGPT from both Google and Bing carousels appears to have provided at least a partial answer to that question. Let’s take a look at how this study was conceived and what we found.

In November 2025, a few researchers in the AI research space, including myself, detected a mysterious field in ChatGPT’s source code: id_to_token_map. But what that field revealed when decoded was even more intriguing.

This field is base64-encoded. When we decoded it, it revealed what looked to be Google Shopping parameters, such as productid and offerid, as well as language/locale parameters. Even more interesting? This field revealed a query used to look up that particular product.

To categorically prove this was indeed a Google Shopping link, we would have to be able to reconstruct the shopping URL solely from the extracted parameters.

Let’s look at an example of what this looks like using the ChatGPT product carousel for the prompt “best smartphones under $500.”

If we decode the relevant field, we can recreate the Google Shopping link from the extracted parameters.
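The decoding step can be illustrated with a minimal sketch. It assumes, purely for illustration, that the decoded payload is laid out like a URL query string; the actual layout of `id_to_token_map` is not documented here. The sample payload, the `hl`/`gl`/`q` keys, and the `decode_token_field` helper are all hypothetical. Only `productid` and `offerid` are named in the article.

```python
import base64
from urllib.parse import parse_qs


def decode_token_field(encoded: str) -> dict[str, str]:
    """Base64-decode a field and parse it as a query string (assumed layout)."""
    # Restore padding in case it was stripped from the encoded value.
    padded = encoded + "=" * (-len(encoded) % 4)
    decoded = base64.b64decode(padded).decode("utf-8", errors="replace")
    # parse_qs returns a list per key; keep the first value of each.
    return {key: values[0] for key, values in parse_qs(decoded).items()}


# Made-up payload standing in for a real field value.
raw = b"productid=12345&offerid=67890&hl=en&gl=us&q=best+smartphones+under+%24500"
sample = base64.b64encode(raw).decode("ascii")

params = decode_token_field(sample)
print(params["productid"], params["offerid"], params["q"])
```

If the real payload uses a different container (JSON, for example), only the parsing line would change; the base64 step stays the same.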

The big question was: Would this link correspond to the exact product in the ChatGPT product carousel? So we tried it:

It turns out that, in fact, yes it does!

But this decoding technique alone doesn’t answer any of these important questions:

Using Peec AI data, the following study aimed to robustly prove once and for all that ChatGPT does indeed mainly source from Google Shopping.

To do this, we analyzed more than 40,000 carousel products and 200,000 organic products from each of Google and Bing. By comparing the similarity of the products, we got a very clear picture of what was really happening behind the scenes. Let’s dig into our findings.

To answer whether shopping query fan-outs are different from normal search query fan-outs, we analyzed 1.1M shopping query fan-outs from Peec AI data and compared them to the normal search query fan-outs for the same user prompt. We found that they are almost always different:

| Comparison | Share of shopping QFOs |
| --- | --- |
| Shopping QFO unique to user prompt | 99.70% |
| Shopping QFO unique to normal query search fan-out | 98.31% |
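As a rough sketch of how such uniqueness rates could be computed: the study does not say how "unique" was defined, so this assumes a simple comparison of whitespace- and case-normalized strings. The record layout and the sample records are hypothetical.

```python
def norm(query: str) -> str:
    """Lowercase and collapse whitespace so trivial differences do not count."""
    return " ".join(query.lower().split())


def uniqueness_rates(records: list[dict]) -> tuple[float, float]:
    """Return (share differing from the prompt, share differing from all search fan-outs)."""
    if not records:
        raise ValueError("no records to analyze")
    differs_from_prompt = 0
    differs_from_search = 0
    for record in records:
        shopping = norm(record["shopping_qfo"])
        if shopping != norm(record["prompt"]):
            differs_from_prompt += 1
        if shopping not in {norm(q) for q in record["search_qfos"]}:
            differs_from_search += 1
    total = len(records)
    return differs_from_prompt / total, differs_from_search / total


# Made-up records for illustration only.
records = [
    {
        "prompt": "best smartphones under $500",
        "shopping_qfo": "smartphones under 500",
        "search_qfos": ["best budget smartphones under $500 reviews and comparisons"],
    },
    {
        "prompt": "running shoes",
        "shopping_qfo": "Running Shoes",
        "search_qfos": ["running shoes", "best running shoes for beginners"],
    },
]

vs_prompt, vs_search = uniqueness_rates(records)
print(f"unique vs. prompt: {vs_prompt:.2%}, unique vs. search fan-outs: {vs_search:.2%}")
```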

To dive deeper, we explored the average word counts of both of these query fan-out types by calendar week.

The chart below clearly shows that normal fan-outs are significantly longer — 12 vs. seven words. That makes sense since search query fan-outs are used to retrieve contextual information. This means they need to be long enough to retrieve web results that are specific to the user prompt. Vector search (or comparing embeddings) works best with more context.

Shopping fan-outs, on the other hand, typically target a specific shopping results page and therefore do not need to be as long. It appears the main goal is to retrieve products based on the shopping fan-out. Rather than compare chunks of text, the data in this study supports the hypothesis that ChatGPT relies heavily on Google organic shopping results to populate its carousel.

Further evidence of the distinct nature of the shopping fan-outs surfaces when we look at how many are used per prompt. On average, 2.4 search fan-outs are used per prompt vs. just 1.16 for shopping fan-outs. For reasons similar to those above, retrieving more contextual information often requires more search fan-outs than simply retrieving products. To populate an eight-product carousel in ChatGPT, it seems that, for the most part, one page of Google Shopping results is enough.

To answer this question in the fairest possible way, we extracted around 5,000 ChatGPT carousels comprising 43,000 products from the Peec AI dataset. Prompts were chosen to be as diverse as possible (see Methodology for the creation process).

We then extracted the organic shopping pages and retrieved the top 40 organic products for both Google and Bing shopping results. Paid ads and sponsored products were excluded from the analysis.

We used a three-step matching algorithm (see Methodology for exact details) to attain a similarity score between the ChatGPT product title and the title found in organic shopping results. This is because not only is ChatGPT probabilistic, but so is, to a certain extent, Google Shopping. Product titles can be rewritten with or without certain product features and results are very sensitive to the exact proxy location where the results are retrieved.

We counted a product as matching if it reached a threshold of 0.8 or above. In effect, that meant it was the same brand and product name and exhibited a very high degree of similarity.

The results are summarized in the chart below.

Impressively, across 43,000 highly diverse ChatGPT carousel products, 45.8% were found to have an exact title match in the corresponding Google top 40 organic shopping products for that exact shopping fan-out.

For Bing, this exact match rate was just 0.48%.

If we simply look at the percentage of strong product matches across all eight ChatGPT carousel positions, over 83% were found in the Google top 40 products, but that number drops to just under 11% for products found on Bing. This is very strong evidence that ChatGPT sources its carousel products from organic Google Shopping results.

We also see a very high number of weak matches in Bing at over 62%. This implies that the top 40 returned products for each shopping fan-out differ significantly across Google and Bing. This makes sense as there are many thousands of possible combinations of brand and product that can be surfaced in shopping results.

Even if around 11% of ChatGPT carousel products were found on Bing, how many of those were found only on Bing? Across the 43,000 carousel products, only 70 were found on Bing but not in Google Shopping, constituting just 0.16%. This means that in almost every case where there was a match in Bing, there was also a match in Google.
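The Bing-only figure is a set difference divided by the total number of carousel products. The sketch below checks the reported arithmetic and shows the set logic on made-up product IDs; the `exclusive_share` helper is hypothetical.

```python
def exclusive_share(google_matches: set[str], bing_matches: set[str], total_products: int) -> float:
    """Share of all products that matched on Bing but not on Google."""
    bing_only = bing_matches - google_matches
    return len(bing_only) / total_products


# Reported figures: 70 Bing-only products out of roughly 43,000.
reported = f"{70 / 43_000:.2%}"
print(reported)  # 0.16%

# Made-up IDs: "c" is the only product found on Bing alone.
toy_share = exclusive_share({"a", "b"}, {"b", "c"}, total_products=4)
print(toy_share)
```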

It seems unlikely, then, that ChatGPT is also sourcing products from Bing Shopping in the vast majority of cases.

Here we explore the typical Google Shopping positions (mean and median shown) for each ChatGPT carousel position:

For example, for the first carousel position we can see that the average Google Shopping position is around five. Note that we see a sloping trendline for the carousel positions that correspond to higher Google Shopping positions. This implies that ChatGPT sources top carousel products from higher Google Shopping positions.

Plotted another way, we can visualize the cumulative number of strong matches across organic Google Shopping positions. This chart allows us to see that 60% of the strong product matches are found in the top 10 Google shopping results alone.
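A cumulative view like this can be derived from a list of the Google Shopping positions at which strong matches were found. This is a minimal sketch with made-up positions, not the study's data; `cumulative_share` is a hypothetical helper.

```python
from collections import Counter


def cumulative_share(positions: list[int], max_position: int = 40) -> dict[int, float]:
    """Map each position p to the share of matches found at positions 1..p."""
    if not positions:
        raise ValueError("no match positions supplied")
    counts = Counter(positions)
    total = len(positions)
    running = 0
    shares: dict[int, float] = {}
    for position in range(1, max_position + 1):
        running += counts.get(position, 0)
        shares[position] = running / total
    return shares


# Made-up positions of ten strong matches.
positions = [1, 2, 2, 3, 5, 8, 12, 15, 22, 37]
shares = cumulative_share(positions)
print(f"top 10: {shares[10]:.0%}, top 20: {shares[20]:.0%}")
```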

Comparing the top 20 vs. positions 21-40, ChatGPT’s favoritism for higher positions becomes clear, with an overwhelming majority of matches (almost 84%) coming from the top 20:

Finally, we explored whether the prompt being branded vs. non-branded made a difference to the product matching results.

The results show a similar high level of product matching for both branded and non-branded prompts, with only slightly higher match rates for non-branded:

This study analyzed over 43,000 ChatGPT carousel products across 10 industry verticals and compared them against 200,000+ organic shopping results from both Google and Bing. The findings painted a clear picture.

Over 83% of ChatGPT carousel products were found as strong matches in Google’s top 40 organic shopping results. For Bing, that figure was just 11%, and of those, only 70 products across the entire dataset (0.16%) were found exclusively in Bing. In almost every case where Bing returned a match, Google had already returned the same product.

The data strongly supports this. Shopping query fan-outs are distinct from normal search fan-outs 98.3% of the time. They are significantly shorter (seven vs. 12 words), and ChatGPT uses far fewer of them per prompt (1.16 vs. 2.4 fan-outs). This makes sense; populating a product carousel is a fundamentally different task from gathering contextual information to construct a written answer. One is about retrieving structured product listings from a shopping index while the other is meant to retrieve web pages rich enough in context for vector search and re-ranking to work effectively.

The data shows a clear positional bias, with 60% of strong matches coming from the top 10 Google Shopping results and nearly 84% from the top 20. ChatGPT carousel position correlates with Google Shopping rank, meaning products that rank higher in Google Shopping are more likely to appear earlier in the ChatGPT carousel.

Since these patterns hold across branded and non-branded prompts, and across all 10 verticals tested, this reinforces that this is a systematic architectural behavior rather than a category-specific or query-specific artifact.

For brands and retailers, the implication is straightforward: Your Google Shopping ranking strongly influences whether your products make it into ChatGPT’s carousel. These findings indicate that the selection set of carousel products in many cases is effectively the top 40 organic Google Shopping positions for the corresponding shopping fan-out query.

But while product ranking in Google Shopping plays a role, it doesn’t tell the full story. It is likely that other factors, such as overall product mentions and sentiment in the context sources retrieved, also factor into the final ChatGPT carousel selection and ranking.

Understanding the full picture in terms of how your products are perceived across relevant sources, as well as how you show up on Google Shopping, could be the key to understanding ChatGPT product carousels.

For the AI research community, this study provides robust, large-scale evidence that ChatGPT’s product carousel operates as an independent retrieval pipeline for the selection set of products, separate from the contextual web search that powers the written portion of its responses. It is possible, and even likely, that for the final selection and ranking of products, ChatGPT uses contextual clues such as product sentiment from the sources retrieved by the normal search fan-outs.

As always, this represents a snapshot of current behavior. OpenAI could change its retrieval sources or methods at any time, but this behavior has been consistent in our findings for at least the last four months.

Measure how much product overlap there is between ChatGPT Shopping (via product carousels) and Google Shopping organic results for the same queries, across 10 industry verticals. This was contrasted with Bing shopping results as a control using an identical pipeline.

Specifically, the study evaluated:

Prompts were created with the purpose of triggering ChatGPT carousels. To maximize diversity, a mixture of branded and non-branded prompts was used, as well as prompts that explicitly included a price and ones that did not.

Additionally, a diverse selection of verticals was chosen to make the findings more robust. These were: Apparel & Footwear, Baby & Kids, Beauty & Personal Care, Electronics, Home Improvement, Home & Kitchen, Office Supplies, Pet Supplies, Sports & Outdoors, Toys & Games.

The product matching algorithm compared ChatGPT product titles against the top 40 Google Shopping titles using a three-stage cascade approach.

The goal was to find the best match between a ChatGPT product title and the corresponding Google Shopping titles. A match was determined using a cascade of three stages:

This approach was set to be fairly conservative, and 0.8 was determined as a reasonable threshold for a product match as this often corresponds very closely to the same brand and product.
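The stages of the cascade are not listed here, so the following is only an illustrative sketch of what a three-stage title matcher with a 0.8 threshold might look like, not the study's algorithm. It assumes an exact-match stage, a normalized-match stage, and a token-overlap (Jaccard) stage. The stage scores of 0.95 and the 0.9 cap are arbitrary choices for this sketch, and its fuzzy scores will not reproduce the study's numbers.

```python
import re
import unicodedata

MATCH_THRESHOLD = 0.8  # threshold reported in the study


def normalize(title: str) -> str:
    """Lowercase, unify Unicode hyphens, and replace punctuation with spaces."""
    text = unicodedata.normalize("NFKC", title).lower()
    text = re.sub(r"[\u2010-\u2015\u2212]", "-", text)  # hyphen and dash variants
    text = re.sub(r"[^\w\s]", " ", text)
    return " ".join(text.split())


def match_score(chatgpt_title: str, shopping_title: str) -> float:
    # Stage 1: exact string match.
    if chatgpt_title == shopping_title:
        return 1.0
    # Stage 2: identical after normalization (assumed score for this sketch).
    a, b = normalize(chatgpt_title), normalize(shopping_title)
    if a == b:
        return 0.95
    # Stage 3: Jaccard overlap of word sets, capped below the stage 2 score.
    tokens_a, tokens_b = set(a.split()), set(b.split())
    union = tokens_a | tokens_b
    if not union:
        return 0.0
    return min(0.9, len(tokens_a & tokens_b) / len(union))


def best_match(chatgpt_title: str, shopping_titles: list[str]) -> tuple[str, float]:
    """Return the highest-scoring shopping title and its score."""
    scored = [(title, match_score(chatgpt_title, title)) for title in shopping_titles]
    return max(scored, key=lambda pair: pair[1])


title, score = best_match(
    "Hot Wheels RC 1:64 Mustang GTD",
    ["Elimino! Card Game", "Hot Wheels RC 1:64 Mustang GTD"],
)
print(title, score, score >= MATCH_THRESHOLD)
```

A plain Jaccard stage is stricter than the study's scoring appears to be for titles with extra descriptors, so a production matcher would likely need a more forgiving third stage.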

Real examples of matching thresholds from the data:

| Match threshold | Description | ChatGPT product | Google Shopping | Differences observed |
| --- | --- | --- | --- | --- |
| 1.0 | Exact string match, no differences | Hot Wheels RC 1:64 Mustang GTD | Hot Wheels RC 1:64 Mustang GTD | None |
| 0.95 | Near exact, minor differences such as hyphen or punctuation only | Learning Resources Snap-n-Learn Matching Dinos | Learning Resources Snap‑n‑Learn Matching Dinos | The hyphen is a different Unicode character |
| 0.9 | Same brand and product, additional non-crucial words allowed | Block Tech 250 Piece Set | Block Tech 250 Piece Building Blocks Set | “Building Blocks” added, but product and brand are the same |
| 0.85 | Same product and brand, potentially slightly different word order and additional, non-crucial words | LEGO Japanese Red Maple Bonsai Tree | Japanese Red Maple Bonsai Tree LEGO Botanicals | Different word order and one additional word “Botanicals,” same product and brand |
| 0.8 (match threshold) | Same brand and product, possibly additional descriptors | Cards Game Against FRIENDS – Limited Edition | Cards Game Against FRIENDS – Limited Edition – Party Card Games For Adults | Same brand and product with additional descriptors that don’t affect the match |
| 0.75 | Same brand and product line, very minor product differences such as size or dimensions | My Sweet Love 14-inch My Cuddly Baby Doll | My Sweet Love 8-Inch MinWeBaby Doll | Same brand and product line but different size dimension |
| 0.7 | Same brand, often slightly different product, but within same category | Adventure Force Ram Truck RC Car | Adventure Force McLaren 765LT RC Car | Same brand and product category but different individual product |
| 0.65 | Same brand, often slightly different product but within same category | Mattel 300‑Piece Puzzle | Mattel 80th Anniversary Puzzle | Same brand and product category but different individual product |
| 0.6 | Typically same product category, but often different brand and product line | Tell Me Without Telling Me Party Card Game | Elimino! Card Game | Different brand and product line, the same overall category of “card game” |
| 0.55 | Similar product category, but usually a different brand and/or different product | Furby Interactive Plush Toy Interactive Digital Pet Toy | Interactive Digital Pet Toy | Different brand, similar product category but different specific product |

Nvidia reportedly targets “around Computex” launch for its 9GB RTX 5050

Benchlife.info claims to have confirmed yesterday’s reports of Nvidia’s planned RTX 5050 9GB graphics card, and has “confirmed” a release date for the GPU around Computex 2026. Nvidia’s reportedly planning to upgrade its RTX 5050 graphics card to 3GB 28 Gbps GDDR7 memory modules, […]

The post Nvidia targets RTX 5050 9GB launch around Computex appeared first on OC3D.

After layoffs at Skate developer Full Circle just last week, the remainder of the team at EA's studio continues to work on updating the game with new content, the latest of which is Season 3: Fluid Flashback, which begins on March 10, 2026, and promises to bring skaters "back to skateboarding's first major era," which it describes as a time when "polyurethane wheels replaced clay and metal ones," and when the sport began to grow and evolve rapidly. That means elements of San Vansterdam will be taken back to the 1970s, "with vibrant colors and bold designs." Areas like Rolling […]

Read full article at https://wccftech.com/ea-skate-season-3-fluid-flashback-revealed/

This afternoon, independent Polish developer Reikon Games announced that RUINER 2 is in development for PC. As suggested by the title, it will be a sequel to 2017's twin-stick shooter, which was well-received by fans and critics. RUINER earned an 8 out of 10 score on Wccftech: RUINER is a no-brainer if you are interested in fast-paced action games that require real skill to truly perfect. However, RUINER 2 will be greatly expanded: it will support co-op gameplay and shift its genre to "cyberpunk action RPG". Marek Roefler, Game Director of the sequel at Reikon, said in a statement: We […]

Read full article at https://wccftech.com/ruiner-2-announced-pc-co-op-cyberpunk-action-rpg/

Epic Games is officially suing former contractor AdiraFNInfo, marking a significant escalation in the industry-wide hard stance against leakers. Following Activision's recent shutdown of a Call of Duty leaker, Epic’s legal action confirms that gaming companies have had enough of confidential IP and trade secrets being shared ahead of official announcements. As confirmed today with a message on X, Epic Games "took legal action against a former contractor who repeatedly leaked confidential partner IP and trade secrets that they received while working with Epic." The publisher absolutely does "not allow this and will continue to take action when Epic team […]

Read full article at https://wccftech.com/epic-games-sues-former-contractor-and-known-fortnite-leaker-adirafninfo/

Assassin's Creed Unity has received a 60 FPS patch today for PlayStation 5, Xbox Series X, and Xbox Series S, which may have packed an undocumented bump to 4K resolution. While a previous-generation title, this update makes the 2014 classic relevant again for those wishing to experience the series' old, more straightforward gameplay formula. According to multiple online reports, today's Assassin's Creed Unity patch increases resolution up to 4K. While no one has made an actual pixel count, the game's PlayStation 5 update history mentions 4K resolution and 60 FPS gameplay. Some users, such as ResetERA's RayCharlizard, shared screenshots captured […]

Read full article at https://wccftech.com/assassins-creed-unity-ps5-xsx-patch-4k/

Yesterday, the official X (formerly Twitter) account for the United States White House published a video promoting its ongoing strikes on Iran, which contained footage and UI elements from Microsoft and Activision's popular shooter franchise, Call of Duty. The United States and Israel launched strikes against Iran this past weekend, which recent reports estimate have resulted in 1,230 casualties. Amidst the responses to the video, which began with Call of Duty footage followed by real-life footage of the strikes, Chance Glasco, one of the original founders of Infinity Ward and developer on Call of Duty said that the video was […]

Read full article at https://wccftech.com/call-of-duty-co-founder-says-activision-wanted-cod-about-iran-attacking-israel/

Here are Google’s latest AI updates from February 2026

For 20 years, the web has run on a simple trade: publish content that meets a person’s needs, rank in search, earn traffic, then monetize that traffic through products, services, affiliate referrals, or ads.

Zero-click answers and AI search are rewriting that relationship. The new question is whether AI will cite you as a source — and whether that visibility can turn into revenue.

To understand who gets included and who gets routed around, I ran over 200 AI visibility audits across 10 industries.

The pattern was consistent: Most sites are easy to parse, but hard to justify citing. And the industries that rely on discovery traffic the most are often the ones making themselves the hardest to access.

I ran 201 audits using the same rubric and captured an overall AI visibility score, plus four subscores:

The dataset included 201 audits across 10 industries:

Note that there was a page type skew — the sample is homepage-heavy (131 homepages, 13 articles, with the remainder a mix of pages). That matters because homepages tend to be marketing-heavy and evidence-light.

I also tracked access failures because “error” results are part of the story. 38 of the 201 audits (18.9%) returned an error, meaning the agent was likely blocked or couldn’t reliably access the content.

An additional eight audits were technically processed but scored 0 due to missing subscores, consistent with partial extraction or app-style rendering that yields little accessible content.

When I summarized score distributions, I focused on the successfully processed audits (163 sites), so “cannot access” didn’t get mixed with “low quality.” I treated error rate by industry as its own signal because it indicated whether AI systems could reliably use a site as a source.
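The figures above can be re-derived in a few lines. This is a minimal sketch that uses only the counts reported in the text; the variable names are illustrative.

```javascript
// Counts reported in the audit write-up above.
const totalAudits = 201;
const erroredAudits = 38;

// Share of audits that returned an error, as a percentage with one decimal.
const errorRatePct = Math.round((erroredAudits / totalAudits) * 1000) / 10;

// Audits that were successfully processed and used for score distributions.
const processedAudits = totalAudits - erroredAudits;

console.log(errorRatePct);    // 18.9
console.log(processedAudits); // 163
```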

The table below shows how the industries in the dataset performed in the audits.

| Rank | Industry | Error rate | Median overall | Median authority | Median extractability | At risk |
| --- | --- | --- | --- | --- | --- | --- |
| 1 | Travel booking and trip planning | 33.3% | 45.5 | 31.0 | 52.0 | High |
| 2 | Job boards and career marketplaces | 40.0% | 64.0 | 44.0 | 74.0 | High |
| 3 | Legal directories and lead gen | 35.0% | 63.0 | 44.0 | 74.0 | High |
| 4 | Coupons and deals | 20.0% | 62.0 | 36.0 | 74.0 | High |
| 5 | Local directories and lead gen | 5.3% | 64.0 | 38.0 | 74.0 | Medium |
| 6 | Online courses and learning marketplaces | 30.0% | 67.5 | 46.5 | 80.0 | Medium |
| 7 | Health info and symptom lookups | 15.0% | 69.0 | 52.0 | 80.0 | Low |
| 8 | Personal finance comparison | 5.0% | 67.0 | 52.0 | 78.0 | Low |
| 9 | Affiliate product reviews | 0.0% | 69.5 | 54.0 | 74.0 | Low |
| 10 | Recipes and cooking content | 5.0% | 75.0 | 55.5 | 81.5 | Low |

The findings show that most websites aren’t built to be cited consistently. Here are the three numbers that matter.

38 of 201 sites (18.9%) returned an error. In some categories, it was far worse: job boards (40%), legal directories (35%), travel booking (33%), and course marketplaces (30%). In those spaces, a third to nearly half of the market is effectively AI-dark by default.

Legal directories had the highest AI blocking of any industry.

Across the 163 processed audits:

Translation: Most brands aren’t built to be reliably used and cited.

Median subscores across processed audits:

Most pages are easy to parse. Far fewer are easy to justify citing. Two repeated findings explain why:

That should change how you think about risk. More than losing traffic, the bigger threat is being removed from the consideration set.

Dig deeper: What 4 AI search experiments reveal about attribution and buying decisions

Industries disappear for three reasons. You can think of them as three failure modes.

If agents can’t consistently access your content, the model has less to work with and will either route around you or fill in the gaps from other sources.

What access failure looks like:

Why this causes vanishing:

Trust failure is quieter. The agent can access your page, parse it, and summarize it, but the page doesn’t provide enough proof for the model to confidently cite it as a source.

This was the dominant pattern in the completed audits. In plain language: Your content is readable, but it isn’t defensible.

The clearest proof of this showed up when I compared page types:

A polished homepage isn’t proof. If you want to be cited for anything beyond your brand name, a typical homepage alone isn’t enough. Evidence usually lives in articles, explainers, data pages, policy pages, and methodology pages.

Utility failure is the most painful. You might get included. You might get cited. But if your value is only information, AI can compress it into an answer, and the user never needs to visit your site.

Visibility determines whether you appear in the conversation. Utility determines whether appearing turns into revenue.

A practical way to think about it:

Access failure gets you excluded. Trust failure gets you skipped. Utility failure gets you summarized.

Once access, trust, and utility get viewed together, the vulnerable industries stop looking random.

The categories that repeatedly showed high risk in my dataset share three traits:

That’s why travel booking, job boards, legal directories, and coupon sites clustered as the most exposed categories in this dataset.

The bigger takeaway? Your website can be built in a way that invites exclusion, even if your business is healthy.

Dig deeper: Why every AI search study tells a different story

Some industries will feel this harder than others. A site funded primarily by high-volume informational traffic is more exposed to zero-click behavior. But even in those categories, the path forward is to stop selling information alone.

The big mistake right now is treating AI search like a ranking update, when it’s an economic update. The audits made two things obvious:

The threat is invisibility. You don’t win by hiding. You win by becoming cite-worthy and by building something the user still needs after the answer is delivered.

Trust plus utility is the new moat. Anything else is just playing from yesterday’s playbook.

Sony reportedly halts PC porting efforts for single-player PlayStation 5 games

Recent years have seen Sony bring more and more of its classic PlayStation titles to PC, to the point that it created its PlayStation PC publishing unit in 2021 to support these efforts. Now, it looks like Sony has fallen out of love with […]

The post Sony shifts PC strategy towards console exclusivity appeared first on OC3D.

NVIDIA's CEO has talked about the 'agentic AI' inflection point at the Morgan Stanley conference, and he has called out OpenClaw as the "most important" software release of our times.

NVIDIA's CEO Says that Agentic AI Has Brought Uses 1,000x Higher Tokens, Bringing In Immense Compute Demand

Jensen has talked about AI being a "5-layer cake", and one of the more interesting layers that yields the most returns to hyperscalers and frontier labs is the applications layer. OpenClaw and AI agents are examples of how AI, when placed in a hyper-personalized environment, yields results that replicate human workloads. NVIDIA's CEO […]

Read full article at https://wccftech.com/nvidia-ceo-says-openclaw-did-in-3-weeks-what-linux-took-30-years/

VR developer and publisher nDreams, the studio behind VR titles like Reach, Vendetta Forever, Synapse, Ghostbusters: Rise of the Ghost Lord, and more, have just announced "a significant reduction in overall staffing levels," resulting in the closure of two of its internal studios, Near Light and Compass studios. Confirmed in a statement on the company's LinkedIn page, the layoffs will impact studios across nDreams' suite of teams as the company gets restructured to put nDreams Elevation at its core, though only the aforementioned Near Light and Compass will be shuttered. Between the studios, 78 developers are impacted by the closures. […]

Read full article at https://wccftech.com/vr-studio-ndreams-announces-significant-layoffs-shuts-down-two-internal-studios/

Apple has finally debuted its latest chronically hyped up budget offering, dubbed the MacBook Neo, replete with specs that barely qualify for 2016, let alone 2026. Of course, budget offerings almost always cut corners in some way or the other. But how do you justify two USB-C ports with wildly different characteristics and no way of knowing which is which until you actually plug in your peripheral? What about a heavily binned SoC, a hobbled trackpad, and pricing tiers that make an M3 MacBook Air appear like a godsend? Apple seems to have designed the MacBook Neo to specifically cater […]

Read full article at https://wccftech.com/apple-just-debuted-a-glorified-piece-of-e-junk-and-called-it-macbook-neo/

A company that prides itself on its stringent focus on detail can often make head-scratching blunders that you’d never expect it to. On this occasion, one eagle-eyed individual caught Apple comparing a 15-inch M1 MacBook Air, a product that has never existed in its lineup, to the M5, which currently powers the technology giant’s latest portable Mac. Fortunately, the technology giant rectified the mistake, but not before someone posted it on social media for millions to see.

Larger 15-inch MacBook Air models were added to the lineup after the M2’s release

To remind you, Apple’s M1, which launched back in […]

Read full article at https://wccftech.com/apple-accidentally-mentioned-non-existent-15-inch-m1-macbook-air-in-m5-comparison/

To combat the current GPU shortages due to higher VRAM prices, NVIDIA is reportedly bringing back its popular RTX 30 series budget GPU.

Five-Year-Old GPU to Return to Shelves; AIBs Will Reportedly Start Getting the GPUs Between March 10 and March 20

It's remarkable how we are going back to older hardware, but it's one of the only measures left for hardware manufacturers to maintain a steady supply and meet the demand. The Ampere architecture-based GeForce RTX 3060 is about to make a comeback, as we reported recently. RTX 3060 has been one of the most popular graphics cards in […]

Read full article at https://wccftech.com/nvidia-geforce-rtx-3060-expected-to-make-a-comeback-in-mid-march/

Today, California-based independent developer Ember Lab announced that Kena: Bridge of Spirits will launch on Nintendo Switch 2 this Spring. Kena: Bridge of Spirits first launched on PlayStation platforms and PC in September 2021 and went on to win several awards, including Best Independent Game and Best Debut Indie Game at The Game Awards 2021. On Wccftech, the game earned an 8 out of 10 score from reviewer Francesco De Meo, who wrote at the time: Despite featuring a very familiar experience inspired by The Legend of Zelda series, Kena: Bridge of Spirits manages to stand out from the competition […]

Read full article at https://wccftech.com/kena-bridge-spirits-nintendo-switch-2-spring-2026/

After celebrating its sixth anniversary last month, NVIDIA has prepared another large lineup of PC games for the GeForce NOW cloud platform in March. Throughout the month, NVIDIA is planning to add fifteen games to the library, starting with the following eight this week: The most interesting new releases are landing later this month, though, with Crimson Desert from Pearl Abyss leading the pack. The game will support NVIDIA DLSS Super Resolution for GeForce NOW users with the Premium tier and also NVIDIA DLSS Multi Frame Generation and Ray Reconstruction for GeForce NOW users with the Ultimate tier. Weirdly enough, […]

Read full article at https://wccftech.com/geforce-now-march-2026-lineup-crimson-desert-15-games/

The "upgraded" GeForce RTX 5050 is expected to arrive in June this year at the Computex event.

NVIDIA Will Reportedly Debut its 9 GB RTX 5050 GPU at Computex; Same GPU, but Newer GDDR7 Memory With an Additional Gigabyte of VRAM

Instead of increasing the VRAM to 12 GB, NVIDIA is straight up reducing RTX 5050's memory bus width to offer 9 GB VRAM capacity. The original version comes with 8 GB GDDR6 memory and is the only RTX 50 series GPU to run on previous-gen GDDR memory. That said, with the new GPU planned for launch, […]

Read full article at https://wccftech.com/nvidia-geforce-rtx-5050-with-9-gb-gddr7-memory-rumored-to-debut-at-computex/

Superpollutants have been responsible for close to half of planetary warming, and without action they’ll continue to warm the planet rapidly in the decades to come. That’…

How content is structured in an article or blog post might not seem controversial. But, apparently, Google doesn’t want you to create bite-sized chunks of content simply to please LLMs. Called “chunking,” this technique helps get your content noticed by AI models and reflects how readers actually engage with online content.

Chunking may make content more retrievable or citable in AI search, but ultimately, it improves the flow of content and makes concepts easier for people to understand. Let’s talk about how chunking works and when to use it.

Chunking is the practice of organizing text into distinct, self-contained units of meaning. When content is chunked, information is segmented so each paragraph focuses on a single idea and contains everything the reader needs to understand the basics of that idea simply and quickly.

Someone should be able to read a single paragraph and grasp the concept without having to hunt for context in the surrounding words.

The recent criticism from Google suggests that the practice of chunking over-optimizes content, specifically so that it will show up in AI answers. The idea that people are writing specifically for AI assumes that what’s good for AI is somehow bad for human readers.

But really, chunking helps communicate ideas for both readers and search retrieval systems. When content is chunked, it doesn’t dumb down or artificially fragment ideas. It organizes information to match how people actually read online content, making articles easier to scan.

Chunking also helps AI systems because they operate at the passage level rather than the page level. For example, when a system needs to identify an answer for “how to measure keyword cannibalization,” a heading that says exactly that, followed by a focused paragraph, would create a clear match.

In contrast, when an answer to that same question is buried in a dense paragraph covering three other topics, that information gets diluted. The AI might see relevant keywords, but if the text meanders between ideas, it will have a lower confidence that the passage definitively answers the query.
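The dilution effect can be illustrated with a toy scorer. This is a sketch only: real retrieval systems rely on much richer signals, and the scoring rule here (the share of a passage's words that are query terms) and both sample passages are assumptions made for this example.

```javascript
// Toy passage scorer: share of a passage's words that are query terms.
// Real retrieval systems use far richer signals; this only illustrates dilution.
function tokenize(text) {
  return text.toLowerCase().match(/[a-z0-9]+/g) || [];
}

function passageScore(query, passage) {
  const queryTerms = new Set(tokenize(query));
  const words = tokenize(passage);
  if (words.length === 0) return 0;
  const hits = words.filter((w) => queryTerms.has(w)).length;
  return hits / words.length;
}

const query = "how to measure keyword cannibalization";

// Hypothetical passages written for this example.
const focused =
  "How to measure keyword cannibalization. To measure keyword cannibalization, " +
  "list the pages that rank for the same keyword.";
const diluted =
  "Our agency also covers link building, site speed, and local listings. " +
  "Somewhere in that work we measure keyword cannibalization, along with " +
  "redirects, schema, and image compression.";

console.log(passageScore(query, focused) > passageScore(query, diluted)); // true
```

The focused passage scores higher because nearly every word supports the query, while the diluted passage spreads the same few terms across unrelated topics.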

Clear structure creates clear meaning.

Chunking helps readers scan content and helps AI systems accurately identify what your content says.

Dig deeper: Chunk, cite, clarify, build: A content framework for AI search

When writing from scratch, integrate chunking into your process from the start.

However, it may not be worth your time to edit existing content solely to chunk it. You may find that some articles already follow chunking principles, even if they weren’t explicitly planned to do so. Others may be out of date or poorly structured, requiring more substantial rewrites.

If you want to chunk existing content, prioritize pieces that:

Skip chunking edits for content that:

If you have content that is impactful because it creates an emotional arc, breaking it into discrete chunks could hurt the piece. If your content succeeds by carrying readers through a journey rather than letting them jump to an answer, preserve that flow.

For example:

Dig deeper: Chunks, passages and micro-answer engine optimization wins in Google AI Mode

A chunk in a piece of content should be long enough to explain one thought. This often results in shorter paragraphs, but the defining feature is a singular focus, not the word count.

These focused paragraphs sit under clear headings. The heading tells the reader what to expect, and the chunks beneath it deliver on that expectation.

To include chunking in your writing, the most effective approach is to integrate it from the start.

Define for yourself or other writers which ideas or concepts in a given topic constitute a chunk, focusing on paragraphs and heading descriptions.

If using content briefs, make it clear in your outlines that each H2 or H3 should cover one complete concept and the content under that heading should fully explain the concept.

Focus your efforts on high-value pages first when editing existing content. Prioritize pages that receive traffic but struggle with engagement or pages that rank well but aren’t being cited.

Don’t let Google convince you that chunking is a hack. Chunking makes content work better for everyone and everything — from readers scanning for specific information to AI systems matching queries to answers.

Dig deeper: How to build a context-first AI search optimization strategy

You’ve probably heard developers talk about the DOM. Maybe you’ve even inspected it in DevTools or seen it referenced in Google Search Console.

But what, exactly, is it? And why should SEOs care? Let’s take a look at what it is, why it’s important, and how to best optimize it.

The Document Object Model (DOM) is a browser’s live, in-memory representation of your webpage. It acts as the interface that allows programs like JavaScript to interact with your content.

The DOM is organized as a hierarchical tree, similar to a family tree:

Elements such as <body>, <p>, and <a> become branches (or “nodes”).

This hierarchy is critical because it allows the browser (and search engines) to understand the relationship between different parts of your content. For example, proper hierarchical order lets your browser understand that a specific paragraph belongs to a specific heading.

The DOM itself is actually a JavaScript object structure stored in memory, but browsers show it to you as markup that looks very much like HTML.

You can see this HTML representation of the DOM by right-clicking on a page and selecting Inspect > Elements. This is called the Elements panel. I’ve outlined it in the red box below:

In the Elements panel inside DevTools, you can:

Note that DevTools doesn’t necessarily show you what Googlebot sees. I’ll circle back to what that means later in this article.

To understand why the DOM often looks different from your HTML file, you first need to understand how the browser creates it. That begins with your browser building the DOM tree.

When your browser requests a page, the server sends back an HTML file. The browser reads this response line by line and translates it into “tokens” (tags like <html>, <body>, <div>).

These tokens are then converted into distinct “nodes,” which serve as the building blocks of the page. The browser links these nodes together in a parent-child hierarchy to form the tree structure.
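A toy version of the token-to-node-to-tree step might look like the sketch below. It assumes a tiny, well-formed HTML subset (no attributes, comments, or void elements) and uses plain objects as nodes; the function names are illustrative, and real browsers follow the far more involved HTML parsing rules.

```javascript
// Toy parser for a tiny, well-formed HTML subset (no attributes, comments,
// or void elements). Real browsers follow the much larger HTML parsing spec.
function tokenize(html) {
  // Split into tags like "<p>" / "</p>" and the text runs between them.
  return html.match(/<\/?[a-z0-9]+>|[^<]+/gi) || [];
}

function buildTree(html) {
  const root = { tagName: "#document", children: [] };
  const stack = [root]; // open elements; last entry is the current parent

  for (const token of tokenize(html)) {
    if (token.startsWith("</")) {
      stack.pop(); // closing tag: step back up to the parent
    } else if (token.startsWith("<")) {
      const node = { tagName: token.slice(1, -1).toLowerCase(), children: [] };
      stack[stack.length - 1].children.push(node);
      stack.push(node); // opening tag: becomes the new current parent
    } else if (token.trim() !== "") {
      stack[stack.length - 1].children.push({ text: token.trim() });
    }
  }
  return root;
}

const tree = buildTree("<html><body><h1>Title</h1><p>Hello</p></body></html>");
const body = tree.children[0].children[0];
console.log(body.children.map((n) => n.tagName)); // [ 'h1', 'p' ]
```

The stack of open elements is what produces the parent-child hierarchy: each opening tag is attached to whatever element is currently open.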

You can visualize the process like this:

It’s important to know that the browser simultaneously creates a tree-like structure for CSS, known as the CSS Object Model (CSSOM), which allows JavaScript to read and modify CSS dynamically. However, for SEO, the CSSOM matters far less than the DOM.

JavaScript often executes while the tree is still being built. If the browser encounters a <script> tag (without defer or async attributes, which allow for the script to load asynchronously), it pauses construction, runs the script, and then finishes building the tree.

During this execution, scripts can modify the DOM by injecting new content, removing nodes, or changing links. This is why the HTML you see in View Source often looks different from what you see in the Elements panel.

Here’s an example of what I mean. Each time I click the button below, it adds a new paragraph element to the DOM, updating what the user sees.
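A handler along these lines could produce that behavior. This is a hedged sketch, not the page's actual code: the element ids (`add-paragraph`, `content`) and the paragraph text are assumptions.

```javascript
// Appends a paragraph to the live DOM. `doc` is the page's `document` in a
// browser; the element ids and text used below are hypothetical.
function addParagraph(doc, containerId, text) {
  const container = doc.getElementById(containerId);
  const p = doc.createElement("p");
  p.textContent = text;
  container.appendChild(p);
  return container.children.length;
}

// Browser usage: each click adds a <p> that shows up in the Elements panel
// but never in View Source, because the HTML file itself is unchanged.
if (typeof document !== "undefined") {
  const button = document.getElementById("add-paragraph");
  if (button) {
    button.addEventListener("click", () =>
      addParagraph(document, "content", "Added by JavaScript")
    );
  }
}
```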

Your HTML is the starting point, a blueprint, if you will, but the DOM is what the browser builds from that blueprint.

Once the DOM is created, it can change dynamically without ever touching the underlying HTML file.

Dig deeper: JavaScript SEO: How to make dynamic content crawlable

Modern search engines, such as Google, render pages using a headless browser (Chromium). This means that they evaluate the DOM rather than just the HTML response.

When Googlebot crawls a page, it first parses the HTML, then uses the Web Rendering Service to execute JavaScript and take a DOM snapshot for indexing.

The process looks like this:

However, there are important limitations to understand and keep in mind for your website:

Looking ahead to a world that’s becoming more AI-dependent, AI agents will increasingly need to interact with websites to complete tasks for users, not just crawl for indexing.

These agents will need to navigate your DOM, click elements, fill forms, and extract information to complete their tasks, making a well-structured, accessible DOM even more critical than ever.

The URL inspection tool in Google Search Console shows how Google renders your page’s DOM, also known in SEO terms as the “rendered HTML,” and highlights any issues Googlebot might have encountered.

This tool is crucial because it reveals the version of the page Google indexes, not just what your browser renders. If Google can’t see it, it can’t index it, which could impact your SEO efforts.

In GSC, you can access this by clicking URL inspection, entering a URL, and selecting View Crawled Page.

The panel below, marked in red, displays Googlebot’s version of the rendered HTML.

If you don’t have access to the property, you can also use Google’s Rich Results Test, which lets you do the same thing for any webpage.

Dig deeper: Google Search Console URL Inspection tool: 7 practical SEO use cases

The shadow DOM is a web standard that allows developers to encapsulate parts of the DOM. Think of it as a separate, isolated DOM tree attached to an element, hidden from the main DOM.

The shadow tree starts with a shadow root, and elements attach to it the same way they do in the light (normal) DOM. It looks like this:

Why does this exist? It’s primarily used to keep styles, scripts, and markup self-contained. Styles defined here cannot bleed out to the rest of the page, and vice versa. For example, a chat widget or feedback form might use shadow DOM to ensure its appearance isn’t affected by the host site’s styles.

I’ve added a shadow DOM to our sample page below to show what it looks like in practice. There’s a new div in the HTML file, and JavaScript then adds a div with text inside it.
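The JavaScript side of a setup like that could be sketched as follows, using the standard `attachShadow` method. The host id (`widget-host`) and the text are assumptions for illustration, not the sample page's actual code.

```javascript
// Attaches an open shadow root to a host element and adds a <div> inside it.
// `doc` is the page's `document` in a browser; the id and text are hypothetical.
function attachWidget(doc, hostId, text) {
  const host = doc.getElementById(hostId);
  const shadowRoot = host.attachShadow({ mode: "open" });
  const inner = doc.createElement("div");
  inner.textContent = text;
  shadowRoot.appendChild(inner);
  return shadowRoot;
}

// Browser usage: assumes the HTML file contains <div id="widget-host"></div>.
if (typeof document !== "undefined" && document.getElementById("widget-host")) {
  attachWidget(document, "widget-host", "Hello from the shadow DOM");
}
```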

When rendering pages, Googlebot flattens both shadow DOM and light DOM and treats shadow DOM the same as other DOM content once rendered.

As you can see below, I put this page’s URL into Google’s Rich Results Test to view the rendered HTML, and you can see the paragraph text is visible.

Follow these practices to ensure search engines can crawl, render, and index your content effectively.

Your most important content must be in the DOM and appear without user interaction. This is imperative for proper indexing. Remember, Googlebot renders the initial state of your page but doesn’t click, type, or hover on elements.

Content that is added to the DOM only after these interactions may not be visible to crawlers. One caveat is that accordions and tabs are fine as long as the content already exists in the DOM.

As you can see in the screenshot below, the paragraph text is visible in the Elements panel even when the accordion tab has not been opened or clicked.

As we all know, links are fundamental to SEO. Search engines look for standard <a> tags with href attributes to discover new URLs. To make sure they discover your links, the DOM should contain real links. Otherwise, you risk crawl dead ends.

You should also avoid using JavaScript click handlers (e.g., <button onclick="...">) for navigation, as crawlers generally won’t execute them.

Like this:
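As a rough illustration of the difference, the sketch below mimics crawler-style link discovery by collecting `href` values from `<a>` tags. The markup is hypothetical, and a regular expression is not a real HTML parser, so treat this as a demonstration rather than a crawler implementation.

```javascript
// Rough crawler-style link discovery: collect href values from <a> tags.
// A regex is not a real HTML parser; this is only for illustration.
function discoverLinks(html) {
  const links = [];
  const anchorHref = /<a\s[^>]*?href\s*=\s*"([^"]*)"/gi;
  let match;
  while ((match = anchorHref.exec(html)) !== null) {
    links.push(match[1]);
  }
  return links;
}

// Hypothetical markup: one real link, one button that navigates via JavaScript.
const markup = `
  <a href="/pricing">Pricing</a>
  <button onclick="location.href='/contact'">Contact</button>
`;

console.log(discoverLinks(markup)); // [ '/pricing' ]
```

Only the standard link is found. The `/contact` destination exists solely inside a click handler, so a link extractor that looks for anchors has nothing to follow.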

Use heading tags (<h1>, <h2>, etc.) in logical hierarchy and wrap content in semantic elements like <article>, <section>, and <nav> that correctly describe the site’s content. Search engines use this structure to understand pages.
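One way to spot-check heading order is to look for skipped levels. The sketch below assumes that "logical hierarchy" means a heading should never jump more than one level deeper than the heading before it; that rule and the function name are assumptions for illustration.

```javascript
// Flags headings that skip a level (e.g. an <h2> followed directly by an <h4>).
function findSkippedHeadings(levels) {
  const problems = [];
  for (let i = 1; i < levels.length; i++) {
    if (levels[i] > levels[i - 1] + 1) {
      problems.push({ index: i, from: levels[i - 1], to: levels[i] });
    }
  }
  return problems;
}

// In a browser, the levels could be collected with:
//   [...document.querySelectorAll("h1,h2,h3,h4,h5,h6")].map((h) => Number(h.tagName[1]))
console.log(findSkippedHeadings([1, 2, 4, 2, 3])); // [ { index: 2, from: 2, to: 4 } ]
```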

A common issue with page builders is making DOMs full of nested <div> elements without semantic meaning. This does little to help search engines understand your page and sets up problems for you or future devs trying to maintain the code on your site.

Be sure to maintain the same semantic standards you’d follow in static HTML.

Here’s a snippet of semantic HTML as an example:

<!-- Semantic HTML -->

<nav>

<ul>

<li><a href="/">Home</a></li>

<li><a href="/about">About</a></li>

</ul>

</nav>

Here’s an example of “div soup” HTML that’s non-semantic and harder for search engines and assistive technologies to understand.

<!-- Non-Semantic HTML -->

<div class="nav">

<div class="nav-list">

<div class="nav-item"><a href="/">Home</a></div>

<div class="nav-item"><a href="/about">About</a></div>

</div>

</div>

Keep the DOM lean, ideally under ~1,500 nodes, and avoid excessive nesting. Remove unnecessary wrapper elements to reduce style recalculation, layout, and paint costs.

Here’s an example from web.dev of excessive nesting and an unnecessarily deep DOM:

<div>

<div>

<div>

<div>

<!-- Contents -->

</div>

</div>

</div>

</div>

While DOM size is not a Core Web Vital itself, excessive and deeply nested DOMs can indirectly impact performance, especially on lower-end devices.
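To check a page against the ~1,500-node guideline mentioned earlier, a small helper can count elements and measure nesting depth. This is a minimal sketch: the function name is illustrative, and it works on any object exposing an iterable `children`, which is why a plain-object stand-in is used here.

```javascript
// Counts element nodes and the deepest nesting level under `root`.
// Works on any object exposing an iterable `children`, so in a browser you
// could pass `document.documentElement`.
function measureDom(root) {
  let nodeCount = 0;
  let maxDepth = 0;
  const stack = [[root, 1]];
  while (stack.length > 0) {
    const [node, depth] = stack.pop();
    nodeCount += 1;
    maxDepth = Math.max(maxDepth, depth);
    for (const child of node.children) {
      stack.push([child, depth + 1]);
    }
  }
  return { nodeCount, maxDepth };
}

// Plain-object stand-in for the four nested <div> wrappers shown above.
const nested = { children: [{ children: [{ children: [{ children: [] }] }] }] };
console.log(measureDom(nested)); // { nodeCount: 4, maxDepth: 4 }
```

An explicit stack is used instead of recursion so that very deep trees do not risk exhausting the call stack.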

To mitigate these impacts:

A workable understanding of the DOM can help you not only diagnose SEO issues, but also effectively communicate with developers and others on your team.

We know that the DOM impacts Core Web Vitals, crawlability, and indexing. As AI agents increasingly interact with websites, DOM optimization becomes more critical. It’s important to master these fundamentals now to stay ahead of evolving search and AI technologies.

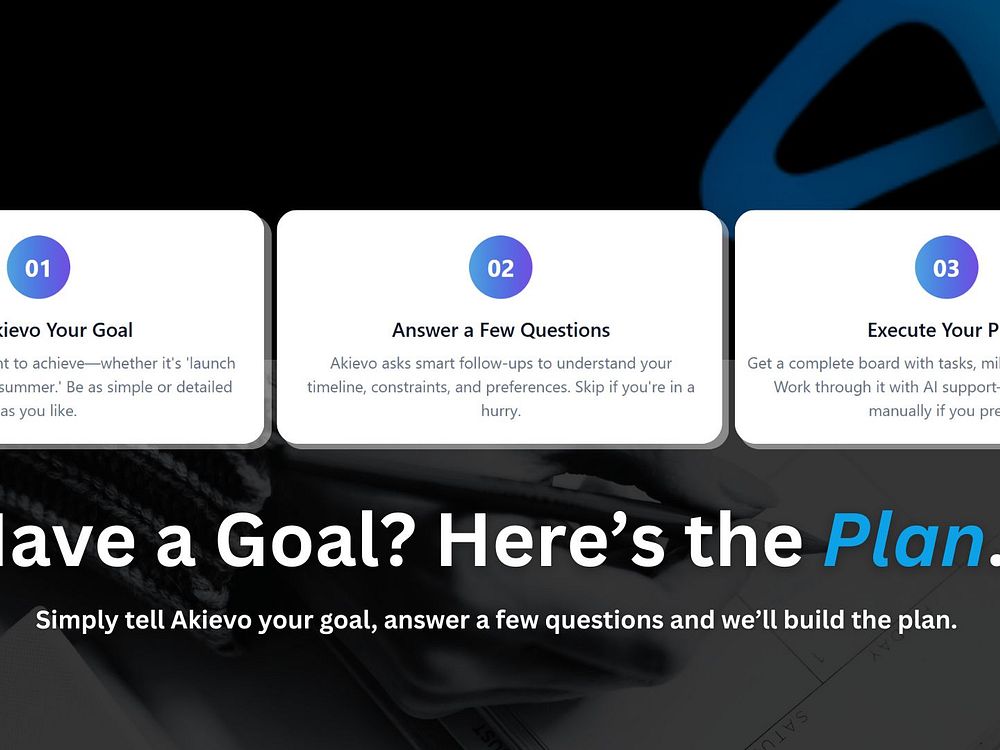

Akievo brings AI-powered project planning to everyone. Tell Akievo what you want to achieve, whether it's a wedding, a business idea, a financial plan, or any other goal. It instantly creates a complete, structured plan with tasks, timelines, and milestones. Akievo gives everyday people the power of professional project management.

Kaomojiya is the largest free collection of Japanese kaomoji (text emoticons) on the web. With over 3,000 kaomoji organized across 500+ categories, from happy and sad to animals, food, and seasonal events, finding the perfect expression takes just one tap. Every kaomoji is pure Unicode text, meaning it works everywhere: chat apps, emails, social media, code comments, and more. No app to install and no account to create. Just browse, tap, and paste.

Remedy Entertainment has already kicked off the marketing campaign for Control RESONANT in earnest. Following the announcement at The Game Awards 2025, the Finnish studio shared the first gameplay trailer during February's State of Play showcase. This week, they've continued to reveal more footage and information about the game. Yesterday, we reported that Remedy has promised a base minimum of 60 frames per second on all platforms, and that the combat gameplay shown demonstrated fast-paced melee-focused action akin to Devil May Cry or Bayonetta. Now, YouTuber Hidden Machine reports that Remedy confirmed Jesse Faden will not be playable at all […]

Read full article at https://wccftech.com/jesse-faden-isnt-playable-control-resonant/

Apple’s updated MacBook Pro lineup with M5 Pro and M5 Max options is available to pre-order, but not every customer wanting a portable Mac will keep track of the company’s announcements, as some of them had already placed an order for some M4 Max versions just days before the unveiling. While we can picture their shock when Apple announced its new chipsets, what’s an even bigger surprise is that the company outright canceled those M4 Max MacBook Pro orders, only to update them with M5 Max variants. Now that’s top-tier customer service. One lucky customer who placed an order for the M4 MacBook […]

Read full article at https://wccftech.com/m4-max-macbook-pro-orders-getting-canceled-by-apple-replaced-by-m5-max-versions/

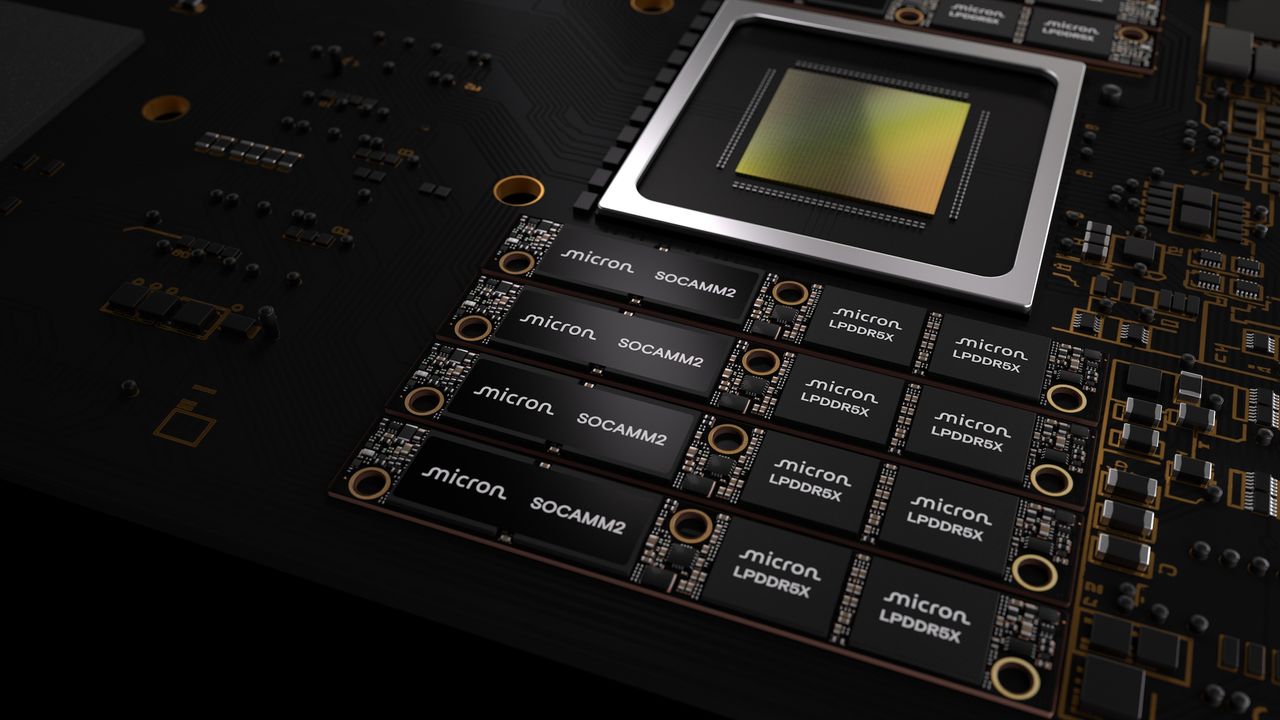

Looks like CXMT is also producing LPCAMM2 memory modules and not just the regular DDR4 and DDR5 DRAM chips.

CXMT is Reportedly Making LPCAMM2 Memory Modules, and Lenovo's ThinkBook 2026 is Likely the First Device to use it

Chinese memory maker, CXMT, is reportedly also making memory modules apart from producing memory chips. Unlike other smaller players, CXMT isn't just limiting itself to DDR4 and DDR5 memory chips but is reportedly assembling modules for mobile devices. The company is now reportedly making the new LPCAMM2 memory modules, which replace the soldered memory chips in laptops. We recently reported on the Lenovo […]

Read full article at https://wccftech.com/lenovo-thinkbook-2026-reportedly-utilizes-cxmts-lpcamm2-memory-modules/

Today we’re opening the Google AI Center Berlin. This new space will serve as a hub for leading AI researchers and developers from Google DeepMind, Google Research and G…

There’s a growing problem in SEO and content marketing that doesn’t get talked about enough: everything is starting to sound the same. The same phrasing and structure, the same bland tone, the same safe language, the same robotic rhythm.

The web is filling up with perfectly optimized content that no one actually enjoys reading. And that’s the real risk. Not that AI will replace SEOs, Google will penalize AI content, or automation will destroy search.

The real danger is that brands lose their voice, their personality, and their identity in the name of efficiency.

AI should make your SEO better, not blander. Faster, not flatter. Scalable, not soulless.

Here’s how to use AI without turning your brand into beige wallpaper — and without losing what makes it worth ranking in the first place.

AI doesn’t replace a marketing plan, positioning model, or clear brand direction. It supports them. In the same way that tools like Google Analytics, Semrush, and Screaming Frog help you understand what’s happening, AI helps you work more efficiently and supports thinking.

If your SEO strategy is simply, “We use AI,” you don’t have a strategy. You have a software subscription. Without a clear understanding of your audience, what they care about, the problems they’re trying to solve, how they speak, what tone they respond to, and what your brand stands for, AI will just produce generic content at scale.

AI is genuinely good at certain parts of SEO, particularly areas that rely on scale, structure, and data processing. These include:

This is where AI earns its place. It handles repetitive manual work, speeds up research, reduces basic human error, and helps teams operate more consistently at scale. None of that is threatening. It’s simply practical.

Used properly, AI removes friction from SEO work and gives teams more space to focus on strategy and decision-making. The problems begin when people expect AI to execute SEO work it isn’t built for, treating it as a shortcut rather than a support system. When used this way, the output inevitably falls short of expectations.

Dig deeper: How to train in-house LLMs on your brand voice

AI struggles with the parts of marketing that build trust. Emotional intelligence, cultural awareness, tone, humor, empathy, and genuine understanding are difficult for it to replicate. It doesn’t truly grasp brand positioning, long-term thinking, or commercial judgment, and it can’t make ethical decisions in any meaningful way.

It can copy patterns, but it doesn’t understand meaning. It can recreate tone, but it doesn’t feel it. It can build structure, but it doesn’t create identity.

That’s why so much AI content feels fine but ultimately forgettable. It does the job, ticks the boxes, answers the question, follows SEO rules, and hits the word count. But it doesn’t create a connection that turns traffic into trust, and trust into customers.

The biggest risk with AI in SEO isn’t penalties or algorithm changes. It’s gradual brand dilution. Over time, content becomes more neutral, more generic, and less distinctive.

Visibility may stay the same, but identity weakens. Traffic grows, but loyalty doesn’t. Performance looks healthy, but trust doesn’t compound.

Effectively using AI in SEO requires role clarity. Let AI handle the structure and scale, but keep meaning firmly in human hands.

AI is well-suited to researching, analyzing, clustering, outlining, drafting frameworks, data processing, repetitive optimizing, and detecting patterns. These are process-driven tasks where automation adds real value.

However, everything that defines the brand and the relationship with the audience — voice, tone, storytelling, personality, trust building, emotional connection, commercial messaging, ethical judgment, and real audience understanding — should remain a human endeavor.

AI can help you build faster, but it shouldn’t decide what you’re building. It supports the process, but the design still belongs to you.


If you don’t define your brand voice, AI will default to something neutral and generic. That doesn’t happen because the technology is broken. It happens because you haven’t given it anything clear to work with.

Before using AI for content, clarify your brand voice, your audience, and your positioning.

Many people assume better prompts can fix weak content. But prompts, no matter how detailed, don’t replace thinking, brand clarity, audience understanding, or positioning.

You can write the most detailed prompt in the world, but if your brand identity is fuzzy, the output will still be fuzzy. AI amplifies whatever you input, whether that’s clarity or chaos. There’s no middle ground.


Here’s what works in the real world and not just in tool demos.

Google doesn’t care whether content is AI-generated. It evaluates whether the content is useful, helpful, original, trustworthy, and valuable.

Low-quality human content gets punished. Low-quality AI content gets punished. High-quality content wins, regardless of who or what created it.

The myth that “AI content gets penalized” misses the point. What actually gets penalized is bad content, and AI simply makes it easier to produce bad content faster.

The brands that will lead SEO over the next few years won’t be the ones with the biggest AI tech stacks. They’ll be the ones that combine human strategy with AI efficiency, clear positioning with scalable systems, and strong brand voice with intelligent automation. They’ll use AI to move faster, but not to think for them.

Brands with clarity and identity will strengthen their position. Brands without them will simply become louder without standing out.


Every once in a while, a product launch doubles as a marketing masterclass. Recently, Selena Gomez’s Rare Beauty released a new fragrance, and it wasn’t just the scent that captured attention. It was the bottle. Designed with accessibility in mind, the easy-to-use packaging quickly became the story, sparking conversations and praise from accessibility advocates and consumers alike.

The takeaway is hard to miss. An inclusive design decision became the campaign itself, delivering more cultural impact than any ad spend could buy. For marketers, the lesson is equally clear: accessibility drives loyalty, enhances brand reputation, ensures compliance, and acts as a measurable growth driver.

Rare Beauty’s commitment to accessibility wasn’t a one-off. From packaging to pricing to its ongoing mental health advocacy, the brand has consistently embedded inclusivity into its DNA. That authenticity matters. Consumers can tell the difference between a stunt and a strategy, and they reward brands that lead with values.

And Rare Beauty isn’t alone. Across industries, leading brands are increasingly surfacing accessibility as a differentiator, not a footnote. Apple has consistently highlighted accessibility features as part of its core product storytelling, positioning them as innovation rather than accommodation. Microsoft has done the same by showcasing inclusive design in mainstream campaigns, including adaptive gaming products that reframed accessibility as a driver of creativity and connection. In fashion and retail, brands like Tommy Hilfiger and Unilever have brought adaptive design into the spotlight, integrating accessibility into product launches and brand identity rather than siloing it as a niche offering.

According to studies from Edelman and McKinsey, 73% of Gen Z choose to buy from brands they believe in, and 70% say they try to purchase products from companies they consider ethical. These aren’t fringe preferences; they’re mainstream expectations that can redefine how marketers approach building trust and growth with their audiences.

More than 1.3 billion people globally live with a disability, and together with their friends and family, they control over $18 trillion in spending power, according to the Return on Disability Group. For marketers, this isn’t just about compliance. It’s about growth, reputation, and building genuine trust in one of the world’s largest and most passionate consumer groups. That passion translates to powerful advocacy.

In discussions with AudioEye’s A11iance Team, a group of individuals with disabilities who regularly share feedback on real-world accessibility experiences, one member stated, “If I find a website that works and works very well for me, I will always recommend it to friends and family because I want people to have the same experience that I have.”

As another A11iance Team member, Maxwell Ivey, put it, “The cheapest form of advertising is word of mouth, and people with disabilities can have some of the loudest voices when we find people willing to make the effort. Because it’s that sincere effort over time that really counts with us.”

When accessibility becomes part of the customer experience, it creates something money can’t buy: trust and loyalty that scale through advocacy. But the opposite is also true. In a survey of assistive technology users, 54% said they don’t feel eCommerce companies care about earning their business.

Most brands are still competing for the same oversaturated demographics while overlooking this opportunity hiding in plain sight. In doing so, they’re leaving loyalty, advocacy, and revenue on the table.

Here’s where many brands stumble: accessibility usually stops at the shelf. Marketers invest heavily in packaging, store displays and product design, while digital experiences, the first and often primary touchpoint for customers, lag behind.

As accessibility-led design continues to earn attention, loyalty and earned media, the gap between physical product innovation and digital experience has become harder to ignore.

AudioEye’s 2025 Digital Accessibility Index found an average of 297 accessibility issues per web page detectable by automation alone. Each one represents friction in the customer journey, a conversion lost, or a compliance risk under frameworks like the Americans with Disabilities Act (ADA) and the European Accessibility Act (EAA).

Just as no campaign would launch without a brand review or legal check, no digital touchpoint should go live without an accessibility review.

Too often, accessibility is treated as a risk to manage instead of an advantage to leverage. The marketers who win will be the ones who flip that script. Here are four actions to start with.

Don’t hide it, lead with it. Brands like Rare Beauty have proved that inclusive design is the story. Build campaigns where accessibility isn’t a footnote but the differentiator that captures attention and loyalty.

Accessibility shouldn’t sit off to the side. Make Web Content Accessibility Guidelines (WCAG) alignment part of your brand guidelines, right alongside typography, logos and tone of voice. When accessibility is codified, it becomes second nature across every campaign.

Marketers are storytellers, and numbers seal the story. Track accessibility improvements such as fewer user-reported barriers, higher accessibility scores and fixes like improved alt text, color contrast or form usability. Connect those metrics to existing business outcomes like conversion, reach, and sentiment to show how accessibility drives ROI, not just compliance.
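One of the fixes named above, color contrast, is easy to measure. The sketch below is a minimal illustration, assuming colors arrive as `#RRGGBB` hex strings and using the WCAG 2.x relative-luminance and contrast-ratio formulas. The function names are hypothetical and are not part of any particular accessibility tool.

```python
def relative_luminance(hex_color: str) -> float:
    """Return the WCAG 2.x relative luminance of an sRGB color like '#RRGGBB'."""
    value = hex_color.lstrip("#")
    # Scale each 8-bit channel to the 0..1 range.
    channels = [int(value[i:i + 2], 16) / 255 for i in (0, 2, 4)]
    # Undo the sRGB gamma curve, as specified in the WCAG 2.x definition.
    r, g, b = [
        c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4
        for c in channels
    ]
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground: str, background: str) -> float:
    """Return the contrast ratio between two colors (1.0 up to 21.0)."""
    lum_a = relative_luminance(foreground)
    lum_b = relative_luminance(background)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """Check against the WCAG AA thresholds: 4.5:1 for body text, 3:1 for large text."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(foreground, background) >= threshold
```

Run against a brand palette, a check like this gives a pass rate that can be tracked over time alongside conversion and sentiment. It covers only one of the many WCAG criteria, so it supplements a full accessibility review and does not replace one.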

Just as you’d never risk brand safety in ad placements, don’t risk it in your digital touchpoints. Every update, seasonal campaign, or product drop should be monitored for accessibility. Trust and reputation are too valuable to leave exposed.

Rare Beauty’s fragrance launch proved something powerful: when you lead with accessibility, the story writes itself. The loyalty builds authentically, and the momentum flows naturally.

But here’s the opportunity: most brands still don’t get it. They’re treating accessibility as a compliance checkbox instead of the growth strategy it really is.

For marketers, that’s the wake-up call. Accessibility builds loyalty. It enhances brand reputation. It keeps your brand compliant. And it drives measurable growth across marketing efforts.

Rare Beauty showed how accessibility can capture attention at the shelf. The next opportunity is making sure it carries through online. Because when every touchpoint welcomes everyone, every campaign maximizes its impact.

We built Gloomin to make screen recording feel simple again: fast, lightweight, and free from the clutter of heavy video tools. Gloomin is a Chrome-first tool that helps you record your screen, capture screenshots, annotate, blur, make quick edits, and share instantly. There are no complex timelines or setup. Just hit record, explain visually, and move on. It’s designed for everyday async communication, not video production.

Although the Steam Machine has yet to be released, the new Valve hardware has sparked quite a bit of discussion among gaming enthusiasts. While some believe it will end up being a niche system, others believe Valve's gaming system could send the console market into an upheaval, especially if it is priced right. With the current RAM prices, however, it remains to be seen how Valve will handle this critical element (and its release date), and with things unlikely to improve anytime soon, it's looking less likely that the system could be released within the price range of a traditional […]

Read full article at https://wccftech.com/steam-machine-biggest-competitor-playstation/

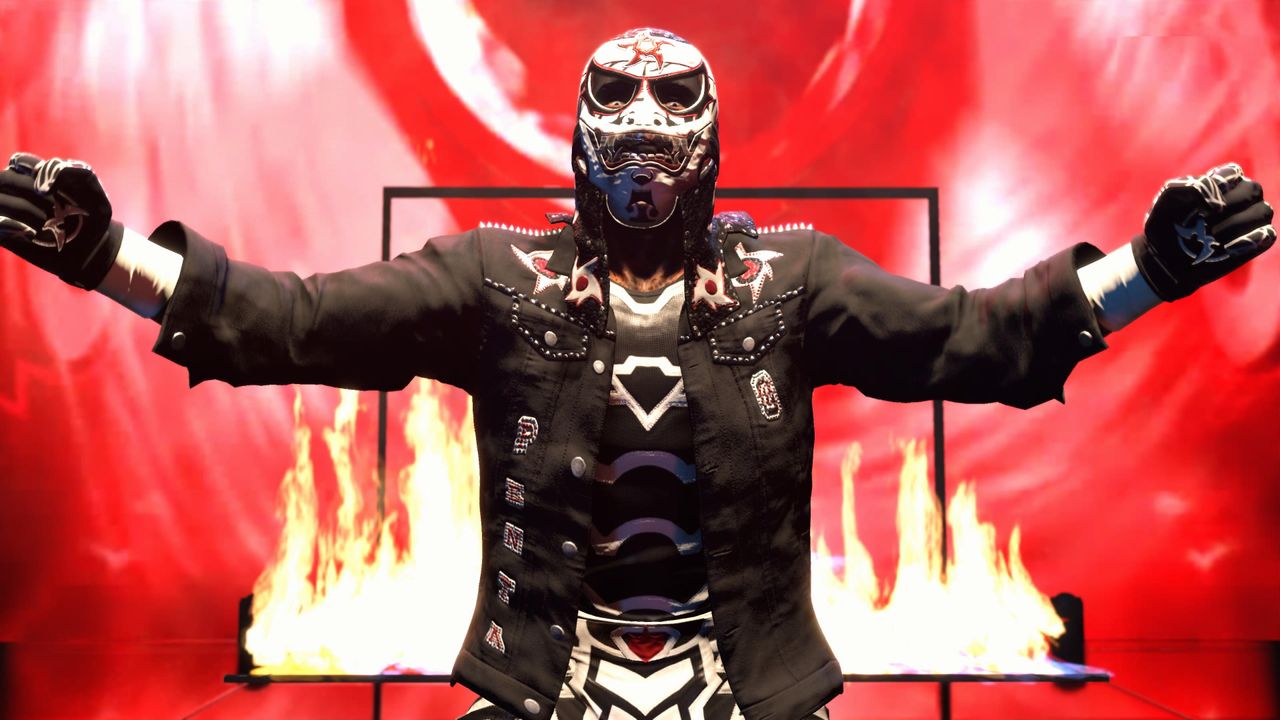

Like many of the wrestlers the franchise lets you play as, the WWE 2K games have been on top of the world, down and out, and everything in between. Last year's WWE 2K25 was not a career high point as the game focused heavily on The Island, a new online hub in the vein of NBA 2K's The City, to the exclusion of almost everything else. Thankfully, as I described in my hands-on impressions, WWE 2K26 seems to be spreading the love a bit more with new features. Does WWE 2K26 light a fire under the franchise? Or is it […]

Read full article at https://wccftech.com/review/wwe-2k26/

Capcom releases its first PC patch for Resident Evil Requiem

Capcom has officially released its first PC patch/update for Resident Evil Requiem. This update includes stability fixes that make the game less likely to freeze or crash on certain hardware configurations. It also adds performance optimisation for RTX 40 and RTX 50 series […]

The post Resident Evil Requiem PC patch optimises RTX 40/50 performance appeared first on OC3D.

Ubisoft confirms that more than three Assassin’s Creed games are in development

It’s official: Ubisoft has confirmed that Assassin’s Creed Black Flag Resynced is real. Ubisoft’s worst-kept secret is finally official. The Assassin’s Creed series is finally returning to the seven seas. For now, Ubisoft has only released the following statement and the artwork below. […]

The post Ubisoft officially confirms Assassin’s Creed Black Flag Resynced appeared first on OC3D.

Today, Bloomberg reports an indirect threat to the Nintendo Switch 2 console from the ongoing memory storage crisis. As regular Wccftech readers are probably aware, the global rush to build artificial intelligence hardware has brought major increases in the price of NAND flash memory: NAND contract prices are forecast to surge by up to 90% this quarter compared with the previous three-month period, when prices had already spiked by over 30%. This is an issue for the Nintendo Switch 2 because of its limited storage. The hybrid console only has 256GB of […]

Read full article at https://wccftech.com/switch-2-nintendo-games-vehicle-third-parties/

Apple’s low-cost iPhone launch strategy lived on with the arrival of the iPhone 16e, and the company is announcing the iPhone 17e just a year later. Naturally, to save on production costs as much as possible and in light of the DRAM shortage, there isn’t expected to be much of a cosmetic difference, but you might be interested in what’s under the hood. Here’s everything you need to know about the iPhone 17e’s launch, features, and specifications.

Design and display

Unfortunately, those looking forward to the Dynamic Island change on the iPhone 17e will be disappointed, […]