Your car with Google built-in is about to get smarter, thanks to Gemini

Thanks to deep integrations with both your vehicle and your apps, Gemini in cars with Google built-in will help drivers do more safely while still focusing on the road.

Sundar Pichai is on the cover of Time magazine for its 100 most influential companies issue for 2026.

Google is filling a key measurement gap between awareness and consideration, giving advertisers a clearer view of how their brand is actually perceived — not just remembered.

What’s new. Google Ads has introduced a new “Association” metric within Brand Lift Studies. Advertisers can define a concept, category or attribute, and Google will ask users a survey-style question: which brands they associate with that specific idea.

How it works. Instead of measuring simple recall, the metric evaluates whether audiences connect your brand to a desired positioning. That could mean “premium,” “sustainable,” or even a product category — offering a more nuanced read on brand perception.

Why we care. Google is giving you a way to measure brand positioning, not just awareness or recall. The new Association metric helps determine whether campaigns are actually shaping how consumers perceive a brand — a critical step between being known and being chosen. It also enables more strategic optimization of creative and messaging, especially for brands trying to own specific attributes or categories.

Between the lines. Brand Lift has traditionally focused on awareness, recall and consideration. Association sits in between, helping advertisers understand whether their messaging is shaping how people think about the brand, not just whether they recognize it.

The catch. There’s still a constraint: advertisers can only select three Brand Lift metrics per study, so adding Association means making trade-offs with existing KPIs.

The bottom line. Association gives advertisers a more strategic lens on brand building — measuring not just visibility, but whether campaigns are landing the intended message.

First seen. This update was first spotted by Google Ads expert Thomas Eccel, who shared it on LinkedIn.

Reddit is quickly becoming a powerful platform shaping how people discover and perceive brands. As AI search engines increasingly surface Reddit threads and comments, these conversations now influence visibility.

To understand this shift, I analyzed 117 SaaS brands on Reddit. People reveal what they really think there, which doesn’t always match polished marketing.

As communities shape brand perception, Reddit is no longer optional.

Here’s my analysis, plus how you can use Reddit to your advantage.

My analysis of 117 brands across the SaaS industry started with identifying the verticals to address:

From there, I created a Google sheet with the brand names for each vertical. Then, I mapped out the following details for each brand:

Across all 117 brands, I analyzed over 300 Reddit threads, including brand mentions, sentiment, community engagement, and brand participation.

Let’s dive into the key findings.

One thing became clear early on: people respond to people, not corporate brands.

Brands run by moderators who were helpful, honest, and non-promotional were received more favorably than those using a polished, corporate tone. Redditors tended to ignore or downvote obvious marketing copy.

In general, redditors don’t want to be marketed to. They want real opinions and real experiences.

As a result, peer recommendations felt more credible than brand messaging. When redditors asked questions or shared frustrations, the most authentic answers came from other users.

When brands stepped in with scripted or promotional responses, they often struggled to gain traction.

However, when brands answered directly, acknowledged limitations, and used conversational language, responses improved. In some cases, brand moderators even earned upvotes and thanks.

Redditors talk about brands, whether or not they’re present on the platform. In many cases, brands simply aren’t there.

Thirty of the 117 brands I analyzed have no Reddit presence. Another 23 are on Reddit, but their subreddits are abandoned.

In several instances, users asked direct questions like:

They received responses from other redditors sharing experiences, opinions, recommendations, and problems.

When brands aren’t there, the conversation continues without them. Over time, their reputation on Reddit exists outside the brand’s control.

Other negative outcomes can follow. When brands aren’t present, others can take their place.

In one instance, I found a community using a popular brand name that had nothing to do with the brand. This shows how easily brand presence can be shaped or misrepresented.

Redditors are already discussing your brand. The only question is whether you’re part of that conversation.

Reddit is an incredible source of unfiltered customer insights.

If you want to know what drives people away, what people value, and how people compare tools, you’ll find the answers on Reddit.

Here are some ways Reddit helps with customer research.

On Reddit, you’ll find people asking questions and sharing:

Reddit users tend to say exactly what they think. This kind of honesty is hard to find anywhere else.

These insights are critical for improving SaaS products. Traditional feedback methods don’t always capture these comments — but Reddit does.

Your Reddit community is a good place for happy customers to advocate for your brand. For example, this Reddit post by Monday shares a brand ambassador program.

In the comments, some brand advocates share insights into their experience, helping elevate the post.

When discussing some community-led brands, redditors often highlight solutions to problems and help fill brand gaps. For example, I noticed users helped each other with troubleshooting, sharing fixes, and recommending integrations.

In some cases, these communities were almost fully self-sustaining, requiring little brand involvement.

Across the topics I reviewed, redditors often expressed negative sentiment about pricing and suggested alternatives, especially for enterprise SaaS tools.

As a result, SaaS brands are often associated with soaring costs and limited pricing transparency, which can hurt perception. When users highlight competitor features, they surface gaps and alternative tools to consider.

Reddit attracts people who discuss how they use software. In my analysis, I observed that users shared:

These posts and comments give brands insight into real use cases they can use to improve products.

Reddit is no longer a side conversation. It’s where brand perception is shaped in real time.

Across the 117 brands I analyzed, conversations are happening on Reddit — even when the brand isn’t present. Increasingly, those conversations feed into AI search, influencing what people see, trust, and choose.

Smart brands shouldn’t ignore Reddit. They should track mentions, listen closely, show up where it matters, and treat Reddit as both a reputation channel and a product insight engine.

Why does it take Noctua so long to release Chromax Black fans? Noctua is preparing to release its Chromax Black NF-A12x25 G2 fans, which will arrive around 10 months after the release of its standard brown/beige versions. Ahead of this release, Noctua has decided to give its fans a glimpse behind the curtain and explain […]

The post Noctua explains – Why do their Chromax Black fans take so long? appeared first on OC3D.

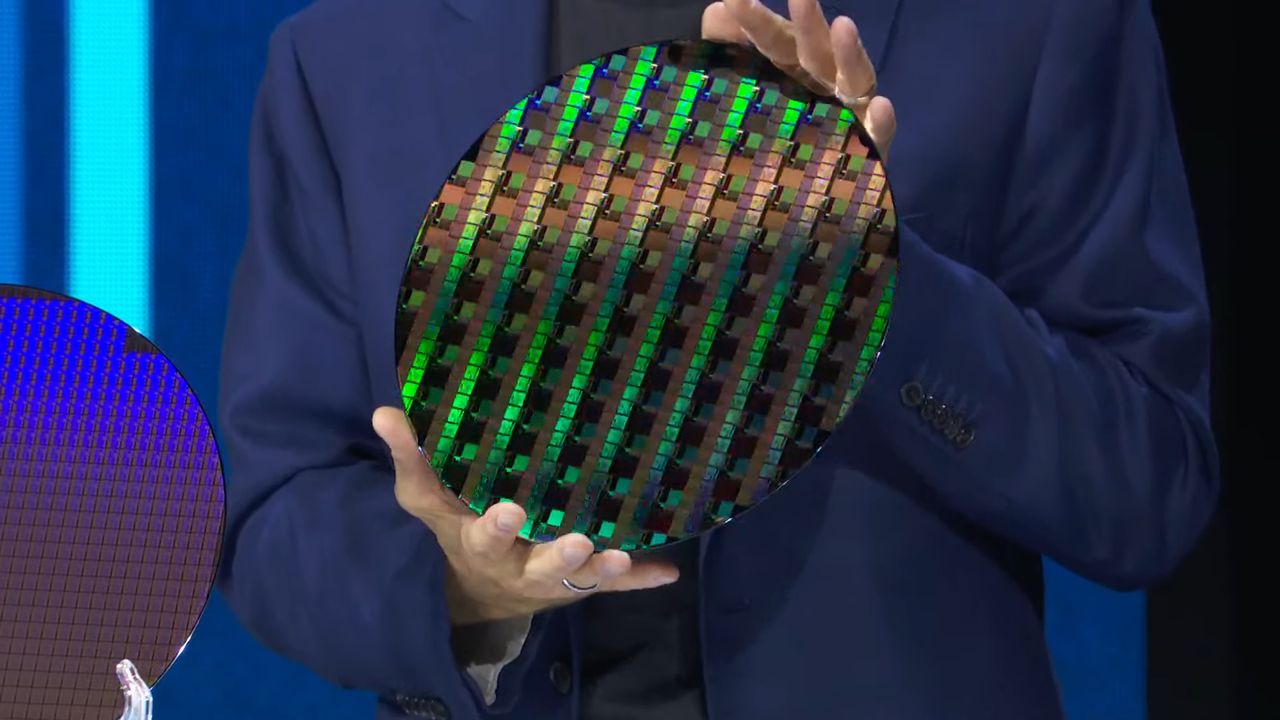

Another Intel Wildcat Lake CPU arrives on PassMark, showing equivalent performance to its smaller sibling.

PassMark Reveals Intel Core 5 330 Delivers 4,215 Points in Single and 14,947 Points in Multi-Core Tests

Some of the Intel Wildcat Lake CPUs have now appeared on popular benchmarking platforms like PassMark and Geekbench. We first saw a glimpse of the only 1+4 Core CPU, Core 3 304, on Geekbench, and then the Core 5 320 appeared a few days ago on the popular platform PassMark. We saw the Core 5 320 competing with the Apple A19 Pro in MT, but trailing in single-threaded […]

Read full article at https://wccftech.com/intel-core-5-330-spotted-on-passmark-for-the-first-time/

NVIDIA has revealed the games it plans to add to the GeForce NOW cloud streaming library for the month of May 2026, but what's arguably more significant this month is how NVIDIA is expanding the list of games that are classed as RTX 5080-ready. Beginning today, "across nearly the entire GeForce NOW Ready-to-Play library," players subscribed to GeForce NOW Ultimate can play their games with the power of an RTX 5080 behind them. It's a massive expansion of the list of RTX 5080-ready games, which had previously expanded in trickles of a select few games getting added to GeForce NOW […]

Read full article at https://wccftech.com/nvidia-geforce-now-16-games-may-2026-nearly-entire-ready-to-play-library-rtx-5080-ready/

People find new ways of building PCs, but this one caught our attention as fitting regular-sized components in a CRT chassis is challenging.

Redditor Builds a Whole PC Inside a CRT Monitor Using Desktop Parts; Replaces Display With a Laptop Panel and Deploys Several Case Fans

Decades-old CRT display just became a fully functional computer, thanks to u/Discipline_Great, who, even though he couldn't revive the old CRT monitor, had some other plans. He says he picked it up from an e-waste, and it was already broken. So, he decided to turn it into a PC building project, which appears challenging, […]

Read full article at https://wccftech.com/modder-turns-crt-monitor-into-a-full-pc-build/

Benchmarks of Intel's upcoming and fastest gaming handheld SoC, the Arc G3 Extreme, have been leaked, surpassing the Ryzen Z2 by 25%.

Intel Packs Its Strongest Battlemage GPU, & 14 CPU Cores Inside the Arc G3 Extreme Gaming Handheld SoC

We recently covered Intel's first Arc G3 gaming handheld, which has been listed by online retailers. While the retailer listing was void of details for the SoC itself, we now have more specs and even benchmarks of the upcoming chip & they look phenomenal. Starting with the CPU, the Intel Arc G3 Extreme is going to be the top offering […]

Read full article at https://wccftech.com/intels-arc-g3-extreme-handheld-chip-crushes-ryzen-z2-extreme-benchmark-leak/

Yesterday, after reports that it would be shutting down, Greedfall and Steelrising makers Spiders, a studio that had survived in the video game industry for nearly two decades, officially closed its doors after its parent company Nacon failed to find a buyer after Spiders had filed for insolvency. While Nacon itself and three more of its subsidiaries have all filed for insolvency, Nacon continues to truck onward and reveals its Nacon Connect event will indeed return in May 2026, as it previously promised when the event was postponed earlier this year. The showcase event will premiere on May 7, 2026, […]

Read full article at https://wccftech.com/nacon-connect-set-may-2026-amidst-studio-closure-concerns/

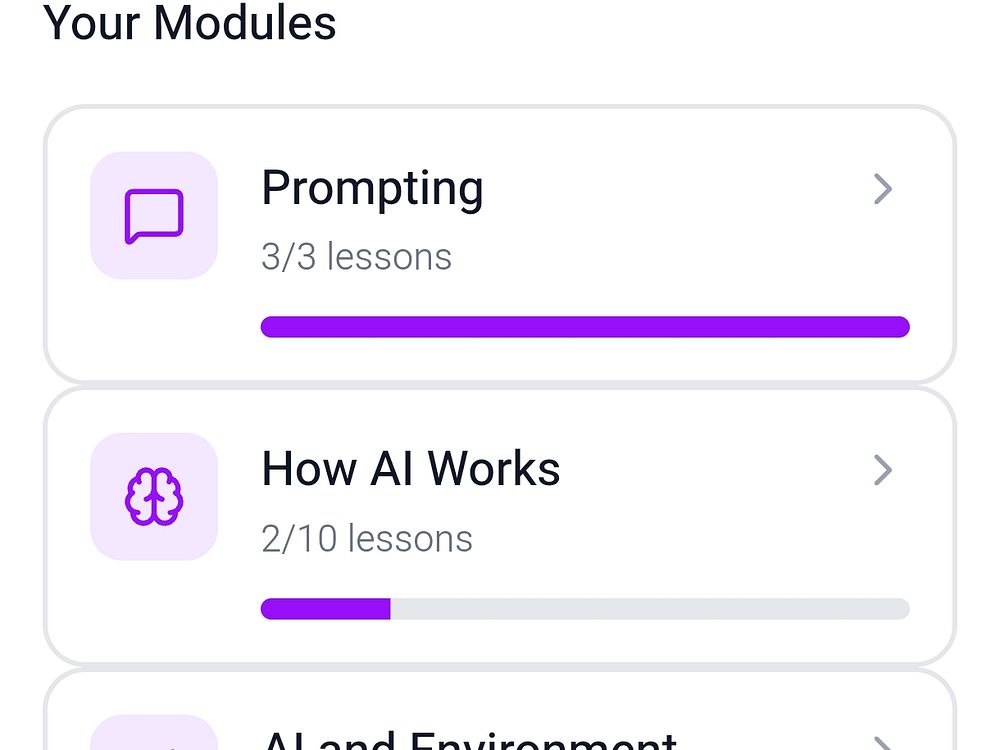

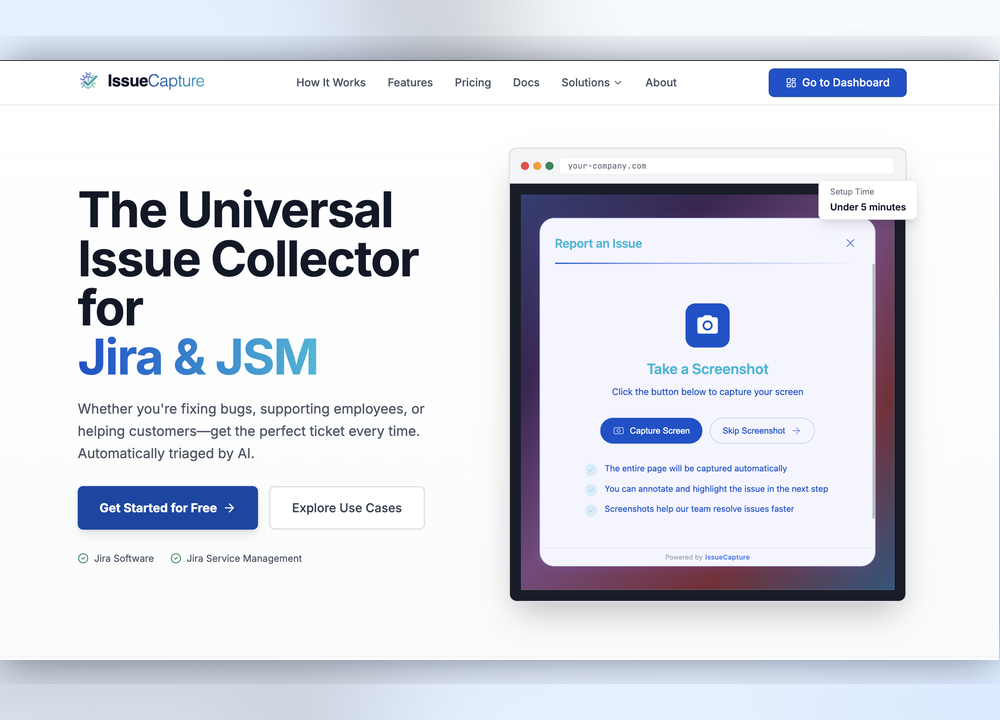

Spaiky is like Duolingo for AI learning: it teaches AI literacy through bite-size, gamified lessons on your phone. Explore modules like Mastering AI Prompts and How LLMs Work, and learn in five minutes a day with quizzes, analogies, and no jargon. Earn XP, keep streaks, and collect trophies while tracking progress across 80+ interactive lessons. The app is free to start and is available now on Android, with iPhone coming soon.

The Spool List is a peer-to-peer fabrication marketplace that connects buyers with verified makers in 3D printing, laser cutting, CNC, embroidery, metalwork, and more. Post a job, get fixed-price quotes or open bids, and pay securely through escrow. Track progress in real time, message your maker, and release funds only when satisfied. The platform supports card and USDC payments, has zero buyer fees, and includes dispute resolution, making it easy to source anything from a single prototype to small-batch production.

In celebration of Route 66’s 100th anniversary, Google Maps is rolling out two new ways to help you explore it, virtually or IRL.

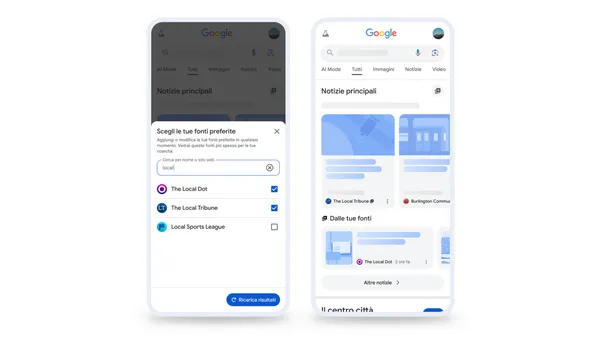

Preferred Sources is now rolling out globally in all supported languages, giving users more control over the news they see on Search.

Built for the next generation of Search, AI Max for Shopping campaigns helps retailers reach shoppers the moment discovery begins.

Upgrade to AI Max to simplify your workflow and access our most advanced tools.

As AI Max turns 1, we’re helping you capture even more opportunities in the expanding Search universe.

Google’s Preferred Sources now supports all languages, not just the English language. “Preferred Sources is now rolling out globally in all supported languages,” Google wrote on its blog this morning.

“This feature gives you more control over the news you see on Search by letting you choose the outlets and sites you want to appear more often in Top Stories,” Google added.

In December, Google rolled out Preferred Sources globally, but only in English. Now it supports all languages as well.

Stats. Google added some interesting data including:

Preferred Sources. Preferred Sources lets searchers star publications in the Top Stories section of Google Search, and Google uses that signal to show more stories from those starred outlets. The feature entered beta in June, rolled out in the U.S. and India in August, and is now expanding globally.

How it works. You click the star icon to the right of the Top Stories header in search results. After that, you can choose your preferred sources – assuming the site is publishing fresh content.

Google will then start to show you more of the latest updates from your selected sites in Top Stories “when they have new articles or posts that are relevant to your search,” Google added.

More details can be found over here.

Why we care. Traffic from Google Search is hard to earn, and getting your loyal readers to make your site a preferred source can help. Google said those users are twice as likely to click, which can drive more traffic.

So add the preferred source icon to your site and encourage users to sign up. You can make Search Engine Land a preferred source by clicking here.

The difference between a 2% margin and a 20% margin increasingly comes down to whether you’re renting attention or owning the answer.

For years, search rewarded the ability to buy visibility. That model is weakening.

As AI systems increasingly resolve queries without a click, the value shifts from traffic acquisition to answer formation.

When you move from buying clicks to engineering answers (i.e., structuring content so it can be surfaced, cited, and trusted by AI systems), you change what you own. Instead of renting placement, you build answer equity: durable inclusion in the outputs that shape decisions.

The goal isn’t to turn off paid search. It’s to stop relying on it as your primary source of demand. Over time, this can lower acquisition costs and reduce volatility, because you’re not competing for every impression.

To operationalize this shift, you need a content structure that maximizes what AI systems can extract. Think of it as an “atomic sandwich.”

An atomic sandwich content structure shifts the focus from chasing traffic to maximizing intent density. Here’s how:

Most organizations treat their search budget like a high-interest payday loan.

You keep pouring cash into the paid bucket for that immediate hit of traffic, and it feels like you’re winning.

But the moment you stop feeding the meter, your brand disappears.

For many organizations, this isn’t just marketing inefficiency — it’s an organizational risk.

In the emerging Answer Economy, your rented audience is evaporating. Data from Seer Interactive (Sept 2025) shows paid CTR on informational queries has dropped 68% when Google’s AI Overviews are present.

You’re not just paying for clicks. In many cases, your paid traffic contributes to awareness that AI systems can later satisfy without requiring a click.

The “box” has changed.

Here’s the structural leak in your balance sheet: to survive 2026, you must stop buying a crowd and start engineering the answer.

If your brand isn’t among the trusted sources behind the machine’s answer, your visibility — and influence — shrinks significantly.

We’ve moved from a search engine that directs users to a generative engine that validates information. Every dollar you spend on ads to cover a lack of E-E-A-T is money you’re burning.

The data is clear: appearing in search results is no longer a viable model on its own.

The goal is no longer just to rank in search, but to be consistently included among the sources AI systems rely on.

Without trust, you’re paying for ghost impressions.

In the old box, you could survive by being loud. In the new box, you survive by being certain.

Most companies are in organizational denial.

You see the cost of rented clicks rising and quality falling, but you’re too afraid to stop because you’ve neglected your information architecture and have no foundation. That’s a balance sheet liability.

Use this checklist in your next review to find where your Answer Equity is leaking.

Stop rewarding word count. Every piece of content must deliver a “meat” layer — information gain a retriever can’t synthesize from the rest of the web. That’s how you reclaim your margins.

Dig deeper: Information gain in SEO: What it is and why it matters.

Stop treating schema as a technical extra. It’s your trust score on the digital exchange. Ensure your authors have strong provenance so AI retrievers can instantly crawl and confirm your expertise.

Dig deeper: Decoding Google’s E-E-A-T: A comprehensive guide to quality assessment signals.

If your traffic drops but lead quality holds, you’re winning. Focus on users who bypass the summary because they need the deep, forensic expertise only you provide.

Dig deeper: Measuring zero-click search: Visibility-first SEO for AI results.

The shift from renting an audience to owning the answer is the most significant strategic pivot your organization will make this decade. It moves you from a marketing expense to a balance sheet asset.

The paid trap offers a temporary high but leads to a fiscal dead end. Every dollar spent there is consumable — used once and gone when the auction ends.

When you move that capital into your information infrastructure, you stop paying for the privilege of being ignored. You start building a digital entity that owns its facts, earns trust, and controls its future in the Answer Economy.

Your first step: don’t boil the ocean.

Take your top-performing paid landing page and run the seven-point health check. If it’s a “zombie fact” environment, engineer information gain back into the page.

Stop asking for a ranking report; start asking for an entity audit.

The 2026 organization isn’t defined by how much it spends to rent an audience, but by how much it proves it owns the answer.

You have the blueprints. You have the data. Now stop funding the payday loan and start building answer equity.

Across 90 prompts we tested in ChatGPT, commercial prompts triggered web searches 78.3% of the time. Informational prompts did so just 3.1%.

That gap changes what you should write if you want to appear in a ChatGPT answer.

ChatGPT doesn’t pull every response from the same place. Some answers come from training data; others use live web search — a behavior called query fan-out. The model expands your prompt into multiple background searches, then retrieves and synthesizes across those subtopics. If your page isn’t on those branches, it won’t be pulled in.

So the question is no longer just how to rank. It’s which pages open the fan-out door in the first place.

In our sample, informational pages didn’t. Read on to discover where the system went instead.

We tested 90 prompts across three industries: beauty, legaltech/regtech, and IT. We analyzed prompt intent, downstream query expansion, and the intent those expansions reflected.

Here’s the breakdown and the core finding: most queries aligned with commercial intent, not purely informational prompts.

Query fan-outs change the content game because the system isn’t limited to the literal prompt.

It expands the request into multiple background searches, then retrieves and synthesizes across those subtopics.

Fan-outs trigger parallel web searches tied to the initial prompt, creating opportunities for retrieval, mention, and link citation.

Multi-query expansion is a core design pattern in modern generative search systems. Google describes AI Mode this way: it breaks a question into subtopics, searches them in parallel across multiple sources, then combines the results into a single response.

That raises a strategic SEO question: should you invest more in top-of-funnel educational content, or in lower-funnel comparison, shortlist, and recommendation content?

This experiment framed that problem.

The objective was to test, across selected industries, where fan-out appears by intent category: informational, commercial, transactional, or branded.

The initial hypothesis was direct: informational prompts wouldn’t trigger fan-out, while commercial prompts would, and those fan-outs would stay at the same funnel level or move lower.

We found that ChatGPT-generated fan-outs are overwhelmingly associated with commercial intent.

Disclaimer: This experiment measures observed prompt expansion behavior in ChatGPT. Google AI Mode is cited only as context to show multi-query expansion as a broader pattern in generative search, not as proof of ChatGPT’s internal architecture.

The core sample includes 90 numbered prompts, heavily weighted toward informational intent.

| Prompt intent | Prompts | Share of sample | Prompts with fan-out | Fan-out rate |
| --- | --- | --- | --- | --- |
| Informational | 65 | 72.2% | 2 | 3.1% |
| Commercial | 23 | 25.6% | 18 | 78.3% |
| Branded | 1 | 1.1% | 0 | 0.0% |
| Transactional | 1 | 1.1% | 0 | 0.0% |

The sample skews heavily toward informational prompts, with some commercial ones and minimal branded and transactional queries.

We structured the experiment around the sectors in the brief: beauty/personal care, legaltech/regtech, and IT/tech.

The main finding is clear.

Out of 90 prompts, 20 triggered fan-out. Of those, 18 were commercial and 2 informational.

Informational prompts made up 10% of fan-out triggers (2 of 20). When they did trigger expansion, they were rewritten into more evaluative, solution-seeking subqueries.

In other words, 90% of fan-out-triggering prompts in the core sample came from commercial intent.

The contrast is stronger than the raw totals suggest. Commercial prompts triggered fan-out 78.3% of the time; informational prompts did so just 3.1%.

This supports the working hypothesis: in this sample, fan-out was overwhelmingly a commercial phenomenon.

Those 20 prompts produced 42 fan-out queries — an average of 2.1 per triggered prompt.
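The two percentages quoted here answer different questions, and it is easy to mix them up. The short sketch below recomputes both from the counts in the table, plus the average number of fan-out queries per triggered prompt. The variable and function names are illustrative only; the counts are the ones reported in this article.

```python
# Counts copied from the table in this article: (prompts, prompts with fan-out).
SAMPLE = {
    "Informational": (65, 2),
    "Commercial": (23, 18),
    "Branded": (1, 0),
    "Transactional": (1, 0),
}
FAN_OUT_QUERIES = 42  # total fan-out queries reported above


def pct(part: int, whole: int) -> float:
    """Return part/whole as a percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


total_prompts = sum(prompts for prompts, _ in SAMPLE.values())
total_triggered = sum(triggered for _, triggered in SAMPLE.values())

# Fan-out rate: of the prompts with a given intent, how many triggered fan-out?
fan_out_rate = {intent: pct(t, p) for intent, (p, t) in SAMPLE.items()}

# Share of triggers: of the prompts that triggered fan-out, how many had this intent?
share_of_triggers = {
    intent: pct(t, total_triggered) for intent, (_, t) in SAMPLE.items()
}

avg_queries = FAN_OUT_QUERIES / total_triggered

for intent in SAMPLE:
    print(f"{intent:<14} rate={fan_out_rate[intent]:>5}%  "
          f"share={share_of_triggers[intent]:>5}%")
print(f"{total_triggered} of {total_prompts} prompts triggered fan-out; "
      f"{avg_queries:.1f} queries per triggered prompt")
```

The fan-out rate divides by the number of prompts with that intent, while the share of triggers divides by the 20 prompts that triggered fan-out. That is why commercial prompts show both a 78.3% rate and a 90% share.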

Of those 42 fan-out queries:

Even when a prompt triggered expansion, the system usually shifted toward comparison, product evaluation, feature filtering, shortlist creation, or brand-specific exploration — not broad educational discovery.

The experiment used 90 prompts across three industries, mostly informational, with a smaller set of commercial prompts and minimal branded and transactional queries.

In the analysis, we have:

The analysis then followed three steps:

That produced two distinct but complementary views:

That distinction matters: the first shows which prompts open the fan-out path, while the second shows where the system goes once it opens.

The cleanest interpretation is that, in this sample, fan-outs behave less like open-ended topic expansion and more like assisted decision support.

Commercial prompts almost always opened the door.

Once they did, fan-outs usually stayed commercial.

The system expanded into comparisons, feature-based filtering, product lists, pricing-adjacent queries, and brand-specific evaluations.

A few examples make that concrete.

The two informational exceptions are even more revealing than the rule.

So, even when the prompt starts broad, fan-out often translates that breadth into a lower-funnel retrieval path.

The takeaway isn’t to stop writing informational content.

It’s this: informational content alone is unlikely to align consistently with fan-out expansion, at least in this dataset.

If your goal is visibility in AI answers tied to product selection, vendor discovery, or option narrowing, you need stronger coverage of pages and passages that match those downstream commercial branches.

That may include:

In practical terms, your content model shouldn’t be just ToFU or BoFU, but ToFU with commercial bridges.

A broad article can still help, but it should include passages the system can easily reformulate into decision-support subqueries.

A purely educational piece that explains a category without naming products, tradeoffs, features, use cases, pricing logic, or selection criteria is much less likely to align with the fan-out paths seen here.

Put simply: Don’t just answer the obvious question — anticipate the next evaluative step the system is likely to generate in the background.

This result is directional, not universal.

The next version of this experiment should isolate the question more aggressively and expand the dataset.

A follow-up should map triggered fan-outs back to specific content formats.

The goal isn’t just to confirm that commercial intent wins. It’s to identify which page templates and passage structures best cover the fan-out branches AI systems prefer.

I keep hearing people say AI understands their brand. It doesn’t. Let’s get that out of the way first.

What it does is pattern-match at scale. It compresses your positioning, product, proof, and tone into a bundle of signals it can retrieve and remix at speed.

Those patterns come from two places:

So “AI SEO” isn’t a new channel. It’s a new representation problem: which version of your brand gets encoded, retrieved, and repeated.

Most brands are already in the game. They’re just not playing with purpose.

Classic SEO was a library problem. You publish a URL. Google indexed it. A human searched and found it.

AI search is a conversation that stretches out the demand curve. Head terms still drive the majority of visibility, but, ever so slowly, more volume is moving into context-heavy prompts.

Your job is to be the most relevant match inside a model’s memory and retrieval pipeline.

Not by being ranked. But by being represented.

AI doesn’t run on opinions. It runs on associations.

Classic SEO competed for keywords. Then it shifted to entities. AI systems go one layer deeper. They turn entities into vectors.

Your brand becomes a coordinate in high-dimensional space. Close to some concepts. Distant from others. Pulled by whatever your content and mentions repeatedly associate you with.

If your brand is consistently associated with “enterprise analytics”, “real-time dashboards” and “data governance”, your vector lives near those clusters.

If your messaging sprawls into adjacent territory because someone got bored of writing about the same things, the vector spreads. Precision drops. The model still has a position for you. It’s just fuzzier, less confident, and easier to swap for a competitor with cleaner signals.
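To make the "vector spreads" idea concrete, here is a toy sketch using cosine similarity, a common way to compare embedding vectors. Everything in it is an assumption for illustration: the three axes, the mention vectors, and the averaging step are made up, and real embedding models use far more dimensions and do not necessarily position a brand this way.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: 1.0 means same direction, 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def mean_vector(vectors: list[list[float]]) -> list[float]:
    """Average several mention vectors into one brand position."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


# Hypothetical axes: [enterprise analytics, real-time dashboards, unrelated topic]
concept = [1.0, 1.0, 0.0]

# Toy mention vectors (invented): one brand stays on topic, the other drifts.
focused_mentions = [[0.9, 0.8, 0.0], [1.0, 0.9, 0.1], [0.8, 1.0, 0.0]]
sprawling_mentions = [[0.9, 0.8, 0.0], [0.1, 0.2, 1.0], [0.0, 0.1, 0.9]]

focused = cosine(mean_vector(focused_mentions), concept)
sprawling = cosine(mean_vector(sprawling_mentions), concept)

print(f"focused brand:   {focused:.2f}")
print(f"sprawling brand: {sprawling:.2f}")
```

In this toy setup, the brand whose mentions stay on the same two topics ends up closer to the target concept than the brand whose mentions wander onto the unrelated axis.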

Before you “fix AI SEO,” identify which layer your brand is failing on. The same tactics don’t work everywhere.

Your historical footprint. Press, blogs, documentation, reviews, every old thread on a forum you forgot existed.

You can’t fully control it.

But you can reduce fragmentation by finding and editing all possible past mentions (social profiles, directory listings, wikis, etc) to create a consistent identity across the internet.

Understand the training layer by asking an AI chatbot to describe your brand with web search turned off.

Your live surface area. Indexed pages, product feeds, APIs. This is where the traditional technical SEO work of crawling, indexing, and rendering matters most. It defines what the AI system can access for citations.

Understand the retrieval layer by running branded-intent and market-category-intent prompts daily using an LLM tracker and reviewing which sources are consistently cited.

That is the output seen in AI Overviews, AI Mode, ChatGPT, or wherever your brand gets reassembled in front of an actual customer. Your brand will be written into the answer only if it’s a must.

So ask yourself, what unique, quotable, additive content forces the LLM to mention you?

Understand the generation layer by using the same LLM tracker data, but reviewing brand mentions within responses and their semantic associations.

Think of these as the forces quietly shaping your representation across the layers.

AI systems merge different references to the same brand if it’s obvious they belong together.

Most brands don’t have one clear identity. They often have:

Humans merge that automatically. Models don’t. They consolidate by pattern, not intent. Every inconsistent self-reference is a vote for fragmentation.

Allow your brand to be written five different ways and you split your visibility signals five ways.
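One rough way to audit this is to count how many distinct spellings of your brand appear in your own self-references. The sketch below is only an illustration: the mention list is hypothetical, the normalization rules are an assumption, and it says nothing about how any AI system actually consolidates entities.

```python
import re
from collections import Counter

# Hypothetical self-references collected from a brand's own pages and profiles.
mentions = [
    "ExampleCo",
    "Example Co.",
    "example-co",
    "ExampleCo Inc",
    "EXAMPLECO",
    "ExampleCo",
]


def normalize(name: str) -> str:
    """Lowercase, drop a trailing legal suffix, and strip punctuation and spaces."""
    lowered = name.lower().strip()
    lowered = re.sub(r"\b(inc|llc|ltd)\.?$", "", lowered)
    return re.sub(r"[^a-z0-9]", "", lowered)


surface_forms = Counter(mentions)
canonical_forms = Counter(normalize(m) for m in mentions)

print(f"distinct spellings as written: {len(surface_forms)}")
print(f"distinct names after cleanup:  {len(canonical_forms)}")
```

A large gap between the two counts suggests the same identity is being written in several ways, which is the fragmentation described above.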

Models learn what appears together:

Repeat the right pairings, and the association strengthens. Be inconsistent, and it weakens. It’s genuinely that simple.

Models track who is being described, by whom, in what context.

Your own site is one layer. Third-party mentions are another. High-trust sources carry more weight.

Not because of “authority” in the classic SEO sense, but because they appear frequently inside reliable contexts in the training data and retrieval corpora. Similar outcome. Different mechanisms.

When generating answers, AI systems decide which information to use. That decision depends on clarity, relevance, uniqueness, and ease of extraction.

If key facts are buried in narrative copy, implied through metaphor, or scattered across sections, the model will simply pull from somewhere else.

On the other hand, if you repeat them, structure them, and make them explicit, you are more likely to be chosen by the model.

In your content, on-page and off-page, make the core entities unmissable. Your brand. Your products. Your categories. Your audience. Your differentiators.

Craft a clear, consistent, canonical positioning that the machine can’t misread by creating a canonical brand bio:

[Brand] is a [market category] for [audience] who need [use case], differentiated by [proof].

Then, honestly ask yourself if your answer could also describe your competition. Or better, ask AI that question. If the answer is yes, rewrite it until it’s unmistakably you.

Then roll out that positioning everywhere. On-page with “retrieval-ready” chunks, in structured data, in “sameAs” references, industry publications, partner sites, user reviews, community discussions, social posts.
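
As a rough illustration of the structured data and “sameAs” part of that rollout, the sketch below builds a schema.org Organization object in Python and prints it as JSON-LD. The brand name, URLs, and profile links are placeholders invented for the example, and the description simply reuses the canonical bio template.

```python
import json

# Hypothetical brand details: every value below is a placeholder.
brand = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "ExampleBrand",
    "url": "https://www.example.com",
    # The canonical brand bio, reused word for word wherever the brand is described.
    "description": (
        "ExampleBrand is a [market category] for [audience] "
        "who need [use case], differentiated by [proof]."
    ),
    # Profiles on other sites that refer to the same entity.
    "sameAs": [
        "https://www.linkedin.com/company/examplebrand",
        "https://github.com/examplebrand",
    ],
}

json_ld = json.dumps(brand, indent=2)
print(json_ld)
```

Keeping the description in one place and generating markup from it is one way to avoid the same bio drifting into several slightly different versions.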

Repeat key associations deliberately across pages until it feels excessive. Reduce unnecessary variation in terminology. Then the associations strengthen. Are reinforced. Compound.

Beware brand drift, where inconsistencies allow misrepresentations, and a lack of information allows hallucination to creep in. Police all the edges. Consolidate or kill the pages that introduce conflicting descriptions of your brand.

This is not about gaming AI. It is about reducing entropy.

If that sounds boring, good. The brands that win the AI era are not going to win it with cleverness. They are going to win it with discipline.

Because if answers are inconsistent across sources, your brand won’t be cleanly encoded. And the version of you that AI systems are quietly passing along to customers won’t be the one you intended.

If AI systems can’t confidently represent your brand, they will default to a safer option. Usually, it’s a competitor with cleaner signals. Not because that competitor is “better”. Because that competitor is easier for the machine to use.

AI doesn’t need to understand your brand perfectly. It needs to approximate it well enough to recommend you. Your job is to control that approximation through consistency, structure, and distribution.

Not by publishing more. By making your brand impossible to misunderstand.

Google is doubling down on AI-driven ads just as search behavior shifts toward conversational queries, giving advertisers more automation while trying to preserve control.

What’s new.

AI Max expands beyond Search: Now rolling out to Shopping campaigns and travel-specific formats, broadening reach across more advertiser types.

AI Brief (powered by Gemini): A new interface that lets advertisers steer AI using natural language inputs.

Text disclaimers + URL automation: Compliance-friendly updates to pair with automated landing page selection.

Why we care. Google is making AI Max a core layer across Search, Shopping and Travel, meaning automation will increasingly determine how ads are matched to user intent. This update expands reach into more conversational, high-intent queries that traditional keyword strategies miss, helping brands capture demand earlier in the journey.

At the same time, tools like AI Brief and new compliance features give advertisers more control over messaging and targeting, reducing the risk of fully automated campaigns feeling like a “black box.”

Shopping gets smarter. For retailers, AI Max for Shopping uses Merchant Center data to generate more adaptive ads that can respond to long-tail and exploratory queries, helping brands appear earlier in the discovery phase rather than only at the point of purchase. The rollout is positioned as a simple upgrade for existing Shopping campaigns, suggesting Google wants rapid adoption.

Travel gets consolidated. Travel advertisers get a consolidation play. Search Campaigns for Travel bring previously fragmented formats into a single interface with unified reporting and integrated AI Max capabilities. The move reduces operational complexity while reinforcing Google’s push toward centralized, AI-driven campaign management.

More control with AI Brief. The most notable addition is AI Brief, which attempts to solve a long-standing advertiser concern: lack of compliance control in automated systems. Advertisers can define messaging rules, specify which queries to prioritize or avoid, and shape how different audiences are addressed. The system then generates previews, allowing feedback before campaigns go live.

Automation meets compliance. Google is refining how traffic is directed to websites. Final URL expansion uses AI to select the most relevant landing page for each query, and the new text disclaimer feature ensures required legal messaging remains intact even when automation is active. This signals a push to make AI usable in more regulated industries without sacrificing compliance.

The bottom line. AI Max is evolving from a Search add-on into a foundational layer across Google Ads, combining automation, cross-format reach and advertiser input to adapt to a more AI-driven, conversational search landscape.

LG delivers ultra-sharpness with its 5K Hyper Mini LED 27GM950B monitor

LG has officially released its new UltraGear Evo AI GM9 (27GM950B) 5K Hyper Mini LED monitor. This new 27-inch gaming screen boasts a 5K resolution with a maximum refresh rate of 165Hz. Furthermore, with Dual Mode, this screen also supports 1440p at up to […]

The post LG launches its first UltraGear Evo Hyper Mini LED 5K Gaming Monitor appeared first on OC3D.

Aleyda Solis's Similarweb analysis of 10 markets shows that AI search clicks frequently redirect to local domains, with the distribution differing by industry.

The post AI Search Clicks Often Go To Local Domains: Report appeared first on Search Engine Journal.

Google adds AI Brief and text disclaimers to AI Max. See how new controls help regulated advertisers adopt automation while maintaining compliance and messaging accuracy.

The post New: AI Brief And Text Disclaimers Come To Google AI Max appeared first on Search Engine Journal.

Google expands AI Max to Shopping and Travel campaigns. Learn what’s changing, how it works, and what advertisers should prepare for ahead of broader rollout.

The post Google Launches AI Max For Shopping and Travel Campaigns appeared first on Search Engine Journal.

Microsoft says Bing reached 1B monthly active users, as search ad revenue grew 12% and Edge gained share for the 20th straight quarter.

The post Microsoft Says Bing Reached 1B Monthly Active Users appeared first on Search Engine Journal.

After a slip-up by Fuse Games spoiled the surprise a few days early, we officially learned today that Star Wars Galactic Racer will arrive on PC, PS5, and Xbox Series X/S on October 6, 2026. We also got a full breakdown of the different editions of the game that'll be available, and something that didn't leak, which was the full release date trailer with some incredible-looking fast-paced gameplay. After Fuse Games quelled any fears that Star Wars Galactic Racer wouldn't include pod racers, today's official release date trailer is sure to put the iconic vehicles front and center, with several […]

Read full article at https://wccftech.com/star-wars-galactic-racer-editions-release-date-fuse-games-official-reveal-gameplay/

MSI's upcoming Claw 8 EX AI+ Gaming handheld, which will be powered by the Intel Arc G3 Extreme SoC, has been listed online.

MSI's Next-Gen Claw Handheld Spotted At Italian Retailer: Features Intel Arc G3 Extreme SoC, 32 GB Memory & 1 TB Storage

Intel will soon be introducing its next-generation handheld SoCs called Arc G3. These will come in two flavors: a standard G3 and a high-end G3 Extreme. The Arc G3 series is Intel's big entry into the gaming handheld through dedicated SoCs, similar to what AMD does with its Ryzen Z series SoCs. Now, the first handheld […]

Read full article at https://wccftech.com/msi-claw-8-ex-ai-gaming-handheld-with-intel-arc-g3-extreme-listed-online-1599-euros/

The new flagship motherboard boasts an all-white design, an incredible VRM, strong connectivity, and a powerful feature set for high-end and flagship Ryzen CPUs such as the Ryzen 9 9950X3D2 Dual Edition.

ASRock Introduces An All-White X870E Taichi White Motherboard, Featuring 27 Power Phase VRM, PCIe Gen 5.0 Support, and Modern Connectivity

Popular hardware manufacturer ASRock has debuted a new flagship motherboard for high-end Ryzen CPUs called the X870E Taichi White. It is the first all-white flagship motherboard in the Taichi series and brings a new color scheme to the lineup. The motherboard uses a fully white PCB, white heatsinks, and white […]

Read full article at https://wccftech.com/asrock-launches-x870e-taichi-white/

Atomfall, a very British take on games like Fallout or STALKER from Rebellion that landed on PC and consoles in March 2025, is the latest release to get its own adaptation. Two Brothers Pictures, the production company behind hit shows like Fleabag and The Assassin, will lead a TV show adaptation of the game, which also took home the BAFTA for Best British Game two weeks ago. News of the adaptation comes via a report from Deadline, which adds that the Two Brothers Pictures founders, Harry and Jack Williams, will also be creative leads for the adaptation […]

Read full article at https://wccftech.com/atomfall-tv-show-adaptation-fleabag-producers-lead/

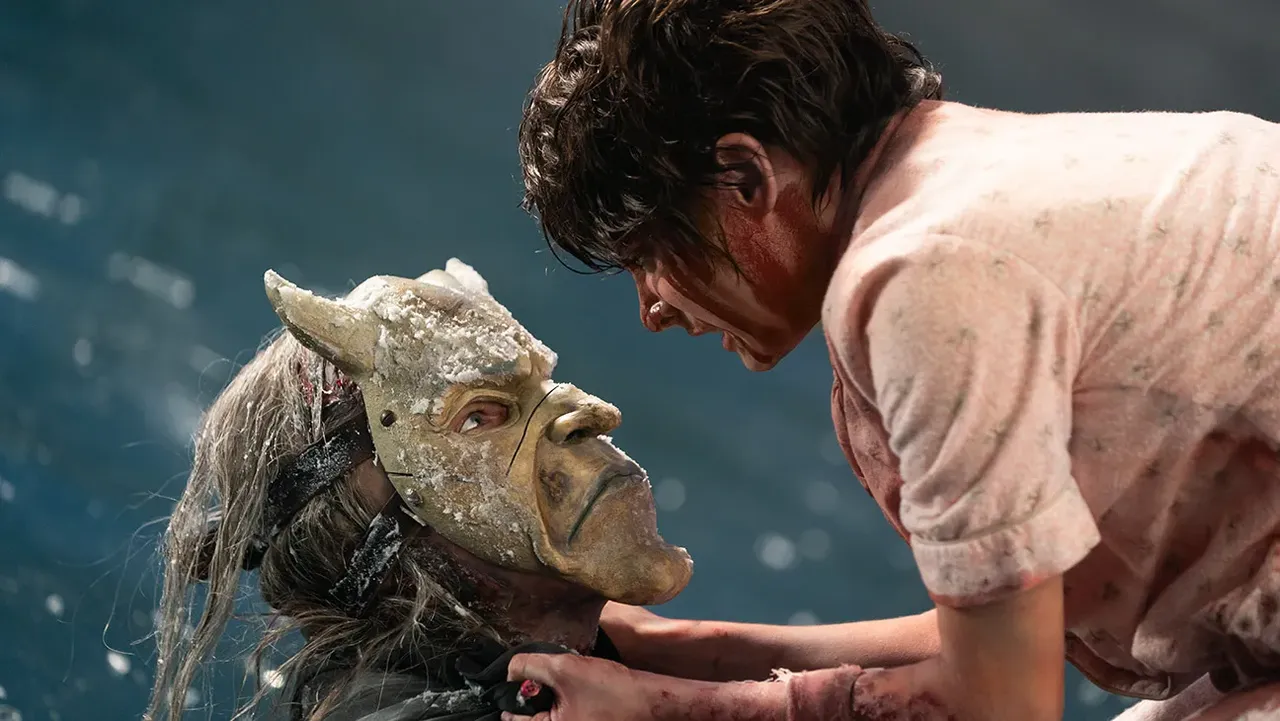

We've known since the game's announcement that The Blood of Dawnwalker would not be quite as large as The Witcher III: Wild Hunt. This makes perfect sense, as Rebel Wolves is smaller than CD Projekt RED was when it made its masterpiece. However, what exactly is the scope that players can expect in the full game? As reported by Gamereactor, Creative Director Mateusz Tomaszkiewicz revealed that The Blood of Dawnwalker turned out to be even bigger than the studio had originally planned. The goal was to target a 40-hour average playthrough, but now that all the content is in place […]

Read full article at https://wccftech.com/blood-of-dawnwalker-playtime-40-70-hours/

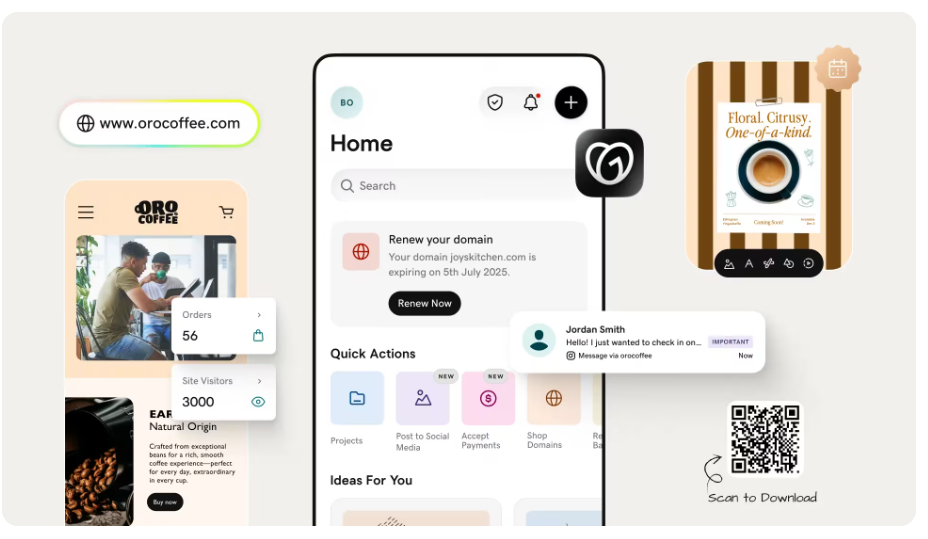

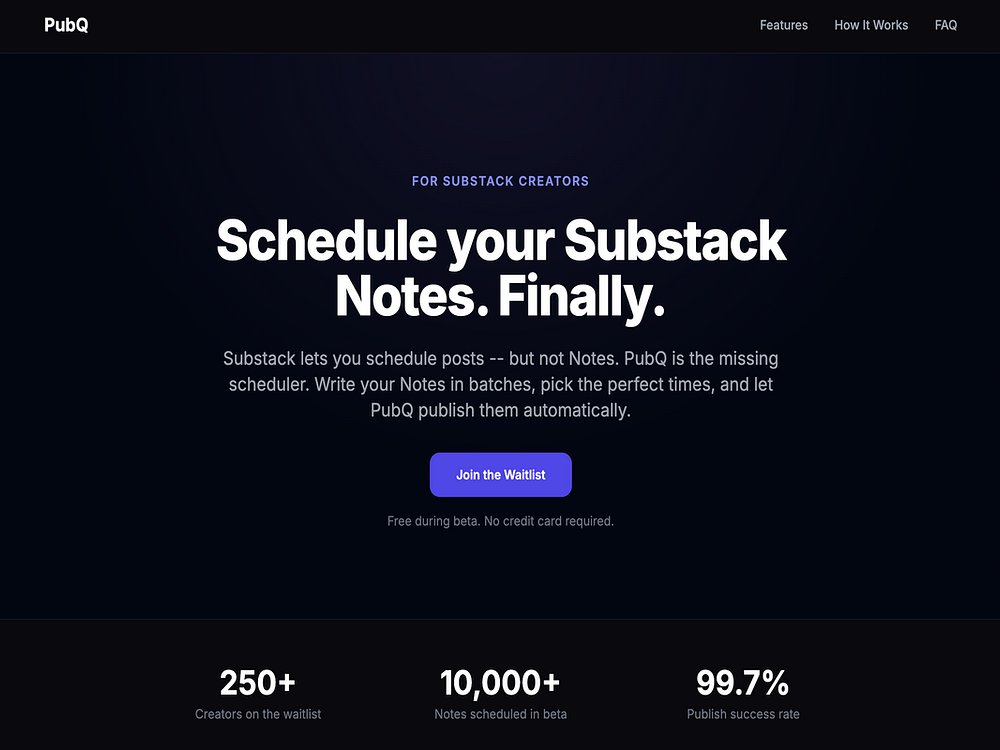

PubQ is a scheduling tool built specifically for Substack Notes. Substack has no native scheduler for Notes, only for long-form posts. PubQ fills that gap: a Chrome extension captures your Substack session and posts your Notes automatically at your chosen time.

The workflow is simple: write your Notes in batches, set a schedule, and PubQ handles posting at peak times while you do other things. A free tier is available with no card required.

PRDFlow connects to your GitHub repo and auto-translates every code merge into stakeholder-ready updates, requiring no engineer effort. Founders see business impact like "Payment processing is live, start charging customers," PMs see roadmap progress such as "3 of 5 acceptance criteria met," and sales sees what's demo-ready like "SSO is live, safe to promise clients." No more status meetings, Slack archaeology, or interrupting engineers in deep work. PRDFlow replaces the broken game of telephone between engineering and the rest of the company with a single source of truth pulled directly from the code.

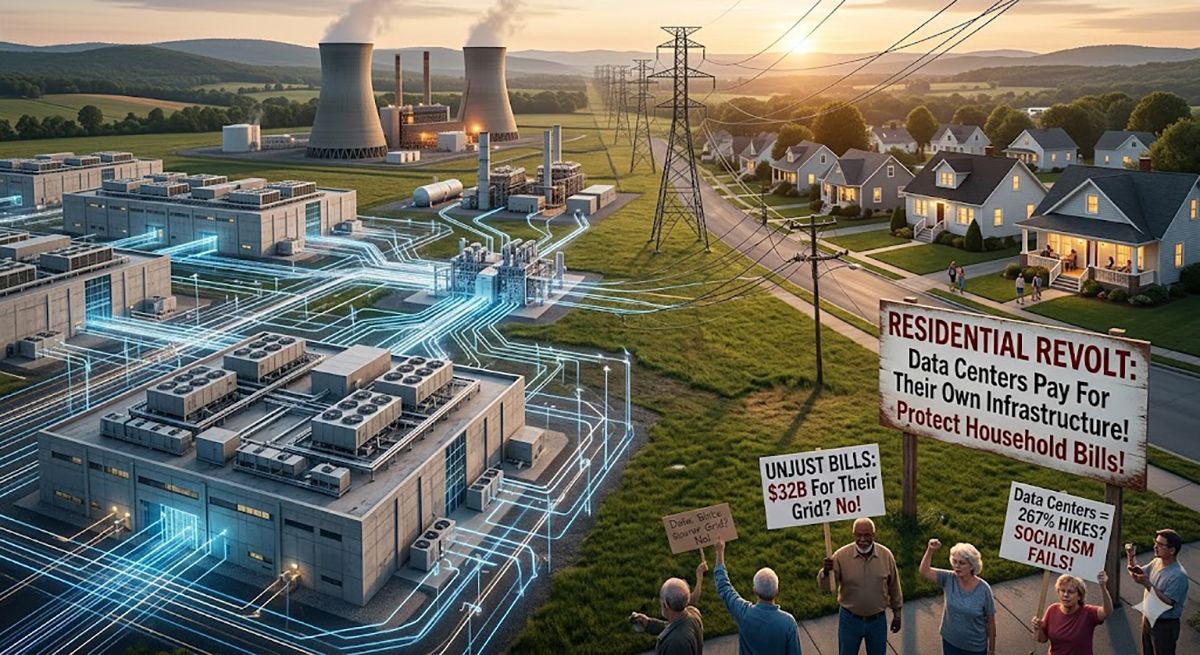

A new agreement between Google and OG&E to protect energy affordability in Oklahoma.

AI may not see your brand the way you think it does, according to Scott Stouffer, co-founder and CTO at Market Brew.

Brands still publish content, optimize pages, build authority, and follow SEO best practices. But that may not be enough anymore.

Search has moved away from a simple battle over keywords, links, and page-level signals. It’s now shaped by meaning, intent, embeddings, and retrieval, Stouffer said during his SEO Week presentation.

In legacy SEO, a page could rank lower and still exist in the search results. In AI-driven systems, the first question isn’t whether you rank. It’s whether you’re ever retrieved.

“If you’re not retrieved, you do not exist to AI,” Stouffer said.

Your brand already exists inside AI systems as a mathematical object. You may call yourself one thing. Your homepage may say another. Your brand guidelines may promise a clear position. But AI systems build their own view of your brand from the content you have published.

That computed version of your brand may be different from the one you intended to build.

AI visibility begins before ranking, Stouffer said.

In traditional SEO, marketers focus on positions — first, third, or tenth. But AI systems apply a filter earlier. Before anything is ranked, the system determines which content is eligible for consideration.

That is retrieval.

When a user asks a question, the system pulls a limited set of passages or chunks that best match the query. Those passages define the answer space.

If your content isn’t included, you get no impressions, no clicks, and no visibility at all, Stouffer said.

The real shift is moving from exclusion to inclusion.

“You don’t lose. You just never entered the game,” Stouffer said.

AI systems don’t treat a webpage as one clean unit, Stouffer said. They don’t evaluate pages as whole objects or prioritize layout, structure, or formatting.

Content is broken apart. A page becomes chunks: passages, sections, and individual ideas.

Each chunk is evaluated independently. A paragraph deep in a guide can compete on its own. A single sentence can be selected if it aligns closely with the query.

This shifts competition from page versus page to passage versus passage.

Most of a page may never be considered. Only the most aligned chunks are evaluated.
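
A minimal sketch of that chunking step, assuming paragraphs separated by blank lines are the unit of retrieval. Real systems use their own chunking strategies, which the talk did not specify; `chunk_page` and the sample text are invented for illustration.

```python
def chunk_page(text: str) -> list[str]:
    """Split a page into paragraph-level chunks on blank lines (an assumed strategy)."""
    return [part.strip() for part in text.split("\n\n") if part.strip()]

# Hypothetical page content.
page = (
    "Intro about the brand.\n\n"
    "How the product handles returns.\n\n"
    "Pricing details."
)

chunks = chunk_page(page)
for index, chunk in enumerate(chunks):
    # Each chunk would be embedded and scored on its own, not as part of the page.
    print(index, chunk)
```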

Each chunk is converted into a vector, Stouffer explained.

This vector represents meaning as a position in a high-dimensional space. It captures context and intent rather than exact wording.

Two pieces of content can use different words but sit close together if they express the same idea. Others can share keywords, but sit far apart if they represent different meanings.

“It’s comparing meaning, not wording, measuring distance, not keyword overlap,” Stouffer said.

Relevance is determined by proximity. The closer a chunk is to a query in this space, the more likely it is to be retrieved.
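
To make "proximity" concrete, here is a small sketch using cosine similarity, a common way to compare embedding vectors. The talk did not name a specific distance measure, so treat the choice as an assumption; the three-dimensional vectors are made up, and real embeddings have far more dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: values near 1.0 mean similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Made-up toy vectors standing in for embeddings.
query = [0.9, 0.1, 0.0]
chunk_same_idea = [0.8, 0.2, 0.1]    # different wording, same meaning
chunk_other_idea = [0.1, 0.2, 0.9]   # shared keywords, different meaning

sim_same = cosine_similarity(query, chunk_same_idea)
sim_other = cosine_similarity(query, chunk_other_idea)
print(round(sim_same, 2), round(sim_other, 2))
```

The chunk that expresses the same idea scores much closer to the query than the one that only shares surface wording.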

As chunks are mapped into this space, they group together.

Content with similar meaning forms clusters, even across different pages. These clusters reflect how AI systems understand topics.

This understanding comes from how content naturally groups by meaning, not by site structure or labels, Stouffer said.

If content is consistent, clusters become dense and clear. If content is scattered, clusters become fragmented.

What matters is not what a brand intends to say, but what its content actually communicates.

Within these clusters, there is a center point — the centroid, Stouffer said.

The centroid represents the average position of all related content. It reflects the site’s core meaning.

Every page and paragraph influences that position. Consistent content creates a clear, stable centroid. Inconsistent content dilutes it.
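
The "average position" can be sketched as a component-wise mean. The two-dimensional vectors below are invented, and the example only shows how a single off-topic chunk moves the centroid.

```python
def centroid(vectors):
    """Component-wise mean of equal-length vectors."""
    count = len(vectors)
    return [sum(components) / count for components in zip(*vectors)]

# Made-up vectors for three on-topic chunks.
on_topic = [[1.0, 0.0], [0.8, 0.2], [0.6, 0.4]]
before = centroid(on_topic)

# Adding one off-topic chunk pulls the centroid toward the second axis.
after = centroid(on_topic + [[0.0, 1.0]])
print(before, after)
```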

That centroid is how AI understands your brand.

Not your homepage. Not your messaging. Not your brand guidelines.

Your centroid is the combined signal of everything you have published, Stouffer said.

“Your centroid doesn’t care about intent. It reflects the math of everything you’ve ever published,” Stouffer said.

This changes how content should be evaluated.

The key question isn’t whether a page is optimized in isolation. It’s whether it aligns with the rest of the site.

Each page either strengthens the centroid or pulls it in a different direction.

“Optimization without alignment creates drift, and drift is what breaks consistency,” Stouffer said.

As drift increases, the site becomes harder for AI systems to interpret and retrieve.
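
One way a team might check a new page for drift before publishing is to compare its vector with the current centroid. This is a sketch under assumptions: the vectors are invented, cosine similarity is an assumed measure, and the 0.8 threshold is arbitrary rather than anything Stouffer specified.

```python
import math

def centroid(vectors):
    """Component-wise mean of equal-length vectors."""
    count = len(vectors)
    return [sum(components) / count for components in zip(*vectors)]

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

DRIFT_THRESHOLD = 0.8  # arbitrary cut-off chosen for the example

def causes_drift(page_vector, center, threshold=DRIFT_THRESHOLD):
    """Flag a page whose meaning sits too far from the site's centroid."""
    return cosine_similarity(page_vector, center) < threshold

# Made-up vectors for existing content and two candidate pages.
existing = [[1.0, 0.0], [0.9, 0.1], [0.8, 0.2]]
site_centroid = centroid(existing)

aligned_page = [0.85, 0.15]
off_topic_page = [0.1, 0.9]
print(causes_drift(aligned_page, site_centroid), causes_drift(off_topic_page, site_centroid))
```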

“You don’t write pages, you project meaning,” Stouffer said.

When a query is entered, the system converts it into a vector, Stouffer said.

It then searches for the closest matches in meaning space.

This includes both individual chunks and the centroids that represent broader content clusters.

If your content is close enough, it enters the candidate set. If it is too far away, it is excluded.
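
The candidate-set step can be sketched as a similarity cut-off plus a top-k limit. The chunk names, vectors, `min_similarity`, and `top_k` values are all hypothetical, and production systems typically use approximate nearest-neighbor indexes rather than a full scan like this one.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def retrieve(query_vector, chunk_vectors, min_similarity=0.7, top_k=2):
    """Return the names of the closest chunks that clear the similarity cut-off."""
    scored = [
        (cosine_similarity(query_vector, vector), name)
        for name, vector in chunk_vectors.items()
    ]
    eligible = [item for item in scored if item[0] >= min_similarity]
    eligible.sort(reverse=True)  # highest similarity first
    return [name for _, name in eligible[:top_k]]

# Made-up chunk vectors.
chunk_vectors = {
    "returns-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.7, 0.3, 0.1],
    "company-history": [0.0, 0.2, 0.9],
}
query_vector = [1.0, 0.0, 0.0]

candidates = retrieve(query_vector, chunk_vectors)
print(candidates)
```

Anything that fails the cut-off never reaches the ranking stage, which is the exclusion the talk describes.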

Only after this stage do traditional ranking signals apply.

Content quality, links, and structure matter — but only if the content is first retrieved.

If not, those signals are never evaluated, he said.

Many brands follow similar strategies, use the same sources, and produce similar content.

As a result, their centroids converge in the same region, Stouffer said.

He described this as cluster collision.

When multiple brands occupy the same space, AI systems don’t select all of them. They choose a few and ignore the rest.

“They’re not failing best practices. They’re colliding with everyone else using them,” Stouffer said.

Producing more content or improving existing content isn’t enough. If content remains similar in meaning, it remains in the same space.

“You need a distinct centroid,” Stouffer said.

A clear, separate position in meaning space reduces competition and increases the likelihood of retrieval.

This is not a one-time adjustment.

Every piece of content shifts the centroid.

That requires an ongoing process of measurement and adjustment, Stouffer said.

Teams need to monitor alignment continuously and correct drift as it occurs.

Over time, this creates a more stable system where new content reinforces the existing structure.

Most teams can’t see how their content exists in this system.

They can’t see clusters, centroids, or distances — or why content is excluded.

So they rely on trial and error, Stouffer said.

They publish, optimize, and wait for results. When nothing changes, they try something else.

Without visibility into the system, they react to outcomes rather than understanding causes.

Your brand already exists as a mathematical object inside AI systems, Stouffer said.

You do not get to choose that.

You only choose whether to measure and control it or let it drift.

AI does not see your brand the way you describe it. It sees the aggregate meaning of your content.

“If you control your centroid, you control your visibility,” Stouffer said.

For more than two decades (nearly as long as I’ve been in SEO), backlinks have been core to SEO. Google’s PageRank changed search by using backlinks as a proxy for trust.

A link wasn’t just a pathway; it was a vote. The more votes you had and the more authoritative the voters were, the higher you ranked.

But as Google and AI systems matured, entity-based understanding emerged. AI models became better at understanding content, context, and credibility without always needing a hyperlink as a crutch.

Today, visibility isn’t driven solely by links. It’s strengthened by the broader signals your brand has earned: how often it’s mentioned, cited, and trusted across authoritative sources.

Search engines and AI platforms now prioritize these signals.

Modern AI systems can evaluate trust and expertise in ways that were impossible a decade ago, assessing authority directly through signals that backlinks once only approximated.

AI can:

A brand mention in a reputable publication—even without a link—reinforces entity authority. Consistent expert citations validate expertise. These signals can’t be faked.

The result is a new era where links still matter, but they’re no longer the only star. Authority is now a network of signals.

As Google relies less on raw link signals, something else has increased: entities — the people, brands, organizations, and concepts behind the content. Google increasingly showcases brands based on who they are and how they’re discussed across the web, alongside their backlink profile.

At its core, entity-first SEO means Google and LLMs are mapping relationships: identifying brands, understanding what they’re known for, and evaluating how they’re referenced in trusted sources.

For example, an outdoor gear company with a modest backlink profile began appearing in AI Overviews for “best hiking backpacks” after repeated mentions in Reddit threads, YouTube reviews, and a few expert roundups. Only some mentions included links, but the brand appeared consistently in trusted, topic-relevant conversations. Google interpreted those unlinked mentions as proof of real-world relevance.

If your brand consistently appears in a positive light in topic-related conversations, AI sees that as proof you’re relevant and trusted. The brands that win now have the strongest entity presence.

PR-style links and editorial coverage are earned mentions in reputable publications — the kind that signal real-world authority, not algorithmic manipulation.

Old-school, volume-based link building is less effective as AI improves at detecting manufactured patterns. But high-quality, relevance-driven link building—especially when paired with PR signals—is more valuable than ever.

Editorial PR links from journalists, analysts, and industry voices who choose to reference a brand because it’s newsworthy or authoritative reflect genuine credibility. They’re the digital equivalent of a trusted expert saying, “This brand matters.”

| Authority-Based Link Building | Volume-Based Link Building |
| --- | --- |
| Strong editorial context | Thin or generic content |
| High topical relevance | Limited relevance |
| Natural language anchors | Over-optimized anchors |
| Trusted authors and publications | Sites with weak editorial oversight |
| Clear entity associations | Obvious link-selling footprints |

AI doesn’t just look at the presence of a link; it evaluates the context around it. Models are trained to reward authenticity. Search aims to reward the most authoritative entities.

The real power comes from a combination of signals. As search has evolved, quality has become more powerful than quantity.

Now AI is driving another shift. You can grow traditional, relevance-focused links alongside new brand signals.

A single earned placement done well can generate:

This is multi-signal authority — holistic credibility that AI systems are designed to reward. It tells Google and LLMs: you’re known, trusted, and relevant. You need to be part of the conversation.

As powerful as PR signals are, they’re only one part of a larger authority ecosystem. AI evaluates brands through a multi-signal trust profile that determines visibility.

Authority is now defined by the breadth and consistency of signals that validate who your brand is across the web. It’s evaluated the way humans judge it: by reputation, recognition, expertise, and prominence.

Authority is no longer a single metric tied to links. It’s a network of signals, including:

Together, these signals create a holistic authority profile that AI can interpret. The brands that win have the strongest multi-signal authority footprint.

Brand strength quietly outweighs other signals. The data shows it: brands in the top 25% for web mentions average 169 AI Overview citations, while the next quartile averages just 14.

That’s not a small gap.

This aligns with Ahrefs’ analysis of ~75,000 brands. The strongest correlations with appearing in AI Overviews were branded web mentions, branded anchors, and branded search volume—all signals of real-world brand presence.

Consider two competing fitness apps. One has thousands of backlinks from generic listicles. The other is frequently mentioned in Reddit threads, YouTube reviews, and TikTok “day in the life” videos. The second app appears consistently in AI Overviews because AI sees it as part of the real-world fitness conversation, not just the link graph.

The brands dominating AI Overviews have the strongest brand presence, supported by consistent links, mentions, citations, and contextual relevance.

By 2027, link building will undergo radical change. The shift from a numbers game to a confidence game will become the norm, and Share of Authority or Voice will be the new metric.

Here are my top three predictions for what’s next.

Link building will expand to include “seeding” information in AI training hubs. Instead of mass outreach to low-tier blogs, strategies will target user-preferred sources like Reddit, LinkedIn, Substack, and GitHub, which LLMs use for high-quality, human-led data.

Brands that appear most often in training data, trusted sources, and high-authority conversations will earn visibility. This is the next step in a world where signals determine authority.

| Traditional Metric | Predicted Metric | Why the Change |
| --- | --- | --- |
| Backlink Count | Entity Citation Frequency | AI values brand mentions as much as links |
| Domain Authority (DA) | Source Reliability Score | Focus on the trustworthiness of the source |
| Anchor Text | Semantic Context | AI reads the intent around the link, not just the text |
| PageRank | Share of Model (SoM) | Success is being the AI’s preferred answer |

As AI systems rely more on multi-signal authority, proprietary data becomes one of the most powerful assets a brand can produce. Data isn’t just content — it’s a signal engine. It naturally earns the signals AI trusts most:

Traditional link building still provides foundational authority, but data-driven assets are the accelerant. They create high-trust, high-context signals that AI models weigh heavily.

On a platform where visibility depends on how often your brand appears in authoritative contexts, proprietary data is the most scalable way to increase your Share of Authority.

Traditional contextual links will continue to build the foundation. But beyond that, search engines will track every time your brand appears alongside specific topics. Links will need “semantic context.”

Every mention of your brand in news, podcasts, reviews, forums, social posts, and roundups becomes a signal that strengthens your entity.

The future of off-page SEO isn’t a battle between traditional link building and AI-driven signals. It’s the realization that links were always just one signal. Now search engines can understand dozens more.

Traditional link building still matters. It provides the foundational authority, crawl paths, and topical relevance every site needs.

AI has widened the field. It can read context, interpret sentiment, understand entities, and evaluate brand presence.

These signals don’t replace links — they amplify them.

Links built the foundation.

Signals build the skyscraper.

Ask any paid search manager who has tried to get an AI agent to do something genuinely useful with a Google Ads account and you will hear a version of the same story. They exported performance data, pasted it into a chat window, got a solid answer, and then did the exact same thing the next day.

Exporting, pasting, repeating — that isn’t automation. That’s the same manual work you were doing before, performed in a different window.

The AI tools are not the problem. Any of the major ones can do solid analysis when the right data is in front of them.

The problem is getting that data to them live, current, and without a human in the middle copying it across. It’s the reason most PPC accounts in 2026 still run almost exactly the way they did before anyone started talking about agents. Call it the data wall.

Every ad platform is a silo by default. Google Ads records a conversion. Your CRM records whether that lead is qualified. Your inventory system records whether the product behind that click is still on the shelf. None of them talk to each other without deliberate plumbing.

PPC managers have bridged that gap manually for years: weekly exports, cross-referenced spreadsheets, dashboards that were stale by Monday morning.

That was workable when a human was doing the bridging on a set schedule. It becomes a structural problem the moment you hand execution over to an agent that must act in real time.

Take a keyword showing healthy volume, an acceptable CPA, and a CVR in range — all according to Google Ads. In HubSpot, those same conversions are tagged as disqualified leads: wrong territory, no budget, wrong company size entirely. The agent has no way to know. It keeps bidding. The budget keeps spending. And the problem doesn’t surface until someone runs the monthly review.

That is a data access problem, not a prompting problem. Better prompts don’t fix it. But a better pipeline does.

The Model Context Protocol (MCP) is an open standard that lets AI clients connect to external tools and data sources without a custom integration for each one. Before MCP, getting an agent to read from Google Ads, your CRM, and an inventory system meant building and maintaining three separate connectors, with the burden compounding every time you added a source.

MCP standardizes the handshake. A platform publishes an MCP server once, and any compatible AI client — Claude, ChatGPT’s agent mode, your team’s custom agent — can connect to it.

Google has already open-sourced its Ads API MCP server on GitHub, which allows agents to run Google Ads Query Language (GAQL) queries directly against live account data. The infrastructure problem that has blocked most real-world agentic PPC work is finally being addressed at the platform level.

The CRM gap closes first. An agent connected to both Google Ads and HubSpot can pull last month’s conversions, cross-reference them against CRM disposition, identify the keywords producing disqualified leads, and lower bids on those sources — on a schedule, without a human compiling the report. A loop that used to swallow half a day runs automatically.
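
The cross-referencing step might look like the sketch below once both data sets are in hand. The rows, field names, and the 50% review cut-off are invented; in practice the data would come from the ad platform and the CRM through their connectors, and the bid change itself is left out.

```python
from collections import defaultdict

# Hypothetical conversions reported by the ad platform.
ad_conversions = [
    {"lead_id": "a1", "keyword": "crm software"},
    {"lead_id": "a2", "keyword": "free crm"},
    {"lead_id": "a3", "keyword": "free crm"},
    {"lead_id": "a4", "keyword": "crm software"},
]

# Hypothetical lead dispositions from the CRM, keyed by lead ID.
crm_status = {"a1": "qualified", "a2": "disqualified", "a3": "disqualified", "a4": "qualified"}

def disqualified_rate_by_keyword(conversions, status_by_lead):
    """Share of each keyword's conversions that the CRM marked as disqualified."""
    totals = defaultdict(int)
    disqualified = defaultdict(int)
    for row in conversions:
        totals[row["keyword"]] += 1
        if status_by_lead.get(row["lead_id"]) == "disqualified":
            disqualified[row["keyword"]] += 1
    return {keyword: disqualified[keyword] / totals[keyword] for keyword in totals}

rates = disqualified_rate_by_keyword(ad_conversions, crm_status)

# Assumed cut-off: flag keywords where at least half the leads were disqualified.
keywords_to_review = [keyword for keyword, rate in rates.items() if rate >= 0.5]
print(rates, keywords_to_review)
```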

Inventory creates the same kind of blind spot. An agent connected to Shopify can check stock levels before weekend campaigns go live. When an SKU drops below the threshold, the corresponding product group is paused before traffic hits a page that no longer converts.
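
A sketch of the stock check, assuming the agent already has stock levels and a SKU-to-product-group mapping. The SKUs, group names, and threshold of 5 units are made up, and the actual pause call to the ad platform is omitted.

```python
STOCK_THRESHOLD = 5  # assumed minimum units before a product group keeps running

def product_groups_to_pause(stock_by_sku, group_by_sku, threshold=STOCK_THRESHOLD):
    """Return the product groups containing at least one SKU below the threshold."""
    low_stock_groups = {
        group_by_sku[sku]
        for sku, quantity in stock_by_sku.items()
        if quantity < threshold and sku in group_by_sku
    }
    return sorted(low_stock_groups)

# Hypothetical inventory snapshot and campaign mapping.
stock_by_sku = {"SKU-1": 12, "SKU-2": 3, "SKU-3": 0}
group_by_sku = {"SKU-1": "tents", "SKU-2": "backpacks", "SKU-3": "backpacks"}

to_pause = product_groups_to_pause(stock_by_sku, group_by_sku)
print(to_pause)
```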

Even the data-pipeline work itself gets faster.

On a recent “PPC Town Hall” episode, Lars Maat, a PPC expert and agency founder in Rotterdam, described building a Python pipeline with no prior Python experience. It connected the Google Maps API, Google’s Things To Do feature, and Ahrefs to generate optimized landing pages for a parking client: identifying nearby attractions, checking search volumes, and feeding the content to a generator.

The whole thing was live in two weeks. The only constraint was getting the right data in front of the AI, not what the AI could do.

Here’s where things get interesting, and where most of the MCP hype is skating past a real issue.

Write access to a live Google Ads account, in the hands of a probabilistic language model, without institutional constraints, is a new category of risk. An agent that can pause a campaign needs defined parameters: what threshold triggers the action, who gets notified before it fires, which campaign types require human sign-off. Those parameters don’t exist inside the AI tool. They have to be built around it.
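
Those parameters could be captured in a small policy object that sits between the agent and the account. This is only a sketch: the class, field names, CPA threshold, campaign types, and notification address are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PausePolicy:
    cpa_threshold: float                # a pause is only considered above this CPA
    approval_required_types: frozenset  # campaign types that need human sign-off
    notify: str                         # who is told before the action fires

def evaluate_pause(campaign_type, cpa, policy):
    """Decide whether a proposed pause is skipped, auto-applied, or held for approval."""
    if cpa <= policy.cpa_threshold:
        return {"decision": "skip", "notify": None}
    if campaign_type in policy.approval_required_types:
        return {"decision": "needs_approval", "notify": policy.notify}
    return {"decision": "auto_pause", "notify": policy.notify}

# Hypothetical policy values.
policy = PausePolicy(
    cpa_threshold=80.0,
    approval_required_types=frozenset({"brand"}),
    notify="ppc-team@example.com",
)

print(evaluate_pause("search", 120.0, policy))
print(evaluate_pause("brand", 120.0, policy))
```

The point of the design is that the agent proposes an action and deterministic code, not the model, decides whether it runs.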

Advertisers can grant granular permissions to the Optmyzr MCP to stay in control of what the connector is allowed to do on its own, what it can never do, and what it can do with human approval.

On another “PPC Town Hall” episode, Ann Stanley, founder of Anicca Digital and one of the UK’s most experienced paid media practitioners, described effective AI deployment as a sandwich: humans at the front who understand the goal and can give precise instructions, humans at the back who review the output and decide what ships, and AI handling execution in the middle. The quality of what comes out depends on the quality of what goes in, and on whether the middle layer has any constraints at all.

This is where raw API access stops being enough.

Google’s open-source MCP server is a good piece of infrastructure. But it is not a safety net. It will happily run any GAQL query and any mutation the agent constructs, and if the agent hallucinates a campaign ID or picks the wrong lookback window, the ad account absorbs the consequences.
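
A minimal sketch of one check a do-it-yourself safety layer might run before passing an agent's request along: confirm the campaign ID actually exists and that the lookback window is one you allow. The function, the campaign IDs, and the allow-list are hypothetical; the lookback names follow GAQL's predefined date ranges such as `LAST_30_DAYS`.

```python
# Assumed allow-list of lookback windows, named after GAQL date ranges.
ALLOWED_LOOKBACKS = {"LAST_7_DAYS", "LAST_14_DAYS", "LAST_30_DAYS"}

def validate_request(campaign_id, lookback, known_campaign_ids):
    """Return a list of problems; an empty list means the request may proceed."""
    errors = []
    if campaign_id not in known_campaign_ids:
        errors.append(f"unknown campaign id: {campaign_id}")
    if lookback not in ALLOWED_LOOKBACKS:
        errors.append(f"lookback not allowed: {lookback}")
    return errors

# Hypothetical IDs fetched from the account before the agent acts.
known_campaign_ids = {"1001", "1002"}

ok = validate_request("1001", "LAST_30_DAYS", known_campaign_ids)
bad = validate_request("9999", "LAST_900_DAYS", known_campaign_ids)
print(ok, bad)
```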

LLMs are probabilistic. Ad platform APIs are not. So, something has to sit in between.

We have spent over a decade encoding how Google Ads actually behaves — not just what the API exposes, but the interdependencies between settings, the edge cases around campaign types, the nuances of what makes a “duplicate keyword” a true duplicate versus a false positive. That work lives inside Optmyzr as a business intelligence layer. Our MCP connector is how we let your AI agent borrow it.

When Claude, ChatGPT, or your team’s custom agent connects to the Optmyzr MCP, it gains access to the same Sidekick capabilities your team uses inside Optmyzr: pulling PPC performance reports with rich filtering and segmentation, surfacing configured and triggered alerts, creating and editing alerts, retrieving merchant feed details, summarizing portfolio health across every active account, and — this is the one most people miss — generating and executing a full Rule Engine strategy from a plain-English description of what you’re trying to accomplish.

That matters for three reasons most DIY setups miss:

The end result is an AI agent that operates across your portfolio with the reach of an API, the judgment of a platform that has been in this space since before AI agents were a category, and a safety posture that doesn’t require you to build your own circuit breakers.

If you want to experiment with read-only access across raw ad platforms, Windsor.ai and Zapier’s MCP integration are the fastest on-ramps. If you’re comfortable managing your own guardrails, Google’s open-source Ads API MCP server on GitHub gives you precise GAQL control at the cost of building the safety layer yourself.

If you run client accounts where a misfire is unaffordable, or you just want your AI agent to think across your whole portfolio with the judgment of a senior PPC strategist, the Optmyzr MCP is the fastest path to an agent that is actually safe to give the keys to. It works with Claude Desktop (via custom Connectors or manual config), Claude Code, ChatGPT (via Developer Mode apps), and any MCP-compatible client. You can set it up in minutes: generate an API key from the MCP Integration panel in your Optmyzr settings, paste the server URL into your AI client, and your agent is operating across every active account on your Optmyzr profile.

Full MCP setup guide and instructions.

The data wall is coming down either way. The question is whether your agent walks through it with a plan, or a prompt and a prayer.

ASRock expands its AM5 motherboard lineup with its new X870E Taichi White model

ASRock has officially launched its new X870E Taichi White motherboard, a high-end offering ready for AMD’s Ryzen 9 9950X3D2 Dual Edition and other future AMD Ryzen CPUs. On the hardware side, the X870E Taichi White features a robust 24+2+1 phase VRM, a […]

The post ASRock unveils ultra-high-end X870E Taichi White AM5 motherboard appeared first on OC3D.

Microsoft confirms a shift to a “quality” focus within its consumer business

During Microsoft’s Q3 2026 earnings call, the company’s CEO, Satya Nadella, confirmed that they are doing “foundational work” within its consumer business. Microsoft plans to “win back fans” of Windows, Xbox, Bing, and Edge. By shifting its focus to quality and serving its […]

The post Microsoft CEO confirms “foundational work” to “win back” fans of Windows and Xbox appeared first on OC3D.

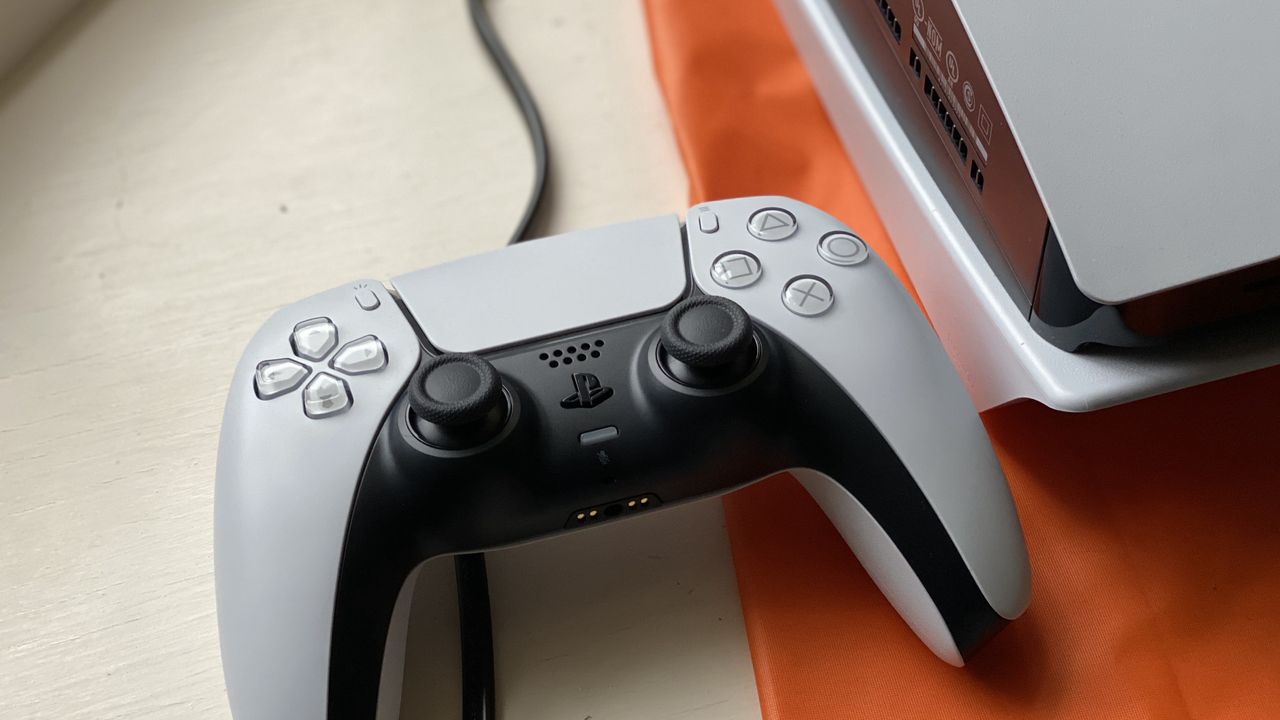

The PlayStation 5 DualSense controller features have been put to great use to enhance immersion in Saros, but for many players, adaptive triggers are only delivering frustration. The default configuration uses this feature on L2: a half-press activates Alt-Fire, while a full press through the resistance triggers your Power Weapon. In the heat of combat, it is incredibly easy to accidentally press too hard and waste your Power Weapon when you only intended to use an Alt-Fire ability. If you are struggling with these pressure levels, there is a much more reliable way to play. Separating Alt-Fire from the Trigger […]

Read full article at https://wccftech.com/how-to/saros-how-to-fix-alt-fire-controls-the-circle-button-trick/

While some of the shotguns are very solid weapon choices in the early game thanks to their powerful Alt-Fire modes, there comes a point in Saros where staggering an enemy is no longer enough. To clear the endgame biomes, defeat the final Overlords, and clear the game with ease, you will need the absolute best weapon: the Ripsaw Chakram. The Ripsaw Chakram is a high-skill, high-reward weapon that functions differently from anything else in Arjun’s arsenal. Once you "unwire" your brain from using the other more traditional weapons in the game, you will realize this is arguably the most powerful […]

Read full article at https://wccftech.com/how-to/saros-the-best-weapon-to-melt-bosses-ripsaw-chakram-guide/

AMD’s “bridge die” tech could be revolutionary for Zen 6

AMD aims to deliver a “latency revolution” with Zen 6, at least according to the leaker “Moore’s Law is Dead”. With a “fundamentally better memory controller” and “bridge die” technology, AMD aims to greatly lower memory latencies and boost inter-CCD communication speeds. This addresses the […]

The post AMD to deliver “latency revolution” with Zen 6 Ryzen interconnect tech appeared first on OC3D.

As Nintendo is unlikely to ever release its games on PC, emulation has been the only way for users to enjoy them on hardware other than the original consoles for a long time. However, over the past few years, we have seen the rise of native PC ports, including ports of Zelda: Ocarina of Time and Majora's Mask, which offer advanced features over emulated versions, such as support for higher resolutions. The latest of these is a native port of the original Super Smash Bros., which also serves as an example of the magnitude of what AI can achieve for a project like this, […]

Read full article at https://wccftech.com/nintendo-native-pc-port-super-smash-bros-ai-generated/

The Exynos 2600 will eventually be replaced by the Exynos 2700, as Samsung expands its 2nm GAA chipset portfolio later this year with the introduction of a successor. Until now, we have only heard about rumors and leaks surrounding the SoC. However, during the Korean giant’s Q1 2026 earnings call, it was the first time that the company publicly revealed that it’s developing the Exynos 2700 and intends to extend its market share with the latter’s release.

Exynos 2700 to make up a higher percentage of Galaxy S27 shipments, as Samsung looks to reduce dependency on Qualcomm and its Snapdragon family […]

Read full article at https://wccftech.com/samsung-reveals-exynos-2700-details-during-q1-2026-earnings-call/

Ever since the system's release in late 2020, developers have been hard at work expanding the PlayStation 5's functionality beyond what Sony intended, helped by leaks that could lead to permanent jailbreaks. Now, over five years since the console's release, those with an older phat system still running older versions of the system software can turn Sony's current generation into a highly capable Linux PC, and take advantage of its 8 Zen 2 CPU cores and RDNA 2 GPU to run emulators and Steam games with impressive fluidity thanks to a new loader released online by Andy Nguyen a.k.a. TheFl0w. […]

Read full article at https://wccftech.com/playstation-5-linux-breakthrough-reshape-consoles/

It's been almost a year since Studio Asobo and Focus Home revealed Resonance: A Plague Tale Legacy, the third game in the peculiar action/adventure series. After a long silence, the French studio has shared a lot of info about this prequel, including its development status, in a fresh devblog. There's good news on that front, as Resonance: A Plague Tale Legacy is in the final production phase with the last recording sessions and the final notes of the score wrapping up. The blog post teases that "the release is almost here", suggesting it will be released this year, possibly ahead […]

Read full article at https://wccftech.com/resonance-plague-tale-legacy-release-almost-here/

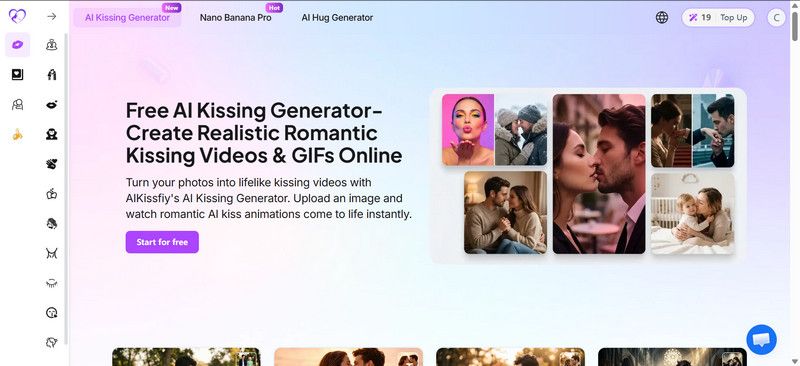

AIKissfiy turns one or two photos into realistic kissing videos and GIFs. Upload clear face images, click Generate, and get results in 10–30 seconds. The platform supports JPG, JPEG, WEBP, and PNG, and lets you download MP4s to share on TikTok, Instagram, or Snapchat. Use it for romantic surprises, social content, character reimagining, or avatar interactions. Images are processed over encrypted connections and deleted within 24 hours.

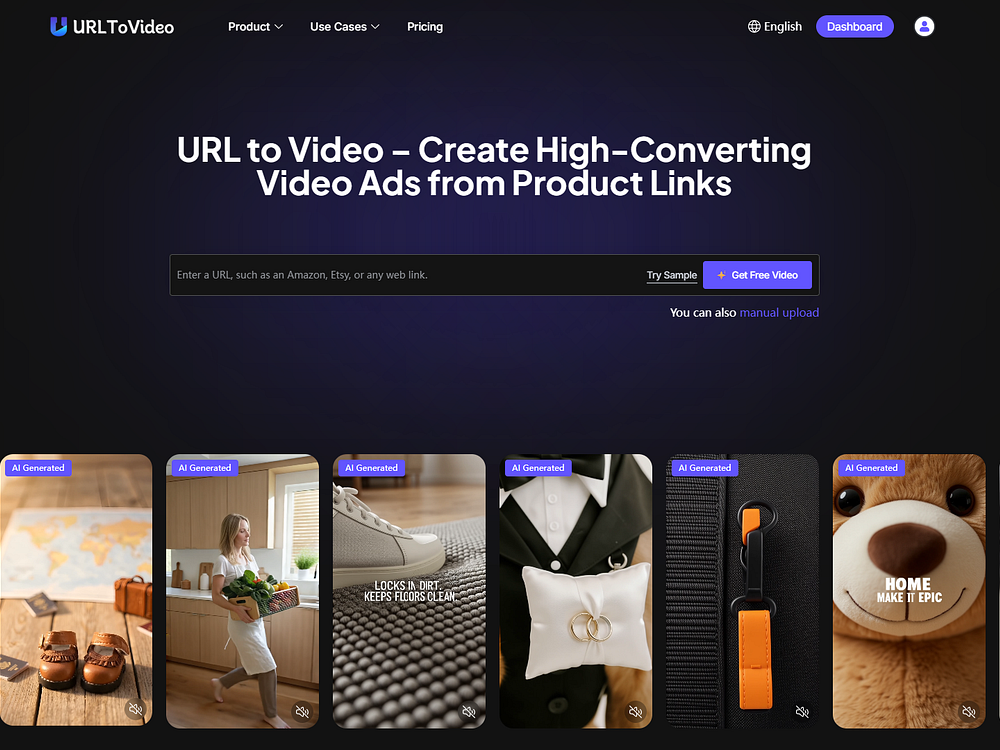

URL to Video converts Shopify, Amazon, TikTok Shop, and Etsy product pages into ready-to-run video ads in minutes. It analyzes a product URL, extracts content, generates scripts, and assembles creatives using proven ad frameworks. You can access a library of winning ads to replicate styles, add UGC-style AI avatars, voice cloning, and text-to-speech, then export platform-ready MP4s. Ecommerce brands, dropshippers, and performance marketers use it to scale variations and launch campaigns faster.

Inquir is a serverless platform to deploy AI agents, REST APIs, cron jobs, and webhooks without managing infrastructure. Write functions in Node.js, Python, or Go, click deploy, and get a live endpoint with an API gateway, schedules, secret management, and observability built in. Hot containers keep frequent routes warm, isolation improves security, and predictable per-invocation pricing helps control cost. Teams can ship AI pipelines, Stripe webhooks, and backend tasks quickly and easily.

Open-source Terminal UI, just record & get exhaustive tests

The AI design agent that works on your canvas

Secret Mode has removed Denuvo from Star Wars Galactic Racer

It looks like Denuvo has been removed from Star Wars Galactic Racer, the upcoming Star Wars-themed high-speed racing experience. All references to the controversial anti-tamper technology have been removed from the game’s Steam page. This suggests that the game’s publisher has decided against using Denuvo. […]

The post Denuvo Removed from Star Wars Galactic Racer ahead of launch appeared first on OC3D.

A Sony spokesperson has finally provided an official response to the PlayStation Online DRM controversy. Gamespot received the following statement after asking for some much-needed clarification: Players can continue to access and play their purchased games as usual. A one-time online check is required to confirm the game's license, after which no further check-ins are required. The controversy began in late April when several PlayStation users noticed a 30-day countdown timer appearing on the license information page of newly purchased digital games on PS4 and PS5. The timer displayed a "Valid Period" start and end date, along with "Remaining Time," […]

Read full article at https://wccftech.com/sony-playstation-drm-official-response-one-time-check/

A French retailer is now selling defective RTX 5090 GPUs starting at €1499, but once purchased, you can't even return them for a refund.

Defective RTX 5090 GPUs Cost Half As Much As A New RTX 5090, But You'll Be Lucky To Get One Running

LDLC, a French retailer, has started selling defective NVIDIA GeForce RTX 5090 GPUs. The term defective could mean a lot of things, but to make things simple, LDLC says that none of the cards work, and it's up to buyers to figure out a way to get them to work. The two variants that have […]

Read full article at https://wccftech.com/french-retailer-lists-defective-nvidia-rtx-5090-gpus-starting-at-e1499-no-refunds/

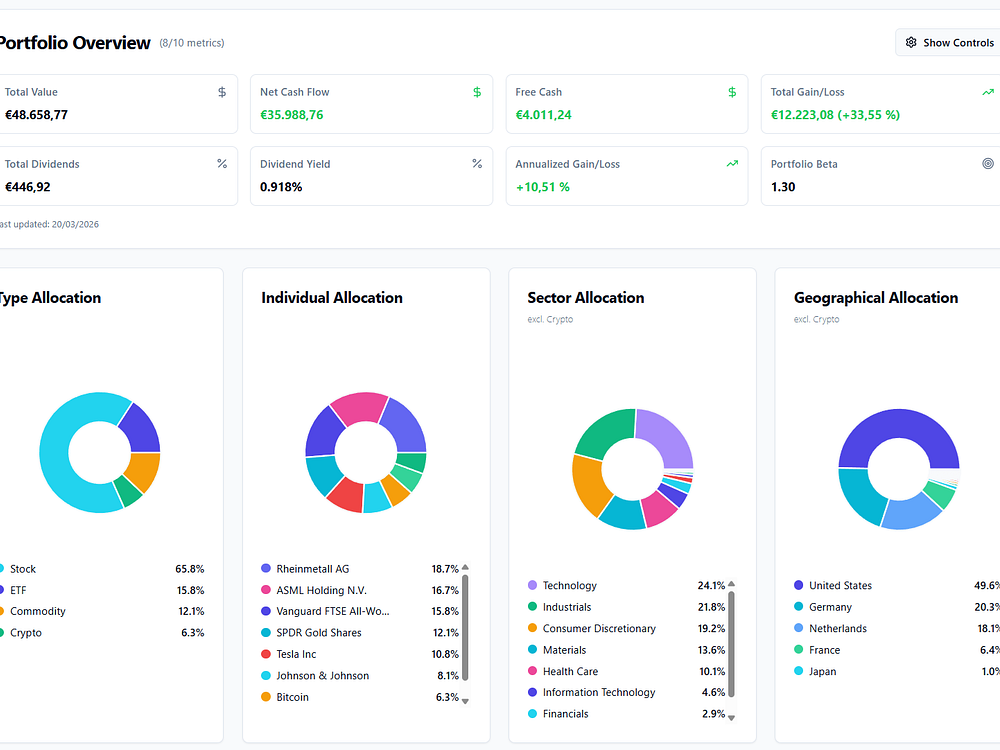

TrackinV helps you track your full investment portfolio across multiple brokers in one dashboard. Import transactions via CSV or manual entry and see time-weighted returns, benchmark comparisons to S&P 500, MSCI World, and European indices, FX impact, and dividend income. Use portfolio analytics to view sector and geographic allocation, top and bottom performers, and realized vs unrealized P/L. The default currency is EUR but it supports any currency worldwide, so you can compare performance and make better decisions faster.

Learn about AI & watch text become tokens in a node graph

Explore the entire universe in your browser, in real 3D

Turn members into a living relationship graph

Business that Runs Itself

A 128B model for coding, reasoning, and long tasks

Autonomous, goal-driven testing for web & mobile apps

Pin, group, and remove apps easily from your dock

The event invite that actually gets people to show up

Extract web data and automate browsers, no scraper required.

Builds entire dashboards from a single prompt

An open-source spec for Codex orchestration

Real profit tracking for WooCommerce + Google Ads.

Your all-in-one video workflow

AI chat and API that keeps your conversations fully private

One API to build production-ready voice agents

Open-source file storage, sharing, collaboration & syncing

Turn Gmail into Google Tasks with AI-powered

Create studio-quality launch videos with AI

Generate production-ready files directly in your chat

Markdown with LaTeX in a modern typesetting system

Turn product releases into feature adoption

Non-invasive AI secretary to help without context switching

Deploy pre-built voice and chat agents for support, sales

A foldable phone built for pen-first productivity

AI-native collaborative editor

AI-assisted music creation with built-in discovery, royalty

Start your business using AI in Claude and Replit

Web and MCP research agents, now in Gemini API

The ongoing DRAM shortages are now apparently compelling Apple to aggressively water down its ambitions for the upcoming A20 chip that is slated to power the base iPhone 18, clearly illustrating that even Apple is not entirely immune to the vagaries of the DRAM market these days.

Apple's upcoming A20 chip is unlikely to leverage the new WMCM packaging tech that unlocks an unprecedented level of versatility

Up until recently, Apple's upcoming A20 chip was expected to make a switch from TSMC's InFO (Integrated Fan-Out) packaging tech, which integrates components like the AP and DRAM onto a single die without […]