Bring back the joy of buying new tech and toys

PixelPanda is an AI photoshoot platform for product photography, UGC marketing videos, and background removal. Upload a product, choose a style or model, and generate listing-ready photos and talking-head videos in seconds. The platform includes AI model generation, background removal, image upscaling, and fashion try-on, plus ad-ready layouts for Amazon, Shopify, and social channels. Over 10,000 e-commerce brands use PixelPanda to create studio-quality assets fast without costly shoots.

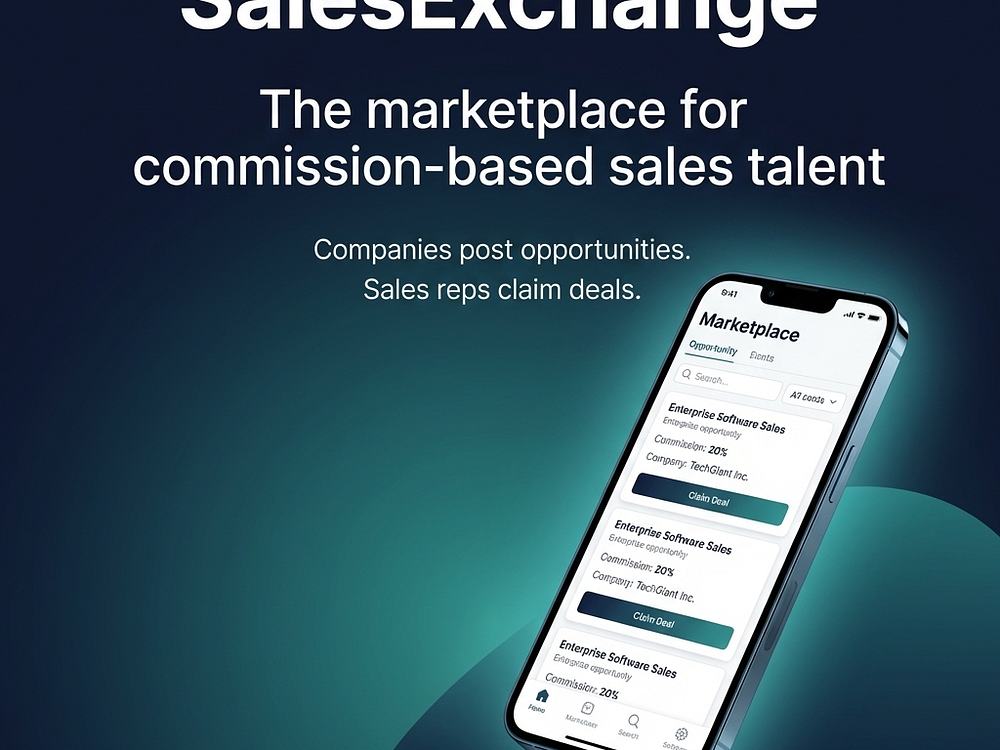

Inside Sales Manager connects companies with motivated commission-based sales reps through real, posted opportunities. Companies post opportunities, set terms, control visibility, and chat with interested reps to expand coverage without adding full-time headcount. Sales reps browse and request opportunities, message companies in-app, track status, and earn referral fees or commissions.

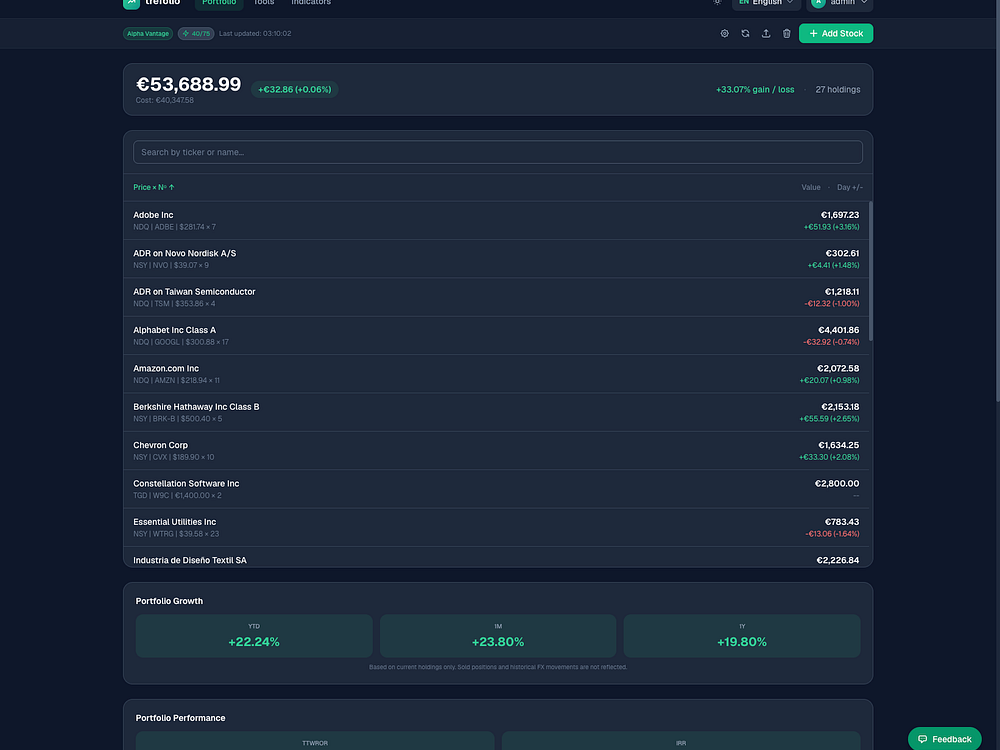

trefolio is a portfolio tracker built for European investors. You can import from DEGIRO, IBKR, Trading 212, or Revolut in one click. It offers real-time quotes, AI stock analysis, dividend projections, and performance metrics in 35 European languages. There is a free tier available and a Pro plan for €4.99/month.

OpenAI’s AI video slop generator is dead – Will OpenAI soon follow?

OpenAI has confirmed that it has discontinued its Sora AI video generation tool and has pivoted away from video generation tools entirely. Now, OpenAI appears to be focusing on other forms of AI. Presumably, this will be forms of AI that weren’t burning […]

The post OpenAI closes Sora AI Video Generator and cancels $1bn Disney partnership appeared first on OC3D.

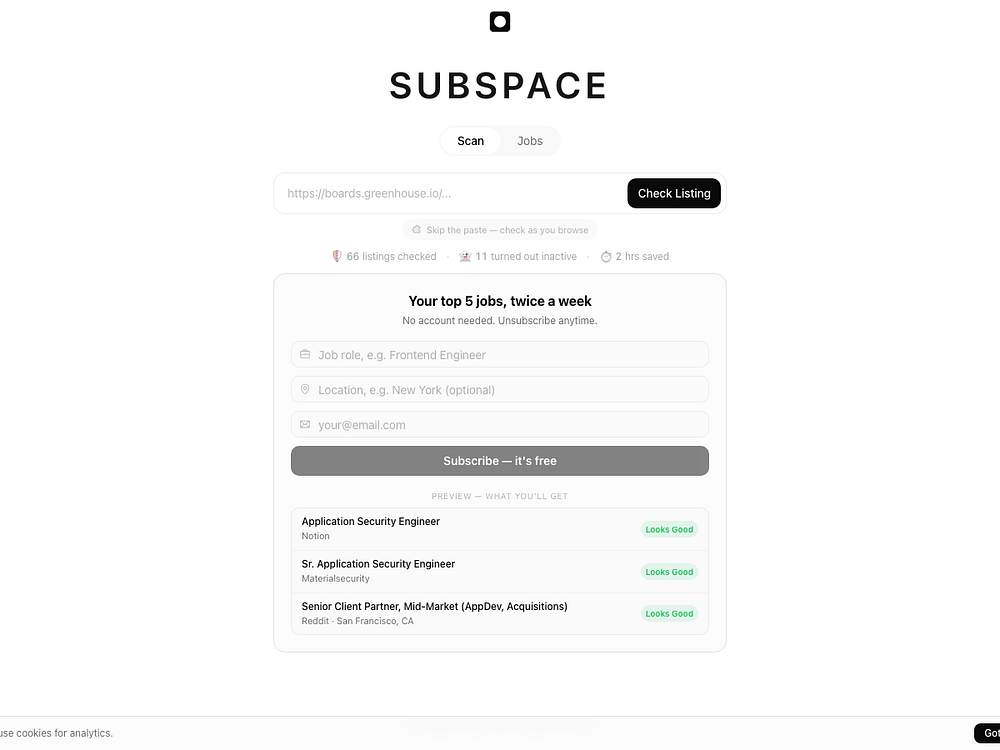

Subspace checks what you can’t see behind job postings so you avoid dead ends. Paste a direct link from a company careers page and it scores the listing for signals like whether it’s still active, salary disclosure, hiring manager, role substance, and employer quality, then returns a clear Job Health Score. Start free with limited checks, or go Pro for unlimited checks, a full seven-category breakdown, and a job board sorted by listings worth applying to.

Google Analytics launched Scenario Planner and Projections to help advertisers forecast performance, optimize budgets, and plan cross-channel media spend more strategically.

The post Google Analytics Launches Scenario Planner and Projections appeared first on Search Engine Journal.

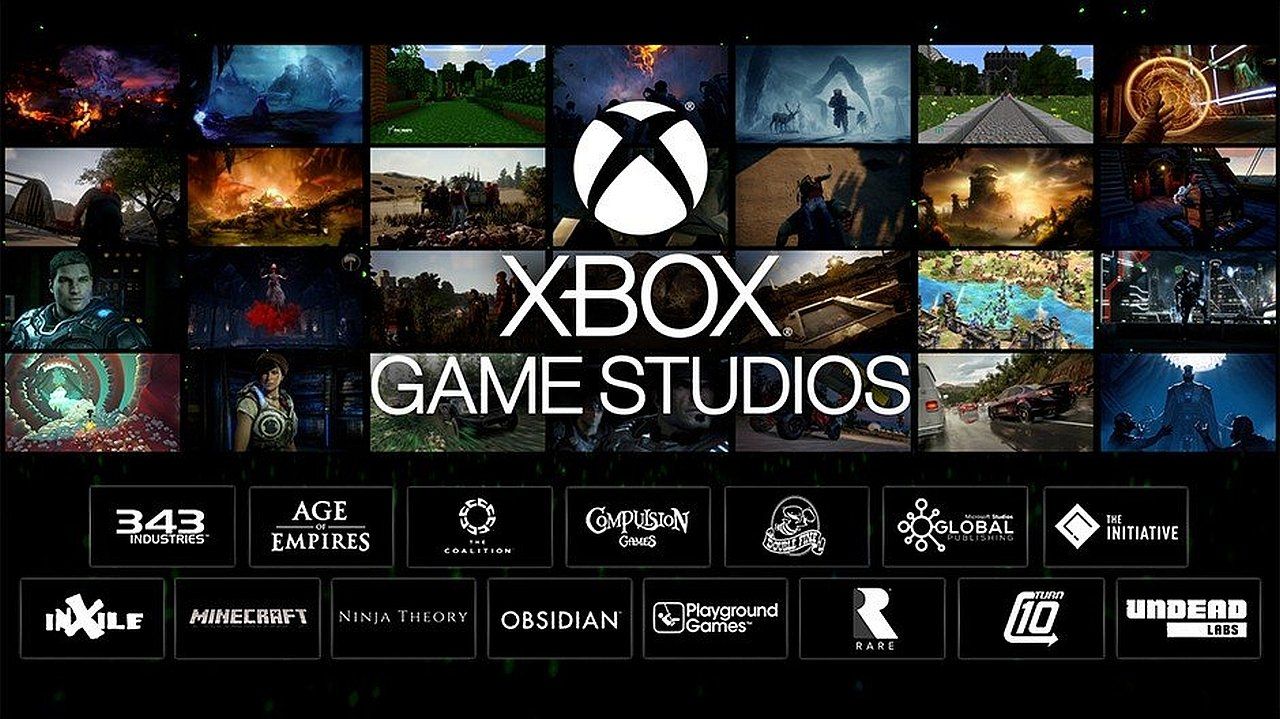

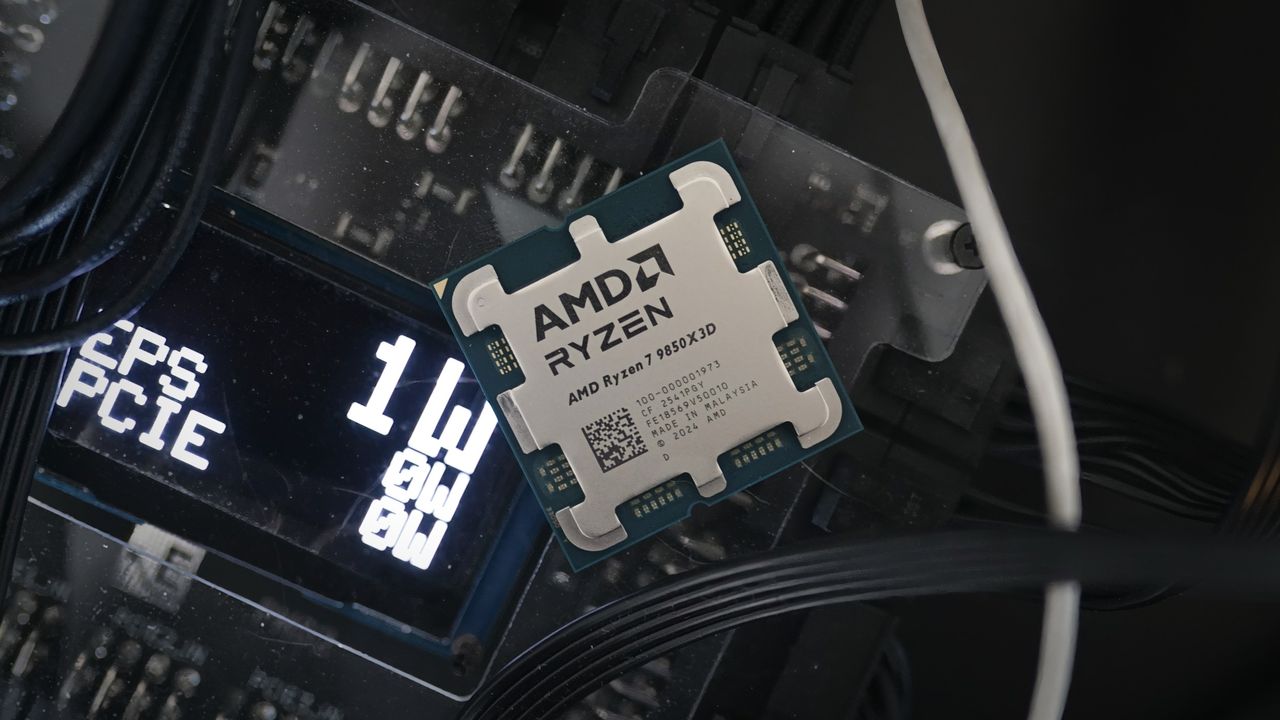

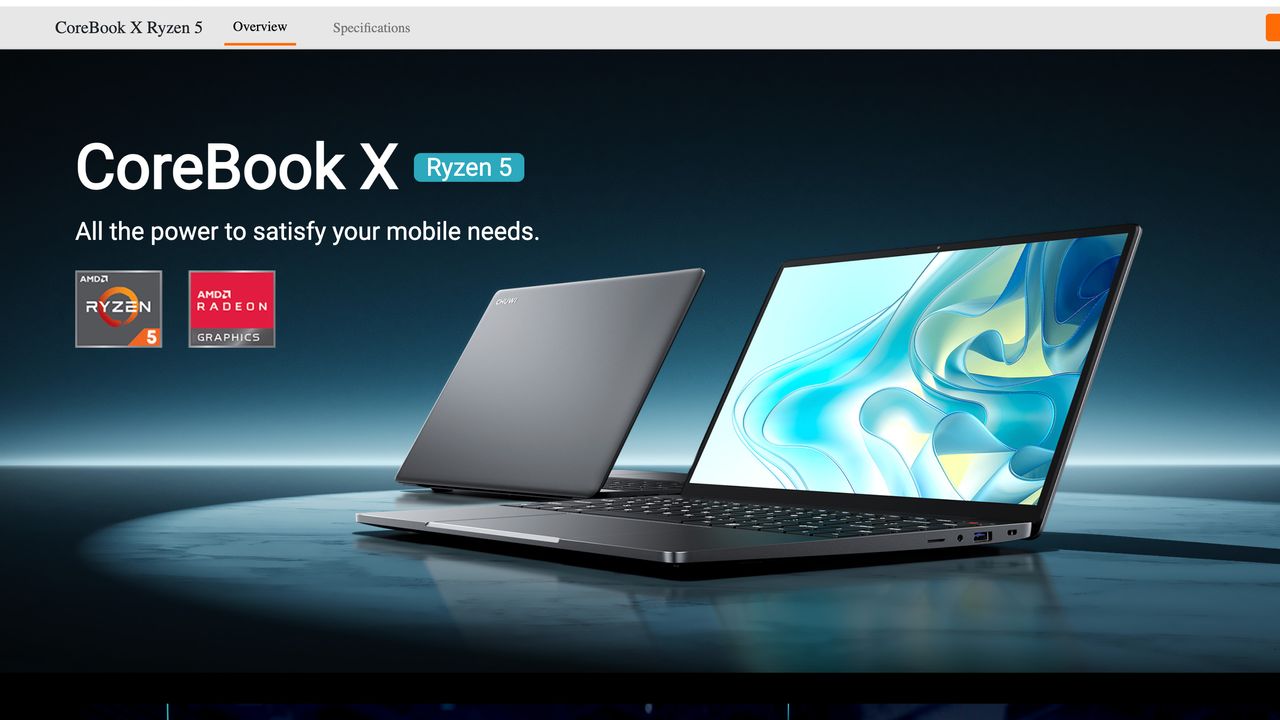

Forza Horizon 6’s system requirements are a breath of fresh air for PC gamers

Playground Games has officially released its PC system requirements for Forza Horizon 6, and it’s great news for PC gamers. On PC, the newest Forza game will be highly scalable, supporting platforms as low-end as Valve’s Steam Deck and ASUS’ entry-level […]

The post Is your PC ready for Forza Horizon 6? PC Requirements Released appeared first on OC3D.

Getly is an independent marketplace for buying and selling digital products like templates, design assets, music, video, courses, and AI prompts. Creators keep 80% per sale, accept Stripe or stablecoins, and deliver instant downloads to buyers. The platform offers creator stores, analytics, marketing automation, bundles, and a Pro subscription with unlimited downloads from a curated catalog. Shoppers can browse thousands of items, filter by price and rating, and checkout securely worldwide.

Feevio turns your voice notes into polished invoices and quotes so you can bill clients before details fade. Speak what you did, who it was for, and time or rates, and it drafts clear line items, handles totals and tax, and applies your branding. Use it on phone or desktop to capture jobs, tidy the draft, and email a professional PDF in minutes. Track revenue and outstanding invoices, keep client records together, and bulk-download PDFs when it’s time to share paperwork.

newnity is a crowdfunding platform built on Base (Coinbase's L2) where creators launch campaigns and backers fund them in USDC. Every campaign uses on-chain escrow with all-or-nothing settlement: if the goal is met, the creator receives the funds. If not, every backer is automatically refunded. No middleman holds your money.

Supporters earn XP for every campaign they back, building reputation across the platform. Creators can run campaigns for games, music, digital art, and more. Transaction fees on Base are fractions of a cent. Currently live on Base Sepolia testnet, with mainnet launching in Summer 2026.

Lyria 3 is now available in paid preview through the Gemini API and for testing in Google AI Studio.

We are bringing Lyria 3 to the tools where professionals work and create every day.

Top tips and best practices for collaborating with Ads Advisor and Analytics Advisor

Once upon a time, in the delightfully chaotic 1990s, web copywriting was all about exact-match keywords and relentless meta tag stuffing. As algorithms matured, so did SEO copywriting.

Now, with proposition-based retrieval systems, writing like you’re in the business of tricking a crawler into seeing relevance through keyword repetition is no longer a viable strategy.

Below is a playbook for generative AI-friendly copywriting, broken down into self-contained, high-density concepts.

Large language models (LLMs) don’t seek less information. They seek higher information density. Google’s Gemini operates on a limited budget of retrieved information, according to research by DEJAN AI, which analyzed over 7,000 queries.

The grounding budget is roughly 1,900 words per query, split across multiple sources. For an individual webpage, your typical allocation is around 380 words. You’re competing for a tiny slice of a fixed pie, so being precise helps the AI’s matching process.
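The arithmetic behind those two figures can be sketched in a few lines. This is only an illustration of the numbers quoted above: the constant and function names are hypothetical, and the word counts are the approximate values from the cited research, not fixed limits.

```python
# Approximate figures quoted in the text; treat both as rough estimates.
GROUNDING_BUDGET_WORDS = 1900  # total words retrieved per query
PER_PAGE_WORDS = 380           # typical share for one webpage


def implied_source_count(budget: int = GROUNDING_BUDGET_WORDS,
                         per_page: int = PER_PAGE_WORDS) -> float:
    """Number of sources implied if every page gets the typical share."""
    return budget / per_page


def fits_allocation(passage: str, per_page: int = PER_PAGE_WORDS) -> bool:
    """True when a passage is no longer than the typical per-page share."""
    return len(passage.split()) <= per_page


print(implied_source_count())  # 1900 / 380
```

Under these assumptions the budget works out to about five sources per query, which is why a passage that runs well past the per-page share is unlikely to be used in full.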

If Schema.org is the external scaffolding of a building, structured language is the load-bearing internal frame. Language itself is the structure we provide machines, such as “semantic triplets” (subject → predicate → object). When a copywriter moves structure inside the language, the sentences become inherently machine-readable.

Google’s passage ranking, AI Overviews, and third-party LLMs like ChatGPT all evaluate content at the passage level using similar retrieval infrastructure. A sentence that works for one works for all of them.

A properly structured sentence fulfills four strict data criteria:

| Feature | The marketing fluff | Structured language (GEO-friendly) |
| --- | --- | --- |
| Example | “Our revolutionary platform makes managing your team easier than ever. It is affordable and comes with great support.” | “The Asana Enterprise Plan [Entity] streamlines [Relationship] cross-functional project tracking [Specifics] for teams over 100 people [Condition], starting at $24.99 per user [Data].” |
| Machine utility | Low (Vague, hard to extract) | High (Decomposable into atomic claims) |
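One way to picture "decomposable into atomic claims" is to hold the labeled parts of the example sentence as separate fields. The sketch below is an assumption-laden illustration: the `StructuredClaim` class and its field names are hypothetical and simply mirror the bracketed labels in the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StructuredClaim:
    """Hypothetical container mirroring the labels in the table above."""
    entity: str
    relationship: str
    specifics: str
    condition: str
    data: str

    def to_sentence(self) -> str:
        # Reassemble the parts into one self-contained sentence.
        return (f"{self.entity} {self.relationship} {self.specifics} "
                f"{self.condition}, {self.data}.")

    def triplet(self) -> tuple:
        # Subject -> predicate -> object, the "semantic triplet" form.
        return (self.entity, self.relationship, self.specifics)


claim = StructuredClaim(
    entity="The Asana Enterprise Plan",
    relationship="streamlines",
    specifics="cross-functional project tracking",
    condition="for teams over 100 people",
    data="starting at $24.99 per user",
)
print(claim.to_sentence())
print(claim.triplet())
```

The vague sentence in the first column cannot be filled into these fields at all, which is a quick way to spot copy that gives a retrieval system nothing to extract.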

Traditional copywriting flows like a row of dominoes. When an AI “chunks” your page, it snaps those dominoes apart. If your sentences aren’t load-bearing on their own, the logic collapses.

Ensure every single sentence explicitly names its subject. Vague pronouns like “this,” “it,” or “the above” become dead bits when extracted.

Keyword stuffing introduces inference errors. Effective structured language explicitly states the relationship between nodes.

Provide anchorable statements instead of fluff: dense passages equipped with clear claims and specific evidence.

The gold standard example:

Research shows LLMs reliably extract claims near the beginning or end of a text. Adding more content often dilutes your coverage.

Here’s the four-step formula for citation bait.

Clear headings above a paragraph can improve its mathematical relevance (cosine similarity) to AI systems by up to 17.54%.
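For readers unfamiliar with cosine similarity, here is a minimal sketch of the calculation. It uses simple word counts as vectors, which is an assumption made to keep the example self-contained. Real retrieval systems use learned embeddings, so this does not reproduce the 17.54% figure; it only shows why a heading that shares terms with the query pulls the score up.

```python
import math
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Very rough stand-in for an embedding: lowercase word counts."""
    return Counter(text.lower().split())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical query, heading, and paragraph for illustration only.
query = bag_of_words("asana enterprise plan pricing")
body = "the plan starts at $24.99 per user"
heading = "asana enterprise plan pricing"

without_heading = cosine_similarity(query, bag_of_words(body))
with_heading = cosine_similarity(query, bag_of_words(heading + " " + body))
print(without_heading < with_heading)
```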

Developed by Ramon Eijkemans, this scoring system measures the likelihood of content being cited:

Here’s a table of the most common pitfalls when it comes to extractability:

| Pattern | Example | Problem |
| --- | --- | --- |
| Unresolved pronoun (what?) | “It features a 120Hz display” | What device? |
| Vague demonstrative (what + what?) | “This gives it an advantage” | What gives what an advantage? |
| Context-dependent (which?) | “The above specs outperform the competition” | Which specs? Which competition? |
| Stripped conditions (when? how much?) | “The price has dropped significantly” | From what? To what? When? |
| Assumed knowledge (what? who?) | “The popular supplement helps with recovery” | Which supplement? Recovery from what? |
| Relative claim (how much? compared to what?) | “Our fastest-selling product” | How fast? Compared to what? Over what period? |

To ensure your high-value pages are programmatically extractable, run these four stress tests on your mid-page copy.

The action: Select a single sentence completely at random from the middle of a webpage and read it in total isolation.

The goal: If the sentence relies on preceding paragraphs to make sense or uses vague pronouns (e.g., “This allows for…”), the page has a utility gap. Every sentence should be self-contained.
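A rough version of this isolation check can be automated. The sketch below flags sentences that open with a vague pronoun or demonstrative. The opener list and the sentence splitter are simplifying assumptions, the function names are hypothetical, and the product name in the sample is made up.

```python
import re

# Assumed list of openers that usually depend on earlier context.
VAGUE_OPENERS = ("this", "it", "that", "these", "those", "they", "the above")


def split_sentences(text: str) -> list:
    """Naive splitter: break after ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def flag_vague_openers(text: str) -> list:
    """Return the sentences that start with one of the vague openers."""
    flagged = []
    for sentence in split_sentences(text):
        lowered = sentence.lower()
        if any(re.match(rf"{re.escape(opener)}\b", lowered)
               for opener in VAGUE_OPENERS):
            flagged.append(sentence)
    return flagged


sample = ("The ExamplePhone X has a 120Hz display. "
          "It features a 120Hz display. "
          "This gives it an advantage.")
print(flag_vague_openers(sample))
```

The check only looks at the first word or two, so it will miss pronouns buried mid-sentence. It is a first pass before a human reads the flagged lines in isolation.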

The action: Scroll down twice on a homepage so the hero banner and primary H1 disappear, then start reading from wherever your eyes land.

The goal: If a reader (or a machine “chunking” that section) can’t immediately identify the product or service without the top visual layout, the mid-page text fails the context test.

The action: Read a mid-page sentence out loud and ask: Could this apply to the deforestation of the Amazon or a steamy romance novel?

The goal: If a sentence is wildly generic (e.g., “We empower our clients to achieve more”), an LLM will struggle to map it to your specific entity. Specifics prevent misinterpretation.

The action: Run the live URL through an LLM agent or NotebookLM.

The goal: If convoluted JavaScript, heavy code bloat, or aggressive bot protection prevents an agent from “seeing” the raw text, generative search engines may skip the content entirely.
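A lightweight local approximation of this test is to look at what text exists in the raw HTML before any JavaScript runs. The sketch below uses Python's standard `html.parser`. The class and function names are hypothetical, and it assumes you have already saved the page source as a string. It does not emulate any particular crawler or agent.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text nodes, ignoring script, style, and template content."""

    SKIPPED = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(raw_html: str) -> str:
    """Text a non-rendering client could read from the raw markup."""
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    return " ".join(parser.chunks)


# A page that renders everything client-side exposes no text at all.
js_only = '<div id="root"></div><script>render("Pricing starts at $10")</script>'
static = "<h1>Acme Plan</h1><p>Costs $10.</p>"
print(repr(visible_text(js_only)))
print(visible_text(static))
```

If the first call comes back empty for a real page, the copy likely depends on client-side rendering, which is the situation this stress test is meant to catch.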

Here are answers to common questions about optimizing content for AI search.

Yes. Formalized by researchers at the University of Washington and Columbia, it focuses on optimizing for “citation frequency” through dense, condition-preserving sentences.

Traditional SEO relies on bolt-on machine-readable code to make human narratives SEO-worthy. AI search optimization requires embedding explicit entity relationships and structure directly inside your copy.

Open with a dense 40-60-word declarative statement. Information buried deep in long paragraphs is rarely retrieved.

Yes. Because Google uses vector embeddings to evaluate content at the passage level, structuring language for an LLM improves traditional visibility.

No. Density beats length. Pages under 5,000 characters see a 66% extraction rate, while pages over 20,000 characters plummet to 12%.

The AI inverted pyramid means abandoning the slow, conversational introduction and placing your core entities, exact claims, and specific conditions in the very first sentence to guarantee flawless machine extraction.

The content creator is now a machine-readability engineer. Our job is to build narratives that are persuasive to humans while being programmatically extractable for neural networks.

If your content lacks explicit entity relationships, perfectly self-contained sentences, and highly “anchorable” citable claims, the machines will simply look right through you.

Google released the March 2026 spam update less than 24 hours ago and it is already done rolling out. The update finished today at 10:40 a.m. ET.

Why we care. This is the second Google algorithm update announced in 2026. It’s unclear what spam it targeted, but if you see ranking or traffic changes in the next few days, the Google March 2026 spam update could be the cause.

More on spam update. Google’s documentation says:

“While Google’s automated systems to detect search spam are constantly operating, we occasionally make notable improvements to how they work. When we do, we refer to this as a spam update and share when they happen on our list of Google Search ranking updates.

For example, SpamBrain is our AI-based spam-prevention system. From time-to-time, we improve that system to make it better at spotting spam and to help ensure it catches new types of spam.

Sites that see a change after a spam update should review our spam policies to ensure they are complying with those. Sites that violate our policies may rank lower in results or not appear in results at all. Making changes may help a site improve if our automated systems learn over a period of months that the site complies with our spam policies.

In the case of a link spam update (an update that specifically deals with link spam), making changes might not generate an improvement. This is because when our systems remove the effects spammy links may have, any ranking benefit the links may have previously generated for your site is lost. Any potential ranking benefits generated by those links cannot be regained.”

Impact. This update should only impact sites spamming Google Search, so hopefully you didn’t see any major negative impact.

Kali Linux 2026.1 lands with a fresh look, a Linux 6.18 kernel, and a batch of new pentesting tools – but the standout is a nostalgic BackTrack mode that recreates the classic desktop for longtime users. It's a relatively light release, yet one that blends practical updates with a throwback twist security pros seem to appreciate.

An overview of how Google is accelerating its timeline for post-quantum cryptography migration.

Influencer content isn’t just a brand awareness play. It’s showing up in Google SERPs, Google AI Overviews, and AI answers, making keyword strategy an essential part of every influencer brief.

When we brief an influencer, we assign them a keyword. Not as a nice-to-have, but as a required part of the strategy, usually woven into the script, the caption, the on-screen text, and the hashtags.

That might sound like an SEO team overreaching into an influencer team’s lane. But in 2026, the lane lines don’t exist.

Social content is search inventory. If your influencer marketing program isn’t built around that reality, you’re leaving a significant and measurable share of voice on the table.

For most of search’s history, optimization meant ranking on Google. That’s still important, but it’s no longer the full story.

Today, nearly half of U.S. consumers (49%) use TikTok as a search engine. Gen Z may lead that adoption, but it cuts across generations.

Over a third of consumers now prefer to start their search journey with AI tools like ChatGPT over Google. Platforms like YouTube, Instagram, and Pinterest have also become primary discovery engines for product research, how-to queries, and purchase decisions.

This is what search everywhere may look like in practice:

Each of these touchpoints is a search moment, and there’s a strong chance they involve influencer content. The brands showing up at every step are the ones treating influencer marketing content as search content from the beginning.

Ross Simmonds, CEO of Foundation Marketing, shared with me:

Dig deeper: Why creator-led content marketing is the new standard in search

This is where things get concrete.

Google’s What people are saying SERP feature is a carousel that appears directly in search results and surfaces user-generated and creator content from platforms like YouTube, TikTok, LinkedIn, Instagram, and Reddit for relevant queries.

It’s now a default feature in U.S. search results and consistently shows up for mid- to bottom-of-funnel keywords, exactly where purchase decisions are made. A brand can appear in this SERP feature (either directly or indirectly via an influencer) without ranking in the traditional Top 10 results.

Additionally, the Short videos SERP feature is another prime spot for your influencer content to take up shelf space on Google. This means an influencer video optimized with the right SEO keyword can surface in multiple spots on Google for a commercial query your brand’s own site might never rank for.

It’s not theoretical. It’s happening now.

Meanwhile, AI answers are pulling from social content at scale. An analysis of 40 million AI search results found Reddit to be the single most-cited domain across ChatGPT, Copilot, and Perplexity. Ahrefs research confirms that YouTube mentions and branded web mentions are among the top factors correlating with AI brand visibility in ChatGPT, AI Mode, and AI Overviews.

Samanyou Garg, CEO of Writesonic, shared with me:

The more creators talk about your product with consistent language, the more confident AI becomes in recommending you. So if your influencer content doesn’t contain the SEO keywords your audience is actually searching for, it won’t be surfaced in all the places that matter.

Dig deeper: Short-form, big impact: What creators can teach performance marketers

Keyword research should be a standard step in every influencer campaign. Start by identifying your target keyword from data across three sources:

Once the keyword is identified, embed it into every element of the creator’s content:

Don’t confuse this with keyword stuffing. It’s modern content architecture.

There’s a big difference between a creator naturally saying, “If you’re searching for the best running shoes right now…” versus a brand clunkily forcing a phrase into otherwise natural content. The influencer brief sets the requirement, yes, but the creator’s job is to incorporate their unique voice.
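A quick way to audit a draft against the brief is to check each content element for the target keyword. The sketch below is a minimal illustration: the field names follow the elements mentioned earlier (script, caption, on-screen text, hashtags), while the function name and sample content are hypothetical.

```python
def keyword_coverage(keyword: str, content: dict) -> dict:
    """Map each content element to whether it contains the keyword.

    Spaces are ignored so "#bestrunningshoes" counts as a match for
    "best running shoes". This can over-match across word boundaries,
    so treat a True as a prompt for a human check, not a guarantee.
    """
    compact_keyword = keyword.lower().replace(" ", "")
    return {
        field: compact_keyword in text.lower().replace(" ", "")
        for field, text in content.items()
    }


draft = {
    "script": "If you're searching for the best running shoes right now, start here.",
    "caption": "My picks for fall",
    "on_screen_text": "Best Running Shoes 2026",
    "hashtags": "#bestrunningshoes #running",
}
print(keyword_coverage("best running shoes", draft))
```

Here the caption is the only element missing the phrase, which is the kind of gap worth flagging to the creator before the post goes live.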

Ashley Liddell, co-founder and Search Everywhere director at Deviation, shared:

Once the content is live, track whether the creator’s post is surfacing for the target keyword across:

Screenshot and log positions immediately (because rankings can quickly shift). This data tells a story clients aren’t used to seeing from an influencer program.

There’s a reason this matters beyond any individual campaign. Google organic CTRs have declined dramatically, by as much as 61% on queries where AI Overviews appear.

With Google SERP features increasingly highlighting video and social content, traditional web content is losing surface area on the SERPs. Social content, conversely, is gaining traction, and we cannot ignore this.

For brands, influencer content has taken on a much stronger value: scalable, authentic, human-first search inventory distributed across platforms where their audiences spend time. It doesn’t replace a traditional SEO program, but it extends reach into channels where creator voices tend to outperform brand-owned content.

Younger audiences search socially first. In some categories, a meaningful share of consideration-stage audiences see creator content before they ever search for your brand. If your influencers don’t use the language your audience searches, you’re invisible in the moments that matter most.

Search everywhere optimization comes down to one thing: showing up where your audience actually searches with content worth stopping for.

Dig deeper: Why social search visibility is the next evolution of discoverability

The biggest barrier to building keyword optimization into influencer programs is structural. SEO and influencer teams often sit within different parts of an organization, owned by different teams with different KPIs, and little reason to collaborate.

Even when those teams are close, a common hesitation remains: adding a keyword requirement to a creator brief may make the content feel scripted or inauthentic. That concern is valid, but somewhat misplaced. A keyword isn’t a constraint on creativity — it’s a topic signal.

Creators integrate talking points, product messaging, and brand language into their content all the time. A search term is no different, as long as the brief gives them room to use it in their own voice.

Closing that gap requires a few concrete changes.

Influencer content has always shaped brand perception. Today, it also shapes search visibility across social platforms, Google’s evolving SERP features, and AI-generated answers.

Brands that recognize this apply a search strategy to a channel that, until recently, operated without it. You treat every influencer video as search content — briefing keywords and reporting on search performance as you would for other organic channels.

Influencer content is search inventory. The only question is whether you’re optimizing it.

Does schema markup really benefit AI search optimization? Some suggest it can 3x your citations or dramatically boost AI visibility. But when you dig into the evidence, the picture is far more nuanced.

Let’s separate what’s known from what’s assumed, and look at how schema actually fits into an AI search strategy.

Search is shifting from surfacing a SERP with blue links to AI Overviews, generative answers, and chat‑style summaries that collate content in addition to links.

To get your content to appear in this model, your site has to be understood as entities — singular, unique things or concepts, such as a person, place, or event — and the relationships between them, not just strings of text.

Schema markup is one of the few tools SEOs have to make those entities and relationships explicit and understandable for an AI: This is a person, they work for this organization, this product is offered at this price, this article is authored by that person, etc.

For AI, three elements matter the most:

offeredBy, worksFor, authoredBy, and sameAs schema tags).

When schema is implemented with stable values (@id) and a structure (@graph), it starts to behave like a small internal knowledge graph.

AI systems won’t have to guess who you are and how your content fits together, and will be able to follow explicit connections between your brand, your authors, and your topics.

Dig deeper: Why entity authority is the foundation of AI search visibility

Two major platforms have confirmed that schema markup helps their AIs understand content. For these platforms, it is confirmed infrastructure, not speculation.

We don’t know how these platforms use schema yet. They haven’t publicly confirmed whether they preserve schema during web crawling or use it for extraction. The technical capability exists for LLMs to process structured data, but that doesn’t mean their search systems do.

Dig deeper: When and how to use knowledge graphs and entities for SEO

Here are a few studies that show how schema can benefit AI search.

A December 2024 study from Search/Atlas found no correlation between schema markup coverage and citation rates. Sites with comprehensive schema didn’t consistently outperform sites with minimal or no schema markup.

This doesn’t mean schema is useless. It means schema alone doesn’t drive citations. LLM systems appear to prioritize relevance, topical authority, and semantic clarity over whether content has structured markup.

A February 2024 Nature Communications study found that LLMs extract information more accurately when given structured prompts with defined fields versus unstructured “extract what matters” instructions.

Put differently, LLMs perform best when you give them a structured form to fill out, not a blank canvas. When models are asked to extract into predefined fields, they make fewer errors than when told to simply “pull out what matters.”

Schema markup on a page is the web equivalent of that form: a set of explicit entity, brand, product, price, author, and topic fields that a system can map to, rather than inferring everything from unstructured prose.

This tells us that LLMs have the technical capability to process structured data more accurately than unstructured text.

However, this doesn’t tell us whether AI search systems preserve schema markup during web crawling, whether they use it to guide extraction from web pages, or whether this results in better visibility.

The leap from “LLMs can process structured data” to “web schema markup improves AI search visibility” requires assumptions we can’t verify for most platforms.

For Microsoft Bing and Google AI Overviews, schema likely improves extraction accuracy, since they’ve confirmed they use it. For other platforms, we don’t have confirmation of actual implementation.

Dig deeper: Entity-first SEO: How to align content with Google’s Knowledge Graph

AI search is so new — for example, ChatGPT search only launched in October 2024 — that companies haven’t disclosed their indexing methods. Measurement is difficult with non-deterministic AI responses. There are significant gaps in what we can verify.

To date, there are no peer-reviewed studies on schema’s impact on AI search visibility, or controlled experiments on LLM citation behavior and schema markup.

OpenAI, Anthropic, Perplexity, and other platforms besides Microsoft or Google haven’t published their indexing methods.

This gap exists because AI search is genuinely new (ChatGPT search launched in October 2024), companies don’t disclose indexing methods, and measurement is difficult with non-deterministic AI responses.

In traditional SEO, many implementations stop at adding Article or Organization markup in isolation. For AI search, the more useful pattern is to connect nodes into a coherent graph using @id. For example:

- Organization node with a stable @id that represents your brand.
- Person node for the author who works for your organization.
- Article node whose author is that person and whose publisher is that organization, with about properties that declare the main topics.

```json
{
  "@context": "https://schema.org",
  "@graph": [
    {
      "@id": "https://example.com/#organization",
      "@type": "Organization",
      "name": "Example Digital"
    },
    {
      "@id": "https://example.com/#person-jane-doe",
      "@type": "Person",
      "name": "Jane Doe",
      "worksFor": { "@id": "https://example.com/#organization" }
    },
    {
      "@type": "Article",
      "@id": "https://example.com/blog/schema-markup-ai-search",
      "headline": "Schema Markup for AI Search",
      "author": { "@id": "https://example.com/#person-jane-doe" },
      "publisher": { "@id": "https://example.com/#organization" }
    }
  ]
}
```

That connected pattern turns your schema from a set of disconnected hints into a reusable entity graph. For any AI system that preserves the JSON‑LD, it becomes much clearer which brand owns the content, which human is responsible for it, and what high‑level topics it is about, regardless of how the page layout or copy changes over time.
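Because the graph only holds together if every @id reference points at a node that exists, a small consistency check can be useful before publishing. The sketch below is one possible approach, not a standard validator: the function name is hypothetical, and it only treats objects of the form `{"@id": "..."}` as references.

```python
import json

JSON_LD = """
{
  "@context": "https://schema.org",
  "@graph": [
    {"@id": "https://example.com/#organization", "@type": "Organization",
     "name": "Example Digital"},
    {"@id": "https://example.com/#person-jane-doe", "@type": "Person",
     "name": "Jane Doe",
     "worksFor": {"@id": "https://example.com/#organization"}},
    {"@type": "Article",
     "@id": "https://example.com/blog/schema-markup-ai-search",
     "headline": "Schema Markup for AI Search",
     "author": {"@id": "https://example.com/#person-jane-doe"},
     "publisher": {"@id": "https://example.com/#organization"}}
  ]
}
"""


def unresolved_references(document: dict) -> set:
    """Return referenced @id values that no node in @graph defines."""
    nodes = document.get("@graph", [])
    defined = {node["@id"] for node in nodes if "@id" in node}
    referenced = set()

    def collect(value, is_graph_node):
        if isinstance(value, dict):
            # A nested object with only "@id" is a pointer to another node.
            if not is_graph_node and set(value) == {"@id"}:
                referenced.add(value["@id"])
            for child in value.values():
                collect(child, False)
        elif isinstance(value, list):
            for item in value:
                collect(item, False)

    for node in nodes:
        collect(node, True)
    return referenced - defined


doc = json.loads(JSON_LD)
print(unresolved_references(doc))  # empty set when every reference resolves
```

Running the same check across templates is one way to enforce the site-wide @id consistency that this pattern depends on.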

| Aspect | Traditional SEO schema | Entity graph schema |
| --- | --- | --- |
| Structure | Single @type object per page | @graph array of interconnected nodes |
| Entity ID | None (anonymous) | Stable @id URLs for reuse across site |
| Relationships | Nested, one‑way (author: “name”) | Bidirectional via @id refs (worksFor, authoredBy) |
| Primary benefit | Rich snippets, SERP CTR | Entity disambiguation, extraction accuracy for AI |
| AI impact | Minimal (tokenization often strips) | Makes site a unified knowledge graph source if preserved |
| Implementation | Easy, page‑by‑page | Requires site‑wide @id consistency |

Dig deeper: How structured data supports local visibility across Google and AI

For AI search, the best way to position schema right now is to:

Use schema markup for:

However, don’t expect:

Priority schema types (based on platform guidance) include:

- Organization (brand entity identity).
- Article or BlogPosting (content attribution and authorship).
- Person (author authority and entity connections).
- Product or Service (commercial entity clarity).
- FAQPage (Q&A content formats).

Dig deeper: The entity home: The page that shapes how search, AI, and users see your brand

Schema markup is infrastructure, not a magic bullet. It won’t necessarily get you cited more, but it’s one of the few things you can control that platforms such as Bing and Google AI Overviews explicitly use.

The real opportunity isn’t schema in isolation. It’s the combination of structured data with proper entity relationships, high-quality, topically authoritative content, clear entity identity and brand signals, and the strategic use of @graph and @id to build entity connections.

Big Battlemage arrives with Intel’s ARC Pro B70 and B65 graphics cards

Intel has officially launched its first “Big Battlemage” graphics cards, new, higher-end Xe2 discrete GPUs that stand above Intel’s prior products. The Intel ARC Pro B70 will become available today, March 25th, with pricing starting at $949, while the ARC Pro B65 will […]

The post Intel officially launch their ARC PRO B70 and B65 GPUs appeared first on OC3D.

Expect fewer upsells in the future from Windows 11

Big changes are coming to Windows 11, as Microsoft appears to be finally taking feedback seriously. Microsoft has confirmed that it plans to make Windows 11 more performant and reliable. Additionally, Microsoft appears to be looking into removing mandatory logins from the OS, freeing PC users […]

The post Windows 11 to become “calmer and more chill” OS with “fewer upsells” appeared first on OC3D.

Minecraft's latest "Tiny Takeover" update gives baby mobs a full glow-up, with new models, sounds, and even a Golden Dandelion item that lets you keep them small a little longer. It's a lighter, charm-focused drop, but one players are already embracing for how much personality it adds to everyday gameplay.

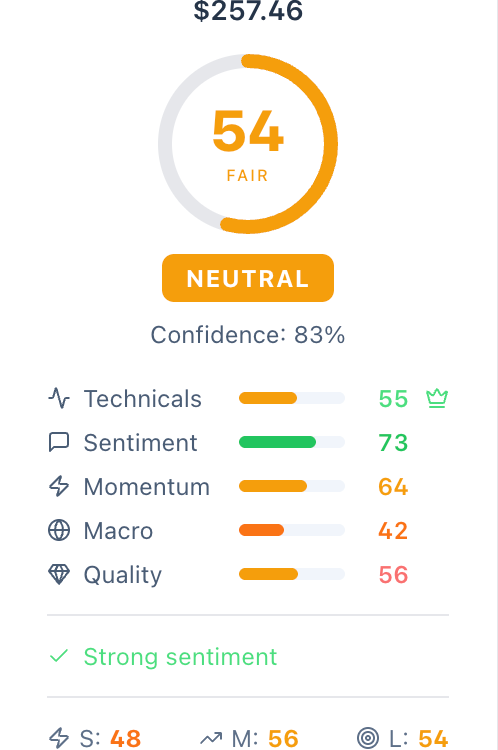

TradeMatrix scores every stock from 0 to 100 using 25 indicators organized into five factors: Technicals, Sentiment, Momentum, Macro, and Quality. Each stock gets three separate scores: short-term, mid-term, and long-term, as different factors matter at different horizons.

Short-term scores weight technicals at 40%, while long-term scores weight business quality at 60%. The same stock can be a Buy for a swing trader and a Hold for a long-term investor, and you can see exactly why. We cover the S&P 500 and NIFTY 500 (Indian market) with full factor breakdowns showing exactly which indicators drive each score. Currently in beta and seeking feedback from active investors.
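To make the horizon idea concrete, here is a hedged sketch of a weighted factor score. Only two weights come from the description above (technicals at 40% for short-term, quality at 60% for long-term). Every other weight, the factor scores, and all names are placeholders invented for illustration, and this is not TradeMatrix's actual formula.

```python
FACTORS = ("technicals", "sentiment", "momentum", "macro", "quality")

# Only technicals=0.40 (short-term) and quality=0.60 (long-term) are from
# the text. The remaining weights are assumed so each row sums to 1.0.
WEIGHTS = {
    "short_term": {"technicals": 0.40, "sentiment": 0.20, "momentum": 0.20,
                   "macro": 0.10, "quality": 0.10},
    "long_term": {"technicals": 0.10, "sentiment": 0.10, "momentum": 0.10,
                  "macro": 0.10, "quality": 0.60},
}


def horizon_score(factor_scores: dict, horizon: str) -> float:
    """Weighted average of 0-100 factor scores for one time horizon."""
    weights = WEIGHTS[horizon]
    return sum(weights[factor] * factor_scores[factor] for factor in FACTORS)


# Hypothetical stock: strong chart, weak business quality.
example = {"technicals": 90, "sentiment": 70, "momentum": 80,
           "macro": 50, "quality": 40}
print(round(horizon_score(example, "short_term"), 2))
print(round(horizon_score(example, "long_term"), 2))
```

With these made-up inputs the same stock scores noticeably higher on the short-term horizon than the long-term one, which matches the point that one ticker can look attractive to a swing trader and unremarkable to a long-term holder.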

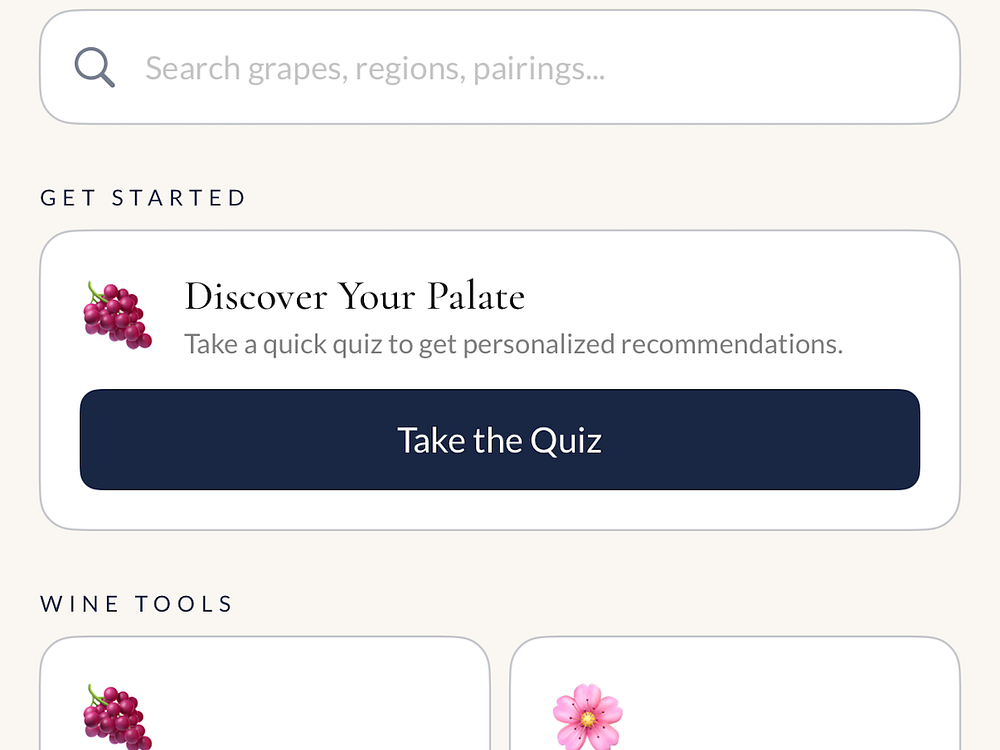

Anchored Vines offers wine education, reviews, and consulting for curious drinkers and wineries. Explore interactive resources like the Periodic Table of Wine, regions map, food pairing guides, aroma wheel, and grape encyclopedia, plus blogs and travel itineraries. You can book personalized consulting to build tasting confidence or get winery support, and use the companion iOS app to learn on the go.

We’re breaking down the steps — from lead scoring to data hygiene — to enable your campaigns to deliver high quality lead volume.

You know the feeling.

You launch a new TikTok ad. Early metrics look great — low CPCs, high engagement, and a ROAS that makes you look like a pro. Then, a few days later, performance slips.

Ad frequency creeps up, the hook rate drops, and you’re suddenly back at the drawing board.

Some call it creative fatigue. On TikTok, it’s closer to creative exhaustion.

A TikTok ad’s “half-life” is shorter than on any other platform. If you’re still treating it like a Meta ad campaign, you’ll lose.

To win, treat creative like a supply chain, not a campaign asset.

On intent-based platforms like Google, Amazon, or Pinterest, people search for things. On social platforms, people look for family, friends, and other people. On TikTok, above all, people go for entertainment (though they still discover things and people).

TikTok’s algorithm favors variety, and you consume content at lightning speed. The moment something feels repetitive or stale, you swipe.

Your creative decays faster because the platform runs on high-velocity novelty. You’re competing with thousands of creators and brands.

If your process relies on long feedback loops — from storyboarding to shooting to editing — you’ll fall behind. By the time your ad goes live, the trend has shifted, the audio is dated, the hooks are stale, and your audience has moved on.

To keep up, treat your creative like a fast supply chain:

Use ongoing content capture to avoid bottlenecks and keep up with TikTok’s shrinking content half-life.

Dig deeper: Cross-platform, not copy-paste: Smarter Meta, TikTok, and Pinterest ad creative

Every high-performing TikTok ad can be broken down into three distinct modules.

The hook is the most volatile part. It stops the scroll and fatigues fastest.

Film 5–7 variations for each concept. Use pattern interrupts—start mid-action, zoom in, throw a box. Try a negative constraint: “Stop doing [common mistake] if you want [result].”

Use green screen reactions with trending news or customer reviews as the backdrop, with your commentary over it. Strong statements and questions keep it open-ended.

The body is where you retain attention, deliver value, and show the “why” or “how.” It’s more educational or narrative and lasts longer than the hook.

Test “us vs. them” in a split-screen showing your product solving a common problem.

Test first-person use in real settings—at home, in the kitchen, outside, at the gym, or at work.

The CTA is where you close. Test psychological triggers to see what moves the needle:

When a winning ad fatigues, don’t kill it. Keep the body and CTA, swap in a new hook. TikTok weights the first seconds for audience matching — use that to reset fatigue and extend performance.

A common mistake is cutting an ad too soon and missing its potential—or letting it run too long and wasting budget.

Your intuition matters, but TikTok’s algorithm sees more. An ad may fatigue with one audience and find a second life with another, so don’t give up too quickly. Here’s when to pause and when to move it elsewhere:

With fast iteration cycles, your TikTok budget can’t be static. Dedicate 20% to 30% of your monthly budget to testing new creative concepts. This budget isn’t for hitting your target ROAS — it’s for buying data and insight.

Once you find a winner, move it into scaling campaigns. This prevents performance from dropping when a single creative hits its half-life.
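The 20% to 30% testing allocation can be sketched as simple arithmetic. The function name, the 25% default, and the $10,000 monthly budget are hypothetical and used only for illustration.

```python
def split_budget(monthly_budget, testing_share=0.25):
    """Split a monthly budget into (testing, scaling) amounts.

    testing_share must fall within the 20%-30% range suggested above;
    the 25% default is an assumption, not a recommendation from the text.
    """
    if not 0.20 <= testing_share <= 0.30:
        raise ValueError("testing_share should be between 0.20 and 0.30")
    testing = round(monthly_budget * testing_share, 2)
    scaling = round(monthly_budget - testing, 2)
    return testing, scaling


# Hypothetical $10,000 monthly budget.
testing, scaling = split_budget(10_000)
print(f"testing: ${testing:,.2f}, scaling: ${scaling:,.2f}")
```

The testing amount is the part spent on data and insight; the remainder funds the scaling campaigns that proven winners move into.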

Dig deeper: How to use TikTok Creator Search Insights to find content opportunities

Brands winning on TikTok aren’t the ones with the biggest budgets or name recognition. They create and test the most.

Capture everything—packaging, shipping, unboxings, product use, customer testimonials—as raw material in your creative supply chain. Shorten the distance between a brand event and launch.

The shrinking ad half-life won’t slow you down. It will become your advantage.

G.Skill pushes its memory speeds past 10K with Intel’s latest CPUs

G.Skill has confirmed that its DDR5 memory kits are ready for Intel’s new Core Ultra 200S PLUS CPUs (see our review here). This includes support for G.Skill’s standard DDR5 (DIMM) modules and their CU-DIMM XMP 3 memory kits. G.Skill has noted that many of […]

The post G.Skill showcases DDR5-10000 speeds with Intel Core Ultra 270K PLUS CPU appeared first on OC3D.

Mozilla has released Firefox 149, bringing a new mode for side-by-side browsing, a built-in free VPN with limited rollout, and improved PDF performance thanks to hardware acceleration. The update also adds a share button and enhances security by blocking notifications and known malicious sites by default.

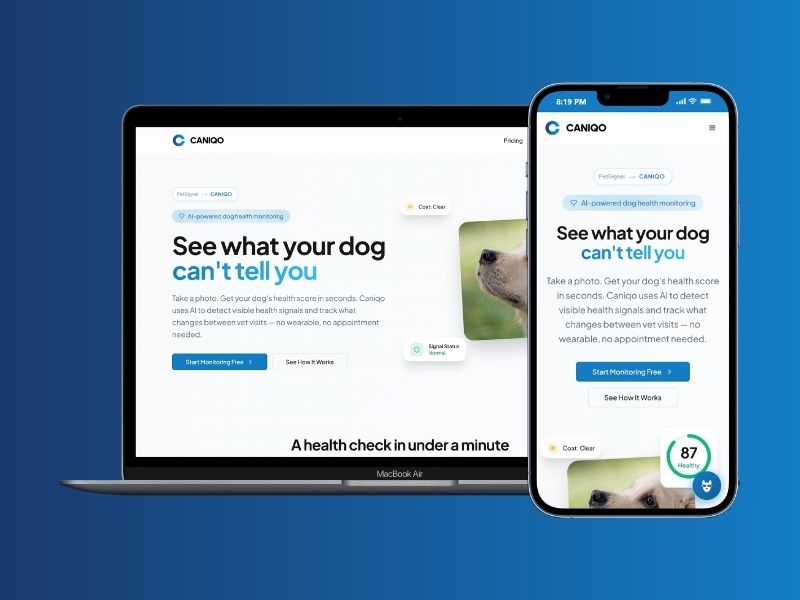

CANIQO is an AI-powered dog health monitoring web app that analyzes photos of your dog to detect visible health signals, such as coat condition, skin appearance, and body posture, and turns them into an objective health score. Dog owners use CANIQO to track their dog's health over time, spot changes early, and get clear guidance on when a vet visit makes sense. It takes less than two minutes, works from any phone, and builds a health timeline that makes every vet appointment more informed.

For the past several years, marketing strategy has reorganized itself around a simple premise. Third-party data is fading. Privacy expectations are rising. The solution, we are told, is first-party data.

Collect more of it. Centralize it. Build the customer view around it.

In many ways, the shift was necessary. Direct relationships with customers are more durable than rented audiences. Consent and transparency matter. Organizations that invested early in their own data ecosystems are better positioned today than those that relied entirely on external signals.

But the industry’s confidence in first-party data has grown so strong that it now obscures a more complicated reality.

Owning customer data does not automatically translate into understanding customers.

Most marketing leaders have sensed this tension already. Despite increasingly sophisticated technology stacks, many organizations still struggle with familiar questions. Which records represent active individuals? Which identities are stale or misattributed? How much of the customer view reflects current behavior versus historical assumptions?

These are not philosophical concerns. They surface in everyday operational decisions. Campaigns that reach fewer real customers than expected. Personalization efforts that plateau. Measurement models that appear precise but produce inconsistent outcomes.

The problem is not the absence of data. If anything, the opposite is true.

The problem is the assumption that the data sitting inside our systems still reflects reality.

One of the quiet characteristics of customer data is how quickly it shifts from present tense to past tense.

Most organizations gather identity information at moments of interaction. Account creation, purchases, subscriptions, service requests. These events create durable records that enter CRM systems, marketing platforms and data warehouses.

From that point forward, the records largely persist as they were captured.

What changes is the world around them.

Consumers rotate devices. Email addresses evolve from primary to secondary. People move, change jobs, create new accounts, abandon others. Behavioral patterns shift with new platforms, new habits, and new privacy controls.

The record still exists, but the certainty surrounding the identity begins to loosen.

Marketing teams encounter this reality in subtle ways. Lists that appear healthy but deliver diminishing engagement. Customer profiles that fragment across systems. Identity graphs that require constant reconciliation as signals drift out of alignment.

None of this means first-party data is wrong. It simply means it ages.

The moment of collection is precise. The months and years that follow are less so.

The idea of a unified customer profile has become foundational to modern marketing infrastructure. Customer data platforms, identity graphs and advanced analytics environments all attempt to bring scattered signals together into a coherent picture.

When the signals align, the results can be powerful.

But the effectiveness of these systems depends heavily on the integrity of the identifiers entering them. Email addresses, login credentials, device associations and other identity anchors serve as the connective tissue between records.

When those anchors drift or degrade, the unified profile begins to lose clarity.

This is not a failure of the technology itself. Most identity platforms perform exactly as designed. They connect the signals available to them.

The challenge is that many of those signals were captured months or years earlier, during moments when the system had limited visibility into the broader identity context surrounding the individual.

As the digital environment evolves, the original record becomes one reference point among many.

Marketing leaders recognize this gap when their systems produce technically accurate profiles that still fail to explain current customer behavior. The database reflects what was known. The customer reflects what is happening now.

Closing that gap requires something more dynamic than stored attributes alone.

In recent years, some organizations have begun looking beyond the traditional boundaries of customer records and focusing more closely on signals that indicate whether an identity is still active within the broader digital ecosystem.

Activity signals provide a different kind of intelligence.

Instead of asking what information was collected about a customer in the past, they ask whether the identity attached to that information continues to exhibit real-world behavior today.

These questions are becoming increasingly important for teams responsible for both growth and risk management.

For marketing, activity signals help clarify which audiences remain reachable and which identities have quietly gone dormant. For fraud teams, they help differentiate legitimate consumers from synthetic identities that appear valid on the surface but lack authentic behavioral patterns.

Both disciplines are ultimately trying to answer the same question.

Does this identity correspond to a real person who is active in the digital world right now?

Stored data alone rarely answers that question with confidence.

Among the many identifiers circulating through the digital ecosystem, one has proven particularly resilient over time.

Email.

For decades it served as both a communication channel and a persistent identity anchor. It appears in authentication systems, commerce transactions, subscriptions, customer service interactions and countless other digital touchpoints.

That ubiquity produces a secondary effect. Email addresses generate a continuous stream of activity signals that reflect how identities move through the online world.

When those signals are analyzed across large networks, they reveal patterns that extend far beyond a single company’s customer database.

They can indicate whether an identity is actively engaged in digital life or has fallen silent. They can highlight inconsistencies that suggest risk. They can surface connections that help reconcile fragmented customer views.

In other words, they transform a simple identifier into a dynamic indicator of identity health.

Organizations that understand this dynamic tend to treat email differently. It becomes less of a campaign endpoint and more of a reference point for understanding identity across channels.

Over the past decade, marketing technology has made extraordinary progress in storing and organizing customer data. Few organizations today lack the infrastructure to capture and analyze enormous volumes of information.

The next frontier is not accumulation. It is validation.

Knowing a customer increasingly depends on the ability to verify that the identities inside a database still correspond to real individuals with ongoing digital activity.

This shift changes how teams think about data quality.

Instead of focusing solely on completeness, forward-looking organizations pay closer attention to vitality. Which identities remain active. Which have quietly faded. Which exhibit patterns that suggest fraud or synthetic creation.

These distinctions influence everything from campaign reach to attribution accuracy to risk exposure.

When identity signals are strong, the rest of the marketing ecosystem performs more reliably. Personalization becomes more relevant. Measurement reflects real outcomes. Customer experiences align more closely with actual behavior.

When identity signals weaken, even the most advanced tools begin operating on uncertain ground.

The industry’s embrace of first-party data was an important correction after years of dependence on opaque third-party sources.

But ownership alone does not guarantee clarity.

Customer records capture moments in time. The people behind them continue to evolve.

For organizations that want to truly understand their customers, the challenge is no longer simply collecting data. It is maintaining an accurate connection between stored identities and real-world activity.

That requires looking beyond the database itself and paying closer attention to the signals that reveal whether an identity remains alive in the digital ecosystem.

Companies that make that shift discover something important.

The most valuable customer data is not the information they collect once.

It is the intelligence that helps them keep that data connected to real people over time.

Primate Labs call Geekbench results with Intel’s IBOT tool “invalid”

Primate Labs, the company behind Geekbench, the popular cross-platform benchmarking tool, has responded to the release of Intel’s Core Ultra 200S PLUS series CPUs (see our review here). The company has stated that all Geekbench 6 results using Intel’s new CPU “may be invalid” due […]

The post Geekbench declares all Intel Core Ultra PLUS CPU benchmarks potentially “invalid” appeared first on OC3D.

Prowl automates competitor tracking for pricing, website changes, hiring, news, and social channels. It delivers clear weekly reports explaining what changed, why it matters, and how to respond, plus real-time email or Slack alerts for critical updates. Use dashboards for trend analysis, side-by-side comparisons, and sales battlecards. Get started free for two competitors with no setup required.

QR Dex lets you create, brand, and manage dynamic QR codes while tracking every scan with real-time analytics. You can customize codes with your logo and colors, choose from URL, Email, Phone, SMS, WhatsApp, and Wi-Fi types, and update destinations anytime without reprinting.

Collaborate with your team using folders and roles, view campaign performance across locations, and export reports. The platform secures data in transit and offers SSO for teams that need centralized control.

VaultIt helps parents preserve their children's artwork, photos, and quotes in a secure, organized space. Capture memories quickly, tag by child, date, or theme, and find milestones fast without paper clutter. Choose who sees what, keep everything private, and upgrade for unlimited memories, advanced tags, custom timelines, and HD media. Build a digital time capsule today and later turn it into beautiful printed albums.

Spawn vision-enabled AI agents autonomously browsing the web

Help AI agents recommend you more often to the right people

Create specialized AI agents for real tasks and workflows

Generate design images and 3D models for product design

Stop BS in real-time with AI that fact-checks as you listen

Repurpose social media posts with unique content per format

A unified foundation model that thinks in pixels

Publish your markdown as a beautiful website – in seconds.

Set a budget and get alerted when flights get cheap

Pulls in changes from your tools and generates release notes

AI workspaces for building and running apps on Kubernetes

Where AI agents work at a schedule in the cloud

Create 3D, apps, and websites with parallel agents

AI-native global banking on stablecoins for emerging markets

Teach your repo how to run itself

Your tasks are the interface

Fully autonomous data analysis agent for daily insights

Turns screen recording into structured, AI-generated tasks

Deploy and Host AI Agents for $1/month

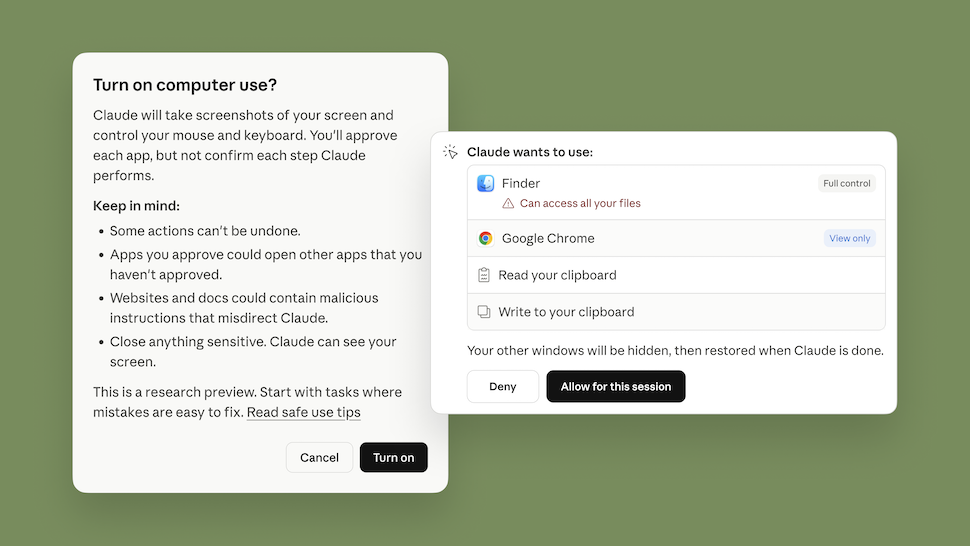

Let Claude make permission decisions on your behalf

AI that turns traffic into more revenue while you sleep

Agentic pentesting, now inside Lovable

New LLM compression algorithm by Google

AI teams that run your work

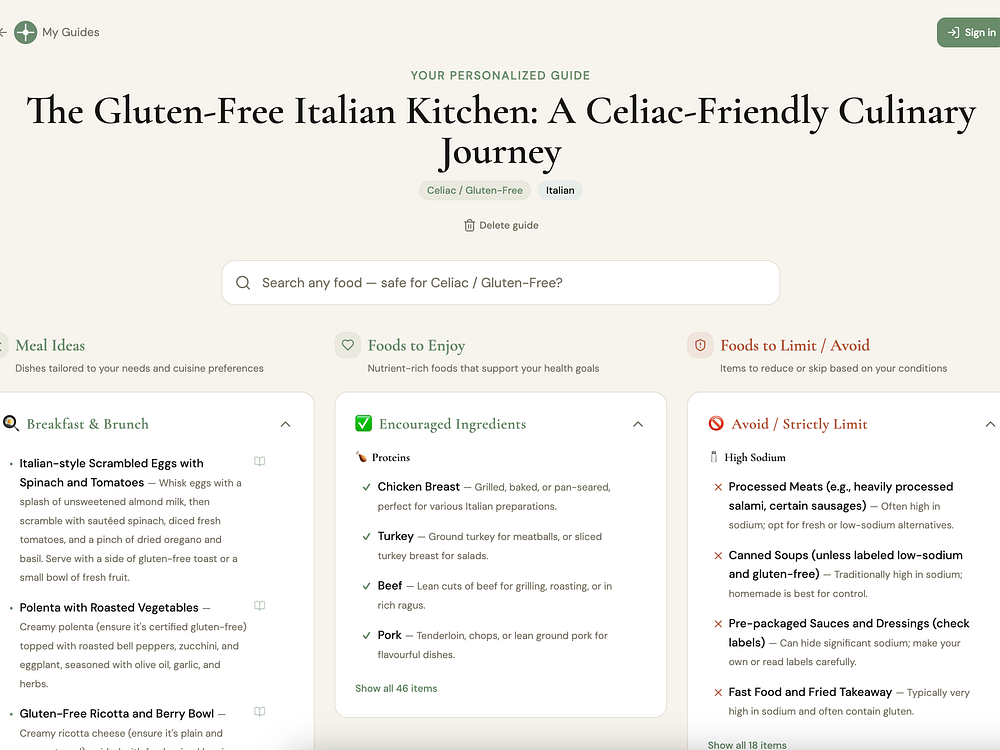

Most nutrition apps start with a calorie target and work backward. NutritionGuide starts with the food you love — your cuisine preferences, health condition, and lifestyle — and builds a 7-day guide from there. There's no calorie counting or macro tracking. Balance is shown as food groups, not numbers. Every meal is swappable, and your guide regenerates every week.

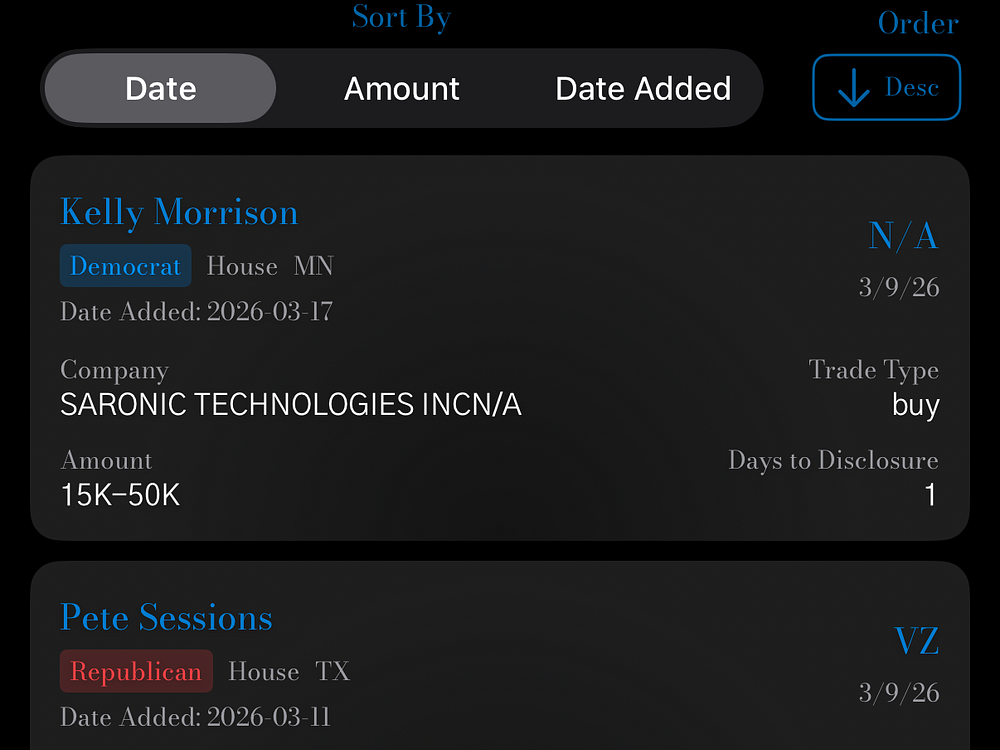

OtterQuant delivers live market intelligence with AI-powered analysis and interactive data. You can track custom portfolios, generate instant financial reports with OtterBot, and chat to screen stocks using natural language. Explore a congressional trade tracker, daily Reddit sentiment, and full earnings call transcripts. View fast intraday charts, analyst targets, calendars, and news for thousands of US tickers. Use free core tools or upgrade for faster updates and higher AI limits.

ManyLens lets you type a real-life dilemma and view structured perspectives side by side from philosophy, psychology, religion, and other traditions. It keeps each lens distinct, highlights common ground, and helps you reflect by saving insights over time. Use it to compare reasoning, spot convergences, and make decisions with context rather than one blended answer.

Reward your brain, feed your Dactyl, get stuff done! Taskadactyl is a gamified task app built for ADHD brains bored by other productivity tools. Your tasks don't get to win anymore. Your Dactyl eats first. Tasks become quests, completions trigger real rewards, with over 50 badges and game themes. Something unlocks at 3 referrals, with clues in the app.

Built by an ADHD founder who got tired of being eaten alive and decided to build the predator instead.

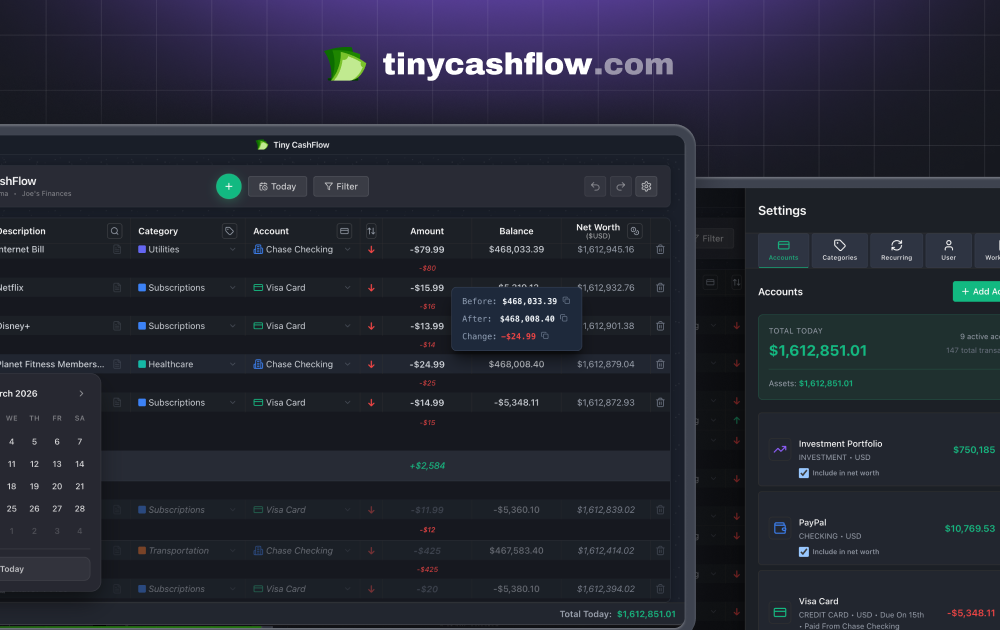

TinyCashFlow is a manual cashflow tracker with an infinite timeline. Instead of just showing your past spending, it projects forward — scroll to any future date and see your exact balance, accounting for all your recurring transactions. Built around a spreadsheet-style interface, everything is on one screen. Edit inline, filter on the fly, and quick-sum any selection. It supports multiple currencies, crypto, and shows a running net worth column across all your accounts. No bank connections or sign-up are required. The free tier is genuinely useful, while premium adds cloud sync, mobile, and multi-sheet support. It works on Mac, Windows, iOS, and Android, and is fully offline first.
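The forward projection described above can be sketched as follows. This is a minimal illustration of projecting a balance from recurring transactions, not TinyCashFlow's actual implementation; the `Recurring` class, the fixed-interval schedule, and all amounts and dates are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Recurring:
    amount: float    # positive = income, negative = expense
    start: date      # first occurrence
    every_days: int  # fixed interval in days (a simplifying assumption)


def projected_balance(opening, opening_date, items, target):
    """Balance on `target`, applying every occurrence that falls
    after `opening_date` and on or before `target`."""
    balance = opening
    for item in items:
        if item.every_days <= 0:
            raise ValueError("every_days must be positive")
        occurrence = item.start
        while occurrence <= target:
            if occurrence > opening_date:
                balance += item.amount
            occurrence += timedelta(days=item.every_days)
    return balance


# Hypothetical data: biweekly income and an expense every 30 days.
items = [
    Recurring(amount=2000.0, start=date(2026, 1, 9), every_days=14),
    Recurring(amount=-1200.0, start=date(2026, 1, 5), every_days=30),
]
balance = projected_balance(1000.0, date(2026, 1, 1), items, date(2026, 2, 28))
print(balance)  # 6600.0
```

Real schedules are usually monthly rather than a fixed number of days, so a fuller version would need calendar-aware recurrence rules.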

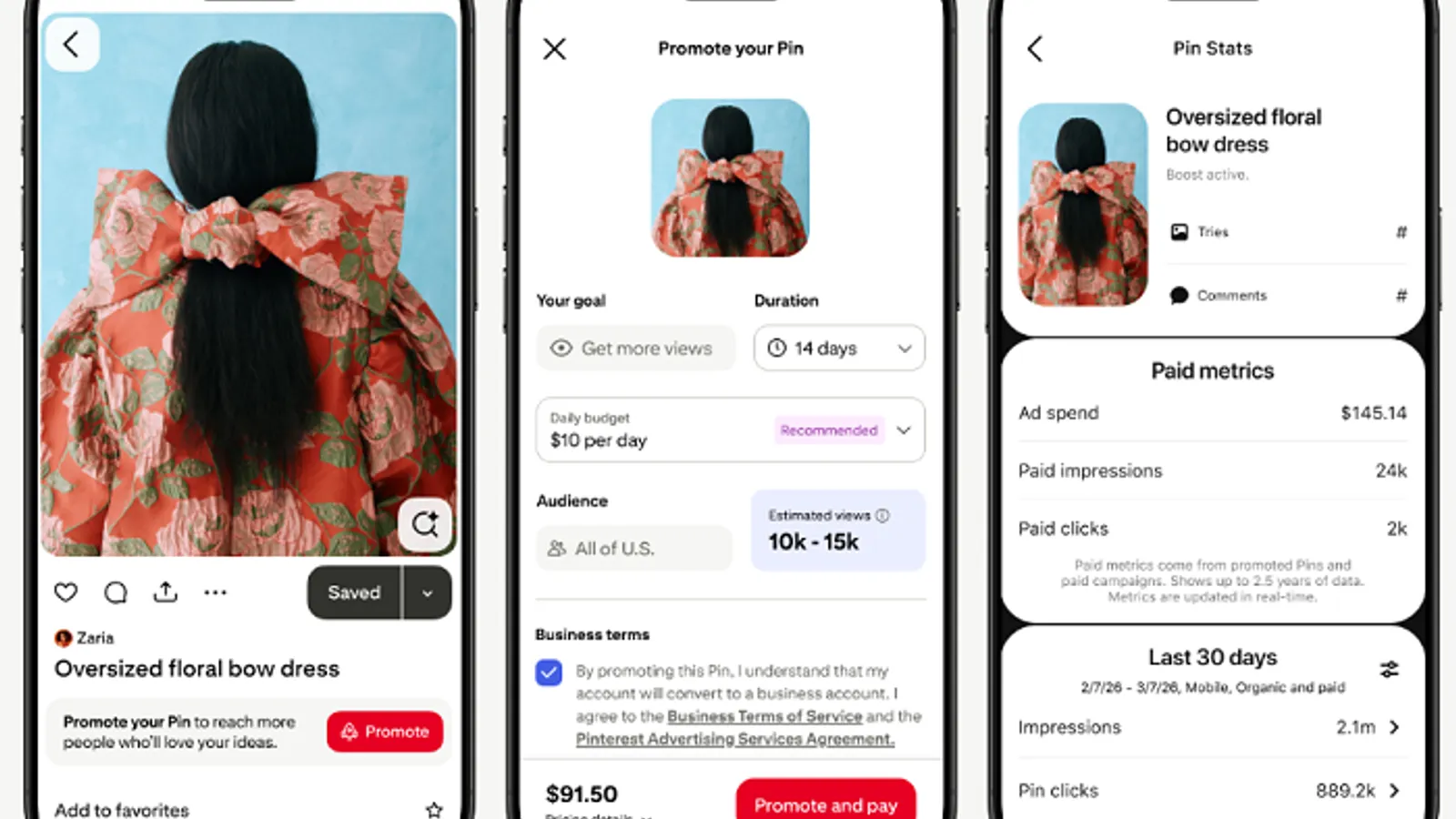

Meta announced a range of new in-app shopping updates at ShopTalk 2026.

Augmented reality developers will be able to create their own effects and integrate those clips into their Lenses using a closed-prompt approach.

The company said the number amounts to about 3.8 million Snaps per minute, although the app’s overall momentum appears to be stalling.

Advertisers will be able to include shoppable tiles and promotional overlays, which can help them reach the platform’s growing community of high-intent shoppers.

The app introduced Total Snap Takeovers and is developing a Snap-specific promotional option in an effort to win more marketing dollars.

The updated premium placement promotional opportunities include Logo Takeover, TopReach and an expanded Pulse suite.

The new option will offer creators and brands a flexible budget option to showcase content and reach more of the platform’s 619 million active users.

New elements are designed to improve ad performance and engagement tracking, as well as assist in campaign setup.

The platform is merging creator and advertising elements into a single space to facilitate collaboration opportunities and streamline affiliate marketing.

The much-requested feature will let creators edit the order of their images and videos after publishing.

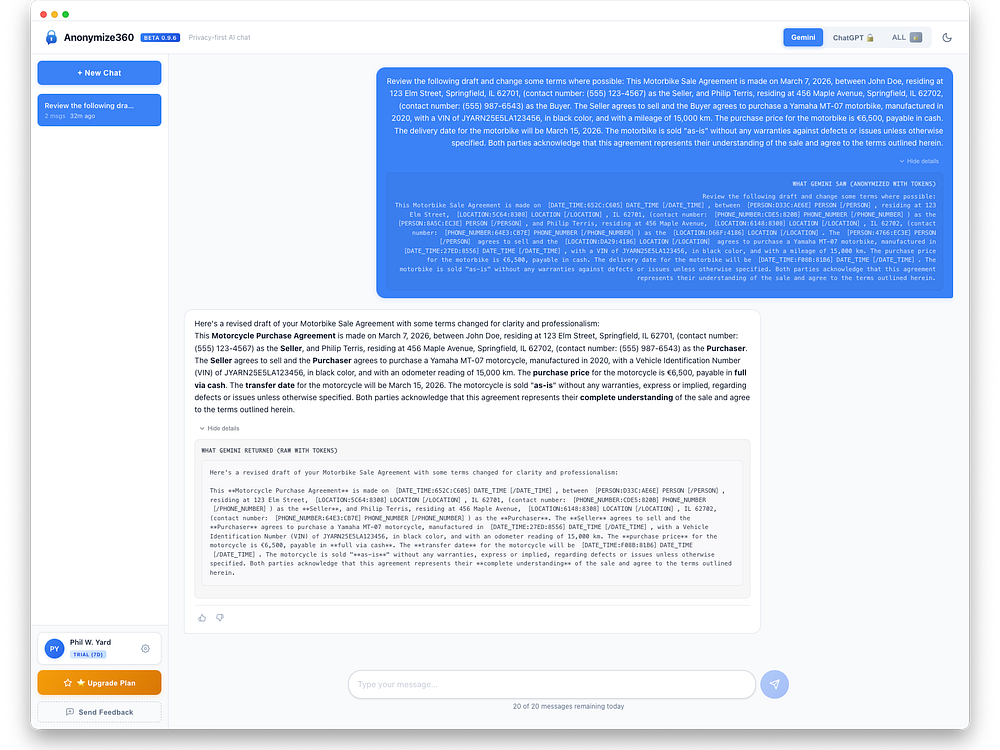

Anonymize360 protects sensitive data in AI chats by rewriting it on your device before it leaves and restoring it on return. It detects PII like names, addresses, SSNs, and medical or financial details, replaces them with tokens, and encrypts the originals locally with AES-256. The system runs on-device with a zero-knowledge design and works seamlessly with AI models. Enterprises gain privacy-by-default workflows and compliance support, while individuals can download and start with a free trial.

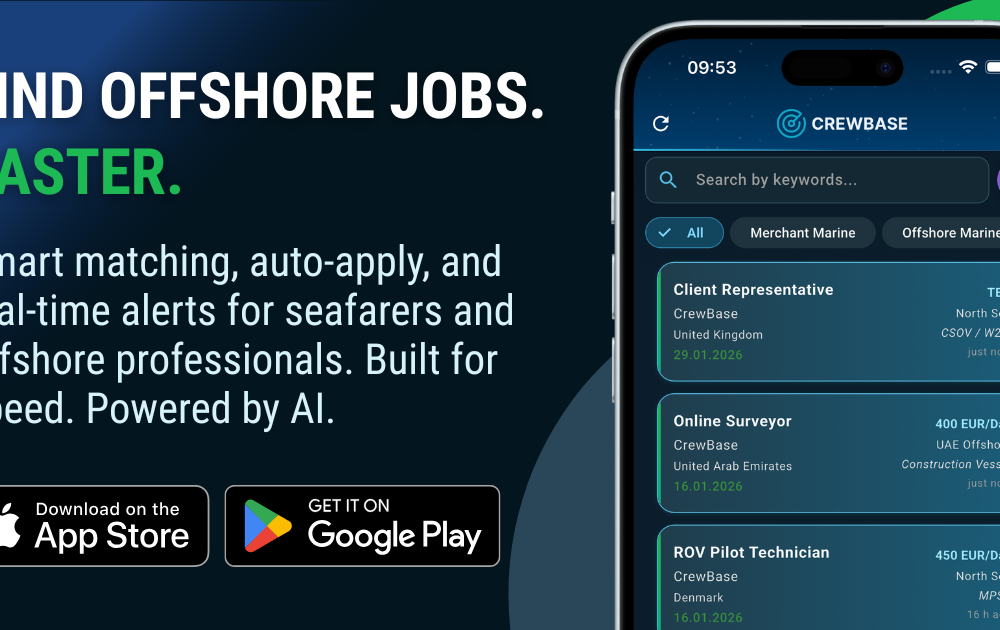

CrewBase connects seafarers and offshore professionals with verified maritime jobs using AI-powered matching, smart filters, and real-time alerts. It lets you search instantly, set auto-apply rules, and generate a polished CV, with seamless access on iOS, Android, and web. Employers post vacancies in minutes, search a growing verified talent pool, and manage applications with secure proxy email and desktop-optimized workflows, enabling fast, targeted maritime recruiting at scale.

Google updated its Discussion Forum and Q&A Page structured data docs with new properties, including a way to label AI- and machine-generated content.

The post Google Adds AI & Bot Labels To Forum, Q&A Structured Data appeared first on Search Engine Journal.

Google has finished rolling out the March 2026 spam update. The update applies globally and to all languages, with rollout taking a few days.

The post Google’s March 2026 Spam Update Is Already Complete appeared first on Search Engine Journal.

Google released its March 2026 spam update today at 3:20 p.m. It’s the second announced Google algorithm update of 2026, following the February 2026 Discover core update.

Timing. This update may only “take a few days to complete,” Google said. On LinkedIn, Google added:

Why we care. This is the second announced Google algorithm update of 2026. It’s unclear what spam this update targets, but if you see ranking or traffic changes in the next few days, it could be due to it.

More on spam update. Google’s documentation says:

“While Google’s automated systems to detect search spam are constantly operating, we occasionally make notable improvements to how they work. When we do, we refer to this as a spam update and share when they happen on our list of Google Search ranking updates.

For example, SpamBrain is our AI-based spam-prevention system. From time-to-time, we improve that system to make it better at spotting spam and to help ensure it catches new types of spam.

Sites that see a change after a spam update should review our spam policies to ensure they are complying with those. Sites that violate our policies may rank lower in results or not appear in results at all. Making changes may help a site improve if our automated systems learn over a period of months that the site complies with our spam policies.

In the case of a link spam update (an update that specifically deals with link spam), making changes might not generate an improvement. This is because when our systems remove the effects spammy links may have, any ranking benefit the links may have previously generated for your site is lost. Any potential ranking benefits generated by those links cannot be regained.”

Update, March 25. The update completed in less than 24 hours. See: Google March 2026 spam update done rolling out

UDN (machine translated): Yesterday, ASUS, in partnership with Qualcomm, held a press conference for its new Zenbook A16 laptop. During an interview, Liao Yi-hsiang, General Manager of ASUS United Technology Systems Business, said ASUS has confirmed that PC prices in Taiwan will increase by 25% to 30% or more in the second quarter, with increases varying by model.

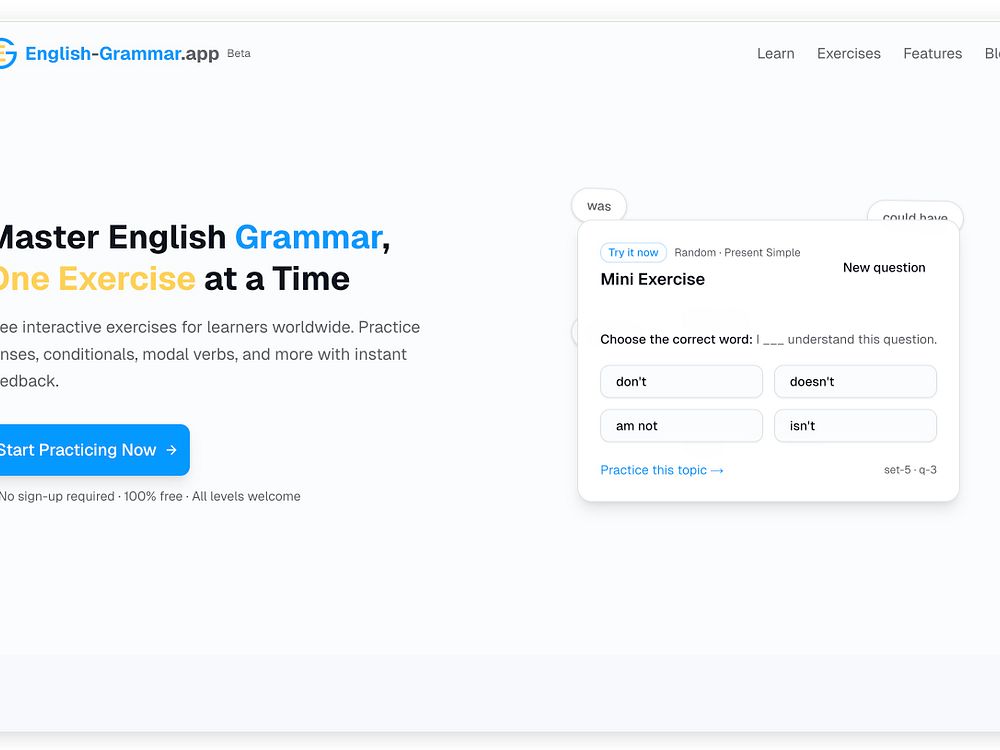

English Grammar guides you to master tenses, conditionals, modal verbs, and more through interactive exercises with instant feedback. Choose multiple choice or fill-in-the-blank, see clear visual cues, and read detailed explanations for every answer. It covers A1 to C1 levels across 20 grammar categories, with hundreds of exercises and more in development. Practice anytime on any device to build confident, accurate English.

Reddit is rolling out new Dynamic Product Ad features, including a shoppable Collection Ads format and Shopify integration, the company announced today.

What’s new.

The numbers. Reddit DPA delivered an average 91% higher ROAS year over year in Q4 2025. Liquid I.V. reports DPA already accounts for 33% of its total platform revenue and outperforms its other conversion campaigns by 40%.

Why now. Reddit has seen a 40% year-over-year increase in shopping conversations. Also, 84% of shoppers say they feel more confident in purchases after researching products on Reddit.

Why we care. The new tools, especially the Shopify integration, lower the barrier to getting started with Dynamic Product Ads. Reddit might still be viewed by some as an undervalued paid media channel, but there’s an opportunity to get in before competition and costs rise.

Bottom line. Reddit is increasingly a serious performance channel for ecommerce, and these tools make it easier to get started. If you’re not yet running DPA on Reddit, the combination of undervalued inventory and improving ad formats makes this a good time to test.

Reddit’s announcement. Introducing More Ways to Tap into Shopping on Reddit

Linkeezy is a compliant workflow tool that brings your LinkedIn inbox, saved posts, and feeds into one organized workspace. Instead of jumping between tabs and losing track of conversations or content, you can manage messages in a clean, Gmail-style view, organize saved posts into a searchable library, and follow focused feeds built around the people and topics that matter most.

Linkeezy runs through a web app and Chrome extension that retrieves your messages and content without storing them. It is designed to align with LinkedIn's terms of service, with no profile scraping, automation, or AI-generated interactions, so you stay in control while keeping your workflow efficient and focused.

Google was just named #1 on Fast Company's 2026 World’s Most Innovative Companies list.

An overview of Google Quantum AI’s work on superconducting and neutral atom quantum computers.

AI search citations favor a small set of formats. Listicles, articles, and product pages drive over half of all mentions across major LLMs, according to new Wix Studio AI Search Lab research analyzing 75,000 AI answers and more than 1 million citations across ChatGPT, Google AI Mode, and Perplexity.

The findings. Listicles led at 21.9% of citations, followed by articles (16.7%) and product pages (13.7%). Together, these three formats made up 52% of all AI citations.
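As a quick check of the arithmetic, the three reported shares can be summed directly. The figures are taken from the paragraph above; the variable names are illustrative only.

```python
# Citation shares (percent) as reported in the findings above.
shares = {"listicles": 21.9, "articles": 16.7, "product pages": 13.7}

total = sum(shares.values())
print(f"combined share: {total:.1f}%")
```

The sum comes to 52.3%, which the study rounds to 52%.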

Why intent wins. Query intent — not industry or model — most strongly predicts which content gets cited. This pattern held across industries, from SaaS to health.

Why we care. This research indicates that you want to map content types to user goals rather than just creating more content. Articles educate, listicles drive comparison, and product pages convert. Aligning content format with user intent could help you capture more AI citations and increase visibility.

Not all listicles perform equally. Third-party listicles accounted for 80.9% of citations in professional services, compared to 19.1% for self-promotional lists. That seems to indicate LLMs prefer neutral, editorial comparisons over brand-led rankings.

Model differences. All models favored listicles, but diverged after that.

Industry patterns. Content preferences shifted slightly by vertical:

The research. The content types most cited by LLMs

A quiet but important policy update is coming to Google Shopping ads next month, requiring some merchants to verify their accounts before running ads featuring political content.

What’s changing. From April 16, merchants running Shopping ads with certain political content in nine countries will need to verify their Google Ads account as an election advertiser. Google will also outright prohibit some political Shopping ads in India.

The countries affected. Argentina, Australia, Chile, Israel, Mexico, New Zealand, South Africa, the United Kingdom, and the United States.

Why we care. Shopping ads aren’t typically associated with political advertising — this update signals that Google is broadening its election integrity efforts beyond search and display into commerce formats. Merchants selling politically themed merchandise, campaign materials, or other related products in the affected countries need to act before the April 16 deadline.

What to do now.

The bottom line. This affects a narrow but specific set of merchants — but the consequences of missing the deadline could mean ads being disapproved or accounts being flagged. If you sell anything with a political angle in the listed countries, check your eligibility now.

MyDreamGirlfriend is an AI-powered dating platform where users create customized AI companions with interactive conversations, voice messaging, and roleplaying features. Optimized for both mobile and desktop, it offers a freemium subscription model. Users can exchange voice notes and photos, unlocking content and deeper interactions with gems. Start free and upgrade for unlimited messages, multiple companions, and extras. All conversations are end-to-end encrypted for complete privacy.

LYNARA is a browser-based platform for precise multi-layer system design. It visualizes complex software landscapes in 3D and lets you structure user interface, services, and data layers for clarity. Use fast keyboard shortcuts to select, copy, paste, and navigate across layers, all without installation or a credit card.

New Gemini features for Google TV include richer visual answers, deep dives, and sports briefs, making it easier to explore the topics you love.

Android Automotive OS is expanding as an open-source platform for core car functions, enabling new features and updates from manufacturers.

AI citations in ChatGPT are far more concentrated than citation distributions in traditional search. Roughly 30 domains capture 67% of citations within a topic.

The details. Citation visibility wasn’t evenly distributed. In product comparison topics, the top 10 domains accounted for 46% of citations; the top 30, 67%.

What changed. Ranking No. 1 in Google still matters, but it’s not enough. Of pages ranking No. 1, 43.2% were cited by ChatGPT — 3.5x more often than pages beyond the top 20.

Why we care. Publishing the “best answer” for one keyword isn’t enough. ChatGPT rewards domains that cover a topic from multiple angles, not pages optimized for isolated terms. And discovery often happens outside the keyword universe you track.

The patterns. Longer pages generally earned more citations, with variation by vertical. The biggest lift appeared between 5,000 and 10,000 characters. Pages above 20,000 characters averaged 10.18 citations vs. 2.39 for pages under 500.

On-page behavior. ChatGPT cited heavily from the upper part of a page. The 10% to 20% section performed best across all industries.

About the data. Indig analyzed ~98,000 citation rows from ~1.2 million ChatGPT responses (Gauge), isolating seven verticals. The study used structural page parsing, positional mapping, and entity and sentiment analysis to identify which pages earned citations and where those citations came from.

The study. The science of how AI picks its sources

A new creative feature has been spotted inside Google Ads Performance Max campaigns — and it could change how advertisers without video budgets approach animated display advertising.

What was found. Nikki Kuhlman, vice president of search at JumpFly, Inc., spotted an option to generate animated video clips directly within PMax asset groups, using AI to enhance and animate a single source image.

How it works.

Early results from testing. A logo generated a spinning animation of the image element. A house with a sold sign produced a slow cinematic pan. Simple inputs, but the output quality appears usable for display advertising without any video production required.

Where the ads appear. Google hasn’t provided in-product documentation on placement, but early testing shows animated clips surfacing in Display ad previews when added to an asset group.

Why we care. Video assets remain a strong creative option in paid media, but producing video has always required time, budget, and resources many advertisers don’t have. This feature effectively removes that barrier, turning a single product photo or logo into animated display creative in seconds at no additional production cost.

For advertisers who’ve been running PMax on static images alone, this could be a meaningful and easy win.

The bottom line. This feature is still unconfirmed by Google, but advertisers running PMax should check their asset groups now. If it’s available in your account, it’s worth testing.

First seen. Kuhlman shared spotting this new feature on LinkedIn.

AI tools and visibility have dominated the SEO conversation in the past two years. But while discussions focus on these new technologies, most of the biggest SEO risks in 2026 will come from somewhere else: within your own organization.

Fragmented data, unclear ownership, outdated KPIs, and weak collaboration can quietly destroy even the best strategies. As SEO expands beyond the website and into AI-driven discovery, the role of the SEO team is becoming broader, more influential, and, paradoxically, harder to define.

Here are some of the risks your team should start thinking about now.

Many SEO teams now rely on AI for everything, from generating briefs to analyzing data. That’s often necessary. You can’t spend hours creating a brief when AI can produce something usable in minutes. But that’s also where the risk starts.

AI can generate content quickly, but “acceptable” won’t differentiate you. You still need a clear point of view — what story you’re telling and what unique angle you bring. Without that, your content becomes generic, predictable, and indistinguishable from competitors using the same tools.

The issue is simple: if you ask similar tools similar questions, you’ll get similar answers. And your competitors have access to the same tools.

Some companies try to stand out by training models on proprietary data. In reality, few teams do this at scale. Most prioritize speed over quality.

There’s also risk in using AI for analysis without understanding the data behind it. AI is fast, but it can misinterpret or hallucinate results.

I’ve seen this firsthand. An AI tool hallucinated part of a calculation during an urgent analysis, making every insight that followed incorrect. It only acknowledged the mistake after it was explicitly pointed out.

More broadly, AI excels at identifying patterns. But in SEO, competitive advantage rarely comes from following patterns. The most effective strategies don’t just mirror what everyone else is doing. Sometimes the best opportunity isn’t the obvious one.

AI is reshaping how SEO work gets done, how impact is measured, and whether it can be measured at all.

Dig deeper: Why most SEO failures are organizational, not technical

For years, SEO professionals have worked with incomplete datasets. We’ve never had a full view of the user journey. That’s one reason organic impact has often been underestimated. In the past, though, we could still piece together a reasonably clear picture — from ranking to click to conversion.

Today, that picture is far more fragmented. AI tools have changed how people research and discover products. Users now start in AI assistants, where they ask questions, compare options, and build shortlists before ever visiting a website. By the time they land on your page, part of the decision-making process is already done.

The problem is we have zero visibility into that journey. If a user discovers your brand through an AI-generated answer, adds you to a shortlist, then later searches for you directly, the signals that influenced that decision are invisible. We only see the final step.

Microsoft Bing has introduced basic reporting for AI searches, but it’s limited. We still can’t see the prompts behind specific page visibility.

At the same time, SEO teams are still expected to prove impact. Some companies are adding questions to lead forms to understand how users discovered them. In theory, this adds signal. In practice, it depends on accurate self-reporting. I know how I fill out forms, so I question how reliable that data really is. Still, it’s a start.

Fragmented data creates another risk: focusing on the wrong KPIs. Stakeholders still ask about traffic. No matter how often SEO teams explain that SEO’s role has changed, traffic remains a default measure of success. For years, organic growth meant more sessions, users, and visits. That mindset hasn’t fully shifted.

At the same time, stakeholders are drawn to newer metrics — AI visibility, citations, and mentions. These aren’t inherently wrong, but they need to be used carefully.

Most tools measure AI visibility using a predefined set of queries. That’s where risk creeps in. Teams can become too focused on improving visibility scores, even if it means optimizing for prompts that look good in reports rather than those that matter to the business.

For example, appearing for “What is XYZ software?” isn’t the same as showing up for “Which XYZ software is best?” The first may drive visibility, but the second is much closer to a purchase decision.

To avoid this, visibility metrics need to be tied to business outcomes — a real challenge given the fragmented data problem.
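One possible way to keep a visibility score honest is to weight each tracked prompt by how close it sits to a purchase decision. The sketch below is an assumption-heavy illustration: the intent labels, the weights in `INTENT_WEIGHTS`, and the `weighted_visibility` function are all hypothetical and would need to be set from your own conversion data.

```python
# Assumed weights: a commercial prompt counts three times as much as an
# informational one. These numbers are placeholders, not benchmarks.
INTENT_WEIGHTS = {"informational": 1.0, "commercial": 3.0}


def weighted_visibility(results):
    """Score visibility across prompts, weighting each prompt by intent.

    results is a list of (intent, appeared) tuples, where appeared is True
    if the brand showed up in the AI answer for that prompt.
    """
    total = sum(INTENT_WEIGHTS[intent] for intent, _ in results)
    if total == 0:
        return 0.0
    hits = sum(INTENT_WEIGHTS[intent] for intent, appeared in results if appeared)
    return hits / total


# Both brands appear for one of two prompts, so raw visibility is 50% each.
brand_a = [("informational", True), ("commercial", False)]
brand_b = [("informational", False), ("commercial", True)]
print(weighted_visibility(brand_a))  # 1.0 / 4.0
print(weighted_visibility(brand_b))  # 3.0 / 4.0
```

Under these assumed weights, two brands with identical raw visibility score very differently, which mirrors the gap between “What is XYZ software?” and “Which XYZ software is best?”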

Tracking AI visibility also opens another rabbit hole: debates over which prompts to track, how many to include, and why. This can quickly overcomplicate measurement, especially if teams lose sight of the goal. The objective isn’t to track every phrasing, but to understand the intent behind it. Trying to capture every variation is impossible.

Dig deeper: Why governance maturity is a competitive advantage for SEO

SEO teams are expected to own AI visibility strategy much like they owned SEO strategy. But strategy is often treated as execution.

Even in the past, SEO was never fully independent. It relied on other teams — engineering to implement changes and content to create pages. The difference is that most of this work used to happen on the company’s own website.

That’s no longer true. Visibility in AI answers requires presence beyond your domain — Reddit threads, YouTube videos, and media mentions all play a role.

This significantly expands the scope of work. At the same time, many of these surfaces don’t have clear owners inside organizations. Even when they do, there’s a tendency to assume that if SEO owns the strategy, it should also own execution or at least be accountable for outcomes.

The opposite happens, too. If other teams own execution, they may take ownership of the entire strategy. In reality, neither model works well.

SEO teams can’t manage every platform that influences AI visibility. They don’t have the expertise to produce YouTube content or run PR campaigns. Their strength is knowing what works and helping optimize it, for example by advising on how a video should be structured to perform on YouTube.

Owning strategy also doesn’t mean deciding who owns execution. That’s a leadership responsibility. It requires visibility across teams and the authority to assign ownership. Otherwise, one team is left deciding how its peers should operate.

Even when companies recognize the importance of AI visibility, cross-team collaboration remains a challenge.

Roles and processes are often unclear. SEO teams may expect others to execute, while those teams assume it’s SEO’s responsibility. In other cases, teams don’t prioritize AI visibility because their KPIs focus elsewhere.

This is where leadership alignment becomes critical. If AI visibility is truly a strategic priority, it needs to be reflected in goals and KPIs across all relevant teams. When AI-related KPIs sit only with SEO, it creates an imbalance: one team is accountable for outcomes, while execution depends on many others.

Many teams are also unsure how to work with SEO. Some don’t involve SEO early enough. Others choose not to follow recommendations because they don’t agree with them.

SEO teams share responsibility here, too. They need to actively onboard other teams and clearly connect SEO efforts to broader business goals. It’s our job to show that lack of visibility means lost revenue.

I’ve seen cases where teams critical to AI visibility hadn’t even read the strategy document. In these situations, the issue isn’t one-sided. Teams need to understand what’s expected of them, and SEO needs to push for alignment and involve stakeholders early. Simply moving forward without that alignment doesn’t work.

SEO teams also don’t always explain the “why.” AI visibility can end up treated as a standalone SEO metric rather than a business driver. Even when there’s agreement on its importance, a lack of clear processes, shared goals, and training keeps collaboration inconsistent.

Dig deeper: Why 2026 is the year the SEO silo breaks and cross-channel execution starts

With rapid changes in search, SEO teams often spend more time on theory (reading, analyzing, building frameworks, and refining strategies) than on making changes to the website.

That doesn’t mean teams should stop learning. Quite the opposite. But strategy without execution quickly loses value. In many organizations, SEO teams are expected to produce in-depth strategy documents meant to align teams and define priorities. In reality, many go unread outside the SEO team. They require significant effort but deliver little impact.

Part of the problem is that strategies are often too theoretical. They explain the why but miss the what. The value of a strategy isn’t the document, but the actions that follow. Other teams need to understand what to do and how to contribute.

AI is also accelerating how quickly search evolves. Waiting months to test ideas no longer works. A more practical approach is to understand the direction, implement changes, observe results, and iterate. Smaller experiments often lead to faster learning.

SEO has always been a consulting function. Success depends on collaboration with teams like engineering, content, and product. Today, that dynamic is more visible than ever. In many cases, SEO teams don’t execute directly. Their role is to enable others.

In mature organizations, this works well. Collaboration is strong, and credit is shared. SEO’s consulting role is recognized without forcing the team to own areas outside its expertise. In less mature environments, it can lead to SEO being undervalued or seen as unnecessary.

AI adds another layer. It can generate keyword ideas, outlines, and optimization suggestions, making SEO look deceptively simple, much like writing content. AI lowers the barrier to entry, but it doesn’t replace expertise. Without that expertise, teams produce work that’s technically correct but average.

It’s a familiar pattern: copy-pasting a Screaming Frog SEO Spider error list into a task doesn’t demonstrate real understanding. This creates a paradox. The more SEO becomes a company-wide capability, the more the SEO team risks becoming invisible.

Dig deeper: SEO execution: Understanding goals, strategy, and planning

SEO teams won’t fail in 2026 because of a lack of knowledge. They’ll fail if they can’t turn that knowledge into action, influence, and business impact.

The challenge is no longer just optimizing pages. It’s building processes, partnerships, and measurement models that reflect how visibility works today.

Success also depends on leadership support. Many of the biggest risks are structural — fragmented data, unclear ownership, weak collaboration, outdated KPIs, and the gap between strategy and execution.

AI visibility expands beyond the website and into the broader organization. That doesn’t make SEO less important, but it does make it harder to define, measure, and defend.

The companies that succeed will stop treating SEO as a traffic function and start treating it as a business capability that drives visibility, discovery, and growth.

Apple is preparing to introduce sponsored listings in Apple Maps, marking a significant expansion of its advertising business beyond the App Store.

How it will work. According to Bloomberg’s Mark Gurman, the system will function similarly to Google Maps — allowing retailers and brands to bid for ad slots against search queries. Sponsored businesses will appear in Maps search results, much like sponsored apps already appear in App Store searches.

The timeline. An announcement could come as early as this month, with ads beginning to appear inside Maps as early as this summer across iPhone, other Apple devices, and the web version.

Why Apple is doing this. Advertising is a growing and high-margin revenue stream for Apple’s services business. Maps — with its massive built-in user base across Apple devices — is a natural next step, particularly as location-based advertising continues to grow.

Why we care. Users searching within Apple Maps are expressing clear, high-intent signals: they’re actively looking for somewhere to go or something to buy. This opens up a location-based advertising channel that didn’t previously exist on Apple’s platform, giving local businesses and retailers a way to reach those users at exactly the right moment.

Advertisers already running Google Maps or local search campaigns should pay close attention, as this could quickly become a significant complementary channel.